Stop Playing Security Whack-A-Mole

Show original YouTube description

Show transcript [en]

Okay. Hello everybody. How's everybody's day going? >> Okay, awesome. I mean, I was expecting a little more energy, but I know we're kind of still come off lunch. Um, so hopefully you're in the right place. If not, you can stay. Or if you don't want to talk, you know, like our talk, you can go listen to something else, but we'd like you to stay. Um, so our talk is stop playing security whack-a-ole. And my name is Lee. I am a technical adviser to the deputy cizo for identity. I am also one of the OG durability architects. Um, specifically for the secure future initiative protect identities and secrets pillar ID for short. Um, and I've been doing this

since we started this program. And hey and uh I'm Mike. I'm a cat herder in uh Microsoft um specifically the Azure core and I try to focus on secure by default and uh I am the um NS pillar driver in the uh SFI program. >> Yeah. But tell them what NS stands for >> network security. I mean, so the SFI program has a whole bunch of has a whole bunch of, you know, different different pillars from identity to tenant security to engineering systems and um one of them is obviously the network and so >> all right so here's our agenda for today. I'm just going to flash this and keep going. So I mean raise of hands like how many

people feel this is this is security work like yeah we we feel this to our core right it's just like we keep fixing things over and over and over and over it gets a little annoying right um so we have this very okay wait let me ask this how many people here are aware of the Secure future initiative for Microsoft a little bit right it's this very very large program that exists as a company we're basically trying to move all our engineers in one path direction it involves a lot of scaffolding and we made some mistakes at the beginning so what's the durability crisis >> so we as a security industry um love finding Oh, that is bright up there. I'm

out of the way. Um we as a security industry and a bunch of security people love pointing at things and saying that's bad, right? We have got so much of them from scanners to red teams to well practically everything. And so if that's the case of where we have a security issue gets introduced then we detect it somehow whether it's a scanner or a team or someone says here's that problem then eventually that will appear on a report or a ticket or something and then maybe we'll get round and remediate it. All of these kind of TTX's add up and that's the time we're at risk and we've got to get better at that. We've got to get from being reactive.

Hey, I found something prod. Go fix that to actually being durable in the case of maybe we found that, but let's not go back and do that again. Similar to what if you're in the talk that cat was just saying, right? And being able to put in things like, you know, uh policies, code, right, and things like that. We've got to bake that initial finding so that we don't get the introduced, right? We get proactive at that first side and as we call shift left. So we don't have to shift right and find things and react to things as we go. And that's what we found as part of the durability crisis in the early days of the SFI program. Um

more of that in a bit I think. Ah here we go. I did do that. >> Yeah. All right. So let's say for example that I've got um um yeah I've got some starting number of bad resources that that that we that we start with and over sprint one I'm going to go and fix some of these things remediate them but as I'm doing that production environment is never static it's always changing so at some point new resources are going to appear that if I don't have that durability built in right it's going to regress right my metric is going to regress slightly Also, some of them resources someone might touch and also make them bad again

for some for for some reason. Multiply that over sprint two, sprint three, sprint four. What we end up finding is we've done all this work over here, all this work for that much gain. That's all we've done. So, these red arrows like are a problem for us. effectively is extra work that we're doing if we can't make things durable and shift things and and shift things left. And in the early days of the SFI program, we found that that was a that that was the case, right? We were doing work and there was new things showing up all the time, which was giving us more work to do. And so therefore, we were always on that

hamster wheel. And so, um, one of, you know, one of the great people who we work with, Mark Rosanovich, come along and said, "No, we can't do this. We've got to sponsor a durability program." He's got a great blog post up if you if if you uh if you search for it. If you search for Mark's name and durability, it's one of the first hits. Um and it describes it really really well and effectively it was a thing that said anytime we launch something in SFI now it has to be durable. So we don't get this repeat and we don't stay on the hamster wheel. And the way we do that is by this get

green and stay green. Verify green, get green and stay green. So verify green first of all comes along and says there are certain resources or code or systems or whatever it is that are not conforming to a standard that we want. Right? So that's the verify green. We need to iterate to go and do the work to go and get them green. And then once they're green, we want to do something to make sure they stay that way. They don't regress. Same thing with new resources. As developers at a keyboard or a system goes and does something, we want to make sure they start green. They don't start in a red way in a bad way.

So we start in a good way for first and then once they start green we also have controls to make sure they don't regress and much of that is done from using policies and standards. We are very opinionated on what green means. There's no wiggle room in this. If it means that a storage account cannot be absolutely accessible. There is one way and one way only of defining that and that's what we look for and that's how that gets encoded into the system and what we look for similar thing on um on le side with identity like there is one identity platform that's it you're going to use managed security identities that's it there's no using SAS keys

there's no using usernames and passwords there's no using anything else this is a very opinionated standard and that's what we're going to push and because we've got them standards, we've got paved paths to get there, and we've got secure by default controls, these policies that once we got there, make sure we stay there. And so this means that we can get teams to fall into the pit of success. And so definition of making things secure by default and definition of paid paths, thank you. Thank you, uh, Copilot. But we really do want to make things easy for people. So, one way of doing things and then once you've got there, one way of ensuring that you're

there and you stay green. Um, but defining on what them standards are and defining on what them paved paths are, especially at a big company like Microsoft, is really hard cuz lots of people have different opinions on what that means and how to do it. And with that, I'm handing it over to Lee. >> All right. Um, I did not design this. I can take no credit for it, but I get to talk about it. So, that's pretty awesome. Um, before I get into governance, I think it's actually good to level set how we actually even think about work item design because if you don't design the work item correctly out of the gate, you're you're never going

to get your durability. It's just not going to work. who when you start all the way over we focus on risk. SFI engineering exists to mitigate the top risks to Microsoft. Every deputy CEO comes up with a list of their top risks, right? And that's how we drive work through SFI. Second, it's got to be a standard. We don't like bespoke, snowflake, whatever other word you like for something that's not a standard. We don't approve those. You've got to have a standard. Now, that means you may need to develop it. I want to be very clear about that. It doesn't mean you have to have a standard immediately, but you might need to go and spend some time

and figure out what it should be, but we're not going to push a bespoke item. So, then, you know, we come in and we make sure that you actually you you say it drives down risk, but we actually make sure that's true, right? Um, so the examples like eliminating pin searcherts for Microsoft accounts. Um, it's interesting because one of the things I often would talk about with durability with people, I said it's just engineering excellence. And often what I found when I worked with my teams is we're not just doing security work, we're actually also improving resiliency. So it's like a win-win for engineering teams. Um, we want work items such as traceability. That's the ability to actually be able

to quickly do a query and say, "Here's all the things in scope." Um, another time will gate is when people can't do that. They're just like, "Oh, I have this list." No. Um, >> it has to be clear, actionable, aligned, right? Like that's that sounds obvious like it has to be a key result, but sometimes people come in and we do our reviews and it's not clear at all. So that's one of the things we stick to. And we also for everything we release because there are times that teams have to take on work themselves, we make sure that the runbooks are clear and we make sure that people are doing things safely, right? Because what we don't

want to do is have you go out and make a change and cause a massive outage. That's a bad experience for not just the engineering team, but for the customer as well, right? So that's a very important um tenant for us. So then okay that's how we begin. So then we go through like thinking about how we select. Now when we first started SFI because I remember this uh in wave two I reviewed 42 KPIs. We did not release 42 K. That's just my pillar. Remember there's six pillars this time, 42. So you have to have a good criteria for what you're going to actually pick, right? So we actually will always prefer a central fix. It's not always possible.

Sometimes we need the dev team to make a coding change. Yes, we could automate that, but for safety reasons, we prefer they do it themselves, right? So that's a fan out versus a central fix. But we prefer central. Central is much easier to get through. We want to make sure that when we pick the items that they have a good defense value proposition. So basically, does it provide the most value? So safe secret standard is one that provides a boatload of value, right? And it it when it first launched, I think it was like millions of secrets that we went after. Millions, right? So, I would prefer a KPI that goes and fixes huge swaths of problems versus one

that's like, I have 10 teams that are doing the wrong thing. Cool. Just cut them tickets. >> Just just just explain just for a second what safe secrets are instead. It's not about the secret. >> It's not about the secret. We really want people to use managed identities. That's what we push. Um, not everybody can and we work with those teams to figure out why not, but we our mantra is get to managed identities. Um, we want standards and pay pass as Mike mentioned, right? But we want to make sure that they're always aligned to those standards. Don't skip the standard for ease. Um, this is preference, but Mike's right. We've shifted that. You really do have

to have a plan, a control for your stay and start green. Um there's lots of ways to do this, right? Like policy is one way, code is one way. Oddly, even governance structures are one way to do this. Um and then get green is again we want um so when you do a large fan out and you're you're impacting, you know, 40,000 engineers of the company, right? What you don't want is something that 40,000 engineers have to do themselves, right? So, we've really started to focus on what can we automate. And so, for example, we may actually give you a PR. We're not going to do we're going to make sure that you do your code review.

I bet it looks okay. We'll give it to you, right? So, we can skip a bunch of steps and you can just go and implement what we've given you. So, who's involved? So the KPI owner is the person who has the delight of filling out all the paperwork because of course there's a lot of paperwork with this. Um but they make sure that it's you going to mitigate a risk you know is durable. They can add it to the tooling. They've doc they've put their documents into the runbook and that they have automation. So a lot of teams will write what we call like quick start guides for our the teams we support that walk them through

these steps. the risk owner or a DCZO representative or a DCZO delegate or even in some cases the DCZO themselves is involved to validate that they are actually going to mitigate a risk and that it's a risk we want to mitigate right now and then governance actually is multiaceted and picks up a lot of people so as I said the telemetry piece is critical if you can't get your stuff into telemetry forget it um we do have reviews for runbooks so there's someone who's like going through the runbook with the team, validating all the steps, validating makes sense, ensuring a safe deployment process is documented so people don't cause outages. Um, it meets the bar for automation. There's

automation reviews. Again, there's a lot of people that go and do those reviews and make sure that, yeah, you've automated everything you can. And sometimes that means, you know, as I said, we'll give you the PR, but you're going to have to do the rest of the work. And then it meets the bar for durability. And that's kind of like where Mike and I sit, right? Like we go through the reviews. We work with teams. In the early days of durability, we would actually tell people, "Oh, I see that you're trying to do this. Have you could you do this in code?" And they would sit there and they think about it and they be like, "Can I call you back?"

I was like, "Sure." Um, and they would figure out how to put the block in code and we could move forward. Right? So there are other teams that have struggled with this and we work with them a lot. There's a team I've worked with for many ways and we are still start green is a bit elusive for us still right and we we may have a solution finally which is very exciting. Um but we do have a path for things that need to still go out even if they're not durable. We strongly strongly encourage teams to never come talk to us until they've like done everything they can do themselves, right? Because we don't really want to push anything that's not

durable. So, as I said, these are our expectations, right? That your stay green control is on and functioning, meaning you can't regress. So once I do the work and I fix the thing, I should never ever have to do it again. That's what stay green is. Start green means you've got a paved path for teams. They start green out of the gate. Um if you don't have those, it's not acceptable, right? Like as I said, we do have a path for people when there's just the bar is too high, it's too difficult, we're still trying to do the technology. There's a there are exceptions. They're very very rare. So um the example that we have is the Microsoft accounts that

is all entirely done in code. I remember when they came to to go over with me and I was like this is amazing like I don't have to do anything. I don't even like and it was amazing because the minute they launched that it was one of the it is a one of the safe secret standards. Um most of them don't use code which is interesting right? Most of them use policy and they just flew. I mean, it was very, very easy for people to on board and they knew they wouldn't go backwards. >> Oh, me again. >> Oh, that's you still. >> I didn't know that. Um, >> can I can I just take a pause here

because there was one thing that you were mentioning. Go back about about the DCSOs. Um, the DCSOs are very important in our organization. Everybody knows what a CISO is pretty much. I hope DCSOs are deputy CISOs. And so what we have is we have a number of deputy CISOs for certain areas like network or identity or Azure. We also have deputy CISOs for different organizations whether that is office or Xbox right or or whoever. They end up being our gatekeepers, right? Because they not only hold the risk, but they also, you know, are generally execs, CVPs above that can then say, okay, like here is my biggest risks. I want you to address these things. But they also are very empowered

to be able to say, "We're not taking this work up, this KPI up until it meets a bar because I don't want my teams to be overly burdened on repeat work or overly burdened on doing work that isn't got a start green and an easy path on because otherwise again we just end up on the hamster wheel. So these are a very important people in this entire process.

Okay, so I know I just gave you guys a bunch of overview of governance structure. It sounded like, oh my god, you need a small army of humans. You actually don't. So the derivative architects are what what we call them is our dedicated security stewards, right? They're normally um already embedded in the product teams. They're very familiar with the standards and the tooling and the product. Um there are last time I counted 14 at the company for there's more than six pillars now and I don't actually remember seven pillars I think now. So that would make sense. 14 >> seven now. Yeah. >> Seven now. >> Yeah. >> Yeah. A minimum. There's probably a few

more than 14 but there's definitely at least two per pillar because people have vacations. I mean, I only know about this because I had to demand that I got someone to help me. Um, we do meet pretty regularly as this tiny cohort of people that have very busy schedules that don't have a lot of time, but we're I have to say the program team who runs the durability program for us does a really good job, right? And so we get together on calls and we discuss what's going well, what's not going well, what do we want to change in the program. And once a month we do meet with Merc who is our executive sponsor and so that way we can

really affect change. So what I want you to hear is that yeah this look like this huge scaffolding and this huge amount of people. It's not. Every sort of element of SFI has a small number of people that are actually doing those roles. Those runbook reviews the automation reviews governance durability. You don't actually need a lot of humans to do this. Okay. So, why durability architects? Um, so I mentioned we're all considered to be independent. So, we're not actually product um engineers ourselves for the most part. We're mostly security architects and we have this independent lens so that we can say like no looks good, no looks bad, right? And the idea is that as we go through

these development gates, we're doing these reviews. Um the reviews depending on the pillar are anywhere from 30 minutes to 55 minutes. A lot of that comes down to how well the KPI owner does in their documentation that we review prior. So it's not even like a lot of meetings. So we enforce ownership. We drive accountability. We make sure people aren't taking shortcuts. like our main goal is to ask hard questions and we can put a pause if the qu if the answer comes back and we're like no that doesn't sound right like feel like you skipped a step um we want to make sure as I said that the fix will endure like our mindset is fix it once fix it

forever right we don't want to end up back on that hamster wheel we don't want to just remediate you don't want to be stuck in a a system where you're constantly remediating and you're never moving forward Right. So that's why a lot of these are really when you think about them, they're more preventions than just fixes. >> Um, and the whole point was when we got going on SFI, it was a really good idea. We really needed it, but it felt a bit chaotic at first. And this is a very coordinated process to ensure that things meet the bar. >> Great. So now I take over and um okay how do we build them controls in at

Microsoft at scale and so I'm going to talk about a very specific layer of that by using Azure policy but there are various layers that these can be in they can be in the code right um as we was saying earlier there are places in safe secrets where there is a standard way of doing things and we check them as part of build pipelines and things like that in there also a way of handling it as governance, right? Let's say, for example, there's a network that we want people to be getting off of. Um, the governance there is, yeah, no one's going to authorize you to be on that network anymore. There's no control. It's just no one's going to sign off and

click the button to say, "Yeah, deploy there anymore." So, there's various ways that you put these controls on. Not all of them are policy. Not all of them are code. Not all of them are governance, but they they they do have to come together, right, as part of the durability program. But I'm going to be talking about policy here. And um one of the things I found was um it's really not easy being green. Someone used to sing that. Um and so the project used to take on its name, which by the way, the project is not named after this character at all. Ca says I'm not allowed to use this. This is nothing to

do with, you know, some green furry uh furry thing. Um um if you want to know why, then Google butthole astronomer. um you'll find out the reasons why internal code names can get you into a lot of trouble. So, what this is is this is keeping environments resilient by minimizing insecure tenants. Um because once you've got an EVB that knows the name of your product of your of your project, you you you kind of don't want to get off of that because they know it and have referred to it. So anyway, what this means is as you get green, there are certain durability and security controls that enable you to do the stay green and start green. But what we found

very early on in the program and just recently as well um is that when you turn these controls on, it's very dangerous because you're now stopping lots of systems, lots of autonomous systems from doing something that they may or may not have been doing for a very long time. Like let's say for instance my one that I usually go back to which is anonymous access blob containers. Um there might have been many many systems that have been creating them. They might not have even needed to have be anonymous access. But just pulling a big lever and saying you can't create them anymore can break other services because all of a sudden they then can't deploy their resources and if it is part

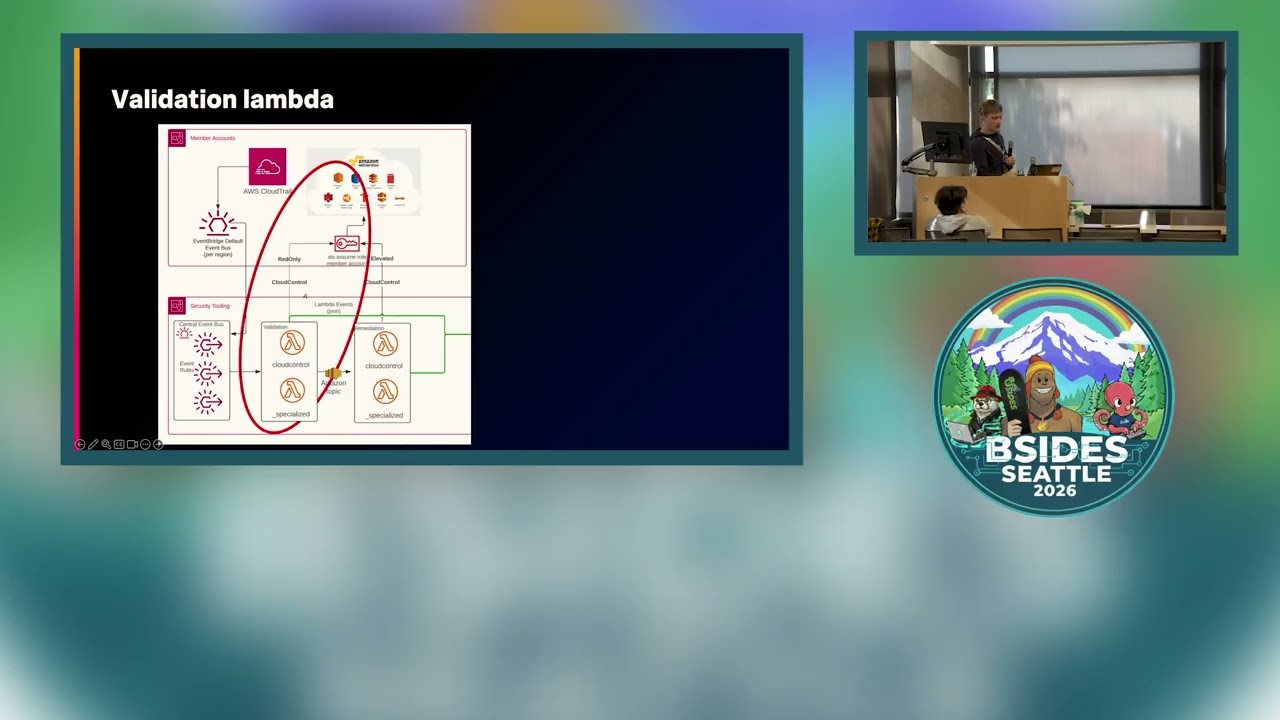

of a critical path then they go down. So it's very very dangerous of just turning on a control at any type of scale. So we didn't. So, how do we bake durability in? This is a big eye chart of a path of how we deploy and how we manage and how we get into a how we get into a um get to a get to a policy. But I want to kind of like really just be showing like the steps in the way. Someone defines the policy and tests it. They on board it to orchestrators to go and deploy it to the various places. They roll out an opt-in mode which I'll talk about in a second.

Right? Teams then opt into the policies when they're ready and when enough of them done, we then enable central enforcement. And so how does that work? Well, what happens is teams get green gradually. It's not one big thing that everybody says, "Oh, I'm going to be green by the end of this sprint or by the end of this quarter or by the end of the year." Like stuff happens over time in a big enough environment. So like no one's ever green all at the same time. They all end up being green at different at different parts, different opportunities. And you don't want to wait for everyone because you will find out you have a very long

tail. And while you're waiting for that tail to finish off, all of these other teams that are green because they don't have the policy could then regress because they don't have the durability built in and also are not getting that security. So remember, um, we want to be more proactive and reactive. And so we want to get them green. Once they get green, we want them to we want them to enable the policy. So what happens is we start it at the lowest levels of the of of the branch for a given for for a given service and um you know this service in production maybe it's the Azure policy production service and they have certain regions

and then as they've done all the work in one region they enable it in that region. When they go to the next region and done all the work in there they enable it and slowly you start building up up the tree. When more of the tree becomes green, then you get a chance to to enable um uh enable it centrally at the at the root. But in the meantime, all of these things that are green that have opted in are now secure by default. So policy can be gradually rolled out across a hierarchy by using opt-ins. So as I said, everything's not green at the same time and central policy can can break things. So it's over there like

you've got a tenant where some of them things are some some things are green and we audit that to say okay are you ready yet to turn green some teams are already green opt in and and and and bake the security in some teams are not they get green but they find that there are certain resources that they can't get green they they need a blob that's anonymous access for you know for virtual machine images or for CDN or something like that in them cases they need to get an exemption and they say we're not going to get green here. But as they opt out, then they're excluded. And then we we roll up to the center enforcement, we can enable

central enforcement safely at the root because everybody's either opted in or they've opted out. And slowly we build it over time. And so um when service have enough, they've either green, opted in or exempted, then we can move to center enforcement. So how are the policies developed? Um well this is how we kind of do it at the start. There are guess there are better ways of doing it today. Can't talk about them right now. Maybe if you're at Microsoft you know how these things have been developed or I can talk to you um about it. But generally what happens is you have a policy definition logic that says hey here's my storage account. I

don't want to allow anonymous access to it. I have a skip tag against this which enables me to basically break glass on that. If I put that tag on that resource, then the policy gets opted out, right? I skip the I skip it because it might be a case of, hey, this is an emergency life site and I need to do something about it. I want to go to the, you know, fire alarm. I pull the fire alarm and say whatever that policy is, right? I I need to get it out the way. It might be, for example, um outbound internet access. I need outbound internet access on this because I need to test that my

subscription here can actually call out to the rest of the world and I need to test some things. So, I'm going to pull the I'm going to pull the lever here. Um, and I'll tell you a little bit about more about break glass in a second. Then you have the opt-in logic, right? And these are what we call SDP, safe deployment practices or safe deployment process. They're scoping selectors and they're up to the service teams to decide what them scoping selectors are, but they basically say, okay, in this region I want to opt in. And when they put the tag on it will lock it in in that region or whether it's in this subscription versus that subscription.

So they can they can they can using the tags that they have either say you know ring one ring two ring three here are my tags I'm going to apply them and the opt-in go happens on them places or they can say within these regions or these regions and that region might be canary or that may be maybe you know um pilot or stage one or something like that and then after that you have the policy effect which is most of the time deny right so once you've opted in and the policy is enacted you effectively want to say, "Yeah, no, you're not allowed to create that thing." And then you push it back to the team, they can't create it.

They shift left. They go and change their code, their deployment systems to say, "Okay, now I'm going to set up to be deployed green." Now, you want people to shift left and update their code. In some small circumstances, that may be a modify policy, like if it's a VM and you want to make sure, hey, I need to make sure that antivirus is installed on everything. Install this extension on my VM for me. teams don't have to then shift left and do that. It's automatically done for them. Or it could be a modify that if not exist, right? Or deploy if not exist. Hey, you're deploying into this VNET. You should really have a net gateway to get behind.

Like if you're, you know, deploying something here and you haven't got that net gateway deployed, go and deploy it for me. So most of the time it's a deny to stop people from doing anything, make them shift left and change. But in some small cases, it might be, hey, we're going to go and help you get green as well as part of this. So, what happens when teams don't want to opt in? So, what our KPIs and what we call TSGs, troubleshooting guys, but they're basically the playbooks, right? What they all say now as part of the durability program is once you've done the work to get yourself green, here is how you opt in to go and lock that

durability in. But service teams don't do that. we found. Why? It's cuz they're lazy. No, they're not. It's a very easy thing to say, but they're not. They're overwhelmed. Or it could be a case of, hey, this policy existed after the team had already done the work. So, the team had already got green. There was no policy for them to stay green. So, therefore, they didn't know to opt into that policy yet. Or it could be that they've got other priorities of things to go do. So what happened on here right number of subscriptions that were that storage opt-in policy stayed pretty flat for a while some teams did the work some teams didn't and so we went ah how

do we how do we make this better how do we make this better so what we did is we developed a another tool it was called the opportunistic optin polic uh optin placement system uh but no one liked that acronym and I seem to be pretty bad at doing acronyms And so it's called 03 opportunity opting orchestrator. What that does is it looks at the subscriptions and say is it safe to opt in centrally. So teams don't have to do that work we will do it for them. And the way we do that is we look to see if the RP has ever what we call resource provider right for that KPI. if it's ever been. So if storage has ever been

enabled on this subscription scope or has never been enabled on this sub scope, you're good to go. It doesn't matter. You couldn't have ever created a storage account. So I'm going to opt you in just in case you do enable that at some point. There are no resources of that type. Obviously, I can enable you because you don't have any storage account. So I can certainly enable you. All of the resources in there are green. So therefore, if I opted you in, you should be okay cuz you're green today. But also more importantly, I look back a given amount of time, 30 days. Have you always been green? Have you been creating resources that are red and then

fixing them up? You're probably not ready to be opted in yet because you have to do the shift left work. Right? So, there's a way that we can determine and say, "Hey, look, rather than teams doing the opt-in themselves, can we take on that responsibility and help them up?" And so, that's what happens, right? Take the take the work off of the teams and get it done centrally. and then you place the optin using STP anyway. So that helps that if I place something and it then breaks in Canary or something like that, someone you know that they've ex they're exercising the workload, um we can then roll that placement, roll that opt-in placement back.

And then just to finish off here, when we can't enforce when you can't enforce something because something isn't green and isn't going to get green because of business requirements or whatever, then we can exempt out of a policy by either putting a skip tag. It's like a brake glass. The alarm will go off. Someone will then get a ticket to say, "Hey, look, do you know that you've broken glass here?" And someone needs to do something. And then we do thing called a P process improvement review. What happens during the incident to say, why did someone have to break glass here? Why did they need to go and do that? Um, they shouldn't have needed to

broken glass. They should have been able to have dealt with the issue in and of itself. So, what do we need to build to help them so they don't go and break glass again? Supposed to be temporary. or they place an exemption on. I'm never going to get green on here. Place an exemption and then them exemptions get managed. Either the CVPs and others sign off and say, "Yep, they're good." Or they're unmanaged and they have to then get turned into managed or removed. And so, um, yeah, that's, uh, that's generally how we operate durability at this level at scale. >> So, it sounds like a like magic, right? In some ways, you're just like, "Wow,

they did all these things." Um, we kind of look at this as a framework, right? You start way down at the bottom of reactive, right? It's all manual fixes. There's inconsistent um enforcement. There's lots of drift. That's where we that's why we started this program is that we were reacting to the fact that we kept getting out of alignment, right? And then I'm not kidding. We've been on a journey, right? So then it was defined. We had some basic processes. We would meet with people. We'd try to be like, "Okay, so are you durable? Are you not?" There were a lot of things moving through the system that we were approving because they said, "I will

become durable. I have a plan, right?" And then that didn't really work. So then we had a manage program, right? And that's when you start to standardize things. That's when you're measuring drift. You're not just observing it. You have measurement. you're constantly checking your KPIs and saying, you know, are the things that were green going red now? And if so, that means our control is not working. Um, and that's sort of early automation. Optimize is kind of what you start to see, and that's the state I would argue we're in today, right? We're moving out of optimized into autonomous. But for the most part, I'd say we're right smack and optimized. You know, you've changed

the culture, right? I remember those really early reviews and telling people why this was important and not I mean people be like yeah that sounds great and you know it took some time for people to come into the table and tell me okay I heard what you said last time I met with you here's how I'm going to do this new KPI and here's how it's durable right so that's an engineering shift um you have secure by default templates you have real time enforcement where we want to be. Our northstar is autonomous, right? So, you have AI assisted controls, you have self- remediation, you have adaptive security. When we get to that state, I don't know

how important people like Mike and I will be for this program. And that's fine. We can retire from it. We're totally fine with that. >> Trust me, you work yourself out of a job, you get a new job. >> Yeah. So, we'll be fine. And this is you know kind of the dimensions is you if you weren't if you wanted to build your own program right that's similar you know you got to figure out when you put in a change that's resilient. So we do very much care that's why we keep harping on things like safe deployment practices or processes or policies because we do very much care that when we make changes we are not causing

outages for customers. Right? You've got to make it scalable. This is why we care so much today about automation and making it possible so that again 40,000 engineers aren't clicking buttons, right? And they're not writing a bunch of additional code. We're trying to make it so they can go faster. That's automation readiness. You've got to have the we have a very large governance uh integration. We have a tool that everybody can go to and they can pull for their team all the way up, you know, to the very top of the company. You can look up and down seeing how it's going. And it's got to be sustainable because if it's not, we wouldn't be here talking

to you guys, right? Like we would have shut down this program, gone on doing other work, but it is it sustains itself because it's so important. Um this is the milestone. So you can again this kind of walks you through how we thought about doing this, how we drove the mindset kind of like where we started, right? you establish it and then where you would like to get with AI powered agents and now we're going to talk a little bit about metrics. >> All right, one of the last things um someone and I can't remember who it was now but said something really important that was measure what matters. So if you don't measure it then it

doesn't really count. So what we do is we measure how we're doing on durability. And this is our durability dashboard. And I have to say this is not the data that is on the dashboard because if anyone thinks that 1337 is a metric that we're actually tracking at the moment or 8675309, you can go ask Jenny whether that is um an actual number or not. Um, so I just have to be clear on that that we don't have 867,000 uh issues with HPA apps. Um, but this is how we measure it. And so for each of the KPIs that have an ID, these are not the KPI IDs. Um, and what the KPI name is that we're doing. Um, we have a

tracking that says how are we doing start green and stay green, whether that is Azure policy, whether that's code policy, whether that is other and things like that. What is that date that we expect to start green? Because if that date is in the future and that is not enabled, we don't expect to be fixed on regressions. They will happen because we don't have the control in place. So you have to know when these things are when these things are going to go and get enabled. And so then we track and say, okay, if that policy was enabled there, how many new items start green have we created in the last month? Well, we've created a thousand of them, thousand or

so of them. How many of them have been regressions that haven't started green? Zero. We're good. How many of them have been created over the last quarter? How many regressions that are either not been created green or regressed from from that? And so we track the number of them are. And then we basically come back and go okay is that verified or not tick we are green we've been durable for the last quarter or the last month or something like that. On the other hand if we have another one which says okay we created 42 items in the last month 12 of them have not started green or regressed but we are past the date when

we have got that policy in place. Something's wrong there. the control isn't working or the control isn't applied to the places where we expected it to be. We expect it to be everywhere and it's not. It's not hit that, you know, saturation yet. So each of these kind of like what we do is for each of the KPIs, for each of the pillars, for each status of where things are in the program, we measure and we say does it have a control? What control is it? Is that control deployed enabled? And if it is, are we creating things? Because if you've got something that is like new items created in last month is zero and

you've got zero regressions, what what do you know about that? You know, nothing. There's, you know, trees falling in the forest. No one's around. The tree isn't even falling. We don't even know. So, we look to see how many have been created and whether any of them have regressed or not. And so that's how you turn an entire program into a dashboard where we just look at every week with the architects and certainly monthly with Mark to pick out where problems are and to go and figure out how to make these better iteratively over time. And we actually like when earlier we mentioned our P process. If we do have controls that we believe to be durable,

right? And we're seeing them sort of thrashing and they're no longer durable, we actually work with the KPI owner and go through a full P to figure out what broke because what we don't want to do is then go do that for someone else, right? So the whole point is to learn where is there a problem and prevent that problem from showing up in a different KPI. So that is a I would say like we we haven't done the P um I would say until just recently but that's really helping us to redesign new KPIs and go back and figure out other hotspots. So I think like you can have a great program but if you aren't paying

attention to this type of data you're not going to know if stuff's breaking right. You're not going to know that someone did a bunch of work and they just regressed. So this was a really critical dashboard for us to get stood up. It took a bunch of work to make sure we could actually measure it properly. Again, that goes into a governance structure. But I would say like without this you you have no idea if your controls are working. >> It's that simple for us. And then do you guys have questions for us? >> Yes, please. >> So I have a I have a question then I'll have an observation. The question is you put a policy in place then and n weeks

or months later you put another policy in place and then you put a third policy in place that contradicts the first one. Something breaks. How do you ensure that it breaks in a way that is constructive and does not impact the customer? Don't care about the engineering James. Customer comes first. >> Right? Remember how I talked about the standards? Each KPI has a standard one way of doing things. There are not conflicting policies on this. So there is one way of saying okay for a SQL server this is how you do safe secrets. There is not another policy that says and starts to conflict against to conflict against that. Um now um we try very hard on the

KPIs not to overlap them right because that might be a case of that that might be a case but in but in but in terms of policies themselves um they the the KPIs themselves very very rarely overlap and effectively the the standards say they don't. Now there may be the case that teams have gone and defined their own policies and own ways of doing that but therefore what we do is we we make sure that our policies because they get deployed at the tenant route are the ones that preempt everybody else. So um it's efficacy right we make sure that the policy that is the that that is the standard policy the the one there has efficacy and make

sure that it is the one that everybody goes and use and it is everywhere. >> Okay. I was going to observe that just to comment on what you just said Mike of course Microsoft teams never go off and do their own >> no they don't absolutely not >> never >> the observation is I can see where this is the ongoing continuation of efforts that started 20 to 25 years ago in windows having I had hair then >> nothing's new come on >> nothing's new the good news is I can see that evolution the bad news the first two slides I think I first saw in like 2003. >> One of my one of my favorite things I'm

saying a lot today is um history doesn't repeat but it echoes. >> That's fair. >> Other questions please. >> Oh, are you going to do it? Okay. >> Where do KPIs come from? Are they coming out of MSRC reports? Are they coming out of compliance frameworks? >> No. No. No. They come they are rooted in risk. That's where we started. Remember we started when we said at the very beginning we the deputy CIOS who are embedded in different business units right they're the ones that are responsible for developing their top risks. I can't really I haven't been cleared to say how they do that. Um but if the KPI does not map to risk it it

gets blocked. It basically gets gated. So that's how all of them are formed, right? Like so safe secrets, there is a risk that backs that I know because I wrote it. >> The the dashboard looks an awful lot like a common controls framework status dashboard. I'm just wondering if there's a larger conversation or you're going to successfully isolate this engineering risks. >> We isolated it to security risks that impact both the company internally and our customers. >> We do the same thing with privacy risks. >> No. >> No. Yeah. >> Yeah. >> Privacy risks are not in SFI. >> Yeah. >> What if any level of executive sponsorship was required to launch this or do the deputy CISOs have the agency

to just kind of execute? >> No bill. >> Very good question. >> You want to take that? >> Um all the exec sponsorship, all the things is what it takes to go do these things. Um yeah, at the highest level it took basically all of the even the SLT, what we call our senior leadership team, everyone that reports to Satia to basically agree that yes, this is one of the biggest things that we did and we brought on Charlie Bell to go and start to run that program. Then it requires like a another very chief senior executive like Mike Rosanovich to pick up and help you know define like what these what what these mean also requires

you know big senior executives um like either you know like Nesh or some others in the in the uh business units to basically say I'm going to adopt things. If you don't get that buy in at that level, then you you you've got to have it from top down. STA said to us, security is the most important thing you do. Um, if there's one thing you get to do and choose security, that's that that's it. So, you do need that buy off that relaxes over time as risks go down, then obviously we start new programs up and we do things cuz, you know, we can walk and chew gum at the same time. But yes,

you do you do need that executive sponsorship to start the program. You don't need it to be there to continue the program. But having it so that everybody agrees and then everybody has the same hbook and are singing off of it, right? And here are the people that's going to publish that hbook for you, you do kind of need that to kickstart the program and then after a while it does start to become self sustaining. other questions.

>> No. >> Well, thanks for your time here. Thank you.