Challenges in Applying Machine Learning to Cybersecurity

Show original YouTube description

Show transcript [en]

all right I'm Tim hopper I'm a data scientist at silence I assume most of you at least heard of silence we are a next-generation antivirus anti-malware product unfortunately after I submitted to apply to speak here this is the first security conference I'm speaking at actually the first security conference I've been at thank you I applied in as I go they probably won't be interested so I bought tickets to the mandoline orange concert in Raleigh tonight so I'm gonna have to jet pretty soon after I talk but if you want to get a hold of me I'm happy for you too I'll stay around for maybe an hour or so but I'm gonna have to go pretty quick so my background is

in math and operations research which is kind of applied math not in cybersecurity so much I did a minor in computer science in college but I'm relatively new to cybersecurity in my life but been doing it for I guess a little over three years now in the data science space I came into cybersecurity through a data science and machine learning type applications I've worked at KTM and distill networks after that which is a malicious web bot mitigation company and then I'm now at silence love Western North Carolina grew up visiting my grandparents here and dream moving here one day I'm in Raleigh I work remotely for silence and I pictures of the mountains if you'd like to see

that so as I start there there's a distinction that I think is is mostly useless here which is machine learning versus artificial intelligence in the last couple of years artificial intelligence has had a resurgence as a term largely through marketing departments there is really no significant distinction to be made in talking about applying artificial intelligence versus machine learning so my talk is challenges as implying machine learning to cybersecurity you could replace that with artificial intelligence and it would be exactly the same except if you use the term artificial intelligence people might give you more money so it's less challenging but you know we see these articles coming around and you know as I was looking for

articles on the kind of the hype of AI or machine learning in cybersecurity there are a lot of articles you see also that are I think more reasonable approaches but you you know these these high particles executives love to post on LinkedIn say things like the scalability and automation capability machine learning can efficiently analyze large pools of data correlate simultaneous events and learn to separate a typical from typical behaviors thus enabling it to detect potential threats even at an advanced level and you know the title there being AI is the future of cyber security and certainly there there are a lot of great and valuable applications of machine learning to cyber security but it it's

not simply just a magic bullet I mean this is actually a grateful to dance talk just now and thinking about automating threat intelligence and someone could come along and say well you don't even need to do all this automation effort you just need to do machine learning you just need to do artificial intelligence to your threat intelligence work you don't have to do all this automation but manual automation that's that's do it and and the reality is that's just the manual effort the human effort isn't just going away overnight and applying machine learning effectively is an extraordinarily challenging and thus very expensive thing to do to try to give a little bit of technical context

I'm not going to go in deep into what is machine learning here but in this talk I'm largely going to be focus on this concept called supervised learning which probably many of you have heard that term machine learning can can roughly be divided into supervised and unsupervised learning methods and I'm focusing here on supervised learning methods because if supervised learning methods are hard to apply to cyber security unsupervised methods are even harder so you know the things that I'm suggesting would apply even for unsupervised methods but roughly supervised learning is methods for algorithmically learning patterns from data terrible grammar here and making predictions on unlabeled data so you're trying to learn patterns from data where

you have labels of something you're trying to predict that you can then apply the the patterns you've learned to make predictions on data that doesn't have labels so you could think in my my previous company and malicious web bot detection we have essentially enriched engine X logs coming in before customers making HTTP requests we get to see this HTTP request and we want to make some prediction is is the user or system behind that request malicious or not so you might go add a bunch of labels manually label data and learn patterns that you can predict in the future or in the anti-malware case you know you can collect a bunch of binary files label

them as malicious or not and then you teach your machine learning model which is just some sort of mathematical formulation to detect patterns in those in those files that correlate to malicious or not and then you can make predictions in the future so I'm going to use the term model pretty liberally it can mean a number of things but it's really just a function that we can train on some data to make predictions about something and training means finding parameters for that function to to make specific predictions for your example so you can think of the model in the general class that has all these free parameters that you can fit and then you are

automatically learning or mathematically updating your parameters based on your data to make predictions in the future so that's maybe a three minute introduction to machine learning just to if you don't have context to give you a little more context for the terminology and the rest of the talk so there are these are some applications I could think of machine learning in the cyber security space bought detection which I was describing distill network provides I mean this is a huge space of companies trying to stop malicious hackers from stealing their data or ddossing or doing account fraud all kinds of things it's a big space it's almost impossible to strictly do manually if for no other

reason it happens in real time you have to be able to respond to your HTTP requests instantly intrusion detection [Music] biometric authentication obviously a huge area these days malware detection like silent send and many others are providing these days using machine learning automated penetration testing and and of course one of the most famous examples and really one of the most successful examples here is spam detection and we all remember our email 15 years ago or we just got tons of spam into our inbox and now if you use a major email provider you almost never see spam anymore those are machine learning models or probably more realistically a whole combination of models and probably even some human

rules and things but spam detection is an incredibly effective system and I think there probably some reasons actually why about the particular problem that are making it easier than others that make it so successful so if you've done any study at all even you know casually into machine learning you've probably come across one of the most famous sort of toy data sets and that's the Fisher's iris data so Fisher was a geneticist a hundred years ago or so and he took 150 measurements from three different types of irises so he he measures four different parts of the iris which were the numbers in the chart there and then there's also the the name of the

varieties of irises which are pictured on the right so a classic example of doing machine learning or even you know if you if you took statistics classes before machine learning became all the rage you could you can do this with quote statistical methods which are the distinctions they are pretty blurry also but the question becomes can you train a mathematical model to receive these measurements as an input and output the type of iris as the prediction and the data on the left this is four-dimensional data so that the charts on the left are an attempt to visualize the four dimensions and in a two-dimensional sphere by the the just looking at pairs of dimensions together

and so you can see the in each of the dimensions the data is somewhat separated the the colors are somewhat separated from one another and so you could even just with a pencil go and draw a line that says well I think this line roughly divides these this data and then machine learning is essentially teaching a computer to draw some sort of optimal line and the hired space so this is a really common example it's very popular people say oh I'm here's here's how a machine learning works and you can go you know pip install scikit-learn Python and you could train a whole bunch of different types of machine learning models on this my zero accuracy and say okay great

here's a machine learning model I can make predictions and do all these things but the reality is even though conceptually there's a lot of overlap between this problem and cybersecurity problems where you are really just thinking of whatever data you're dealing with whether it be your web logs of your binary files or something you're just thinking of a mathematical representation a vector space representation essentially you're transforming your data into numbers like these and saying can I learn patterns in those numbers so there is that similarity and yet the the realities of a quote toy problem like this and using machine learning effectively in a real world production environment are are just worlds apart in terms of what it

actually looks like and my my desire here is not to be like a gatekeeper for machine learning and try to scare people off or discourage you from going out and just you know take an introductory Coursera class and look at problems like these really if anything my desire is to encourage you to be a skeptic as you hear companies marketing feels about their machine learning and artificial intelligence and and hopefully ask questions better and also just be able to evaluate the claims that they're making and to know that silence has solved all these problems and our product is perfect if anybody's listening

several aspects of this problem that distinguish it from many real-world problems and thence more specifically from cybersecurity problems one is we're dealing with a static data set here so if you're if you're doing machine learning models on this you have 150 data points you could go collect more data if you wanted but if you're simply operating on the toy example it's just easier to deal with data that you know and that you know isn't growing similarly it's very low dimensional problem so we if our four dimensions of data and it's just easier for us as humans to conceptualize it's easier for models to find patterns in it turns out it's actually there are a lot of things

about low dimensional data that the analogies don't extend into higher dimensions and that's a whole nother talk on itself but we can we can conceive things about two three four dimensions that if we think about hundreds of dimensions they the things we might assume don't don't apply the data is mostly separable and by separable we mean if you you know as I mentioned before if you look at those graphs you could draw a line between the colors and do that pretty effectively one of the challenges of doing machine learning on more complex problems is defining your features which is your numerical representation of your data in a way that makes it separable the data is pretty interpretable

I mean Fisher is these are measurements straight from your flowers interpret aural data makes it easier to interpret how your models are operating and understand how they're operating and understand when they're when they're failing if you wanted to get more labels to your data if you you only have 150 data points here so you needed a thousand data points you could pretty easily if you can find enough irises you could pretty easily go out and collect more measurements and similarly you if you knew I actually don't know how there's measurements work but if you understood how they worked you could train someone else to go make those same measurements pretty effortlessly that turns out to to

certainly not be the case in cybersecurity and finally this problem is somewhat stationary which is a technical term in the statistics world but when we think about stationary I mean it's more like how we just use it colloquially it's that the the the the the the the data isn't changing if we added more flowers or we had to do this in real time so say we're a Iris processing Factory and we had a machine that could take these measurements and we wanted to know what types of irises they were it's reasonable to expect that the measurements are not going to be coming from a different distribution of data over time and maybe the flowers regionally vary or even seasonally vary

but but we would hopefully expect that an iris now in an iris a few years from now the same variety is going to have measurements that are from the same type of distribution so all that to say cybersecurity is not a bed of irises the the iris problem as a toy example is helpful in understanding some aspects of what machine learning is and yet it really covers up all right that's not that's not fair really it just is not as complex as the reality of machine learning and real world problems i I think covers up is unfair because it's not Fisher wasn't making any claims that his data was applicable for those trying to apply and she learned to

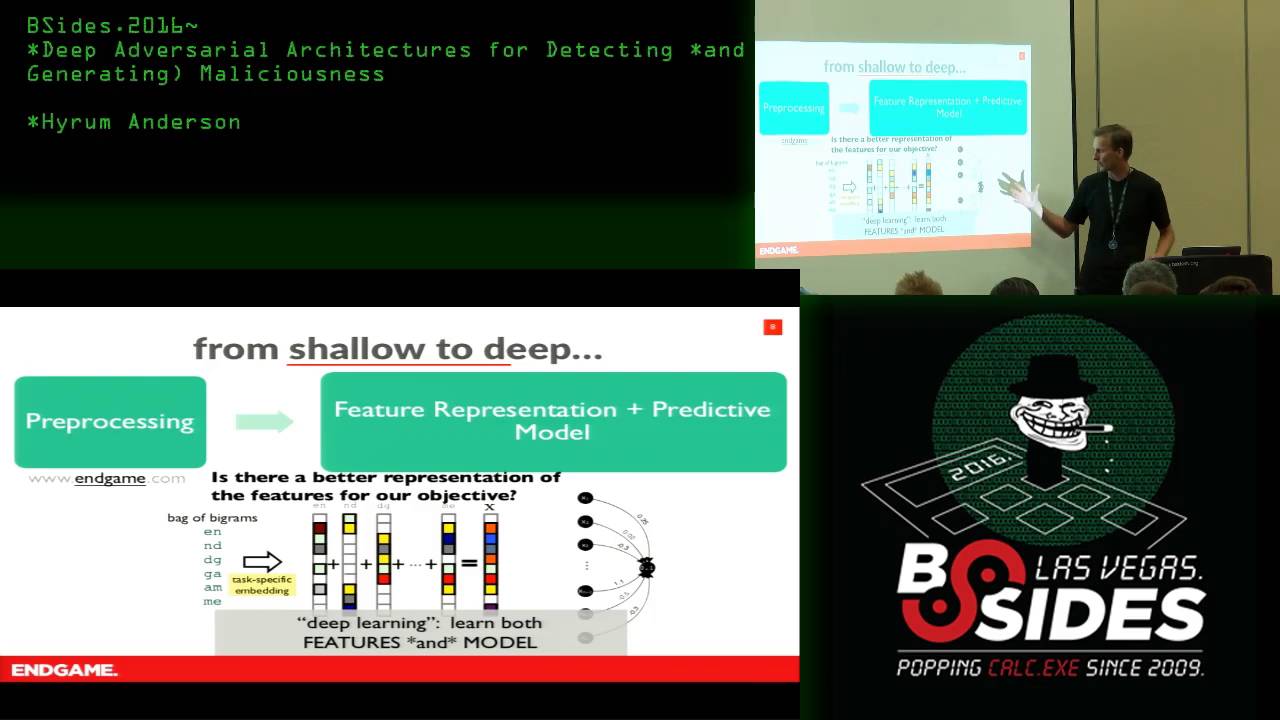

cybersecurity hundred years ago you might have heard about deep learning one of the the popular things today you might come along and say well yeah that this was true before you know 2009 but now we have convolutional neural networks or now we have recurrent neural networks so this is it's actually easy now that's just patently false so the deep learning is really extraordinary at solving some problems very well and yet most of the caveats I'm going to give here or still completely apply so don't let people trick you into that and for the most part deep learning is not categorically different from the things that people were doing prior to it it has some it does have some advancements

but it's not it's not just completely different so nine challenges to applying machine learning to cyber security in the next 25 minutes one is defining the problem so we could think about all kinds of definitions to cyber security here's just an arbitrary one cyber security entails the safeguarding of computer networks and information they contain from penetration and from malicious damage or disruption in dance talk this morning he was saying one of the the challenges that you need to accomplish for effective threat intelligence is to really understand what problem is that you're trying to solve and in my relatively limited experience with cyber security coming into this world in the last few years you realize that it's easy to say terms

like malicious or damage or disruption and yet to concretely define what that means in a way that you could teach a computer to recognize those things can be significantly more challenging it's not it's not necessarily black or white how you define those things charles kettering who is head of research at General Motors in the very early days and famous for for a number of things in that space has a quote a problem well a problem well stated is half solved and that that could not be more true in in applying machine learning to your cybersecurity problems that just getting to where we know what what problem it is we're trying to solve is at least half of the

work and actually solving the problem so in the malicious web bot space well there's there's two immediate questions you can ask you have traffic coming to your website and you want to block malicious spots the first question you have to ask is what is a bot I mean a bot could could does it strictly mean a computer operating independent of a human operator all kinds of difficulties there and if you define it can you detect it as another question and then secondly what is malicious what's a bot with malicious intent versa but without malicious intent in many ways that depends on who the who the end-user is as to how you're gonna define that also you could think of a

sense in something might have both malicious purpose or intent and not I mean yeah I mean you can get into ethical questions there they're just all kinds of questions that have to be answered before you're deciding how to do that in the malware space you know what is malicious software a temptation for a company blocking malware is to prevent any system from running Auto IT because you can do all these dangerous things with auto IT and yet many people use it as an important part of their toolkit and running there there are companies it's example of software that has both good and bad uses and and can I can I necessarily know you know I want to try

to come up with the definition of malicious software just because I define it can I actually know it when I see the software how do I know that I can know that and and similarly does the definition of malicious stay constant over time I mean you could mean a somewhat simple example is you could think of a piece of malware from 20 years ago or something that that can no longer run on a system or maybe it was malware that operated on a system that we know has been patched to where the Maur can no longer execute the malicious functions if it can't be if it can't do any damage but we still consider it

malicious and you could think that can get more complex examples of that but all that to say is nailing down what you actually mean by the problem is very challenging and going to a data science team potentially speaking from experience here going to a data science team who aren't domain expertise aren't domain experts in your particular realm of cybersecurity and telling them oh go block this malicious thing go prevent this malicious thing and then just kind of hemming and hawing when they're asking you what you mean by malicious what you mean by damaging but just doesn't work there's no magic magic thing in data science that knows what what malicious means similar to that supervised learning is is effectively

pattern matching I presented this talk to my colleagues this week and my one colleagues complaint was that there are no dog gifts so this is the best I could do that this was somewhat relevant but anyway doing machine learning requires a sufficiently large amount of accurately coded examples so you have to you're training a computer to recognize patterns it has to have some kind of ground truth to recognize those patterns from it and in most cases it needs ground truth from both what we call positive and negative examples so if you're talking about malicious versus not you need a good number of examples of malicious things and a good number of examples of benign things and this is

one of the biggest challenges for anyone applying machine learning and I saw a relevant tweet last night quote from every CEO ever I thought a I meant we didn't have to label data anymore and that's just just not the case having data good label data is still essential for for doing machine learning and this is one of the areas that in the in the space of image recognition deep learning has really made changes because it has essentially and reduced the need it's allowed the models to learn more things from unlabeled data and then learn faster when you then have labeled data but that's deceived people into thinking that we just don't need label data anymore

so in in the case of silence or anyone doing machine learning in the anti-malware space we have teams of human experts who are looking or executing files and sand boxes who are looking at you know decompiling files doing all this research into whether or not things are malicious we're collecting examples of non malicious software to just store as examples so that we can learn we need to be able to learn the patterns both in malware and in non malicious software this is a huge challenge is very expensive and and this is absolutely essential and this going back to the IRS example you're talking about how the iris is you could really train someone to label iris data by taking the

measurements this is it just requires more advanced skill set to train somewhere to train someone's who distinguish between examples in the security space and more advanced skill set means more expensive this is what is definitely one of the hardest problems in this in all my symbols challenge three what's the cost of predicting incorrectly so machine learning models are never going to be completely right all the time if you want to if you want to never incorrectly say that something is malicious with your machine learning model you're just it's never going to be able to say anything at all if you if you're making predictions there it's gonna be it's going to be wrong at time

so from the business perspective you need to be able to quantify or at least attempt to quantify what are the implications of a machine learning being wrong machine learning model being wrong particularly if it's making automated decisions so again using examples from spaces I've worked in in the malicious web but space you know your machine learning models wrong suddenly on Black Friday it starts blocking people from accessing your website or trying to buy items that you have on sale and you're immediately losing revenue because you're blocking people from your website similarly in the bot blocking space if you block humans incorrectly they're going to get mad at you and they might not come back again

or you might fail to block a you know there's an advanced botnet you fail to block it at DDoS is your site that can be very costly and and I think in that those cases well the loss of revenue is easier to quantify sometimes I think the cost of being attacked might be harder to quantify in the in the malware space you know obviously if we fail to block malware you know our customers get ransomware on their computers they blame us for not blocking it very costly to them very bad for our reputation we block things incorrectly and this is actually a important nuances if we block something incorrectly we might annoy people a little bit but in the malware

space you can then go in and like whitelist something it doesn't have as detrimental effects as like maybe blocking someone from buying something on us on Black Friday and the the nuance there is that there can be a huge imbalance for a malware provider there's a big imbalance between blocking some playing in correctly that's not malicious or failing to block something malicious so failing to block something malicious could have these huge detrimental cost blocking something that's not malicious is mostly just annoying unless you do it too much and it really damages your reputation and why that nuance is important is as your training machine learning model says you're fitting your model to your data most machine learning models if you just

use the default settings are assuming that those two costs are exactly equal that the the cost of a miss classification a false positive or false negative where the terms used are equal in cost but the reality is probably in most spaces that's not true and then beyond that the challenge is how do you actually quantify those costs or measure them to evaluate your models and evaluate whether or not they can be used in production I'll try to refrain from saying at each point that this is one of the hardest problems because these are all these are all real challenging problems number four model predictions decay over time this is the technical term is model drift that model machine

learning models being used in largely human systems and and maybe you know other things tend to get worse over time unless you're continually updating them so one of the advantages of silence as a product or potentially other machine learning driven antivirus any Maur tools is is we can actually predict we can block malware in the future from models that we train now because we're we're not looking just at databases of hashes we're looking at patterns inside malware so we can know we can stop things in the future without having seen them before that's one of our huge selling points and yet if we just stopped now and said well we can do it we can predict things

in the future we can block malware that has not even been written yet this stop now and rest on our laurels and drink a lot of beer are our models they're just gonna get worse and worse so there could be all kinds of examples for that you could think of or reasons for that that you could think of one being the malware authors are going to learn how to work around our tools or simply that the the the systems are changing the operating systems are changing and updating so maybe we're looking at patterns that just aren't applicable in the future yeah so there could be other reasons there as well but if we just stop and

you these trained models they're gonna get worse and worse so once you start doing this you have to stay on top of it and this is not just in in the cybersecurity space but in any type of machine learning models dynamically updated models are rarely used if if someone tells you that they're machine learning model is learning all the time as as new data comes in they're probably actually lying to you that's what every marketing team wants to say and there are cases in which you can do that but the the challenges of doing that are typically insurmountable for for a number of reasons sometimes just the structure of the machine learning models aren't conducive to what's called online

learning which is that they're learning from data as it comes in there's way too much risk and the models being gamed if you're doing that you could you know you're you have a model that's recognizing malware and someone does something to just throw tons of examples at it that are forcing it to go off course not they're just a number of other reasons this is a really really really hard thing to do everyone wants you to think that they're doing this but it's it's virtually impossible in many applications exception being sometimes you can make like some local adjustments something like you think of face ID on an iPhone or something they have a machine learning model that's making

these local adjustments to adapt to your face that is that is actually a real-time example but it's there's still like underlying it's it's making changes to small parts of the model or their underlying things that are having to be static and then updated in a in a more controlled environment

adversarial actors have an incentive to bypass your models so this someone made this video when I was working in the bot mitigation space and right so it's this robot arm clicking the I am not a robot box and I mean it's just you know it's silly but the there's so much reality behind this as to what what is going on and that you know so the computer has cookies that Google thinks is good so there's it's not it's not quite as simple as this but the the reality is that if you are trying to stop someone from doing something that they have an incentive to do they're gonna try to find ways around your model and this is

something that we spend a lot of time on in the end the malware space is making our models so that you can't just you know add a bunch of junk code you have some malware and then you just add a bunch of junk code that doesn't do anything or maybe doesn't even execute and all of a sudden your your PE file looks different and so then it the model fails to to classify it as nauseous or things like that we we spend a lot of time hardening our models to prevent this but malicious actors have a incentive for whatever reason financial or otherwise to get around your security products they're gonna they're gonna continue to

try to find ways around it and there's a subtler thing here which is that repeated access to model predictions allows adaptive behavior so if you have your machine learning model accessible in some sort of Oracle form which unfortunately an antivirus or anti-malware tool is either in simplistic ways or actually they're more kind of advanced mathematical ways people can yeah that's a good that's a great way putting they can model your model and they can intelligently decide how they throw data at your model to see what it predicts to then try to find out ways to to work around it this is basically unavoidable in the any malware malware space but in other spaces you might be able to limit access that

someone has to your model but your your in some sense giving away data as you make your predictions visible challenge six feedback loops blocking malicious actors changes the nature of your data so the the bots mitigation problem is a really good example of this so you have a bunch of weblog data that you manually somehow magically classify as a malicious or not and then you want to train a model to recognize future logs as malicious actors or not so then you put your model into production and start running it and then you start blocking all this malicious traffic which is changing your log data so you have less malicious traffic because your and a reason for that is it you know in weblog

data a malicious actor doesn't just have one log line they have hundreds or thousands or millions of log lines and if your able to effectively stop them they might just have one or two you see them a couple times you block them out of your network or for making your HD there HTTP requests and they're gone from your data then your model drifts as we talked about your model decays over time and quality so you need to go retrain your model and you don't want to retrain it on old data you want to train it on new data but your new data is biased against the malicious actors because you're removing them from your data so then

training a model on data that's biased from the naturally occurring data that you would have apart from your mitigation strategies so this creates a feedback loop that if you don't take into account you retrain your model and suddenly you get worse predictions and you you you have to explain why there's a really amazing paper from Google researchers called machine learning the high interest credit card of technical debt great title and it's just a great paper if you're interested in a production machine learning system or if you're doing it already and you haven't read this paper you really should go read it it's very approachable it's not not highly technical but they talked about this feedback loop problem I mean

just a number of the other challenges of running a production machine learning system that may scare you off from from really doing that challenge seven data access I'm going to speed up a little bit so I so I've some time for questions by the nature of security problems we're dealing with data that's restricted are you as the researcher going to be able to get access to your data to learn patterns from it so you can make predictions of the future from the future similarly can you get access both to malicious and benign class data some in some examples you know someone's happy to give you their examples of malicious data but they can't share you

share with you any benign data because it's like their private business files or whatever somewhat unexcited Lee gdpr is changing this also in that you know if you have customers in Europe they they might be legally prohibited from allowing you to take their data into the u.s. to train a machine learning model on it and then send the machine learning model back to Europe we're hiring malware analysts in Ireland specifically for to look at data that we're not able to bring to the US it's just the reality of the way it is but it's actually changing how people are going to do business are you going to be able to get access to the data

that you need challenge 8 model interpretability many classes of machine learning models are inherently difficult to explain and and by that we mean the predictions that they make if someone comes assist you well why did it make that prediction on this piece of data the even defining the what explained ability means is a challenging thing on its own but assuming you have some definition some machine learning models do such convoluted things with your data that it's very difficult to give any explanation other than looks like a literal showing what it did which is like oh well we just modified it or multiplied these things by these decimals and did these mathematical operations and then we got a result

which is meaningless to anyone who really is trying to understand why that happened but if you provide some sort of security product that your CEO dog foods something's gonna happen where your CEO sees something get blocked or something not get blocked or something along those lines that he doesn't expect or she doesn't expect and they're gonna come to your data science team on Monday morning and tell me why did this thing happen and you get to be MARY POPPINS the the neural network and just walk away and say I can't explain this but analyst teams you know your customer success teams your customers potentially regulators in some spaces are going to want to know answers to

these questions and if you haven't put forethought into this it's going to be very hard for you to provide those answers yeah this is a this is a huge question of machine learning in general that people are wrestling with but it comes up a lot in my work there's more more to be said there but I'll save that for another talk to you finally this is my worst title challenge non Pareto model transitions and this is probably also one of the harder things to conceptually understand if you haven't worked with machine learning but machine learning models as you update them which we call retraining they can introduce regressions in the classifications and and this is regressions not in the

statistical sense but more unlike the software testing sense where your your model that once made a correct prediction if you update it in some examples might suddenly start making incorrect predictions and even worse for some types of machine learning models because most machine learning these days is like a probabilistic type methods they're depending on on these statistical algorithms for finding the the parameters for training them you could have a machine learning model that you train the exact same model on the exact same data and you in some cases even if it's a very good model in some cases you could be getting different predictions just because of the nature of the probabilities so this can

introduce security holes we deal with this in in the malware space you want to retrain your model and you you've always you know your models always blocksome know malware thing and then you train your model and for whatever reason kind of going back to explain ability it might be hard to explain it suddenly stops blocking the model because it's we're dealing with probabilities or it suddenly stops blocking the malware or maybe you know you release your new model and you're you're blocking malware and all of a sudden your new model is blocking Microsoft Word on all your customers computers this isn't this is not like it's universally worse it's not like it's just getting everything wrong

the reason for choosing non purrito as the term is it's it's it's just that it's probably not going to be better in every possible instance it's it's gonna be better in a lot of instances but in some instances you're gonna have these regressions where your model suddenly starts being incorrect

there are ways that you can then deal with this but however that is the reality is that you just have to deal with it you can't you can't just let this happen and in many applications probably there's someone you can get away with that so in conclusion nine ways that machine learning is challenging to apply to cybersecurity problem one just defining the problem two getting labels on your data having your data being labeled as malicious or not or whatever your taxonomy is three what's the cost of being wrong can you even quantify it and if you can quantify it how it spins up is it going to be to quantify then can you fit it into your your models once

you've done that for models decay over time in in the real world you have to deal with models getting worse as they as the world around them changes five adversarial actors have an incentive to work around your models six biased feedback loops are going to change you're retraining you're updating of your models unless you control for the feedback that you're getting recognizing that as you act on your your data you're changing the the nature of the data seven can you even get access to the data that you need can you get it out of Europe well your customers allow you to have it do you have to work on their system can you put in AWS all these

questions eight model interpretability once you get a machine learning model and it starts making predictions are you going to be able to tell why those predictions were made and what they mean and nine model instability your models as they transition might suddenly make bad predictions that that are going to cause you trouble I'm happy to take questions I don't know on the time

yeah so the question is can you like use machine learning to detect interesting things and then maybe somewhat manually put those into an expert system or something like that yeah there's a lot of possibility for that depending on the application you could certainly do that like a manual review you train your machine learning model and then it's detecting things that you're you're manually evaluating that completely is application independent but there's there's certainly a lot of opportunity for and many problem spaces for kind of the human machine learning model interaction - yeah I mean you know it's basically like Google right Google is a machine learning product that's just it's just ordering your cert it's doing

the the search and then ordering the search results right so it's putting the things at the top you think might be interesting there are a lot of analogies to using analysts in products machine learning products the other all right we're gonna wrap it up there I'll be around for a little while people have more questions I'm happy to talk thank you [Music] [Applause] [Music] you [Music]