Navigating the Ethical Frontier: Governance and Ethical Implications of AI

Thank you for coming, Dave. Welcome to Lisbon and to BSides Lisbon. You're the last talk of the conference. So, thank you. And this is your stage. >> Thank you. Oh, there we go. Thank you for having me and thank you to the intrepid souls that have stuck around. I do appreciate it. I know it's always kind of hard. So, I've been in security now for 31 years and it kind of pains me to be able to say that. I have done every role you can think of in a security organization except for cryptography, because math is not my strong suit. And along the way I've done various things, like I ended up becoming a part owner in a whiskey

distillery as well as a soccer club, and it just keeps life interesting, to say the very least. So in my day job I work as global advisory CISO at 1Password, and that is, well, I'll get into that a little bit in a moment. I also serve on the advisory boards for several other companies in the AI space, as well as this particular one here, Sightline Security. This is a nonprofit that does cyber security work for other nonprofits. So, battered women's shelters, things like that. They provide them cyber security services. The only reason I put this up here is because I have this conversation over and over again where I'll ask people if

they've heard of 1Password and they say, "Yeah, I use it at home." Do you use it in your office? No. Okay. 18 and a half years as a mom-and-pop shop doing one thing very, very well. And for the last year and a half, we've been in the enterprise space doing SaaS management, password management, security for AI, and all those sort of things. So that's the only reason that's up there. I am Canadian. In today's geopolitical climate, I think it's kind of necessary for me to point that out. Reasons. And I started out as a hacker. In Canada that can mean a little bit of a different approach, and it's really kind of

interesting now that the team that I run at 1Password is called special operations and engagements, and it's two parts. Part of it is ex-CISOs that are jaded like myself, and the other half of the team is security AI researchers, where we break various types of AI implementations. But I still end up feeling like this guy. And more often than not, when people ask me what's changed over the last 30 years, we really do seem to have the same conversations over and over again. Go back to the advent of the internet, then cloud, Bluetooth, Wi-Fi. For some reason, we keep making the same mistakes over and over again. And we have yet to learn from those errors.

We have to look at things like the modern supply chain. Nowadays we have all sorts of different aspects: SaaS, integrators, processors, libraries. But the risks are fundamentally the same. It's just that now, when we're looking at it from the perspective of artificial intelligence, the speed and the blast radius are far more significant. Now imagine if artificial intelligence had been part of the mix in these particular data breaches. In and of themselves, these were pretty bad. And I don't put these up here to make fun of anybody. This just shows that it could happen to anyone. Now, when we're dealing with the artificial intelligence part of things, we look at the pro... pro...

Yeah. Try that again. The probability of having a significant blast radius or a black swan event like we've never seen before. And the thing with a black swan event, as we look at it, is that it was something that was unpredictable, it was something that had a significant impact, and in hindsight, ah, we could have fixed that, and yet we didn't. Anybody remember the Mirai botnet? We got some homework in here. So the Mirai botnet was a botnet that was built out of these. It was various different IoT devices that were connected to the internet and they had hardcoded default credentials. This is one of those things that absolutely makes me insane, especially when you look at the types of

credentials. Root, admin, admin, admin, all the way down. At the initial implementation, I think it was 62 different devices that were on the internet. The attackers built this into a botnet. And at the time, it launched an attack of 1.4 terabytes of traffic. This was the biggest distributed denial of service at that point in time, and it was launched at Brian Krebs's website. But people say, "Oh, Dave, that's old news." I'm like, "Okay." So, I had to go search it up. And when I first did this search, I went, "Oh, okay. Wait a minute. Let me just check that date right there and zoom in." So, that was January. And I found other articles in

the intervening time. The Mirai botnet is still a going concern. And I bring this up because we're not learning from the mistakes we made years ago. And it still feels like everything's on fire. If we roll it back to something as simple as default credentials or passwords, I look at it very much as a house key. If you've ever seen me give a talk before, I talk about this a lot. You take your, you know, your key, you lock your door, you go off to school, go off to work, go off to buy groceries. Your house doesn't know any better, but your stuff is safe. If you lose your key somewhere along the line, somebody picks

it up, and it has your address on it or a picture or something like that, then someone of malicious intent can gain access to your house. Your house doesn't know any better, and we have to find ways to improve that type of security, because the attackers already know this and they are approaching it in ways that are making them a lot of money. This particular group, this is an older story here, but this was a great example where it was a group that was responsible for $71 million in stolen revenue from various different sites because people's passwords were stolen. This was four people. This is one of many types of crews out there that are

doing these sort of things. And unfortunately, the data breaches that we talk about keep happening. Back in 2012, I started tracking data breaches because apparently I needed a hobby. And the biggest one at the time was about 6.9 million records. That was big news back then. Nowadays, we are talking about orders of magnitude more, billions of records. This is from a site called informationisbeautiful.net. And I use these examples over and over again because it really lands. We look back and we see 6.9 million records. Now we have 2.7 billion, a billion, 1.3 billion, and we want to layer in artificial intelligence. We have to figure out how to improve matters because the attackers are going

to keep coming. These are ransomware attacks by year. Back in 2017, they all started up. 2018, 2019, defenders figured out how to deal with them. Something happened in 2020. Wonder what it was. Oh yes, the pandemic. Unfortunately, people were scared. So, the attackers knew this and they preyed upon this, and they would send out emails saying, you know, if you don't click on this link, you're going to lose your healthcare coverage, or so on and so forth. And obviously, that had varying degrees of success around the world, depending on the market you were in. But the attackers have realized, as they've gone through this over time, if they come over to this far

side, which is the most recent data I was able to get my hands on, the smaller the bubble, the smaller the organization. The thing with these organizations is they usually are short-staffed from a security perspective, and the attackers will go after these ones specifically because these smaller organizations by and large have contracts with the larger ones. So if you're able to compromise these, you can then pivot into other organizations. Ask me how I know. I've lived through that with another company I used to work with many years ago. We do have to approach it in a way that makes sense, because then we layer in things like LLMs and all the rest of it,

where the security perspective is absolutely changing. Has anybody here heard of EchoLeak? Not seeing any hands go up. It might be the lights. So EchoLeak was a test that was done by a third party against one organization where they sent an email. The human at the other end opened the email, read it. It was perfectly benign. At the bottom of the email were instructions for the Copilot agent written in white text. The Copilot agent read them, executed the commands, and then deleted all trace of the conversation from the logs as per the instruction set and gave the remote party full access. To Microsoft's credit, they fixed this before it ever became a news item. But

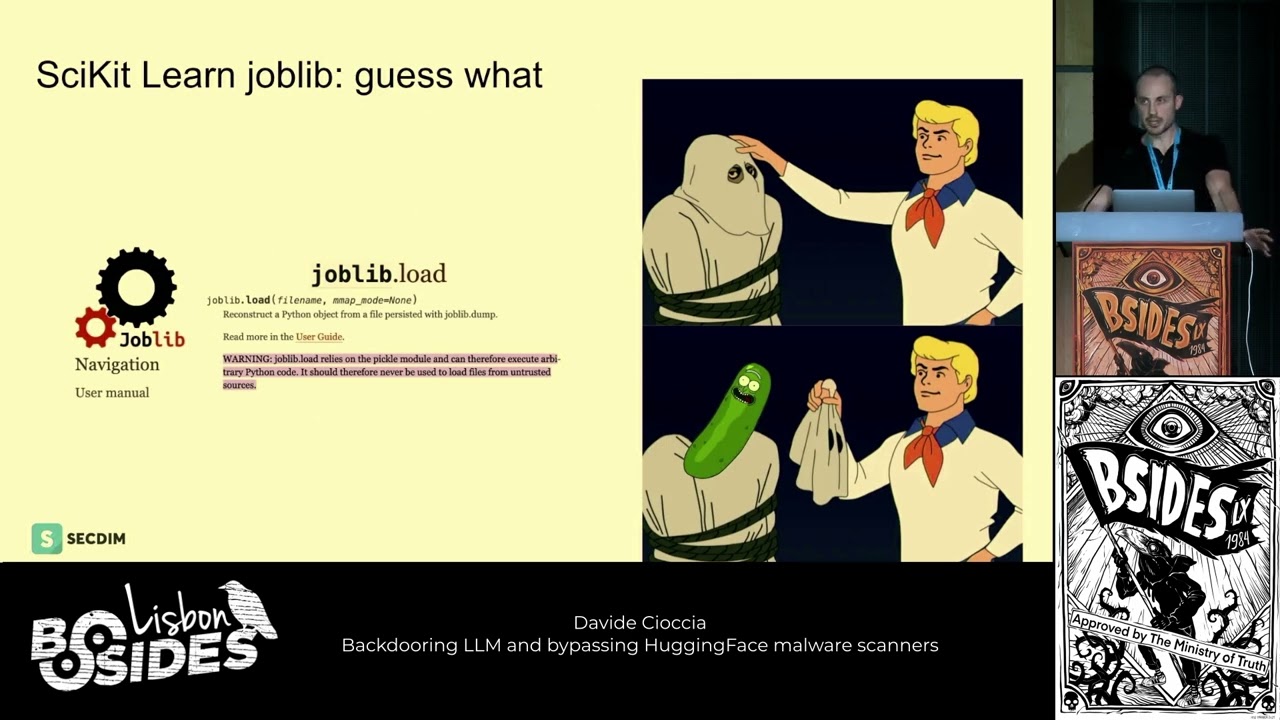

as a former pentester, this would have been kind of one of the first things I would have tried. So, it's actually kind of staggering that this is a problem that we are dealing with now. And when we take artificial intelligence and overlay it, we're looking at phishing, malware, data breach, all the rest of it. But we're also looking at data poisoning. We're looking at access. We're looking at bad things that can and will happen. For example, Hugging Face. I use this site quite a bit. And in this particular case, some people were able to gain access to the tokens. And this was a problem here because a lot of

people build out their AI test projects on this particular site. Flip it on its head. Something like this, 10 years ago. This is an example of a company in Europe that I was dealing with, and they had somebody call in pretending to be the CEO. We've all heard this sort of example. In this particular case, the CEO had just walked out to the parking lot, was going on vacation. The call came in. They're all on the phone. They're all bewildered. They're like, "He was just here." Well, he forgot something. He came back into the office and he got to listen to himself on the call. This was 10 years ago. So, the amount of effort that would

have had to go into building out this particular attack was really significant. Nowadays, you can do this off your cell phone. AI is an amazing tool when used correctly and also can be a real big problem, because the attackers know this. They are using it. WormGPT, FraudGPT, there's all sorts of different platforms out there that are utilizing this. And even more recent news, which I'll get to in a little bit. You can use it from the perspective of, you know, threat intel, all the automated triage responses. In our own organization, we use it for gathering information and disseminating it within the organization to other parties. We have to understand that agentic AI is

something we need to make sure that we are approaching from a coherent perspective early on. And I've been doing a lot of these CISO round table dinners around Europe. And the conversation that keeps coming up over and over again is they don't know how to get their arms around it. They do not know how to secure their environment. They have governance in place for everything that we're used to, but when it comes to artificial intelligence, there's a nuance that's applied to it as well. Agents are moving away from being co-pilots to being autonomous actors. They are the intern that you're training up to do a particular task or the way I like to put it a toddler running with

scissors. They can chain tools. They can do all sorts of things. But with that comes different kinds of failure modes. When things do go bad, they'll go really, really bad. But you know, in security, we do tend to follow our own rules. Historically, we were always that flaming sword of justice. The answer is no. Oh, would you like me to say it again? The answer is still no. That did nothing to enable the business. That did nothing to empower people going forward. And silly things would continue to happen. And we had a lack of diligence around the data. One organization I was working at, we had an assessment that we did of all the super user status

accounts in our organization that belonged to people that were no longer there. And as we went through this, we discovered there were 10 super user accounts that had root access, all the rest of it. But one of those accounts had been used in the last two years. The problem there is that particular individual had actually been deceased for five. This may seem very trite, very trivial, but when you add in artificial intelligence there, you expand that problem very, very quickly. And when you think about it from an agentic AI perspective, it's accessing all of these different systems. It is going in and trying to access APIs and things like that. There are credentials involved. So you have to

figure out ways to manage that. Agentic AI is something that can perceive, plan, and act. And then the key traits here are, you know, dynamic tool use, and it is different from a passive LLM in that it can take actions on your behalf. I built out a very simple agent that would go out and get me travel information, because I was trying to book an off-site for my team, and I said, just go do the research, and it went off and did the research. A day later it came back and said, well, I found this in this particular location. Would you like me to escalate my privilege so that I can contact them directly and book the reservations

for you? I never asked it to do any of that. I had an agent that was very basic, and it had come back and asked to increase its privilege. And these are the kind of things we have to get our heads around, because things like this particular thing happen. There was one CISO at a dinner that I did not too long ago who said somebody in their organization pulled up their internal LLM and said, "Who doesn't like me at the company?" He got an answer. The copilot pulled information from the HR system and told him verbatim who didn't like him. This is why we have to put guard rails in place. This is why we have to set

goals before we implement any AI projects going forward, because it shows up everywhere. We have it in SOC triage, identity access management, threat hunting, third-party risk, all of these different places. And this is something that we have to be very clear about when we're looking at agentic AI and the agents that we're dealing with. They have a plan they go through, and they iterate through this, and you can use this to assess any agent in your organization. You have the sensing aspect, where it's pulling in information. Planning: setting out the goals as to what you want that agent to do. I didn't specify clear goals for my travel agent. That's funny. I didn't even think about it that way. And it

went out and did things that I didn't ask for because I didn't set proper goals in place. It can act by going through and taking actions on your behalf, as well as going through and evaluating the outcomes. You can iterate through all of your agents using this sort of thing, because when you're dealing with SPAR as an example, it has maturity levels. Level zero: advisory only. Then sandboxed actions, low risk. High risk needs personal approval. And this is one of the things I ran into when I was in Stockholm a few hours ago. I was dealing with a CISO there and, yeah, I'm running on three hours sleep, so if I seem a little bit out of it I

apologize. So they had a tiered access program within their organization. So for very basic stuff the AI would take care of it, but for high-risk things the human in the loop came into the equation and they were able to step in and have a way to deal with it. And level three is a way to kill switch and roll back. So if something does go wrong, how do you respond? How do you protect your organization? How do you get back to a place where you are in a good state? These are the kind of things we have to take into account, because if we can't explain in a transparent way to an auditor, or whoever it happens to be, as to what happened,

it's going to be a huge problem. But when things are done right, you can get risk reduction, better mean time to respond, analyst focus. You can have 24/7 coverage. You can have consistent policy enforcement when these agents are set out correctly. But we have to look at the ethical fault lines. People don't like to talk about ethics a whole bunch. I was talking to a rather senior AI developer about a month ago and I said, "Well, how are you approaching ethics for your product?" And he looked at me like I had three heads. He hadn't even considered that. And he's like, "Well, it's nice to people. It doesn't do anything, right?"

There was no conversation about who's accountable if things go wrong. You can't send an AI to prison. Who's going to own that particular risk? How do you deal with bias and drift? Every time you have an algorithm or any sort of instruction set that's put in there, it's put in by a human. We all have our biases, whether we like to acknowledge it or not, and, good or bad, those biases still get introduced into the system. And the system picks up on that and it creates its own responses. How can you explain the decisions that are being made by your AI systems, and how can you undo harm quickly? We have to have a chain of custody to deal

with accountability. We have to have some way to have that human in the loop to manage the risk, and transparency is absolutely essential. You have to have a way to go through and have action cards to explain what you're expecting the behavior to be. Prompt and policy versioning, and having reproducibility. So if something happens, how do you go back and show how it happened or why it happened to an auditor, investigator, whoever it happens to be? And you have to have decision trees with summaries so you can document all of this information. I've seen a few companies that have let go thousands of people because they thought AI would solve everything, but they hadn't gone through and set this sort of thing up,

and they're realizing the error of their ways and trying to hire those people back. We have to figure out a way to protect our organizations and deal with bias, drift, and the ethics of our data. We have to have good controls and safeguards in place. You have a matrix set up to deal with the context of what you're dealing with in your organization. The risk tiers, like I was talking about earlier: for the very basic, the agent can do the functions on its own, but for something that is high impact, the human has the ability to intervene. And if it's at a certain scale, how do you manage that so that it doesn't become a bottleneck? And the kill

switch and the automated roll back
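
The risk tiers, human intervention, kill switch, and rollback described here could be sketched roughly as follows. This is only an illustrative sketch, not the speaker's or SPAR's actual design: the names `Tier`, `Action`, and `AgentGate` are hypothetical, and the mapping of tiers to numbers is an assumption.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable, List


class Tier(IntEnum):
    # Hypothetical tier numbering, assumed for illustration only.
    ADVISORY = 0   # agent may only recommend, never act
    LOW_RISK = 1   # agent may act on its own
    HIGH_RISK = 2  # a human must approve before the agent acts


@dataclass
class Action:
    name: str
    tier: Tier
    run: Callable[[], None]   # performs the action
    undo: Callable[[], None]  # reverses it, used for rollback


@dataclass
class AgentGate:
    approve: Callable[[Action], bool]  # human-in-the-loop callback
    killed: bool = False
    history: List[Action] = field(default_factory=list)

    def execute(self, action: Action) -> str:
        if self.killed:
            return "blocked: kill switch engaged"
        if action.tier == Tier.ADVISORY:
            return "advice only: " + action.name
        if action.tier == Tier.HIGH_RISK and not self.approve(action):
            return "denied by human: " + action.name
        action.run()
        self.history.append(action)  # remember it so it can be undone
        return "executed: " + action.name

    def kill_and_rollback(self) -> int:
        """Stop all further actions and undo past ones, newest first."""
        self.killed = True
        undone = 0
        while self.history:
            self.history.pop().undo()
            undone += 1
        return undone
```

In a sketch like this, the `approve` callback is where the bottleneck question shows up: every high-risk action waits on a person, so the tier boundaries decide how much human time the agent consumes.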

There's that three-hour sleep catching up with me. Sorry. You have to have your lines of defense when you're dealing with AI. You have to be able to say, you know, have the build and run. You have to have the understanding of what the compliance is that you're dealing with, because you may have different regimes depending on where you're dealing with in the world. You have, you know, AI policy in Europe. You have different related pieces of legislation around the world with various types of associated penalties with them. And you have to have some sort of independent assurance. You have to have an acceptable actions matrix. Being able to go through your data and understand from the row

perspective, column, cell, right down to the nitty-gritty, what exactly is supposed to be happening within that particular system. Having the ability to have the human collaborate with the AI is essential. Having an AI that just runs and does its own thing without any human intervention is going to lead us down a rather unfortunate path. You have to be able to escalate and intervene when something does go wrong. Before you turn anything on, red team your agent. I was at a round table at RSA last year in San Francisco and we had a hacker round table. I don't know why they had me there because I'm so far out of that. But I asked them, I said,

"Have any of you" (there were three black hats at the table) "tried to pull the credentials out of an agent?" And all they did was put their heads in their hands. They hadn't even considered it yet. That's how early days it was at that point. But I can guarantee you they've gotten further down the road there. And how are you managing those credentials that your agents are using? Are you managing it as a human identity or a non-human identity? What level of access does it have? And how can you tell that it's an agent using it versus a human? You have to have some way to monitor and

understand what's happening in your environment. Immutable logs, guardrails to deal with violations, and how you get back to the rollbacks. I keep hammering on that. How do you measure? Having good measurements in place to understand what percentage of overrides, how many times a human has had to intervene, how many near misses you have had to deal with, because as we put AI into our systems or have systems that are being, you know, augmented with AI, that blast radius of what can go wrong is going to expand exponentially. Look at it from the perspective of a financial services company. I was dealing with one just yesterday where they were talking about how they had

this agent in mind that was going to go out and do negotiation for mortgages for somebody. When they wanted to get a new mortgage, it would go out and try and set it up for them. I said, "Well, what if somebody of malicious intent got access to that with stolen credentials and started getting mortgages assigned?" And they went, "Oh." They hadn't really wrapped their heads around that particular aspect of things just yet. Healthcare: you have electronic health records that are being put into these systems. If something goes wrong, you have to have some way to address that. Critical infrastructure: this less so. There are bits and pieces of this cropping up in various places. I was at

a conference in Copenhagen just a couple days ago where they were talking about these sort of things. And by and large in the OT space it's more towards you know billing systems and HR systems and things to that effect as opposed to um excuse me as opposed to running SCADA systems and HMIs and the rest of it but that could be coming. There are different kinds of threats that we have to look at when we're dealing with resource injection, messing with the data. You know, if it's an RPA, if you change the data even slightly, it dies. Tool abuse as well as supply chain risk. Is the model that you're using something that is accessible as an open

source model? Can somebody manipulate that model? Can they mess with it? Can they change the data that's coming in? These are the things we have to consider. We have to look at the controls that we can put in place. Input sanitization. Sadly, this never goes away. SQL injection was on the OWASP Top 10 forever. And roughly the same sort of principle applies. Having RBAC and scoped credentials, so that the credentials that you're giving to your agents are limited to having the ability to do their job as opposed to doing everything. Making sure you have signed policies in place. Compliance is absolutely key. From a high-level perspective, you have to be able to map your internal risks and you

have to look at how you are going to apply security frameworks against these systems. You have to figure out an implementation roadmap. And this is one of the things I love to talk about: the first 30 days, going through and doing an inventory of all those tools in your environment. I mean, the AI Act really specifies that you have to have this inventory by next year, I believe it is, here in Europe. 30 to 90 days: evaluating, looking at shadow mode, what are the RACI, dashboards. 90 to 180 days: promoting level one and level two, doing your tabletops, external review. And then 180 days plus: having a red team cadence, going through

and trying to break it to see how you can improve matters going forward. And then going through and having a SPAR assessment in your environment, rating systems on a per-use-case basis within your environment, identifying the risk controls and owners, and deciding what level of autonomy your agents should have. We need that human-centric oversight at all steps of the line. Yes, it's going to be very hard, but anything worth doing is going to be difficult. If it's too easy, we're missing something. You have to have a good reference architecture in place. Human gateways, signed logs, rollback service, guard rails. All of this sounds like lip service, but all of this really goes

back to core fundamentals of things we should have been doing all along for other parts of technology. And unfortunately, as with the password examples from earlier on, we keep making the same mistakes. We need to get better at this. Now, before you put anything into production, you have to make sure you go through and do a checklist. Have you done an evaluation of your agent or your system that you're trying to deploy? I used to work at a financial institution in Canada and anything that was internet-facing had to come through my team to be evaluated before it went live. One time a system bypassed this entirely and went live, and we

shut it down within about three minutes, and we found that they had commented out in the HTML a username and password of admin and admin, and they couldn't understand how we were able to get that. They said, "Oh, you hacked the system." These are the kind of things we're up against. And inside our own organizations, we have to look at the access-trust gap, because internal systems that are corporate-owned assets, yes, you can control those and configure those, but the vast majority of organizations out there now have people bringing in their own devices. It's inescapable. So, how do you put the security controls in place to deal with that? We have to look at ways

that we can have a pragmatic approach to AI certification in our environments. Having that use register is absolutely key. Having declared cases within the organization, all the way down to having the human in the loop, that is inescapable. We have to make sure that we don't give up and cede that territory to tech... try that again, to technology. Bear with me one second. So as we're talking about that, there are five levels to agentic AI. Skipping level zero, we go to level one. That is fundamentally, you know, your car with driver assist so you don't veer out of your lane. As we go up through the stack, we get to level four, which

is semi-autonomous, and that is sort of advanced reasoning, a self-driving car ostensibly, but you still have the human intervention. Level five is nirvana for most technology types. The problem is we don't really have the technology at this point to hit that. But that can change very, very quickly. Fully autonomous systems, and we have to make sure that we're doing the hard work now before this becomes a reality. Broader access for agents means a greater level of risk. How do they authenticate across these systems? How do they access the APIs, databases, all the rest of it? We have to understand that most AI systems, especially agentic AI, are built just to work. They are built to make

sure that things are operational as opposed to implementing the fundamentals of zero trust or any sort of, you know, least-privilege aspect of things. AI governance is something that we need to make sure that we are addressing early on. Strict controls on how these projects are being implemented, because AI is cool. It can do all kinds of amazing things, but if we're not doing the hard work first, it's going to be a problem, because adding security on afterwards can be really detrimental. It can also give us all careers. When the internet was born, security was not part of the equation. When I was doing my graduate studies, one of my professors was Scott Bradner. He has his

name all over the initial RFCs for the internet. I asked him, I said, "Was security ever considered at the beginning?" He said, "Hell no, we just wanted to make it work." And unfortunately, we've seen that iterate over time through different types of things. I remember seeing somebody giving a talk about Bluetooth security on stage at Black Hat with a cell phone in his hand, and then you hear it, he looks down, and somebody had popped his phone over a Bluetooth vulnerability from the audience while he was giving a talk about Bluetooth security. We've got to be able to do better. Looking at things like shadow AI within an organization. Shadow AI, shadow IT, it sounds bad. It's just people trying

to get their jobs done, but they're doing it outside the confines of their organizational structure because they may not understand that there are tools in place, or because they are being pushed to develop and implement things as fast as they can. It's like building the airplane when it's already in flight. If an attacker is able to steal the credentials out of an agent, what can they do? What sort of damage? How long before you would notice this is happening within your organization? How much latitude will they have in your organization? Do you have network zone segmentation? Sadly, I find too many flat networks even today in organizations. AI agents can also be used to launch attacks. If they're able to be

purloined and changed, they can cause trouble. If you could do them at scale, you have agent swarms, which I've read a couple of research papers about very recently. And then the good old "My voice is my passport, verify me."
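
The points made earlier about scoped credentials and about telling an agent apart from a human could be sketched as below, using the travel-research agent as the example. This is a minimal sketch under stated assumptions: `Credential`, `issue_agent_credential`, `is_allowed`, and the scope strings are all hypothetical names for illustration, not any real product's API.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class Credential:
    subject: str            # who or what holds the credential
    kind: str               # "human" or "agent", so logs can tell them apart
    scopes: FrozenSet[str]  # the only things this credential may do
    expires_at: float       # short lifetime limits damage if it is stolen


def issue_agent_credential(agent_id: str, scopes: Iterable[str],
                           ttl_seconds: float,
                           now: Optional[float] = None) -> Credential:
    """Issue a short-lived, narrowly scoped credential for an agent."""
    issued = time.time() if now is None else now
    return Credential(agent_id, "agent", frozenset(scopes), issued + ttl_seconds)


def is_allowed(cred: Credential, scope: str,
               now: Optional[float] = None) -> bool:
    """Allow an action only if the credential is unexpired and in scope."""
    current = time.time() if now is None else now
    return current < cred.expires_at and scope in cred.scopes
```

With something like this, a research agent issued only a hypothetical `search:read` scope would be refused a `booking:write` action unless someone deliberately issued it a broader credential, and a stolen credential stops working once its lifetime runs out.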

Ah, there we go. You have to worry about the identities of the attackers that are coming after you, because somebody who may seem innocent may actually be causing all sorts of trouble within your organization. So ways we can approach this: having secure credential management, AI identity governance, AI-friendly zero trust. Remember, zero trust is fundamentally just risk reduction within an organization. Audit and explainability, supply chain security, because that is not going away, and compliance alignment. Autonomy without governance is a risk debt. I've been talking about security debt now for about 15 years. This is just going to compound it in a very quick fashion. SPAR is a great way to look at your agents and be able to go

through and unify design, audit, and ops and make sure that you have an iterative process to address how that agent is working in your environment. Start small, promote with evidence, run SPAR as a self-assessment in your environment this month. Pilot a level one or level two use case with that matrix that I had earlier. Now, these slides will be made available. And stand up audit, logging, and dashboards so you have that visibility, because things happen. And when we're doing the core fundamentals wrong, it's amazing what can crop up. Since 2014, that was the password. You can be sure, though, everything is fine now. It's now "Louvre1", I'm sure.

But yesterday we had news about this from Anthropic, where apparently state actors were able to use their systems to 90% automate a cyber attack. But then today security researchers from the community said, eh, not so much. This is the problem. We don't have clear information. We have to make sure that we are doing the hard stuff upfront to secure our environments because we're contending with conflicting information. So rather than worrying about the churn out there, we have to worry about how to protect our organizations. And because I have, you know, parental issues from growing up, I need to always be out there in front of an audience talking. You can always catch me on the

Chasing Entropy podcast. Blatant self-promotion. We have to understand you've got to tackle the threats before they go... grow, rather, because change happens, speed is increasing, and we need to be able to adapt. I really do appreciate the opportunity to be here yet again. I've been coming here since 2003. Thank you so much, Alberto. And I appreciate it.

>> And I don't know if anybody has any questions.

Oh, we got one up front. Of course, Trey. Not like anything bad is going to happen in the next 60 seconds.

So, great presentation. If the problem is getting bigger, faster, and more complex, and we are still circling the same toilet bowl as you've described, where is the evidence that "just try harder" is in fact a well-formed problem statement? Because I've been going to conferences for a long time too, and I've been seeing the same stuff, and I'm starting to wonder. There's this old saying that it's very difficult for people to accept something when their salary depends on them believing something else. And it seems like there's some of that effect in play in our industry as well.

All right, let's try that. >> You're absolutely right. That's a consideration we have to take into account, because people, you know, they are worried about their paycheck. They have conflicting priorities. But if we start having these conversations more often, more frequently, I'm hopeful that that will start getting into the psyche overall, because, I mean, we're all going to have jobs for a very long time. That's the positive outcome for us as security practitioners. But the reality is that, you know, the implications of what could potentially go wrong are significant enough that it's concerning. And I've been doing these, you know, meetings with CISOs all over Europe for the last couple months. And the vast

majority of them don't know where to start. The outlier, strangely, was Ireland. They were on top of things. And that absolutely blew my mind. And I asked them, "Why is that?" And they said, "Because we're an island." Okay. >> Yeah, I think I got to keep this one. Check. Check. Oh, wait. No, I'm back over here. All right. So, we can give him that one. >> Question. >> Thank you very much for your talk. Um >> Oh, there you are. Okay. Yeah. So about agentic AI, and I work with SDLC, so maybe this is a narrow scope, definitely not driving cars, but I got asked by the CIU actually, what protections do we have in place

about agentic AI threats and risks, and I haven't answered yet. But the more I think about it, regarding producing code in the end, isn't it the same? We scan the code, scan the dependencies, look for secrets, all that basic stuff, whether the code is produced by humans or AI. >> That's part of it. But the thing is, when you're doing it with, you know, vibe coding, all the rest of it, you have volumes that are exponentially greater. So are you sure that you're going through and doing this thing? Because in my way of looking at vibe coding, it's sort of like Stack Overflow on steroids, and you're going to be

introducing errors. I've seen a few reports from a couple of university studies where they found that the error rate with vibe code, for example, was higher than code that was, you know, vetted by humans. So there is that potential that it's injecting risk into your supply chain. I'm not saying that as a doom and gloom thing. It's just something that we have to be aware of, and we have to be very vigilant to make sure that we're going through and reviewing all those sort of things. We will get to a place where it's going to be a lot better, but we're early days. This is like the wild west kind of thing. >> Yeah. Thank you. And I think it's even

more than vibe code, right? It's like people don't even look at the code if it's an agent. >> Yeah. Fair play. >> Thank you. >> I saw a hand. Yep. >> Oh, it might be off. >> Speak loudly and I'll repeat it. >> Uh, so what Oh okay. >> Thank you. One of the problems that I think that we're getting wrong is we're inverting the authority with LLMs and prompts. For instance, if you have a proxy, a web proxy, >> Yeah. >> and if you need to access an API, obviously you have to send a token that proves that you have the authority to access a resource. >> Correct. >> Right. But with LLMs, it's often the

case that you will embed some sort of token, like a god-mode token, in the LLM so that when someone asks for something, the LLM has full authority to get whatever answer it needs to return. Right. Yeah. >> So it seems like we should go more towards this: whoever asks the question has to prove that they have the authority to get the answer, and they would pass on, let's say, a time-limited token that proves that they have the authority to get their answer. They would give that token to the LLM, which would then use it in the API to get the answer, and, you know, that token would expire. So the LLM could not

be convinced, you know, using prompt injection, to return the token to be abused by subsequent prompts. >> Mhm. Yeah. And that's one of those things, we have to look at things like that. Like one of the folks on my team, what they did was they took an executable, they zipped it, uploaded it to an LLM that will remain nameless, and said, "Decrypt this for me." It unzipped it, then decrypted it. He said, "Now execute it." It ran it and gave him a shell. So he had a root shell on an LLM. And this is the kind of thing, like, you're absolutely right, we do have to look at those sort of things, but there are fundamental problems with

these tools that we have all just rushed madly into, you know, making use of. Like, ChatGPT was a catalytic event for the world, because, you know, AI has been around since 1954. This is not new, but to most of the people out there it was brand new when that came along. Then when we look at the errors and the issues that are cropping up, you know, Cursor, all the rest of it, it is something that we have to be just very, very vigilant about. And, you know, I like that approach as well. >> Thank you. >> Anybody else?
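
The time-limited token idea raised in that last question can be sketched roughly as follows. This is only an illustration under stated assumptions, not something shown in the talk: the names `issue_token`, `verify_token`, `api_get_report`, the `reports:read` scope, and the hard-coded `SECRET_KEY` are all hypothetical, and a real system would more likely use an established token standard and a managed signing key. The point is that the API checks the caller's short-lived token on every request, so the LLM never holds a standing "god mode" credential.

```python
import base64
import hashlib
import hmac
import json
import time

# Hypothetical demo key; a real deployment would load this from a secrets manager.
SECRET_KEY = b"demo-only-secret"


def issue_token(user, scope, ttl_seconds, now=None):
    """Return a signed token stating who may use which scope, and until when."""
    now = time.time() if now is None else now
    claims = {"user": user, "scope": scope, "exp": now + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    sig = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + sig


def verify_token(token, required_scope, now=None):
    """Return the claims if the token is authentic, unexpired and in scope, else None."""
    now = time.time() if now is None else now
    try:
        payload, sig = token.rsplit(".", 1)
    except ValueError:
        return None  # no signature part at all
    expected = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return None  # forged or tampered token
    claims = json.loads(base64.urlsafe_b64decode(payload.encode()))
    if claims["exp"] <= now or claims["scope"] != required_scope:
        return None  # expired, or issued for a different resource
    return claims


def api_get_report(token, now=None):
    """Hypothetical API behind the LLM: it trusts the caller's token, not the LLM."""
    claims = verify_token(token, "reports:read", now)
    if claims is None:
        return "denied"
    return "report for " + claims["user"]
```

In this sketch the LLM would simply forward the user's token when it calls `api_get_report`. Even if a prompt injection got the model to leak that token, it would only be good for one scope and would stop working once `exp` has passed.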

>> Movement. Do we have somebody back there? Oh okay. All right, there's no more questions. I will release you to your prizes. Thank you.