PG - Building a Security Audit Logging System on a Shoestring Budget

Show transcript [en]

hello everyone uh welcome to my talk uh by the way I've got this QR code on every single slide so if you want to look at anytime you can follow along uh just as well thank you all right so first of all um why would you even want to build a uh security login stack well this is because um problems happen all the time and um for intrusion detection and um I IDs and IPS you really have to be able to capture those events to actually do something about them so it's very important to do that um your company might already have existing places um to store logs but it's good to have like one centralized location for everything

and also always good to have a data sync for this type of events a lot of times I see that seems things happen but because there's no great places to um shove data these events get lost so it's great to have just a generalized place for that um a little bit about myself uh my name is George I work at a company called Cloud kitchens uh we bu build a lot of ghost kitchens we do a food delivery uh my company runs in kubernetes we actually run across multiple clouds um I used to work AWS but we currently runs across you know multiple different clouds um I built one of the largest uh elastic search slop search login sacks I

think in the world perhaps um and I actually buil several several more afterwards um and one specifically for security oh what happened to

the did I step step one on cable sorry could you help us a I don't know if I step on the cable or something I don't think so

try your turn off and back on again yeah we don't have the budget for that as we as we established I think it might be back wait wait for it there we go all right all right be careful not to make any sudden movements all right so my takeway for you is hopefully is that um you give a sense of when to build versus buy once not once's actually not cheaper or e the other as it turns out um if you do choose to build I like to show you how to build it uh very cost efficiently and also how to pay for the stuff you need to make it very efficient in terms of um uh cost

and performance and uh went through about a year of optimizing for Speed and cost I like to toell that down and then pass that knowledge onto you so here we go all right let's talk about the benefits of uh build versus buy so let's start with uh buy obviously if you buy something it you make it someone else's problem and that's very nice for being a manager and also engineer because you don't have to worry about as much that's a fantastic uh point of view um also gives you uh your team a single point of contact when something goes wrong that's a also very nice um and sometimes you already logged into a vendor so for

example you have a big contract with existing uh provider that actually can give you very very good discount on buying buying stuff so actually that can be cheaper than anything you build yourself um I can talk about that later as well um some downsides to buying is that number one you're vendor locked so over time you know they'll ruce the price it's hard for you to get out so let's say all your data stored in one uh login framework going to another one it's hard to make move that data across um sometimes they actually will lie about their performance so a lot of demos I saw from other companies is that they will give you a demo that works

well for like a small size but as you scale up over time actually doesn't scill at all so that's actually a big thing to watch out for um and sometimes Al also thing we seeing is that support for them can be kind of slow as well the multiple sport TI for everything you buy and for the cheapest sport here they don't give back to you with it like a month or two or several weeks and that could be a big problem you have something that's going wrong um oh and also uh key features are sometimes hide behind pay walls which is a problem as well uh but for build um you actually uh you build with no components so you

actually don't want to roll with your own crypto to get the best bank for the buck the biggest benefit of build is actually you own everything both the software infrastructure and the data so one example is that if you work with government compliance uh you buy software from another country in case of war with some kind of diplomatic Fallout uh you might no longer have access to that software that could be a big problem uh if you build yourself you you can always choose what you store that data so you also can have a backup for it uh should you need it oh you can also choose to optimize between the cost and speed which is a big thing uh typically

when you buy from a vendor they give you like these tiers that you put data into um you really have no choice over that but if you choose to to build um you can actually really make a lot of um good choices about how you optimize for cost uh oh you also have a generation op you also have knowledge of how to operate this so in case something does go wrong you can fix it yourself which is way better than having someone else manage your stuff um obviously there some downsides to build as well uh number one is the time and energy so you have to be willing to do this um you can build a

stack and proposing in about two weeks comfortably if you got some knowledge of um Cloud um Cloud uh one week is probably fine um the tun it you probably want another two weeks or so so maybe a month just to um generate for this you have a bit of uh liability as well because after you build you're responsible for patching your own data you're responsible for all the ESS Ingress rules as well it's kind of a IC but um it's probably worth the cost if you want to save money uh and finally it might be a little bit of people's Warehouse because uh people like to do um you know like penetration testing it may not be familiar with building stuff

uh but a friend who's really good at hacking stuff together I told him if you just hack more things together eventually come up with a full loging solution which is what happened with our company he did a really good job doing this cool all right so let's talk about some of the benefits other benefits of uh DIY uh besides control over data security um you can really do that slider between the cost and and speed which I'll show you um oh also you can build your own plugins so I'll be talking about using open search so open search has a really rich plug-in framework um almost all of it is not behind the pay walls so if you want to

build like a plugin for you know managing like Korean or like searching like Elvish for example if you need to you can actually build that for yourself um you also control your own security model and actually have a very rich security model you can control who can access what very easily all right so let's take a look at the the cost so here I'm using the public information um obviously with different discounts you have different negotiations it can vary greatly I'm doing what's out there so if you start with Splunk which is the biggest guy in the in the room um they charge about $150 per um gigabyte of data G per per day so it comes to about $60,000 per

year and oh also the size of the custom using here is pretty standard it's a 40 gigb of inje per day comes to about uh4 megabytes of injections um per second so let's say you have like 100 um application each generate about 10 kilobytes per second that's kind of what it comes out to um ins up with 7.2 terabytes of storage per month so your cost will actually break down to both the compu Port portion which is memory and CPU and also the cost um also the storage portion so the longer you operate a stack the um the more the storage will um come into effect I'll show you how to optimize that as well um

if you go toward the next one which is elastic sech from the vendor you actually get a cheaper um package but this actually using the the cheapest possible support package meaning if you message them they'll get back to you with some 3 to5 business days uh if you pay for the best support package you double your cost actually almost doubles so you have to make a decision there about you know support versus um what you get um also AWS sells a version of elastic search as well called open search they actually broke from elastic search well back because commercial licensing disagreements um typically runs slightly slower than elastic search but that's one thing you actually know

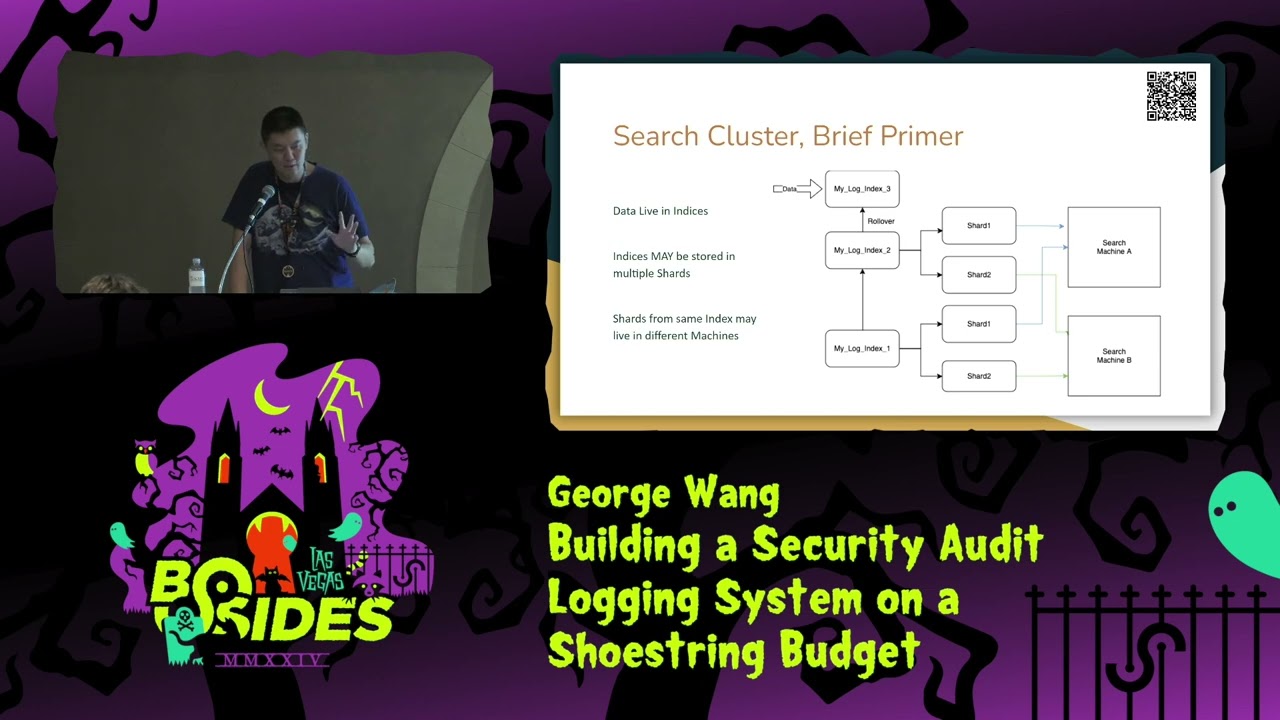

the difference if you run it yourself um they have a service like that you pay if you want if you get it it's about half the cost of Splunk um and if you just run open search yourself um was the same exact specs without paying AWS for the management you get about 20% cheaper and finally if you just build yourself super cheap um you can get down to even about half the cost of um using AWS manage yourself so yeah there you go all right before we dive into how to build a cluster let's have a quick Overlook at how the open search license works so data basically lives um in things called indices uh essentially

like a file of data um as time goes on your file gets larger and larger you probably want to draw that file from one indic to another so like week one is index one week two is indexes two so on so forth uh each uh file or indexes also BRS into shards so essentially components of the file you don't have to do this but uh doing this has some benefits because number one the shards can live on multiple um computers for you know resiliency uh two is actually the shards can be um searched uh in parallel by multiple CPUs so the more CPUs have the um the better your search could be but there's obviously like a

trade off between number of the shards you have and also the speed so you want to optimize for that as well I'll talk about all this toward the later slide for optimization cool all right yeah so this is the uh simplest um architecture for the login stack this will work for pretty much everybody and actually use a lot of off shelf components so you start with the uh application which um or whatever the gener log sources and you have an application that takes that data and shoves it into open search so there's a lot of of the shell component um I think log uh what's they called log stash is actually a common one that

people use um an open search or search will will do the job uh just fine um as you start building this you actually want to think about access already uh because each thing that push data into open search has different access to different indices you want to already think about how you want to control um what they can write to so for in our example um when we buil this basically different sources have different log forwarders and different log forwarders have access to different indices and they can only write to them read to them so even they're compromised you actually don't lose any data uh from the perspective of the uh the user they go through something

called open search dashboard or Keyana if you're familiar with the elk stack uh it's a simple UI allows you to search the framework itself um and then the simple login solution is username password uh set up for them uh which will work just fine uh Pro for this is that I think it should work fine for almost every setup uh the setup I built in my company actually ingested about a thousand times the um the ingestion of this I'll show you architecture that's more complex that can deal with a high level of skill uh but I think for most people this will would work great um some cons is that it's not as resilient to failures because um you obvious have

failures in both the um the log forwarder the uh open stash itself or the um application that shows data like if some problems I'll show you how to deal with that um and also you don't have probably the best way of managing logins because I'm usame passwords to to do this is uh kind of [Music] troublesome all right so here is architecture I use for the stack that inest about thousand times the uh the rate of the previous one um actually this stack only cost about 10 times more than the um the St you so about 200k to to do this uh the biggest changes are are several so let's start with um uh the log applications so applications can

live anywhere they can live in multiple clouds multiple um regions whatever you want uh they for data forward uh down down the stream and what you need to have is actually you have a buffer and uh here we use CA we can actually use almost anything this is actually like a key um importance because with so many log sources and so much information being put we sell a buffer like kka to buffer it uh failures will cause you to lose information and this is actually a very important key to make it work the second thing you need to have is have a second log forward after the buffer uh this is to really smooth out the um the

data you ingested because events don't happen like you know at even rate sometimes you have a burst events some have no events we s a buffer to really like make this even you have to have problems pushing this stuff into um open search itself and when you push data into open search you want to push it into a chunks and a good chunk size I I like to recommend is 5 megabytes at a time for large size you have to do up to like I think like 30 megabytes but um I think you do get some Dimension return uh over time uh now you you look at the open search clusters uh there are multiple of them so they actually they

can be collocated with the data meaning if you have data in AWS or Azure or gcp you can have one open seource cluster per region um with the data this makes it actually faster in terms of you know Lev agency you also like um saves a bit of money because you can be collocated for that and the beauty about open search is that you can actually search across multiple clusters multiple regions simultaneously as don't have the right access uh rules and uh for the user perspective you got a user you also have SSO so SSO is a simple integration that built into open search uh using saml you're able to um use that uh for your system and the

good thing about SSO is that a lot of people companies already have people defining ssos and they're tied to different groups so for example you you'll know someone come into application are they a engineer are product manager Etc and from that you can give them the appropriate access to the data they're trying to search so Engineers can only search something other people can search other stuff so with this result you actually have a very resilient um application you can basically um scale it to a very large degree and um also you don't have any pay walls that prevents you from doing SSO because you're using open search all right cool now let's talk about optimization

so I'll first talk about cost and I'll talk about performance so for cost um you really want to understand how people use your application and that's the thing with um loging Frameworks basically events happen you typically people typically only care about stuff that happen recently like uh usually something happened is like you know with the last day or two that's want to spend and that's where you want to spend your money so one thing you can do is that you can store the data that's most um commonly accessed um in a hot cluster and everything else push on to a lower cluster so let's say you have like two um uh computers the first one is for hot

data has the most CPU the most Ram the best ssds for example and then anything pass you let's say a week or so you push to other computers that have less Ram less CPU and more a larger discs so there's a little bit of trade-off for that because if you search data that's older obviously can be slower but um you do save a lot of money doing that uh the second thing to do is use the S3 bucket storage so this actually is one of the biggest Innovations I think have happened in terms of storage because your cost is so expensive for St large amount of data let's say you store like um multiple terabytes of data over

time that becomes tens of thousands dollars pretty easily but you S3 you actually become much much cheaper it's about a 90 sorry 90% reduction in cost in a lot of use cases but you don't want to do that you do not want to do that for um for Access Data because you charge per API access so if you confidently access S3 data you actually end up paying more than you actually pay for this oh the thir thing to do is use spot noes so spot noes is a concept with kubernetes where basically people will pay for um additional uh resources but they actually can't use it up and then when people are not using the resources

paid for uh the car providers will actually Le them out to other people to use um in the meantime uh one downside Spa noes is that um you can actually be kicked off the spa noes any time um so you really need have a result in build in so you can really recover your cluster if you kicked off the one of the notes you can be SP schedule on different note but the cost reduction is actually really high usually get about 50% to 90% of reduction in using cost um oh yeah uh in terms of opiz for performance so one thing I recommends you don't have exactly two replicas so you have a primary primary Shard and a

replica Shard so this means at any time half your cluster can technically be down and you speed up and running you do double the cost but actually is pretty useful so that combined with Spa meing you paying way less uh infrastructure cost but you also have a really high degree of residency we running spos for like years and actually being mostly fine but sometimes you do run into uh trouble when spawn those discount being kicked off so you actually do need need permission like a real um Reserve Noe or on demand not to really pay for that but I think most of the time you're probably okay um for shard sizes you won't have as many shards as you have CPUs

allocated because each CPU can search A Shard in parallel and The Shard sizes should be between 20 and 50 gigabytes this is done through empirical testing and plus a lot of numbers that El search publishes but does vary from computer to computer so you can want to experiment that a little bit but generally that's a pretty good um guideline uh finally if you do injust the rate as we do like you know several hundred megabytes per second you need to use SSD uh recommend magnet maget magnetic storage from almost everybody um because it's fast enough um because most things are cached in memory but for ssds you you really need ssds for um like you know hundreds

of megabytes per second for ingestion and one thing to know about ssds they're actually vary across clouds so for the same dollar uh in aure you probably get more um more storage but you do get less uh iops so once you actually hardly limited on is the iops for um for this uh for your cloud provider another thing to note that we learned is that the iops is based on the size of SSD provision so let's say you only need like a 10 ter 10 let's say like one terab SSD but you actually need a higher iops in that so you actually need to provision a two terab SSD so even though you waste some

space you need to have that iops to support your operations well another thing too is that if no one complains about your search speed you can actually reduce the CPU memory over time we do that gradually over a period of several months we actually will save a lot of CPU and and memory just by doing that because you know you don't need to spend that much money on the performance if no one complains about your cluster being slow so there you go all right uh let's talk about security oh and also maintenance so maintenance actually is pretty easy it's um there's an idea of a ISM policy in open search which basically allows the Custer self-manage how you roll the data

forward so um once you said that saying like hey the all this data lives um in this awesome really powerful box and after that it goes to a gradually like slower boxes you get pushed out um you can just do that once you set that it pretty much is set and forget um let's see what else oh yeah you want to use git Ops for configuration because it's very easy for people to apply configuration and forget about them so it's I think it's just good practice to do that um cic is also very important for pushing out in timely manner uh finally once you document the process you can basically just relax and you know drill it and you're pretty much

fine uh for security it's actually kind of like an ongoing process you need to constantly think about um access of different data uh one of the biggest takeaways I had from previous jobs is that you w have like a corly access review so when I was say Amazon every quarter people ask ask hey do you you still have access to this and if you don't answer back they basically remove your access just so people don't have access to things that you don't need uh oh yeah also you have to roll out patches yourself which is kind of um it's not that hard it's just something you have to keep on doing because you build your own

cluster all right so uh that's basically uh my presentation um hopefully I give you a good idea of when to build versus when to buy and um when you do build um how to like optimize for cost and performance um and uh oh yeah and thank you very much to bides for letting me do this talk my mentors and people who are here to support me uh that's my talk um if you have any questions uh please let me know uh my name is George thank you all

right yeah oh yeah I've got a Bonus slide with a bunch of references for tech technical references um for Best Practices if you guys care about so all right thank you you