Securing the Skies: Safety and Security in Aviation System Design

All right, everybody. Who was here earlier this morning for the opening ceremonies? Yeah, that was a really lively, great bunch, wasn't it? Myself included. So, as was said, I am Lillian Ash Baker. I am one of the representatives and volunteers with the Aerospace Village. I also work for the Boeing Company as a product security engineer, and for a subsidiary of theirs, Wisk Aero, as the lead product security engineer for the product that we're building. Today, what I would really like to talk about is one of the most important parts of aircraft system development. Does anyone know what that is? No? It's standards, certification, and safety. Those are the three main

pieces for aircraft development. What I hope you get out of this is something a little bit different from a lot of other talks. A lot of other talks are going to show you very specifically how you can take some of this information and apply it in your own areas. What I'm talking about here is ideas that we use in aviation that you can use to augment your own processes and your own ways of thinking in order to better your systems development. So the first thing that we need to do is discuss what safety is and what we actually mean by safety in a system, because every industry will have

a little bit of a different take on what exactly safety is. In aviation it is a legal definition, and we'll get into that. So I hope you all find this as interesting as I do. I still have not shut up about safety in aviation for the past 17 years. I think this is one of the most important and interesting things about the industry. So, first principles of safety here. Safety classifications come from two documents, Part 23 and Part 25. Part 23 certification is for general aviation aircraft under, I believe it's, 16 passengers. Anything over that is Part 25. There's a very important reason why there's a distinguishing line between the two of those, and that's

because the safety levels vary depending on the type of aircraft that you're actually developing. So with that we have these failure conditions: minor, major, hazardous or severe major (which was added as part of Part 23 and then adopted in Part 25 years later), and then catastrophic. And with those come these probabilities, right? The way that safety works is that this is a probabilistic process that we have to take a look at, one that is both quantitative and qualitative in nature. Now, this little graph over here off to the side is directly out of Part 25. That number up there, it's kind of hard to read. It says 6/21/88. Who was born after that

date? Yeah. So, the classifications for safety were around before you were born, right? This is stuff that has been around for almost 30 years at this point. Sorry, 40 years. I'm getting my own age a little bit mixed up. This is the bedrock of how civil aviation works and why it works so well, because a lot of this information comes from a time period when a lot of people were killed in accidents, repeatedly, because aircraft failed and systems failed. So all of these regulations come from that time period; they were created from blood. This is why it's so important for us to understand why these classifications matter. So I talked about the

probabilities in this, and these are the probability numbers that come from Part 25 and also Part 23. So minor is on the order of 10^-5, right? That's just for minor. Minor is basically some damage to the aircraft, but it's still usable. It's when we get down here to the catastrophic level, which is extremely improbable, on the order of 10^-9 or less, that your system has to operate for approximately somewhere between 10,000 and a million hours before a single failure. This is something that no other industry really does in the same way. Now, this is also at the system level, right? So single LRUs, or line replaceable units, the equipment

that you put on board an aircraft, cannot have probabilities better than 10^-4. And that also comes from a qualitative analysis. So you have to build a system out of multiple pieces of equipment to even hit the target for a single loss at the functional level. That might mean three systems have to fail in order to get to this catastrophic probability. Major got redefined as remote at some point. Hazardous came in because they needed something in between major and catastrophic, in the 10^-5 to 10^-9 range. So they put hazardous or severe major, which is extremely remote, in between at 10^-7, and we'll get to what that

actually means here in just a moment. With that comes this chart. So we have the different classifications of the severity condition, with the name mapped over to what it actually means: improbable, extremely improbable, remote, or no safety effect, right? Each comes with the order of the actual probability of that happening. And that goes into the functional safety analysis, or hazard analysis, for system development. Then we get to this other area, which is the functional DAL assignments: A, B, C, D, and E. Design assurance levels are definitions for safety impacts as you develop equipment. So if the function that I'm developing is, say, the autopilot for an aircraft, the flight director, it's probably going to

have DAL A or DAL B effects, which means that when I do my analysis, that system cannot fail more often than this probability, and I have to ensure through my design assurance level and my design assurance processes that I can achieve that. Now, what's really, really cool about this is that we get into development objectives. So this is where we start to talk about the other letters that were mentioned this morning: DO-178, DO-254, and ARP4754 for system development. Those DAL levels, A, B, C, D, and E, dictate exactly what you have to do in your development processes. So for development processes, it's not telling you how to do your development. It's telling you what you

need to do in order to prove that you did the work to make your system safe. It's a very different approach to how this all works. With that comes paperwork, lots of paperwork, ridiculous amounts of paperwork, and a lot of other work on top of all that. So this chart is actually one I pulled for SAL, for security assurance. But once you get up into DAL A, you start to see an R with a nice little star next to it. That star means that you have to have independence, which means that the person who writes the code cannot be the person who verifies the code. You now have to

have somebody else do the verification of your code, either within your company or in a completely different organization. It could be a contractor. There are multiple other ways of achieving that. But the point is that the person who wrote the code cannot be the one who says the code does the functions that it's supposed to. That takes a lot of additional work, incredible amounts of additional work. It requires a lot of additional requirements work and all these other objectives to be met. But it's all in the name of making these systems as perfect as they can possibly be. Now, with that, we start to talk about how that ties into security a little bit

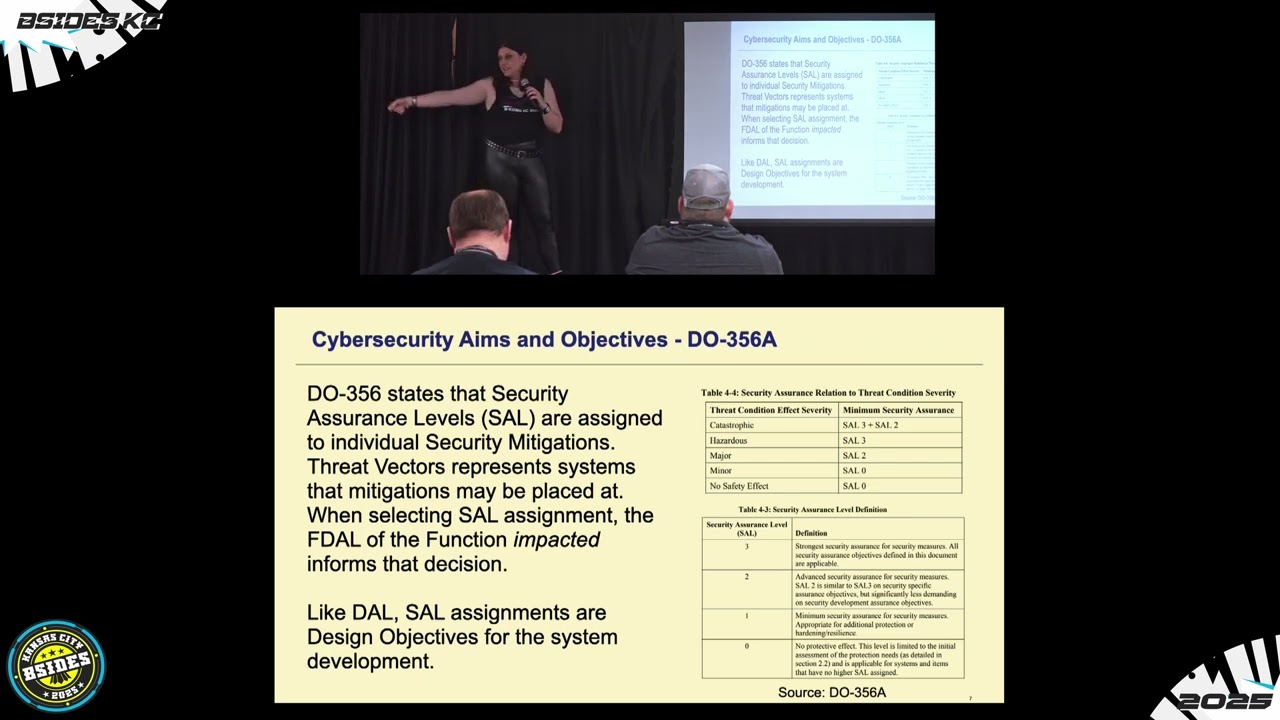

more. So, shifting our perspective a little bit here, we're going to talk about DO-356, which covers security objectives for aviation systems: how you do your development and what sort of artifacts you need to produce as part of your development process. And we have this really cool definition that comes up in DO-356 called IUEI: intentional unauthorized electronic interaction. The concept there is that you have to prove that the interaction with your system was intentionally done by somebody, and that they did not have the authorization to do such a thing. It is electronic in nature, so that removes the physical security part from our conversation, because physical security is completely different from the

electronic security for aviation systems. And there had to be some interaction with those electronic systems on board. Now, what's really interesting about this is that safety and security ask different kinds of questions. Safety asks questions about failure conditions: what happened, why did it happen, and what led to the safety of the system being impacted? Security looks at a completely different set of questions: the who and the where. Who was it that did the interaction, and what was the entry point into the system? Because one of the big differences between security and safety is that safety looks at threads throughout a system. You have to

ensure that the safety runs from the originating system all the way over to the system that is actually using the data and presenting it to a pilot, or that takes pilot input and moves an actuator back and forth. Safety doesn't look at the intermediaries in between. That's all wrapped up in the functional hazard assessment; we've already taken that into consideration. With security, however, you can have multiple entry points that impact the safety of a system function at any point in the system, and that's a big difference. So when we talk about the safety levels and the threat vectors to a system, we have to know exactly what the safety function is on that system and

what the level is. The reason is that we want to know how badly a hacker or some other unintended user has impacted that system. And after we know that, we're then required to go put mitigations in place in the system. Now, what's a little bit different about the security side of things? Safety just says you develop your system until it's perfect. You just start adding stuff. You add in, not mitigations, but safety measures in order to bring the numbers up higher and higher. That might be cross-checking data. That might be having a third data source to cross-check against. That might be in

multiple different places. What's interesting is that when you get up to catastrophic, it has SAL 3 + 2, which goes back to our objectives for the system. SAL is security assurance level, and 3 + 2 means that you have to have two mitigations for every attack vector. Now, what's really different about that is that they do not have to be in the same system. They can be spread out across multiple systems, as long as they have the ability to stop that attack vector as it moves through a system. So it's a very different way of thinking about it, one that takes pivoting into consideration as part of the attack vectors as

well. It also affects how you do your development. And what's really nice about it, and is not shown here, is that those security objectives for SAL 3, SAL 2, and SAL 1 actually have mappings over to the safety objectives for DAL A, DAL B, and DAL C. So you kind of get the assurance baked right into your development processes, if you're following the development processes, right? The other piece that's really interesting is what happens if you do your analysis and you say, look, my analysis does not take into account the actual criticality of this system, be it maintenance systems. Maintenance systems are typically very low-level DAL D systems, but they can have high

impacts on a system. So you have the ability to actually raise the security level, to say, I'm raising the security level of that function, and apply it across your system. It's very different from how safety approaches the problem, which is to say, nope, the analysis shows that it's good, so it's good. And it also kind of shows where safety or security needs to mature a little bit more, to take those into account in the threat vectors and the analyses that are going on. And if you look down here at the assurance levels as well, it gives some definitions behind this. So it says, you know, SAL 3 is the strongest security assurance for security measures. It

really ties back to that design assurance piece as well. All right. So what I would like to do is actually walk through a sample system, right? We can't just sit here and talk about how we do system analysis and throw all these numbers and letters around. So we're actually going to walk through a system thread. In this example, our functional hazard assessment says that this system thread is a DAL B system, right? So the data, as it goes from system A to system B to system C and out to the user display, has a hazard level of 10^-7. Okay, which means that if there are any threat

vectors, we have to have SAL 2 mitigations in place. Safety analyses are very much a linear, top-down look at a system, from the first entry point of data into the system all the way out to the endpoint user, in this case the user display. So let's take a look. We do an analysis here and we apply safety requirements. There was an analysis done; the FHA came back and said these are the requirements that we have to put in place across our system in totality. So we're going to monitor failures and annunciate in system A. We are going to check the validity of the input on system B, which takes an

input, adds two, and outputs the data. We're then also going to check the validity of the input into system C. Cool. Our system now meets DAL B by all of our analysis. Oh. So the cyber team went through and started looking at the system, and they found that on system B an attack could actually modify the inputs or the code in that area, and so it can modify the output of that system. That's not good. We've now shown that an attack in the middle of the system can send erroneous data to the user display, impacting the DAL B functionality of that system. Okay, not a problem. We can get through this. Okay, we can do memory

hashing. Cool. So now we've taken care of the problem. We hash the memory space, so we know when an attack happens. We also added a secondary system alongside system B so that we could cross-check that data. If one system gets impacted, we can always check a second, independent system and see that it's not being impacted. Except our cyber team also found that they could shut down the interface by flooding system C with junk data, and now no data goes into it, impacting the function of the system again. Okay, so we put in some DoS protections. Everything's great.
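
The two mitigations described so far, memory hashing and cross-checking against a second independent channel, can be sketched roughly as follows. This is a minimal illustration, not how any certified system implements it: the function names, the use of SHA-256, the byte strings standing in for memory regions, and the sample values are all assumptions made for the example. Only the "takes an input, adds two" behavior of system B comes from the talk.

```python
import hashlib
import hmac


def region_digest(region: bytes) -> str:
    """Return a SHA-256 digest of a memory region (SHA-256 is an assumed choice)."""
    return hashlib.sha256(region).hexdigest()


def memory_intact(region: bytes, expected_digest: str) -> bool:
    """True if the region still matches the digest recorded at load time."""
    return hmac.compare_digest(region_digest(region), expected_digest)


def system_b(value: int) -> int:
    """System B from the example: takes an input, adds two, outputs the data."""
    return value + 2


def cross_check(primary: int, secondary: int) -> bool:
    """True if the two independent channels agree."""
    return primary == secondary


# Digest recorded when the (illustrative) code image is loaded.
code_image = b"output = input + 2"
baseline = region_digest(code_image)

# An attacker modifies the code region: the hash check detects it.
tampered_image = b"output = input + 9"
tamper_detected = not memory_intact(tampered_image, baseline)

# An attacker corrupts only the primary channel's output: B prime disagrees.
primary_out = system_b(5) + 7   # hypothetical corrupted result on system B
secondary_out = system_b(5)     # clean result on system B prime
channels_agree = cross_check(primary_out, secondary_out)
```

In this sketch the hash catches a change to the stored image, and the cross-check catches a corrupted output on one channel only. Neither check says anything about a command that both channels accept as legitimate.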

This time they figured out that they can set a mode command, which back-propagates into system B and goes all the way back to system A. That switches the mode of the system, which then forward-propagates back through the system, changing the mode of that data without the user ever knowing from the user display. But part of that was also that system C sent the command down the system B prime pipeline on the cross side and commanded it to do the same. So all that cross-checking that we did doesn't matter, because both systems are now in the same mode. So this example is to show you that there are all these different areas where safety and security touch, right?
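
Why the cross-check stops helping once both channels receive the same mode command can be shown with a small model. This is only a sketch under invented assumptions: the `Channel` class, the mode names "NORMAL" and "ALT", and the offset of 20 in the alternate mode are hypothetical and exist purely to make the effect visible.

```python
from dataclasses import dataclass


@dataclass
class Channel:
    """One processing channel (system B or B prime) with a commanded mode."""
    mode: str = "NORMAL"

    def process(self, value: int) -> int:
        # NORMAL adds two, as in the talk; the ALT offset is an invented value.
        offset = 2 if self.mode == "NORMAL" else 20
        return value + offset


def broadcast_mode(channels: list[Channel], mode: str) -> None:
    """Model a mode command that reaches every channel, including the cross side."""
    for channel in channels:
        channel.mode = mode


b, b_prime = Channel(), Channel()

# Before the attack both channels agree on the expected output.
before = (b.process(5), b_prime.process(5))

# The injected mode command propagates to both channels.
broadcast_mode([b, b_prime], "ALT")
after = (b.process(5), b_prime.process(5))

# The cross-check still passes, yet the data shown to the user has changed.
cross_check_passes = after[0] == after[1]
output_changed = after != before
```

The comparison between channels only detects disagreement, so a change applied identically to both sides goes through unnoticed.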

Memory hashing is something that you typically do for the safety of a system, right? You want to ensure that no environmental effects change the memory spaces. So you hash them and you rehash them, and you copy that hash into a third memory space. Then you pull that in, and when it's wrong you pull a secondary copy, and when that one's wrong you pull the third copy, and if it's wrong again you throw the whole system away. But it also helps protect the security of the system, right? We added a security requirement that says hash the memory spaces. So in this example, we can put in requirements that could be either a safety requirement or a security

requirement and increase the other one's overall safety or security level. If I put it in for security, it improves the safety of the system. Likewise, with this secondary system with DoS protections, I put in a very specific security implementation that has safety implications as well. Because if that system now sees that there's an attempted DDoS attack on that interface, guess what it's going to do? It's going to cut off all interfaces, which means I no longer have safety-critical data going to the user display. I've cut it off. I've stopped it from going. In this case, the DoS protection has now reduced the amount of safety in the system. So, in

summary, aircraft cybersecurity is still a very young discipline. DO-356 was only released in 2014. That's not that long ago, but it has had the rest of the cybersecurity industry to build upon. If you go look at DO-356, one of the really cool things about it is that it has a references table with all sorts of other sources, like NIST SP 800-160, 800-53, et cetera. It's built upon the rest of the cybersecurity industry. And as we discussed earlier this morning, the connectivity of aircraft has increased and keeps increasing with every successive generation of aircraft. At the same time, we're taking away federated

systems and putting multiple functions together into a single system. And so this presents more opportunities for cyber attacks to become worse and worse. So, in short, the only truly safe system has to be a cyber-secure system. We do have some time for Q&A. If anybody has any questions, please let us know. Any questions? You might get off the hook here. I might. One question. Sorry about that. All right. Just one quick question. So as we progress forward into the future, right, and we start to look at autonomous driving, I mean, sorry, autonomous flying of airplanes, right? Do we expect there to be a massive pull for kind of

standardization across all aircraft, both human-piloted and drone? Yeah, so that's actually a really interesting question. There are actually two roads that have developed here. In the US, we develop our UAM, that's urban air mobility, aircraft under Part 23. So just like your Cessnas, just like your Cirruses, just like your small aircraft, they get developed under Part 23. And so they fall under yet another standard, ASTM F3532-22, which also gives you a back door to start using DO-326 and DO-356 as well. Europe is going in a little bit of a different direction. What happened in Europe with EUROCAE is that there's an organization of eVTOL manufacturers and groups that

got together and started their own cybersecurity standard, ED-305. Now, the interesting thing about ED-305 is that none of the working members from RTCA SC-216 or EUROCAE Working Group 76 actually worked on that standard. Someone else, not involved with Part 25 security, worked on that standard, and it takes a completely different look at the safety and security levels and impacts. Very different. So there's a little bit of market play happening, going back and forth. The problem is that we start to get into reciprocity issues between the FAA and EASA, where they try to be the same as much as possible. And

this is one area where the link is definitely broken. Any other questions? Thanks for ending it on a downer. Yeah, absolutely.