Data Wrangling Lessons from OWASP Top 10 - Brian Glas

Show transcript [en]

everybody mics on now all right so we're gonna get started here this is brian glass he's gonna do a talk on a wasp and i'm sure it's gonna be very exciting so it's a great way to start the day and enjoy it there we go all right so we only have one mic on that's good good morning everybody I know it's a little after 9:00 but you know that's why we have padding and schedules is so that we don't like end up with talks till 6:00 my name is Brian glass I've work for a small offset consulting company called invisi 'm i spent a lot of time working on oo stuff was one of the co leads for

Sam and then in parallel like a lead for the a aw stop ten so today will be some of that story about last year has who all has heard about the top ten before I'm hoping for a sizable percentage okay good the rest of you just haven't had enough coffee or you're still staring at your phone uh-huh so I'm having a small competition with my colleague next door so Kevin Cody who just received the black badge he talked me into submitting up here he's like you need to come up to besides Pittsburgh is pretty awesome so I had gone with him - besides Knoxville because I'm from Chattanooga so he was kind of in my territory so I

decided to try and come up to his territory so we somehow ended up with dueling opening talks where he's got the other room and I've got this one so I told him I was gonna take a picture of everybody and then we were gonna count how many seats are left because he said I had an unfair advantage because I had 150 seats and he only had a hundred so I said fine we'll just count how many empty seats there are and we'll see who was closer to capacity so what I'm gonna talk about today is there's a lot learned from dealing with the open data call with OS top ten and unfortunately only a tiny

fraction of what you can learn from the data is actually in the top ten document itself so I wanted to go through and try and help people understand and some other information you can learn from that because we ended up collecting one of the largest open public data sets about vulnerabilities found in applications that I've ever seen and I'm not sure there's a bigger one than this set so OS top ten how many people know is actually released in those three much fewer hands so most people know the top ten as like a three year cycle oh four oh seven ten thirteen they were too busy on sixteen so it bled in the 17 and then all sorts

of fun stuff started but it actually started in 2003 and it was actually intended to be an annual document and then when they went through the second revision they're like that's a little bit much let's like push it out to about three years so it's it's an awareness document that's what its intent was is basically at the start of app sack when it was really getting going in the early 2000s people are like what do i what do I do I just don't know where to start and so Jeff Williams and Dave Ricker's were like let's give people ten things to start with everyone likes a top ten list right David Letterman's top ten which I

should add in here at some point but haven't gotten around to it so it's just awareness and then it got added the PCI and so awareness went to baselined and then vendors were like we need something that's measurable that people have heard of and guess what we're gonna pick the top ten and so now it's essentially the pseudo de-facto sort of tongue-in-cheek standard of sorts when the intent was that it's just for awareness to help you understand where to start and not I've heard people say yeah I have a whole app sect program it's it's the top ten like that's that's a fraction of a knapsack program that is not a whole program so like I said the

top ten was supposed to go out in 2016 it didn't make it it was a little bit busy so in April of 2017 they released the release candidate and I don't know some of you probably remembered too if you were paying attention to Twitter or wasp there was a lot of backlash and controversy over the release candidate for the first round of loss top ten 2017 there are two new categories that were added one was insufficient attack protection and the other words under protected api's now neither it's not that neither of them are actually a problem it became more that the community backlash against the way they got in and more the way they were

written because a seven ended up being more written like you need to buy a tool to solve this and unfortunately there was connections between the guys who wrote it and some tools they were selling and I don't really know I have no idea of intentions I'm not passing judgment on intentions one way or the other but social media like social media likes to do will absolutely pass judgment from everybody's individual phone and laptop and everything so it got ugly how many people followed that anybody so this is new to you Wow I guess we live in our little like spheres of little chasms and such but so I only got tired of this I got four kids I try

and insulate them from social media for a part but I really got tired of the security community started tearing on each other and they started tearing on ooohs over the whole thing so I got tired of everything buddy just saying burn it to the ground and nobody was trying to come up with anything constructive so I wanted to do a little research because I feel like by if I'm gonna weigh in on anything I need to have a background and I need to understand what's going on rather than just snapping off an opinion and then finding out that I'm wrong because I didn't know the story so I reviewed the history of the top 10 development all

the way back to 2003 and I was one of the people who didn't realize it started in O 3 but I found that the release candidate for every cycle didn't change between the release candidate and the final for whatever discussions they were had sometimes there was some discussion sometimes not it never changed and so that became concerning on its own because at that point said the process is whatever the release candidate came out with it was basically gonna be the same thing at the final one year they flipped a couple of them around but they didn't actually change what the categories were so then because the data was all public I decided to do some analysis of the data and see if it

backed up what was in there trying to figure out get to the root of all of this and then for better or worse I wrote two blog posts on it one was more about the process in trying to plead with people to stop just ripping into it and try and figure out something a little more constructive and the second one is after I started digging into the data I got way more curious about what was actually in the data so the original data collection they wanted full public attribution they wanted it fully public so that meant many contributors actually wouldn't contribute at the time that the call went out for data I was working at

Microsoft's trustworthy computing and I asked them I'm like hey do you guys do anything with OAuth and they're like no not really why I'm like your Microsoft we have like massive amounts of data can we share it like yeah you can try so I put I've talked with a bunch of internal teams spent weeks on spreadsheets and calling out stuff for data had over half a million different findings that they had found and fixed before ever any of their apps ever being published and then in a near the end and execs like nope can't release it there's like all it takes is one person to look at that data and write one blog about how horrible

Microsoft is about security because they had half a million vulnerabilities that they found in their own stuff and so unfortunately that data never made it I I couldn't do anything about it so what happens is when you do something at that full public level the only people that contribute our consultants and vendors because they can bury the actual origins of the findings in within their whole client base because it gets all aggregated together you don't know which client those actually come from so those end up being the only people who can really contribute and that's one thing when when we were working with the team I was trying to plead with them that we need a better way to do that we need

some pseudo public some way to be able to contribute data with each other because better right now everybody's learning stuff and then we don't get to share it with anybody relearn the same things over and over and over and it's ridiculous so looking at the the initial data set I realize there's there's really two patterns you have human augmented tools and tool augmented humans they're a little awkward but you know so it comes down the frequency of findings so if you look at have you ever gotten the report for that's generated off of a tool vendor versus a report off of a human pen tester what's different about them everything so when you ask a

tool to find stuff it tries to find every single specific instance of something and will report you every single specific instance of something so you get hundreds and thousands of findings in the tool report so when you get a report from a human you get hey I found it three times it's pretty pervasive I can see that you have no control in your code or no control in the dynamic this is a big problem but I'm not going to find you every single one of them so then there's context like humans understand context tools do not so I worked at FedEx for about a decade as both developer and security and we would run security tools against the

code and bless its heart it was one of the better dynamic web scanners at the time and almost every app it would find a multitude of social security number exposures anybody want to hazard a guess why so social security numbers are nine digit numbers right so what else for a shipping company is a nine digit number is it plus four yes so without knowing context the tool has to say I don't know but it could be a social security number it's nine digits so but I can't tell you that it's like after a city-state therefore most likely zip code but human will tell you that in this heartbeat well know exactly that you're looking at

a zip code the other thing comes into is natural curiosity how many people have found the tool that's curious it actually wanders around out of curiosity and says hey that's a that looks weird let me poke that let me fuzz that let me put something else in there tools don't do that they do exactly what you tell them to sometimes most of the time hopefully they do exactly what a developer told them to whether or not they realized they were telling them to do that but on the other end of the scale humans don't scale human the scalability tools you can point at something and they will work 24/7 they will work for as many as much

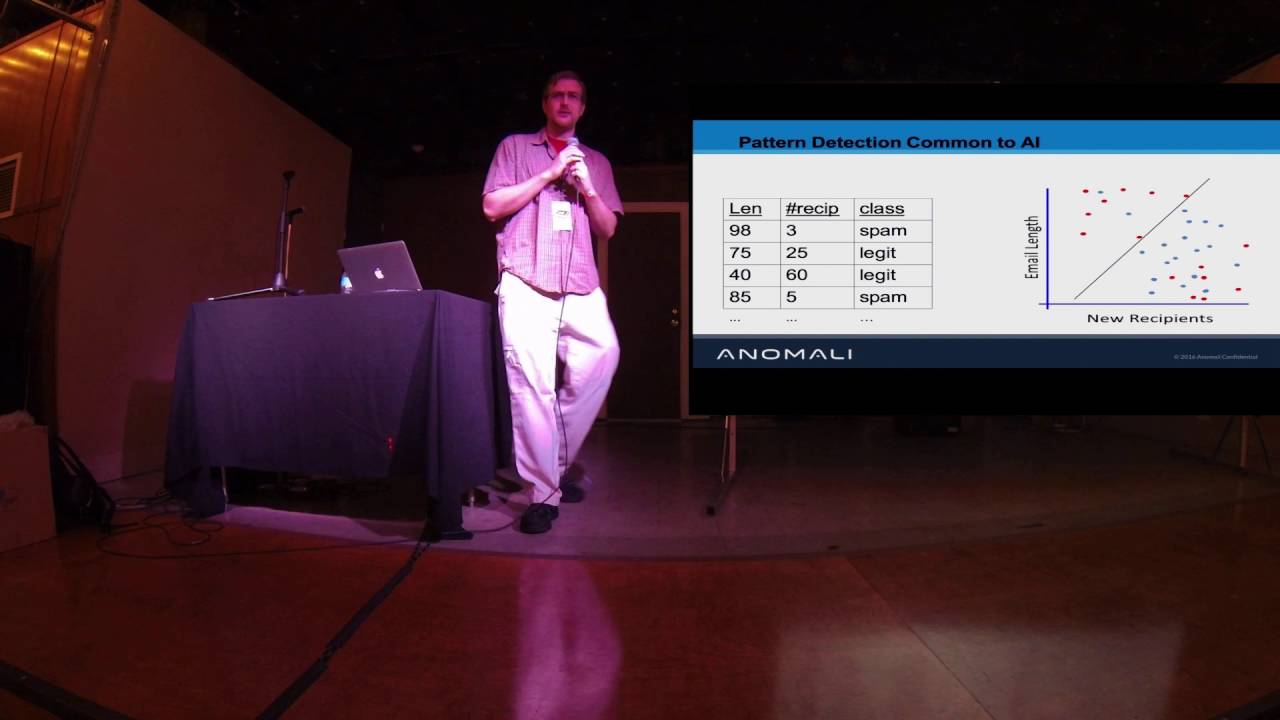

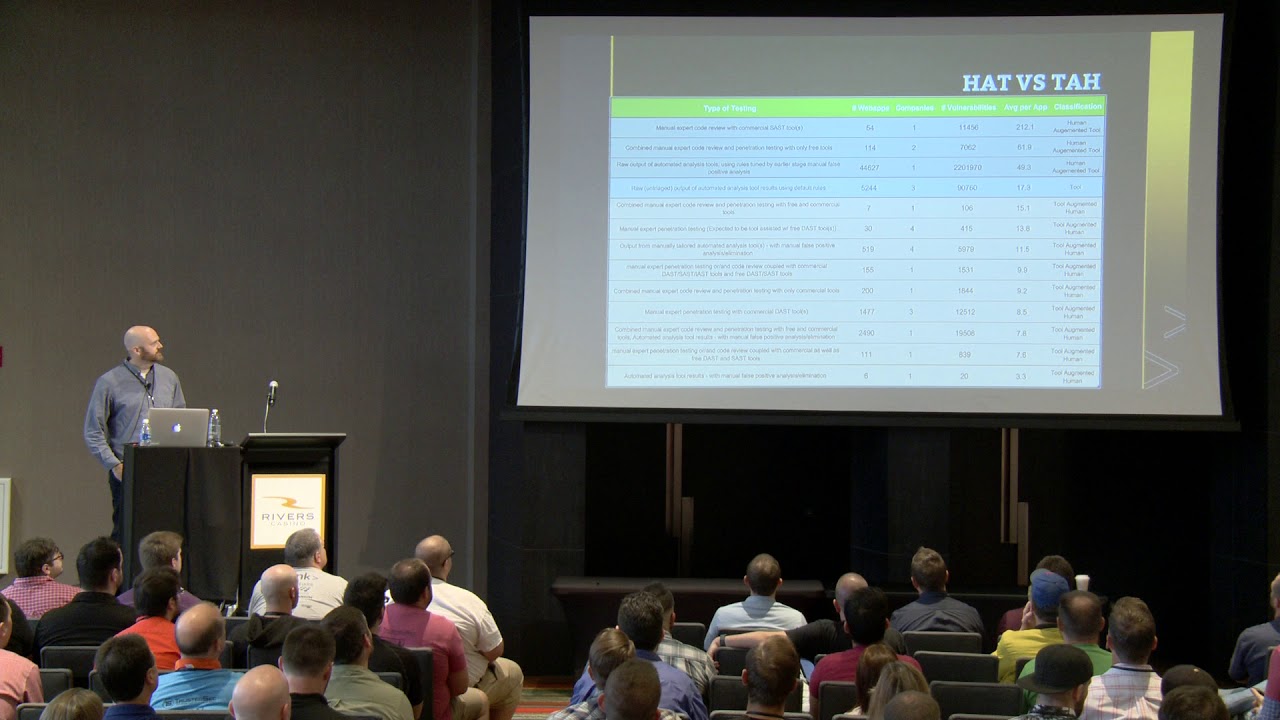

electricity or CPUs or disk space you to give them humans nope we need a break and then consistency so as much as I hate to say this if you get a pen test from the same company over and over and you get different people they're gonna find different things humans aren't consistent in that space we all have patterns that we like to follow we have different areas of expertise but a tool it should find the same thing over and over again and in the same space so one of the things like I split up the data and at the top you can look there are various you can see very clearly the average per app the second of the last

column going at 212 findings per app do you think a human wrote that report like no need not 61 not 49 there's one in there at 17.3 but they identified themselves as being pure raw untreated output from a tool that had nothing to do with humans but then you get down you get down to 15 and less and that's definitely a sign that you know a human is doing it and so that's where the the tool augmented humans come into play so so I wanted to see I'm like alright so we're playing with this what are humans good at finding versus tooling and so I broke it down as like hey of all of the

findings that were identified what are the machines good at finding versus the humans and anything in orange-ish color the humans were like 70% or better of the findings anything in gray which is almost everything else the tools were better at finding but then I realize there's a small problem with looking at it that way and that's frequency tools are finding a lot more and the original data collected for the top ten was based on frequency so I said alright so looking at the top the humans and machines are quite nicely almost exactly tenfold different so I'm like alright let me let me just pretend for a minute I got 10 times as many humans and

let me try and level the playing field and say hey we we tested the same number of apps it changes all of a sudden you realize the scalability problem and humans are actually decent bit better than tools still at finding stuff we just don't have the time to find everything we need to find we're writing way too much software way too fast for the number of people to be able to find stuff in it so all of a sudden you the chart flips and you've got stuff that humans like almost exclusively find over tools if you could scale them so then we have the chart that I like to call the pac-man effect and that usually happens

when you decide to make a pie chart with something one single thing having at least 80 some percent and then you tilt it on the side so it looks like pac-man uh-huh the original data had was very much overwhelmed by very code very code was kind enough to submit data but the thing was is their individual data submission comprised these some percent of all of the initial data and so whatever they found drowned everything else out at the time especially in frequency and guess what they found quite frequently cross-site scripting what's been number one on the top 10 for forever cross-site scripting so I was curious I'm like so what happens if humans look at this and it's a very much

more diverse lot when humans try and find stuff within an application actually the interesting thing is cross-site scripting number three number one is security Miss configuration which we'll get to in a little bit to why that's actually interesting setup so I went through and I did these blog posts and you know talked with a few different people and then have my one transition slide because like my kids snuck spongebob into my deck so it has anybody heard of the summit or the open security summit okay is anybody active in a wasp right now not really interesting okay so Lois has and they did it last year and they did it this year they have a

project summit that's like a dedicated separate summit and it's a week in London and essentially the project's get together and they just work on their projects it's not a conference there's not talks you have working sessions all week and yeah honestly we end up working ten twelve hours for the day because we go all the way through dinner and such but in June of 2017 I was heading there because I was one of the co leads for Sam if that's the Software Assurance maturity model so if you're having to deal with program type stuff and you need to know where you're at I highly recommend you take a look at Sam but the top ten leadership that's had it from oh

three finally decided after fourteen years or like yeah we're kind of done this was just too much too much controversy we're gonna turn it over and they turned it over to Andrew Vander stock who's been longtime ojas he's been board member he's been working on the top ten really good guy I was definitely a pleasure working with him the top ten had working sessions there because they were gonna try and figure out what to do with art with the release candidate so while I was there they asked if I would help work on the top ten because little did I know the data analysis I did for the blog post was more than anyone else had done on

the data yet to date so they're like well since you're so familiar with the data can you help us do this part of the project so while we were at the conference or at the summit a new plan was was put forward and we basically said look our target is to release it by Thanksgiving of this year but we have a boatload of work to do before then and the data is not a lot and we actually need it better so but we also we didn't disagree with the original intent of trying to have a couple of a couple of new categories within the top ten because if the top ten was only ever built off of data it's

always looking back to a year or two or maybe three so we're like we might need some emerging categories because they kind of did that with CSRF no7 andrew added CSRF into the top ten because from talking with other folks in the industry they just knew everything was vulnerable to it we had no defenses for it at that point and it worked it got attention defenses got built CSRF isn't what it used to be in terms of a risk a lot of languages have stuff built in by default the same site cookies or kicking in and so CSRF isn't as much of a concern as it used to be but we also changed it to have a fully open process

in github so part of the process that the top ten that had was you just every now and then it would the project would surface with some output but there was no insight into who all was working on the team who all was doing stuff what decisions were being made what was being discussed so there's Andrew Vander stock and Torsten giggler and Neil O'Neill's going because I can't remember his last name standing up here under lights sorry Neil and myself so and then also being able to put it in the markdown and github it allowed the top ten is one of the most translated OS projects and so it allowed groups to be able to actually

actively translate it so now we have like Spanish French Hebrew Indonesian Japanese Korean it's amazing I've actually talked to folks from France and they basically said if you can't give their developers stuff written in French they won't read it they just won't pay attention to it you can give them English stuff all day long they don't care it's like if it's if somebody translates it to French then they'll read it so we decided to get two forward-looking categories we would open up a survey it was mostly grassroots over Twitter and everything we would do a survey I have this slide because on day one of saying hey we're gonna do a survey we're gonna survey the industry

everybody who can chime in please do tell us in and we'll commit to having two of the categories into the top ten of course somebody goes and asked within like six or eight hours hey what happens if someone games the system like thanks that that's so helpful now everyone now people are like I wonder if I could game the system so this slides here to basically show other than the like initial spike of oh this is interesting maybe I'll spend time like filling out a survey of what I think is important coming up that's not touching the top ten yet pretty much all the other spikes in there are only when we went back and

like retweeted again on Twitter or like hey please fill out the survey so there is no weird anomalous behavior in here and to be honest it would have been hard to game the system because you didn't know how many submissions there were so you either ran the risk of being too low and not getting what you wanted or being so ridiculously high that it was absolutely clear that somebody had gamed any particular answer so we were when we were talking this is a project we are really hoping that we would have one hundred hundred and fifty responses we figured if we got to that point we could at least say this was pretty good we end

up with five hundred and fifty people who were actually willing to go through and fill out the survey to do this influence so the interesting thing was is so we did it in a ranked set up there anybody here fill out that survey just out of curiosity yes thank you by the way at least one so number one and this is interesting so exposure of information so privacy violations so not really surprised cryptographic failures is number two so both of those fit really well into the sensitive data exposure it was a fine it was one that we already have so within the top ten each one is essentially a collection of different cwe's number three became d

serialization so that stood out on its own so that became one of the categories that was added in 2017 authors a bypass essentially path traversal insecure direct object reference so that went into the brokest act broken access control and then the fifth one on there was insufficient logging and monitoring which i found to be quite fascinating that that got identified as a number fifth most important so that became a ten the one that aim here that I I have a feeling will only continue to grow is the one right in the middle the inclusion of functionality from untrusted control sphere it's essentially third-party code that you're integrating into your web app that you don't control if you think about it that

should scare you in some respect where there is the number of times you have site metrics third-party ads whatever you basically let them put code in your app that says hey go pull code from some other server and embed it in the page that's only gonna get worse and that's at from my perspective that's something that I'm absolutely keeping an eye on because I expect that to go up further it's not being abused like it could be yet but I'm waiting for it so one of the big things that we did when we reopen the data call is we switched from frequency to incidence rate so like I said we have a big problem of frequency

when you get data from tools and you get data from humans you can't compare them because tools are absolutely drown the humans when you look at frequency so we looked at different ways that we could try and compare the two and so we went into the space of Epidemiology so part of that's my dad's family practice I grew up with all the large medical terms and everything but incidence rate actually fit what we were doing and that's really looking at how many apps had particular vulnerability types at least one of and so now if a tool finds cross-site scripting an app it's a 1 if a human finds it it's a 1 they're now comparable you now have

whether or not that vulnerability existed in the app you don't get the granularity of knowing is this a systemic problem where you never actually designed for trying to defend against this vulnerability or is it like a you had the framework and the developer goofed or they purposefully turned off the control you don't get that but you do get the ability to go back and forth between a human and the tool so very code was kind enough they three separate years worth of data is a ridiculous amount of data checkmarks chimed in how many people know that fortify is no longer HP just a few so fortify is actually micro focus now micro focus last fall bought fortify Ark

site and a few others from HP so anyway if you see microphone why synopsis contributed bugcrowd contributed they managed to get it under the wire at the last minute they had a lot of discussion and my hat's off to them because they're the bug hunting industry right now is incredibly competitive and there is whenever whenever one of these vendors contributes this data that there is some risk to their business because that allows their competitors insight into some of this data that they wouldn't have had otherwise so I cannot thank them enough for being willing to actually contribute and my hope is it will actually get others to contribute and we can keep growing doing more of

this we actually had data for over a hundred and fourteen thousand applications tested which is to me it's just it's astounding so looking at an incidence rate so we try and blow this up a little but notice something interesting what's number one now it's security miss configuration it's not cross-site scripting it's not that cross-site scripting is totally dominated all of a sudden we have security miss configuration happens in almost 25 percent of the apps tested in that data set cross-site scripting has almost the exact same incidence rate cryptographic issues information information leakage or disclosure then sequel injection I mean seriously sequel injection ten percent of the apps tested still have sequel injection how many years have we

known how to not write bad sequel it's been more than a decade and we still have it in ten percent of the apps but you know why right because sometimes we have to write queries that are too complicated for whatever what ever structured setup we have built for the a particular language so if in the names of backwards compatibility are still being able to do whatever we need to do with the data we still get sequel injection unchecked redirect xx e's were kind of surprised that they showed up all of a sudden but looking at incidence rate we all of a sudden had a very different view about what was actually showing up in applications so all of this is public

you can find it in the OS github if you want to play with it if you want to challenge it if you want to argue with me about it that's fine I don't mind I'm not right all the time I like to be just ask my kids but I'm not but it's interesting when you look at it this way so I put in these are the largest contributors so I've got microfocus checkmarks bugcrowd three years of very code synopsis soft tech anything in green means they found at least one in one app it showed up everything in white was nothing so in that entire data set they never found that vulnerability once and all the apps

they tested so what does that likely tell you they didn't look so one of the biggest challenges I've run into and I that might when I was working at Microsoft so I wanted to work there because I was like this is the beginning of the SDL this is the company that started all of that this is the company that owns the dotnet framework and so if anywhere you can actually fix security at the root it would be there so I wanted to go and learn all that and it was interesting because we had access to the guys who like wrote dotnet wrote calm objects who wrote all that kind of stuff even in the Rosalind compiler

which is amazing now they said one of the hardest things was to get these guys to write test cases I said to font to write a good static analysis test case is exceedingly difficult to actually understand the language well enough to know well whether or not you actually have a problem at a particular point they said we can't pay these guys enough to do it they know how to do it but it it's just so painful for two having how hard you have to think about it and how hard it is to get right that that is where part of the problem is is we're just having a real problem getting people to write the right test cases to

get the static analysis to do what it needs to do so it's interesting so if you look at like in check marks and I'm not picking on anybody specifically they're just easier to see from afar in their data FN occation and security misconfigurations are both zeros so basically they'll never find that right now now this now I have to caveat this this was the data set they sent me last year they may have added tests to that since if you look at vera codes so there's a very obvious one in the second row down in 2014 and 2015 data they have zeros in 2016 all of a sudden they have data so somebody wrote test cases and

they started looking for security misconfigurations now when they started looking they found it in 44% of the apps that they tested that year which tells me it's been there it just wasn't looked for and to have security configuration come out as the most prevalent at the incidence rate and we haven't been looking for it and all of this tells me there's even more of it out there so it's interesting because like sometimes and it's interesting looking at the tool some tools have more breadth than others the other challenge with this is its structured by cwe's so putting all of this data together company store it differently some people will actually associate findings with cwe's and some don't so we

ran in the struggles like we even had so anybody know HP Seven Kingdoms so HP the there guys decided that they weren't really crazy about how cwe's are structured and to be honest there's some challenges with cwe's it's still one of the better things we have I'd rather try and fix it than build yet another standard like you all have seen the xkcd cartoon where they're like we have 14 competing standards let's make the one standard to unite them all and then the next frame we have 15 competing standards too it's like stop making new ones let's fix what we have so HP stores all of its data based on those seven kingdoms that they do not necessarily

cwe's so that's how fortified data is actually collected so when I asked for it structured in cwe's they had to go through and they had to go try and align what they had with cwe's and we were in in this some times where it's like you're your aggregation of all injections is actually a smaller number than sequel injection by itself I don't think you're aggregating worked quite right and so they're like nope you're right and they had to go back and they had to readjust it so the biggest struggle we have is with there's no standards for how we're doing this cwe's the closest we can do at this point but even with cwe's the way

they're laid out you can have overlap and you can have three really smart security people disagree which cwe a problem actually is so let me ask real quick I'm using cwe's as if I'm assuming you know what they mean does everybody know cwe is generally so you know what CVEs are right so see v's is that common vulnerability enumeration so you get the cool little number when you find a specific vulnerability in a specific piece of software cwe's is abstracted a little bit it's like a common weakness and so it is more in general it's not talking about a specific vulnerability that was found it's talking about a vulnerability pattern or more of a more

architectural or more at a higher level so it's are just aside I realize I forgot that one other thing I wanted to do is I wanted to look at the data call results and so I wanted to see what percentage of all of these people that submitted actually ever found a particular vulnerability in an app so like nine ever like in their data so like cross-site scripting is actually probably one of the easier things to find right now at least one right so there are some cross-site scripting that you would have to fuzz for a long time to actually find some of them but there is some dirt simple cross site scripting and quite honestly it's like the most

commonly used a little pop-up alert box of hi or 1 or pwned or whatever so but also almost all of the vendors that found sequel injection or path traversal or security Miss configuration or crypto errors but where we start to struggle is we start looking at insufficient anti automation less than half of the vendors ever found it once in any app or xxe or unvalidated for words or unrestricted upload of files so or insufficient security logging at the very bottom we have less than 20% of the people of the vendors that submitted data ever found that once in that data not like I didn't find it in this app they never found it once in their entire data set so what

can it tell us it was like humans we're humans are still winning right I mean if we weren't around nobody tell the machines what to do so I'm not surprised that humans find more diverse philander abilities they just do we're more creative in that space tools can find what they know to look for and that's a challenge because it doesn't take much of a difference I mean look at a V and signature chasing and how hard it is to write signatures for every possible variant of a particular virus or worm or something I mean it doesn't take much to throw off Chex tools can scale they can scale really well at times but then I run into the same

problem where I'll do different talks about more about the SDL and people are like my static analysis scanner can't scale for my CI CD setup feel like it's trying to run static analysis and it takes it to ours and I need it to be done in two minutes was I got there's no solution to that right now not at that scale but from my perspective we need both we're not at the point where you can just do tools and no humans because you're gonna miss a lot but you can't do humans and no tools because you're gonna miss a lot because you can only look at a small subset of applications and honestly we're not looking for everything we just

aren't I mean we're happily ignorant about a number of things cuz we just we don't have the time we don't have the manpower to actually look for everything that's out there the more languages we write the more complicated we get the more vulnerabilities we have we just don't know about I mean look at look how long deserialize ation was sitting out there before somebody realized you could do it in java and then the amazing thing was look how long it took somebody to make that jump from java.net Microsoft sat there cuz I was there they were like don't say anything about deserialization feel like cuz it exists on net but nobody's made the connection yet and

we're fixing it and they basically got it patched completely before somebody made the jump and they're like hey wait a minute what if dotnet has the same D serialization problem it was like a year between Java and.net for talking about it it was amazing so what can the day to not tell us so right now this is difficult because we don't store this I work for consultancy we don't store this because it's too hard to actually capture this apps are so complicated and convoluted now I'm looking at like ten twelve fourteen different programming languages within the same app trying to identify which language of inversion and framework that we actually found a vulnerability in to

try and attribute to that is like ridiculously hard so what I would love to know is I would love to know which language or framework is more susceptible to what types we know there are certain frameworks that have more default controls in place where developers are less likely to run into a problem but we have some that are far more susceptible PHP is more susceptible to certain types of vulnerabilities than that it's just the way the language of the framework is written our problem like I said incidence rate hides this our problem systemic or one-off that can make a really big difference because when you're looking at remediation or trying to fix something it's a big

difference between oh I goofed and I accidentally made this true instead of false or I didn't use the right method here it's a big difference in the time it takes to fix that versus the time it takes to reaaargh attack something because you never even considered having to put that control in place is developer training effective it's kind of a pet peeve to me really good at developers by using multiple choice do you know what developers are really good at seeing multiple choice but it has zero bearing almost zero bearing I'm sure there's gonna be some but almost zero bearing on how secure the code is at your company after the training the problem is is it takes a certain level

of effort to actually understand that correlation between developers take in the class let's look at the code they do over time beyond that class do we see changes in what's introduced some of it is we just haven't built the infrastructure to do it and some of it is there some companies are like we're not gonna play the blame game we're not gonna identify which developer actually did which vulnerability so I mean wouldn't you kind of want to know at times right so I mean not not the single a mountain fire them be like dude you kind of keep writing some nasty dynamic sequel queries can we help you out with that maybe they got assigned all the

requirements for the nasty sequel stuff where they have no choice but to do that our IDE plugins effective there's an interesting point right so if you get to a developer while they're in the mindset of writing code and you can say hey by the way I don't think you really want to do that right then and there they can fix that and then like really quick within a minute or two if you wait - that gets published to production and then you're like hey by the way good job hashing everything with sha-1 but the company's standard is sha-256 you can have to go back and change that it's like how much more time is it to go back make that

change do the regression testing revert a bunch of hashes or not or migrate users of everything that was hashed versus at the moment of time of typing sha-1 it says no there's a huge difference the amount of time that costs you to fix the other thing I have no information on or how unique these findings are they gave me a number of apps and they gave me number of findings in terms of incidents but I have no idea how many apps are in there more than once I don't know how often I mean because like in vericose 74,000 I just don't know how many times the same app was retested over and over I just don't

have that level of granularity the other thing I had this fleeting thought and now I've lost it Oh bug crowds data is fascinating it was all human generated and human verified right so of all of the data set we had of the ones that people claimed that they had verified and vetted and all of that jazz bug crowds people paid money for it because they paid out the bounty this was all confirmed bounties that they pay so this should be the most accurate data guess what I can't really get out of an incidence rate because incidence rate requires you knowing how many apps you tested bug crowds like we can't tell you how many apps these people tested we can

only tell you what they reported on we can't tell you what they tested and so it became a different kind of a problem or you're like this is my most accurate data set but I don't know the scope and so it's like it do trying to do this with data is incredibly challenging we're still part of the picture and that has to do is we're not testing everything we don't really know what we're looking at half the time so the numbers in this don't matter I just used it to build a pattern because I wanted to show you this so data in prod versus testing so this is kind of something you would kind of want to

expect to see so you start where you're not looking you're blissfully ignorant you know stuffs there but I just don't have the resources to deal with it's fine' gonna look so you start looking so you get this really nice deep cliff you implement some testing within your lifecycle and all of a sudden you have this massive amount of vulnerabilities technical debt and whatever people like to call it so you get to work on it you send people to training you're getting a good steady decrease somebody bypasses the process you get a little hiccup that never happens right nobody ever bypasses the process because you know some executives said we need this out and we're gonna use this cool new tech but

also the other thing to note is it never actually hits zero that that's a fallacy you you will never run out of open vulnerabilities if you do you probably have one app and you haven't looked at everything just don't expect it it needs to be manageable I mean we're we're in the business for security of managing risks not eliminating risk eliminating risk is way too expensive for the business and as much as it pains me to say as a security guy every dollar spent on security as a dollar not spent on the business so when you're looking at security you better be darn sure you can sell them that this is worth the money that they're putting into it so if you

look at defects and testing now notice I didn't call these vulnerabilities I called these defects because these are things that have yet to be released there's stuff you're finding and you're fixing before they ever get published so you have a similar large climb and spike but I expect you just sit more in the middle and you're gonna get spikes every time somebody introduces new languages new frameworks new dev teams new apps new something right it's not gonna be a steady decline it's gonna do this so be careful what you attempt to incentivize your developers for what you reward them for everybody remember the Dilbert cartoon where the pointy-haired boss goes up to Wally and says hey I'll give you 10

bucks for every bug you find Wally's like sweet and Dilbert comes back he's like what are you doing he's like I'm writing myself a new house and he's writing a ton of bug so he can find them all for 10 bucks a pop so be really careful with you incentivize developers for and testers for finding but looking at structuring vulnerability data look at the what what app is it related to when did you find it language or framework what point in the process you found this is really important trying to figure out did I find this when I was writing it did I find this in the initial test or in the build or did

the third-party that I'm required to do annual pen tests with find it for me later in my entire process fail or did I not even have a process to find this before it went to production like I've run into problems where like folks are like with DevOps they're like hey we deploy four times a day if there's a problem we'll fix it like that like that's cool but if you lost four million records out of your database they don't come back like that they're just gone so knowing when in the process you find stuff really tells you where you need to be looking otherwise like if you only find it too late try and see if you can find something

earlier the later you find any type of vulnerability the more expensive it is to fix and that's one of the things like and it's sorry well the next slide is good for soapboxing that oh wait so again with CBS CBS and cwe's how many people know CW SS actually exists like one two okay so Steve OSS is really cool it's like CBS s right so you're CVE gets a particular rating of severity based on all the known components related to it because you know of a specific instance within specific software CW SS is its Big Brother it's the same idea but it's for common weaknesses and many ways actually like C WS s better than CBS s it's just

nobody's ever heard of it it's amazing it's it's out there and it's been out there for a while but it was never really published so one of the things I'm doing in my doodles of spare time with four kids in the consulting gig oh he's trying to build some default CW SS scores for did common cwe's because they just don't exist nobody's actually gone through and written them yeah and then the last in the data structures is verified especially if it comes from a tool did somebody come and verify it or was it just found was it triage did it really exist so I mean when you look at different things and like I said each of

the vendors gave me different stuff and they look different and they smell different we tried to structure them but it's a challenge it really is and it's really hard to do data analysis without clean data and that's one thing that a lot of a lot of times people won't say outside of like inner circles is without clean data you're not going to get accurate analysis you're gonna end up with the wrong solutions and the wrong answers so this is why I was gonna get into a loop for a minute there's a difference between architectural flaws and valen so an architectural flaw is when you've messed up in the design you either didn't put the control in the

right place you didn't even put the control in place those I've never seen anybody who can code their way out of a bad design you just can't really do it one of the stories Microsoft liked to tell when they first started implementing threat modeling is in Windows 7 they had a feature that like some admins had asked for or at least voice of the customer people said admins had asked for so they started threat modeling this feature and they went through what all the controls need to be put in place to keep this feature from being abused by the time they were done the only person who could actually use the feature and he benefit out of it was the

attacker because it was just something that never should be written there was no way to protect it from malicious use and so they cited that for a while because they're like we just through threat modeling realized we cannot actually code this feature they're like just think about what would happen if we hadn't done it rewrote the feature we released it as part of Windows 7 and we had how many hundreds of million deploys and then found out how bad this was and how badly it could be abused so think about it it doesn't require massive like days worth of work but just every time you start to put a feature and be like if I wanted to Beus this

feature how could I abuse it and what would I need to put in place that's reasonable to try and keep people from abusing this feature applying secure design principles I was at a developer conference last week and I asked him I had a room of like 80 people and like 80% of them are developers and I said how many of you know of a security standard that you're held against like does your company have security standards six six hands raised out of 60 some people there's like if if your dev teams don't know what's expected of them related to how old a write secure software you cannot expect them to write secure software they're getting tested

against something at the end of the day when a pen test that they never knew they were supposed to do it's kind of like that nightmare right when you were a kid you have that nightmare you're sitting in class you're your underwear and you're taking a test that you've never studied for you don't know what you're looking at and then if we start looking at data is like what about training data and I count a hit on this a little bit before is like how are you measuring training are you simply measuring it by attendance are you measuring it by like little multiple-choice quizzes before and after are you actually trying to correlate data from training with it vole nur

abilities found within your testing cycles can you create some link between can you track it down to individual teams or individual people in terms of you know knowing where problems are and like I said it's not to like shame them or anything but it's like certain people just may not grasp certain concepts and that's fine but would you not want to help them or be able to write more secure code it's really about how you do it but also within your own data do you know your top ten you know what your dev teams struggle with in terms of ulnar abilities within your company not a global super high level loss top ten but down to their specific stuff so what can

you do so I mean part of it is what story do you want to tell and what data is needed to tell that story so if you're looking at a program or you're looking at trying to figure out hey I need some more help with insecurity in a certain space you need to build the story for that and then you need to figure out what data needs to exist to tell that story do you want to tell how effective training is doing then you need to set up the infrastructure to be able to correlate findings from time into matching up to what is going on with your training program structure your data and keep it

clean that is so dang hard I mean it is meat and potatoes it is just grunt work it's it's dirty in the trenches but if that's not right you're gonna be telling your bosses the wrong things you're gonna make the wrong decisions but oh I think right stories was in there for security related stuff when you write stories when you're doing user stories they just think about it I mean there's a difference between writing a story of like hey users should be able to access their account and download a CSV with users should be able to access only their own account or one their authorized for and download CSV there's a big difference between how you can

code those two just by having a few different words in that sentence every now and then I'll have somebody asked me like is it better to have security sprints or is it better to have security built into each of the different stories and in general I'll tell you it's the second one it's built into the stories if you if you try it because this is what happens this is what used to happen in waterfall of testing we're like we can compress testing it's fine we need the features in place we'll just shrink the testing time I'm sure the devs did a good job at writing it the same problem happens when you have security sprints

because they're like it's not functionality or you build a ton of functionality and then you decide I'm gonna do security sprints to secure it guess what it doesn't work well you can't go back and tack it on later it doesn't work you can do security sprints like if you need to at the beginning build an authorization model or you need to build authentication chunks or you need to build some kind of common framework for security controls that's fine but that's functionality at that point and it should be its own story but like I said in general having that specific language and your stories makes a whole the difference in the world and then if at all possible

try and figure out how to contribute to the top 10 20 20 like I said I have the utmost respect for the companies who are willing to contribute and I hope it only encourages more to do so because I mean we're our problems way too complicated to solve in a ton of tiny silos and we'll never actually get it right or even come close to getting it right the fun thing about Security's we'll never solve the problem we will only ever be playing catch-up with the next tech but we can start trying to be a little bit smarter about how we do it and a lot of that has to do with being able to actually share with

each other there's a lot of people who are really afraid of what they perceive is airing dirty laundry but to be honest we've got to go somewhere and make some progress on that space but that's what I had so questions thoughts Oh open documents as a secured container

so some of the I've seen some people take like the wasp ASVs because it's more of the positive control side and use that to start trying to create standards I it's not part of this deck I have I do more talks on SDLC type stuff standards should really be technology agnostic so try and stay away from language specific stuff that's a good question I don't see a lot of it I there's a lot of companies that will make it and then they'll sell it i waas has some guidance and there's some if you do some Google searches but there's nothing like like totally stands out to me like this is the best starting point it's a challenge it really is when

I was at FedEx it took us four months of meeting the team of six of us to actually create the app set standards within FedEx

so it was Web Apps api's all that jazz but it was static analysis dynamic analysis hybrid but you know humans doing both yeah it had that full set in there yes and no some were and some weren't sometimes people were willing to give us enough of metadata to say yes we attest that these findings were very right and sometimes not so it's a challenge right so then how far has verified me so but yeah I like I said that that's like hard part to get clean data to actually get to understand what you're actually you know yes sorry I can't hate security misconfigurations oh so that's like so most tools or most software when you buy

it it's by default configurations just to get it to work right so you don't want to get your company around and support calls so like Liferay and a number of other like large portals or other things like that they default to like all the services are on all the ports are open the usernames and passwords or defaults and easy to understand because it just needs to work or the customers going to throw it away and not do anything with it so Mis configuration is like s3 buckets that are public that shouldn't be or you never hardened your software or you never change different configuration elements it's that kind of thing that makes sense okay anything

else all right thank you [Applause]