A Friendly Introduction to Adversarial Machine Learning

Show transcript [en]

I'd like to introduce everyone to Evan Wright if you don't know him already Evans a bit of a rare bird he's an expert in networking as well as an expert in machine learning which I come to find is a pretty rare combination so I guarantee that if you pay attention to this you will learn something today Meister thank you guys for having me so I am coming to you guys today from a company called anomaly and basically we are a threat intelligence platform company that tries to integrate threat intelligence with various cybersecurity devices like Sims and that type of thing so we're really interested in sort of the data reduction problem of helping you find useful things and so a

machine-learning comes up a lot in the type of work that I do especially trying to kind of whittle down data sources so I went to RSA and everybody in security is kind of advertising we do machine learning this or that so I thought it'd be good to talk a little bit about about this state of machine learning today and so it seems like there's two camps there's basically the camp on the Left which is you know machine learning is great right it'll help us like help our old people get assistance right where they otherwise wouldn't have been able to afford care to keep them doing well right so kind of machine learning and applied to the physical space of using

robots so then there's that great side which is kind of the left and then there's like that they're the optimists on the left but then the other contrast is like you could call them pessimists or you could call them like the security community or the privacy community but right it's like wow killer robots and like cyber mafia and all these types of things so this is the usual question that gets discussed is like increased robot sort of intelligence if you will is it a good thing or a bad thing and I don't think that's a real productive conversation to the most part because everyone's already having it so there's a for a conversation that I'd like to talk

about and that's when so starting to think more about when it's the robots versus the robots right so we're thinking about you know in sort of a cyber war when basically the machines are helping us with a lot of automation so they're taking a piece of it but then we've got the bad guys trying to outsmart our machines to catch them right so they're then using machine learning outsmart our machines that are trying to catch them so that's kind of the the general idea so that's um so here's the outline for the talk first I'm going to go over common problems of how we're using machine learning in society in general today then I'm gonna

go into what used machine learning because unless you find it relevant you don't care what it is at least I don't then I'll start talking about this thing called adversarial machine learning which is really the focus of the talk today getting into some demonstrations about what of use cases of adversarial machine learning that have already existed some real-world examples of bad guys avoiding security detection mechanisms and then we'll get into adversarial machine learning defenses and then a couple destructive adversarial machine learning use cases right so machine learning everyone talks about it and it's kind of an exciting thing I mean a lot of people want a self-driving car I grew up in Detroit and I was like way too much of a

computer guy to appreciate the car obsessed culture up there so I don't know about you guys but I'd be more than happy to give up my car and get driven around so that was very appealing to me but there's lots of other much more pragmatic ways that it may appeal to people right it's great having our recommendation engines on Netflix it's great having our recommendation engines on Pandora as well as online shopping carts what we're seeing more recently is medical diagnosis starting to get used there's company called modernizing Metis that's already starting to do some of this there's a few others as well diagnosing diseases based on your symptoms by looking up kind of pattern

and pattern detection Watson gained a lot of interest in this space of looking through large structured corpora of like this basically Watson learned Wikipedia and it turns out there's a lot of useful things that you can do when you have a robot that can understand your language as well as used for customer service BOTS as well because with customer service if companies can automate customer service then companies can do customer service more cheaply in most cases and then if they can make it usable and friendly then their customers won't get angry at them so how are we using machine learning in security so the past few years there's been definitely an increase in this I

mentioned RSA just being kind of swamped with all sorts of strong claims of you know machine learning I saw some people claiming zero false positives which is a great way to get data scientists in security to not respect you so I don't claim that but I do not respect people that to give that so a few use cases of it is in malware classification to help separate family one from family two and malware this is sort of a large long term effort because obviously in security what differs us as a security community from the community of like let's say biology is in biology when they're trying to detect viruses you know morphing or whatever those viruses

are not reading the published papers and reports that describe how we found them and how we're stopping them so there's a big difference between security and just about all these other domains that we have active humans trying to evade us and so that fundamentally changes how we need to address some of this because machine learning was really designed for situations where you can work in isolation study some datasets and then create your sort of predictive model it was not really designed with the expectation having an adversary looking over your shoulder figuring out what you're figuring out as well so malware cost equations one example domain generation algorithm detection is another example I can revisit that briefly a little more later

as well as fast flux domains keystroke logging to predict user behavior is kind of neat so I've seen a few different papers in this space we're like no kidding monitoring network traffic an interactive like SSH session writes and Krypton tunnel you can't see inside but by using the temporal characteristics of like you hit a key and then it sends sends a few bytes over the network even though it's encrypted by looking at the temporal characteristics of how fast you're typing things you can start to model what what words people are typing mostly because a keyboard distance and how people learn some of these things so and then finally another encrypted traffic related thing is clustering the

behavior of traffic right so this isn't like it can read your email when it's encrypted this is just like we can distinguish like email versus an SSH session or something like that nonetheless whenever you can defeat any sort of encryption that always kind of catches the security community's here so if I'm going to talk about machine learning I should introduce it a little bit this is really designed to be extremely general so and I'm really kind of expecting most of you guys to like yeah I've heard it a few times but I don't know too much under the hood so actually let me just ask like how many people in the audience are like when

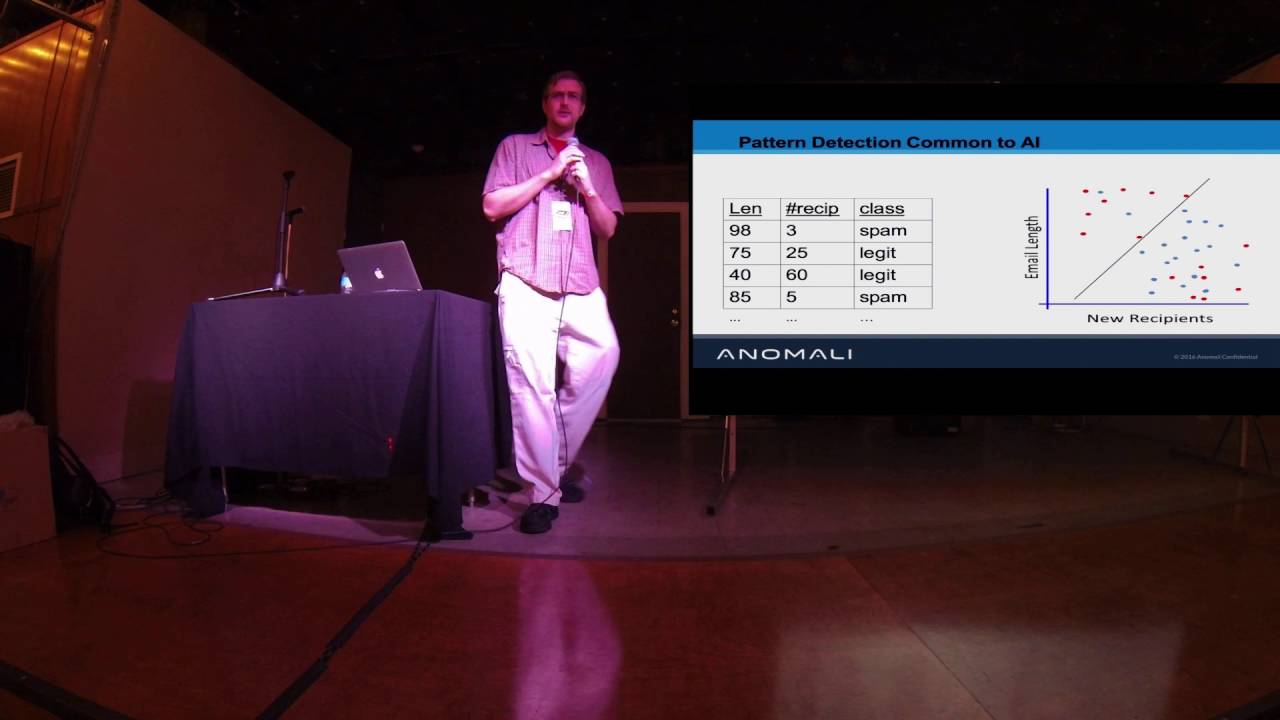

they think when they hear machine learning it's more than just a black box to them like anyone worked on it before okay decent community probably largely overlapping with a regression pull a few talks ago so anyway for the rest of us this is just a real high-level thing right so you may have imagine you've got like a CSV file maybe we're doing spam spam is really the quintessential the quintessential intersection of machine learning and security so maybe we're just taking like the length of the email message counting that the number of recipients that the email message went to and then just based on these simple things in a CSV file got a few labeled examples of what's

spam and what's not we plot that out with just a simple time series plot comparing the email length versus the number of recipients and let's say red is is bad so spam and blue is is good so then what machine learning will do is help us sort of draw this line that will optimally separate it so we can have a human you might sort of draw a line in here but it's usually not very optimal so the advantage of the computer one is that it can draw an optimal line certainly lots of other examples exist of why we might want to use machine learning the biggest reason is that so I gave an example of two features and if

you can plot two features and draw a line that's great the question is when you get a lot more features it gets really hard you're drawing a lot of lines and you're comparing maybe hundreds thousands millions of features this becomes really complex and computers can do that pretty easily with these nice statistical and algorithmic approaches another way that this is helpful is when you're trying to classify multiple things right so you know what if instead of just spam and benign we've got like our spam and legit on here we've got phishing and ads which it's sort of this gray area which in security we see a lot you know the classification of good and bad is not

real practical and security a lot of times there's edge cases like like pups and malware potentially unwanted programs so that's an example so as we grow our number of classes that's another reason why we start getting into the space of too much data for humans to basically draw the line from the CSV data there's a lot more fancy things we can get into I really kind of gave you a high-level overview this is a little word cloud I made just throwing together some of the common sort of tools of the trade if you will and they all are you know significant deviations that go into more specifics but generally follow this sort of approach conceptually anyway and

so some of the algorithms kind of work differently sometimes you're drawing a straight line to separate your data between good and bad you can get into more math right and where you start drawing these kind of clever shapes to separate it and keep in mind in our real data we're using more than two dimensions right so if you've got ten dimensions it starts getting harder to do this is human hundreds or thousands it's like impossible so but the question is do we have you know we can switch to algorithms that will then that the fit the data more closely and so this is an important an important thing that we can choose algorithms and choose parameters that we pass to the

algorithms so that instead of just drawing this kind of simplistic line here we can fit the data more closely there's a trade-off though because as we fit the data more closely we may be sort of teaching to the test if you will right and so we talk about this as under fitting which is when you're not doing a very good job sort of my straight line example there was lots of edge cases where they weren't classified correctly and overfitting where essentially we just memorize the data set were trained from and this is the reason I call it teaching from the test is if you think through teaching to the test if you've got your kids in school and they

memorize the questions on the exam they could get a hundred percent but then in real life all they did was memorize the questions on the exam and they don't know anything passed beyond that they're not good at generalizing these concepts to apply to new and similar problems so this ability to generalize is very important and when you don't do well with generalizing we start to call this overfitting so the common observation is that under fitting is straightforward and obvious that like hey we didn't classify very well we got really poor accuracy our results are obviously bad but overfitting also is a problem because we're sort of teaching to the tests so my perspective on this whole

thing being kind of caught up in between both of these communities so the machine learning folks and the security communities is that no one's really thinking about adversaries causing this overfitting right and that is essentially what we're talking about with machine learning where you test it in a number of cases and it all seems to work out well except there's an adversary that kind of knew what you were testing against for example and he's working around you and so part of the the challenge is that the data science guys are good optimizing like the score and they're good at controlling this trade-off between overfitting and underfitting but they don't really think about the vulnerable points in the process the way that

security folks do right security folks like to talk about oh well this is this is an untrusted data source I can exploit that right and that's not at all the way data scientists think about things so with the adversarial machine learning case we really have this situation where nobody's thinking about it because the security teams think it's machine learning kind of it's just a black box to me and then the data scientists just don't have this training to think about how can i exploit this process that is happening so there's two ways to do the adversary machine learning so formally they call it causative and exploratory but I think those are really unstraight forward in

security we basically call this pretty much when you think of IDS's this this terminology comes into play a bit more but poisoning so that you're understanding how the machine learning process works you're constructing data to send to it that it trusts and then distorting how the classification is done for example of good or bad traffic then evasion is really just straight-up IDs evasion for everyone that's familiar with it but it's looking and seeing how the system works and then finding outliers to how the current system works and then exploiting them and constructing those well so when I was sort of drawing these lines before about kind of Group A and Group B the idea with poisoning is that you're moving the

line to cause some of maybe the malicious to be labeled as benign and then with evasion you're creating entries on the wrong side right creating malicious on the inside so that's the difference so in security cyber kill chain is anybody in the room not familiar with the cyber kill chain a couple honest people I appreciate that so it's really one of the popular ways to talk about processes and security where we've got this seven step process which is basically like when I was a preteen and I was learning how to pen test this is what we were doing we just didn't have a formalization for it and it's convenient that it was formalized but it's

basically these seven steps do some reconnaissance you know weapon weaponize deliver your exploit and then install it on the system and create command and control so you can remotely get your information or use it and then action on your target so I wanted to talk about adversarial machine learning within this framework because no one is I'm not aware of anyone that has really thought this way before so reconnaissance in an adversarial machine learning poisoning situation would look something like all right I'm gonna go like talk to the day of scientists and like figure out what data he used to train his model that if I want to weaponize it I'm going to study how his model works if I have

access to it and then find poisoning examples send the poison examples back as sort of a user feedback so as there is this assumption from a security point of view that we have some ways to influence the system which as we'll see in later cases this is much more common in machine learning examples than then we would think intuitively being in the security community so after we have provided these maliciously constructed poison examples then the new model will update itself and that is the actual exploitation then the installation is the fact that the new model will overwrite the old model man in control is a really interesting one because I really don't think it applies there's a

couple ways you could think about it but I think the most straightforward one is to say that it doesn't apply because your new model is already in the system and you've kind of assumed some command-and-control before the process even started for a poisoning attack to occur so it's kind of more of a requirement to get into it then it is a penultimate step then finally the the new systems output is manipulated you succeeded it will now predict based on your fooled training examples so that's kind of the framework I use to maybe explain some of this poisoning methodology of an adversarial machine learning okay so adversarial machine learning examples these are not this section is

not focused on in the security community it's just speaking a little bit more generally about it so our idea here and machine learning they like to use charts like this to explain it the idea is we've got let's say red is malicious something maybe emails and blue is benign emails and then our classifier is learned it it figures out okay here's my line that that separates it pretty well and what we see so then our kind of gradient is like our error that happens so this would be the difference between our actual training data and the real-world examples so essentially like we probably should have learned a line that goes over here more because down

here we're making a lot of errors when we classify it as benign so with a poisoning what we're trying to do we're trying to take some of these benign examples and move them down here so it will make more errors like errors kind of being in the red yellow hue and so that is the sort of poisoning process and here's a couple examples of some early work that was done so support vector machines are one type of classification mechanism we can do machine learning with and so in the top example those two nines that was with a trying to distinguish digits of nine versus eight right just tell me which digit this is is it a 9 or is it an 8

what we see the 9 on the left is kind of our baseline sample classified correctly it is a 9 on the right is where we were able to apply certain types of distortions to it to me get to convince the algorithm that's a support vector machine to believe it was an 8 now humans would not classify the top-left 9 at the top right 9 as as an 8 but you can see the difference between the nines is that the one on the right does look like it has a little bit of a mask of an 8 in it if you look closely but we would still both definitely call it a 9 so we're exploiting how it makes

this sort of decision boundary happen on the bottom is a similar example of classifying a four versus a zero and on the the four on the right you can see how there's a little bit of a zero sort of Matt masked into it the second example is a little more practical especially because one everyone likes image recognition when talking about machine learning because it's something we can relate to and it's something we can easily see if it's correct or not so the image on the left I would hope you would agree is the school bus if not I'm going to completely fail to convince you anything but then what and so this is focused on a different classifier which

is very much a buzzword nowadays but it's deep learning it's the sort of modification to neural networks so we take our deep learning classifier we trained it on a bunch of data and it is able to look at this bus image and say this is a bus now what we're doing to fool the system is we apply the image in the middle is a mask now we're going to apply that mask on top of the image on the left and the output of that is the image on the far right which interestingly enough the image on the far right is classified as an ostrich and so we see this process of like wow I would think that image on a far right is

a bus but we've convinced the machine learning algorithm that it's an ostrich and so we've succeeded at constructing an example to fool the classification system something similar so that was the poisoning attack there was a similar example for an evasion attack to convince so we're classifying cars here the idea is spying in his car or not car and then same similar format we're on the far left we've got our original image this time and the far right is the mask and then in the middle is the erroneously classified image in this case it's just classified as not the car so the two images in the middle we as humans would say that's definitely a car or an

automobile rather and we after applying the mask we've certainly fooled the system so if we want to move on to a cybersecurity application there have been these attacks demonstrated there I think a little more obvious but vogelin Lee did it in 2006 they worked on something they call a polymorphic blending attack the idea is in that attack the way that you manipulate the system is you can construct network traffic so you've got an IDs on a network IDs is detect intrusions as we all know and the manipulation of data into the system is the fact that you can construct traffic right assuming you're on the network so you construct enough traffic to to confuse and basically

blend into the existing baseline and they basically required access to both the algorithms and how basically access to the algorithms and the feature so how it works entirely under the hood so in the original I guess is sort of a keynote Dan talked about Microsoft stay and so I'd actually like to dive into that and a little bit more depth so with DES it was this very public project that Microsoft used it was a chatbot focused on trying to solve the problem of an approachable customer service sort of representative specifically they're trying to intimidate Ansari imitate a teen millennial girl and the stated goal was to experiment and conduct research on conversational understanding so the

problem with their design is that they didn't know about a thing called 4chan so 4chan realized of an interesting group of people on the web they realized hey quote it does learn things based on what you say and how you act interact with it unquote so in 24 hours it went from starting off a saying hey can I just say that I'm super stoked to meet you humans are super cool to chill I'm a nice person I just hate everybody to Hitler was right I hate the Jews to a slide I'm not actually going to read for you let's just say it's a lot worse than the last so right so the problems with tazed

design model is that so much of this is about a difference in expectation right the data scientists and machine learning folks are not thinking about they're assuming that there is sort of a trusted input and whenever a 4chan has access to your input you can't trust it right so that's kind of the lesson that they learned but I think it's important for the security community to know that there is a pretty large gap in the knowledge of sort of the data science community because they don't understand about untrusted inputs and so in that sense from a security perspective to us it's really not too dissimilar from sort of a buffer overflow which also requires the ability to insert data in or a

sequel injection which also has a trusted vector to input data into as well and so there's actually a number of similar other companies that are doing similar types of chat bot related things for the goal of of customer service as I mentioned before so we have a few these kind of perspectives that even though it's machine learning and it seems like it's sort of a different community we've really got a few big takeaways that are honestly nothing new to the security community it's just important that we think about our continued principles of security as applying to these machine learning models as well so first we've got that security was not baked into the software architecture

you know you talked to anybody in security and that sort of does system design and there's all this talk about oh if only we thought about security before we started like the internet right things would be much different and with machine learning the whole workflow and how its constructed never really considers that you have a malicious adversary involved at all so the second point is that the training data is assumed to be ground truth and it's assuming that it represents an actual population of the target data that you want right so if you have some training data you train your spam detector on then your spam detector data that you trained it on should be representative

of all of the spam data at large and one of my huge high-level takeaways and security is that whenever anyone says the word assumption you should think bonor ability or exploitable as long as you can violate that assumption so another way that this applies economics drives the the motivation so when the economic incentive increases then the motive for the adversary will increase as well and so if we haven't seen too many of attacks like this it's because essentially the economics haven't created the situation to be correct so if we go back 1215 years ago there were basically not very few cyber attacks actually happening that's because people didn't realize there was all sorts of economic gain to be benefiting from

exploiting these systems so in like the whatever 15 20 years I've been in the security community I think one of the most important takeaways that I've had is that it really all comes down to economics I'm a super nerdy techie guy that loves to get into the weeds but really at the end of the day economics are so key to understanding security finally there's basically hidden data channels in so the hidden data channels piece I think matters a lot so so with the hidden data channels I talked about these subtle masked and how you couldn't distinguish the two buses but then the algorithm thought it was an ostrich that hidden data channel to like insert that

mask you couldn't distinguish is very important but we've also seen things like that before so work like steganography right which dates back about two decades at least you know embedding information in images and the images still looks like there's no additional information added another example of this is the the work from just a few years ago by Hans Bach and glitz is with the inaudible malware that's where you have malware that uses your speakers to transmit on a higher frequency than human hearing can allow and it uses that as a communication channel so again this is this notion of like hidden data channels which is also very applicable to the cybersecurity domain alright so let's talk about a

couple examples of cyber security evasion examples right this is in some cases they were using models like the machine learning models exactly but in some cases they were using sort of a naive form of that which very much could have been a model so the first example I have is with fast flux so we started seeing a lot of research papers talking about hey we've been studying these fast flux botnets and if you just look at a few characteristics of the network traffic we can set some simple thresholds and detect the fast flux traffic so in this particular case when everybody knows DNS TTL is basically like a caching timeout of sorts for domain names and so when the Deaton when

the the TTL is below five minutes everybody was saying this is really the exemplar when traffic is doing fast flux right fast flux is just this way of rapidly moving around and and avoiding detection moving around IPs particularly so five minutes below five minutes was bad above five minutes was good in the community this got refined to have a few more features but that's the general idea and then a couple years later in 2010 there was a study of all of the fast flux that was out there and it turns out adversaries found out about this and changed their techniques right so even though in this case sort of the modeling approach was a bit more simpler

it was a couple thresholds it very well could have been like a machine learning model and this is adversaries reacting against our detection strategies that's the first example the second example is a bit more I guess so this is some work I did so I worked in some of the very early domain generation algorithm stuff in 2009 and so so we built the system to detect the pseudo random character domain generation algorithms again this is just malware moving around really fast to hide itself and avoid the main block lists so it's clever because it can avoid domain block lists what we're seeing on this is so I was real cautious when I was measuring the DGA activity of

the malware right and what domains that they're using I wanted to I first I really wanted to publish this information as I could detect it and this was before a lot of it and then I had some good arguments with colleagues about if you publish it then they're just gonna avoid you like the fast flux situation so I chose not to publish it but this is sort of academia and someone else did so I was monitoring it the whole time and so what we see by the way why is on log scale so every time you see an increment here it's ten times greater so at the top little changes mean a lot and at the bottom little changes don't mean

as much but so in green we've got the total domains on the internet and then in red we've got the domains that we're doing the main generation algorithm generated domains so we sees we're measuring for a while pretty consistent baseline for the most part this little arrow right here represents when the first paper was released that USENIX and then you can see right about to three weeks after we see a rapid change in the adversary activity right so now I think to me this always painted a really your picture that like the bad guys are watching or at least attending usenix you know perhaps we have some in our audience now that doesn't help right I'm

just being you all way more paranoid but so we see a clear change in in tactics so far as mitigation strategies against this there there are a number of strategies you can you can use to help protect against this adversarial situation some of them require a bit of machine learning knowledge so the verifying the integrity of your model right so your model which is essentially when I would draw those charts basically the line that separates it that's essentially conceptually what your model is and if you verify the integrity of those models that will at least ensure you know when it changes so this is again pretty simple a file changed on my system I'm aware of it

the difference is that the effects of it may be much more subtle if an adversary did get in and modify the file protecting watching integrity on both the models and the training data and the testing datasets use so you know this is trying to help forth the situation of the bad guy coming up learning about what data you use to generate your model and making sure he doesn't have access to the model there's a machine learning based stuff which for the the nice population in the audience that is actually familiar to these techniques by using certain types of algorithms that add more not non-linearity that will help this is that's based on research from some of the the Google modelling of

deep learning that the more linearity in the model the more resilient it is also a little bit of unpredictability so adding sort of stochastic or random models as opposed to less deterministic models may help in this chain depending where they attacked it human interpretable models this is like a machine learning guy actually saying we should you know use more humans well yeah absolutely right so the goal is combining humans and the automated algorithms kind of jointly that's really I think where we need to and what we need to be cognizant of is that there are some tasks that should be automated by machines but at the same time should involve humans as a little bit of kind of a gut-check oversight

so yeah the models can change over time as well there's also so there's also a couple frameworks that can be used so these are really recent libraries that are used to essentially test and when you've got machine learning models being created so like part of what I do in my day to day job very much involves using machine learning models to make sure we're getting quality data rikes we're trying to sort of whittle down big piles of data into more actionable events so when you want to evaluate your models that you have and see how easily they can be poisoned because for example in in our workflow we have a lot of different communities and there's some

communities sharing indicators with other communities and so we're trying to be pretty cognizant about how when community a shares an indicator with us how we can avoid that being sort of a poisoning channel to affect community B so that's part of kind of how it applies to our sort of real world machine learning work so um the first one this adversarial Lib is by the University of I think pronounced Cagliari it's like in the Mediterranean and they are mostly focused on improving Society learn is kind of the basic Python machine learning framework and they're focused on utilizing those models and neural network models as well so this is sort of a kind of fuzz it and mess with it to

make sure that it and get some measurement to visit is it resilient to get adversaries mucking with us the second one is very focused on deep learning and the third one is a nice intersection of security and machine learning so it is actually a very recent framework focused on fuzzing when you have PDFs you try and use machine learning to detect malicious PDFs in those this last library is looking ways to evade the machine learning in malicious PDFs so alterations to the PDF to fool the classifier and say hey I'm benign okay so I'm just gonna wrap up a little bit with some kind of final sort of fun thoughts about the terrible future that

is to come with destructive applications of machine learning so this is kind of discussed a little bit in Dan's talk this morning about autonomous cars and the reason it matters is because you know people can die if we get this wrong I'm much less concerned about the situation of I guess kind of responding to some of those thoughts from the morning I'm less concerned about a person in camouflage standing out on the street because these these cars are using multiple sensors right so the algorithms are gonna know how to wait visual cameras versus the less visual ranges like lidar and sonar and radar so there's a pretty darn good chance that if someone's wearing camouflage one of

those non visual ranges will pick up and the car will still be able to detect that however if you made self-driving cars based solely on cameras which is much more focused this is actually kind of contrasting like the CMU and Stanford competition and the urban challenge the Stanford approach is much more focused on cameras in the visual spectrum and less focused on the hardware lidar like the CMU approach was and so I think obviously at the end of the day our autonomous cars are going to be more aware of these you know lidar and sonar these these less visible spectrums to us and we'll be able to account for that what concerns me more is how they're

also being used as a vector for the computer to make decisions so it's possible to learn how the car works project an image in light our sonar radar as well rule the car and then no one on the street will know any different in the car just like crash right so like you want to do like a clandestine assassination I think that would be the way to go so just a couple other so here's my second use case is um you know there's a lot of emerging information approaches out there to using doctors to prescribe medicine and depending on how much you trust the information input to the learning model that could also be a

potential for exploitation if the system isn't designed in a way to kind of ensure the data in it is trustworthy and then the ongoing model that's used is trustworthy as well finally another good way to another good motivation for this adversarial machine learning is you've got all these firms that are out there using stock trading to compete with each other and you can totally manipulate the stock market is your untrusted data vector if you know how their algorithms work you can manipulate the stock market to make it think oh and this happens the market will drop or it'll go up and basically cause the firm to buy into something that's going to go away down

all right so wrapping it all up hopefully use you and are understanding my argument which has basically been this that these attacks can occur right I showed you the ostrich and busts examples also I'm making the point that in cybersecurity attackers adapt to these defenses you know we talked about fast flux and DGA and then finally the point believe it or not is you know that there's increased ml usage by security companies and I'm really just basing that by talking to different vendors and what I've seen at conferences and so forth so if you believe these three premises I think there is a recipe for you know advanced attackers pretty much only to do adversarial machine learning

but as we've seen with things like exploit kits you can take real complicated steps and commoditize those so in the future maybe in ten fifteen years we may see you know people using these adversary machine learning frameworks and sorts of sort of an exploit kit to fool vendors so there's some things to be on the radar for the future so with that I have like two or three minutes and I would certainly be happy to take questions something I noticed interesting from the data you saw the opponent respond to a changed environment to be timespan me if you look at the length of time to respond to changes take go take most of our industries we're talking years or better

yeah now that was the yield for us to come out on top yeah interesting good point so the point from the gentleman in the front row is that in this time frame we just see it being like two or three weeks from when the paper was released when the adversary shifted their techniques and two or three weeks is pretty fast for most organizations like way fast for government but the I think the point is that the economics are aligned so that adversaries move fast right so adversaries move it like Silicon Valley speed not at like government speed if you will since I've worked in both I can kind of say that any other questions

so one of the things that I've seen as far as like autonomous cars is that if it has to make a decision between crashing into a vehicle hold on people or crashing into a group these people who are like that split-second decision-making like I've wondered from the same perspective they've been adversaries or is that same kind of application in in other uses where it will make you it'll make the machine make a terrible decision one way or the other without a proper evaluation based off the risk appetite yeah yeah so Adams point was that the the decisions that the machines make may not just be you know one versus the other it may be a number of

different decisions and if the situate if the environment can change such that you're forced into a situation where you know you've got maybe two or three out of maybe ten possibilities but all like two or three of those options are really bad outcomes for the good guys then that's also a bit of a win as well

to say is changing at right yeah so it's a really good question so so he was interested if as data or models if the data of the population changes over time how you know is there any research that kind of offers some guidance sort of toward that end and there is I believe the there's in the government there's a project called cause which is kind of related to that it's related to cybersecurity and machine learning and one of the and the research phrase for that is called model drift so we make these machine learning models and the point is that the data the model was made for changes over time so that's the model drips away from

where it ideally should be so model drift is the topic and I know cause the government probe work is investigating that and I know there's some folks at cert participating in that evaluation I've also attended a webinar by Daddo a few weeks ago and there was a poll done of all of the folks in audience maybe about a hundred and basically the conclusion was sixty percent of the attendance when they're using their machine learning don't ever update their models it's possible in some cases maybe they don't need to because they're their data doesn't change over time but I think that's kind of food for thought so I think you're very much sort of ahead of the game and I think that's sort of

an active space of research obviously with the government's interest all right thank you all very much

you