Evading Detection with Dynamic AI Mimicry

Cool. Well, thanks for coming, all. I'm Darren and this is Mosam, and we're presenting some research we did alongside a couple more colleagues who couldn't be here today, owner Erdigan and Ray McCormack. Specifically, we're going to talk about a framework we built and some offensive tradecraft for evading detection using AI and cloud mimicry, and then Mosam's going to talk about some analysis we did of how that does expose itself in an environment. We won't go into detail on detection; that's not the point of this talk, but Mosam will give you some ideas. All right. So before we get to all of that, how has AI already broken a lot of

our defensive methodologies? Well, last year, in 2025, we really started seeing a lot of malware families utilizing AI for polymorphism. If anyone is unfamiliar, polymorphism basically means "many forms": not expressing yourself the same way every time, or not performing the same behaviors every time, which makes things harder to profile. Polymorphism is not new with AI, and AI itself obviously didn't come out in 2025, so all of this has been around for a while, but it really took off, I would say, last year. Around the same time, legitimate agentic tools also took off and proliferated. So you can think of Claude Code, OpenAI's Codex,

etc. The intersection of those two trends really accelerated this threat, I would say, because (a) you can use AI for really effective polymorphism and (b) you can use AI to write this kind of malware. So let's go into the existing threats in just a little bit more detail, and then I'll get to what we did. All right. There are a few different approaches you can utilize AI for in this area. One would be that you can use AI to trick defensive AI agents and get them to do something, or maybe crucially not do something, that helps you in some way. So

FruitShell was an example of that that we saw last year. Another thing you can do is hijack legitimate AI tools, agentic AI tools, and get them to do malicious things on your behalf: prompt injection, maybe data source poisoning, different approaches, but getting a legitimate tool to perform illegitimate actions. And then finally there's what we're talking about, what we started with but then expanded upon, which is using an LLM API, so a cloud service API for a hosted LLM, to provide variable instructions on how to perform behaviors. This enables that really sophisticated polymorphic malware

approach. So what's a specific real-world example that we saw of this? Well, one of them was LameHug. This was a sample reported on originally by the Ukrainian computer emergency response team, or CERT-UA, and it worked pretty much exactly how I was describing. There was a small implant that ran on a victim's endpoint and that itself didn't have any really defined malicious behaviors embedded in it. It talked to Hugging Face, to an LLM hosted on Hugging Face, and asked it for instructions on how specifically to execute some high-level goals. The LLM provided those commands and sent them back down to the implant, and it

executed them. Then it looped over that process for a while and eventually exfiltrated data out to a separate command and control server. So that's pretty much what I was talking about. Now, being red teamers and researchers, we were like, well, but it got caught. How could we make it not get caught? And the answer we came to initially was that it got caught by network analysis. The victim environment that this malware was found in was doing baselining of their network traffic, and they knew what legitimate services were being used in their environment. And Hugging Face was not one of

them, and neither was this custom C2 server. So that triggered alarms, IR got involved, blah blah blah. I wasn't involved in that investigation, but I assume that's how it went, and I do know from what was published that that's how it got caught: an NDR doing baselining of network traffic. So we thought, well, let's try to advance the offensive tradecraft a little bit here and fix that method that detracted from the stealth. Let's build an AI malware framework. Not real malware, because we're not actual bad guys, but a red teaming tool that does similar things to what LameHug does

to evade EDRs but then also doesn't get caught, we hoped, by network detections, or at least network anomaly detections. We know that enterprises typically use at least one, maybe multiple, legitimate cloud providers. So if we could profile which cloud providers they already utilize and then blend in, we probably wouldn't trigger those kinds of network anomaly detections. We also know that most defensive teams don't really inspect what we're calling thinking traffic, meaning this kind of AI traffic, in terms of the actual payloads, for various reasons. So we went ahead and did that and tested it out, and that's what we're here talking about. All right. So, dynamic AI malware. Now,

there are some prerequisites here that a threat actor has to take care of. You first need to actually create cloud accounts across all the major providers. In our case we used Amazon Web Services; Microsoft Azure (thanks to Microsoft for hosting us today); Google Cloud Platform; and Anthropic's public AI API, or application programming interface. We support all of those, but you could support more. For example, if you know that you're testing in an environment that uses Oracle Cloud, you could support that. Then you need a framework that enumerates what your victim uses legitimately, so that's part of what we built, and determines

what you can blend in with. Once you have that, you're off to the races. You can use it for both command and control and exfil, so you remove not one but actually two potential detection opportunities at the same time. Here's a quick diagram of how this works. It's, if you'll notice, rather similar to the LameHug one, except with a lot more cloud provider options, and you pick one, or rather our framework does that for you. So that's the idea. We called this framework LL MALJ. One of our collaborators likes funny names for projects, so hopefully you all enjoy

that as well. And yeah, this is pretty much how it works. It's also a minimal implant without much built-in logic. So if you throw it in a malware sandbox or try to build a profile of its typical behaviors, well, every time it runs it'll behave differently, so that's hard to do. But it also does the dynamic mimicry feature we talked about and reaches out to a wide variety of different cloud providers. And then finally, once it's done that, it operates with a pretty standard, I would say, reasoning loop that my colleague Mosam's going to tell you about. So let's dive into the

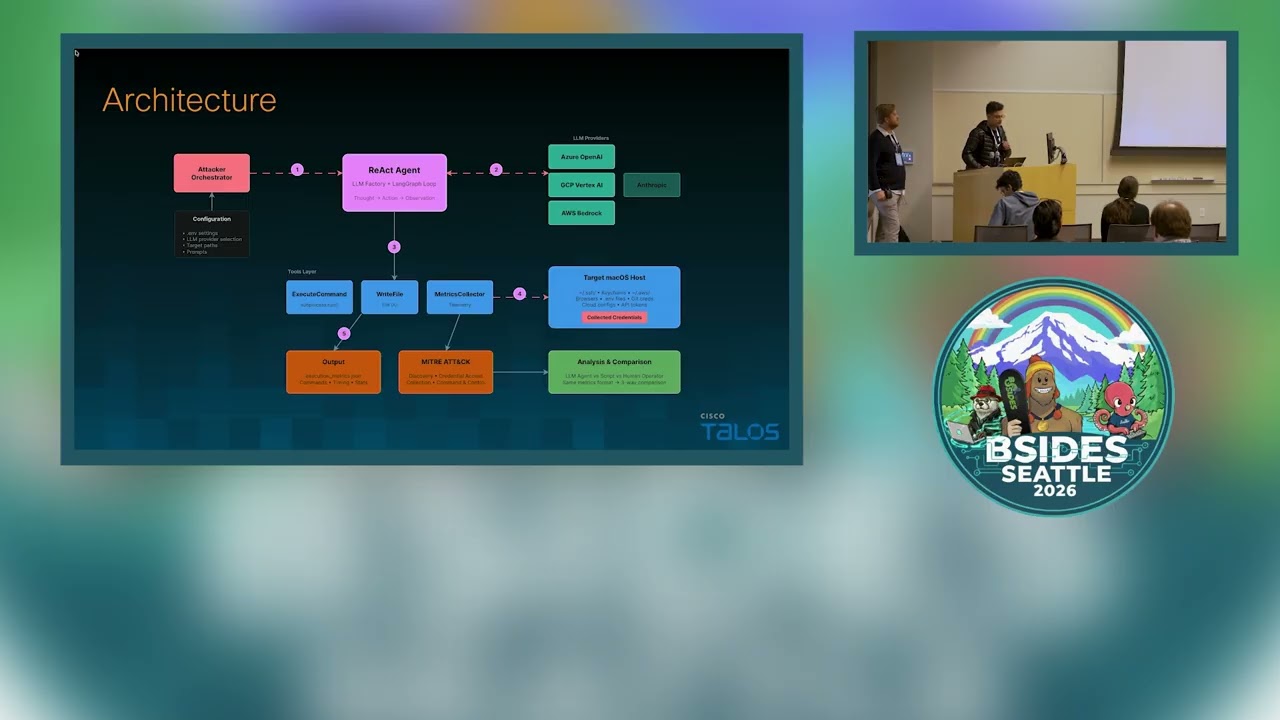

architecture. The key element in the architecture is this ReAct agent, which stands for reasoning and acting. The reasoning part happens at the LLM layer, which Darren outlined how we selected, and the acting happens at the local tool level, where we have tooling to execute commands and write out the credentials that we have identified on macOS. We also have some tooling that enables us to collect some metrics, which we use for deriving useful insights that I'm going to show. So let's see what it actually does. It goes through phase zero, which Darren highlighted, where we are identifying which LLM provider the

victim is using, and from here onward it tries to enumerate all the credential files on the macOS system. It goes through these steps, and each step is a ReAct cycle, which I'm going to go over in the next slide. Here it is going through these folders, if you will, or the areas where different kinds of credential files reside. It goes through each of them thoroughly, and the result is the full list of where important credential files reside. In the second phase, it is passed on to the agent, where it gets compressed into a zip file, ready for phase 4, where it gets

exfiltrated. Now let's look into the working of one ReAct loop. Almost everything that happens in our agent is this loop, and all it does is two steps. One is reasoning with the help of the LLM: it asks the LLM what to do. The other is executing those steps, and whatever the finding is, it is fed back into the LLM to ask for the next action to take. Let's say we are doing this cycle for the enumeration. The question the agent asks the LLM is what credentials exist, and the LLM does its own reasoning and tells you that you should check this SSH folder first. The agent uses its tools to

take action and run this command in order to find what is in this folder, and as a result we find these files in the folder. The agent may decide that it wants to continue; if it does not, the result is passed on to the next phase, and if it does want to explore more, it runs another cycle. The important point I want you to take from this slide is that even though we don't know how the LLM is thinking or how it comes to its conclusions, we do have a very distinct footprint, or fingerprint, specific to this architecture, because it is making a continuous call to

the LLM and then executing some tools on the endpoint itself. Keep that in mind, because it's going to help you in the next slides: that gives us a signature we can use to fingerprint the thinking process of these agents. So let's go over some of the insights we gathered from the telemetry that we are collecting. We did this experiment where we had this agent compete against humans and a static script that we had written. The purpose of all three actors was to enumerate and harvest credentials on a Mac machine. Typically, an agent took 18 commands to complete this task, whereas a human took about 12 commands and

the script was constant. We did not use any framework, so that was a constant; it always ran those 26 commands. The second one, the timing signal, is the interesting part. That's where the signature I was talking about in the previous slide comes into play. We saw that there was this consistent gap of two to six seconds between the running of two commands by an agent, whereas this was very different for a human, because we as humans take breaks, we make mistakes, we go Google things or GPT commands and try to research what next action we should take. So that was more of a bell curve with high

variance. With the 10 or 8,00 minutes of execution time, you might find it strange that the agent takes more than a human, which is about six minutes. There is a reason: the instructions provided to our test human were very limited, whereas the agent was very thorough, very greedy. It was trying to find every single directory on a MacBook, so that's why it took time. It also has to go talk to the LLM, so latency and network delays were involved, and that's why that time is higher. The recon ratio is also a very interesting aspect, because we wanted to see how much

time the agent spends in each phase, and it was spending about five times as much in the recon phase, meaning, again, it was very greedy, very aggressive, before it actually compressed the files themselves. The Jaccard similarity is an interesting one because it highlights the polymorphic nature of our agent. If you are not familiar with Jaccard similarity, consider two circles. If they completely overlap each other, then the Jaccard score would be one. If they are separate, then it would be an ideal zero. So a score close to zero indicates that between two runs of our agent we did not find any

similarities, which indicates that we cannot generate any signatures for detecting this agent. The last one, the 46% recovery rate, is: when an LLM made a mistake, did it recover? We are only measuring the next command that it ran after a failure; we are not measuring the entire process. So the enumeration and harvesting process may have succeeded in, like, the fourth or fifth run, but the immediate failures were recovered 46% of the time. With these insights, let's see how our defenders can fight this attack. Before I give you some defense mechanisms, let's reiterate why

this trick even worked. The trick is mainly that the attacker is sending the AI traffic to its own tenant, and tenant-level information is invisible to a firewall. The firewall only knows that this traffic is going to this IP, which is a Microsoft IP, and we have whitelisted it. So it just lets the attacker's traffic through the same as your invoice traffic. That's why we are invisible at the firewall level. On the endpoint, the EDR misses it because it is looking for malicious shellcode or some known exploitable pattern, some signatures, and our implant does not have any of that. It is Python

code which uses standard libraries. It does have an orchestration framework, but that's used by many AI agents, so there's nothing malicious there. It is polymorphic in nature, so you cannot create a signature, because the next time it runs it morphs itself. We saw that with the Jaccard score, which is close to zero. The third and most important one is why the LLM is operating in the first place, because if you ask an LLM, "Okay, provide me instructions to exfiltrate data from SSH to my server," it would simply refuse. But if you break it down into smaller steps and ask it that way, then it provides you the answer to

each of those steps, because it does not have any memory. It does not know the intent that the agent has in mind, so each step is benign, but the entire chain is what makes the attack possible. With these three things in mind the attack is possible, and that means if you can block these, the attack would be blocked. So that's the motivation. If you look at this attack, you notice that your EDR is not able to detect anything, because there is no binary to scan and there are no signatures. The firewall is obviously whitelisting your traffic because it is going to a known

cloud provider that you allow. The NDR is also blind because the traffic is encrypted. So the connection is established and you have a C2 channel. We are making some assumptions, obviously: we are assuming that your organization is not doing TLS inspection, because if you are doing it and you are validating that the traffic is going to your tenant, then this would be blocked. So let me stop here and ask you: are you doing that in your organization, doing TLS decryption and then validating that the traffic is going to your account? >> Oh, only two. >> Two. >> Okay. So that means this is something to

look into and validate. Another way to validate that, actually, I'm going to explain in this slide. Notice how eager this agent was. It was trying to look into every single directory and it was very aggressive, which means you can deploy and every >> Okay, I will just go quickly and give you the best defense. If you inspect traffic and ensure that your traffic is going to your tenant, or use an AI proxy, then this would definitely kill this attack, so I recommend that. As for where this field is headed, we are seeing multi-agent coordination on the attacker side. You are also seeing

embedded models: when you bring the models to your machine, then that signal or signature that we saw on the network just disappears. Then there is cross-platform mimicry, and then prompt injection for lateral movement, which means you don't need such an implant; you can use existing AI tools and inject into them. On the defensive side, we are seeing intent detection, like an intent firewall, to identify what the LLM is actually trying to achieve. Then there are some baselining efforts to identify the normal traffic for your organization, and then there's this movement toward zero-trust AI. I would leave you with this quote, which accurately

identifies the root cause of the problem. You cannot identify how your AI is thinking, but we also noticed that that thought has a signature, and we can look for that thought. With that I conclude. >> Thank you.
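
For defenders who want to experiment with the two telemetry signals described in the talk (the consistent two-to-six-second gap between agent commands, and Jaccard similarity between runs), a minimal Python sketch might look like the following. It is an illustration under stated assumptions, not the speakers' tooling: the function names, the `min_fraction` and `min_gaps` thresholds, and the input format (command timestamps in seconds, and command strings per run) are all hypothetical. Only the two-to-six-second band comes from the talk.

```python
def inter_command_gaps(timestamps):
    """Return the seconds elapsed between consecutive command executions."""
    ordered = sorted(timestamps)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


def looks_agent_paced(timestamps, low=2.0, high=6.0, min_fraction=0.8, min_gaps=4):
    """Flag a session whose command gaps mostly fall inside a narrow band.

    low/high reflect the two-to-six-second gap mentioned in the talk;
    min_fraction and min_gaps are hypothetical tuning values.
    """
    gaps = inter_command_gaps(timestamps)
    if len(gaps) < min_gaps:
        return False  # too little evidence to judge pacing
    in_band = sum(1 for gap in gaps if low <= gap <= high)
    return in_band / len(gaps) >= min_fraction


def jaccard(run_a, run_b):
    """Jaccard similarity of two runs' command sets: |A & B| / |A | B|."""
    a, b = set(run_a), set(run_b)
    if not a and not b:
        return 1.0  # two empty runs are treated as identical
    return len(a & b) / len(a | b)


if __name__ == "__main__":
    steady_session = [0, 3, 7, 10, 15, 18]      # gaps: 3, 4, 3, 5, 3
    bursty_session = [0, 1, 45, 50, 200, 201]   # gaps: 1, 44, 5, 150, 1
    print(looks_agent_paced(steady_session))
    print(looks_agent_paced(bursty_session))
    print(jaccard(["ls", "id", "whoami"], ["ls", "pwd"]))
```

A score near zero from `jaccard` across runs matches the polymorphism the talk describes, which is why the pacing check, rather than command-content signatures, is the more promising signal in this sketch. Real telemetry would need per-host baselines before any threshold like these could be trusted.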