Teaching AI To Protect Planes - Matthew Reaney

Show transcript [en]

okay so uh I am Matthew REI I am a first year PhD coming to the end of it so what I'll be presenting today is sort of what I've been working on uh in my first year in um cyber security with AI um so the goal of my research is basically to assess the viability of using um AI techniques for network security and in particular I've uh I'm focusing on Cyber physical systems and I've chosen an aan um or aircraft Network um for this and the real motivation behind this is that modern networks are well they're really large nowadays and they're very Dynamic and uh literature shows that some of the old rules based techniques and other

approaches are maybe getting a bit outdated um especially if you have ai parted uh adversaries um so without further Ado if we can all make sure um we've got our seat belts on on stay seated during the duration of my talk um so I'm going to start very simple with some uh AI stuff and to sort of give a bit of context to the problem um of teaching things uh to AI so um we've all seen classifiers or hopefully know the idea but you basically train them on something and to do a task so for this it's uh classifying fish um so we train it on fish and then we test it on fish uh and

hopefully hope it says this is a fish and then if we feed it something that's not a fish it hopefully says this isn't a fish so by looking at the accuracy Alone by something like this we can say that look works really well but if we um maybe put in something bit more unconventional or something new that comes along into our under our training or testing set um for example that this image here of a fish and we say here we go it's that's a that's a fish um we we can look sort of deeper than just your metrics or or trusting this and try and understand the the behavior um for this example I'm it's really trying to say

that this is learning the water rather than the actual object um so um behavior is is the real key that you need to make sure that the AI is being taught the right Behavior not just the right result um so my particular um problem scenario um so I've collaborated with um Roy um it was uh going on a bit before I started but I came onto the project and um had various meetings about the various bits and pieces that would make up an aircraft Network um so uh the first part we have is like a a history in that's called the management and just logging all the communications and then we have an auto throttle which is kind

of like the brain or the calculator taking in lots of data and then telling um the computer or what the plane what what to do and speed up slow down and then we've got uh no clue what that is uh we've got the uh the fadec which actually directly interfaces with the uh engine um and and does the control and this communication between the fedck uh and the auto throttle is really important um so that's that's the thing that we want to protect here in this um scenario uh we also have our blue team or um sort of our monitoring software that looks at the network and to mirror that um we have an adversary

um so they might be coming in um via an external network link maybe there's Wi-Fi on the plane um more likely it would be the ground link um of how the actual you know people who manage the airplane would would get access to it um so the adversar objective in our in our problem scenario is to disrupt this communication um between the the auto throttle and the so there's no there's no thrust information and no telling it to speed up and slow down and the adversary goes through various steps of um the you know miter framework reconnaissance um privilege escalation and we've got an eight-stage attack and if they succeed and complete critical failure well it's no good for our

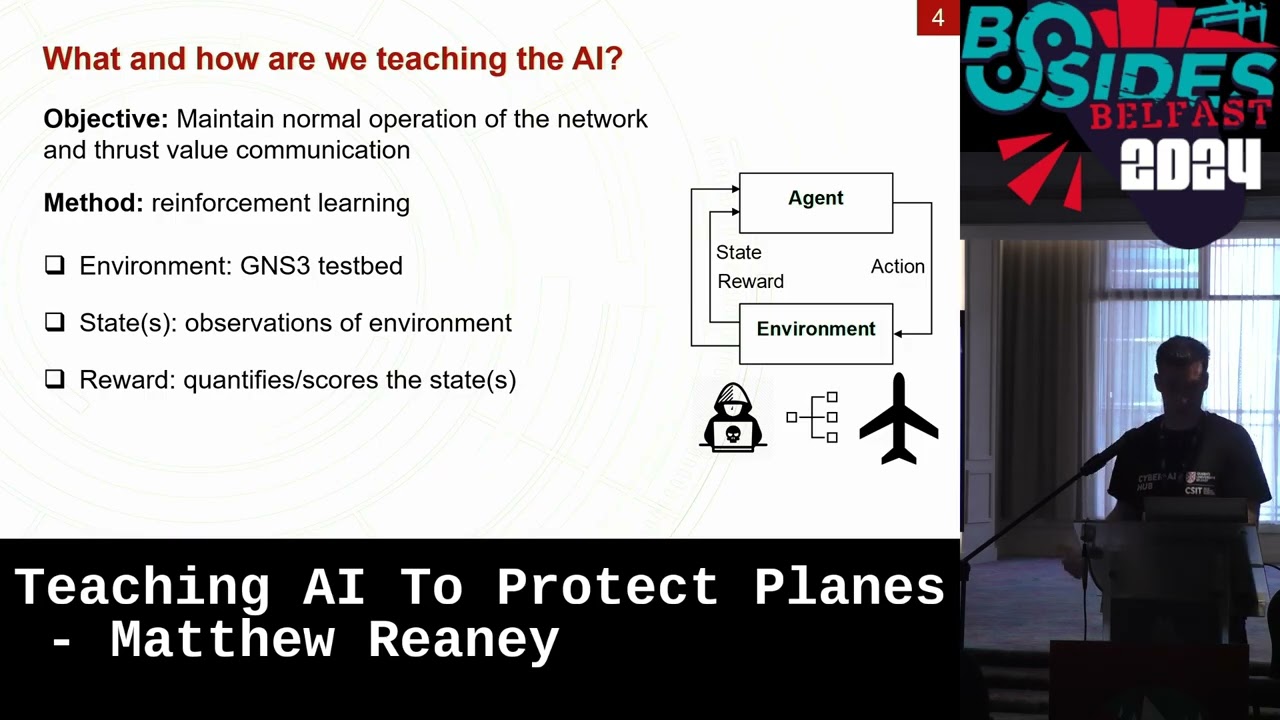

scenario so now we know what we want the trainer to do um which is maintain normal operation of the network and the thrust value communication so we can't just say it must stop this at all costs it also needs to not stop how it normally functions and the method that I've been looking into is reinforcement learning and particularly uh deep reinforcement learning but I'm just going to explain the basic type um so it works in a loop um and the the first component of that is the environment which is sort of that problem scenario I just showed and we have implemented it in a software called gns3 um so it's an emulation of all those components so it's made up of

various Linux systems um routers and we've got a PLC for our controller um being a maybe debing system so they're all running in real time uh and it takes maybe 30 seconds to a minute for the adversary to succeed so it's maybe going a minute or two uh per scenario um and from this environment we can observe um things from it which we call States so these make up various different things like it might be our alerts it might be the status of our our uh computers turned on and off or our systems on and off um and we also then want to quantify these is this a good thing that this is switched on is it bad that it's switched

off um you know are the alerts good no um but we we need to quantify that some way and we do that by defining a reward um and then our agent is basically our our blue team in this scenario um acting as our Defender and um they take actions um which is going to be like threat response um or you know what do you do how do you you know fix the problem uh and this action is then driven by your reward it want the agent wants to maximize their reward to be as high as possible rather than something like accuracy in the classifier show this goes off reward there isn't really an accuracy that you can

use so as I said you're you're going to use reward as your main metric and ideally it starts off low the first few times you train it on this scenario doesn't really know what it's doing but it should figure it out and then it'll learn that and then Plateau um but we don't want to just look at reward this is the same thing as just looking at accuracy we want to understand the behavior and we can do this through few different ways so we can go deeper than just this we also have all the state information I was talking about and we can get insights from that of what the actual state of the network is rather than just always

trusting that the reward is high so everything's perfect um and as said the behavior you can start to picture together once you gather all these things and look at the actions that the agent is actually taking to see is this a good action to take um and and for us looking in and it's often quite obvious so um does it work well my first example um we see the reward is quite low and then it goes high now there's an onlay in there we all have those uh in our data um around 80 um but it looks pretty good so far um but if we if we look at the alerts again we go we bit

deeper and we can see okay there's a few alerts at the start but they stop so maybe it's maybe it's solved the problems you noring this one too um if we go on and say okay well what stage did the attack to get to oh it's still it's still very high um it doesn't seem to have solved the problem what's the what's the AI been doing oh it's been uh it's been blocking the connection to the security node um so it's been stopping all the security logs coming in so essentially um in the event of a fire it's turned the fire alarm off um so yeah uh and another example here um to go to go through this quickly gets a

good reward we go straight to the attack this time oh it's starting to decrease maybe it's figured it out let's look at the number of computers turned on that seems to have decreased a little it's turned off our management so the system's still working but it's just turned off the the historian um so it this is better but you're still disrupting the normal operation you're going to you know have to go and look to see well what was what was it up to all the information's not being stored anywhere um so yeah uh does it work uh yes eventually so these are the results from my um first uh publication through my PhD um and we can see it gets a bit

better um with the reward the attacks are down we'll look is it turning things off that it shouldn't be um no they're all still okay well what's it doing it's resetting the router that is the external network uh gaining or how you gain access from the external network so it is um in doing that um resetting that it puts the firewalls back up that the adversary's taken down during his attack which has sort of stopped him from from getting in and getting any further so it does eventually come to a a solution that is good um takeaways from this I hope I've sort of given you some insights here um of how I've created this um how the

training works and some of the challenges faced and I'm only in my coming to the end of my first year so there's a lot of future work to be done on this um is this Sol going to solve all problems no uh the first thing that comes to mind this is one attack that it's trained against and I don't think uh there's only ever going to be one attack uh and I'm I'm sure you know that too um so um there's lots more to be done that uh that I'll be looking at um but but uh takes me to the clothes uh of my talk um so if you want to check out more about this you can find my um

paper about it here um or I'm I'm happy to speak about this uh later um I don't know any time for no time for questions you'll have to find me later or or read the paper um okay thank you very much folks