AI Cyberoperations: Boosting SOC Efficiency with Artificial Intelligence


It's a pleasure to introduce Gustavo Gómez. I don't know who was at B-Sides last year. Do you remember? We even sang him his birthday song there. Gustavo has been collaborating with us on everything community-related for several years. Last year we were at Microsoft's facilities in Bogotá, and Gustavo was one of the people who helped us with all the logistics for the event. Gustavo has been a cybersecurity professional for more than 15 years, he works at Microsoft, and today he is going to present a very interesting talk that is also related to a topic we covered in the training for those who attended: how to increase the capabilities of

a SOC through artificial intelligence. Yesterday we covered threat detection engineering concepts, what that maturity looks like, and where organizations now need to effectively reduce response times and impact. Gustavo is going to approach this from the artificial intelligence angle. So, welcome, Gustavo. Thank you very much, and I ask you for a big round of applause for Gustavo. Thank you. Well, I'm a firm believer in community. For me, expanding knowledge and being able to share it is one of the greatest joys we can have, and I know many here feel the same. What I've tried to work on, partly as research and partly as my job, helps me a lot to make

a call to study more, to collaborate more, to come together more as a community, so that everyone's knowledge base grows. About the movie reference: those who have been to some of my talks know I always bring one. For this talk, it's about how we imagine cyber operations in terms of artificial intelligence. I don't know if it has happened to you in the last two years, when we have had a lot of interaction with artificial intelligence, with ChatGPT and with everything around LLMs. Just as there are people who benefit from it and use it day to day, there are many people

who are still skeptical about using it. My family are not technology people, my wife is not a technology person, and it scares them. It scares them to ask ChatGPT something, wondering what it is going to answer. And what many people think, and this is a very general comment, is that this is coming to replace us as people, that it is coming to take away jobs. The answer, at least so far, is no. It is about how we lean on these new technologies to improve our day to day. I asked about it before I started preparing the presentation, and many people still think of Skynet. That's why the Skynet reference, for those who are a little over 30, or 40-something. Everyone

thinks of robots operating these technologies, displacing people and taking things to another level. But in reality, no. In reality, artificial intelligence in cyber operations is helping to balance the security equation a bit. We saw it in the opening presentation, Marco commented on it: the adversaries are much more organized, they have training cycles, they are forming groups. And on the blue side, if we can call it that, we need help. There is a huge shortage worldwide when it comes to helping protect organizations, or us as people. And for me, and I know for many, that is what artificial intelligence came to do.

I'm Gustavo Gómez, I'm a specialist at Microsoft, and I work in cybersecurity. A little over 15 years, Luis, but yes, dedicated to it for more than 15 years. We're going to be working on cyber operations. What I'm going to show from here on is: a proper introduction to how artificial intelligence is going to contribute to the different roles; also that shortage, and I know many of you like it when we put "cyber" in front of everything, cyber talent, cybersecurity, cyber infrastructure, so that's why I wanted to call it cyber talent, which is the shortage we have at a global level; what the adversary sector is doing, what those on the other side are doing to be able to affect

us as people and as organizations; what artificial intelligence has to do with automation; and, I hope it turns out well, a practical demonstration from some research, and some conclusions. In terms of concepts, in case there are students watching the session: the Security Operations Center is the unit within an organization, which can be owned by the organization or outsourced, that does the analysis of and response to security incidents. Its main purpose is to monitor and respond to those incidents. Here you see a security operations center in Lego that is available. No, I'm kidding. It's not true. But they have, and we have, a lot of challenges. Those challenges are that the

threats are evolving, the attack vectors keep growing, and our capacity in security operations centers keeps getting smaller. One reason is that there are many elements we have to take into account: many technologies, many attack vectors, the cloud, migration to the cloud, those still working on-premises, and, as was mentioned just now, OT, for those who respond on the OT side. And talent, which we are going to see later. Also, and I don't know if there are people here who operate security operations centers, there is what is called information fatigue or data fatigue. We are talking about thousands, if not millions, of security alerts that arrive from the network, from the cloud, from identities, from devices,

and that fatigue makes us go blind. We can't see what is really happening in the organization or company; we get overwhelmed and go only to the highest-severity alerts, or those categorized as highest severity, but in reality the process is fatigued. Also, the processes are disconnected. This is a reality that has been visible for some time: for every ill that happened or that we found within our organizations, we applied a cure, a technology that addressed what I learned from the incident or what I wanted to protect. If I wanted to protect servers, I had one technology; if I wanted to protect the endpoint side, the devices,

I had another technology; if I had network issues, I had a firewall, an IPS, among others. What happens in the end? What is adding to that volume of data and alerts we are getting? The fact that we have disconnected products. If you go to the literature and look at major security incidents worldwide, most of them had already been detected by some technology. It had raised its hand a while earlier and no one had seen it. Nothing was done, yet the attack kept happening and kept moving along because a disconnected process did not trigger an action, did not trigger a response. And then there is the rapid evolution of threats. For me the adversary sector has not changed that much, not as much as the technology is changing, but they go

further than us, they are ahead of us on the blue side, and in the approaches they can take. Hence, the concept I want to bring you here is precisely the generational transformation that artificial intelligence is bringing for those of us who work in security operations or organizational protection. It is a transformation toward consolidation, where I can have an artificial intelligence on my side to help me. It's not that we are going to hand everything over and replace all the people. But what we are going to see in the market, and you will see it in your own specific needs, what we are going to face in the

market, is a trend toward integration. That integration means security vendors will want to cover more fields of action; they will want to be able to protect more and more elements. Why? If it is not integrated, I will again have disconnected threat signals, a large volume of alerts, and none of the traceability I want. And it is a multi-vector trend. Very niche vendors will possibly be acquired or merged with other vendors to reach this technological consolidation. And this is where, as people focused on cybersecurity, we must also consider that we should be

looking at where the market is heading so that we can also plan our own knowledge. And what will actually help, or what we want from artificial intelligence at this moment, is greater speed, working with the context organizations need, and having, well, it's not hype, an augmented experience: an augmented version of the capabilities that we, as security operators or as people focused on cybersecurity, should have. So, is artificial intelligence going to be the solution? We don't know. Let's look at it and explore it, but there is a market trend toward using it. And possibly those of you who are in corporate environments, or focusing on corporate environments, are seeing that businesses

are using artificial intelligence, or have been using it for a long time. Look at Netflix: it is based on artificial intelligence. Everything to do with Facebook, everything to do with WhatsApp, is using it. Only now, though, are these technologies being brought into production processes to accelerate them. This is a Gartner report on cybersecurity operations and what the future of those operations should be, based on cloud, as cloud native. Many organizations are already cloud native; they don't even have a server and never went through the stage of working on local networks. They started working directly with cloud processes, and we see that in the market. What Gartner shows is that we are

starting to work on this, and by 2025 we are expected to have some federated assistance for cybersecurity operations. But the objective, both in the market and in the vision we have for working with cybersecurity and artificial intelligence, is to reach 2030 with preventive operations based on artificial intelligence already in place. For the moment, and you can read it there, artificial intelligence can't take an action on its own. It is not going to put a direct block on a firewall or stop a threat, absolutely nothing like that. Based on certain conditions it can take some actions, but many of them must already be modeled by the users.
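
To make that last point concrete, here is a minimal Python sketch of actions that are "already modeled by the users". Everything in it is an assumption for illustration: the `APPROVED_ACTIONS` playbook, the `Recommendation` fields and the 0.8 threshold are hypothetical names and values, not part of any product mentioned in the talk. The idea is only that the model recommends, and nothing runs unless a person approved that action for that alert type beforehand.

```python
from dataclasses import dataclass

# Hypothetical playbook: actions a human analyst has pre-approved per alert type.
APPROVED_ACTIONS = {
    "phishing": {"quarantine_email", "open_ticket"},
    "malware": {"isolate_host", "open_ticket"},
}


@dataclass
class Recommendation:
    alert_type: str    # category assigned to the alert
    action: str        # action the AI assistant suggests
    confidence: float  # assistant's self-reported score, 0.0 to 1.0


def decide(rec: Recommendation, min_confidence: float = 0.8) -> str:
    """Return 'execute' only for pre-modeled actions; otherwise hand off to a person."""
    allowed = APPROVED_ACTIONS.get(rec.alert_type, set())
    if rec.action in allowed and rec.confidence >= min_confidence:
        return "execute"
    return "escalate_to_analyst"


if __name__ == "__main__":
    print(decide(Recommendation("phishing", "quarantine_email", 0.93)))
    print(decide(Recommendation("phishing", "block_firewall_rule", 0.99)))
```

In this sketch a firewall block is escalated even at high confidence, because nobody modeled it in the playbook, which mirrors the limitation described above.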

In terms of, and I'm recalling a talk by Chema Alonso that I watched a few months ago, Chema Alonso said that, at least for Europe and in some scenarios, the use of artificial intelligence in the military sector was already being considered. Although not much is known, almost nothing in fact, about that type of technology, there was a definition that in military operations artificial intelligence was not going to be used to make the decision, that is, to hit the red button. And if everything goes well, it won't happen. It won't happen, or at least not yet, in theoretical terms or in terms of the definitions governments are adopting. But at the cybersecurity level, there is a path

toward 2030 with the possibility of taking more action. The 2024 trends, which came out a couple of months ago, also showed precisely that organizations were going to start connecting artificial intelligence models to help with analytics and with information processing, to offset the talent gap we have in the face of so much information, and to be able to take action. From there, a responsible use of artificial intelligence is recommended, and this is one of the messages of this talk: who are we handing our information to for analysis? We have to decide whether it is valid or not to deliver to a third party or a public

base the information of my organization about security incidents. Wouldn't that open more gaps? Couldn't it leak? We will see that later. Even so, 55% of organizations are already moving artificial intelligence systems to production, not exactly for security, but for user interaction and customer service. And the message here is: what will help security operations centers the most at this time are large language models (that is the official translation, also made by ChatGPT), which are what turn user interaction, not messages exactly but text communication, into something that goes and analyzes information and can also

respond in readable text that users can understand. For those of us who have been around a long time and have lived through different phases of cybersecurity, this is one of the moments we perhaps wished we had had long ago: being able to interact with something that is an artificial intelligence, that understands me, and that answers me in the same technical terms I expect. Language models lead us to a need that we must address as professionals, not only as security decision-makers: to increase our ability to ask questions, our ability to generate prompts, our ability to say something to

receive an answer, or rather the answer that I want. It is not only a new trend but a professional need. In a few years, the security professional with the widest field of action will not necessarily be the one who has all the knowledge or who answers like a robot, but the one who can ask the right questions of these interactive systems and get an effective response in order to take action within the company. Some time ago, in job interviews, asking questions was an issue. Sometimes candidates who asked a lot of questions were ruled out: "What's going to happen? Where is it?" Now, those who ask the most questions, the ones most inclined to ask

about everything, the most inquisitive ones: prompting is giving them a huge opportunity to work in cybersecurity. Large language models are used for many things, starting with code generation. There are possibly developers here: I describe the sequence of statements I need, or the objective of my program, and the code is generated. So we no longer need to know every statement in the language. It happened to me a lot, and I don't know if it happened to some of you: I started programming in Turbo C, and in Turbo C, if I missed a comma, I had broken the program. Those things no longer happen. The code is already being

generated, and I can document it while I'm working. But in the end, the message about large language models is that the logic is ours. The logic of processing the information is ours. Understanding what happens in an incident is the knowledge the person brings; what changes is that the person will have an additional tool to work with. That's why I insist on the power of prompts. For the moment, working with that interaction means studying prompt engineering: look for it on YouTube, look for it in different places. Try to practice prompt engineering, because it is important to know how I want these intelligences to respond to what I work on day to day. And this also takes me to

the power of context. Many think that by connecting my organization to artificial intelligence, all our cybersecurity questions will be answered. That's not true. As those who have worked with security event aggregation and correlation systems know, context is everything. If I have something correlating the security events that come from all the alerts, from all the technologies I have, that is the context. If I miss something, if I didn't include the endpoints in the context, and ransomware was executed and affected every machine in the organization, I will have no way to get to the root cause or analyze what happened on the machines: how it moved through the entire

network, or how it arrived through all those sources until it reached the machine, and from there on I have no information. So here, context is everything. I don't know if anyone has asked ChatGPT questions. Yesterday DIM played, right? For the DIM fans here. So if I ask ChatGPT how DIM did yesterday, what will it say? No, no one heard. It doesn't have that in its data. The context of ChatGPT runs until January 2022, if I'm not wrong, or at least that of ChatGPT 3.5, which is the one we can access. The point about context is: if it doesn't have the information, it won't be able to give an effective answer. So, if I ask it, "What happened

with this ransomware?", it simply won't be able to get me to what I need. So, context is everything. What are contexts? We'll see them later. And this is the call regarding cyber talent, what the market is showing about the current need for cybersecurity professionals. It's not a problem only in Colombia; it's a global problem, and I'm going to present it here. The global demand for cybersecurity professionals has grown in the last year. This is from Cybersecurity Ventures, which analyzes it globally. It is estimated that by 2024 there will be a shortfall of about 4 million security professionals. Given what

the market needs, imagine, with these evolutions in technologies, in threat vectors, in everything that is happening, what can happen in two or three years. The gap will be much more than those four million. Looking at Spain: there, INCIBE analyzes the security professionals needed for operations. It estimated the need at about 63,000 jobs in 2021, and expects it to reach 83,000 by the end of this year. Where do you find 83,000 people who know security, in one country, to operate productively? And in Latin America alone, according to information from CIS, it is estimated that in Latin America and the Caribbean there is

a shortage of more than 700,000 professionals to close the cybersecurity talent gap. It is gigantic. It's huge, but it's also an opportunity for us, an opportunity for the community to expand these spaces. Fortunately this is also being recorded, so this kind of information can reach other people and switch on that chip that has us sitting here taking in so much information so that we can grow. There is an opportunity, and we will have it for a long time. Within the Cybersecurity Operations Center, returning to my Lego figure (maybe it's not very clear), these are the relevant profiles in security operations centers. We see here that they range from the chief information security officer,

incident managers, threat specialists, architects, auditors, educators, researchers, risk analysts and hunters, to the whole forensic analysis and penetration testing side. There are many people, or many roles, that a standard security operations center needs. That's why it's very difficult for an organization to have a complete security operations center, and there's a tendency to outsource it or to complement it with a third party that helps with that type of operation. For the scenario we are working on today, which is how artificial intelligence, through large language models, increases the working capacity and functionality of the operators, we see that incident managers, threat specialists, researchers, risk analysts and hunters will have a greater

chance of being supported by an artificial intelligence in an operation. And that's what it actually looks like: we will have more working capabilities. Something that should push us even more is that the adversary sector is already using artificial intelligence to attack us. The adversary sector is already on its way; it is already collaborating and using this type of technology. This is another Gartner analysis, associated with security adoption, the NDI adoption of threats. What it tells me is that artificial intelligence models will not be infallible, in the sense that they can be contaminated or poisoned in some way so that they give answers that

are wrong. That's what we have to keep in mind when deciding whose artificial intelligence models we are going to work with. So here the issues range from specific ones to hallucination, which, I don't know if it has happened to you, again with ChatGPT, happens a lot: it gives us wrong answers and asserts that they are the official answer, and we know they are not. We know they are not. Large language models usually have these hallucination problems. The vendors and developers of the algorithms are improving this, but we can also see how we are going to have problems, and I speak more from our side, with answers that can be

faulty, or answers that can lead to a wrong decision, or also with data leakage and extraction. Gartner does not show this directly; for that there is MITRE ATLAS, which we are going to see shortly, and there is also another type of analysis that tells us that artificial intelligence systems can be contaminated in their algorithm, like most information systems, or in their inputs, so that they produce a faulty response, or someone can modify the output too. And that's what we're going to be working on in this scenario. There is a very important point about leakage, and this goes for those who are going to interact with artificial intelligence: in many scenarios, through that same prompting

and knowing how to ask those questions, people can reach the source code, they can reach the information this artificial intelligence system was fed with. It's just a matter of knowing how to ask. So you may no longer have to learn assembler, or maybe you don't use it in your work environment; possibly we no longer have to learn SQL; possibly we no longer have to learn a whole programming language, because we will have artificial intelligence assistants. But again, it comes down to knowing how to ask questions. And the adversaries are doing it. So with everything that is public, and this is one of the problems we have, the reason

there is not that much openness to working with open artificial intelligence is that the information gets used to train the model. That lets others reach your incident information, your alert information, learn your IP addresses, learn which domains or certificates are missing, or what the flaw in your own code was, if I'm uploading it to an artificial intelligence to analyze the security of my code. The adversaries go a step further here: there is already prompt injection and prompt poisoning to obtain the answers they want. Here I leave you a pointer for research: you can read the OWASP report, the Top 10, based

on large language models. But we also see things we have seen in other types of scenarios, such as denial of service, poisoning of sources, being able to modify the output, and model theft. Stealing a large language model, or its algorithm, if we can call it that, would let someone learn how the information it works with is handled. And this is an example from a paper that is published on the internet, the link is at the bottom, called Crescendo, where they ask about a Molotov cocktail and the model doesn't answer. ChatGPT has that restriction of

not delivering it. Here is the example with Gemini as well. But then, by asking a follow-up question, and knowing how to ask that follow-up question, I can get to the information I actually wanted. It is a matter of changing the way I try to access that type of information. And here is what this has to do with this type of incident: at the moment there is no technology that can block it, nor any design that can keep someone from reaching the answers they wanted simply by asking differently or working differently with the model. So the threat actors and their motivations may be expanding, but they are still the same threat actors and the same motivations, without going into them here.

What we usually find is that they are going to use the same techniques and the same tactics, that the associated security incidents are going to reach our artificial intelligence models too, and that we have to evaluate methods to detect and respond to those incidents. This is simply a snapshot of what is happening globally: the trend is upward, adversaries keep increasing, now with more tools from the artificial intelligence side as well, and then there are the leaks that are occurring, without going into them directly. This is an analysis related to artificial intelligence that CrowdStrike produces and presents at a global level, year by year. There is also the explanation

of how LLMs are being used by attackers to extract or analyze information and carry it into a working scenario. This case is based on a key from a base of immutable IDs, which are like the identifiers of certain users and certain types of connections. Through LLMs, the attackers were able to do part of the reversing and generate the information they needed, because we are talking about a large volume of information that can be exploited. And possibly many of us are now also victims of this: the spam we used to receive ten years ago, all badly written, from the Prince of Nigeria, doesn't come that way anymore. It's now written in Spanish, very well crafted, to the point that you even

sense emotion in the conversations. This includes deepfakes: voice deepfakes, for example, are being made with artificial intelligence to generate things that should not exist. The same is happening with spam and with phishing. It is now much more targeted, because artificial intelligence really is being used, and the person does not have to speak Spanish to generate an email that convinces us or that understands our country's issues. And this next item is related, or rather it is not so much an example as the other area you could be analyzing and studying: MITRE ATLAS, in terms of tactics, techniques and procedures focused on artificial intelligence.

So the matrix already exists. You can't see it well here, of course, given the zoom, but it has phases containing the tactics, techniques and procedures associated with artificial intelligence and LLMs. We see here how persistence is achieved, how credentials are stolen, how discovery can be done, and this is fully documented in MITRE's database. It is very interesting, and you can work from it on how to prevent these things. But since this talk is not focused on the adversary sector but on the automation of operations, what we are going to look at here is how we can help, from the automation side or from applying artificial intelligence in our work environments, and that is to

be able to have these aids, which range from data collection and processing to threat classification and filtering. This gives us more time: we just say, "run a collection, process this data, bring me this incident," and that will help us a lot. Then there is report generation and delivery. That is very difficult for those of us who work in cybersecurity. Sometimes explaining what is happening in a simple way is very hard, because the threats are complex, they are APTs, they are everything we are faced with, and we have to answer to another person; we have to explain it to the director of the organization, who understands absolutely nothing. So part of the help is telling the artificial intelligence to write as if for a seven-year-old or

for a manager who knows nothing about technology, and to generate a report, and the artificial intelligence should deliver that properly. It also helps us with alert fatigue, that alarm fatigue we can have, through the reduction of human error: we stop handling so many items by hand, as we did before or still do today, and gain the speed and consistency to reduce our human errors and, of course, improve productivity. So it's the balance; it's having someone working with us to get better results. However, the operator, the analyst or the hunter will have the same ability to analyze, will have

the same ability to get where they need to go. Here I come to what I call "garbage in, garbage out". You hear it a lot in artificial intelligence. If the context I am working with is not well fed, is not good, then my answer is going to be bad. That is why we are going to need information about devices: vulnerabilities, security events, compliance status, asset priority. From threat intelligence: what my organization's exposure is, how the applications I publish are evolving, what digital certificates they have, what the global threat trend is. And for identities: whether there are

sign-in risks, whether there are authentication risks, whether they have several other factors that may apply. But that is how it goes with everything: it's like adding more context to the analytics I need to work on. And this again comes back to the fact that we as professionals must know, must understand, absolutely everything. We must know what a data risk is, what a service is, what happens with an email, what phishing is, how it arrives, how it tries to interact. None of this takes away that knowledge, or that traceability we carry in our heads. The idea is: have the base of information

so that I can ask questions about what I have. If I don't have the information, well, I'm not going to get it. Or if I feed in information that is garbage, my answer is going to be total garbage. That's why I was saying that most vendors tend to collect more information about security events and incidents, because that will be the context with which they can deliver greater automation. And this, in broad terms, will provide many insights in real time and generate a lot of information, which we have already seen in day-to-day operation. If you notice, and you're going to see it shortly, I hope you see it in

the demonstration, you will be able to generate scripts to run a search I needed, or to send a report, or to generate security code, generate a new indicator of compromise, or go and look up the latest indicators of compromise and tell me which ones are affecting my organization. So it will be very useful for that kind of interaction with operations teams. And, well, there are many artificial intelligences at work in the market, and some have been for a long time, like IBM, which has been working with Watson for years. But what we are seeing now is an attempt to cover more in order to give a better response. So CrowdStrike is

already working on an artificial intelligence to respond to security incidents, but it doesn't have all the contexts. Palo Alto is on the same path. That Chronicle you see below is from Google: it is the information base it is building for incident management and as an event hub, a correlator of security events. Others lean more on using artificial intelligence per se in their developments, in their source code, to analyze a threat and give an answer within the operation, like Intezer, or Darktrace, which is the one you see there. And of course, Microsoft is going the same way, with those interactions and what you have

seen as the Copilots. And here we return to the challenge. What is going to happen? What is happening, and what care should we take if we want to go down this kind of path? We have to be very careful with open models, keeping in mind that we are going to be using large amounts of information that I cannot hand over to an open model. It's not that I'm against open models; it's about my information. The best way to train an artificial intelligence is with real data. If I train an artificial intelligence with false data, the answers will not be

the ones I need; I have to go with real data. So if I choose a provider, an artificial intelligence technology to work with my security agents, well, that data can't be at the mercy of anyone, not even the provider itself. There are confidentiality agreements and terms of use that must be taken into account when working with open models, public pages, or providers. So there is one more call: evaluate trusted providers. Look at, as I mentioned, the confidentiality agreements for the models, and also analyze, based on MITRE and OWASP, whether these models already have some kind of security characteristics associated with them. How are they being protected? Who is going

to provide me with that service that has security within the model? One of the main challenges in artificial intelligence, and I don't know if it has happened to you, even just with ChatGPT, which sometimes responds very slowly or crawls along, is that analyzing information with artificial intelligence takes a lot of time. So if my context is very large, if I have many alerts and many security events, the processing is needed, and it is one of the main challenges. That's why defining how this is going to be consumed by companies has a lot to do with processing. If I

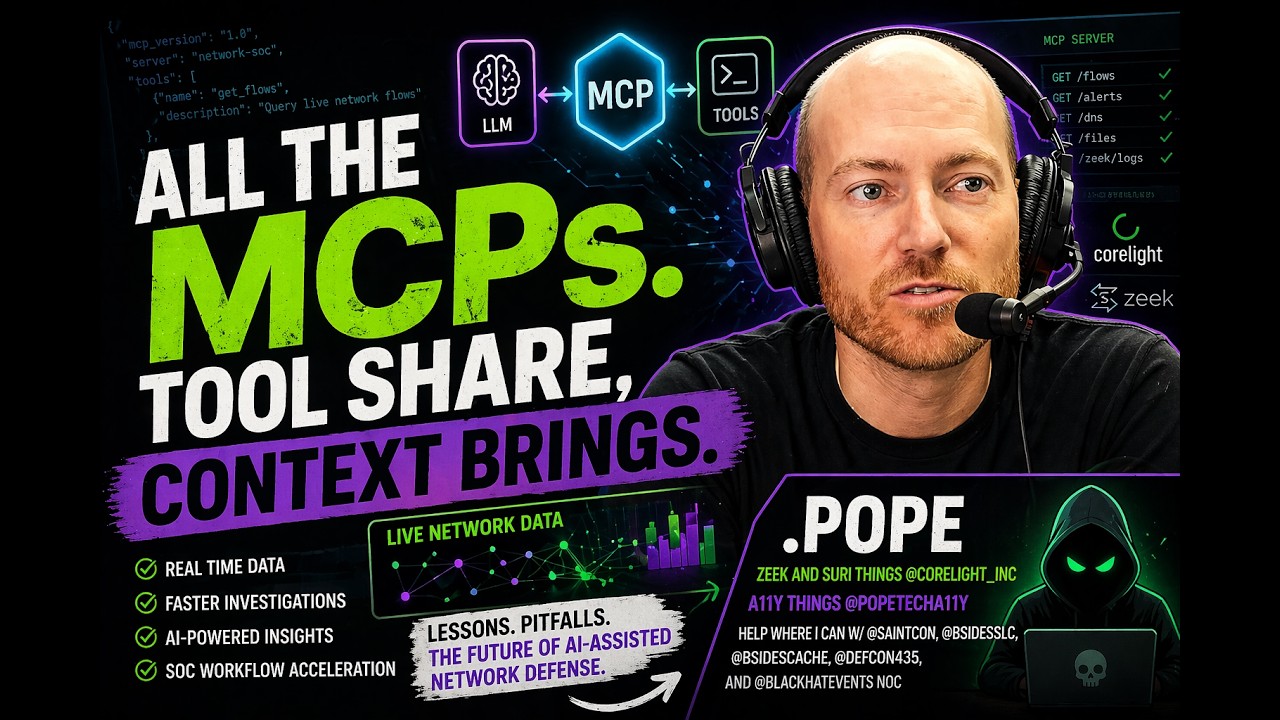

have to assign processing, an organization possibly cannot do it with its own hardware, and that's why the trend is to move it to the cloud, where there is more processing capacity. I'm not talking about infinite, but with great reach. And what makes acquisition a challenge is deciding with whom I will be working on this type of LLM. The opportunity, especially for those of us here and those watching the video, is an opportunity for collaboration. This is a GitHub repository associated with Security Copilot, where people can develop ways of improving contexts or build plugins so I can be using the

contexts and the information that is being used. The opening for us as cybersecurity people is that we can analyze how to collaborate in these new initiatives. Many organizations will require a plugin for this technology so that there is more context from within the organization. Or they will need an automation that analyzes A, B, and C and produces a report. That's the point where we have the possibility to collaborate, and where we can also publish it to improve those collaboration schemes. I wanted to put the meme of the Simpsons grandpa here before showing the demo, but let's go to it. This demonstration, in the first part of this image, is part of what I was

telling you a moment ago: being able to ask, "explain it to me as if I were seven years old," and you get an explanation of a security incident pitched at someone of that age, right? So it explains everything that happened in terms of a video game. We can get to that point using the same information base, working in this scenario. And now, to see it, I'm going to show you a little bit of Copilot for cybersecurity. This is a case the operator could handle on their own, the operator can do it, but we don't know how many hours, how long it will take,

and I hope to be able to show it to you in these few minutes. It's an investigation of an incident, a human-operated ransomware execution, which is a completely separate topic, but where this type of technology helps analyze the script, search for the entities within the organization that are affected or are interacting with this incident, and look up the reputation of those internet addresses or what is happening. You can do an analysis of the adversary, a device investigation of what is happening with the device within the organization, and it generates a summary. That's what we want

to do in this case and that's what this operation is looking for. Let's see how it goes. This is based on a tool that we work with, which is this one. I'm not going to talk about it, but from here I go to a prompting interface. I hope it comes up, that the connectivity is good. It's a cybersecurity chat with the information sources of this organization. So, as I said, I ran the analysis in the chat. I don't know if you can see it well from the back, but what it analyzes is the script.
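
As a rough illustration of the kind of script triage described here, the following Python sketch pulls candidate indicators (URLs, IPv4 addresses, Windows file paths) out of a script with regular expressions. It is not how Security Copilot works internally; the sample script, the `extract_entities` name, and the patterns are all assumptions made for illustration, and the addresses use reserved documentation ranges.

```python
import re

# Simple, illustrative patterns (assumptions): real triage needs far more care.
URL_RE = re.compile(r"https?://[^\s\"'<>)]+", re.IGNORECASE)
IPV4_RE = re.compile(
    r"\b(?:(?:25[0-5]|2[0-4]\d|1?\d?\d)\.){3}(?:25[0-5]|2[0-4]\d|1?\d?\d)\b"
)
WIN_PATH_RE = re.compile(r"[A-Za-z]:\\[^\s\"'<>|]+")

# Hypothetical script text standing in for the one analyzed in the demo.
SAMPLE_SCRIPT = r"""
Invoke-WebRequest -Uri "http://files.example.test/tool.zip" -OutFile "C:\Windows\Temp\tool.zip"
Test-Connection 203.0.113.7
"""


def extract_entities(script_text: str) -> dict[str, list[str]]:
    """Return de-duplicated, sorted candidate indicators found in the text."""
    return {
        "urls": sorted(set(URL_RE.findall(script_text))),
        "ipv4": sorted(set(IPV4_RE.findall(script_text))),
        "paths": sorted(set(WIN_PATH_RE.findall(script_text))),
    }


if __name__ == "__main__":
    for kind, values in extract_entities(SAMPLE_SCRIPT).items():
        print(kind, values)
```

A pattern-based pass like this only surfaces candidates; deciding which of them are actually indicators of compromise still depends on the reputation and threat intelligence context.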

If I already found the script, if my technology tool already told me where the script is, now I'm going to analyze the script. And it tells me that there is an execution, right? Down here is the analysis. This execution has different parts. It has the getUserPRTTToken, it executes an element, then it makes a download, and then it downloads Mimikatz within the organization. At that point I also get the analysis of the getUserPRTTToken function in the script. And what you see there, that little circle, is what we have to talk about in this type of scenario. But here what I'm going to say is: "Go to the technology that detected the incident and

generate a summary of the incident." So what it does here is go to the security tools that are connected to this context and deliver the analysis of that security incident. So here it says: it is high severity, it gives the date, the description is a potential human-operated malicious activity, which, if you look, is a LockBit case, from what IP it is being generated, and who is being affected. In addition to that, which machines are involved. Well, that's it. The operator could possibly have gotten there with 3 or 4 more clicks in their work tool, but it takes much longer. Besides

that, it explains, based on MITRE ATT&CK, what the sequence is: its Initial Access, its Credential Access, its Lateral Movement, its Impact, the Defense Evasion, the Command and Control, among others. And this lets the operator interact. That's why it can't be an open model, that's why we have to be mindful of the information I'm feeding it: it's my organization's information, and it can't end up just anywhere. Because otherwise, what if an attacker had access to what it found within the organization? So here the question we asked goes in the other direction, toward the entities, which is what I was telling you

in the text, which entities are involved. So, there are these URLs, with which I can generate the indicators of compromise and the blocks I need, the functions that are executed, the processes the incident touches, and the files that are involved, in case I also want to go into the operating systems to collect them. Apart from that, the timestamps and the description of what is being done. So, it's a potential human-operated malicious activity, it opens an RDP session, it's linked to adversary groups like Manatee Tempest, it runs Mimikatz, it does credential theft, among many things, and these are the IPs involved, the usernames it touches, possibly some of those machines have local administrator access, and the machines that

have been touched. If I go to the extraction of entities from the script, it works through the script and tells me which files are generated or touched and where they are being stored, which is the temp folder. Again, it's not that the operator has been displaced; the operator will have more tools. How long would it take the operator to get to this type of analytics, compared with simply asking this series of questions? And in most technologies these questions can be saved. Operators will be able to build their own book of prompts that will be used

and sent to extract the information. So here the question about reputation goes to the threat intelligence sources, which can be local, from the technology itself, or external, and it brings me that information from the connected cyber threat intelligence. But in addition to that, I want to find out about my adversary. That would normally lead us to open Google and look for information, not about something that is affecting me directly, but something I can analyze about that organization. And here I can analyze what Manatee Tempest does, what the threat actor is, so here it tells me that it is associated with

Dridex campaigns, that Manatee Tempest is from a certain country, affects certain types of organizations, and works in a certain way. And here it analyzes the other adversary group that appears in the security incident, bringing me information that is not identical but is associated with that threat vector. So, what the operator does in this case, because this is not for end users but for operators, is work with their environment: whether the user's identity is involved or not, whether it is at risk or not, and what I can ask it to do. But here, and I'm skipping a little because of time, what it does is tell me whether it considers the user to be at risk, and it says yes, of course, and I also

ask it to generate a PowerShell script to look at the SMB connections the user has, which can take me to the local machine and tell me what happened on it, if I also have that type of information. If you notice, it generates the PowerShell code to search for those devices and get to the address, if I want to dig into the local side. So that's part of the exercise. You noticed I didn't do the summary, but I'm going to show it to you here. This generates a summary of the script analysis, in line with the script execution: the summary, the cybercriminal groups, the user and device status, and the

data lookup. And that is what this little 8-minute demo covers, because we did all of this investigation of an incident. A tool with this power in the hands of an operator is going to make life much easier. It doesn't mean it's going to displace you; it's simply going to give you more tools and make this type of activity easier. As conclusions: well, you will have noticed it easily, this is the moment to work with artificial intelligence. It's a moment we are fortunate to be living through, and we will be able to interact with it. We have to take advantage of it, and we have to grow our knowledge base and study more. Use artificial intelligence to also

prioritize your study sequence. You can create your study plan within ChatGPT. Be careful about who we hand the data to. Even though we need to make this more operational, it will be very difficult, at least for those of us within organizations, to hand information to third parties to be analyzed. We have opportunities to collaborate and to get involved. If you create a prompt book to analyze an incident, if you manage to create a plugin that connects a certain technology and adds more context to the artificial intelligence, that will possibly also bring an economic benefit in our work areas, or open up a larger job market for us. Remember: prompt engineering, prompt engineering, prompt engineering,

be more eager to ask questions, challenge yourselves to develop this capacity, which is innate in us as humans but which we must also develop, and study prompt books. Of course, and this is part of what we want from this type of space, and I am firmly convinced of it, keep climbing that learning curve of new skills and new concepts, and build on our current knowledge. That was what I brought for today, and I thank you very much for being here.
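
The prompt book idea from the talk, a saved sequence of questions an operator reuses for each investigation, can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the `PromptBook` class, the step wording, and the `incident_id` and `user` placeholders are hypothetical and do not reflect the format of any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBook:
    """An ordered, reusable list of investigation prompts (hypothetical structure)."""

    name: str
    steps: list[str] = field(default_factory=list)

    def render(self, **params: str) -> list[str]:
        """Fill the {placeholders} in every step with incident-specific values."""
        return [step.format(**params) for step in self.steps]


# The steps follow the order of the demo; the wording is illustrative only.
ransomware_triage = PromptBook(
    name="human-operated-ransomware-triage",
    steps=[
        "Analyze the script found in incident {incident_id} and explain what it does.",
        "Generate a summary of incident {incident_id}, mapped to MITRE ATT&CK tactics.",
        "List the entities involved in incident {incident_id}: URLs, IPs, files, users.",
        "Look up the reputation of the URLs and IPs from incident {incident_id}.",
        "Describe the threat actors associated with incident {incident_id}.",
        "Is the user {user} at risk? Explain why.",
        "Write a report of the investigation of incident {incident_id}.",
    ],
)

if __name__ == "__main__":
    prompts = ransomware_triage.render(incident_id="INC-0001", user="jdoe")
    for number, prompt in enumerate(prompts, start=1):
        print(f"{number}. {prompt}")
```

Keeping the steps as plain templates means the same book can be replayed against the next incident by changing only the parameters.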

Thank you Gustavo. Questions? Yes. If it's about the images, they are all made with artificial intelligence. You can try it too. OK. Yes sir. Well, in the presentation you mentioned open models. Yes. I do understand the need not to hand privileged data to those models, because it is used for training and can be leaked. Yes sir. But there are also open source models that one can use locally or in private clouds. What about training those and doing the same thing? You have to analyze it very well. In reality it is not, we could put it like this, it is not about being against the algorithm, but in any case about its

use agreement: whether this information is shared or fed back into the operation. No, literally, it's local, you can put it on your laptop, on an EC2 instance, so you design it so that nothing is leaked. Yes, yes, yes, I would add that this guarantees nothing is going to get out, and that it is something that has to be done. And it doesn't mean that the vendors can't do it. The vendors can't guarantee that nothing will happen, but there are agreements that they will notify you, that if something happens they will tell you. But if you can do it like that, it would be an excellent scenario. Literally, right now they

got more goals, they got a model called the matrix that will be used as a foundation for a lot of open source systems. That's right. And this week I even saw one that will be open source and able to run artificial intelligence on cell phones. That is also excellent to work with. The problem is not opening it up. Yes, thanks. Gustavo, good morning, thank you very much. Two questions. What advances is artificial intelligence offering today in responding to situations that require action, which is what artificial intelligence cannot do today because of the lack of reliability in its detections? Let's say we've been seeing this for a while. The

question is: What's new? What has improved there? The second question would be: if the models have to be trained for detection and they are trained with data, and every day in operations we see new attacks with new techniques, even new ways of attacking, how likely is it that an artificial intelligence can detect new techniques it has not yet been trained on? Yes. Excellent questions. I'll take the action part first. The action part, at the moment, leans more toward technologies that enforce controls. Some of them have been using artificial intelligence for many years, such as those able to detect advanced spam, or sandboxing tools and the automation of static

and dynamic code analysis to take actions. But those take actions because that is part of the security tool; security tools have been working with artificial intelligence for years. As for LLMs, not yet, and I say not yet because the trend is that they may also become proactive. In that field of action, what can help us is what the exercise showed: help me create a script, help me look for indicators of compromise, look for the entities, look at what is happening so I can generate some kind of code, script, or automation that leads to action. That's where it stands at that point of operation, but the idea is that in the future, and this is more what

I think as a security professional than as a human, is that as we see this making processes faster, with the decision possibly remaining with the human, many things will stay that way, especially in security. As I was saying just now, in the SIEM there is the log showing that you came in through such a port, on such a day, at such a time. That is where we start to feel that resistance, even though we know we can automate it. For me as a human, not as a security professional, we will hand over the option to make decisions in the future. Yes sir. Hello Gustavo, thank you for your talk. A question, in

the demo part, can you go into detail on what input you gave to the conversational agent? And also, can you explain a bit whether the conversational agent has information about the context? Because you can ask generic questions and it can generate entities, do some entity recognition, but if it is not trained with the company's information, with the context, the answers will probably be generic and not specific to the organization being affected. Yes sir. Yes, look, the issue of context has to be looked at from different angles. There are LLMs that can work with the information that you are working with,

that is, the information organizations are going to start working with. And that is one of the points that can lead to a leak of that type of incident. As for the contexts that this type of environment handles, and that's why I didn't want to go into it too much, because they are part of our own demonstrations within my company, they are associated with the state of the devices: the state of vulnerabilities, of security baselines, of security policy configuration. It has internet access to be able to look up the adversaries. It has the Threat Intelligence part, a Threat Intelligence engine to determine the level of exposure of that entry and also the

URLs that it is running. And within this part it did not analyze identity issues, but they are also involved. So those are the contexts it draws on. And looking here, what generates the PowerShell? Well, it doesn't have to know anything about the organization; the only thing it needs is to have those sources it can query. It doesn't mean that these artificial intelligences are constantly analyzing the information, right? They are not analyzing on their own; you send the request, and it has to go to the incidents and check the ones associated with this threat. So they are not autonomous models; that's why they are chats and prompts, because they require an

action to be carried out to go looking in the information bases that it has. But the ones I have here are specifically device management, the anti-malware or EDR that the machine may have, and Threat Intelligence. As for scripting, that is done directly by the foundation model of this artificial intelligence. That is, for the analysis of the script, it doesn't have to know anything about the organization either. That comes with the model being used. Thank you, Gustavo. What we have understood is that contexts are super important for generating good answers. But in our environment, most contexts are logs, and they are logs that come from different

sources, with different structures. Sometimes they are codes, not natural language, so they are not easily analyzable with an LLM. How do we turn a log structure into language? How is that problem being solved? That is precisely the part, like with the SIEM or the security event concentrators and correlators, where there is a call to look for a way of connecting information. It will be very, very difficult for all vendors to do it. If you look at security news or security vendors right now, they all talk about artificial intelligence, but not all of them apply it to this interaction with security operations. That means there is a call, and that's why I called out the

collaboration part: how to create connectors and bring them to the artificial intelligence you want to use. Most models are open in terms of how I bring in additional contexts. And that's what I said could open up not only technology support challenges, but also an important factor in our working lives: you can help bring contexts from your organization, from standard tools or logs, into these artificial intelligence systems. It is a challenge, it is very big, and since not everyone will be able to develop it, what most are doing is looking at how to connect to those who are already evolving, those who already have an

LLM for security operations. It's like: I connect to base 1 or base 2 and deliver my technology's context to that artificial intelligence base. Yes, that was what I showed, at least the GitHub one. The GitHub one is precisely for looking at connection plugins, for developing plugins. OK? Hello. Hello, how are you? First of all, thank you very much for your talk. - - for concept projects and then other types of things like network, AI, teams for production issues. That's to support the first question. I would like to align myself with the message you mentioned, that there is a gap in cybersecurity, but I also think there is a problem at the level

of companies. Because sometimes, let's say, they don't know what they want. Sometimes they ask for a lot that they really don't... And there are memes about that, right? In fact, at Microsoft, I can mention it, the last position that came out for a Security Data Scientist, I saw about a year and a half ago. And I haven't seen anything else related to the subject. And in fact, if I'm very honest, things are out there. If you really want to gain relevant opportunities, and earn money too, learn English. No, thank you, and I share that as well. I think we are at a moment, and perhaps that gap is not reflected either in the positions

we see. Although we see some that stay open a long time, that don't manage to fill the role. I think we have so many fields of action to work in, and everyone can see which ones they enjoy most and develop their skills from there. This is like one additional piece, artificial intelligence applied to cybersecurity, that can open new paths and maybe encourage many to work on it. And well, to continue collaborating. With great pleasure. Well, thank you very much, Gustavo. Thank you.