DIY Cyber Threat Intelligence

Show transcript [en]

Hi, uh my name is Mark Han. Um I'm a uh security solutions architect for Qualis. I come to this by way of uh uh development background. Um this is Hi, I'm Ted Han. Uh I'm his son. I'm also a independent site reliability engineer. I help startups get started with the whole cloud thing. Um I'm also an ex Google SRE. Uh, so I've been doing this for a while. Um, did anybody come to my workshop yesterday? Anyone? Nope. Okay. Totally different crowd. Great. You'll I can reuse all the same jokes then. Yeah. Um, if you want to follow along with the uh presentation, you can click on that link that goes to to our website. Um, and you can see the slides.

Great. Um, so a lot of people get into cyber threat analysis through many different career paths. Um, a lot of them end up at cyber threat intelligence kind of through this path. Like who in the room sort of recognizes themselves in in these boxes? Okay. Most most people a bunch of hands actually about half I'd say so far. Um, this is kind of my point of view from a more of a developer point of view. How many people kind of recognize this as their career path? Fewer hands. Okay. Yeah. So that's going to make this talk weird because coming at it at a different point of different viewpoint from this. Yeah. So you know I

think about things as a developer um as an architect. So I think more about application security than I do about like sock stuff and vulnerability management and so forth. So um we we took a different approach on this. Um so my thought in doing this when I wrote it up like hey I want to learn about this stuff. So, you know, I'm just going to fire up um a bunch of open CTI tools, you know, log into Showdan, read some dark webs, and like that's it, man. This is going to be great. Great stuff. Um I didn't disapp. Yeah. Um and then um next thing you know, scratching the record, that's not how it worked out. Um totally different

adventure than the one that I set out on. Um, and there's a disconnect. Kind of my point of view is a disconnect on this in that, you know, your your CTI people tend to work, you know, sock operations. They're looking at at files and indication of compromises and they're looking at ways that attackers, you know, using the MITER attack framework and this is all laid out for them. Um, me, my problem is the cloud. I manage an AWS organization. I really don't care about the infrastructure because honestly, it's for demo purposes. all the infrastructure is is disposable. It's also in many cases built to be vulnerable in weird ways because it shows off what our product

can do for detection. And and so much of your infrastructure today is disposable, right? you're not thinking about these in the the same old ways and you're not really worried about persistent threats on your Kubernetes machines that are reimaged every two weeks like the this is the the tooling has not caught up in that way right and the other thing I'm thinking about is the cloud control plane which operates very differently from your internal infrastructure um number one you've got this shared responsibility layer with your clients with your with your cloud provider and So you have you take on a different set of roles than you do with your infrastructure where you're building everything up. And

honestly, people haven't talked a lot about what account compromise and cloud attacks look like. Yeah. Um you know what we're looking for is an attack matrix but for the cloud control plane, your AWS API, your Azure API, your Google your GCP API. And if you do research, if you just kind of Google for what are cloud attacks, Aquasc is one of the kind of the premier cloud security vendors. This is their top 10 list. This is how they order it. Number one, everything on the left side here is not a cloud attack. It's a traditional infrastructure attack. So like figuring this stuff out is a pain. These are the old attacks, but they've just slapped cloud on them because your

infrastructure is in the cloud. But they are not the cloud attacks. They are infrastructure attacks that you happen to still see in the cloud. Denial of service attack. Do you think AWS is is a attacker going to prevent you from using the AWS API by flooding it with a DOS attack? Ain't happening. Just not happening. Um and and if it does like the world has bigger problems. Um security misconfiguration. Yes, it can be a cloud problem, but it is not the cloud problem. And it's also not really an attack. This is my stupidity. It's not an attacker doing stuff. Cloud malware injection attacks. Well, this is actually just malware injection attacks on your cloud infrastructure. Yeah,

cookie poisoning, not not a thing here. That's browser stuff. Insecure APIs. I'm sure there are bugs in the Azure API. I'm sure we'll find them. But they're not your problem. Exactly. That's they're not something that you're going to worry about cloud cryptomining. Somebody abusing your your infrastructure, not abusing the cloud. Like there's nothing cloud about this. They did get like four, right? And sort of four and a half. Account hijacking. That's what I worry about. Like is somebody using my accounts that shouldn't? Um user account compromise. Is there somebody pretending to be one of my users that shouldn't be? kind of the same things, but I account hijacking is like they took over an administrator account my organization

versus just a user one way. Yeah. Insider threats. Yeah. I mean, equally applies in the cloud. And I more than more than equally, it's even bigger of a problem because all of your infrastructure is in the cloud and all of the it's very it's much easier when everything's centralized in this cloud control plane for an insider threat to escalate itself much much more quickly, right? And and touch much more side channel attacks. So yes, a legitimate cloud attack, but whose responsibility is it? Yeah. Not mine. Not mine. Yeah. It's it's AWS is there should be the one looking for this stuff. Um session hijacking legit attack in the cloud that I should be looking for. So

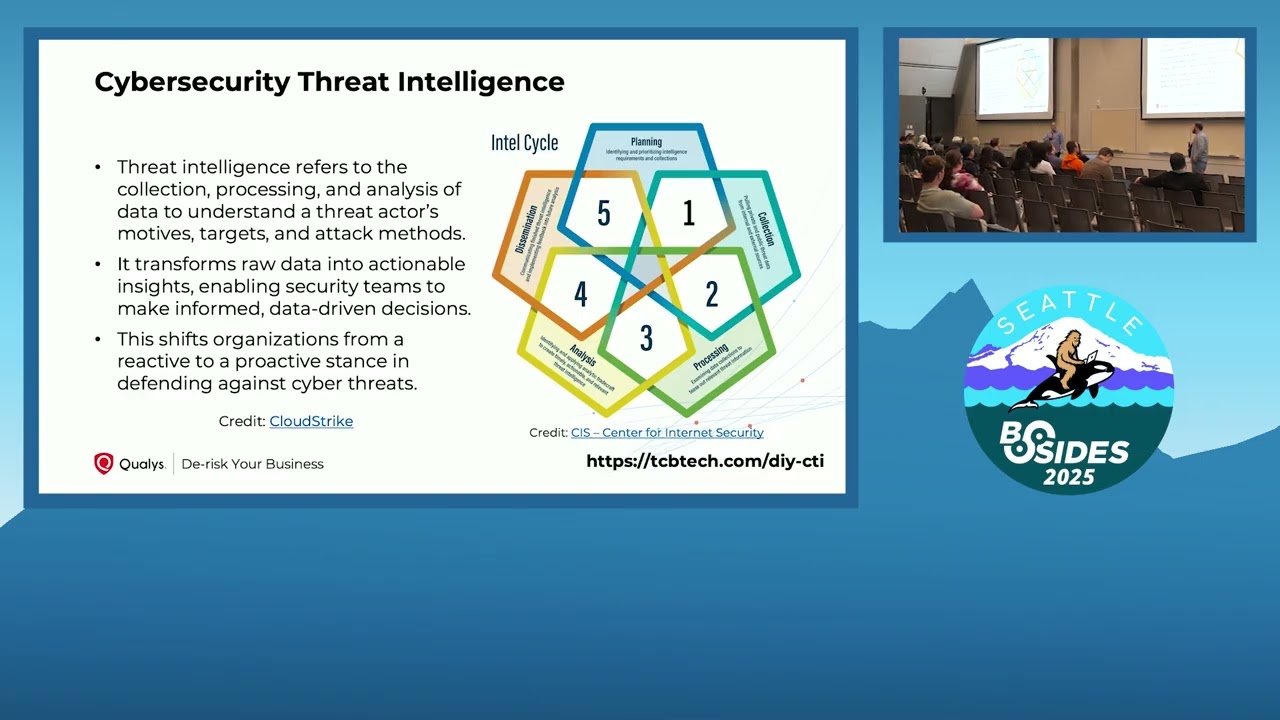

um you know what what I'm finding is that like it's a desert out there in terms of thinking about how do I want to protect my cloud infrastructure from cloud specific attacks. Yeah. Um so where does that leave us? Um this is the wrong expertise. I'm a developer, not a sock guy. Um, taking the wrong approach, right? And it's not easy to find the prior art. So, what do we do? Um, back to basics. So, what is cyber security threat intelligence? What are our basics? How do we work up from from first steps to where we want to get to? And then how do we approach it? And then what can we do to what this is the ttps

of what should I be doing? what are the things I should be doing to find the TTPs of my my foe, my enemy? Um, so number one, take the um the definition of cyber security intelligence and you're going to be looking at data. You're going to be doing this um yeah, what are what are the things you're going to the actionable insights like the word actionable is like pops right out. Every definition that you look at is going to say actionable. Um, there's plenty of false positives and there's plenty of non-actionable ones and the whole point is not to overwhelm yourself. Yeah. Because you can jump at every little pretend threat and you will just tire

yourself out. Yeah. Um, but Whoops. My definition is slightly different because I'm a programmer. So, you know, when you're running a security operation center, you have a set of processes that you go through. Cyber threat intelligence is about debugging those processes. Yeah. What more do I need to look for? If you've already got an alert set up, that's not CTI. That's just operations. So, what we're doing is debugging those operational those security operational processes. It's out of band. It requires a human and it's a feedback loop back into those operational process when you do find something actionable. Um so this is this is what I started to look at was um was this this approach. Um I read a lot

of background reading on just what is CTI. Um this I think is recommended the psychology of intelligence analysis. How many people have heard of this book? Okay read it. If you're gonna go into CTI, read this first. It's not that hard, but it's it's also, you know, it's it's 1999 by the CIA. Like, this is this is how their intelligence analysts work it. It's great. Um, I recommend that um the DoD intelligence community directive, but basically the that is the um what do they call it? Authority to act. Yeah. Um from the the director of national intelligence, DNI. uh worth reading. It's fairly quick. It's kind of boilerplate, but it's worth reading. Um Splunk has a good paper that um I think

does a good job of kind of laying out the basics. I'm not a big fan of their peak, but they do explain squirrel and Tahiti, which are structured methodologies that help you think about cyber threat intelligence. And so reading till you get to that point and stopping is probably worthwhile. the other 10 pages of that document are not worth um tradecraftraft primmer um also good. I also recommend just for anybody in in um doing agile development, building new things, searching out new solutions for problems have been around the US Army Fieldman Reconnaissance and Security Operations um really talks about how do you how do you learn new stuff in the field when I recommend reading it as a kid.

Great great read if you're about 12 years old. So um but yeah no um seriously give it to your kids. Yeah. Okay. So the what what as a CTI analyst do you do right? So number one you need a framework for your hypothesis not just tools but you need to know how to think about CTI. You need to make sure that you're not getting caught up in cognitive biases. we assume um that certain things are going to happen and you need to make sure that that that isn't coloring the way that you look at the data because there's a crap ton of data and it can scare the living daylights out of you or you can look at

it objectively and so you need a way of of working on that. Um you need to learn how to basically ask questions. So the the biggest thing I learned here probably is it's hypothesis driven. ask yourself a question, figure out if you can look at the data to prove or disprove that hypothesis. And so we'll go through some of that here and we'll show you some of the tools that we use. Yeah, there actually will be tech in this like it is DIY. Actually, they misspelled it in the in the schedule. Yes, we are DYI or do yourself in apparent way. Yeah. Yeah. But we we will get to tech here and and we'll talk about how we came up

with some hypothesis and um how we tested those and we'll talk more about like how I think as an industry we need to think more about this in particular because I think too many people are getting surprised by the fact that they have attackers that are not attacking their infrastructure but their cloud control play and and it's a constant it's it's it is a race it is a constant arms race and you need to be developing in this you know yeah field you need to be not just using tools that come off the shelf, but actively developing and thinking about your own tools and your own threats because not everything is going to be, you know, an off-the-shelf attack.

Yeah, exactly. So, what what are our tools? So, again, we're starting at basics. So, um focus in on the cloud trail logs, which tends to be the vast majority of data that you can get. like yeah there are certain things that the infrastructure you build is going to tell you but the vast majority of in your cloud trail logs your infrastructure logs on Azure same um and the tools that we use are jq anybody familiar with anybody not familiar with jq is this first time you've heard of jq a bunch of people okay explain go look up jq jq is not JSON query uh but it is a tool for querying JSON um and it provides a a simple

language to say basically just parse over uh lots of JSON. It allows you to to slice and dice the data. So if you've used JSON lines such as your cloud trail logs, um it allows you to say find me all of the rows in this in this cloud trail log that have this user ID associated with them. The it allows you to um then reconstruct and parse and process JSON. So if you've got, you know, you you want to construct an object that from each object, you want to uh aggregate or gather all of those objects and and create an array that's based on here is the list of all of the the users that showed up. JQ is a great

little uh domain specific language for JSON parsing. Um and it should be in everybody's tool book. JSON's a command line tool, so you got to have the files on your local PC to run it. Well, Athena is an AWS tool. Um, and we we started in Athena because it's kind of the most accessible. You can you can do the same thing in in Azure with Cosmos DB. You can do it in Google with Big Table, BigQuery, BigQuery. Um, it's roughly the same thing. Yeah. Um, so we asked ourselves a couple of questions here before we got started and tried to figure out how do we how do we find the answers to these questions? Has someone logged into my my accounts?

I have an organization of of about 84 different um AWS accounts. Has anybody logged into those accounts? Um has somebody created and used access keys? Um so how many people here use AWS on a regular basis? Okay, fewer. So AWS has two ways you can get accessed. Um, one is to use what's called access keys where you basically create um, a stored secret and an identity and a and a pointer to that secret. The whole user system. Yeah. Um, and it and it's designed from a traditional user account perspective, right? It's the original way to get access to AWS, uh, you know, way back in the day. You would create a user and that user you would give that user

permissions and you would get a a secret that was that user's effectively password. It's a long live long live password. Yeah. Long live password. You would store it on your developer machine and every time you you know went out to to AWS, you transmitted that password to them. Yeah. Um that worked but it has main problems. The most notable of which is it's longived right. Um so AWS has more or less discouraged using user accounts at all. Um, if you try to create a user account in the AWS console, it will spend, you know, three screens talking you out of it. Uh, so you should probably not be doing that anymore. And that's why we're asking the question,

did anybody try to do this? Um, yeah, because it's important. It's important that you in in most organizations, it should now be policy. You don't create user accounts and then you have exceptions. So, and then what are what are the attack matrices? And that's sort of an open question here, but um so how do we start answering some of these questions? So again, cloud trail logs. What we did here was to download some cloud trail logs and we will um uh quickly show you how this works. Yeah. Demo time. Straight to the gods for us. Yes. Okay. So um first thing that we're going to do, all of our cloud trail logs are up in the cloud. We need to get them

locally so we can play with them on a command line tool. And you can see you can't the pointer's too light. Okay. Well, you you can see the the structure of the cloud trail logs. It gives you says AWS logs. Here is the organization ID and here is the uh the account or the the region at which it happened the date time and then the account again. this this account this second account is an account as opposed to the organization account. Yeah. So two different numbers that that's the org we belong to. This is the account inside that organize. Yeah. Um and then it's got the time again because of course it does. And then Yeah. And then they're all just

gzipped files of a bunch of JSON messages. Yeah. Line JSON lines. Right. So step one is like what what does this look like? So what does it do? What what can you do with this? Yeah. Um where is my There we go. So, what we're gonna do is run this command with um GNU unzip and we're just going to pick a selection of files. So, we're picking stuff that happened today um because that's today's date um at UTC time at 15 and 16 past the hour. So at 3 and 4 past the hour um 3:00 4:00 in the afternoon UTC time um all those files were unzipping. Then we're asking jq look inside that list pull out the

records. Um show me just the event source inside there. So there's a big long object that's hey this is the the event that happened. This is what's in the log but I just want to look at the event source. Where's this stuff coming from? and then little uh bashes to basically say these are how many events happened and the the number. Um so yeah, so first first brush with our with our seam. Yeah. Um so then let's go back to the presentation. Y if I can find PowerPoint. Come on. There we go. There you go. Yeah. And um so dealing with cloud trail logs. Yeah, they sprawl. As you can see, that was that was one

day's worth of cloud trail. That wasn't one days. That was two hours worth of cloud trail logs. Right there. They they they they create them by the dozen. They create a new one for each of many different operations every single minute. It sucks, but you know, it's what you work with. I mean if you can concatenate them all together like we did you get you get the full result but they create for for um operational reasons they create a file about every two minutes some faster than that but the idea is they want to give you the cloud trail logs sooner rather than later. Yeah. So they're not batching them up. They're giving them to you. Each file contains

anywhere between one to 20 different events. Um and uh they don't index easily. Um and of course if you want to um this this is the size of what we're dealing with. So about 20 gigabytes of data in cloud trail logs for a day. Um oh no actually I'm sorry. This is this is a quarter. That's a quarter. This is three months. This is from January through through March. Yeah. Yeah. Well, it's it's not a whole lot in terms of you know data processing. Um, you can do this on your on your local machine if you so wish. Yeah, but it's also a pain to download all of those logs if you're just going to query it and then you'd

got to go resync them live all the time. Nobody wants to do that. But it's also two and a half million objects. So two and a half million files. That's a lot. So very very small files. Um, this sprawl makes life difficult. Um, it also makes it difficult to to work with like seam tools and so forth, right? Yeah. Um, one of the reasons I chose Athen was that it actually kind of was built around this use case, not well, but but it was. Um, but trying to get these into a seam tool like Grey Log, like part of my thought was I was going to fire up Greylog and I was just going to pump

this data into Greylog and let it do the indexing and do the searching. How many people know what Grey Log is? Good. So, yeah, it's a seam tool. It's built to do this sort of thing. getting these logs after the fact into grey log is a month worth of effort. Um it's just not not feasible, right? And you you want to have the seam set up well in advance, but if the seam doesn't do exactly where you want it to do, you you want the alternate pathway and you're not going to set up a second seam uh because you can't pump those real-time events back into your seam. How many already have of the dozen

people who have a seam up, how many are putting their cloud trail logs into their seam? Okay, most of you. I mean, I think if you've got a seam, it becomes easier to do that than than not. But starting from scratch like how long did it take you to implement a seam? One week, a month. Yeah, I hear laughing over here. Yeah, it's not Yeah, not easy. No. Um, so and I had a full-time job. Plus, I was trying to pack to move to the other side of the country. Yeah, this was not not not gonna happen. So, hypothesis one, like going back here. Um, I've got a dormant account. So, the way that the what my well, let me go back

here. So, no, I'll I'll come to it later, but I run I h I am a lead for a team of about 30 SSAs. We give each of them a couple of accounts and then there's some dormant ones, some SSAs who've left. So my question was, is one of these dormant accounts, one of these SSA accounts that I'm not using anymore still being used? Like, should I be worried about this? Is one of my posts SSA still logging in and crypto mining on my my dime? You know, maybe honestly for some of them that have left, yeah, that would that would be what I would expect. Um, so how do we do this? Like, well,

number one, we're probably looking for IM keys. We're looking for signs of access. Yeah. So, what would that look like? I mean, my first check was, well, thank goodness there are no IM keys in this account, and my security tool tells me that there's only a few IM keys inside of all of my 30 SSA accounts. So, I'm like, okay. Or 84 SSA accounts for for reasons right? Um but let's see, where do we where do we have this next demo set up? So, what I did, so this is what Cloud Trail looks like in AWS. um you know it's it's pulling stuff in and saving it in an S3 bucket. This is all of those files seen through

the AWS console UI. Um and then some more jQ here to see hey look um can we see what's going on with with keys? Um so hold on a second we'll we'll switch to demo here. Yes. Um, that's this window. Yep. So, what we did three or four? Yeah, there we go. First of all, we started looking at, hey, what what um does this need to be bigger? Can you guys read this bigger? Okay. Um, so these are the events coming out. Um, interestingly, down there at the bottom, there are two AWS signin events. Okay, so somebody is signing in here. Let's see what we can do. Um, so the first thing we did was what

do those events look like? So this is in its gory detail. Yeah. Uh, cloud trail record. Yeah, jQ also nicely prints pretty prints your JSON and makes it so much easier to work with. That's maybe my number one use. Um, so it it, you know, it's got a a bunch of different information in here. Um, some of the fields we've already been talking about, you know, here's the the event the event version, the user identity, the event source is the key thing um down there. Um, an event name, which is, you know, the the AWS event, right? You've got these AM actions and that's that's what you do. Yeah. So, this is somebody logging in

the console. This is actually we did this um today just to see. So one of the things you're going to do is like what action would my attacker be doing? How do I know what that looks like in AWS? I'm going to go do that in AWS. Copy down my trail cloud trail logs and look for the event that looks promising. That's what we did here. So you know just basic test your hypothesis by breaking it yourself. Yeah. you know, do do like if you've got a seam, you should probably be, you know, breaking policy every so often just so that you know that it will alert you when you break policy. Yeah. Um, and then the last thing we did

was, okay, so what was the ARN of the user that logged in, right? So with JQ, we're able to pull that out. Um, lo and behold, it's me logging in. That that's the test. So now I can go look for these in bigger sources. Now JQ is just gonna choke. Yeah. Plus, I don't think I want to download all of these logs to my local machine. Like, they're already in kind of a pain. Yeah. Yeah. Um, but we did some other things that we did along this line was I'm not going the wrong direction. I'm going the wrong direction. Yeah. Yes. Um, was look for through a series of actions. Yeah. Um, where can we find the action of

create access key? Yeah. like this is what we're looking for. Somebody's created an access key. This is what it looks like in JQ. This I found it in JQ for a test run. Yeah. Um so like now we're beginning to understand what's going on. Right. Now we understand the structure of the log. Yeah. So you know this is great for jq here. We're down at the raw the assembly bits of the the thing. We can use it to find specific items. We can do it for trial and error. And we don't pay cost on it because every time you run a theta, they bill you. Yeah. So, at a time, but it adds up quick.

Yeah. But again, jQ isn't going to isn't going to do 20 gigabytes of data easily for you. Yeah. Um, so hence um I'll use this the cloud trail. So the first thing that you do when you get to Athena for cloudt trail which is kind of a known pattern is AWS wants to sell you cloud trail lake which is better than Athena for doing this stuff. Oh my god because AWS wants to sell you a service to manage the service to manage your service. Yeah. Like and they've created the whole problem to begin with. But yes, let's Yeah. Um, so, um, you know, we we'll we'll sell you coffee and then we'll sell you beer to calm you down

after you've had too much coffee. Um, Starbucks is missing that business model, by the way. Maybe I'll patent it. Um, so this is what those JSON structures look like in Athena. AWS will help you create this thing. So that's very cool. So in Athena you create this and the first thing you do is try to query you know just a a sample set. Yeah. Um in jq we basically get the results as soon as we press the enter key. In Athena it takes us six and a half seconds to give me a sample. Um and it gets even worse if I want to sample from a particular account. So, this this query ran for much longer than 20

seconds. Um, I canceled out of this query because at some point I was afraid I was going to break it. It actually probably would have ran for about a minute and a half here. That's a long time to process and it was processing probably a couple hundred megabytes of data because of the the the um trying to filter on a particular account. Yep. So that's just problematic. Like if you're doing, you know, I just learned something I run. I want to query to answer this question. You know, what does it look like if I tweak it a little bit, right? You want to be able to kind of just do that back and forth. And

right with jQuery, the repetition, the development cycle, it's got to be short. Yeah. And it's not short with jQ on a small set of data. Like I it goes as fast as I can type the commands. So like I'm right in my udal loop, right? So, I can think, ask the question, get a result. Think, ask a question, get a result. If I have to wait 30 or 40 seconds or 90 seconds between queries, I'm sorry, squirrels, beer, Coca-Cola, something. I'm off getting chips. Um, but you can improve this by doing Athena partitioning. what if partitioning in Athena does is say okay I know that the data for this index is in this file but Athena doesn't know

that until you tell it even though AWS built the thing and it knows like they built it this way but you got to go tell it this is the way it's built however doing this partitioning this this is an art it's not a science it's an art and um you know the cloud trail logs are broken up by um account, region, and then days, which you know, natural sorts of ways, but it also can be broken up by service, but but the files aren't, but the logs are. So, we can only partition off of what's in the file name. And so, it turns out we have 16 regions in AWS. We're collecting logs on all of

them. I happen to have 84 accounts and I had a time period of 90 days. do that multiplication, you come out with about 120,000 partitions that I've got to pump into Athena. And if you just start doing this the straightforward way, every API call is about um 08 seconds. So 08 seconds times 120,000 partitions is multiple days to try and do this. So this like this is painful. It took me a full day to figure out how I saved myself three or four days. Um and I don't think I did it right. There's a way to do batch partitions and I just discovered that you can run these things um you can launch them um in the

background in your shell as long as you don't m la launch too many of them at a time and you can increase the rate by about a factor of 15 or 20 which was enough that I could just set the um set the computer to go do this and then go pack a bunch of boxes to shove in my pod to get ready for moving. Um, so but this painful like and I'm sure seams do this for you, but you still have to give the seam some information. Um, that's the beauty of using a seam. Um so in the end I ended up with like 152,000. That's what's in there now because there's couple of extra days and some

other stuff. Um, now that query that would have taken multiple minutes is down to 30 seconds. That's a win, but still a little long. Yeah. Yeah. But we're getting there. But I'm doing this over the entire three months of data, not just on a few hours out of a day, right? And across multiple accounts across the entire worldwide, not just one. Um so um now we can we can do this for the entire quarter for a day. It's 36 seconds. So we're you know what we're seeing here is that as we scale up the query time doesn't scale linearly. What Athena is doing in the background is doing multiple right multiple batched systems, multiple threads to go do this and it's

optimizing in the background. So accessing a little bit of data, there's a ton of overhead, but once you pay that overhead price, larger queries don't take as long. And so if we do this over the entire set of accounts, all 84 accounts across all regions across the first quarter of the year, the first three months, I can run that query in four minutes, which is not bad. So you run the query, you you do the work to figure out what it needs to look like on a smaller subset of data in your UDA loop and then you go run the query big time across your entire swath of data. Um so then again now that's all the tech

back to who's created access keys in my accounts. Like that's what I want to know, right? So um I came up out of out of the entire set of accounts the 84 accounts across the I came up with four instances of access keys being created. This is good news there there are 30 some odd SSAs who are all logging into their their AWS accounts. Only four of these guys are making mistakes. So somebody read the documentation. So um we'll dig into these. So I numbered them I lettered them ABCD, right? And this is I did some more research digging into each one and this is exactly what you're going to do in computer thread intelligence. You found a little bit of

something. Does it make sense? Does it not make sense? What what what's going on here? So um on January this this is the one legitimate use case. On January 6, one of my SSAs provided another SSA account to his AWS account to look at a project that he'd done. So, they were collaborating and this was a a good use. Well, uh better better than awful use. Better better than awful use. You should have created a role that could be assumed by the other accounts role, but we can excuse that, right? Yeah. Um this was the shortest path for them. I'm like, okay. and and it showed up because we're creating access keys. It showed up in our in our

vulnerability tool. So, it's okay with that. So, you know, it's it's a demo of how to detect this stuff. Um, the second one is a new SSA that got hired in February just using whatever he learned at his previous job. Did not read the manual, stopped at step one, did his own thing. Right. So, training opportunity here. Um, on March 24th, a different SSA started with the company and SSA number one taught him how to do this without reading the documentation that I'd wrote. So, more training opportunity. Last one was that new SSA who'd been taught by the other guy doing something on a different project. So, what I have here is, you know, a sprawling problem

if I don't catch it now, but I caught it. Um, but what I did not find here and I still need to keep searching for is there anybody else in weird places using my account. So, um, let's see. I want to back up to let's see what this looks like in Athena. Um, we've got a couple of queries in Athena we can run live. Um, any questions? Yeah.

So, we can start with this sample here, which is basically the Just show me kind of what um Did we screw this up? You you clicked on it again, and when you click on it again, it reloads the query. Oh, yeah. Um Oh, no. I don't know. I think it reload. Yeah, it reloads the query and the query had changed. Yeah. Yeah. Okay. Um Yeah, it's hard to see here. Where's recent queries? There. No, the top top. Yeah. Oh, recent queries. Thank you. All we wanted to do was select star and um uh limit 10. Yeah, this one right here. So, um so this is the equivalent of just printing out a few records in the

database. Um, and so we're using SQL like queries which is kind of nice like people have learned SQL. Um, and this is just showing a random selection of of tin queries and then stopping in the back end comes back gives you the data shape and some idea of what it will look like. Yeah. Um, this is looking at basically event sources. So again, you know, this is the question of where is my data coming from and um uh you know what beginning to pick apart the data. Yeah. Um but again, you know, we're going to wait like I think 27 seconds for this one to come back. Roughly 30 seconds. Yeah. So, but we're just counting up, you know,

what are the what are the event sources and how many records of each these event sources if you really want to get into the the technical details like AWS each of their each of their hosted services can be an event source each of them has essentially its own you know starting point into AWS. So and I think as at as as was mentioned by this guy you know there are some useful features like your queries are all saved in fact there to set up Athena you set up an S3 bucket that's your output and in that output is going to be saved um a metadata file and an output file. So this data the query is saved in a

metadata file and the output is saved in a separate output CSV file. So all that record is there in an S3 bucket for me to go back and look at like I'll never never forget anything. Of course, it's going to create one of those files for every query I run. So if I'm doing, you know, run a query, get a result, run a query, like yeah, that S3 buckets filled up and guess what? It's partitioned by days. So like if I have to remember on what day did I run query was it in the morning, afternoon because I got to go Yeah. Yeah. So but it's all there. Like it's all useful. Yeah. Um Yeah. And then

don't rerun the query for this one. Yeah. Um this was our console login. So this was essentially and now I've limited it to one day. Yeah. Um you know if I did the whole thing it would probably be four or five minutes but for one day for um St. Patrick's Day March 17th. Oh no sorry. Um yeah. Um what console login? So this is this across all of my accounts but for a single day. Yeah. Um useful to to look in. So this is answering the question how many console login right. Um so this stuff can be useful. One of the things that we did with this was look at um where what are the IP addresses of these

login. Yeah. Um and so oh yeah we only had three results here. So here's the source IP address. Y um hold on one second. So let's take these and plug them into. So if you're not familiar with MaxM mind, it's a IP geoloccation database. Um it's sort of the IP geoloccation database and if you need that data, go to them. Oh, of course. Uh let's refresh this page and then re Yeah, there we go. Yeah. So, um, reasonable. This is out of Beaverton, Oregon. So, um, this is probably, um, Ziply fiber. Is that industrial or you think residential? Uh, probably residential. Actually, Ziply is a is a Yeah, it's what I used to have in Kirkland,

actually. Okay. So, the good news is Joe is an SSA who lives in Portland, so it's probably Joe. Yeah. Um, and then the other address um was a address in Redmond. Probably me actually. So um so uh but this gives you the ability to kind of you know bring some stuff together and and and look at data slice and dice. Slice and dice. So yeah um it's a bit of a hodgepodge of tools and it would be better. No, it's do it yourself. Yeah. Or do yourself in. Yeah. Um so uh yeah it's a lot of work. I mean I think just using a seam for sock is a lot of work, right? Yeah. Yep. Okay. So, um, but you need good

hypothesis. You need to ask good questions. And this is where I think as an industry, we're kind of like not thinking about the cloud the way we should. Maybe you could educate me. Maybe I've been searching in all the wrong places for what are cloud attacks and what do I need to go look for in my logs. Um, but this is the toolkit you can Yeah. do ad hoc and figure it out for yourself. Yeah. Cool. So, thank you [Applause] So you guys who are familiar with seams, does this look familiar or am I barking up the wrong tree?

Open search elastic search might be a better tool. Yeah. Yeah. Okay. Okay. So that may be true. Yeah. Any other any other thoughts? Yeah. Yes. Yeah. So the same the the same exact data is available in your GCP account with BigQuery. Um and the it's more or less the same experience. It's all in a a cloud bucket and you can download it to your local machine. You can jq over it or you can run it through BigQuery and just get it. I think AWS and and and GCP do a good job of saying these are our cloud logs. Yeah. When you deal with Microsoft, they're like, "Oh, here's the tool you qu to query your cloud logs."

Yeah. Right. So, actually trying to get your hands on the cloud logs in raw form is harder just because they want to push you through their experience. They have a they have cloud uh log analytics. Yeah. They push you to this tool. And so if you really wanted to just download off the the storage account where this stuff is stored, they don't really document that. Well, you harder harder to do. Yeah. But yeah, it's the same experience, same exact thing. Yeah, it sounds like these are issues that occur when you are serving people at scale. As somebody that doesn't have much enterprise experience, it's only been implementing scene locally and in small environment, how how soon or at what level

at what point am I generating so many? I think that that that is a good question. So, you're starting with a smaller smaller base like I started with, you know, I I started with 84 accounts to begin with. That's pretty big. Yeah. Like I we have lots of cloud customers. Lots of them are in the the we have a dev, test, and prod account and an infrastructure account. Four or five accounts right? If you've just got you if you've just got one AWS account, you can probably S3 sync your cloud trail logs down. Yeah. And be fine. Um when it starts getting painful is when you're running up against it, right? If it worked for you, it works for you.

Yeah. I'm I I would have engineered this differently if id had more time like they they told me in February and yeah, you know, by by beginning of April, I had to have a a working seam for worldwide cloud operations functionally in my spare time. Yeah. Without, you know, pissing off my wife because I wasn't talking to her for weeks on end. Um you did that anyway. I did that anyway. But yes, um so really when it gets painful like it's time to find a new tool. Yeah. Um when when your seam tool is charging you per record ingestion and you're paying too much money for it, it's time to get a new tool. Yeah.

Right. Yeah. I mean, yeah. Uh so, and it can be fun. Like I you know, this there were parts of this were that were painful, but but a lot of it in the end was kind of fun. It was an interesting exploration. The painful part Athena is useful for more than just this like the the ability to query over a set of JSON like logs in your AWS S3 account is uh like there's a reason they have a whole product devoted to it. It's not just for cloud trail. The other thing I'd say it's helpful to have somebody you can ask questions of. Yeah. So I think in he was setting up for doing his own

talk. we were supposed to collaborate on this. Um the two or three times we talked about this like I came away with much better ideas than I did on my own. Yeah. So collaboration is useful. Yeah. Yeah. Yeah. Cool. You mentioned earlier about particularly with cloud security that you're constantly trying to develop tools. Yeah. Are there any other examples that you like to share about that? Um other tools that we built for cloud security solutions. Um well I mean the I I we sell a cloud security tool. Yeah. It's it's the the technical term for it is a cloud security posture management CSPM. That's what the industry calls for it. Um there are also everybody's sort

of like now if you've got a CSPM you need other tools around it to differentiate yourselves. Gartner now calls that a CNAP cloud native application protection platform CNAP. Um so there's a whole s source of tools there. Aqua Qualace Whiz. Um yeah where's my badge? What's they're probably have them on the on the badge or Yes. Look at the back. Yeah. Um so Rapid 7 who's one of the sponsors sells sells a CSPM. So CSPM is the configuration piece. The where am I making mistakes? As for tools around the what are attackers doing to my piece, I don't know that we've got cloud focused seams. I think we have infrastructure focused seams, things that like we'll

suck in your Windows event logs. We'll suck into your Linux um systemd logs, right? As a smaller practitioner like the the sorts of tools that I have built. So I've done exactly the same sort of thing with uh Postgress. So I will suck down the RDS logs from Postgress and look for connection attempts to see if there is any or any you know machines in our cluster or or pods in our Kubernetes cluster that are uh trying to connect to Postgress and failing like we should never have a connection failure. We should never have a login failure within our our production environment. Um, and I've built the tooling to check that. Only run it once a quarter because it

never finds anything. But I know it would find anything because I've, you know, gone and failed login to make certain it works. Um, that I've done the same like that that sort of thinking can be applied to a bunch of other things. Um, I've found that sort of logging uh in application web logs. So I, you know, basically every application is going to be a little different in terms of what queries you might find and what app what outputs it's going to have in your logs. Um, but this process of looking over the jq output from a day's worth and figuring out what I might be looking for and then set sending it off to Athena or

a map produce to actually run it across the the whole set. Um, is pretty pretty common. Um so like another place I've I've done this is you know we had a application vulnerability and you know if you if you set a certain kind of header um you would appear to be coming from and this is just the the classic uh the classic uh uh proxy you set the proxy header and pretend that you're coming from inside. like we we checked to make certain that nothing had the proxy header that wasn't actually proxied through through that. Um and you know easy enough to do when you have the logs there but it's something you need to do on an ad hoc basis when

you discover that vulnerability. Yeah. I mean what what you do is you take these questions and then you operationalize them, right? So yeah, I run this once a quarter. I run this once a once a time period. Yeah. Something that I check for on a regular basis. Yeah. So, um, and that's what I'll do with this is start looking for, you know, who's creating access keys because that's the wrong way to do it. Like you there's better approaches for everything. Um so um, same thing, you know, and so those CSPM tools tell you where where do you have mistakes in your infrastructure? Where do you have access keys, right? Right. And they're kind of focused like

I mean they're focused on what what people have known for a while. It takes a while for for yeah like attacker tactics to percolate into don't have these settings so you can or set things this way to prevent those tactics from succeeding on your infrastructure to building it into a tool so that now you can automatically check with the tool. Right? That's what this the CSPM is for. Um there's a a long lead cycle there for that. So you know there's still like if you go to this the center for internet security CIS AWS foundations benchmark that tells you all the you know the proper settings for your AWS stuff not by and it's not

told to you by AWS like AWS will be happy to tell you how to run your infrastructure. The guys at CIS are practitioners like you and I and him they've been burned by it. So they're going to give you I think more straightforward advice and that's what a lot of CSPM tools built from is that CIS. Anyhow, it takes a while for people to get burned to get it in the next revision of that document for it to get into a product update for you to have, you know, a tool that you're paying a company money for to help you protect your environment. Like that that period's at least two years long. Yeah. Um so you need to be able to think about

some of these things, run your own queries so that you can do your your own thing. So cool. Okay. Well, we're kind of at time. 10 minutes. We'll give you 10 minutes between things unless anybody else come on up front if you got questions. Thank you. Thank you very much.