GF - CICD security: A new eldorado (talk)


unsupervised, or really less monitored than the classic Windows world? And did we find this target, Xavier? So yeah, we feel that CI/CD pipelines actually check most of those boxes. The first thing we can talk about is that DevOps teams usually come with an agility mindset, which, at least sometimes, translates within DevOps teams into less security policy, less monitoring, and more freedom to set up whatever they want. What we've seen in some of our previous audits went as far as DevOps teams actually becoming another IT team within the information system, with their own budget and their own systems, and overall they were doing whatever they wanted. So I wouldn't call that shadow IT at

this point, but rather shadow information systems within the information system, which, as you can guess, come with tons of security issues that are very, very interesting for attackers and pentesters. Another thing, which is not necessarily always the case, but we feel that DevOps teams will usually take advantage of new types of infrastructure, such as Kubernetes, cloud providers, and things like that, which are in our experience less supervised and less monitored than on-premise infrastructure. Another thing to keep in mind is that you cannot administer cloud infrastructure as you would on-premise infrastructure, and what we've seen in the past is that there is a lack, for example, of cloud

expertise within the DevOps teams, meaning that they made a transition from on-premise infrastructure towards cloud infrastructure, but they don't really know how that works and what security risks come with it. So what we've seen is that within the DevOps teams there will usually be tons of security issues and security misconfigurations that will obviously be very interesting for us. And the last thing, quite interesting when we talk about CI/CD pipelines, is that the CD means that at some point there is deployment. So at some point within your pipeline you will need to have credentials, you will need to have some type of access that will allow the pipeline to deploy in production,

meaning that if you successfully compromise the pipeline, you now have access to production environments and, as we will try to show you today, sometimes you can have access to even more than that: the whole information system. So what we wanted to share with you today is actually a real-life scenario that we've performed on CI/CD pipelines. Just a quick disclaimer before we start: CI/CD pipelines come in tons of different flavors depending on the technical stack, so the one you might be using obviously might not completely reflect what we will be talking about today. Please keep in mind that while the very technical steps to compromise different technologies might vary, the overall methodology will honestly

roughly be the same, and the problems will be quite similar. And lastly, we don't have that much time, we have 45 minutes or something like that, so we will have to go quickly over some of the subjects, but if you're really interested in it, feel free to come talk with us at the end of the talk. Or I think we have a workshop tomorrow morning, if I'm not wrong, so for those of you who will participate in it, we'll be able to talk a little bit more in depth about those different steps. So the first thing that you might ask when you want to pentest a CI/CD pipeline is: how do I get in? I'm an external attacker, how can I

successfully obtain initial access within the pipeline? So the first thing we want to talk about is dependency confusion, or dependency hijacking. We will talk about this later on, but it's a type of vulnerability where you are able to trick the pipeline into downloading a malicious package from the internet, and you will be able to execute this package within the pipeline. Another type of vulnerability is, well, weak passwords. It applies to CI/CD pipelines, but it pretty much applies to everything all over the world. If you have exposed assets, cloud solutions used within your pipeline on which you have weak security configuration, a weak password policy, and no multi-factor authentication, it can be

used by an attacker, obviously, to obtain access within the pipeline, to find secrets, and sometimes to pivot within the pipeline. The last one, which might be interesting and which we've actually used quite a few times, is that you might have an S3 bucket, for example, containing artifacts that might still have secrets within them, and again it might be a way to get within the pipeline. However, that said, what we feel and what we want to share with you based on our experience is that CI/CD pipelines are very interesting if you want to escalate your privileges within the information system, but we do not feel that they are a very efficient way to obtain initial access within information

systems. We feel that there are classical ways, I would say, that are more efficient and quicker. We can talk about phishing, even physical intrusion, or exposed assets with vulnerabilities. Honestly, we feel that it's sometimes easier to use those than to try to really focus on the pipeline itself. Just a quick word after saying that: actually, last week there was something that came to light, which is 35k repositories on GitHub. Basically, a bad guy forked known projects and added malicious code within them, hoping that somebody would download them and run them. So this type of thing still happens. As far as I know, there are no really

known impacts for now, but as you can see, people still try to exploit this type of pipeline to get inside the network, but we do not feel that's the most efficient way. So if we go back to our scenario: well, I called Remy and I told him, okay, give me access within the information system. So he did his magic, he did some kind of phishing or whatever, and we were inside the pipeline. So the first thing we were interested in is how we can obtain source code. We know from experience that source code is a very interesting asset because it usually contains secrets, and it's a great way to escalate privileges within the information system.
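As an illustration of why secrets in source code can be hunted at scale, here is a minimal Python sketch of an entropy heuristic of the kind automated secret scanners are commonly described as using. The token pattern, the 4.0-bit threshold, and the sample values are assumptions made for this example, not the behavior of any specific tool.

```python
import math
import re
from collections import Counter

# Hypothetical token pattern: runs of 12+ characters often seen in keys.
TOKEN_RE = re.compile(r"[A-Za-z0-9+/-]{12,}")


def shannon_entropy(value: str) -> float:
    """Return the Shannon entropy of a string, in bits per character."""
    if not value:
        return 0.0
    counts = Counter(value)
    total = len(value)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def find_candidates(text: str, threshold: float = 4.0) -> list:
    """Return tokens whose entropy meets the (assumed) threshold."""
    return [t for t in TOKEN_RE.findall(text) if shannon_entropy(t) >= threshold]


if __name__ == "__main__":
    # Both values are made up for the example.
    sample = (
        "PASSWORD=SecurePassword2021\n"
        "API_TOKEN=q7Zx9LmP2vKd8RtY4wNc6BhJ1sGf3UaE\n"
    )
    for token in find_candidates(sample):
        print("possible secret:", token)
```

In this sketch the random-looking token is flagged while the human-chosen password stays under the threshold, which is one reason a purely automated pass can miss real credentials.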

So obvious targets would be source code management solutions, for example GitLab or GitHub or something like that. You might be able to obtain access, and you might be able to find code with anonymous access. But if that's not the case, well, there are other ways to find code. You might be able to find the same source code in other parts of the pipeline. The source code actually flows through basically all the parts of your pipeline, so you might be able to find it within a source code scanning solution such as Checkmarx, SonarQube, or others, and you might also be able to find source code indirectly within the artifacts built by

your pipeline. So even if you do not have success on GitLab or GitHub or whatever directly, please try other things, and you'll find that it's almost always easy to find source code within the pipeline one way or the other. When that's done, what you will be looking for is secrets within the pipeline. There are multiple ways to do this, but one of the two most efficient ways we have is automated review. There are known tools we can talk about, such as Gitleaks or TruffleHog. These types of tools have a detection mechanism based either on entropy or on regular expressions, meaning that they will be able to go

over whole repositories, and tons of repositories, in quite a short amount of time. But to be really efficient they usually need some kind of customization depending on the context, something that an attacker or a pentester will not be able to set up in a short amount of time. And just to give you a quick example, what you can see here on the bottom left is a .env file containing a secret, the password being "secure password 2021". In that example, actually, all the automated tools will fail to detect it. Why so? Because this is a low-entropy password, and it actually does not match any known or obvious regular

expression. So automated tools are great, but they have blind spots, and if you only use them you will miss some types of secrets. The other thing you can do is manual review. Obviously it's much slower and it's harder on the mind, but by searching for very specific keywords within the comments, "secret", "password", or things like that, you can quite often find secrets that were not detected by the automated tools. And just to give you some feedback on credential hygiene from our previous pentests: what we found is an IT team at one of our clients that was using Gitleaks to detect secrets within the source code. So

basically they had Gitleaks set up, and every time a secret was pushed to a repository, what they would do is remove the file, push a new commit with the file removed, and that's it. They were not revoking the password. So obviously they were not very familiar with Git, because the only thing we had to do at that point was to search through all the commits made by the IT team, and we had a list of all the passwords that were still working within the CI/CD pipeline. Another thing is a user pushing a bash history file to their repository. I don't know how that can happen, but they did, and within the bash

history file we had all the commands that had been typed by the user, which was very interesting to understand how developers were interacting with the application, including, obviously, clear-text passwords within the bash history file. And the last one is actually one of the shortest CI/CD pentests that we could perform, because when we arrived there was anonymous access on all the Git repositories, and there was a repository dedicated to Ansible. They were using Ansible to manage service accounts within the Active Directory, and they had, in clear text, a text file containing all the passwords of all those service accounts, with anonymous access on their Git repository. So at that point, maybe like

20 minutes after the beginning of the pentest, we were pretty much domain admin on the Active Directory. So as you can guess, this type of vulnerability and credential hygiene issue is going nowhere, and it's something that will still be exploitable, in our opinion, in the years to come. And the last thing I would say is that on all the source code management pentests we performed that contained tens of repositories or more, we've always, one hundred percent of the time, found at least one functioning and valid password. So what are the prevention measures that you could set up, and that clients could set up, within their environment? The first one is to ensure that there is

secret management tooling available and that developers are aware of it and are trained to use it. Another thing that could be done is to scan repositories with the automated tools that we talked about, limit access to technical teams, and from time to time maybe perform a manual review of the source code to ensure that you've not missed some kind of secret. There are tons of other things that you could set up. Just to cite one that we've seen and that we feel is very interesting: some of our clients actually pushed fake secrets to their repositories as a form of honeypot. So they were pushing false secrets within

the repository, and they had alerts if somebody was trying to use them, to know that they had an attacker within the network. So there are tons of things that you could set up to protect against that. So at that point we have now found secrets within the GitLab, and more specifically we've found secrets allowing us to access the GitLab and push source code to it. The next obvious target will be something called the orchestrator. I think most of you are already familiar with it, but just to remind you very quickly, the orchestrator is the main solution within the CI/CD pipeline. It's the solution that will be in charge of pulling the source code, of building

the project, of performing the tests, of deploying the project sometimes, and so on. To do so, obviously, the orchestrator needs access to a vault or to secrets to perform all these actions. In other words, if you successfully compromise the orchestrator, you've obtained all the secrets available within the CI/CD pipeline. So for attackers and pentesters it's an obvious target and a very valuable one. To do so, there's a type of vulnerability called poisoned pipeline execution. Some of you may already be familiar with it. Just to explain it in a few words, poisoned pipeline execution relies on the fact that the orchestrator will build projects by pulling the source code from, let's say, the GitLab.

In other words, if you're able to change the source code stored within the GitLab, you might be able to change what will be built, and you might be able to change the different steps that will be performed to build a project. This can be done in two ways. Either directly: you might have a build configuration file stored within the Git repository itself. What you can see here on the bottom left is a Jenkinsfile, so it's a file that will detail all the different steps that will be performed to build the project. So if the Jenkinsfile is stored within the repository and you can modify it, well, you can change the steps used to build a

project, and as such you can add malicious steps within it. You can also do this in a more indirect way, for example by modifying a Makefile, adding malicious commands within it, and when the build is performed, obviously the Makefile will be called at some point and your malicious code will be executed. So if we go back to our scenario: you push the malicious code to the repository, a webhook is sent to the orchestrator, the orchestrator will pull the source code, and it will send the jobs to be built on one of the build nodes. Just in a few words, the build node is

usually, or at least as a best practice, another server that will be in charge of building the project. You usually don't want to build a project directly on the master server, because if the master server gets compromised, as I said earlier, all the secrets are compromised. What we've seen, however, is that this best practice is pretty much never followed, and I would say almost 100 percent of the time we were successful in pivoting out of the build agent back to the master server, either by having secrets within the build agents, such as SSH keys that were the same as those used for the master server, or sometimes by having secrets within the bash history file. There are tons of ways

that could allow us to pivot back to the master server and, well, fortunately for us but bad news for DevOps, 100 percent of the time we were able to compromise the master server. So the question is, once you've compromised the orchestrator, what can you do at that point? The first obvious thing that you could do is pivot out of the pipeline. You now have access to tons of different secrets, and I'll let Gautier talk about that in a few seconds, but you can now access other systems, you can now compromise other systems, and, well, the pipeline is not very useful at that point. What you can also do is inject backdoors within projects in a

stealthy way, meaning that nothing would appear within the user interface of Jenkins, but you could very subtly add malicious commands or malicious code to all the projects that go through the orchestrator. And the last one is poisoning the well. I talked about dependency confusion and dependency hijacking earlier. You could also try to obtain persistence within the information system by pushing malicious packages to the package repositories in order to keep access within the information system. So I will very quickly provide some feedback on what we find on orchestrators. Quite often we find poor access management. As I said, the orchestrator is the most important part of your CI/CD pipeline; however, most of

our clients don't really harden it well. What we found, again, is sometimes a Jenkins with sign-up enabled, meaning that everybody could create an account on the Jenkins server, and another option enabled which made every logged-in user an administrator of the Jenkins. So you arrive, you create an account, and you've now successfully compromised the Jenkins server. What we've also found is too much logging. Within the Jenkins server, or any type of orchestrator, you usually have build logs, and within those build logs we very, very often find secrets, allowing us again to escalate privileges within the Jenkins. And the last one is that sometimes people still think that containers, that Docker,

are a security solution and that they will help them secure their pipeline. So what we found quite often is that the master server will have agents, and those agents are only containers run on the master server itself. They think that since the agents are within containers, an attacker will not be able to do anything, but I think you all know here that containers are not a security solution, and it's, I wouldn't say easy, but it's quite frequent that we are able to escape the containers and compromise the host system. So I just want to go very quickly over dependency poisoning and dependency confusion. As I said earlier, you might want to poison the well to keep

persistence within the information system. So what it might look like is here on the left: a developer, for their project, needs to install a very public package. Okay, so they say, I want to download the latest version of the package called "public package". At that point, what I've done as an attacker, since I've compromised the orchestrator, is push a malicious version of the public package to the internal repository, meaning that when the user tries to download the public package, they will ask the internal repository: hey, internal repository, do you have the latest version of the public package? And the internal repository will say: yeah, I have a version locally, but

I can check on the internet, I can check on the public repository. Well, the same version number is available on the internet, but since I have it locally, it's always the local version that will be prioritized. So it's a great way to build persistence because it's very stealthy. It's low visibility because the package the attacker pushed to the internal repository is a working version of the package. It will not break anything, it will not come with any bugs, it's just a compromised version of the package. Another thing you might have heard about is dependency confusion, which is a little bit different: a user wants to download an internal

package. The package is available internally, but the attacker for some reason knows, because they found it in the documentation or something like that, that there is an internal package. They will push a malicious version of the internal package to the public repository, and the internal repository will be tricked into providing the malicious package coming from the internet to the internal user. So dependency poisoning is a great way to build persistence, and it's very stealthy. Dependency confusion is a great way to obtain initial access, but it's very high visibility and high impact, because the malicious package downloaded from the internet is not a working version of the internal package, so the user might realize very quickly that

something has gone wrong and say, okay, there is a problem and I need to find out what's going on. Okay, so I just wanted to talk about this very quickly, and we can go back to what I was saying earlier about pivoting out of the pipeline and maybe trying to compromise other parts of the information system, such as a Kubernetes cluster, and I'll let Gautier talk about that. So from the Jenkins we could retrieve credentials to deploy applications within a Kubernetes cluster. But what is Kubernetes, first? Actually, Kubernetes is a solution used mainly to deploy containerized applications, such as Docker applications, and to manage them throughout their lifecycle. For instance, Kubernetes will handle restarting your application whenever it

crashes or if you lose one of your cluster nodes. It also has some features to manage secrets, for instance, and networking. Kubernetes is actually highly configurable and has many protections to be secure. However, on a bare installation it comes with none of these protections enabled, so it is up to the cluster administrators to be aware of them and to properly configure them, and what we often see is that this is not always the case during our pentests. So we'll go through some of the common and critical issues we often see during a Kubernetes pentest. For this scenario we are also considering Kubernetes running within a cloud environment, as it provides additional interaction with the cloud

and so it increases the attack surface. The first common issue we see comes from networking. Actually, by default, the inner network of the cluster is completely unfiltered. That means that one application may communicate with any other application within the cluster. So during a pentest we can easily use that to scan the internal network, get access to services, and exploit vulnerabilities on them to get a foothold on the other applications. This is also interesting because not only can we access services exposed to the outside of the cluster, but also the services which are not. So it is very important to continue to harden your containers just like you would with your

application servers. It is also interesting when communicating outside of the cluster. When one application tries to communicate with an external component, there is a NAT mechanism which occurs on the cluster node and in the cloud environment. This can be exploited against the instance metadata API. In cloud environments, this API is used by resources to retrieve information about themselves, including cloud credentials, and to know who is requesting the information, the API bases its choice on the source IP. So when one application requests this API, thanks to the NAT, it looks like the request comes from one of the cluster nodes, and so one application may retrieve the credentials of one of the cluster

nodes and pivot to AWS with its privileges. So it is important to enforce filtering within the cluster thanks to the network policies which exist within Kubernetes. The second common issue comes from IAM. As often with policy management, whenever permissive rights are given, we may move and escalate quite easily. I won't dive deep into it because others have already done that, such as CyberArk, but I will only tell you a few of the issues we commonly find. The first issue we sometimes find is that one application may need to interact with another application's resources, and usually this is done through a highly permissive set of rights, and so we can easily exploit

them to move laterally to this application. Another issue comes with Jenkins itself. Actually, for the sake of simplicity, and because Jenkins is used to deploy several applications in several different contexts, it is often given a wide set of rights on the whole cluster, and so just the credentials of the Jenkins are usually enough to compromise the whole cluster. In a cloud environment, you may also give your application a set of rights on the cloud environment itself. So if you get access to one of these applications, you may retrieve the service account used by the application and pivot once again to AWS, sometimes with high privileges. So it is important to review your

policies and, like always, to enforce the least privilege principle, and for that there are some tools which may be used on Kubernetes clusters, such as KubiScan or Krane. Last but not least is the lack of restrictions in your deployments. Actually, when you start an application within the Kubernetes cluster, you decide how this application should be running, so you may decide which user will run your application, but also which set of capabilities you give to your application, and even which container isolation to enable or disable. So when one user is able to start a new application with no container isolation, it's quite easy to escape it and compromise the

cluster node itself, and once you have compromised the cluster node, it's also easy to get access to any resources within your Kubernetes cluster, such as the secrets. As you are able to run code on the cluster node, you may also once again pivot to AWS by requesting the instance metadata API. So it is important to enforce strict restrictions on what your developers and DevOps are allowed to start on your Kubernetes, and this is even more true because disabling only part of the isolation is sufficient to compromise the whole cluster. On that, Bishop Fox already did thorough work and described several attacks which can be done based on different sets of enabled and disabled

isolation. So we see here three of the ways to pivot to AWS, but there is one last thing I'd like to share on Kubernetes: Kubernetes has all the capabilities to be properly monitored. It has a complete audit policy, and there is also compliance software, like Falco, to ensure at every moment that only secure applications are running within your cluster. So it is important to also set up monitoring of your clusters. I will let Remy continue on the AWS part. Okay, so inside the Kubernetes cluster we found an AWS account. What could we do with it? To quickly understand privilege escalation in AWS and then lateral

movement, I need to quickly introduce how identity and access management works in AWS. It's quite simple. There are two major ways to delegate privileges in AWS. The first one is called direct attachment. As the name suggests, you define an IAM policy with the privileges and you attach this policy to an AWS identity. That could be a user, a group, a virtual machine, a service, as you want, and then this identity uses security tokens to perform actions inside AWS. The second one is known as role-based. It's quite the same: you define an IAM policy, you attach it to a role, and then an identity can assume this role to retrieve the privileges.

You must know that role delegation needs to be defined both ways. First of all, the role has an assume-role policy that defines who can assume it, and the identity needs to have the privilege to assume the role. So the delegation works in two parts. Once we have an account in AWS, we can try to perform some enumeration. We were really lucky in that environment: we directly found a really high privilege, which is PassRole. This privilege is really interesting because it allows you to pass any role of the AWS account to a service. What is really interesting on that point is that you can pass a role that has more

privileges than your current account. It is often used to perform local privilege escalation inside an AWS account. So we exploited it with a Lambda function creation: we passed another, really privileged role that could attach user policies in IAM, a privilege escalation, and then we grabbed the AdministratorAccess privilege. It's like Domain Admins in the Active Directory world, and we took over this first account. You should know that there are actually around 25 different ways to perform privilege escalation and to abuse privileges in AWS. I recommend the really great blog post from Rhino Security Labs; you will find the reference at the end. Once we have compromised this first AWS account that

was used in the environment by the DevOps team, you should know that in AWS there is not always only one account. There are several accounts in a company to handle different criticality levels and different assets. All these accounts are put together into an AWS organization, and at the top of this organization you have a root account. This account has all the privileges over the whole organization and can bounce back into it. So we performed the enumeration again with our newly acquired privileges to find a way to bounce back to the root account. We found that a custom administrator role defined by the DevOps team could assume another role in the root account.

The name of this role was pretty easy to understand; it was something like "read-only IAM policy in master account". So we assumed the administrator role, and then we assumed the other role in the root account. As its name suggests, we had a lot of privileges, but only read-only privileges. We started the enumeration again: all the users, all the roles, the IAM policies, and we identified what we should compromise, like who has AdministratorAccess in the root account. We found several roles, but one caught our attention. Why? Because inside its assume-role policy we found that a user from the DevOps account could assume this role in the root account.
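The two-sided delegation described above can be sketched as a small Python model. This is a deliberately simplified illustration for the cross-account case: it ignores wildcards, conditions, and service principals, and the class names and ARNs are hypothetical, not taken from the audited environment.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Role:
    arn: str
    # Principals named in the role's trust (assume-role) policy.
    trusted_principals: Set[str] = field(default_factory=set)


@dataclass
class Identity:
    arn: str
    # Role ARNs that the identity's own IAM policies allow it to assume.
    allowed_role_arns: Set[str] = field(default_factory=set)


def can_assume(identity: Identity, role: Role) -> bool:
    """Both sides must agree: the role trusts the identity, and the
    identity is permitted to assume the role."""
    trusted = identity.arn in role.trusted_principals
    permitted = role.arn in identity.allowed_role_arns
    return trusted and permitted


if __name__ == "__main__":
    # Hypothetical ARNs, for illustration only.
    admin_role = Role(
        arn="arn:aws:iam::999999999999:role/example-admin",
        trusted_principals={"arn:aws:iam::111111111111:user/example-devops"},
    )
    devops_user = Identity(
        arn="arn:aws:iam::111111111111:user/example-devops",
        allowed_role_arns={admin_role.arn},
    )
    print(can_assume(devops_user, admin_role))
```

Enumerating trust policies for principals that live in an account you already control is, in this simplified model, the search for a `Role` whose `trusted_principals` contains an identity you hold.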

So we went back to the DevOps account, enumerated again, and checked what this monitoring role was that could potentially be used to access the most privileged role in the AWS organization. At that point we found a little issue: the assume-role policy of the monitoring role is quite restrictive. It allows only one really specific AWS service to assume this role. It was the first problem, but actually we don't care, because we are administrator of the DevOps account, so we could just update the assume-role policy, put our compromised role inside it, and steal the monitoring credentials. Then we bounced into the root account and compromised it. Once we have AdministratorAccess in the AWS

organization, we can access every account defined in the organization. In order to demonstrate the impact to our client, we decided to find a way to pivot into the on-premise network. So we started our enumeration again, and we found an AWS account with all the Terraform and Ansible configuration and all the passwords defined inside. We grabbed them, and we bounced back into the Active Directory world and compromised the Active Directory. We like to show with that kind of scenario that we could, for example, deploy ransomware, set up some backdoors to extract sensitive data, etc. There are three interesting measures to put in place inside AWS to harden it. The first one is not really easy.

The IAM policies in AWS can be really complex. Actually, you can define a policy with really restrictive conditions, such as IP location, multi-factor authentication, a regex on the name of the user, etc. So I really miss a tool like BloodHound in AWS to create a big graph with all the configuration and see the interconnections between the roles of the different AWS accounts. A few open-source tools try to do some of this, such as Cloudsplaining from Salesforce or ScoutSuite from NCC Group. The second point is to use, and abuse, service control policies. This configuration allows you to define master rules at the organization level that nobody can modify from the local

account, and to block, for example, some privileges, or to prevent anyone from performing an action on sensitive assets, which you can define thanks to really simple tags. These service control policies are not readable by anyone without root access in the organization, so it's pretty hard to exploit an AWS configuration when you don't know what policies are defined. And the last one is to log everything, everywhere, and export the logs, because quite often logs expire at cloud providers such as Azure or AWS, and after thirty days you've lost everything. And try to perform some dedicated correlation on them; there are really interesting rules in GuardDuty, for example.
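To make the service control policy idea concrete, here is a minimal Python sketch that builds a policy document denying a set of actions on resources carrying a given tag. The tag key, tag value, and chosen actions are assumptions for illustration; whether a given action honors the `aws:ResourceTag` condition key depends on the service and should be checked before relying on it.

```python
import json
from typing import Iterable


def build_tag_deny_scp(actions: Iterable[str], tag_key: str, tag_value: str) -> dict:
    """Build a policy document that denies `actions` on resources
    tagged tag_key=tag_value (hypothetical tag names)."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyActionsOnTaggedAssets",
                "Effect": "Deny",
                "Action": list(actions),
                "Resource": "*",
                "Condition": {
                    "StringEquals": {f"aws:ResourceTag/{tag_key}": tag_value}
                },
            }
        ],
    }


if __name__ == "__main__":
    # Example values only; adapt the actions and tags to your own assets.
    policy = build_tag_deny_scp(
        ["ec2:TerminateInstances", "ec2:StopInstances"],
        tag_key="sensitivity",
        tag_value="high",
    )
    print(json.dumps(policy, indent=2))
```

Because the rule is attached at the organization level, an administrator of a member account cannot remove it from inside that account, which is the property the talk relies on.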

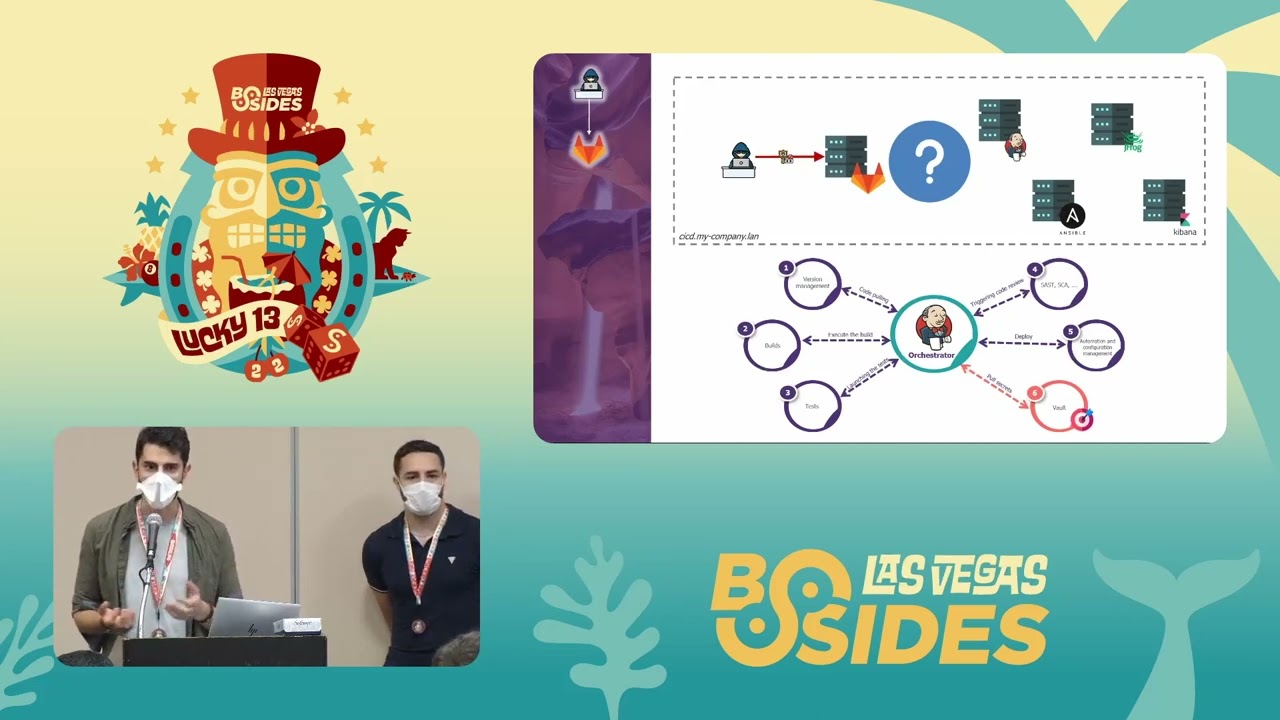

okay so let's just take a step back i'll go quickly because apparently i talk too much so we're already a little bit late on schedule but we wanted to take a step back very quickly and look at the overall scenario what i wanted to share with you is that we went very quickly from an unauthenticated user within the information system to access to the repository then poisoned pipeline execution compromise of the orchestrator and at that point we already had access to the production environment which means that after step four so from step five on we could have real-life impact on business applications and basically compromise all the applications within the company

we didn't stop there and as gautier and remy showed you we were able to go from the application deployment environment back to the office environment and the active directory meaning that it was quite easy at that point to really compromise the whole information system but even if that was not the case we went very very quickly from unauthenticated to access to the production environment the last thing i wanted to talk about is that as i said at the beginning we had to focus on a very small set of tools we think it really provides a picture of what is usually deployed in our clients' environments but obviously there might be tons of different tools depending on your technical stack and so

on all those tools are great and usually provide tons of useful functionalities for the devops teams i just want you to keep in mind that all those functionalities can also be used by attackers and the message behind this slide is that we feel that sometimes devops teams want to deploy a new type of solution before really hardening and before really understanding and managing existing solutions so our advice there would be maybe focus more on the quality and security level of your application before trying to deploy a new one and i'll just hand over to finish on the few recommendations and then we'll have a few minutes for questions if you should only remember five points

about this talk the first one is to ensure least privilege and to review your iam policies to be honest we should never find anonymous access on jenkins for example or really critical privileges in aws with all power given to some random services the second point is to focus on secret management passwords in cleartext are really sad things but please it's pretty easy to remove them you could propose to use some vault on that point i'm sorry for all the developers agility is great it's awesome but agility could also be done with some procedures and some restrictions to avoid impacting the whole company the monitoring of the pipeline is always done in order to validate the

availability of the pipeline and never to identify some bad behavior or ongoing exploitation inside it you should really understand that code review is interesting for what is inside your pipeline but you should also monitor the whole infrastructure that is under the pipeline and all these processes the last point is more about the architecture of this pipeline you should segregate the different environments inside it to avoid that a basic marketing application could compromise some really sensitive assets on your network it's the same in the kubernetes world network segregation is the key and in aws you could set up so many features to segregate the rights and the accounts to ensure that even if an attacker gets a foot inside he will not be able to

escalate his privileges what i'd like to say as a last point is that the cicd pipeline is not only a thing for developers or applications it could impact the whole network and the whole infrastructure behind it a few references before starting the questions you will find some interesting stuff in the middle talk at black hat years ago i already spoke about the rhino security labs work around aws privilege escalations they also provide some terraform code to deploy these misconfigurations and see what is done the ncc group published at the beginning of the year a really interesting blog post on cicd too right after we'll have the chance to listen to omer who will also speak about the

main risks present in cicd pipelines and i think the blog i prefer is from frichetten definitely take a look at hacking the cloud there are also some really good references from bishop fox and cyberark around the kubernetes world thank you so much and if you have a few minutes only two if someone has a question [Applause]
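As an illustration of the first recommendation (least privilege and IAM policy review), here is a minimal Python sketch that flags policy statements granting wildcard actions on all resources. The function names and the example policy are hypothetical, and the check is deliberately naive: it ignores conditions, `NotAction`, and resource-level wildcards, so it is a starting point for a review rather than a full audit.

```python
def _as_list(value):
    """IAM allows a single string or a list for Action/Resource/Statement."""
    return value if isinstance(value, list) else [value]


def find_overly_broad_statements(policy):
    """Return the Allow statements that combine a wildcard action
    ("*" or "service:*") with the "*" resource."""
    findings = []
    for statement in _as_list(policy.get("Statement", [])):
        if statement.get("Effect") != "Allow":
            continue
        actions = _as_list(statement.get("Action", []))
        resources = _as_list(statement.get("Resource", []))
        has_wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        if has_wildcard_action and "*" in resources:
            findings.append(statement)
    return findings


# Hypothetical policy attached to a CI service role.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "TooBroad", "Effect": "Allow", "Action": "iam:*", "Resource": "*"},
        {
            "Sid": "Scoped",
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-artifacts/*",
        },
    ],
}

findings = find_overly_broad_statements(example_policy)
for statement in findings:
    print("overly broad statement:", statement.get("Sid"))
```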

thank you everyone now have a nice day oh sorry sorry is there a question yeah is there anything you can do to harden your pipeline so it can't be poisoned like are there security settings that github actions or gitlab pipelines have that you could use to you know avoid that from happening or is it so yeah the problem with poisoned pipeline execution is that it's not a vulnerability in itself the whole goal of the pipeline is to build the code into artifacts so what you can do to try to prevent those things is take advantage of branch protection for example what you could do is ensure that your build

configuration file i talked about the jenkinsfile earlier you could put those build configuration files on another branch on which basically nobody can push and retrieve those files from that specific branch meaning that you know that the build configuration file will never change and even if an attacker were able to compromise an account he would be able to compromise the code of the application but not the build configuration file so there are a few things that you can set up honestly they are not that easy to set up and they decrease the risk but no there is no way to magically prevent all poisoned pipeline execution sadly i would love to so you're suggesting that you could move

the pipeline file all the like the actions and stuff into its own repository or something like that just separating the exactly basically the thing that will contain the steps for your builds you could put them in another it can be another branch or another place okay which you know you can trust and which will be less likely to be compromised so there are ways to limit the impact of poisoned pipeline execution yes thank you no problem any other question okay
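A complementary control to the answer above is to detect when a change touches the build configuration at all, so that it can be blocked or sent for extra review. The Python sketch below assumes the list of changed paths is already available (for example from the pipeline or a pre-receive hook); the pattern list and the function name are illustrative assumptions, not settings of any particular CI product.

```python
from fnmatch import fnmatchcase

# Paths that define what the pipeline executes. Illustrative list only;
# adapt it to the CI systems actually in use.
BUILD_CONFIG_PATTERNS = [
    "Jenkinsfile",
    ".gitlab-ci.yml",
    ".github/workflows/*",
]


def build_config_changes(changed_paths, patterns=BUILD_CONFIG_PATTERNS):
    """Return the changed paths that match a build configuration pattern."""
    return [
        path
        for path in changed_paths
        if any(fnmatchcase(path, pattern) for pattern in patterns)
    ]


# Hypothetical change set coming from a contributor's branch.
changed = ["src/app.py", "Jenkinsfile", ".github/workflows/deploy.yml"]
flagged = build_config_changes(changed)
if flagged:
    print("build configuration modified, require trusted review:", flagged)
```

This does not replace keeping the build configuration on a protected branch or in a separate repository; it only makes an attempted modification visible.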