Advancing Network Threat Detection Through Standardized Feature Extraction and Dynamic Ensemble Learning

Show original YouTube description

Show transcript [en]

Good morning everyone. Good morning everyone. Uh welcome to the uh besides Las Vegas and this talk is mainly about advanced network thread detections through standardized feature saturation and dynamic enable learning and by the speaker Jason 4. And I would like to do a few announcements before we begin. our sponsors. We would like to thank our sponsors especially our diamond sponsors Adobe Caddy and our gold sponsors formula and run zero is their support along with our sponsors donors and volunteers make this event possible and I would like to say everyone please don't take pictures we would like to post our videos in the in our YouTube panel

and and one more announcement. Today we have a data science meetup at 700 p.m. in uh pool party entrance. And that's it.

>> Good. Awesome. Everybody hear me? Okay. >> Outstanding. All right. And right on time. Well, thank you all for coming. Um, this is my first talk at Besides, so I appreciate the fact that you are my first audience. Thank you so much. [applause] Advancing network threat detection through standardized feature extraction and dynamic ensemble learning. You know, when we write academic research papers, they do not have catchy titles. They are very descriptive. They are also incredibly wordy. But for the next uh 19 minutes or so, I'm going to walk you through some research that I've been working on for the better part of the last two years. Um, and then talk about where I'm hoping to take this work next.

So, a little bit about me. Um, I'm a research engineer at Proof Point. Been there for a little over 10 years now. Um, done a bunch of school at a few different places. University of South Carolina, go Cox. Um, published papers on a variety of different things. I'm not a threat researcher, but I've had the opportunity to do some research on state sponsored actors um using machine learning to detect QR codes in email. For those of you who have any exposure to email security, you know that the last couple years that's been one of the hot topics in the industry. But most recently, and the reason that I'm here to talk to you today, uh is about using

ML for network intrusion detection. Uh when I'm not working on this stuff, um I live in Colorado. I'm usually out enjoying the outdoors, whether that's when the weather's real nice, whether the weather's real cold, uh, finding a mountain to ski down, usually something to that effect. Uh, and my career mentor, um, for those that know Sherid Degrio, who works on the threat research team over at Microsoft, um, she told me, "You really need to add something fun to your about me slide, like tell them what your favorite protocol is." And I was like, "Shared, why would anybody care what my favorite protocol is?" She's like, "Just do it. Just put it on the slide." So favorite protocol is DNS. And

why? because it's what makes a lot of other things work. And usually it's the first thing to break that really becomes a problem. So let's talk about this talk. What is the problem that I was trying to solve or at least find an answer to as part of this research? Um I don't think it's any surprise to anyone in this room that the attacks that we are asked to defend against are becoming not only more sophisticated but more difficult to detect. Now, NIDS has played a really important role in detection over the years. Um, but one of its main constraints is the fact that NIDs is going to be reliant on reputation, on signatures, right? So, the ability to

detect things that it has seen before. A lot of the prior research in this domain um has focused on using an individual ML classifier with a very very limited or very small data set which looks really good in a lab but when you actually try to turn it loose on real traffic it does not generalize well. So end up with very poor efficacy in a lot of cases and in a lot of the studies uh anything that was sort of turned loose outside the lab saw efficacy under 40%. So, these are some of the things that we wanted to try to tackle with this work. Now, just as a brief bit of background, uh, for those

maybe that aren't as familiar with the terminology, just wanted to do a quick 30 seconds on NIDS versus NDR because that's usually the first question I get when I talk about this work. Well, Jason, you know, they use ML in in network detection and response. I know we're specifically focusing on uh NIDs as part of the comparison for this talk. So, just a a brief uh explanation. and NIDS going to be looking for things based on, you know, rulebased or signature based detection. NIDs is going to or NDR is going to incorporate some of those behavioral analytics and things of that nature. A lot of times it's using anomaly detection models in order to be able to do that. Um, but also

offers uh if you've used products like Verra or others the ability to do um threat hunting and some automated response capabilities. So the research uh or the objectives of this research was really to find uh if it was possible to improve accuracy in detecting threats by looking at stuff on the wire. And there's three pieces to that. Uh the first is developing a standard feature extraction framework for analyzing the traffic. Um, for anyone that has any experience, uh, you know, working with machine learning, training classifiers, I'd argue that in a lot of cases, the features you extract are actually more important than the model that you choose to train. It's not to say that they're not important, but

in a lot of cases, uh, what we see is that models that generalize poorly didn't necessarily extract the right features during the training phase, which leads to them not being able to detect the stuff that you want reliably. So uh one of the things that that you know I worked on as part of this was looking at what everyone else had done. What sort of feature extraction frameworks were out there. One of the things that I found is that in a lot of cases people were relying on things like training the data off of IP addresses and port numbers. As you can imagine that probably didn't scale super well once you turned it loose on traffic that

it hadn't seen before. Uh in other cases they would actually use another classifier to do the feature extraction automatically. That's actually a fairly common approach in ML is let the model decide what things are important and extract those things. The problem with temporal data or you know things that um are transactional in a lot of cases is the temporal dependencies don't necessarily lean themselves uh to uh the type of work that we're talking about where what I actually want to do is to be able to distinguish between benign traffic and potentially malicious or suspicious traffic. Right? it's not necessarily going to be able to pull out the nuances that are required when you're just allowing the model to decide

on its own what things it should be paying attention to. So, step one, figure out a feature extraction framework. Step two, pick a bunch of classifiers, use the train framework to then uh train them to be able to actually do detections. The last piece of this and and where I got to flex a little bit, where the creativity comes in as part of the research was actually designing an ensemble classifier. Ensemble classification in ML has been around forever. This is just yet another opportunity to be able to leverage uh ensemble learning in order to be able to hopefully provide uh a classifier that will work really well when it's exposed to things that it hasn't seen before.

So you'll see this visual a few times. I don't expect everybody to read the text. Essentially what we're going to do here is walk through the process of how I extracted the data, train the classifiers, and then we actually get to the point where we can start providing scoring. So we're going to work on that first box first. Load the samples, extract the features, and then apply scaling. Now, one of the things that I found as part of this research um that is also very common in this domain is we don't necessarily have a lot of really good public data sets that have the stuff that we want in order to be able to do model training. Um in a lot of

cases the data sets that have been used in prior research uh actually leverage a subset of the data. So someone will take some pcaps they'll reduce that down to just the features that they want to train their models on. They'll then convert that over to something like a flat text file or a CSV. They'll make those public but unfortunately it doesn't have again all that other metadata that we might want to actually train uh our models on. So for the purposes of this research, I went out and sourced data sets, public data sets uh that actually provided raw pcaps. We want to see the whole thing. This al this allows a lot of flexibility in

terms of uh doing things like looking at flows uh the ability to look at forward and backward packets um you know as well as a bunch of other metadata that you might not get if it's just a bunch of sequential lines in a CSV. So the data sets that I sourced um there was three of them um one from Czech Technical University um another from the University of New South Wales and then the last one there from the University of Science and Technology of China. Um in addition to that we collected some uh benign traffic from home and academic networks. So uh with ML training in a lot of cases you're looking for balanced training data sets right both for

actually training the models as well as validating them after the fact. In a lot of cases, these public data sets were great because they gave me very very good representations of known bad traffic, right? It was labeled. I had to have the ability to say this is actually something that is malicious for the purposes of of training the model. What they didn't have was the good stuff, the benign things, uh, you know, a Zoom conversation or, you know, two people chatting on Microsoft Teams or Slack, things of that nature, regular web browsing. So we collected some network and or network traffic and controlled environments uh to be able to then balance out the training data set for

feature selection. Um the things again that I had seen in a lot of the prior research were based around unfortunately fields that did not end up generalizing well when they were shown traffic that they hadn't seen before. So I focused on a lot more uh metadata related things um at the packet level things like the count of the TCP flags the average time to live of the packets and then most of what the model was going to be trained on was flow level statistics. So we talked about things like forward and backward statistics inner arrival times the number of packets that were observed in the last 10 seconds duration uh etc. So back to the graph. Um moving on from

there, extract the features out. The idea then was to uh generate predictions from each model. But I had to select some classifiers to train uh in order to be able to do that. Now some of these have been researched exhaustively in this particular domain. Uh the first two random forest and isolation forest. As I mentioned a lot of NDR solutions will do behavioral analytics or UIBA type things. Isolation Forest is more often than not one of the models that gets used because the nice thing about that is if you don't have the data set of all the malicious traffic, but you do have one of what good traffic looks like, you can just train it on one and not the

other. The idea is that it has the ability to identify anomalies that don't match the things it knows. Some of these others, uh, GMM in particular, not a lot of research on using that in this domain. So, um, decided to include that to see, uh, if it would be feasible to use it. Same thing with QDA and some of the boosting um classifiers as it relates to their ability to again be able to generalize well against things that they haven't seen before. Uh the neural network models that I selected have also been used fairly extensively in identifying network traffic. Um I'll we'll come back to that closer to the end because as I found out um

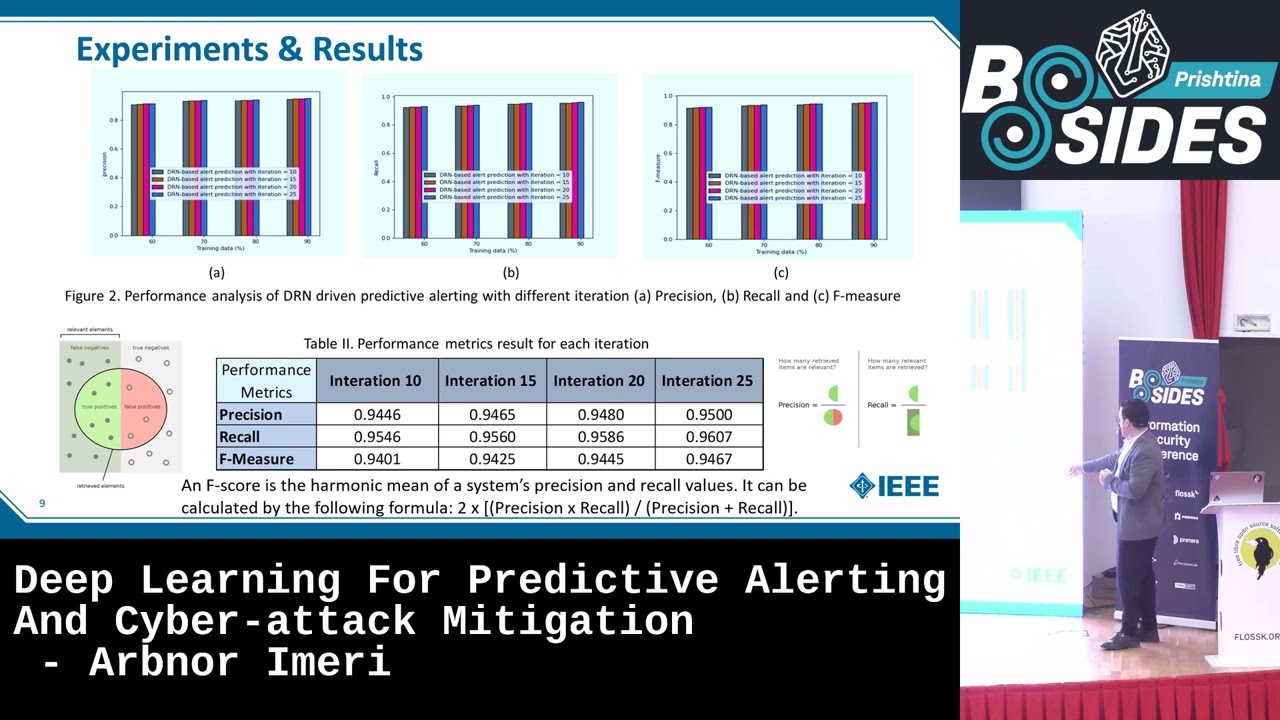

hyperparameters for training those models become that much more important in terms of their performance. So moving right along uh through our process flow here. Um after training the individual classifiers uh then actually aggregating them uh based on benign and malicious scores and coming up with an ensemble score. So this was the art part of the presentation and I'm just going to warn everybody ahead of time. This is also the math part of the presentation. Stay with me. It'll be fairly quick and painless. Uh but but we are going to get into the the math part of this as well. So uh the idea as I mentioned at the beginning was to create an ensemble classifier and yes when you are the

author of the paper you get to name it after yourself. Ford class specific weighted values uh is the name of the classifier. Um I'm going to just go ahead and build this all out because there's a lot of text um a lot of it atalicized symbols uh and then uh an explanation of how the waiting function works uh for the purposes of understanding this. The idea here is that we train all the individual classifiers. We then run validation testing against them to get their accuracy scores when they are scanning traffic individually. We then use that as the base weight to then be able to combine them together so that we can have them effectively cancel out each

other's weaknesses but gain the benefits of all the things that they do well. Um what is somewhat unique and novel about this is we're actually looking to give more weight to less confident predictions. Right? So simplest way to think about that, go to the uh next slide here. Uh let's see. Applying the thresholds, this is probably the one that makes the most sense. For things that generated a score closer to 0.5, they would get higher weight. For things that were closer to zero benign or one malicious, they would get less weight. The idea is to filter out overconfident predictions. And in testing, uh you'll see here in just a second that actually performed incredibly well. Um, the math

part of the presentation is right there. It's just some basic addition and division. Didn't want to scare anybody away. U, but basically taking the weighted, benign, and malicious scores, adding them together, dividing by two to come up with an average, and then the classification is made by comparing that to 0.5. Basically, if it was greater than a half, the sample was labeled as malicious in testing. So, that gets us to the last part here, actually classifying the traffic. Um I realize that may be somewhat difficult to see. Um but the point here is that the ensemble classifier is listed at the top in contrast with all the individual classifiers that comprise it. Um the ensemble classifier achieved over 97

almost 98% accuracy in being able to identify samples. Um now the the big difference here between it and the individual classifier, some of which performed exceptionally well on their own. Um the boosting algorithms in particular which are a bunch of weak classifiers combined together. No surprise um actually did really well at being able to uh properly classify the traffic. The point here was that the ensemble had the most balanced precision and recall for all of the classifiers that were identified and tested as part of this. The idea being if we get the opportunity to actually test this against live traffic, hopefully this thing would perform exceptionally well. Um, in terms of future work, um, probably the number one thing I'd like

to revisit if given the opportunity, um, is retraining some of the poorly performing models. So, I mentioned this a little earlier on. Um, things like, uh, GMM and the neural network models actually did not perform nearly as well uh, as what I had seen in some of the other research in this domain. Um, I have a few theories for why that is. I mentioned uh, using different hyperparameters um, is is absolutely part of this. That's something that I need to take a closer look at to determine what the feasibility is of actually using these at scale because other studies have shown there's actually a lot of promise using uh CNN and RNN's in particular for um

identifying potentially malicious network traffic. And then the part that probably excites me the most um is the feasibility of actually productizing this. And what I mean by that is actually seeing if this works well outside of a lab, right? Um everything that I did here was Python based. So, um, in terms of the ability to run it on different platforms, fairly easy to to set up, to scale, um, and not a big, uh, footprint required in order to actually be able to run this, uh, in a production setting. So, to that end, don't want to jump too far ahead here, but if you all get to see me next year and I'm giving a talk at Bides, hopefully it's about the

fact that this week I've actually been testing this thing out um, to actually observe uh, traffic to see if we have the ability to classify things. You're probably not going to be able to see anything really good on that photo. Um, I just grabbed that in the room to be able to show. We've just got a little Raspberry Pi with an external uh, Wi-Fi adapter set up there and basically grabbing anything by channel hopping and seeing if there's open Wi-Fi network so we can actually observe the traffic. So hopefully more to come on that um, you know, in a future talk. Uh, with that um, just want to leave you with a couple of things. Um, the feature extraction

framework that I built as part of this research um, is available um, under a GPL license. It's on my GitHub. you can grab that either through the URL or if you want to scan that QR code. Um, and then probably the most important thing to mention is that none of us gets anywhere on our own. I did the research on my own, but I had a lot of help. Um, I want to thank my adviser. um a lot of my friends and colleagues that had the opportunity to uh you know assist me with this and and the last person named there is my wife and I can't tell you the number of times that she read reread

reread again and then offered feedback on both the paper and these slides uh before I got here. So I want to make sure that I take the opportunity to to thank those folks as well. As I mentioned that's totally not a credential harvesting attack. So uh feel free to scan that QR code. I'd love to connect with you. Um, have the opportunity to give you to to read some of my research uh and maybe collaborate on stuff in the future. So, with that, love to take any questions. Thanks so much. [applause]

>> Hi. I was just wondering does that change over time? So, is this actively monitoring traffic? Does this like real time start changing those weights depending on, hey, it started out looking benign as I start receiving more, understanding more, it's starting to look more malicious. >> It's a great question. So I I think what you're referring to, I don't want to put words in your mouth, is a retraining mechanism for those classifiers as it observes more traffic. Does it get better over time? Is that the question? Yeah. Um, in an ideal scenario where we would actually deploy something like this in in production, yes, that would be the goal. Um, as it stands, the the

classifiers that I used for the experiment were were static. So, the only thing they know is the data that they've been trained on. If I was actually able to turn this into something that we could deploy um, you know, in real life outside of the little Raspberry Pi that I've got set up in the hotel room, um, yes, that's absolutely one of the things I'd want to build uh, into any platform that that would do this because it's only going to get better by seeing more data. >> Right. And then as the like live packet, so a TCP connection, you're intercepting the packets, maning it. Is it like classifying like once it classifies it's done or is it actively monitoring that

connection and then reclassifying based off the parameters? >> Yeah. So without giving too much away because obviously this is something that I cobbled together in the last week just to be able to to see if we could actually uh uh test this with live traffic. That absolutely would be part of the idea right now. Um, the way that it works based on the feature extraction framework is it's looking at a sliding window of 10 seconds. So 10 seconds before, 10 seconds after, so 30 seconds worth of traffic. That's obviously a window that could be changed based on performance uh, and things of that nature. But the cool thing is that works really well on a Raspi 4. So the ability

to do this and get a classification decision in under 30 milliseconds is something that absolutely could be feasible uh, in a production scenario. >> Thank you. >> Yeah, you bet.

Thank you. Great presentation. Uh can you share any uh results or findings? You know, what kind of stuff did it detect? Uh and uh and then a second question would be how do you deal with encrypted traffic? Can it detect things, you know, behaviors and patterns based even though the traffic's encrypted? >> Yeah. No, two great questions. So, um I'll try to to touch on the the first one um as as best as I can. Um again, as I as I mentioned to the uh the other individual that asked a question, all of the testing up to this point has been based off of um static traffic, right? So, we're actually looking at PECAPS, not live traffic. So the experiment that

we did as part of the research was against a corpus of traffic that was known. It was already labeled benign and malicious. Um in testing the idea would be that you know if we turn this uh on live traffic you would have to decide what things you would want to capture outside of the metadata that was being extracted to then make a determination. I can give you a score. I can tell you that it's 57% confident that this is malicious. But the next phase of that of well what is it then? um I I think would be be sort of the next thing in terms of of developing this and actually turning it into a tool that could could be used

by others. Um and I apologize. Repeat the second part of your question for me. >> I was curious how it uh it handled with encrypted traffic. Did it detect any patterns? And then a third question that occurred to me cost uh in terms of the the tokens and the >> Yeah. Yeah. Um, so, so the little experiment that I've been running, you know, this week at at summer camp, um, is only looking at open Wi-Fi networks. So, we're not inspecting, uh, any sort of encrypted traffic. I think in an ideal world for what I had aimed to create as part of this project, this would actually be something that you would plug into a trunk port uh, on, you

know, your back plane as opposed to doing this over Wi-Fi. It's not to say that you couldn't. Um, there's certainly interesting things to observe over Wi-Fi. I can tell you it's really interesting being in a casino full of hackers and observing everything that those who choose to connect to the open Wi-Fi actually put out there. So, just food for thought there. Um, in terms of cost, um, because of the fact that we are using all I guess what I'd put in the category of traditional ML classifiers, uh, the cost associated with this is something that you can run off of a 3060 on your desktop PC. I mean, there's uh nothing in terms of training that would be prohibitively

expensive for an undergraduate college student to be able to do this probably on either their own PC or one that they could grab out of the lab. Um, you know, that being said, um, the rig that I've got at home's a little bit bigger. The classifiers, uh, trained in under 30 minutes in most cases on the training data set. >> Thank you. >> Yeah, you bet. Any other questions? I know we're right at time here. Okay. Well, I'd love to connect with any of you if you have other questions. I'll be hanging around for a little bit. Thank you all so much again for being my first besides audience. I appreciate it. >> [applause]