GT - Repurposing Vulnerability Tickets to Predict Severity Levels

Show transcript [en]

welcome brittany [Applause] hello and welcome to my talk my name is brittany bach and i'm here to share notes on my project which is repurposing vulnerability tickets to predict severity levels and introduction to natural language processing and classification algorithms

thank you so how i got here i started my career mostly on the receiving end of information as a tech writer and then transitioned into infosec as a security analyst working on the compliance side of things afterwards i moved into hands-on role as a security engineer where i found my niche as an operations process lead it's this role that allowed me to work with multiple security workflows and continue to ask the question how can we do it better from my experience doing something better or process improvement starts with a lot of talking typically teams get together and discuss how something is supposed to go afterwards there are some testing to work out issues once enough tests have been run and a

working process is in place then commitment in the form of documentation occurs the documentation signals there is an agreed-upon method of carrying out a process the expectations at this point are set eventually the process is completed enough times that consistency of expected input and output are generated and then the process is automated which simply speeds up the rate of completion it's at this stage a lot of data is collected there's always the possibility of going back with new information found and running through these steps again but eventually you start asking the next question which is what do we do with all this data so in the context of this project the data we're talking about is in the form

of vulnerability tickets vulnerability tickets are created to track the remediation of detective vulnerabilities they typically hold a lot of sensitive information about the impact of product or service including associated teams remediation instructions published descriptions and completed resolutions to name a few they are usually maintained in a repository where they can be tracked and recalled as needed they are prioritized based on severity they can provide descriptive statistics where the gathered information can be quantitatively described or summarized vulnerability tickets are generated in natural language

natural language processing is under the artificial intelligence umbrella since there is not a one-to-one match between natural language and computer language you must convert natural language into a format the computer can understand once this com conversion is completed then your data set can be run through various machine learning algorithms that can detect patterns in your data natural language processing is a two-fold process first there's the data preprocessing and then second there's the algorithm development both of which we will explore shortly

so check point one before we go any further we'll just take a moment to pause and reflect on why all this might be important to you as a recap there's a lot of tribal knowledge and accepted analysis within vulnerability tickets by repurposing the information process improvement can continue following automation this process can then lessen human dependency and increase accuracy you're also creating a nice transition from descriptive analytics to predictive analytics predicting severity levels is just a start any information that is consistently tracked within the vulnerability tickets is fair game to be analyzed

project methodology there are three main steps to this project first data pre-processing which is the collection and preparation of data to run through machine learning algorithms the end of this step will result in a final data set for step two second there is algorithm development we're going to cover five common classification algorithms and how they help to detect patterns in the pre-process data set the end of this step will result in observing the accuracy percentage of each algorithm and selecting the best one for the prediction task finally we combine the data set and selected algorithm to complete the to complete the prediction task the end of this step will result in a right or wrong prediction from the test

input

okay step one data pre-processing the first thing you need is data so normally you would already have this internally within whatever ticket repository your company uses in this case because we're using public data the exercise of web scraping came in handy to gather the needed information as an alternative you can try finding a data set in a community repository like kaggle but for me i wanted the experience of building and cleaning my own data set so i want the python route the other steps of pre-processing include removing dupes replacing missing values with nan removing irrelevant words and using a stop word list to help with the removal and adding a custom header and just as a side note a stop board

list is basically a list of non-essential or no impact words like pronouns articles prepositions and conjunctions examples of these would be like a and the your are and there so it's basically words that offer no value for the prediction task the final step was applying count vectorization to the natural language data set to make it algorithm ready this is basically running a script on the data set to convert the text to numerical form in this case we are counting the frequency of pre-selected words mentioned in each cve vulnerability description all of the above changes were completed using python

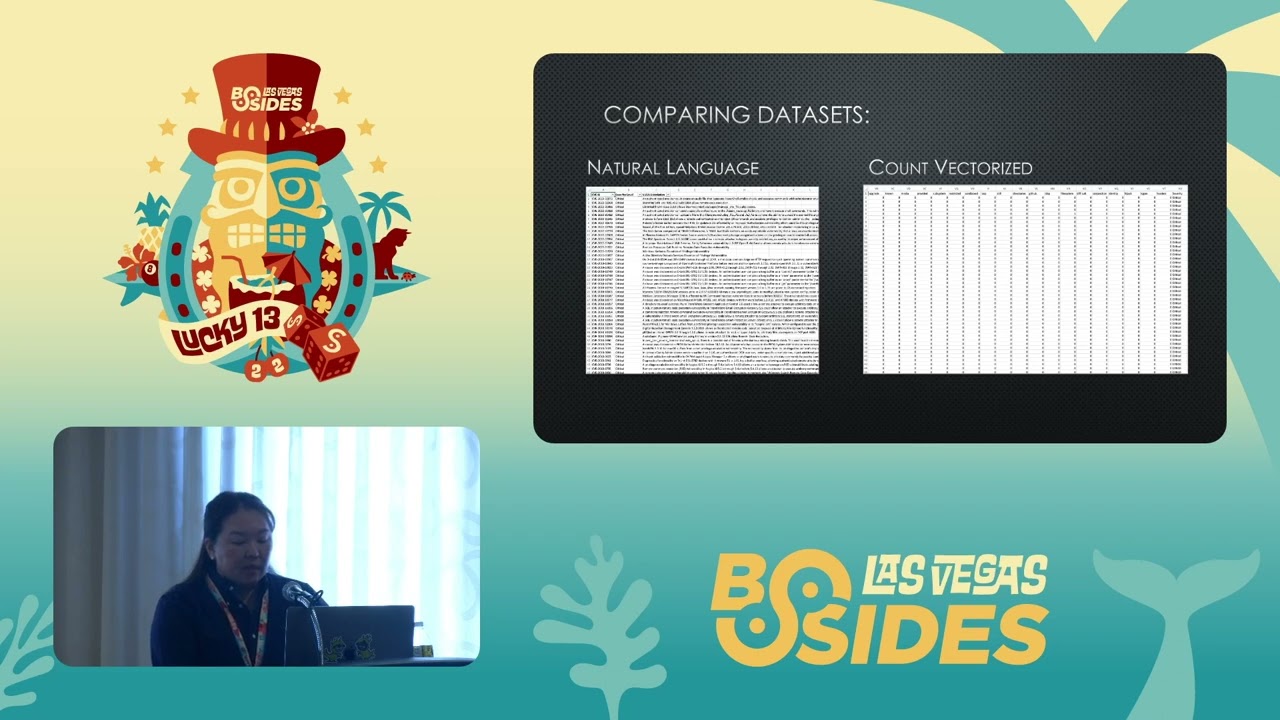

so here's a quick side-by-side comparison of the natural language data set next to the count vectorized data set this is considered the completion of step one data pre-processing of natural language processing as mentioned the data set on the left includes the cve id severity level and most importantly the vulnerability description in natural language upon count vectorization the frequented words become the header and the number of times they are mentioned in each vulnerability description are catalogued underneath the severity level is still maintained because it is what the algorithms will use for the classification the data set is now ready to be added to the classification scripts

okay step two is algorithm development so as mentioned before the second step in natural language processing is algorithm development the classification task is figuring out whether the frequency of certain words across multiple vulnerability descriptions are more likely to appear in one severity level over another therefore allowing us to predict severity level for a discovered vulnerability for this project i opted to focus on five common classification algorithms these are going to be helpers in detecting patterns in the data set for example perhaps there is a certain word or cluster of words that are more frequented and say a vulnerability description specifically a critical vulnerability description detecting this pattern would allow us to predict critical vulnerabilities in the

future now there are a lot more than five classification algorithms but when you begin studying machine learning without a doubt these five will be encountered so for the sake of an introductory level talk we will use logistic regression k nearest neighbors support vector machines decision trees and gaussian naive bayes we are going to review these algorithms and then we will make a selection based on results from the cleanse data set

all right so logistic regression is our first algorithm or model that we're going to be looking into so this is uh considered a probability driven model logistic regression finds patterns and data sets by identifying the probability of an outcome so in this case the severity level and this is based on the features that are present which for us is going to be the list of our most frequented words out of the five this is considered the most straightforward model

the next is k nearest neighbor or knn this one is a distance based model so when new data is introduced to the data set the nearest data points are identified and these are considered the neighbors using the value of k you can adjust the perimeter of the neighborhood and so although this is set to 3 in the actual script you can actually adjust that that value so if you look at the image to the right you can see there are three closest data points selected of these three the one with the shortest distance to the new data point will be selected the new data point will then inherit the nearest neighbors class

okay so the second one is support vector machines or svm so this one is a multi-dimensional model and it's generally really helpful in classifying when you have a really complex data set its goal is to find the maximum margin distance in order to make the classification so in this case it's a two-step process that happens iteratively until the maximum margin is detected so for step one svm generates multiple hyperplanes with which are basically lines that separate the data points into classes in step two the model then picks the most optimal hyperplane which is basically the one with the maximum margin so the maximum margin tells us svm has found the line that makes the clearest distinction

between the two classes the support vectors are simply data points that help the model accomplish classification so in this case that would be the blue circles and the red squares so from personal experience when i was running this this data set for this project through this model this model took the longest to process and so i'm not i'm thinking that that occurred because based on this definition probably finding like the maximum margin was quite difficult so when i ran into skip i think it was almost like two hours in order to complete processing

all right so up next we have decision trees uh this is a flowchart model so an interesting requirement with decision trees is the data set must be labeled and this is known as a supervised learning algorithm so basically like you cannot have a data set that doesn't have labeling of features so in our case we have features we're very distinctive of what we're looking for if you had a data set that did not have that and you were doing more like exploratory data analysis then this might not be the best algorithm for that particular project but in this case it was okay with decision trees so the way it works is it classifies by starting at the top or trunk of a tree

and then it moves its way down with more specific questions to figure out similarities and differences within the data set so in this case you are classifying based on the most finite shared category

all right uh last one gaussian naive bayes so this is another probability model um there are actually three types of naive bayes models but we're going to choose this one because frankly it's the simplest this model is a derivative of the bayes theorem it means the probability of one event is determined based on the probability of a completed event gaussian classifies based on a normal distribution if you look at the image the model is calculating the probability of observing each data point based on the class okay so next we'll talk about algorithm selection

so to figure out which algorithm to select for the prediction task you run the cleanse data set through a script that holds the libraries and classes for the selected algorithms the output provides an accuracy percentage for each of the models so that's the screenshot that's on the right based on the results it's clear that support vector machine returns the highest accuracy percentage on the provided data set however as i mentioned this also takes the longest to process so for the purpose of the demo we're going to go ahead and use the second best which is logistic regression but i will still show you output on how how the support vector machine fare

all right second checkpoint um so as a recap we know natural language processing is a two-step process of data pre-processing and algorithm development we covered each of the classification algorithms that will be used to make an algorithm selection and then next we're going to walk through the prediction expectations and then input test data into a prediction script to observe the results of the prediction task

all right so this project is derivative of two other projects of similar nature during the initial research i wanted to get an idea of expectations and what the definition of success should be if you look at the table to the right there are important data points for both for both of the example projects as well as the third one which is mine as you can see there are both variances in overlap across all three projects the general methodology of data pre-processing feature extraction again that's the number of word frequencies and algorithm development were also completed uh the only difference is the number of vulnerabilities and features collected are much higher in project three in thinking of what i was aiming for

with this project i wanted to gain an accuracy percentage that realistically could get presented for user buy-in i knew if i had anything less than 90 then the likelihood that users would feel confident in trying out this tool would not be very high so fortunately after an intensive journey of developing the size and the quality of the data set the goal was reached with the logistic regression and the svm models respectively

next we're going to talk about the impact of data to classification accuracy the relationship is absolute when i first started this project i was gathering cve data including severity levels and vulnerability descriptions manually i was hoping an even distribution across the four severity levels would result in adequate classification accuracy percentages unfortunately the process was cumbersome and unsuccessful with such a small matrix you can see as the data set iterations progress and the number of vulnerabilities and features increase the classification accuracy percentage increases in tandem for example if we follow the first row of this data set the relatively small number of vulnerabilities and features results in less than optimal accuracy percentages across the four algorithms

the other interesting part about this table is how mistakes in cleaning the data set impact the accuracy results this is evident in rows four 9 and 10. you can see either an errant column or row was left in the data set before running the classification script and that does impact the accuracy results

so here's a visual of the accuracy trend this is evident with the linear trend line which is in hot pink and you can also see the random peak with data set 4 where the column id was left in it should have been removed but it basically created a false positive where i thought i had hit accuracy gold but in reality i just forgot to remove the id column um so this is one lesson from this a key takeaway is that you know if you see your results being really really good even though the goal is to get as close to 1 or 100 as possible um if it doesn't make sense with the rest of the testing

then it's probably a good idea to check your data set and in this case uh you know just not removing a single column that had no value completely skewed the results but aside from that i mean you can see that as the vulnerability numbers through web scraping increased and also as the number of features increased which is the frequency of words as that count increased you know there was just a steady trend up where the accuracy percentage also increased so data matters okay so a couple notes before we get to the demo um i did record the demo i did it this morning because frankly i don't trust my coordination to do this live but we're going to cover preparing the

data set using a randomizer script and then we're going to cover data preprocessing which is going to be cleaning up of the data in both natural language and post vectorization last we're going to run the cleanse vectorized test input into the prediction script and observe our results okay so movie time

this works great all right so the first tab is just showing the classification accuracy this is the percentage output that was in the screenshot and that's just what it looks like once you run the script

all right so this tab um we're going to create our natural language data set by randomly generating a list of cves these are going to be pre-processed for the algorithm development so this is not a requirement for this project but it's just added for for effect just to show that you know if you randomly have a new cve that's published and you know your organization is aware of it like you're obviously not going to have that information right off the bat

all right so yeah that's data i'll put i did select five for the demo you can do a lot more and i have done a lot more but again since time is important i went ahead and randomized five and then you can see the id severity level and vulnerability description that's all in natural language okay so next we're going to open up the generated csv

and yeah

okay um so that's all the natural language data upfront in the csv so the first thing i'm doing there is removing the header we don't want to include that because then that's also going to get vectorized and it's going to skew our results the other thing that i'm doing is i'm adding a placeholder column so the count vectorization code um it will basically stop at that particular like placeholder and then it'll know to like iterate to the next row and so i add just this like arbitrary term and i just added like starter column because that's where i want the iteration to start

okay so the other thing i'm doing is i'm changing the file extension from csv to text

okay next tab [Music] so now we're going to run that text file through the count vector z account vectorizer script um so this group creates this useless first row of all zeros and um you do have to like remove that and so that's just what i'm showing here is that it works but for some reason it creates like a first row of zeros that has to get removed the other thing it's going to create is like the column of ids the identifier so that's another thing that gets removed as a part of the data processing

all right so that's what it looks like when it's vectorized you can see all of the features at the top and it's like 592. and then you can see how the occurrence of each of these features is actually listed and again we're removing the first row so that's all the zeros we're going to move that that's not valuable

okay so as a side note you can actually just do all of this through code but i am showing the manual side of this for transparency this is pretty much the stuff that has to happen before you can run this through your prediction script

okay yeah and i think that is just removing the identifier and then there's just one small formatting that's left before copying and pasting it to the script

okay so that's the vectorization for all five cpes we're going to copy that then we're gonna go to the script and we're gonna paste it in uh and again we're doing the stem on logistic regression which if you can recall it was roughly 90 accuracy so we are looking for pretty good results

okay so now what we're going to do is we're going to run the script and we are going to be able to see um the actual prediction that's made for all five cves and we're going to also see how it compares with the natural language output

[Music]

okay so that is the prediction that's made on all five cves it's red left to right which is going to be if you're looking back at the um the table it's going to be top down so critical i think it's yup medium low critical critical and okay so it looks like there's a one-to-one match on all of those as a side note i have done this with like a lot more test data and it's not always a hundred percent um but it's pretty close i mean even when i ran it with like you know like 12 different test inputs i still got nine out of 12 using logistic regression and that was about the same with svm as well it just

takes so long to process but um but yeah it was pretty spot-on with the accuracy percentage all right

so this is how the other models fared um the only one that came back with a little bit of a hiccup was the gaussian naive base but that was actually pretty expected and still getting four out of five is pretty good because i've run it again with a lot more test data and there have been times when i only got like three right uh out of 12 but if you look at the accuracy percentage it's relatively low so it's not the best model to use for this project but the other models you know they're they're relatively close in accuracy percentage and so you know the match was pretty spot on and that was also the case when i ran

with a lot more test data so that's pretty that distribution is it's pretty consistent

okay so a few key takeaways before we wrap things up first you have to keep processing time in mind uh for when the scripts run i found as my data set grew some of the models like svm took upwards of two hours to complete the second thing is there's a great benefit to this process improvement in comparison to just accepting the published severity level uh the big benefit is that you can repurpose internal decision making and tribal knowledge these are things that are not always going to be integrated when you see like a newly published cve so that's very helpful the third thing is there are two main assumptions this project makes the first is the severity level

the severity levels in the current ticket repository are more or less accurate and then the second assumption is that there's already an existing vulnerability ticketing process and hopefully that process is somewhat automated the fourth takeaway is the data set is is really the most important part of this process i mean everything else like you know the code the um yeah basically the code i mean you can pretty much you know find a lot of that information already on the python website and like numerous amounts of books and you know websites and whatnot but if your data set isn't cleansed properly it's just you're not going to get the results that you want um and the last thing is you know you

don't need to be a mathematician or coding expert to get started i mean i'm obviously neither of those things but you know a little knowledge can go a long way so the whole point of this was really just finding another way to improve process that's it um okay so thank you uh thank you for attending my talk here are a few resources that help me get started you know hopefully these will be helpful but these are really like the main books and the websites that i reviewed in order to learn this stuff

[Applause]

hi we had to we had to kill all of the wireless mics because of interference so if anybody has a question please speak loudly and if i can have the speaker just repeat the question into the mic before answering it that would be great thank you

bye [Music]

taking assets into account um so i think if you're thinking about prediction you can definitely take assets into accounts if those if that is an item that is routinely collected in your vulnerability tickets and so you know once the cv gets published if it's relevant to your you know organization um and you end up finding impacted assets and that information is is consistently collected then you have a pattern and once you have a pattern then you can utilize something like this and what it would really do for you is just if there was something similar that came up again or maybe you knew that yeah i mean basically if there's something similar that comes up again

you can utilize this historical knowledge that's already in your vulnerability tickets in order to predict things like what are the assets might be impacted by this or like who are the owners of these assets or you know where's the root cause so it really comes down to what you're consistently collecting and then that's where these machine learning models can really help you out to make you know whatever prediction you want so severity level is just one type of prediction you can make but the real question is you'd have to look at your tickets and say hey what what are we doing consistently what's the formatting like you know if i put everything in a csv and a table

you know what you know how much of that information is like consistently collected and when you have that then you can you can repurpose that information to make predictions

so if you applied another column of do i have what's applicable to this to this finding but that is

yeah i think it's also yeah that's that's exactly correct and then if there is a new uh vulnerability that's introduced that your organization has not encountered yet um the process of like feature extraction that we discussed which is like the most frequented words that could also give you insight like as long as that you can actually adjust the number of features that you include in your data set but if you wanted to add other things and then you wanted to count if there's like how many times ios is mentioned um in like all of the vulnerability tickets and then that would help you to find like the nearest classification you know that's another adjustment you

can make kind of yeah

is

oh that's a good question yeah when i did testing it was it was very close so most of the ones okay so i did testing multiple times and i didn't get any of the critical or highs incorrect what i did get incorrect were medium and lows and so whenever there was like supposed to be a medium for some reason we would come back as a low or if it was a low vice versa and i should have included screenshots of that and i didn't but it's the variation is not extreme it's not like if you see low then you know it's supposed to be a critical i did not see that in any of the testing

but it's a possibility you mean if you share the terminology yeah

yeah that would be a huge issue

i've got a lot of fantastic yes i did actually yeah i included um everything

i don't think that was included into it i think it's just mainly like you're looking at the most uh common occurrences of words and the assumption that you're making is that there are certain words that are more commonly reported in like a critical vulnerability versus an informational or low but it does not encounter like changes like if there's you know um you know something happens and you're like oh actually we have to like bump this up to a critical it doesn't include that except for the fact that if you have already experienced that multiple times and maybe there's something consistently trapped in the vulnerability tickets then perhaps like those keywords will pop up and that could help you to

consider like you know that pattern

so you know that's an example

it's also this process is bespoke or to your organization so it would also include um you know if something like that ever occurred and so the whole point of this is like you're repurposing information and decision making that applies to your organization versus just like arbitrary like you know generic um severity distribution and so if you have like a history of like you know nfs showing up and then it getting bumped up after a pen test and that is tracked within vulnerability tickets and that could very well be something that comes up and offers you a more accurate prediction but if it's never been there and that terminology is not present then yeah i mean that's where

that deviation would occur so definitely correct so

um and it matches like 90 percent match score for this one if i then apply on this one cycle can identify my knowledge

is a critical but

[Music]

because of those

so my response is probably a bit junior but if i were to discover new information then i would probably restart the um the collection of features the feature extraction and then rebuild um the header rebuild the the number of headers because at that point there might be new terminology that comes up yeah and then i would re-run um the natural language through it again and then vectorize it and then see if there's um improved results so that's probably how we do it it's a long process yeah you would definitely have to uh

i'm guessing logs yeah i would say logs

but just that the machine learning model was able to cut out the work of this other

i'm sorry could you repeat that please i didn't quite understand so my use case is that right now

yeah that's the goal yeah that's definitely the goal because then you i mean you'd have all this historical knowledge and you're running it through this over and over again yeah absolutely there's like that compounding that's occurring

[Music]

right um

is oh um there is tribal knowledge at this point so for this project i had to use public data and that's why it kind of looks like public data but the theory is that you would be using your own vulnerability tickets and those tickets within your orb would have tribal knowledge and as a result the features that are extracted would be completely like like they would be whatever your organization uses so if you have like some special term for critical or you have some special term for something else and that's like consistently tracked then that would become a data feature and then you would count the occurrences of that and hopefully that would align with um you

know the common severity level that's been detected does that kind of make sense yeah

thank you thank you

um yes there's more things i want to add to it though because it's still relatively elementary so i have this whole backlog but for this presentation i had to cut off and just like you know post what i had uh but if i do post because it's data science related i'll probably put it on kaggle um and then it'll just you know all the code and everything will be you know within jupiter notebooks and the data set would be available as well it's a pretty big data set i web script over 150 000 cves and you know the other published works were you know definitely less than that i think like well i don't remember but it

was almost half so it's it's a lot that i was able to work with and i think that is really the reason why the accuracy percentage yeah went up yeah uh yeah thanks for attending though and thanks for all the questions