A Guide for Managing and Mitigating Insider Threats


So, I'm Andrew Spotswood. I lived in Redmond, so I had a short commute here; I lived here for 15 years. I've been in tech for over 20 years and focused on cyber for about 10. I worked with cyber startups, and one of those startups got acquired by Accenture. Hey, Damian. I left Accenture last year and now work with RedApt, helping build their cybersecurity practice. The reason I'm talking about insider threat is that it's been a common topic with people I've been working with lately. We've worked on some programs around it, and I've realized that the definitions around insider threat are vast. They're very broad. A lot of

people think about insider threat the same way they think about Edward Snowden and the leaks: he used his access to send that out. But I've learned it's much broader than that. So ultimately, today I'm just sharing with you what I've learned, what our team has learned, and the use cases that we've run into with some of the people and groups I've worked with. Hopefully it's helpful. Hopefully it's something that you can apply to your organization. I'm going to go through a couple of recent examples, because I think

that this is a pretty abstract concept. There are behavioral problems and tech problems that lead to an insider threat issue, but looking at it through the lens of specific use cases helps with grounding what this is and how to define it for your organization. I also want to mention that I want this to be interactive, so I'm going to ask the audience. I want to go ahead and seed it in your mind: think of a nice, juicy insider threat story. I'd love to hear one or two of those in addition to the ones that I'm going to share. But let me

start with the definition. God, that's way over there, isn't it? Let me start with the definition. Really what it boils down to is individuals who have access, and ultimately those individuals impact the company's assets. They're affecting the core assets of the organization. When you look at the definition that way, that's how you look at how to protect your organization. Access can be physical: this could be access to doors, access to buildings. Access could be credentials, your MFA, individuals that log in and

get access. Assets can be information; they could be data. They can be processes that are specific to your organization. Assets can be systems that the company has invested in, or facilities. And then insiders can be what we think of as full-time employees. But in a lot of the stories, and I even heard one from Maya with Okta, a lot of the threats come from third-party groups that get temporary access to systems, and ultimately they become insider threats. No, I'm good. Thank you. But insiders can also be board members. So it's just anyone that has access. That's ultimately what we want to think about with definitions. And one

thing I want to challenge each of you to do today, to make good use of the time, is this: don't just think about your organization while we're going through these frameworks and ideas in the slides. Think about some of the dependent groups that you work with, the third parties that have access to your systems. Give thought to their business case. How could they suffer or be impacted by an insider threat in their organization that ultimately could impact your organization? And I want to make a special point that the definition can really evolve based on the industry that you're in, right? This can change

what you deem to be your critical assets, who has access, and what your facilities or your environment include. You have to think a little bit differently about that based on your industry. If you're in healthcare, you care more about patient data, and if there's an insider threat, you care a lot about the reputational impact. If you're in manufacturing, you care a lot about physical access, you care about the supply chain, and you care about the intellectual property of how you build what you build. So think about that with your business, but also with the businesses you depend on as well. All right, I'm going to go through a

couple of real-world examples, recent examples. And then again I'm going to ask you guys to pipe in, interact, and give an example. Just a couple of months ago, at a law firm in the UK, a former employee took some very sensitive information and email-blasted it to over 900 internal employees. This included salaries, bonus information, and performance reviews. There was also an acquisition in the works. I don't know if this derailed the acquisition, but this was very sensitive information. They went back, and it looked like the

email came from the HR director. So, like, here you go, everybody; now you have all this sensitive information. They found out it was actually that former employee, who was able to get access to three different restricted databases. So there was a lot of reputational impact. Ultimately this affects their day-to-day: do people trust them, because this is global news at this point? So there are a lot of questions like that. Next use case: many of you might have been following this story. A former Google engineer started working there in 2019. He's a software engineer, and ultimately he transferred to a personal device hundreds of

documents related to proprietary information about how Google is building proprietary hardware, and the configuration around the AI. Come to find out that he was working with two organizations in China as a leader to build out the same technology. This is being investigated by the Department of Justice. And ultimately Google didn't find out about this until he announced and was acting as CEO for this other organization. They had no idea he had shipped all this information out until they went back and looked and found that he had uploaded this to a personal device, and were then

able to track it. So this is high pressure at this point. There's the Department of Justice, and the battle between the West and China over building out AI capabilities is at stake based on this one insider threat. So I want to get some water because my mouth is getting dry. I want to ask anybody to raise your hand if something happened at your organization, an insider threat or a story that you know about, that you could tell the audience. Does anyone have one? Ali, thank you. We're all friends here. So I started at the organization about four years ago. One of the things that we've identified is that as

employees were getting ready to leave, they were doing exactly this: copying files that they worked on, files that they wanted to take to another firm. Ultimately we used Microsoft Defender exfiltration alerts to give us not only real-time visibility but the ability to shut it down in real time. Over the last two years or so, we've gotten to the point where we get alerting within about 60 seconds of a USB flash drive being plugged in. Because of the business that we're in, we have to allow this to continue to happen as we work with our external clients, so we can't shut down USB file transfers. But Defender has actually been able

to get us to the point where we can terminate on the spot. We can use Defender to isolate, shut down, and wipe the computer before a file copy is completed, and we can essentially get that data before something leaves. It also works for exporting to Google Drive or to a personal OneDrive. We can see all those alerts and reports there. So there are tools out there. This is one that we've been fighting against every day, and it's a very real problem. That's great. And just so I make sure I heard that: this started because there was an incident with people stealing. You're in civil engineering, right? So these are plans that were

shipped off and stolen. MicroStation and AutoCAD plans, taken to another engineering firm to try to steal the project. Wow. To another. Got it. Great. I saw another hand. Anybody else have a use case? Nate? Yeah. We just recently discovered that a third-party contractor had one of their support persons copy a GitHub personal access token, their own, and we were able to learn about it fairly quickly and reset the token. But that person no longer... So how did he get it? He just had access temporarily? He had access to GitHub and created his own personal access token, which was fine, but then copied it to a private repo. Oh, I see. And it

didn't... goodness. Okay. Thank you, Nate. Yes. This happened five years ago, but I joined a startup and we noticed our cloud bill kept going up, and we found out that somebody was mining in one of the AWS accounts. It was a former employee who had been fired, but they had the SSH key, so they logged in and just started mining Bitcoin. It was a former employee? It was a contractor. It was somebody international, in Eastern Europe. I see. They were contracted out, no longer worked there, still had AWS credentials, and started mining Bitcoin, or some sort of cryptocurrency. So for those that couldn't hear, he's

talking about a contractor that worked for the organization, had access to AWS, and ultimately installed a crypto miner. All they noticed was that their AWS bill was increasing dramatically, so what's the root cause of that? And I guess they ultimately found the issue. So these are great. As I go through these slides and go further into the definition, let's revisit some of these stories, because we're looking through different vectors at what insider threat is. As we talked about, it's an individual who has access that's affecting the company's assets, and that can be tracked either

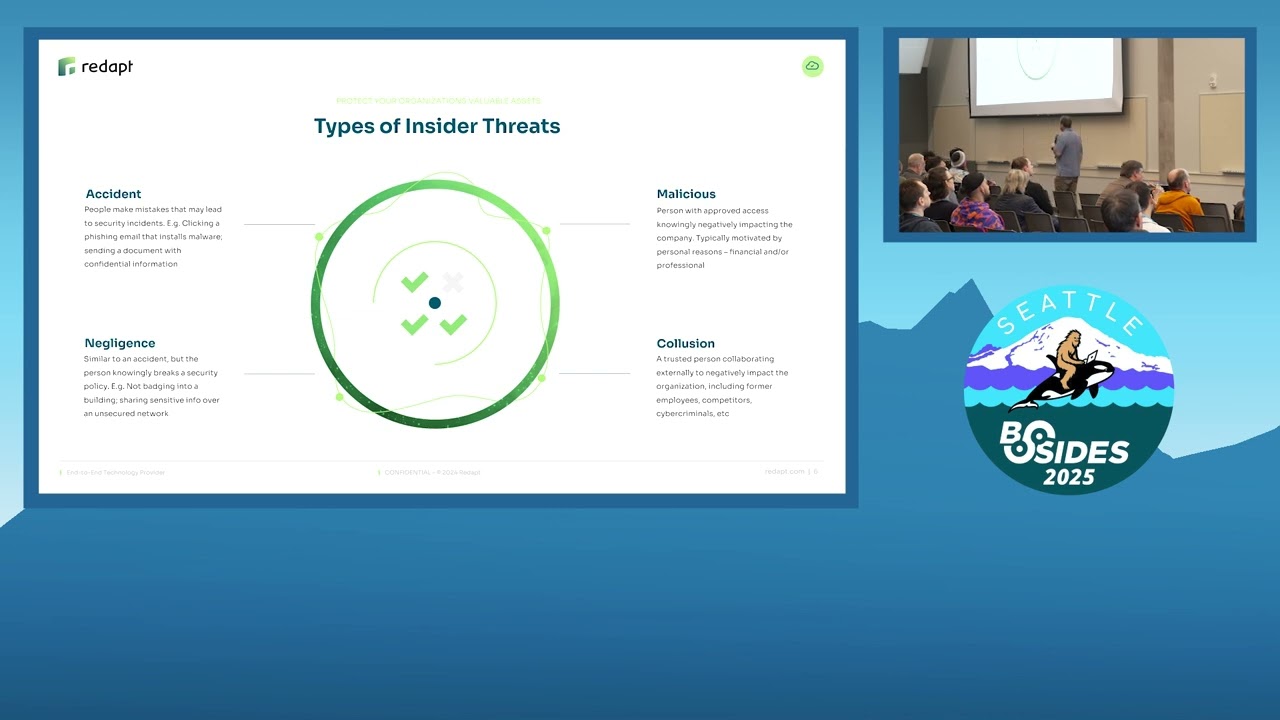

digitally or through behaviors. You can pick up on behaviors as someone is doing something wrong, or you can pick up on it digitally, through logs or a SIEM, and use technology and tools for that. So let's first go through the types of insider threats that we typically see. The first one: accidents are insider threats. Oops, sorry, I just emailed all that confidential information to the wrong person. That happens all the time, right? That is a threat. Right next to that is negligence. I kind of knew I wasn't supposed to email that, but I did it anyway. And it's not

going to matter anyway. But it's blatant: I knew I shouldn't do that, but I did it anyway, and crossed a line, crossed a policy, that sort of thing. Now, on the other side, we're getting into malicious. Malicious is where they have the access that they need, they know that they want to impact the business negatively, and they're ultimately able to do it because they have the access and the ability. And then the last one that we see is collusion. A trusted person has access, but they're colluding with someone, typically external, to use their privileged access and

connection to impact the company. So let's think about those examples from earlier. The Google example: which one was the Google example? Collusion. Collusion, because he was working externally, with essentially a nation-state, to create the business. What about the law firm with the former employee? Which one was that? Malicious. Right. How about the employee Ali described, who was leaving the company but stole the plans? Which one do you think that was? Malicious. So a lot of the ones that hit that level, that end up noteworthy, story-worthy, the kind you tell over drinks, a lot of them end up

being malicious and collusion. Our job is to try to prevent all of these, right? Or to minimize how often this happens. Now, let's move on to indicators that we typically see. I break indicators up into these three categories. First, behavioral: the only way you're going to see the behavioral indicators is by witnessing them, as a peer or a manager. The people that are insider threats, that are acting funny, are not going to self-report. So how are we going to find out about this? This is also kind of a gray area. It's difficult with the organizations I've worked with because we ultimately have to work with

HR. We have to work with legal, because there's only so much that we can accuse them of. We have to build evidence around, you know, "it looks like you are acting or using your access inappropriately." Then digital: I'm not going to go through the whole list here, but there are ways of tracking that, and I'm going to go more into some frameworks that can help us track it. And then social: we're looking at how they are communicating, especially how they are communicating externally. We may revisit this in a little bit when we come

back. But I also wanted to show this framework for ways of building out a program, for you in your organization or as you're thinking about the companies that you depend on, the third-party dependencies that you have. A lot of times when we talk about insider threat, we're talking about technology: data loss prevention, CrowdStrike Falcon, Code42, things like that. But this is what it looks like if we zoom out into a program for how to implement that and track from a behavioral and a technical standpoint. We need training programs. We need policies in place. We need to assess; we need to understand our technology

controls and the limitations that we may have in our tech stack. The hardest part of this framework is the second one, detection. That's the one, Ali: you're talking about getting an alert within 60 seconds. That could be noisy, right? That could be a lot of alerts. Are there false positives in there? You have to go track that down. You've got to adjust the knobs and decide what the right amount of alerts is, versus the ones where, you know, I don't need to see this; this is wasting our time. And then the rest of these, response,

recovery, and then awareness, are really about what we do once the event has happened. How fast can we respond to it? What evidence do we need so that we can prove that this is real? And then what can we learn from the recovery? How much did it cost us? How much did it affect our reputation? We use all this information to continue to build the program, because all of this is more of an art. It's not a science. We need to shape it based on our user base, our tech stack, our controls, and the

evolution of the business and how we use the technology. So I want to show this example. This is kind of an anonymized view of the digital journey for tracking insider threat. I'll walk you through it briefly, and I want to revisit some of the use cases as well. A normal user needs to be able to log in all the time; we don't need to stop the business from operating. But a normal user and an administrator are just going to have different access rights. And so some of the secret sauce in the tech stack is right there in the

authentication. We need to make sure MFA is set up. We need to make sure the controls and the policies are in place so that we can track the actions that ultimately end up in a SIEM and in logs. It gets to the point where we're tracking normal behavior, we're tracking suspicious behavior, and then we're ultimately getting to malicious behavior, where we can block it. And we want to build that into automation over time. It takes time to, like I said, adjust the dials for your organization based on the controls that you want. And we really need to go back to the very beginning, to the

definition of insider threat, when we look at a schematic like this, and define what we care about. What are we trying to protect? What are the critical assets in our organization? Are they CAD files? Are they intellectual property? Is it access to a building? So put some definitions around that. That's the baseline, and that's what you start to tweak in your systems. That's what you get alerts on. That's ultimately how you're going to get alerts that are effective for your business. Oh, let me go back. Sorry. So with the law firm example, where did

things fail with the law firm? How was he able to get all those files? What's the place that you would pinpoint? Administrative access, for sure: he was able to get access to three different restricted files. Where else? Sorry? With the personal devices, I mean, because he shouldn't have been able to get on or use email to impersonate someone. Yeah, I think the ability to impersonate the HR director is hard to fix, but that is something for lessons learned and review to go back to. So, very good. Sorry, go ahead. I can't remember if the first person mentioned it or not, but I was going to say, like,

it was a former employee, so really the root of the problem is the authentication tokens: they weren't revoked. If access had been properly revoked, he wouldn't have been able to access the three systems and wouldn't have been able to impersonate anyone. That would have been prevented if they had properly offboarded this individual. Exactly. Excellent point. That's true for the examples from the audience as well, since these are contractors or former employees. It's about putting in rigor with onboarding and offboarding. It's a policy change, a process change, that just needs to be enforced, and we could have avoided those incidents. How about with

the Google example? Where was the issue there? It seems like exfiltration was not monitored, right? They weren't tracking that these very sensitive files that were proprietary to Google were transferred to a personal device. They didn't even know about it. It's still crazy to me that they didn't even know about it until they found out about the press release in China. So it's about putting in controls so that certain files with restricted access cannot be moved outside or put on a personal device, things like that. There are policies and controls that could have been put in place. Okay. Very good. Okay. Next one. This one is

tricky: behavioral. This is just an example that we've worked with. There's a large global BPO where they do things like help desk, and we worked with them to look at indicators of when a person might do something wrong, essentially. We're looking at them as a person. How are they acting, or is their behavior changing? Is this just the way they are? Is this the way their face looks? Do they always look suspicious? We can't accuse them all the time. We need to have some evidence in place. We need to follow and track through to the point where this is an attack and

they are exfiltrating data, they are impacting the critical assets of our organization. We need evidence to show that they're disgruntled, or that they're a contractor who should only have certain rights, or that they have some ulterior motive for why they would act that way. Let's go back real quick to this. So we're looking at disgruntlement or policy violations, signs of financial problems, seeking unnecessary access privileges. There are indicators that we can track, and you can teach them in your organization's training program: these are the typical cases of when an

individual is likely to affect the company. This is what you should be looking for. HR should know this, managers should know this, and even the peers, the individual contributors, should know this, so they can understand what to be looking for, how people are communicating, and how that might have changed. There are preventative measures that I've put here on the side that we've learned. I know everyone in the audience here is smart, so none of this is groundbreaking. It's just thinking deeply about the problem, and this is what's popped out for us: access control and least privilege;

spend more time vetting the employee. Is there a reason we're hiring someone that we later find out is from North Korea? There are examples like that that have happened over the past several years. Security awareness training: add this part in as you're doing your training and getting your staff up to speed. And then just protecting your data. Take the example with Google: what if they had had better controls around their data integrity? So, at a high level, these are some things that we've learned that I believe are helpful for building a program like this. Like I said before, start with what are your

top threats, and involve the right people. Involve leadership to define what we care about. What are our critical assets? Do we all agree that this will affect the company, the reputation of the company, the processes? Are those defined well? Then, based on that, create a plan with stakeholders to execute it. So meet with your key stakeholders, review the threats, and ultimately put a plan together, including the behavioral side. A lot of times, the reason we've had some conversations recently is that there's just been a focus on the digital side, and so we need to expand that to the behavioral side as well for

the program. Assess tech capabilities for monitoring. There's a lot of cool, cutting-edge technology out now that's able to look at the end user and feed into your logs and your SIEM to ultimately bubble up when users are doing things that they shouldn't. So there's a lot of new tech to review there. And then there's putting in place training and policy creation. That's a big step that is often skipped or minimized. What I've seen is that there are organizations that have just one person for whom insider threat is one of the hats they wear, and it's for

a very large organization, and typically it's a junior person or so. There needs to be more effort put into this a lot of times, but resources are limited. So apply policies that are available online. We have some that I'd be happy to share with you if you're working on this. Set that up so that there's accountability to make sure that people stay within the guardrails. Next, tabletop testing and the cyber fusion center. Pick those top threats, run them through your system, see where the gaps are, see what fails. Bring in other

technology and other use cases to see what happens. That might take a day or two to just run it all through. There are cyber fusion centers that can use the same technology that you have to run it through your system. And then the last is continuous improvement. Again, this is an art, not a science. This is something we need to shape to the changes in the organization and the technology that you have, and you need to train your people to continually do that. So, I am done. That was all the slides that I have. Are there any questions or anything else that we want to cover? Otherwise, I'll let you go. Yes.
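
The offboarding lesson that came up in the law firm, GitHub token, and AWS stories can be made concrete with a small audit. The sketch below is illustrative only and was not shown in the talk. It assumes you can export an HR roster and a credential inventory; the names `Person`, `Credential`, and `find_stale_credentials` are hypothetical, and a real program would pull this data from your identity provider and cloud accounts.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Person:
    """One row from a hypothetical HR roster export."""
    user_id: str
    end_date: Optional[date]  # None means still employed or contracted


@dataclass(frozen=True)
class Credential:
    """One row from a hypothetical inventory of SSH keys, tokens, or badges."""
    credential_id: str
    owner_id: str
    kind: str


def find_stale_credentials(
    roster: List[Person], credentials: List[Credential], today: date
) -> List[Credential]:
    """Return credentials whose owner has left or is missing from the roster."""
    by_id = {person.user_id: person for person in roster}
    stale = []
    for cred in credentials:
        owner = by_id.get(cred.owner_id)
        # An unknown owner is treated as stale: nobody is accountable for it.
        if owner is None:
            stale.append(cred)
        elif owner.end_date is not None and owner.end_date <= today:
            stale.append(cred)
    return stale


if __name__ == "__main__":
    roster = [Person("alice", None), Person("bob", date(2024, 1, 31))]
    inventory = [
        Credential("ssh-1", "alice", "ssh_key"),
        Credential("ssh-2", "bob", "ssh_key"),
        Credential("tok-3", "carol", "api_token"),
    ]
    for cred in find_stale_credentials(roster, inventory, date(2024, 2, 1)):
        print(f"revoke {cred.kind} {cred.credential_id} (owner {cred.owner_id})")
```

Run on a schedule, a check like this turns "enforce offboarding" from a policy statement into a list of specific credentials to revoke. Treating unknown owners as stale is a design choice made here for the sketch; it errs on the side of flagging too much.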

This may be a tangential use case, but say a recently departed employee was discovered to have been ordering certain illegal substances and acquiring certain services on company resources. Yeah. So no company data was involved, but they were clearly being risky on company devices. And your question is, is that an insider threat, something that we can monitor for with this framework? Yeah. I think that this is an employee, someone that has access. They have the ability to use company resources and assets. So put that in the model: how do we prevent something like this from happening? From a behavior standpoint, what can we learn

and, from a technology standpoint, what did they have access to that we could track and ultimately put controls in place for? Very much so, that sounds like insider threat. So yes. Nate? I'd say that's when you get HR involved, and those powers that be. I mean, it is an incident-response type of action. But a lot of the decisions then get taken out of your hands, which makes it safe, you know, because then you're not the one making the decision. Totally. Yes, Scott. So in your experience across all the different projects that you've been managing, from a product point of view, if you wanted to detect, for example, all the exfiltration or the copies to personal

devices, Microsoft has been mentioned; what are some other top products or services that you've seen be effective? Yeah. So the question is around the technology to alert from a digital standpoint. Some of the cool tech I've seen: CrowdStrike has some interesting DLP tools that are relatively new. I don't know how well they work; sometimes there's feedback about how well that works. There are more one-off products like Code42 that do that as well from the end-user standpoint, and report behavior back, and you can train it. There's a handful. It really depends on your tech

stack and your existing platforms in place, but there's not a ton out there, and they really need to be vetted for your organization. But great question. Yes? You talked just at the end about how much organizations focus on this. Is there a rule of thumb for how much of the staff or focus should be on insider threat versus your typical threats? You know, this is one of many responsibilities of most cybersecurity teams or IT teams, so we only have so many hours in the day, that type of thing. And we're a third party that

supports that. People bring us in to help set up the policies and set up the program, and then the company runs it. So rule-of-thumb-wise, the goal is those last steps: have a program in place, educate your team and your organization about the definitions around this, what the indicators are, and how to spot them, and then have some technology in place. I think how deep the implementation goes is really a question of how much time you have and how much budget you have for it. But as a rule of thumb,

it's going to vary with every organization. So it's a good question. Anybody else? Yes, sir.

Yeah. A lot of times, the approach that I recommend people take with a small team, or if you're bringing in a third party, is to always start with a baseline assessment. What are our gaps? What do we not know about the impact of insider threat? How do we categorize, at the very top, the impact that would happen if someone took our intellectual property or emailed out our AI configuration? Do we have things in place for that? So assess. Third parties are helpful because they're a fresh set of eyes to do that. But then go quickly to the tabletop and

test it out. What's the scenario that's going to happen if I take these sensitive documents that are restricted and put them on my Gmail account? Can I do that? Go through different scenarios of how I can get that data outside, externally, where a competitor could get it, or whatever those worst-case scenarios are. So I recommend the assessment, I recommend the tabletop, and then decide what investment we want to put in, with our time or budget, to address this. So yeah. Ali? At the beginning you talked about hardware. I think that's something that, as we become more of an online, cloud

world, a lot of people still forget about. Something we're going through right now is 802.1X on physical switches in the offices. Yes. Someone can come in and plug into the LAN. If you don't have 802.1X installed or set up properly, they can have pretty quick access to brute-force your local network, right? Yes. Wi-Fi is another one. And data centers: a lot of people are still in shared data centers, and we are; we have two locations. We had an incident where the data center folks left the rack, not unlocked, but they didn't latch it, and the door propped open. Luckily we have net box that alerted us the magnet wasn't closed. We were able to call

them. So physical is something that people still to this day gloss over, and I think it's still a very important part of insider threat because it's touchable everywhere we are. It's a great point, because again, a lot of times in our heads we define insider threat as someone who emailed themselves some sales data and took it to a competitor. That's how a lot of us think about it, or Edward Snowden. But facilities access, the physical access to get online and brute-force your way in, that's a vector to really heavily consider, for sure. Yes? I find that something people forget about, or are not so good about, is that they keep their

laptops in the car, and they have a lot of sensitive stuff on them, and it's usually not encrypted, and people don't even think about it. I started at a company a couple of years ago, and I was out with somebody in marketing who was going to leave the laptop in the car, and I just gave them a look. Okay. But again, people just do that, and it's a simple thing: put it in the trunk, or leave it at the office, or leave it at home. That's true. And that's policy creation. Like, this is the policy that we're asking you to follow because you have access to sensitive information. This is our policy on using the devices that we give you, and that

enforcement policy, what happens. Right. Right. Great points. Thank you. Yes, sir? With teams becoming much smarter and putting in more safeguards, are you seeing that these bad actors are becoming more sophisticated, or are they still going after the low-hanging fruit? I'm seeing both. I think that's a great question. The topic of insider threat has come up a lot more just with the people I've interacted with, and I think it's because of both. We hear stories about the Google engineer, and that's not that sophisticated, but it's very damaging. And then you have the one with the law firm that is somewhat sophisticated: this is a former employee who got access to three different databases.

So it's both, I guess. We're seeing that there are a lot of baseline controls that, from an access standpoint, just prevent the physical and the digital access to things. That's just step number one, basically, and a lot of times that's just not done very well, or the process isn't in place, like the earlier point about onboarding and offboarding. So, great question. Yeah, I think also when it comes to insider threats, and this was maybe less prevalent historically, as everyone's rolling out ChatGPT and Gemini and all these AI tools, now all your intellectual property and everything is accessible and being

uploaded to these third parties. Maybe a few years ago it was someone who had the Grammarly plugin, or whatever, on their email, and suddenly all these sensitive emails were getting uploaded. So it's not just the physical devices, with the laptops and disk encryption, but also what data is now being uploaded. And like you said, insider threats are not necessarily malicious; they could be accidental or negligent. Are your employees now uploading your data, and how do you detect that and monitor usage of these tools? It's a great point. Let's take the law firm example, and instead of it

being a disgruntled former employee, say AI just got access to those databases, and people started to query it and then emailed it out. It's a similar thing. I saw a thread from a lawyer at a legal firm asking, I think, a Microsoft representative about ChatGPT or one of their tools. It was like: I have different legal cases on the same system that I'm processing, and they can't share details between these different cases. So if you're digesting this data and I'm asking for legal advice, are you now pulling information from these separate legal cases and informing this other one, where there needs to be that boundary? And so it can get very nuanced,

and again, it's the accidental kind of thing. Do you understand your tech stack and how it's interacting, even within itself? Are you crossing things there? And that's a double-edged sword, because I work a lot with Microsoft and some of the other AI technologies, and because AI is so new, there's a push for Copilot to just get access to everything. They want to keep it open so you see value from using this AI technology. But from our standpoint as practitioners, we have to put those controls in early, and we're actually stunting the growth of AI use while we do that. But that's a necessary

part of it. Technology may be your insider threat, versus your, like, your... Yeah. Just like I got hacked by the projector earlier. Yeah. Okay. Anything else? This is great. Great discussion. I appreciate it. Okay. Well, thanks everyone. Thanks for coming. [Applause]