Trust Chain: How to imagine and realize multi-organization Cloud Environment


BSides DC would like to thank all of our sponsors, and a special thank you to all of our speakers, volunteers, and organizers for making 2018 a success. My name is Omar Farooq and I work for ISE. Today we're going to talk about how to secure multi-organization cloud environments. I'll get into what that means a little bit, and then we'll talk about the strategic and tactical design and security principles to use when securing these environments. Who am I? Like I said, my name is Omar Farooq and I work for ISE as a software and security engineer. We are based out of Baltimore, and generally what we do is

we do a lot of white-box assessments. We are a bunch of engineers who essentially love to take things apart and then build them back together. We do a lot of software work, so I'm also focused on that. Academically I'm an engineer: I have a master's degree in electrical engineering and a bachelor's in computer engineering, and I'm a PhD candidate at UMBC. ISE is a company that came out of research. We are an application security evaluation company. We work in multiple verticals, but one of our major verticals is media and entertainment. We have some

Fortune 10 clients that we do a lot of work for, and essentially our primary focus is appsec and network security. We are currently running an ISE CTF contest in the other room. We have an ISE lab that publishes CVEs for commercial products, and I think in the last month or two we have published over 100 CVEs regarding commercial NASes, routers, and switches. So if you have some time, check out our lab and check out our blog. A lot of it is online, and we publish only after we have got permission from the vendors. So what is this talk going to be about?

One thing I should mention right away: this has nothing to do with blockchain. If you want to talk about blockchain, I just went to a few blockchain conferences, so I can talk about that too. But what we are going to talk about is what multi-organization cloud environments are, why they are different from the current multi-tenant SaaS environments that everybody is instantiating left and right, why they're needed, and where they're being used currently. Then we will talk about what the challenges are. The second part, the actual takeaway from this talk, is the strategic security principles you should

be thinking about now, and the actionable tactical security principles that you can go ahead and implement in your environments today. So if you are DevOps engineers or security engineers or CTOs, or serve any of those functions in your organizations, you can take some of these things and make sure you are running them, and that will help you get secured from day one. I will start a little more basic than usual, from the cloud itself, build it up, and go all the way to what multi-organization is. Essentially the idea is that we are moving away from data centers. You can launch a

multi-million dollar company these days out of just having your URL links in the cloud. It's very powerful. But from a security perspective, the idea that having your own data centers is more secure because of the air gap is worth questioning. Thinking about security from the application point of view, we think, or at least I personally think, that if your reason for having data centers is that you think your data is secure just because it happens to be in an air-gapped network, it doesn't really mean that it's secure. So eventually, not everybody, but most of the use cases, I mean

most industries, can go to the cloud, and they should. This slide is about the elastic part of the cloud. One of the examples on this slide is rendering and other workloads that are very compute-intensive: growing data centers or data farms is quite expensive, so cloud is the way to go. Then the next slide, and I'm building this up, is about how people are launching SaaS applications. A very simple SaaS application would be a single app and a single database shared by multiple different customers. You might be a start-up, or you might be

a large company that is running a specific tool as a SaaS web app, and you just launch it and push it out to multiple customers. Now from the DevOps point of view that is quite easy: you can have one CloudFormation script and push this thing out to your cloud very quickly. From a security perspective, though, you have all kinds of holes, because now you're sharing data and you have multi-tenancy at the app level and at the data level. I do want to stop and say one more thing before we go on: this talk is meant to be cloud vendor agnostic, even though I might use

concepts that are specific to one of the largest vendors. This is not an advocacy talk for a particular cloud. Every cloud vendor that I know of, at least the top three, has similar concepts, so even if I do end up using a specific technology that is offered by one, there is probably a similar technology in the others. So this is not specific to one vendor. The other extreme is that you have multiple instances of the application and multiple instances of the data. That means that you are now segregating data and app, but possibly not

networking. You're still sharing a networking component, because you might be in the same region or in the same virtual private cloud (VPC) framework, but you are guaranteeing that there are going to be multiple applications and multiple data stores. This provides some intrinsic security that you get out of the box, because you're not sharing the same databases or the same object stores and so on. At the end of the day there are still configurations that need to be done to secure it, but that's another model people go to. A third model that people follow is having a multi-tenant application with a

database per tenant, where you have one application instance but you are using multiple storage stores, multiple databases, and so on. In that case you have authorization and authentication principles on top of your application that allow you to segregate access to data, even though the data itself lives in different databases. All of these are very common, routine things that everybody launches, configures, and deploys in their use cases. What we are talking about today is that there is a whole flow of organizations going towards sharing clouds. The idea is that you keep the data in your particular AWS or

Microsoft Azure cloud platform. You have a vendor that has a set of applications that they run in their containerized environments, which might be ephemeral, and they say that as long as you open up a URL to your storage, or give us VPC peering or some kind of sharing with our virtual private networks, over the non-public internet or even the public internet, we can work on your data in place. This is exactly what we are trying to talk about: now you have multiple actors or multiple organizations involved.

One is an organization that offers you the service, then you have a bunch of people who have their data, and then there are partners: people who might be evaluators, security auditors, compliance people, or people who are doing things like logging and monitoring and who also want to get involved. Now, what are the benefits, and why would anybody be interested in pursuing an architecture like this? This is a real-world example, and I'll get to that. What happens is that now you're sharing the cost of a full deployment. As a cloud

infrastructure grows, the cost gets higher and higher, so people are looking for a way to divide costs based on what they own and what they use. If you're a data owner, you don't need to have compute-intensive instances that you have to pay for, and you don't have to bring those services into your cloud. What people are doing is pushing the actual SaaS services into a cloud or platform that's owned by the people who built them. They access your data, work on it, process it, and then send it back to the object storage. Now you own the data and you own all the security principles

around it: you maintain the VPCs, you maintain the key management, and you maintain all the encryption based on your local policies. They work in their cloud under their policies, which might be industry regulated or based on your requirements. Then you have small companies, consulting or independent shops, that don't need a major cloud infrastructure to get into the business. What they can do is have a small cloud infrastructure and share the VPCs to get access on a temporary basis, and go and do an evaluation, or go

in and run some kind of audit or some kind of compliance check. So it makes things easy for all the partners involved, because it means divided and distributed responsibility, and it also means distributed cost. So what does this look like in the real world? We as an organization have evaluated a bunch of these environments. I won't mention our clients, but I can mention some of the reference architectures that we have looked at and what they look like in AWS. In one of the ones that we looked at, and if you're a

designer or developer or firm that is looking to deploy SaaS in a way like this, the way they do it is that they set up what they call a base platform, a cloud OS: a bunch of platform software that's running in their EC2 instances or their compute instances. That's the middle swimlane. Essentially there are SaaS applications running as containers, and there could be multiple verticals of them running independently on top. Below the applications is the platform, which might be shared services that get used across multiple SaaS applications, and then below that is

a federated identity service, so essentially you will have to go and pull tokens or some kind of authentication, either through an end user or an end organization. The SaaS application owners are responsible for launching and maintaining their offerings and their services in the cloud. They're making sure that they're sanitizing data and not holding on to any of the data from the actual end organizations. On the right-hand side are all the organizations that might be your clients or customers. In that case the data sits in their S3 buckets, or sits in

their MongoDB, or whatever the data storage mechanism is. Essentially they give you access through a URL or endpoint, the SaaS provider comes in and accesses the data, and the access to the data itself is limited and controlled by the data owner. For example, if they are encrypting data in S3, where data is stored in a bucket at the object level, then the keys are granted and key access can be revoked. The data actually stays local and does not have to move when access is revoked. It's the same thing for giving access: you can grant

access by sharing keys and sharing IAM roles with an organization across the world, and then they will do some processing on your data. In many of the cases that I talk about it might be a video that you want to get transcoded or watermarked, or it might be a financial transaction that you want to get processed. So the actual user information sits in your data stores, gets processed, gets involved in some kind of financial transaction, and then gets put back into your database. And

then the third side is what I was talking about, partner networks. Partner networks are small companies, or companies that do business on a time-limited basis: they come in, do an audit, look at things, and then go away. So it allows everybody to have an infrastructure that is much more manageable, rather than having one organization own all of that, or having multiple organizations run multiple large networks or infrastructures and incur quite a bit of cost. So where have you seen this, and what is a good example of it? One of the good examples is,

if you think of any kind of productivity software that you might be using today, like any creative software, any editing software for music or video or multimedia, they're essentially running on this kind of platform. What's going on is you get an instance of the app itself, but you control the data in your cloud. So if you're a large organization that has thousand-seat licenses for software that does graphics, or mechanical or graphic artwork, like AutoCAD or whatever, then you essentially get an instance of their application with access to the data. You launch the application in their cloud,

but the data itself lives at the back end in your AWS instances. That would be a good partnership, because you are storing the data locally, or in your cloud, and they just allow you to launch the application. A lot of the time, what we've seen is that those vendors at least say or promise to be ephemeral, in the sense that they run in instances that are supposed to be on a per-client or per-use basis. So when they do come up, they do some level of servicing for

your client, for one organization, and then they get released. So, like we talked about before, it has the concept of multi-app and multi-database tenancy. Essentially it's the same model, but spread over a large number of cloud networks. Where else have we seen it? I particularly have seen examples of this in performance compute, where people are running images or videos or music files or unreleased content and trying to get it processed. For example, if you're a production house making a film or

something, and you need to get it translated, and there's translation software that somebody runs, then you essentially just open up your S3 object store, provide them a key, and provide them access through IAM roles. They go and access that file, run it through their software for whatever they need to do, and then put it right back. Then you can revoke the keys and remove the IAM user, and the data possibly never has left your data stores. I already talked a bunch about why this is really important and why this makes sense, but I think

from a larger storytelling point of view it's important, first, because it divides the cost. Not everybody needs to run huge AWS or Microsoft Azure instances and compute devices, and run everything and get it instantiated in a self-hosted environment. So if you're an organization that likes to run everything self-hosted, you don't need to do that anymore. You can possibly use VPC sharing: get a subnet or a VPC that you instantiate in your cloud environment or AWS environment, share that VPC with a vendor, and let them run it. Then you don't have to incur the costs of

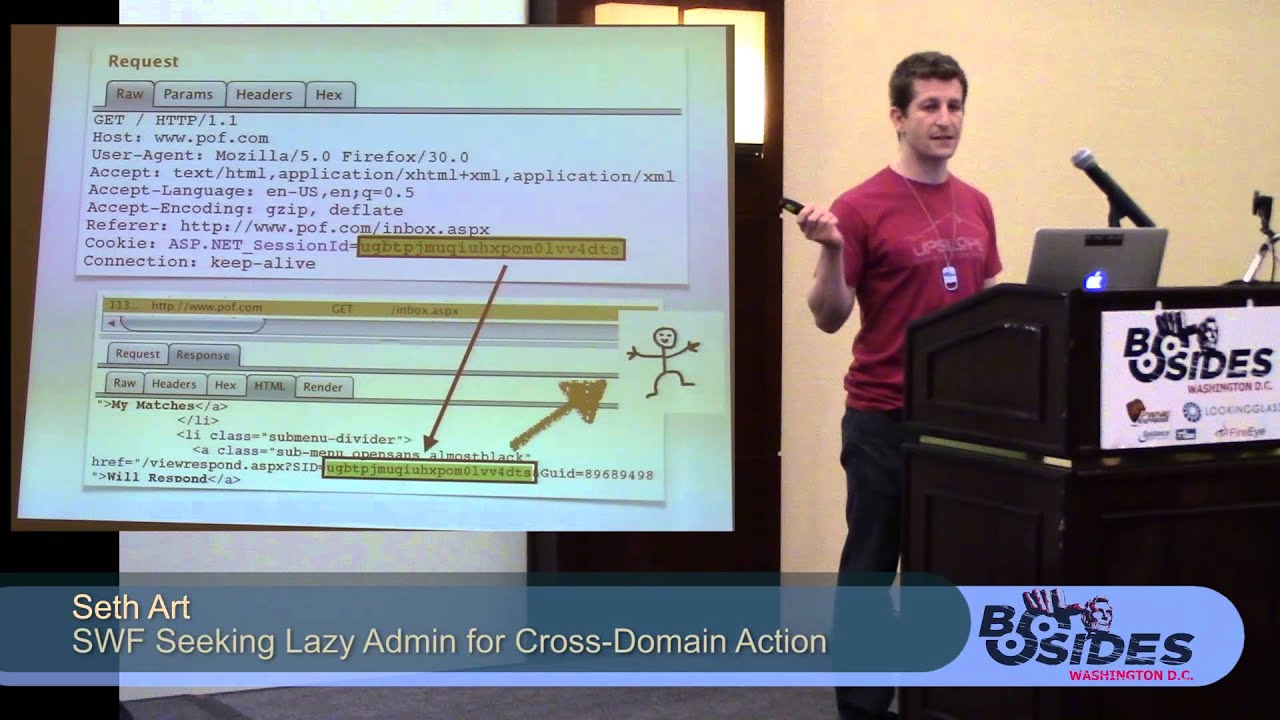

running large EC2 instances. So what are the problems we are trying to solve with this? One: when the SaaS application or organization says "we don't actually keep any of your data, we just process it and sanitize it", how do we verify that? That's one of the issues that comes up. From an application cloud point of view, how do we know there's segregation between the processing of one organization's data and another's? From a data cloud point of view, how do we know that the data itself is encrypted and secure while in transit or in use, either

at the data cloud or in the SaaS cloud? And from a service partner cloud point of view, if an audit company is looking at your data or at your SaaS, how do we know that they're only looking at it passively and with limited access? Because there are multiple people and multiple large organizations involved, how do we know that everybody is playing nice with each other? Before we get further down the road about why this is important

and all that, the threat modeling aspect is very important, because I have had conversations with a lot of folks who say "oh, the government would never do this" or "this will never work". I think it's very important to take things in context and understand that this is not a one-size-fits-all solution. First of all, it depends on what your driving force is. If your driving force is economics, it makes sense. But if you are a government and you don't care about the money, it won't matter. So we definitely want to do a

little threat modeling and understand why we are doing this. If you're a private or commercial entity, you are trying to bring down cost, and you are already running on the public internet, then yes, you need to worry about these things, and you can take advantage of them. So from a threat model point of view, this is in the context of being driven by economic forces rather than, you know, the DoD or anything like that. But even in most government use cases you can put stuff in the cloud. I personally know of customers who are working on spectrum sharing, and for

health services companies, and for agriculture and the FDA, and they are using clouds. So it is very applicable in government too, just not to the full extent. I just want to address that, because people always push back and say "oh, this is not going to work in a SCIF", and, well, it's not applicable there at all. So when we talk about the threat model and trust model, I think it's very important to understand what you are protecting as an organization, as a SaaS provider or as a cloud owner of data, and what your biggest threat is. In this particular instance I want to baseline that and say that most

of the time, most organizations will be protecting their reputation and the data that's proprietary. Availability might be important, but most likely it's either the reputation or the content. We come from, or at least I come from, a background where we do a lot of work for media and entertainment, so a lot of this applies when you have content or assets that are very valuable for a short amount of time but are not really that valuable after a certain amount of time. For example, a film that gets released: after a certain

time it's not really that important, but before that it is extremely important. So I think it's very important to understand what the value of the asset is. In this particular case, when we talk about sharing clouds between organizations, at least in this conversation, we are framing it as not super long term: most of the stuff is highly valuable for at least a short amount of time. The rest of the conversation is going to be about actual takeaways: strategic principles, and actionable tactical things that you can go and write down

and apply to your AWS configurations, build CloudFormation scripts out of, and then deploy. So first we'll talk about what you should be driving towards strategically, and then how you can implement that with tactical things. From a cloud perspective, defense in depth is obviously very important, but what does it mean in this particular scenario? It means that you take everything from IAM, networking, and data security to EC2 or the compute aspect, take all four of them, and layer them. So essentially you use role-based

access authorization and you use key management to protect data. You have hardened AMIs for EC2 instances, and you have software patch management systems for software updates on your EC2 instances, or on your containers if you use ECS. If you're using something like Fargate, for example, then you solve that whole problem: you don't even have to worry about how to manage patching at all, it's already done for you. One of the things that I will talk about later in the

tactical part is a little bit about serverless compute. If you are a SaaS provider and you are going to be working on someone else's data, it might make sense to use Lambda functions, or functions that just run in instances that you don't have to worry about: you don't have to manage the software, the patches, or the firewalls and rules of the actual compute instance. The next principle is least privilege, and the way I like to think of least privilege is like chess. You have chess

pieces that have a higher flexibility in terms of movement, and then you have chess pieces that are greater in number but have less flexibility of movement. I know this slide is a little AWS centric, but it still gets the point across: at the end of the day, if you apply correct privileges through identity management and through key management, you can pretty much lock down any data. The way to do least privilege is to deploy multiple planes, a control plane and a data plane. You should apply a control plane that only allows people to manage the keys, for example, but not use

the keys, and then apply a data plane where people can use the keys to encrypt, re-encrypt, or derive new keys, but are not able to delete keys. So least privilege is implementable by thinking of things in two different planes that are orthogonal to each other. Separation of duties, obviously: this could be done through roles or through people. If you have a large enough infrastructure, then

yes, you will need more people, but generally think from a role perspective: separate out the duties in such a way that they don't overlap but are also verifiable. Secure by default: this is what I was talking about before with serverless compute, or in this particular instance using Fargate or some kind of managed configuration. For example, if you are doing any kind of automated deployment, push things out in a secure way: push things out in the least flexible, most tied-down state, and then write exception rules to open up the specific ports.
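A rough sketch of that "locked down first, exceptions second" idea, in plain Python rather than any vendor's API. The `AllowRule` type, the `is_allowed` helper, and the example addresses are all hypothetical names made up for illustration; the only point is that an empty rule set denies everything and each exception names one source range and one port.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class AllowRule:
    """One explicit exception: a source range allowed to reach one port."""
    source_cidr: str
    dest_port: int
    protocol: str = "tcp"


def is_allowed(rules, source_ip, dest_port, protocol="tcp"):
    """Default deny: traffic passes only if some rule explicitly matches it."""
    src = ip_address(source_ip)
    return any(
        rule.protocol == protocol
        and rule.dest_port == dest_port
        and src in ip_network(rule.source_cidr)
        for rule in rules
    )


# Start fully locked down: with no rules, nothing gets through.
rules = []
locked_down = is_allowed(rules, "203.0.113.10", 443)

# Then open exactly one port for exactly one (made-up) partner range.
rules.append(AllowRule(source_cidr="203.0.113.0/24", dest_port=443))
partner_https = is_allowed(rules, "203.0.113.10", 443)
partner_ssh = is_allowed(rules, "203.0.113.10", 22)
stranger_https = is_allowed(rules, "198.51.100.7", 443)
```

With these inputs only `partner_https` comes back true; the same partner on another port, or a different source on the same port, stays blocked until someone writes a rule for it.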

A good example of this: if you are going to be running multiple VPCs, you are going to have multiple subnets and multiple security groups, and all those security groups, VPCs, and subnets should have a policy that locks down traffic first and then opens up specific ports for specific source and destination pairs as you go along. This should probably have come up first, but: automation. I'm a particularly big fan of using CloudFormation in AWS, or the equivalent in Microsoft Azure.
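As an illustration of scripting this instead of clicking it together by hand, here is a minimal sketch that builds a CloudFormation-style JSON template for one security group with a single inbound exception. The helper name `locked_down_security_group`, the `PartnerAccessGroup` logical name, the VPC ID, and the CIDR range are placeholders invented for this example, and outbound rules are not addressed here; treat it as a starting shape, not a reviewed production template.

```python
import json


def locked_down_security_group(vpc_id, allowed_pairs):
    """Build a security group resource whose only ingress is the listed pairs."""
    return {
        "Type": "AWS::EC2::SecurityGroup",
        "Properties": {
            "GroupDescription": "Default deny; explicit partner exceptions only",
            "VpcId": vpc_id,
            # One ingress entry per (source CIDR, port) pair; an empty list
            # means no inbound traffic is allowed at all.
            "SecurityGroupIngress": [
                {"IpProtocol": "tcp", "FromPort": port, "ToPort": port, "CidrIp": cidr}
                for cidr, port in allowed_pairs
            ],
        },
    }


template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Resources": {
        "PartnerAccessGroup": locked_down_security_group(
            vpc_id="vpc-EXAMPLE",  # placeholder, not a real VPC ID
            allowed_pairs=[("203.0.113.0/24", 443)],
        )
    },
}

# Render once and deploy the same file everywhere, so every account gets
# the identical rule set instead of a hand-edited variation.
rendered = json.dumps(template, indent=2, sort_keys=True)
```

Generating the template from one function means a new partner is a one-line change to `allowed_pairs`, and the change is visible in review before it reaches any account.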

So this is essentially the idea that people make mistakes. You are going to make configuration mistakes, and those mistakes are going to open holes. One of the only ways to limit or minimize the number of mistakes or open configuration issues you are going to have is to use things like CloudFormation scripts that automate deployment. If you are going to have multiple instances and multiple accounts, try to push out infrastructure deployments through scripts rather than by hand: set them up as stacks, create those stacks through scripts, and those should be pushed out

in one shot. Create as many parallel stacks as you possibly can and push those out. That way you keep things in a modular form, where you can have a stack of ECS instances that does a specific service, or an elastic EC2 service that runs as platform software, but each one is a stack and you push it out. Last but not least, and specifically important in this multi-organization cloud, and I'm sorry, the slide shouldn't say automation there, it should say trust with reluctance: what it means is log everything. You should have your own logs at the service level, at the

application level, and at the host, EC2 instance, or machine level. You should be pushing as much as possible towards irrefutable evidence that yes, you are doing what you're supposed to do, so that you can validate that you sanitized a machine, or that you took down a machine after a certain number of hours when it was supposed to go down because you had done a specific job for a specific client. Or, if you run the data cloud, then you should be logging all data usage and all API calls: the number

of people who have access to a piece of data, who has active keys to it, how many times people call that endpoint, and things of that nature. So, to bring it all together into concrete tactical things that you can go implement and switch on today in your AWS account or your Microsoft Azure account: we've been talking at a very high level, so now we can go down to the specifics and talk about those. What we will be going to

do is talk about it in four different sections: networking, IAM and KMS, storage, and compute. I know the slide has the words S3, EFS, and EBS there, but block storage, file storage, or object storage, whatever your vendor calls it, it's the same thing. First and foremost, start with your cloud consoles: start with your API keys, start with who has access to your cloud environments. You have a bunch of developers, integrators, testers, DevOps, and architects, so figure out who has the keys and who has access to your cloud

environment, and then lock those down. Put them behind a bastion server if you're specifically giving access to EC2 instances, monitor and log all API calls that are coming from the vendors that you have given keys to, and continuously audit and assess the cloud resources and components as they get used. One other thing that I think is very important is organization. Eventually you are going to have multiple clients, multiple data sets, or multiple SaaS services running in parallel, so organize them using resource groups. It's so important to be able to take a certain

set of resource groups and just turn it down, because you either have an issue, or you are not using it, or you have misconfigured it. So if you are setting up a cloud environment where, as a data cloud, you are going to be opening your data stores to multiple different SaaS services, or where your SaaS services are going to be talking to multiple disjoint data clouds, it's very important to organize those using resource grouping, or whatever your vendor calls it. Essentially the idea is that if you, in your console, know exactly

how you have laid things out and how you have organized them, it's going to help you in the future to grow them, shrink them, or even just configure them as one set rather than individually. The next thing is identity management, IAM: authentication and authorization. Force everything through roles, and, like I said before, restrict with control and data planes. Use things like 2FA, strict passwords, and session timeouts. Control the number of accounts that people have, and look at the last time somebody used their IAM keys. With most vendors, when you go in,

they will tell you exactly the last time somebody pulled a key or made an API call. So write a script that disables accounts after a certain period of inactivity, or go in and audit all the users that you have in IAM or whatever federated identity system you are using to allow API calls. Look into who is granting tokens and whether tokens are being granted based on how long users have been logged in. Limit the number of tokens somebody can get, or limit their lifetime, and then force users to re-authenticate after whatever you feel is the right choice for your

app, but generally I could say like a day or 30 minutes, depending on what the volume of your API access is. Networking: this is technically the first layer, right? The way all cloud vendors have set it up is limiting traffic based on certain source and destination pairs. So security groups, VPC route tables, and then the third is the VPC flow logs. Even the traffic within the VPCs is very important, so take a look at those and see how they're set up and how often you monitor them. They will tell you who is using your data or who's getting

access to your application. Limit them continuously: go back and take a look at whether you still have a partnership, a relationship with a certain organization, and whether you need to have those rules in there. But generally, like we said before, lock everything down in terms of open routing tables and open security groups, and that should be your first layer of defense. Essentially, if you limit the traffic coming in, you will shrink the attack surface quite a bit. So logging and monitoring is the next big thing. For example, AWS or Microsoft have CloudTrail and CloudFormation. Essentially you can either bring in your own SIEM tool and then

have it off-site, or have it in a different cloud. But having logging at the application, logging at EC2 instances or at the actual compute resource, logging at the VPC level, logging at the KMS level (KMS being key management), logging at, not the block level, but the object storage level: if you bring all the logs together and aggregate them, you can pretty much put together a very comprehensive picture of who is accessing your data, how often they do it, and if they do it in a way that's in accordance with how you granted the access. So if I am the person who owns a bunch of

content that needs to go out, say for VFX rendering, to an outside organization, and they have access to my S3 buckets, I would want to set up logs to get a picture of when they pull content from my buckets and when they put it back, how often they are accessing data, and if they are trying to access data that was revoked, or where the work was completed; after a certain time they shouldn't be doing that. So you could put together quite a comprehensive picture of alarms and faults and unauthorized access through logging and monitoring. Another main concept is storage: object and block and

file, so whether you are using S3 buckets or using EBS or EFS. What I really like to at least say is that if you're running in a multi-tenancy environment, and specifically if you're interfacing with multiple vendors who might be accessing data, then encrypt the data. Not just encrypt the data, but encrypt the data with keys that are specific to certain vendors. So if you have an object store that has multiple objects in there, and there are four disjoint organizations, multiple people, multiple organizations going to come access it, specifically encrypt those objects with specific keys that are

given out to those organizations. So in case they try to access a piece of data in the object store that is not enumerated for them, they still won't be able to decrypt it. Or if you want to revoke the key for a certain organization, you don't have to re-encrypt all the objects in the S3 bucket; you only have to pull down keys for one organization.
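
The per-organization key idea described here can be sketched as simple bookkeeping. This is a minimal illustration under stated assumptions: the `KeyRegistry` class, its method names, and the organization and object names are all hypothetical, and it only tracks which key protects which object. It performs no real encryption and is not any vendor's key management API.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class KeyRegistry:
    """Hypothetical bookkeeping: one key id per organization."""
    keys: dict = field(default_factory=dict)      # organization -> key id
    revoked: set = field(default_factory=set)     # revoked key ids
    objects: dict = field(default_factory=dict)   # object name -> key id

    def key_for(self, org):
        # Create the organization's key id the first time it is needed.
        if org not in self.keys:
            self.keys[org] = f"key-{org}-{secrets.token_hex(4)}"
        return self.keys[org]

    def store(self, org, name):
        # Record that this object is protected by the organization's key.
        self.objects[name] = self.key_for(org)

    def can_decrypt(self, org, name):
        # Access needs the organization's own, non-revoked key on that object.
        key_id = self.keys.get(org)
        return (
            key_id is not None
            and key_id not in self.revoked
            and self.objects.get(name) == key_id
        )

    def revoke(self, org):
        # Only this organization's key is touched; other objects are unaffected.
        key_id = self.keys[org]
        self.revoked.add(key_id)
        return sorted(n for n, k in self.objects.items() if k == key_id)
```

With this layout, revoking one vendor's key returns only that vendor's objects, which is the point made in the talk: nothing belonging to the other organizations has to be re-encrypted.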

So next is the idea of compute: if you use ECS or containerized services, or if you use serverless compute like Lambda functions and stuff like that. Generally, in a multi-organization kind of thing, what is most important is that if I'm an organization who's giving out access to data, then I would want to make sure that either you sanitize the data after you're done on your EC2 instance, or you tell me that you're running on a certain kind of Lambda function and then it goes away, and I know that you are not holding a cached copy or a copy of

the data in a way that it's kept in storage. So the whole premise of using multiple clouds is that the middle layer, the folks that are actually working on your data for a short amount of time to run certain specific algorithms or functions, should be telling you: hey, we're gonna do this in an ephemeral way, possibly in an ephemeral service like a Lambda function, run it through our proprietary algorithms, and you're gonna get an output and we're gonna put it back in your S3 bucket. Well, you want to make sure that if they are not using, say, natively serverless services like Lambda, or anything that just runs

for a short amount of time, like a batch function, then you want to make sure that if they are running on a container or on an EC2 instance, that instance itself either gets sanitized based on a policy that you dictate, or, if it doesn't get sanitized, the instance itself goes away, or, if it's a container, the container and its ownership stay geared toward just one organization. So for example, they can also come back and say: look, we are gonna give you X number of containers that are specific only to your organization. So if you

need the service to be very real time (for example, we had a client that said, we can't take the service down, because if you take the service down after every transaction it takes quite a bit of time just to bring it back up), well, then what you could do is set up a subnet and have multiple ECS services that are dedicated to one specific destination or route pair, based on a client. So you can force traffic from one particular organization to just come into that application instance, do its function, and then go out. So it was an example of, for example, a transcoding service that had to, not transcoding,

it was an example of creating thumbnails of pictures that get created on an editorial service, and that has to happen pretty quickly. So that is an example where you can't just take the service down, because if you take the service down it takes quite a bit of time to bring it back up. What you could do is put it in a specific subnet and then limit the traffic in that subnet to a specific user, and then after your relationship with that organization has come to an end, you can actually just take the whole subnet down, all the EC2 instances

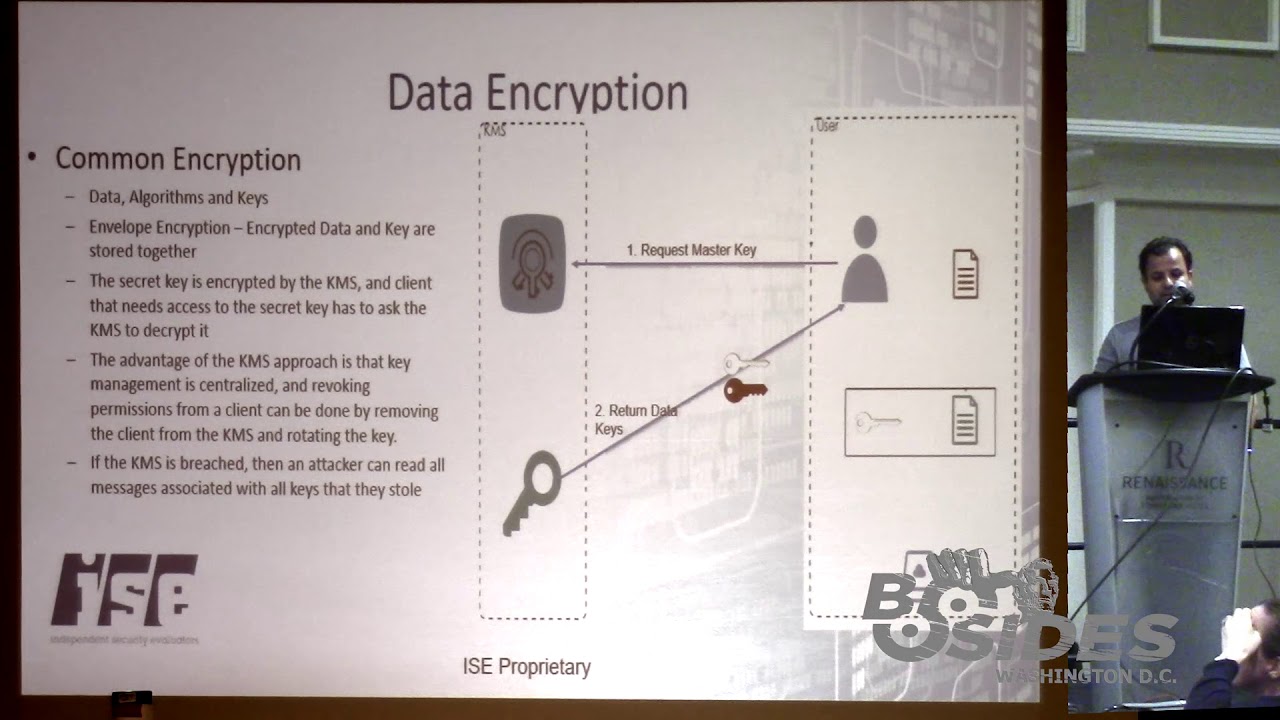

in there. And that goes back to the idea of organizing resources, either per organization or per client or per application that you have, and resource tagging them, so all of that is tagged in a way that you can either bring a whole client back up or take the whole client down in a very verifiable way. And I think one of the last things we want to talk about, and I gave a talk last year about this, is the whole idea of key management. This is very important on the data cloud part, when you're a big or large organization and you have

all kinds of data and all kinds of vendors that you talk to that have access to your data. Key management is the principal method to actually control access to it, in a way that access to the actual data itself could be limited and revoked in an efficient way. So essentially, if you have an S3 bucket, or a relational database, or a MongoDB database, or whatever you have, if you use key management in a way that you assign and encrypt objects, or rows or columns in specific databases, with certain keys that are tagged and assigned to a specific end customer or end user, that could allow you to essentially

take away access when you revoke keys, and it can also allow you to grant access, but it would not interrupt the service or any of the other clients. For example, if you had just taken one key and encrypted the whole database, or taken one key and encrypted all the objects in the S3 bucket, then if you had a breach, or some kind of issue where unauthorized access had happened, you would have to re-encrypt the whole database, all the objects. So key management itself, I think, and specifically the key management part in both Microsoft Azure and AWS, is quite

advanced, and I personally feel that it allows folks to not only segregate data but also control how data gets used. On top of that, if you have different key managers and key users, if you apply that on top of it as a layer, then you have a very solid base of data control. Obviously, when you have key management, it's always a good idea to rotate keys, and to audit all keys and who's using those keys at all times. That way, if you put that in your logs and you put it through any kind

of SIEM tool to put together some kind of an idea about who accessed keys, who revoked them, from what IP address, or what the source and destination was, then if you had an unauthorized access you can pretty much narrow it down to specific endpoints and specific users. So at this point, I had 30 slides, so I still have five minutes, but I think that I have pretty much come to the end of my talk, and I will take questions. Go ahead. Yes. Yeah. Yeah. Yes.

So it could be a SaaS provider, or it could be, actually, so what I'm saying is that, look, in this particular talk there's a SaaS provider, there's people who own the data, and there's people who are your service partners. So three different entities, right? That's a SaaS provider. That is a SaaS provider.

No, I mean, this is from a multi-organization point of view, right? So within application owners themselves there will be multiple people that have ownership of the database itself, the data tier, the business logic tier, or the deployment tier, or how the DevOps works. All of those would be subcategories under application ownership. So the point is that, for example, let's just say for the sake of example, right, there's a company that provides a service for some kind of algorithm, their own specific proprietary algorithm that allows them to process specific data, but they don't want to be in the business of

owning the data; they don't want to be in the business of storing the data. So they have a SaaS, they have a web service, and they allow you to pull or push data from an endpoint that is provided by their clients. In that case, they're the application owners.

You're accountable for your piece, but you can expect the other person, so, all the tactical and strategic things that I've laid out here are applicable to every member of this, all three parts: the data owners, the application owners, and the service providers. If all of them, everybody, is implementing these controls at their point, in their particular subset, they will be able to build a verifiable, irrefutable set of artifacts. So for example, if you detect that you had unauthorized access to your data, right, either you can figure that out yourself because you're implementing these controls, or you can

ask your partner, your SaaS application owner, what they observed, because they were implementing these controls. Well, if you know, for example, that you're getting access from an IP address at a certain endpoint that you opened up for a certain reason, and you detected that, for example, the key was revoked 10 days ago but you're still getting access on that URL, what you can do is ask your SaaS provider and say: look, we have this particular log, right, what does your log say? Why are you going out and making API calls on our endpoint that we had talked about, with

revoked keys? So if you implement this set of controls at all levels, then you build this, you know, instead of, you know, who's...
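
The check described in this answer, noticing that an endpoint is still being accessed with a key that was revoked days earlier, can be sketched as a small log scan. This is only a sketch under stated assumptions: the function name and the log fields `key_id`, `source_ip`, and `time` are hypothetical, and real access logs from any particular vendor will be shaped differently.

```python
from datetime import datetime


def flag_access_after_revocation(access_log, revoked_at):
    """Return log entries where a key was used after it was revoked.

    access_log: list of dicts with hypothetical fields
                "key_id", "source_ip", and "time" (a datetime).
    revoked_at: dict mapping key_id -> datetime of revocation.
    """
    flagged = []
    for entry in access_log:
        revoked_time = revoked_at.get(entry["key_id"])
        # Keys that were never revoked are skipped.
        if revoked_time is not None and entry["time"] > revoked_time:
            flagged.append(entry)
    return flagged


# Illustrative data only: one key revoked on 10 October, then used again later.
example_log = [
    {"key_id": "k1", "source_ip": "203.0.113.5", "time": datetime(2018, 10, 1)},
    {"key_id": "k1", "source_ip": "203.0.113.5", "time": datetime(2018, 10, 20)},
    {"key_id": "k2", "source_ip": "198.51.100.7", "time": datetime(2018, 10, 20)},
]
example_revocations = {"k1": datetime(2018, 10, 10)}
suspicious = flag_access_after_revocation(example_log, example_revocations)
```

The flagged entries are the artifact you would bring to the SaaS provider when asking what their own logs show for the same source address and time.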

So the governance happens at your local level. That's exactly it. So, okay, traditionally, if you bought a SaaS product, you bought a subscription to a SaaS product, for example, right? You pay X amount of money per user, or per X amount of processing, right? You don't get to control the governance; they do, right? But what happens now is you control the governance of your own data, and they're controlling the governance of how they process the data. If you agree with it, then you should go with this service, and if they implement these controls, then you know they're in pretty good

shape and form to actually work with you. Okay, yeah. So, any other questions? [Music]

Right, right, right.

So I'm not sure about a consumer, but say you're a partner, right, so you have a business relationship. What we have seen, for example, is that if you have enough leverage, you can force them to get an audit, you can force them to get an assessment, right? But SLAs are more like nine-nine-nine numbers, right? They're basically about availability. We're talking more about data security. Unless they go through an evaluation, or they go through some kind of real audit, not just an automated tool, there's really no way to see how they actually implemented all the mechanics underneath in the infrastructure fabric. So from a specific

consumer point of view, it's very difficult; they won't give you that data. But if you are an organization that's about to invest, say, a big amount of money with a certain organization, you could ask them to show you audit data; you can ask them to go and get an evaluation. In my particular experience, the way it works is that any organization that has any kind of SaaS product that adds value to somebody else, they want your business. If they want your business, they will get an assessment. If you're big enough, if you're promising them enough business in the future, they will go out and spend X

amount of money to get an audit or get an evaluation. But it's not publicly available. Nobody's going to go out and put an architecture of their service online and say, hey, look, we do it this way. I mean, nobody wants to expose that data. But I guess, if you really wanted to find that out, there's ways to do that too. If you were savvy enough to know that a certain SaaS is deployed in AWS, and you have an understanding of how that works, you could try to backtrack and figure it out, but that's not

your responsibility. They should either be telling you that, or, if they're not, then it's up to you to do business with them or not.

Well, yeah, but I think what you could do is, say you work with, let's say, Salesforce, a large organization that does something with your data, right? And you have all your CRM data in your data stores, in the S3 buckets, right? And then you want to work with them, and they want to pull data. If you're auditing yourself to the standards that are laid out here, then you know that if they are actually not enforcing good security, you will catch it, because you will have logs on your end that should indicate to you if you have

unauthorized access or you have a data breach. So for example, even if you work with a large vendor like Salesforce, right, and you open up your data stores to them, if you follow the key management principles, and you follow the IAM principles, and you follow the logging and monitoring principles, it should not be difficult to say: why are they pulling our data at a certain volume, or why are they pulling certain data at a certain time, in a certain region? So all those questions could be answered. You might have given them access, or if you haven't, then you can actually

have a conversation, because now you have an audit trail of your own that you can use to hold them accountable. Does that make sense? Any other questions? Okay, I've been told to cut it off. [Applause]