GT - Another One Bites the Dust: Failed Experiments in Infrastructure Security Analytics and Lessons


So my name is Ram, and we'll start with a quick poll right here. How many of you consider yourselves to be security engineers? Okay, that's great. And just to make sure, how many of you consider yourselves to be machine learning engineers, or have worked on machine learning? Perfect. I feel like John 10 going little there, you gotta raise it up, man. So that kind of helps me orient. Here's a familiar scenario, whether you work in machine learning or in security. Imagine it's Friday evening, you're about to go out, and you're now on call, and unfortunately you have been held back: the login anomaly detection that either you built or, most likely, inherited

is spewing a lot of false positives. Your incident responders are not happy, and you're like, oh my gosh, the security analytics system is broken. It's a sev 2 incident and your pager's going off. There are two things that could have happened. The first one is that the security assumption with which you had built your system has changed. Say there was an important vulnerability in your service, and the service engineers just went right in and patched it, and that rebooted all your computers, and now there are failed logins because of your cell phone, which is causing this massive bunch of false positives. And if you think, you

know, this is something that actually happened to me when I built my first login anomaly detection, because service engineers, their job is to fix stuff, and they don't tell you when they're fixing stuff, especially if it's a critical vulnerability. And this is a small graph of how I feel the security landscape is always changing. On the x-axis over here is time, the dates, and on the y-axis is the number of high-severity security vulnerabilities. I pretty much scraped the CVE database and looked for those vulnerabilities that are scored 7.5 or greater, so, you know, it's an OS-level

system where an attacker can run arbitrary code. It is very much possible that there was a patch for one of these CVEs, and this, being high priority, probably got patched through. So net-net, your security assumptions about your system are always changing. Alternatively, the analytics assumption with which you built your system could have also changed, and this is because the machine learning landscape is also changing rapidly. A good example of this is TensorFlow's major feature or breaking API changes. TensorFlow is essentially Google's big bet on an open source differentiable programming toolkit, with a lot of open

source contributions, and that tool is very rapidly evolving. By the way, again over here, the x-axis is the timeline and the y-axis is the number of major feature or breaking API changes; I just restricted myself to that. If you're wondering why September had no changes, it was only a GitHub deployment, so the binaries were still the same, and December was probably low because of code freeze. But you can see that they make breaking API changes all the time. I just picked TensorFlow, but this is the same thing with Microsoft's Cognitive

Toolkit or any system out there. For instance, they might push out a new learner, and your applied ML engineer who's developing this system might swap out the learner, or they might have retired a feature; for example, tf.pack got replaced with tf.stack, so if you use tf.pack, it's probably going to be broken, and that could have caused this breakdown. And the fact of the matter is, just like how the security landscape is changing so much, because the machine learning landscape is changing so much, you also have this plethora of tools that people use at different points. You might use R for

prototyping, then you might use TensorFlow for deployment, or, if you want to support Microsoft and increase its stock value, you use CNTK. So different parts of the pipeline use different tools. Today I'm going to talk to you about some of the experiments that we did in platform security and how they failed. My goal is definitely not to get through the slides, so I'll make sure that I finish much earlier and you have time for questions as well. In terms of textbook machine learning development, you might think of this as the lifecycle that you generally see: people first pick,

they try to define the task, they define the data, they use some sort of machine learning model, and then they get the result and deploy. Whenever you hear people talking at a customer, I mean at a conference, you might assume that this is the cycle that they've been through. But in reality, industry-grade machine learning solutions are extremely exploratory. There are many, many attempts that happen in the background, and the one that people generally talk about is the one that succeeded, and you never know why those other attempts failed. Did it fail because it didn't meet the metrics that the

program manager defined? Did it fail because it wasn't scalable? The aim of this talk is to share some of the experiments that we did that did not ship, and share some insights about why they failed. That's basically the takeaway. I have three failed experiments that I want to share with you, and for each of these failed experiments I'll go through: what's the problem that we set out to solve, what was the original solution, why did it fail, and how we improved on it. So this is going to be the theme that you'll see throughout the talk. And finally, I'll wrap up with how do you

actually go from experimentation to production. There's a slew of great talks today at BSides Las Vegas; I'm personally excited about the PowerShell talk. One of the things you want to keep in mind is how to learn the technique that the person shared with you and think of pushing it into production, because that's how you're going to add value to your business. So, jumping right into case study one, lateral movement detection. Lateral movement, just to make sure we're all on the same page, is when the attacker moves laterally within your environment to attain the goal of either, say, credential

exfiltration or even to cause damage. They might first compromise a dev box through phishing; from the dev box they move into production, because hey, we're all DevOps, right, so you have some sort of constant access to production; and they move laterally through the environment before they attain their goals. The problem that we want to solve is: can we detect lateral movement? This is a really important problem to solve (in case you're a machine learning engineer and this term is unfamiliar to you), because once the lateral movement stage has been reached, it's pretty difficult to detect an attacker, because they go under the radar

or they blend in, which I feel is a code word for: there's so much noise in my system that I wouldn't be able to detect the attacker. So you really want to be able to detect lateral movement before they actually attain their goal. I'm going to discuss one experiment that we did to do this, and this is something that's actually in production for on-premise, and you'll see why. What we intuitively did was build separate models to detect compromised accounts. Our goal is that when an account is compromised, you want to be able to detect it so you don't even, you

know, get to a lateral movement phase. We built a suite of models, and the crux is that each model independently assesses whether an account is acting suspiciously. I'll go into what the models are in just a minute, but the data that we use is the Windows security event data. Just to give you a sense of scale, an online service like Office 365 produces on average about 30 billion sessions per day, and that roughly translates to 82 terabytes of data. Essentially, the data that we're looking at is sequences of Windows security event IDs. There are roughly 367 of them, that's what TechNet says, and we've whittled it down to, you

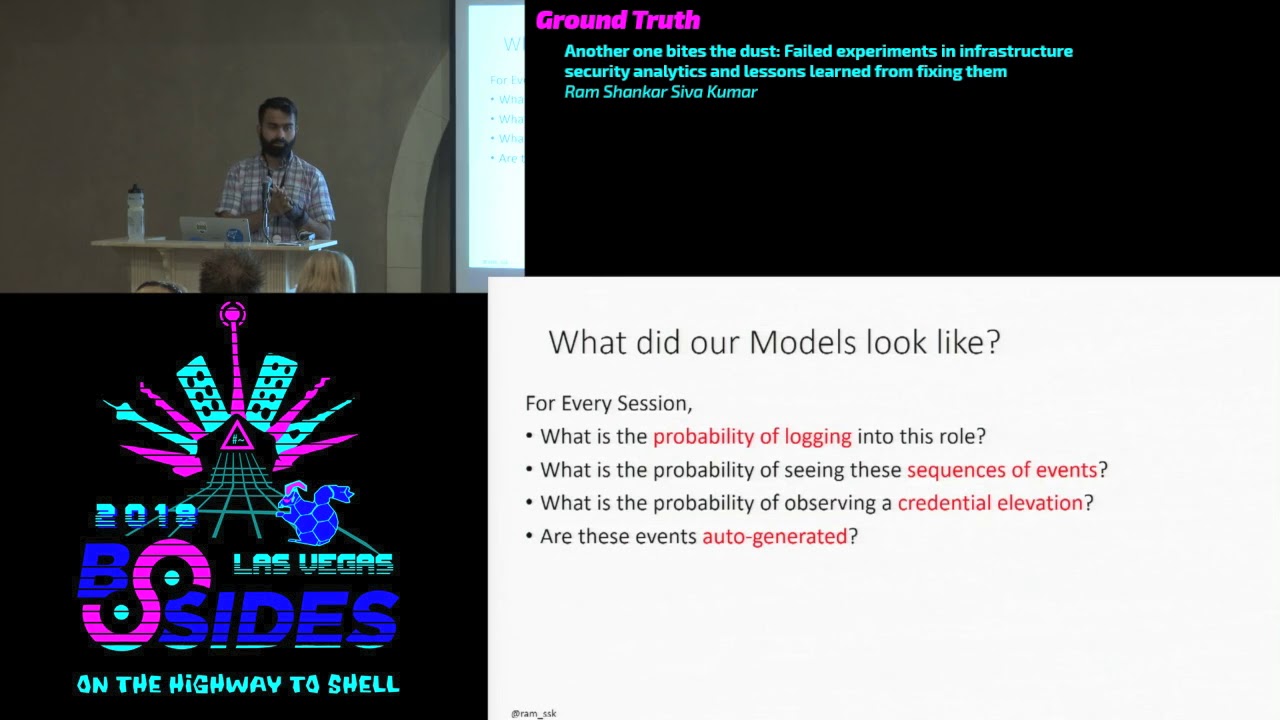

know, 14 that are of major interest. What kind of sequence are we looking at? We look at, hey, a user logs in to a machine, starts a process, the process exits, and the user logs off. In an infrastructure setting, this must be very, very constrained, especially for a user or a service account; it's not like me on my dev box. So I told you that we built an ensemble of models, and here's what the model looks like. We look at it at a session level; we don't look at it at a record level. We look at a session, and like I

said, a session is when a user performs a sequence of actions. This is a representation of some of the models that we built. One of them is: what's the probability of this account logging in to this role? If it's a user who's always logging in to, say, SQL or a front end, and all of a sudden they're logging in to a domain controller, hey, that's super suspicious. Or: what's the probability of seeing this sequence of events? The ulterior motive of this question is to ascertain whether it's a service account or not. A service account or automated account must be performing only

a very narrow subset of activities: you log in to a box, kick off a process, and then you get off. There is no browsing around over there. So we look at the sequence of events. The third model, for instance, is: what is the probability of this account elevating its credentials? Again, from an infrastructure security perspective, if you ever want to elevate credentials, we have a just-in-time system; you can't just do it by clicking "run as admin", that's a big no-no. And the last one is: hey, are these events auto-generated? Especially from a service account perspective, the time interval

between two events must be pretty tiny, because there's probably a PowerShell script that's automating these service accounts. When a red team goes after a service account, and they love to do that because it's got lots of permissions on a lot of boxes, the time interval between those events is no longer the same. So that's another thing that you can look at. We basically have a lot of models independently estimating the probability of this account being compromised, and this is what the setup looks like: you've got a session and you estimate these probability models. But where we really want to go with this is, hey, we want to

get some sort of combined score. You want to be able to look, from all these different perspectives, at the probability of that account being compromised, and you want to weight them in some sort of interesting fashion; you don't just want to do some simple linear combination. The way we combined them in our experiment was with RankNet. RankNet is a supervised learning-to-rank algorithm. Think of when you go to Bing, if you ever do, and search for a query: you get a list of answers to it, and those answers are rank-ordered based on relevance. RankNet was the

initial algorithm, even before Bing was Bing, that was powering that, and it does pairwise ranking, which is really nice: as opposed to comparing between two lists, it tries to optimize over pairs. So we took RankNet, and I put a pointer to the paper right there. Imagine that you have two sessions, a malicious session and a benign session, and that's your input. Then you also give RankNet a probability, what's called the relevance score. If you set that to one, RankNet knows to put the malicious session on top of the

benign session, and if you put zero, the opposite occurs. This property, you'll see, not only helps to combine the scores but also gives a rank-ordered list for security analysts to evaluate. When you're giving a set of alerts to security analysts, they expect the most important one at the top; they may have budget only for the top five or top ten, and the hope is that the ones at the top are where they should invest the bulk of their effort. RankNet also helps to solve that problem. So during training time, you give the three

inputs to the algorithm: a malicious session, a benign session, and a relevance score. You get a function such that, hopefully, the malicious session is pushed to the top and the benign session is pushed to the bottom. This is how RankNet works: during training you take the malicious session and push it through the probability models that I described before, and you get a bunch of probabilities; you take a benign session, push it through the probability models, and get its probabilities; and you set this bit to one, so RankNet knows to get the malicious

session ranked higher than the benign session, and out pop the weights. That's pretty much how you go from there: you get the weights and you put them in the model. This experiment was super successful for on-premise systems. In Office 365 this is a running model from an infrastructure security perspective, and we've shown a couple of times how it's been validated with red team attacks. So we built this model for an on-premise setting, and we expanded it, very bravely, to the cloud scenario, and I got this very nice email from a security analyst. You can't read it; the only two things you should

note are that it's 3 a.m. and that the word anomaly comes in parentheses. It was like, please shut this off, which is a code word for: do it or else I'm going to kill you, because there is so much noise in the system, and the activity is all expected and not security related. So why did it fail? We did our best, it worked so well on-premise, and we expanded it to the cloud and hoped it would work as well. The first thing is that the security assumptions pretty much changed. If you look at a traditional on-premise setting, a production environment, which

most people are familiar with, it's very nice. You've got this private network, so you can think of it as being enclosed in an eggshell, and inside this eggshell there are all different types of servers. If you want to host some sort of database, you spin up a SQL server; to host files, you set up a file server. These are all physical machines, and you pretty much add them to the domain with the domain controller, and there's one central place of truth. You know exactly where your crown jewels are. We've been told time and again that, hey, if somebody can pop your domain controller,

they've got the keys to the kingdom and you're pretty much done for, which makes a lot of sense, because in an on-premise setting you know exactly what to look for. But let's come to the wild, wild west: Azure. In a cloud services setting, pretty much everything is a service; whatever you thought of as a server is a service. Where you had a SQL server, you've got Azure SQL; where you had a file server, you've got an Azure storage account. One of the things that fundamentally shocked me when I joined Azure was that there is no domain controller. There is no one central brain, like the domain controller,

that's orchestrating it. This was a big paradigm shift for me, four years back: moving from on-premise to cloud means looking for different sorts of controls. One of the things that you will notice is that over here they're all physical machines, and things like the Windows security event logs are our bread and butter. But over here, in the cloud service setting, a VM or the host machine is just a small piece of the puzzle. Windows security event logs are still valuable, but there are other

sources of logs that speak truth. That was the second realization for us. One of the things you want to keep in mind is that something like passwords has a very different paradigm in Azure: we talk in terms of certificates and storage keys, and what you think of here as LSASS is almost mirrored by Azure Key Vault, where you store those secrets. Mapping these things was super important for us when we moved from an on-premise setting to a cloud setting. And this is a fantastic translation diagram that Andrew Johnson from our red team put together, which

helps me when I'm building detection for the cloud; it helps me translate things. Take the concept of a domain: just like how you want to protect the domain, the goal in cloud security is to protect your subscription or to protect your tenant. What you think of as pass-the-hash in the old Windows setting is credential pivoting in a cloud setting: you harvest credentials and you reuse those credentials to see if you've got any access. The big point is that any time you're going from one paradigm to another, it's really hard for you to, like,

reuse the same technology. I'll give you a little bit of insight into how we solved this problem in just a minute. This was just to prove the point: over here you see "a new process has been created" in the context of an on-premise setting, and this is a process creation in the context of a cloud host setting. Over here you would get an account name, for instance, and over here, because everything is orchestrated from an infrastructure perspective, it says system, so you're not going to get a clean, super big insight just by looking at the account. So how did we

improve our failed system? The first thing that we did is we took our favorite kill chain and adapted it to the cloud. Like I told you, in an on-premise setting an attacker might compromise somebody's credentials, then get hold of a service account, and use that service account to ultimately move laterally and get to a domain controller. In a cloud setting, they would start off by compromising a cloud developer account. Then we translated our concept of a service account: we mapped it to what a service account is in the cloud, and it turns out there is something very similar, the service

principal. And just like how the goal of an attacker on-premise might be domain admin, over here it's subscription admin. We also increased the visibility of our logs and did not rely just on host security event logs. We expanded to, for instance, the Azure Resource Manager; think of it as one of the brains that's controlling the cloud for anything that is important. If you create a service principal, or create a storage account, or modify permissions, it gets added to this particular log source. So this was super vital for us. I'll give you a quick overview of this particular

technique. Essentially, what we did is we took the service-level detections and converted them into a graph. Every node is an entity: it could be a user account, it could be an IP address, and an edge is something that connects them. You can imagine that, because we have so many millions of signals, the graph is extremely big. So we prune the graph: we constrain it using our kill chain, and we constrain it using a stochastic process, of which random walks are a great example, and that helps us

to go from millions to a handful of hundreds of possible attacks. Then we pretty much score those subgraphs using things like random walk, I mean random forest, if you have labels, or else we do spectral clustering if you don't have labels. In terms of performance, the biggest thing you want to take away is that our false positive rate dipped and we got a six-point improvement by using this. So if there's one takeaway from this case study, the important thing is that when you're changing paradigms, it's important for you to

think about not reusing the same solution. That's the takeaway there. I'll move through this one. The second case study is how we wanted to detect PowerShell attacks. You pretty much know that when you get a suspicious document and you click on it, there's a macro that runs a Base64-encoded command, and you're pretty much done for at this point. PowerShell attacks are really becoming common, because, hey, guess what, if you want to go after a Windows system, PowerShell is natively installed; you don't have to do any sort of installation from

a toolkit perspective, and these attacks don't even touch the disk, so there you go, perfect. And there are a bunch of toolkits out there that make it even easier for attackers to do this. For us to do this detection, the way to think about it is that we take a malicious file and detonate it, and we essentially collect the exhaust from those logs: we look at the timestamp, the command line, and a host of other information. The first thing that we did was try to estimate the transition probabilities. We first

extracted the syntax trees, and we thought, hey, Internet Explorer should not spawn, say, cmd.exe; that's really suspicious. We essentially tried to calculate the probability of the current command line happening just by walking through this Markov chain. From a logic perspective this made sense to us: unusual sequences of events in the command line should pop up. But ta-da, this also failed for us, because guess what: Daniel Bohannon, I think, gave this wonderful talk about obfuscating command lines. So, just in case you're not familiar

with obfuscation, this is the command line that an attacker would probably want to execute, but after obfuscation you can see there's a bunch of parentheses and a whole lot of other things. Through the magic that is PowerShell, it still executes, but it's very, very difficult to detect. Our Markov chain failed. The first question was, why can't you just use rules? You cannot use rules to detect this, because the number of regexes that you're going to write is going to be too much. And classical systems like Markov chains do not work in this setting, because every command is unique and there is no,

you know, proverbial pattern for you to find. So rules didn't work for us, and the existing experiment that we ran failed when we tested it on real data. The way we improved this, and this is a paper that was just published by my colleagues, is that they use character-level convolutional neural nets. I'll just give you an idea of how this works. You essentially take the PowerShell commands and convert them to bitmap-like representations, if you think of it at a very high level, and deep convolutional neural nets are extremely good at, you

know, classifying images. Facial recognition, smile detection, any of those things are pretty powerful because they can capture a hierarchical representation of your face. Another way to think of it: you take an image of your face and they decompose it into edges; many edges become contours, and many contours become a feature, like a nose. So think of the commands as being converted to heat maps, where the more red the color is, the higher the value. We pass that through a deep neural net for classification, and I'll be happy to

point you to the paper. What happened was that we were able to get a huge improvement in our true positive rate, and one of the things those guys showed was that they kept the false positive rate constant. This is important from a customer perspective, especially when you're deploying new systems: if you're increasing the true positive rate but simultaneously increasing the false positive rate, they don't care; it's still more alerts in their queue that they need to triage. So this is another example where we had a system that worked for attacks that we knew, but because the security landscape changed so much, and this command line

obfuscation technique became really popular, our existing experiment did not work so great, and we had to change our strategy as the landscape evolved. The third case study that I want to walk through is how to detect geo login anomalies. This is a very common problem, especially from an infrastructure security perspective: somebody's always logging in from Vegas, but all of a sudden they're logging in from across the pond, say from some part of China, and you want to be able to detect that. So intuitively, and this was our

first pass, was: hey, we cache the last 10 locations of the user, since users generally don't travel that much. For the current location, if it is not part of our cached locations, then we challenge the user: we trigger multi-factor authentication, or we challenge the user via SMS, or, just like how Facebook would do it, show them pictures of their friends. And if

there's a false positive, then you add the new location to the cache. This was our very first experiment in terms of detection, and it is reasonable because, from an implementation perspective, it's just a matter of keeping track of state. You should have figured out the theme by now: this one failed too, for the following reasons. The problem with such an intuitive, basic system is that it does not account for complexities. For instance, first of all, people do travel a lot, and if you have salespeople in the organization, they travel a lot more than a developer.
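The first-pass design described above (cache each user's last 10 locations, challenge on an unseen location, and add the location to the cache once the challenge is passed) can be sketched roughly as follows. This is only an illustrative sketch, not the system from the talk: the names `LocationCache`, `handle_login`, and `passes_challenge` are hypothetical, locations are plain strings, and the challenge (MFA, SMS, and so on) is stubbed out as a callback.

```python
from collections import deque


class LocationCache:
    """Naive geo-login check: remember each user's last N login locations."""

    def __init__(self, max_locations=10):
        self.max_locations = max_locations
        self._recent = {}  # user -> deque of most recent distinct locations

    def needs_challenge(self, user, location):
        # A location that is not in the user's cache triggers a challenge.
        return location not in self._recent.get(user, ())

    def record(self, user, location):
        # Keep locations distinct; the oldest one falls off when full.
        recent = self._recent.setdefault(user, deque(maxlen=self.max_locations))
        if location in recent:
            recent.remove(location)
        recent.append(location)


def handle_login(cache, user, location, passes_challenge):
    """Return the decision for one login attempt.

    passes_challenge is a hypothetical callback standing in for MFA or SMS.
    """
    if not cache.needs_challenge(user, location):
        cache.record(user, location)
        return "allow"
    if passes_challenge(user):
        # The alert was a false positive, so the new location is cached.
        cache.record(user, location)
        return "allow_after_challenge"
    return "deny"
```

Note that this sketch has exactly the weakness the talk goes on to describe: it only tracks state per user, so frequent travelers, VPN users, and mobile networks would all be challenged repeatedly.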

And beyond just travel, which is kind of easy to account for, there are company proxies; companies have VPNs, and there are legitimate reasons why people use VPNs, for example a deployment in a data center in a different part of the world. You also have cell phone networks, which tend to be extremely noisy, especially if you're moving, say, between Seattle and Portland; the system does not account for that easily. And like I said, vacations are pretty common as well. Trying to reverse engineer this knowledge, where we wanted to, hey, try to look at

their calendar and ascertain when they're on vacation, just turned out to be untenable. Oh, by the way, I just want to tell you that even a modest 14% false positive rate, at Microsoft scale, where we have about a billion logins across all of our Active Directory, means more than 80 million false positives. So think of how much trouble you're adding for the customers who are using this system and have to sift through this. In terms of improvements, there are two pieces of intuition that we had. The first is, instead of looking at a user in isolation, just looking at the

last ten locations, look at them in the context of their peers. That's intuition number one that you want to get from the following slides. The second thing is: is there a reachability score that we can calculate? In terms of understanding the user patterns, we capture the past 45 days of login history and basically look at the frequency counts: how often they log in to a particular system, and things like the cell phone networks that they connected from. The second intuition is that

we try to calculate the user-user similarity. It's essentially a huge matrix that we have, and we use a custom similarity metric to ascertain how similar one user is to another. The intuition is: if my boss goes to Israel for work, and I follow and go to Israel, then, given that my boss and I are in the same peer group, I should not get an alert or a challenge when I go to Israel. From this we use random walks with restarts, again, to ascertain the reachability score. After we get the reachability score, we want to

answer this question: how likely is the user to be in this location? The intuition here is that the reachability score we calculated might be pretty high, but it takes a long time to get there, say between Seattle and Japan, so if the time of travel that we saw was really short, then it's a problem. That's what this shows: if the reachability moves to the red level and the travel speed also increases, that's really, really suspicious. So imagine that you've got, you know,

three locations at which we saw the user, and you see the time of travel and the reachability score that we got from the previous step. We use this as a transition matrix to see: okay, the user is in Redmond, and now he's in Portland; it's also possible for him to still be in Redmond. But now that he's logging in from Hartford within a span of just 15 minutes, that's really, really suspicious. One of the most powerful things about the random walk technique, if you haven't explored it, is that it can drive the false positive rate really low, because it can

increase... you can input custom domain knowledge as part of it. So this is something where we got a 70x improvement just by using random walks and trying to calculate a reachability score, so I totally recommend you check this out. So I kind of walked you through some of the experiments that we did, and I wanted to finally finish up with how we take something from experiment to production. Once we narrow down on, hey, this is an algorithm that's got a really low false positive rate, how do we put this in production? So like everybody, we start off with exploratory data analysis, just

using custom tools. And, you know, eighteen applied ML engineers in our team, and each one of us has our tool of choice: Python, you know, for some other peeps, one faction uses R, you've got a new intern who came from school and asked if he could get a MATLAB license, and you're like, what? So you first do exploratory data analysis, and the first prototype that we generally do is we build one model for the whole set of users. The problem with this is obviously that if you have one model for all users you're not going to get personalized activity. So then we kind of, you know, build

a per-user model for our population, and we try to cram them in, and the thing is, you cannot cram this all into one worker role. So what we do is we have a separate storage system for models. But then you hit latency issues, so now you have a Redis cache inside, with some sort of LRU policy, so that you're maintaining your latency. And then our favorite part is compliance engineers come in and they're like, no, some parts of this are PII and the platform cannot have PII. So what we do is we have a separate system

that has our PII data, which also has its own Redis cache, and that kind of communicates with it. So you can see that from our initial prototype to the final one, they're completely different systems. But we don't start off by trying to architect the Redis cache backend itself, because, like I said, as you saw, the experiments tend to fail a lot. So what should be your big takeaways? First of all, when your security analytics system is broken, you have two things in front of you: your security assumptions have changed or your analytic assumptions have changed. But either way, if even one of

them changes, you have to look at both of them. Like I showed you in the case of PowerShell, right: the security assumption of obfuscated command lines changed, so we had to kind of re-strategize. First of all, debugging ML systems is pretty hard, and I put a link to this paper where they walk through how you can debug a machine learning system. Well, guess what: debugging a security analytics system is harder. So, just some things for when you go back from the madness of Vegas and try to make sense of it. In the next week: first of all, there's a

amazing talk yesterday (thank you, John, for pointing it out) from see exercise cake; I totally recommend reading his slides. Personally, it was kind of like, if I were more sarcastic and if I had memes, that's what I'd aspire to do. It was like 284 slides, but you can flip through them while you're travelling. I would totally recommend trying out Red Canary's toolkit, where they help you kind of do unit... I mean, the way I look at it, they help you do the unit tests for that, and really explore security as a service that might also help you out. In the next month, if you have access to a red team, go

show them your detection. There's no shame in asking them: hey, here's my security system, do my assumptions hold? And then the next quarter (that's how we plan, in monthly and quarterly sprints), look at things like how you can attack your systems automatically; like cloudy cracking, you know, from Netflix has a bunch of capabilities. So these are all great tools for you to constantly see if your assumptions are still valid. And finally, don't ever think detecting unusual logins is the problem. That's the prototype. I mean, to operationalize that, you have to think about how are you going to deploy it with compliance restrictions, how are you

gonna scale it out, how are you going to evaluate and show your management that this system actually works and is adding value. And don't ever discount this: if you're running a production service, you want to have live site with on-call guarantees. There needs to be someone, if the system fails, who's able to step up and do it. So my broader team has a bunch of personas that we rely on to ship a production solution. We've got machine learning engineers, but we totally rely on ops engineers for monitoring and performance, and our compliance and privacy folks help us make sure

that we're maintaining our commitments. We've got product managers who go talk to the businesses and do our metrics. So it's not like a one-man security data scientist gets something working into production. So that's pretty much the end of my talk. If you're interested in any of the roles that I showed you, we're super hiring for my team and the broader team. You can always reach me at my email; Twitter, that's where all the cool kids hang out, but I just go there for sneaking around, but my DMs are always open. And if any of what I spoke about interests you, or if you feel like you can come in and add value, I'm

gonna hang around; I'd love to talk to you guys. Thank you. So we could take about two questions. Sorry? Hi, what was your training size for, let's say, the PowerShell attack? First of all, very interesting talk, thank you for presenting. And you mentioned you're collecting about, I think it was over 80 terabytes of telemetry from Office 365

is kind of like injecting synthetic data. Now this might sound extremely trivial, but it really works for you as a sanity check; it's the canary in the coal mine. So if your system has changed so much, and if your injected data does not pop to the top, you kind of know, from a unit-test perspective, that it doesn't make sense. The third thing that we rely on is to try to have any sort of open source tools, especially ones that our red team doesn't use, so we have an out-of-distribution test to evaluate our system against. So we're not only relying on one particular set of known badness.
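The injected-data sanity check described here can be sketched roughly as follows. This is a minimal illustration, not the system from the talk: the scoring function, the record fields (`failed_logins`, `distinct_countries`), and the top-10 cutoff are all assumptions made up for the example. The idea is only that synthetic known-bad records are mixed into normal traffic and the check fails if they stop ranking near the top.

```python
import random


def canary_recall_at_k(scores, canary_ids, k):
    """Fraction of injected canaries that land among the k highest-scored records."""
    if not canary_ids:
        raise ValueError("need at least one injected canary record")
    ranked = sorted(scores, key=scores.get, reverse=True)
    top_k = set(ranked[:k])
    return sum(1 for cid in canary_ids if cid in top_k) / len(canary_ids)


def toy_anomaly_score(record):
    # Hypothetical stand-in for the real detector: more failed logins and
    # more distinct countries give a higher (more anomalous) score.
    return record["failed_logins"] + 5 * record["distinct_countries"]


rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Made-up "normal" traffic.
records = {
    f"user-{i}": {"failed_logins": rng.randint(0, 3), "distinct_countries": 1}
    for i in range(100)
}

# Synthetic known-bad records injected as canaries.
canaries = {
    f"canary-{i}": {"failed_logins": 40 + i, "distinct_countries": 4}
    for i in range(3)
}
records.update(canaries)

scores = {rid: toy_anomaly_score(rec) for rid, rec in records.items()}
recall = canary_recall_at_k(scores, list(canaries), k=10)

if recall < 1.0:
    print(f"canary check failed: only {recall:.0%} of injected records in the top 10")
else:
    print("canary check passed")
```

Run on a schedule, a check like this behaves like a unit test for the detector: if a platform change shifts the data so much that the canaries drop out of the top of the ranking, that shows up before the incident responders notice.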

We've also looked at it from a completely machine learning perspective. We have two strategies. The first one is out-of-the-box things like SMOTE, right, where you try to undersample and oversample. And the second thing that we've gotten good results with (well, I've got preliminary results with) is using generative adversarial networks: you have some sort of malicious data and you try to bootstrap from that. So from a security perspective those are the three things, and from a machine learning perspective it's that. And also, one of the things with Microsoft is, because it has products across the stack, you've got Windows

covering, like, the Windows team covering the host level; you've got Office 365 covering the application level. One of the things we do is cross-pollination between products. So, you know, if you want to find out if a VM is compromised, and the hypothesis is, hey, a VM is compromised if it's sending out spam, we get the spam labels from O365 and join them with our Azure data. So we also have this cross-pollination, which is kind of different from the security and the ML strategies. Yeah. Oh, let's give a round of applause. [Applause]
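The cross-product labeling idea from the last answer (spam labels from one product joined with VM data from another) can be sketched as a simple join. The field names (`sender_ip`, `is_spam`, `vm_id`, `src_ip`) and the sample rows are hypothetical; the talk does not describe the real schemas or join key, so this only shows the shape of the technique.

```python
def flag_vms_sending_spam(vm_outbound_mail, spam_verdicts):
    """Join outbound-mail rows from VMs with spam verdicts on the sender IP.

    Returns a dict mapping each VM id to the sorted list of its source IPs
    that the mail product labeled as spam senders.
    """
    spam_senders = {v["sender_ip"] for v in spam_verdicts if v["is_spam"]}
    flagged = {}
    for row in vm_outbound_mail:
        if row["src_ip"] in spam_senders:
            flagged.setdefault(row["vm_id"], set()).add(row["src_ip"])
    return {vm_id: sorted(ips) for vm_id, ips in flagged.items()}


# Hypothetical verdicts from the mail product (documentation-range IPs).
spam_verdicts = [
    {"sender_ip": "203.0.113.7", "is_spam": True},
    {"sender_ip": "198.51.100.2", "is_spam": False},
]

# Hypothetical outbound-mail telemetry from the cloud platform.
vm_outbound_mail = [
    {"vm_id": "vm-a", "src_ip": "203.0.113.7"},
    {"vm_id": "vm-b", "src_ip": "198.51.100.2"},
    {"vm_id": "vm-c", "src_ip": "192.0.2.44"},
]

labels = flag_vms_sending_spam(vm_outbound_mail, spam_verdicts)
print(labels)
```

The flagged VMs can then serve as positive labels for a compromised-VM model, which is a different source of labels from the security-side and ML-side strategies mentioned in the answer.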