PG - The Current State of Adversarial Machine Learning - Heather Lawrence

Show transcript [en]

all right apologies for the technical difficulties my name is Heather Lawrence I'm presenting on adversarial example witchcraft or how to use alchemy to turn turtles into rifles there are a lot of details nuances on the slide deck if you want the resources and it's in nine font you're never gonna be able to read it please access the slides at this link hopefully you can read that and I will also have it on the end slide too if you need it sorry about that all right cool cool cool alright again I do data science at the Nebraska Applied Research Institute or out of the University of Nebraska system you can also find me on twitter under info

second on or snark victory let's also I know we're maybe okay so I'm about to play a video for you this is Google's state-of-the-art inception three model you'll notice that it's a turtle right you and I can identify that it's a turtle and the classifier can identify that it's a turtle however once you perturb the texture map I'm gonna go over perturbing here in a second basically adding noise to the texture map now it believes it's a rifle from every angle so what happens when an autonomous system cannot tell the difference between a turtle and a rifle in a surveillance state what you think is gonna happen a lot of dead turtles so a lot of the research in this space has

focused on image classifiers right so we could visually see what was going on that things were being misclassified it's obviously a lot harder in some of the other areas that machine learning has been applied to right it's harder to see that effect is happening but here and visual like you can visualize what's actually happening alright so some terminology I realized that everybody here is not a data scientist and that's for the better a classifier is a style of a machine learning algorithm I'm going to talk about a lot and it's going to determine a class so yes it's a turtle no it's not a turtle yes it's a rifle no it's not a rifle this is a classifier

I'm gonna say SVM a lot that means it's a support vector machine it's a type of algorithm how it works is not important for this discussion when I say perturbation I mean I'm adding noise that's it it's a fancy word for adding some special noise and then when I say adversarial example this is a worst case example for an algorithm right it's specially crafted that way so an outline I'm gonna go over a slight history we're in talks about the types of attacks what a blind spot is what's why that's important what an adversarial example is defense against adversarial examples some of the black box and white box research in this area a flight demo and the resources are all

at the end so timeline started out in 2004 obviously machine learning research has been long long established but in 2004 we had delvia al and I'm this is more of the academia of academia side because this is really Rick grounded in academia right now proof of concepts for InfoSec aren't there yet but we're getting there and I'll get to that in a minute so 2004 they came out with something called adversarial classification and it was basically looking at the spam detection domain you had a a victim and an attacking classifier and they're trying to fool each other in this game to figure out like if I do this will it detect it well the other classifier detect it and then

we move on to like who hang at all they define like a formal taxonomy behind all of these attack possibilities there are other notable contributions this is not an exhaustive list by any means of the imagination but I'm trying to hit on some of the really high points that have developed this research area let's see in 2012 Biggio at all they kind of discussed the consequences of injecting specially crafted attack data they call this a data poisoning and they they test it on an SVM at this point SVM is like the the model they all start with and then they start going into like deep neural network some of the more advanced machine learning algorithms out there Shogun II was the

first group to start retraining their classifiers with these adversarial examples to kind of see what happened and then we start doing black box black box attacks on like a DB AWS classifiers in Google Cloud and 2016 there are some really interesting current research I'll get to that in a second so poisoning versus evasion poisoning attacks happen before training you'll notice that you have us a part of the EM nough Stata set which is basically a bunch of pictures of handwriting and the idea is the classifier can determine what digit that is so the first image you see is a 7 the after the attack look at the all the noise that's been added and you'll

notice that the validation error kind of goes through the roof this is the same idea for evasion you have a bus you add noise now it's an ostrich does that look like an ostrich to you it doesn't look like enough if but we have right that context right we're humans we know what things are and are not classifier has no idea so the kind of attacks look like this there's causative attacks right manipulation of the training data before the classifiers trained data poisoning adding that specially crafted attack training examples before it's trained exploratory the classifier has been trained and we're attacking the already trained classifier and then a hybrid is a mix of those attack techniques now I

get into something called blind spot so you're probably asking yourself like why are we seeing like what what what is causing these adversarial examples to have such an impact and the idea is that noise right this noise that we talked about these perturbations they can mess with the classifiers decisions regions and the models decision space can be poorly defined and the example I like to use is pandas so let's say we're training our classifier an image classifier and we give it a whole bunch of pictures of what pandas are and what are not pandas I want you to think about the entire space of all the things that are not pandas imagine trying to provide

the classifier with every example of what a not panda is it's hard right there's a lot of overhead in that it's kind of impossible and this is like an ongoing research area nobody has been able yet to definitively approve where these come from why they exist and how to solve them but adversarial examples are kind of going towards that route trying to identify what blind spots are and why they exist and how to get rid of them okay so here be bugs as introspection into these algorithms increase obviously we're going to get some more bugs right so much like bug bounty programs where you're starting to get more eyes on a line of code we're

getting more eyes on our algorithms and trying to figure out how to break them remember that we have a talent shortage right of information security professionals it's estimated there's like a hundred and five thousand in the US alone but think about 22,000 experts looking at machine learning like algorithms and trying to break them right that's a factor of five and then in the US alone so we as we start training up more people who are fluent in machine learning algorithm so we're going to start to see more ways that we can break the classifiers so what our adversarial examples right data that presents the worst case to the classifier intentionally designed to make the classifier make the wrong

decision right examples for us right information security is detecting domain generic generation algorithms in command control infrastructure or my favorite how about generating malicious portable executables that are still malicious but they get by the classifier and are classified as benign there is an interesting or an interesting paper in this area where they reserved parts of the executable that were essential for it to execute right to work and then they perturbed everything else and remember remember like 10 years ago or like signatures are bad don't do signatures we have machine learning for this guess what we're back here again so now we have machine learning trying to detect these attacks and now we can subvert the

machine learning algorithm right adding on to that attack defense paradigm so some real-world examples of research that are out there adding the stickers to the stop sign for self-driving cars using eyeglass frames to subvert facial recognition systems or adding noise to verbal like okay Google to make it completely not register what you said to it these are kind of scary but remember when you used to throw the salt over your shoulder for good luck how about salt circles to trap self-driving cars we're like using alchemy to fool AI systems now this is a thing so generating adversarial examples again we're at which is adding specially crafted noise and we do this by using gradient ascent or creating descent and

one of the methods underneath that it's called fast gradient sign method I'm not going to get into math it's really not important basically you take each pixel you perturb it tour the the direction where you're gonna get the most error and you do that for the entire image or in case of video do it for the video so what can we do about adversarial examples we want robust algorithms we know that retraining from scratch increases misclassifications we know that retraining with a disjoint data set increases misclassifications however we also know that training with AD several examples reduces the misclassifications so if we took that into into consideration if we reduce the activation given two inputs that can be

tricked right we're reducing our the amount of exposure we have for that algorithm another way we could do it is keeping a human in the loop making sure it's not completely autonomous right it doesn't just do its own thing it got the the QA approval buck checkbox right and we're just letting it go and do its automation keep a human looking at it making sure that it's doing what it's supposed to be doing and then another version is adding consensus now instead of having one classifier to rule them all we have three classifiers that must come to a consensus about whether that input should be believed or not our training life cycle is probably going to change

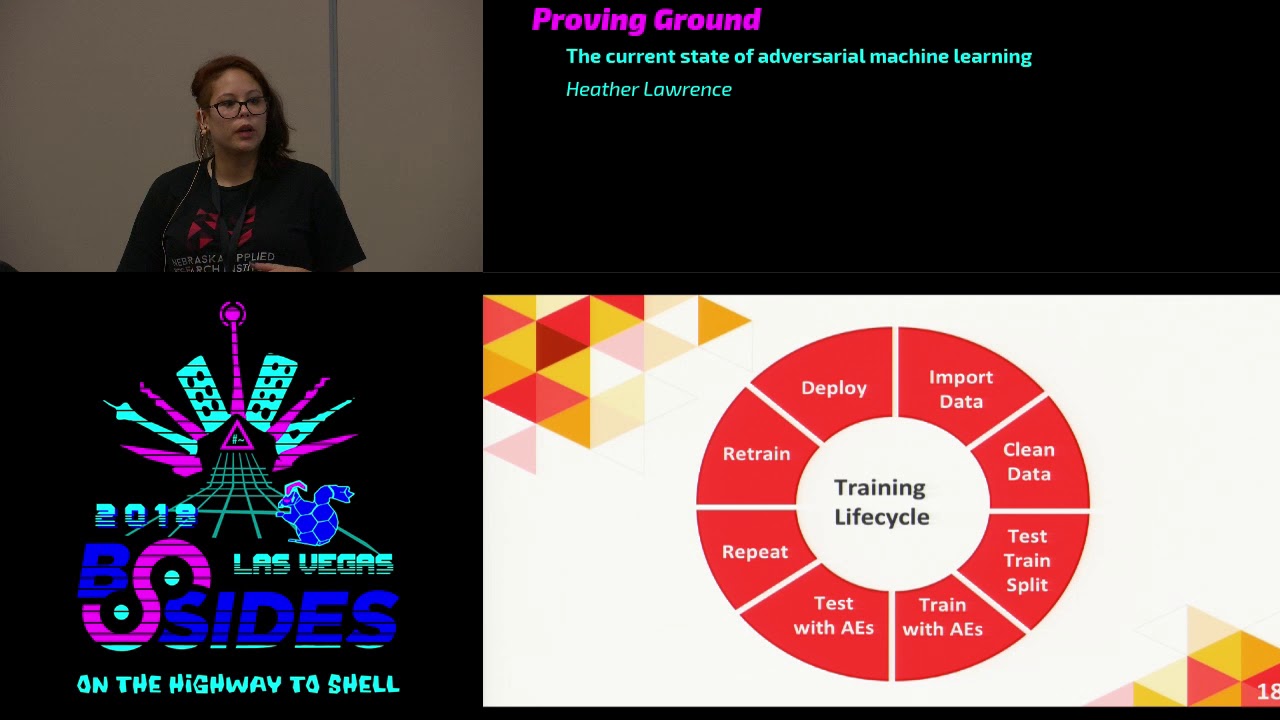

right if you're a data scientist maybe you work with this you'll recognize the import clean test test train split and then deploy with adversarial examples were increasing that training lifecycle we're generating adversarial examples we're testing with adversarial examples and we're repeating that until we get more coverage over that negative space that we were talking about earlier right providing all of those examples that we couldn't provide to the classifier and then deploying research in this area traditionally was kind of lackluster a lot of the earlier papers assumed easy-mode right the attacker has full access to the classifier and all the training data and all the test data right that's not realistic nobody's going to have that kind of access unless

you have an insider threat and several of the papers reference the information security community like look to them so we can have more robust attacks because we're really good at attacking things but blackbox research and I say that as in a recent less than five years time frame attacks can now be transferred between classifiers so before when I said white box like you had access to everything I don't need access to your classifier anymore I can attack your classifier with my classifier without touching your training data your attack your Viktor model your victim model becomes an Oracle so I can ask your victim a whole bunch of stuff how does this classify how does this classify and then use the

examples that it gives me to train my own classifier after that classifiers been trained I can generate adversarial examples on my classifier that work on your classifier which that's that's kind of scary I don't need to touch your classifier I don't need to touch its code I don't need to touches training data but I can still affect it and so here's like more of a visual understanding right an attacker has access to query or classifier the Oracle it tells me what it classifies certain things as I train it into class of the classifier B and then I use those adversarial examples that I generate against the first classifier what was interesting is that this image that you

see to your right is directly from the paper and what it shows is that it's the source machine learning technique is on the vertical and the target machine learning technique is on the horizontal so if I took my deep neural network right up there on the top and I trained it to attack your let's say decision tree anything that black column shows the increased miss classification rate so we're looking at 87 percent or if I took the SVM and trained it against another SVM that's a hundred percent that's how accurate I my adversarial examples that I generated are at attacking your classifier and making it miss classify so if you didn't notice from here right that hero's huge black

columns for SVM logistic regression and decision tree models you don't need to know really how they work just that they are more affected because they're differentiable deep neural networks are less affected by this phenomenon and they use something called reservoir sampling so remember I was talking about the Oracle and I have to query it I don't have to query it exhaustively in order to get the amount of training that I need to attack your classifier you would think that would I would need thousands right thousands of data points to figure out how it was classified but using reservoir sampling I take a random mix of those and I can reduce that querying amount down to the hundreds

instead of the thousands notable research in this area notice notice the date June 22 2018 alright more researchers are starting to use the limited information approach and they're taking their stuff and testing it against Google Cloud and AWS so they're taking Google clouds classifiers and trying to subvert them so if you're using Google cloud or AWS for their machine learning capabilities and I can subvert them what do you think that means for the greater community who's looking for this alright so demo in the event that you actually want to try this out on your own to the left is a QR code for TF classify which is an android app to the right is something is a it's an

adversarial patch it has been formulated specifically to be registered as a toaster does that look like a toaster to you a little bit a little bit like a toaster not completely like a toaster I wouldn't recognize that as a toaster I like that sir alright so demo right we have TF classify pair of glasses that's about 60 percent 60 to 70% we have the patch the patch is a toaster at about 30% that's not a toaster and then I have a duo souvenir and that's a granny smith apple right EF this was kind of like a remark about TF classify it was a it was trained on very limited data so some things that it may recognize

our may or may not be actually accurate but it does recognize the toaster references I pulled a bunch of papers academic papers for this if there was something that I didn't go into depth enough about that's why I have these references here so that you can go and pull these papers and read them for yourself to see I like how important these are right cuz it's really hard to hit on all of the nuances in 25 minutes there if you like machine learning and you're in cybersecurity and you have no idea where to get started I really love this github page because it includes so many resources of machine learning for cybersecurity data science in a box if

you just want to learn about data science and then and rue-ing's machine learning course is like the go-to course for machine learning right from Stanford a little dated still great information so takeaway it's right came in late machine learning algorithms can be attacked and algorithms like humans have blind spots Red Team your algorithms to increase robustness right we want to be strong against possible attackers we don't want them reprogram our reprogramming our machine learning algorithms that's kind of dangerous so like sequel injection classifiers require input validation right don't trust your user to just give information the classifier is going to use to continue to update make sure that you have some kind of input validation

or some kind of defense mechanism in an adversarial environment and again my name is Heather Lawrence I do data science at nari and you could get these slides at this link right here if there are any questions I would love to take them at this time all right let me work from the back forward so you mentioned current research they're testing against AWS and Google cloud and stuff like that and I think everybody in here probably knows the new hotness on the end point is your machine learning algorithms abandoning signature your crowd strikes silence and game do you know of any research that's currently going on that's kind of targeting kind of circumventing that endpoint protection or were those

machine learning algorithms or using it kind of I guess attack that stuff so I can't speak definitively on how they work mostly because the how they work is the bread and butter crown jewels you know IP that they protect right how they do it I do know that I am currently working on research in this area for information security I just got a $100,000 grant from Cisco in order to complete this research what were the the premise behind it is that we are we have an enterprise network set up through docker and a VPN connection and we're trying to figure out if you can use a classifier to fool the machine learning algorithm on the inside / that's using

an NG FW to block IP addresses so we are trying to like there is movement in this area to apply it directly to information security yes sir so on the research that you've done so far the wiggly reporting on is this basically poisoning the original data set or could have any of this be applied as a man-in-the-middle and doing this in real time pausing the results in a sense so it is kind of fuzzing the results right when you're clear querying the Oracle you're kind of trying to determine how the classifier is going to react so you're giving it data and it's like is it a panda is it not a panda so it is kind of like

fuzzing in real time but it depends on how the algorithm is implemented right so some algorithms do batch so it's it gets like five minutes of data updates the algorithm and then goes on that and sometimes it's on-the-fly instantaneous so it kind of matters on how it's set up in the backend yes sir all right Heather thank you we got to get the next speaker in here I'm sure you'll be around answering your questions outside the ring thank you [Applause]