You, me, and the HSM: E2EE's move to hardware


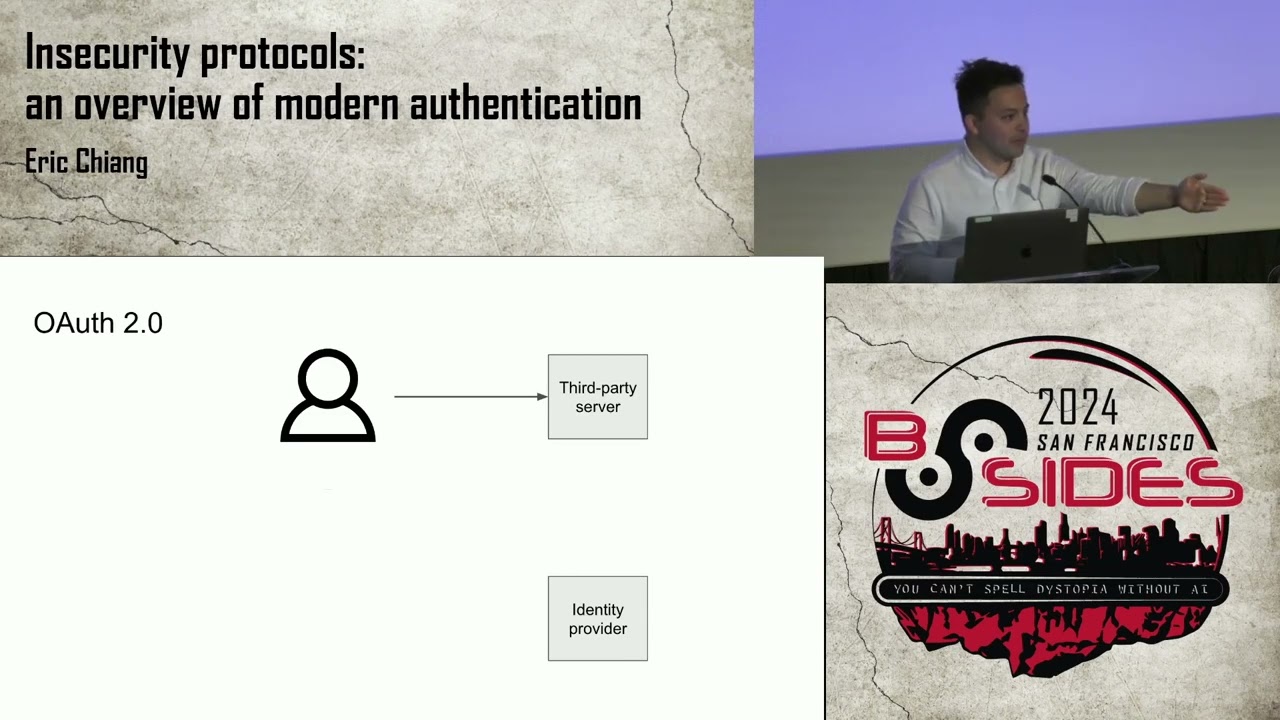

Hi everybody. You are all heroes for being at a talk at 3:30 on the second day, so I appreciate that. There are also sufficiently few people here that if you would like to sling abuse at me in real time, please just do that. I'm totally fine.

>> It's gonna happen.

So yeah, I think it's started. This talk is called "You, me, and the HSM: end-to-end encryption's move to hardware." My name is Eric. I recently co-founded a startup called Oblique. We're in the identity and group management space, so if you struggle with Okta, come hit us up. And that's the one time I will mention Oblique in this entire talk.

Previously, I was in the Google security org, on the corporate side, for six-plus years, doing all sorts of weird stuff with Linux devices and firewalls. I've also been involved in the Kubernetes community around SIG Auth specifically, and all of the wonderfulness of RBAC, which, yeah, the less said about that the better. So this talk is totally out of the blue in terms of my background. We're going to talk today about end-to-end encryption, and specifically some weird cases where end-to-end encrypted services are starting to use hardware security modules in places that might or might not surprise you. I will also give this disclaimer one time: I am not a cryptographer. So if you make any decisions based on any of the cryptography I go over in these slides, that's on you.

So we're going to talk about end-to-end encryption really briefly, just to get everybody on the same page. Then we're going to talk about three interesting uses of hardware security modules in end-to-end encryption: specifically WhatsApp, X (or Twitter), and then everyone's favorite, Signal.

First, just to make sure we're all using the same definitions: end-to-end encryption is largely the set of things that involve me having a conversation with you, or somebody, where the server is adversarial. You can construct some pretty easy ways of doing this. If I have your public key, I can just encrypt stuff to it and you can decrypt it; that's the old GPG way, for example. If you're familiar with the protocols used in things like WhatsApp and Signal, you'll learn that it gets incredibly complicated very quickly. You basically renegotiate keys on every single message. But the core takeaway is that I'm sending data through a server that I inherently don't trust, and that server should not be able to see any of the contents of that message. I text "hello" on one phone, you get it on yours, and the server can't see it.

End-to-end encryption is really starting to be the standard in lots of places that you would not necessarily consider privacy-focused organizations. There are some names on here that you like or don't like. But look at the sheer number of law enforcement requests these companies get. In researching this, I was looking at some of the transparency reports they put out, and Meta gets 600,000 requests a year from law enforcement. Could you even go through an inbox with 600,000 things in it? So I think all the big companies are feeling this pressure: having to respond to all of these is a huge cost center for them, and you can't have a lawyer look at each one. More and more, you're seeing services realize, "Oh wait, if we just put everything behind end-to-end encryption, then suddenly we have a really good excuse when we say, 'Sorry, we just can't deal with that request.'" No comment.

You're also seeing this in places where I think it's really appropriate. 1Password is a good example: you want your password manager to not live in a world where 1Password gets hacked and suddenly all your passwords show up. And there are also weird ones, like Ring, which recently got into hot water for giving video recordings to police and implemented some amount of end-to-end encryption that you can optionally turn on. As end-to-end encryption becomes something that we all use, rather than just the privacy-focused set of people who go to BSides, the real problem is that if you lose your key, you're screwed, right?

Like, you cannot go to the service provider and say, "Hi, I forgot my password. Can you just reset it and give me back all my data?" You can see this in basically all the warnings for anything that's opt-in: "Hello, quick reminder, we cannot save you if you get yourself in trouble." And what's really, really weird is that you're starting to see a lot of UIs that look like this. This is X encrypted DMs, which sit behind just a four-digit PIN. Again, I'm not a cryptographer, but: what? When X gets a law enforcement request, how does it say, "I'm sorry, officer, I could not possibly guess 10,000 PINs. There's no way we could ever unlock that"?

The secret behind some of this is a hand-wavy thing called hardware security modules. You probably all have an image in your head: if you go to RSA, there are hardware security module vendors everywhere, and you picture some box that you stick in a server rack. A hardware security module just means some code running somewhere with some tamper-proof property. So when I talk about HSMs today, don't think only about storing private keys. Think: there's something that has some guarantees about the software running on it, and if you got physical access to it, you probably couldn't do too much to it.

Cool. So this is the meat and potatoes: we get to talk about WhatsApp. WhatsApp has a service called Backup Key Vault. It's described in a 2021 white paper that articulates a bit of how they use hardware security modules to protect the passwords and PINs that you enter to encrypt your message backups. This has now been used extensively within Meta for many other services. Messenger, for example, is now fully end-to-end encrypted; they went through a huge effort to encrypt all of the historical data in Messenger. This is what the UI looks like. This is me locking myself out of Messenger in preparation for this talk because I forgot the PIN, so already we can see that PINs are not perfect. But this is the user experience: you show up on a phone that has no data whatsoever, and it says please enter, in this case, a six-digit PIN. Some of these have options where you can choose an alphanumeric password instead. Again, X is explicitly a four-digit PIN.

As for WhatsApp's HSM architecture (again, just think of an HSM as some specialized code running in some hardware): they run an entire cluster of HSMs with a consensus protocol running between them. Then there's a protocol called Noise, which is actually used in a few of the examples we're talking about. Noise is used in WireGuard and plays a role similar to TLS; it's the hot new cryptographic thing for setting up a tunnel between two parties that each have a public/private key pair. What WhatsApp does is publish the public keys of all of the HSMs. When you go to talk to one, you use its key to set up an encrypted tunnel, and inside it they use a particular password protocol called OPAQUE that I think is really quite interesting, and we're going to go into it right now.

A traditional password authentication protocol works like this. You send the password over to the server, and the server adds a salt and uses an algorithm like Argon2 or, historically, bcrypt to harden it. The combination of those two produces an output that you can check to see whether somebody entered the right password. You can arbitrarily turn up the pain, in terms of either the amount of CPU or the amount of memory these algorithms require, so if you accidentally leak the results, they would be hard to brute-force. Unfortunately, it's not very end-to-end of you to accept the user's password. Step one, "send me your password," does not cut it in a scenario where you do not trust the server.

So we have to talk about one particular cryptographic primitive, which is called an oblivious pseudorandom function (OPRF). The way an oblivious pseudorandom function works is that I have some data, like a password, and I would like to combine it with some other data that the server has; this could literally be an elliptic curve private key or a salt or something like that. The result of this primitive is an output that the client sees and that is deterministic: the same result every single time. But neither the client nor the server ever learns anything about the other side's input. In this case, the server would not learn anything about the password, the client would not learn anything about the salt, and the client always gets the same result.

You can implement this with elliptic curves, kind of classically: you take your password, map it to a point on an elliptic curve, take a really big random number, and multiply the point by it. The output is called a blinded element. You send that to the server, and the server multiplies it by its private value. It comes back, you multiply by the modular inverse of your random number, and you get a result. So it's basically a cryptographic way to take my input times the server's input and get a result, and because of math, it's hard to reverse in the other direction.

The problem with this, if you're thinking about something like a PIN where you want PIN backoff, is that if you enter the wrong PIN, I, as the server, want to know that, and the server doesn't get any information here. Even if I have the right password, I have no way of proving to the server that I actually entered it. So I could just keep retrying without the server knowing. This is where we get into a really fancy new protocol called OPAQUE. I think it's from around 2017, so that's bleeding edge in terms of cryptography; anything not out of the '90s is great. The trick is to combine that pseudorandom function with a key exchange. So the first thing you do is exchange some keys.
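For contrast, the traditional flow described above (the server receives the password, salts it, and runs a deliberately expensive hash) can be sketched with Python's standard library. This is a minimal sketch that swaps in `hashlib.scrypt`, which is memory-hard and ships with Python, for the Argon2 or bcrypt mentioned in the talk; the cost parameters and the `make_verifier`/`check_password` names are illustrative assumptions, not tuned recommendations.

```python
import hashlib
import hmac
import secrets

# scrypt cost knobs: n scales CPU and memory, r is the block size, p the
# parallelism. Memory per guess is roughly 128 * n * r bytes (about 16 MiB here).
SCRYPT_N, SCRYPT_R, SCRYPT_P = 2**14, 8, 1


def make_verifier(password: str) -> tuple[bytes, bytes]:
    """Server side: salt and harden a password the client sent in the clear."""
    salt = secrets.token_bytes(16)
    digest = hashlib.scrypt(password.encode(), salt=salt,
                            n=SCRYPT_N, r=SCRYPT_R, p=SCRYPT_P, dklen=32)
    return salt, digest  # store both; never store the password itself


def check_password(password: str, salt: bytes, digest: bytes) -> bool:
    """Recompute with the stored salt and compare in constant time."""
    candidate = hashlib.scrypt(password.encode(), salt=salt,
                               n=SCRYPT_N, r=SCRYPT_R, p=SCRYPT_P, dklen=32)
    return hmac.compare_digest(candidate, digest)


salt, digest = make_verifier("correct horse battery staple")
print(check_password("correct horse battery staple", salt, digest))  # True
print(check_password("1234", salt, digest))  # False
```

The weakness the talk points out is visible in the signature: `make_verifier` receives the plaintext password, so a server you don't trust sees it on every login.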
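The blind, evaluate, and unblind exchange described above can also be sketched in a few lines. To stay within the standard library, this sketch swaps the elliptic curve for the group of squares modulo a safe prime p = 2q + 1, generated at startup at a 64-bit toy size that is far too small for real use. The function names and the square-the-hash step are illustrative assumptions; standardized OPRFs (RFC 9497) use elliptic-curve groups with a proper hash-to-curve.

```python
import hashlib
import secrets

# Fixed Miller-Rabin bases; they give exact answers for every n < 2**64.
_BASES = (2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37)


def is_prime(n: int) -> bool:
    if n < 2:
        return False
    for b in _BASES:
        if n % b == 0:
            return n == b
    d, s = n - 1, 0
    while d % 2 == 0:
        d, s = d // 2, s + 1
    for a in _BASES:
        x = pow(a, d, n)
        if x in (1, n - 1):
            continue
        for _ in range(s - 1):
            x = pow(x, 2, n)
            if x == n - 1:
                break
        else:
            return False
    return True


def toy_safe_prime(bits: int = 64) -> tuple[int, int]:
    """Return (p, q) with p = 2q + 1 and both prime. 64 bits is a toy size."""
    while True:
        q = secrets.randbits(bits - 1) | (1 << (bits - 2)) | 1
        if is_prime(q) and is_prime(2 * q + 1):
            return 2 * q + 1, q


P, Q = toy_safe_prime()  # the squares mod P form a subgroup of prime order Q


def hash_to_group(pin: bytes) -> int:
    """Toy hash-to-group: hash, reduce mod P, square into the order-Q subgroup."""
    h = int.from_bytes(hashlib.sha256(pin).digest(), "big") % P
    return pow(h, 2, P)


def blind(pin: bytes) -> tuple[int, int]:
    """Client: raise the PIN's element to a fresh random r and keep r secret."""
    r = secrets.randbelow(Q - 1) + 1  # 1 <= r < Q, so r is invertible mod Q
    return r, pow(hash_to_group(pin), r, P)


def evaluate(server_key: int, blinded: int) -> int:
    """Server/HSM: apply its secret exponent without ever seeing the PIN."""
    return pow(blinded, server_key, P)


def finalize(pin: bytes, r: int, evaluated: int) -> bytes:
    """Client: undo r with its inverse mod Q, then hash the input and result."""
    unblinded = pow(evaluated, pow(r, -1, Q), P)  # equals hash_to_group(pin) ** k
    data = len(pin).to_bytes(4, "big") + pin + unblinded.to_bytes(8, "big")
    return hashlib.sha256(data).digest()


server_key = secrets.randbelow(Q - 1) + 1
r1, blinded1 = blind(b"1234")
r2, blinded2 = blind(b"1234")
out1 = finalize(b"1234", r1, evaluate(server_key, blinded1))
out2 = finalize(b"1234", r2, evaluate(server_key, blinded2))
print(out1 == out2)  # True: same PIN and same server key give the same output
```

Each run blinds with a fresh `r`, so the values the server sees are unrelated from one attempt to the next, yet the client's final output is stable. That also shows the gap the talk describes: nothing in `evaluate` tells the server whether the PIN was right.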

and this will there'll be a diagram on the next slide to demonstrate this. um and you use the output that result to encrypt a private key and you give that to the server and then later on if you can decrypt that you use that private key to prove to the server and say like okay I was able to decrypt this and therefore you can reset the pin retries so it kind of looks like this you have the output I pass this through a hardening function which is even cooler this is all in the sense of pin retries but you can actually just use this for authentication too um and that result encrypts my private key I give to the

server and if I am able to decrypt that later then I've proved that I've entered the right password. One nifty part of this if you're using this just for authentication is actually uh the client is doing all of like the brypt of the argon 2 so your server can actually offload all of that uh computation on the client which is great. Uh, and then there's a little note in here around, okay, don't worry if you try the wrong pin too many times, we rerender this totally inaccessible and the source is trust us, right? Um this is kind of the weird part that we get to of the talk where certainly in WhatsApp there is no evidence in other than white

papers that they are doing those pin back offs that there is even a cluster of HSM somewhere and you just kind of have to trust it and and WhatsApp is a weird space in general like they are one of the anti- encrypted services that like stores all of your metadata for example about the messages so maybe this is correct but this kind of hits another set of problems which is like how do we know those servers are doing the right thing? This is where we talk about X or Twitter. Okay, so X encrypted DMs. It's a fourdigit code pin always um and it's has a similar setup of a cluster of HSM to do this and they use a really

interesting protocol called Juicebox which I'm going to get into in a little bit. and Juicebox. Uh, one of the authors is Moxy Morlin Spike, who you might know from Signal. So, he and a bunch of cryptographers got together and wrote an incredibly complicated thing that I'm going to try to explain in a bit. Uh, one small note, uh, for X- encrypted messengers, your AI girlfriend and boyfriends are not encrypted. So, that's uh, you know, do with that what you will. I would think this is the one thing you want X not to be able to see, but that's that's their own choice. All right. Right. So, Juicebox, the idea, but the idea behind Juicebox is you know

what, it's really hard to trust one person to do pin back off or pin retries back off. What if we somehow split this between three people or four people or five people or whatever, you know, your threshold can be. So the whole goal of here is that you know maybe I don't trust one individual um sort of entity or tenant or realm is what they're called to do the correct thing in terms of backing off the pins or backing off the pin retries. But maybe if we split this responsibility between a few people that it would take a lot to coordinate between all of those sets of um entities to you know recover your uh do the brute forcing AC across

these and sometimes this can run in hardware it can run in software. Again HSM are just specialized hardware that runs software. The way it works, and this is the handwaviest part of the talk in all capacities, there's a thing called Shamir's secret sharing. If you've ever used vault and done the unlock, what it does is you can take a random value and you can say, I would like to split it against n number of shards with a certain threshold. So five and three maybe and you get out some math bits and if you can recover any of those three, any permutation of those three, you can recover the input. And again this is used for uh vault you

can to do their uh master key unlock where you you know have you split that key between a certain number of shares and you use that to recover the core disc encryption key. Um juicebox which again is far far far more complicated than this slide entails is basically splitting your pin into many different shares and then running an oblivious random function pseudo random function algorithm on top of that. Um, again, this is a little bit more complicated than just doing opaque uh several different times. Um, but yeah, check out the the research paper if you're interested in this kind of stuff. So, yeah, it splits it across different realms. We can then at least say you'd

have to coordinate between, you know, three of five realms in order to brute force this, right? That would have to be agreement between many parties, which is great because uh this is this is actually happens a lot where security teams are like, well, you know, if one person was able to push code, that would be problematic, but if you had to have review, then we're we're all good, right? Like you add the N plus one and it makes collabor collusion much harder. Um in terms of trusting themselves, but we'll get to in the next slide. um they just recorded themselves unboxing the HSMs and racking them and it's like a 7hour total of video with like some

notes about what you know public keys was extracted out of them. Um I did not watch that all for the purposes of this talk but and maybe you you know maybe this will cause you to trust X. Who knows? Um, as soon as it came out that X was using this, the authors of Juicebox immediately published a paper saying this completely defeats the purpose of Juicebox. The whole point is to have the tenants running by different realms uh or realms by different organizations. It doesn't make sense if you're doing this all under the same space. Um, my theory of why X is doing this is what nice about Juicebox is you can avoid the need

for consensus protocol between your HSMs because you need to consult every single one. So rather than having to do all this crazy like paxos or raft between your HSM which sounds very complicated, you just say okay you know I'm running this in a redundant manner and you have to consult many of these to do the pin back off. Uh anyway, you can, you know, take whatever you want from this, but yeah, I would be reticent to not mention that the Juicebox authors are not particularly enthusiastic about this particular setup, which is where we get to everyone's favorite uh signal. Uh so, Signal has a pin system. You can it is that minimum four digits. You can

answer alpha numeric. Who has actually seen this and has no idea what the heck it does? Okay. Yeah, like most of you. Great. Um, this is used to encrypt your contacts and certain metadata about groups. It importantly is not used for signal secure backups, which is a uh in beta right now. And I think a little bit of it was because of some of the feedback they got on the slides I'm about to get for uh go over in in some of the uh by some of the prominent like cryptographers in the community. It's got to be hard to be signal, right? You got to like do everything at the top level. All right, but the coolest part is that

it's all open source. Um, somehow this repo only has 58 stars uh and is like some of the more interesting cryptography I've seen. But the the system is called secure value recovery. Uh, there's been several different versions. This is two, but it's actually holds the all the code for version 2, three, and four. And earlier versions of this use a system called uh Intel SGX. So I talked a little bit about how HSM are just places that you can run code. Um, one of the primitives of that kind of a thing is called a trusted execution environment. This is a generalized term for I don't know a trusted space in your computer to run. This is where like if

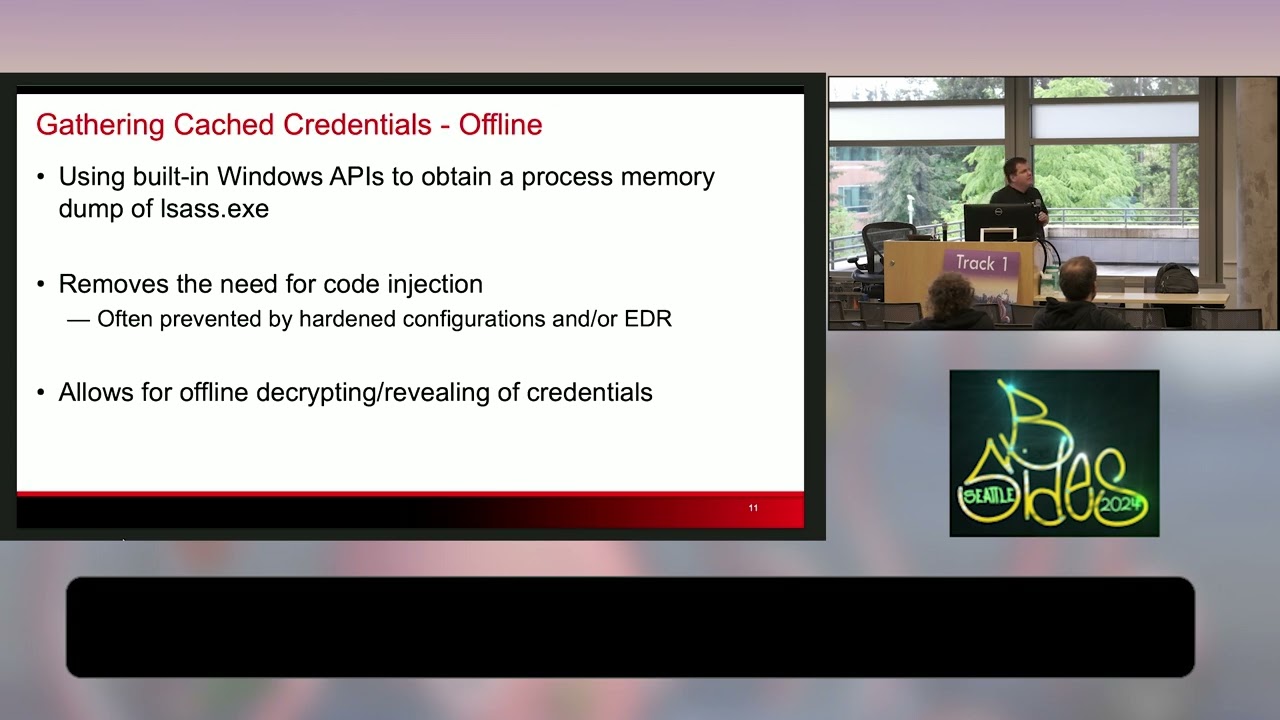

you have a trusted platform module that code that implements that might be in this space. They are definitely very resource constrained environments. Uh you don't get an operating system in those spaces. So you don't get things like threads that developers love. So it's generally not a fun place to develop in, but you can write very resource constrained stuff to run in there. The cool part about this is that if you're running within an Intel XGX trusted execution environment, you can ask the uh that environment for a quote which is a attestation that you are running there and then any measurements about the code that is running in that environment and the signatures chain back to the Intel certificate

authorities which is really cool. There's it's incredibly complicated how those certificates are provisioned. We actually don't have to worry about this in this talk. So, I'm definitely not going to go into it. I would also had to put this in there. Security uh security researchers love hacking these things. Um there have been dozens of attacks since Intel like put these on the market. Um they do run in specific cloud providers. Uh but if you talk to a security researcher, they'll probably be like, "Yeah, you know, we could probably hack into that." So, again, use the think about that what you will. Um the way it works is that oh no excuse. Uh the way it works is that the code

that's running in the trusted execution environment generates a key pair as it's running and then requests a quote over the that key pair. Uh that quote includes again the public key as well as the measurement of the exact hash of the data that's running there. uh you then do a noise protocol uh I think even between clients as well as individualized uh HSM and the client while it's doing that connection will pull out that quote attest up to Intel that is the correct one validate the hash and then validate that the key that's in the quote is being used to have that conversation so you're now at the point where you have uh a strong confidence that the

conversation on the other side is running in this trusted execution environment uh and you can see this just in lib signal itself. So, you know, if you have signal on your phone, these hashes are just embedded in the client that you're running where all it says is, you know, for every single version, these are the hashes that we accept. And when you do this handoff, the first thing you do is say, does the hash that Intel attested to match this hash? So, you know that you're talking to a known uh piece of software. And then there have been several versions of this. So uh v1 was uh basically doing raft based syncing between different um trusted execution

environments. So you could run multiples of these. They do the same noise handoff where they have to be talking to the same um piece of software. Actually there might be a chicken and egg there but let's ignore that. Um and then all it was doing for a while is just I have a pin and a private key and I'll store the pin. If you guess the pin correctly, here's the private key. Um there was a rewrite for scalability and then in V3 they added support for other execution environments. Uh uh Nitro was one of them from uh AWS and uh the snap or I forget what the other one is. Uh they also switched to the oblivious uh

pseudorandom function based print protocol. So they no longer store your PIN. They store whatever the input for that protocol is required. So, I'm about out of time. And I think what you might all be asking is, is this end encrypted? Um, and I think what I love about the messiness of these particular schemas is that it really hammers home that cryptography is never never a standalone. it's always integrated in hardware and software and you have to trust organizations and it's awful. You know, just the fact that you think that the version of Signal running on your phone is the correct version already has many, you know, leaps and bounds to get there. And I think that

what's really cool about these PIN derivation things is that it's just putting this right in your face of like, hey, sorry, you don't actually just get to trust math. That would be a great world. You have to trust the organizations that are they're doing the right things and that they've actually, you know, done the steps to encrypt your data to get you the client that you expect and all of this good things. Ask Pete Heads about that. >> And that's it. Right out of time. So, thank you. The slides are we have a question in the back also. You can just come find me too afterwards. I'm happy to. Yeah. >> Yeah. I was just wondering uh you

mentioned Moxy and you had a slide on on Grock. Have you uh been looking into any of this work on private inferring? >> Yeah. So, Confer is really interesting, which is what you're talking about. Confer actually uses pass keys instead. I actually wrote a blog about this. If you go to oblique.security security and go to blog. I wrote a a key a thing about how pass keys are actually the new generation of this where you don't enter a pin. You uh there's a special extension to the pass key that is used to establish your end to end data with whatever whether or not you trust that the LLM is running in the TE. I'll leave

that to you. >> Yeah. uh is there any published work or has have you seen anybody talking about the risk of forcing account lockouts by sort of maliciously trying pins and are there protections in place like the account? So the question was if you know if you're malicious and want to force the retries and break that um my so anything in this is not unauthenticated you've already logged in correctly to the correct WhatsApp account or Facebook account or signal you know you've established that you have this phone number so it's generally post authentication here and then at the point where they're in your thing you're you're cooked anyway right all right thanks everyone
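The OPAQUE-style "prove you could decrypt it, then reset the retries" logic, combined with the "if you try the wrong PIN too many times, we render this totally inaccessible" promise, amounts to a small state machine inside the HSM. The sketch below is hypothetical throughout: `GuessLimitedRecord`, `derive_auth_key`, and `login` are invented names, HMAC stands in for the blinded OPRF evaluation (so, unlike a real deployment, this toy server sees the PIN-derived input), and an HMAC challenge-response stands in for the private-key proof.

```python
import hashlib
import hmac
import secrets


class GuessLimitedRecord:
    """Hypothetical HSM-side record: a per-user OPRF key plus a guess budget."""

    def __init__(self, max_guesses: int = 10):
        self._oprf_key = secrets.token_bytes(32)
        self._auth_key = None  # set by register()
        self.max_guesses = max_guesses
        self.guesses_left = max_guesses
        self.destroyed = False

    def evaluate(self, element: bytes) -> bytes:
        """Charge a guess *before* answering; wipe everything once the budget is gone."""
        if self.destroyed or self.guesses_left <= 0:
            self._oprf_key, self._auth_key, self.destroyed = b"", None, True
            raise PermissionError("out of guesses: record destroyed")
        self.guesses_left -= 1
        # Stand-in for evaluating a *blinded* element with the OPRF key.
        return hmac.new(self._oprf_key, element, hashlib.sha256).digest()

    def register(self, auth_key: bytes) -> None:
        """Enrollment: remember the verifier and start with a full budget."""
        self._auth_key = auth_key
        self.guesses_left = self.max_guesses

    def prove(self, challenge: bytes, tag: bytes) -> bool:
        """A valid tag shows the client derived the right key, so reset the budget."""
        if self._auth_key is None:
            return False
        expected = hmac.new(self._auth_key, challenge, hashlib.sha256).digest()
        if hmac.compare_digest(expected, tag):
            self.guesses_left = self.max_guesses
            return True
        return False


def derive_auth_key(record: GuessLimitedRecord, pin: str) -> bytes:
    """Client: turn the (stand-in) OPRF output into an authentication key."""
    rwd = record.evaluate(pin.encode())
    return hmac.new(rwd, b"auth-key", hashlib.sha256).digest()


def login(record: GuessLimitedRecord, pin: str) -> bool:
    auth_key = derive_auth_key(record, pin)
    challenge = secrets.token_bytes(16)  # in a real protocol the HSM picks this
    tag = hmac.new(auth_key, challenge, hashlib.sha256).digest()
    return record.prove(challenge, tag)


record = GuessLimitedRecord(max_guesses=3)
record.register(derive_auth_key(record, "4821"))   # enrollment
print(login(record, "0000"), record.guesses_left)  # False 2
print(login(record, "4821"), record.guesses_left)  # True 3
for guess in ("0000", "1111", "2222"):              # a short brute-force run
    login(record, guess)
try:
    login(record, "4821")  # even the right PIN now arrives too late
except PermissionError as err:
    print(err)  # out of guesses: record destroyed
```

Whoever holds `_oprf_key` and the verifier could test all 10,000 four-digit PINs offline, so the whole guarantee rests on that state living somewhere that really enforces `evaluate`'s budget. That is exactly the "trust us" question the talk raises.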
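Shamir's secret sharing, as used for Vault's unseal and as one ingredient of Juicebox, fits in a few dozen lines. This sketch works over the prime field of size 2**127 - 1 (a Mersenne prime, chosen here only for convenience); the `split`/`recover` names are illustrative, and it has none of Juicebox's OPRF layer or per-realm guess limits.

```python
import secrets

PRIME = 2**127 - 1  # field modulus; secrets must be smaller than this


def split(secret: int, n: int, k: int) -> list[tuple[int, int]]:
    """Split `secret` into n shares, any k of which recover it."""
    if not 0 <= secret < PRIME or not 1 <= k <= n:
        raise ValueError("bad parameters")
    # Random polynomial of degree k - 1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(k - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule, highest degree first
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover(shares: list[tuple[int, int]]) -> int:
    """Lagrange-interpolate the polynomial through the shares at x = 0."""
    total = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = num * (-xj) % PRIME
                den = den * (xi - xj) % PRIME
        total = (total + yi * num * pow(den, -1, PRIME)) % PRIME
    return total


secret = secrets.randbelow(PRIME)
shares = split(secret, n=5, k=3)
print(recover(shares[:3]) == secret)                          # True
print(recover([shares[0], shares[2], shares[4]]) == secret)   # True
```

With only two of the five shares, `recover` interpolates a line rather than the real degree-2 polynomial, and the value it returns is unrelated to the secret. That is the "three of five realms would have to collude" property from the talk.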
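The client-side check described for Signal's Secure Value Recovery asks two questions: does the attested measurement match a hash shipped in the client, and is the key inside the quote the one used for the Noise handshake? It looks roughly like this. Everything here is a hypothetical simplification. `Quote`, `ACCEPTED_MEASUREMENTS`, and `check_quote` are invented names, the quote is a plain dataclass rather than a real SGX quote, the measurements are placeholders, and verifying Intel's signature chain is left out entirely. This is not libsignal's API.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical allowlist of enclave measurements compiled into this client
# release. libsignal embeds real values; these are placeholders.
ACCEPTED_MEASUREMENTS = {
    bytes.fromhex("aa" * 32),
    bytes.fromhex("bb" * 32),
}


@dataclass(frozen=True)
class Quote:
    measurement: bytes  # hash of the code loaded into the enclave
    report_data: bytes  # data the enclave bound into the quote


def check_quote(quote: Quote, handshake_public_key: bytes) -> bool:
    """Accept only known code that is bound to the key used in the handshake.

    A real client must first verify the quote's signature chain back to the
    vendor's root of trust; that step is deliberately omitted here.
    """
    known_code = any(hmac.compare_digest(quote.measurement, m)
                     for m in ACCEPTED_MEASUREMENTS)
    expected_binding = hashlib.sha256(handshake_public_key).digest()
    bound_to_key = hmac.compare_digest(quote.report_data, expected_binding)
    return known_code and bound_to_key


enclave_key = b"enclave noise static public key"  # placeholder bytes
good = Quote(bytes.fromhex("aa" * 32), hashlib.sha256(enclave_key).digest())
print(check_quote(good, enclave_key))        # True
print(check_quote(good, b"attacker key"))    # False: quote bound to another key
unknown = Quote(bytes.fromhex("cc" * 32), good.report_data)
print(check_quote(unknown, enclave_key))     # False: unrecognized code
```

Binding the handshake key into the quote is what stops someone from replaying a genuine enclave's quote while terminating the Noise tunnel on their own machine.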