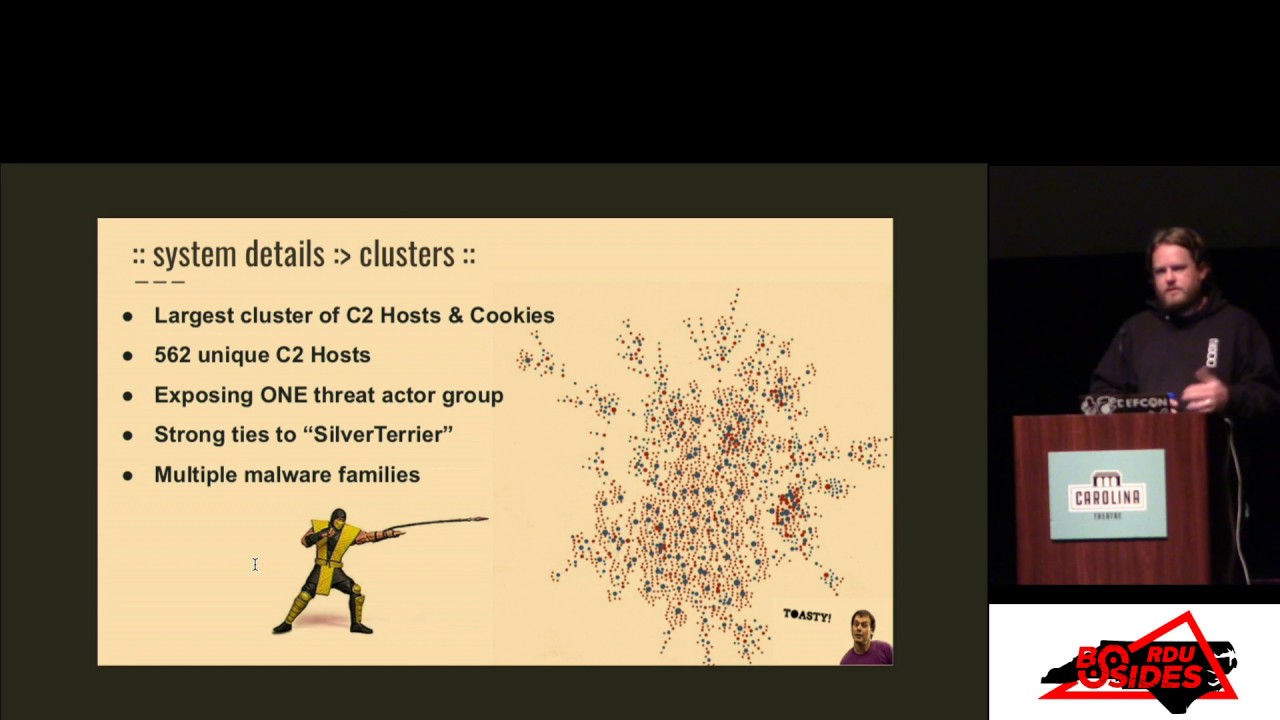

BsidesRDU 2018 02 - Approaching Parity Considerations for adapting enterprise monitoring - Matt

Show transcript [en]

okay all right we're live all right our next talk is approaching parity but you can see it here considerations for adapting enterprise monitoring and incident response capabilities for efficacy in the cloud environments and how to operationalize those capabilities with a playbook mat our next beaker is an information security practitioner with over 20 years of IT experience he helps protect Cisco's Network and assets as a first responder on the computer security incident response team he participates in groups such as the defense security information exchange and North Carolina InfraGard and Matt's hobbies are include making his own sauerkraut and competitive rifle shooting welcome Ana [Applause]

I'm pleased to be here good morning thank you for joining us thanks for the b-sides crew for everything that they've done to put this together so here's my contact information my role at Cisco see certain involved protecting corporate assets by serving as an incident responder my one of my focuses is on monitoring and response in cloud environments and that's what we're going to talk about today so this talk is about how c-cert adapts our security monitoring and ir posture for monitoring and infrastructure as a service cloud environment and the story is based on lessons learned to date from monitoring some Amazon Web Services tenants so it also includes top of mind from evaluating different environments not

just AWS and I should point out that although a lot of the terms that I use our AWS specific we see architectural similarities with other vendors and so the concepts that I talk about should apply just as well to other environments and finally this this talk is intended for security practitioners incident responders and basically anyone who's role in involves puts them in a place where they're responsible for monitoring and responding in cloud environments so what do we want well starting off no matter which environment your organization consumes I think it's safe to say that we pretty much want the same thing right we don't want to lose visibility in cloud environments we want a tool set that allows us to detect

compromise and of course we want to maintain and hopefully improve our efficacy as responders so where do we start with that and as an aside first I would just say remember that an infrastructure as a service typically the the logs must be enabled they're not on by default and that's because they typically incur storage charges mm-hmm so we can we can start by examining native vendor monitoring capabilities for one doing so will help illustrate the different vendor names for similar technologies and you can maintain your own surveys like the ones that I've put together here but vendors are now publishing their own guides and quite frankly it's easier just to use the ones that they've put together an

example is the Google cloud platform for AWS professionals guide and then second knowing these native capabilities will help you plan your security monitoring architecture and your kit so where native capability gaps exist you can then look toward workarounds to bridge the gap or third-party solutions and some third-party solutions design their kit to integrate with multiple IaaS vendors right so and the native capability will dictate differences and how the third-party solution implements for example the stealthWatch cloud is something that natively it consumes native flows and Amazon Web Services but due to the architectural differences if you were to deploy in other environments you would be required to use a sensor for those collections so new features

and releases and upgraded tooling tend to be the norm for many providers it's sort of the odd week that I look in my email and don't see notification of yet another feature release or or improvement from AWS and also keep in mind that there's an almost can end Liske --nt in UUM of KITT you can evaluate but you need to make sure that you don't let that distract you from your mission which is to obtain the security logs that you need and make them actionable and then I have one example that's I'll share with you so stealthWatch cloud which is a cisco acquisition consumes cloud trail and AWS virtual private private cloud flow logs and it works the product works to solve

to try and solve the context question around the network and infrastructure events in the cloud the idea is that by baselining what is normal so it can alert you to what is what is abnormal and and just from my own experience in the week before we were to go live Amazon Web Services released their equivalent solution guard duty that had a similar enough feature set that it gave us pause on our deployment so we could take a look and reevaluate the feature sets another consideration so for environments where you're responsible for monitoring multiple tenants I think attribution information is important tenant owners are typically the ones who will be able to provide context around events right and in

knowing context is is important because obviously we don't want to bother tenants with alerts that are not meaningful for them so when we see interesting events taking place from our kit we need to know things like is is that a brute-force attack or is it just a broken script or is that a compromised machine instance that we've detected is what is it what does it do is it a test host that we can isolate and or just shut down or is it the web interface for something more important like a revenue-generating service these are important questions we want to bring the appropriate response to the condition that we detect so tenant context can help paint the picture in

these situations and the example that I'm showing here is a a Splunk dashboard where we try to enrich context data from AWS so that it always this is that it also includes employee specific attributes logging events is sort of a talk unto itself or as Blaine is here there he is but here are some things to think about so first we're possible relegate alerting to a single mechanism your analyst will thank you we use bunk next consider that some logs will be more interesting from a security monitoring perspective than others and into that and third-party solutions and software development kits can increase the volume of the read or describe type of events that are logged and these obviously

might be less interesting to a responder than write or update events and so to limit log ingestion volume particularly or that's a concern for a cost where appropriate I suggest consider indexing only the more interesting parts into your scene leave the less than interesting logs and their native repository and then you know because they may later come in handy for investigations and you can seek them out only if needed and then also be prepared for the scarcity of logs to serverless service offerings tend to abstract the virtual machine for example so you can run DNS using route 53 but just as that machine is abstracted keep in mind that so - maybe the logs that would be used

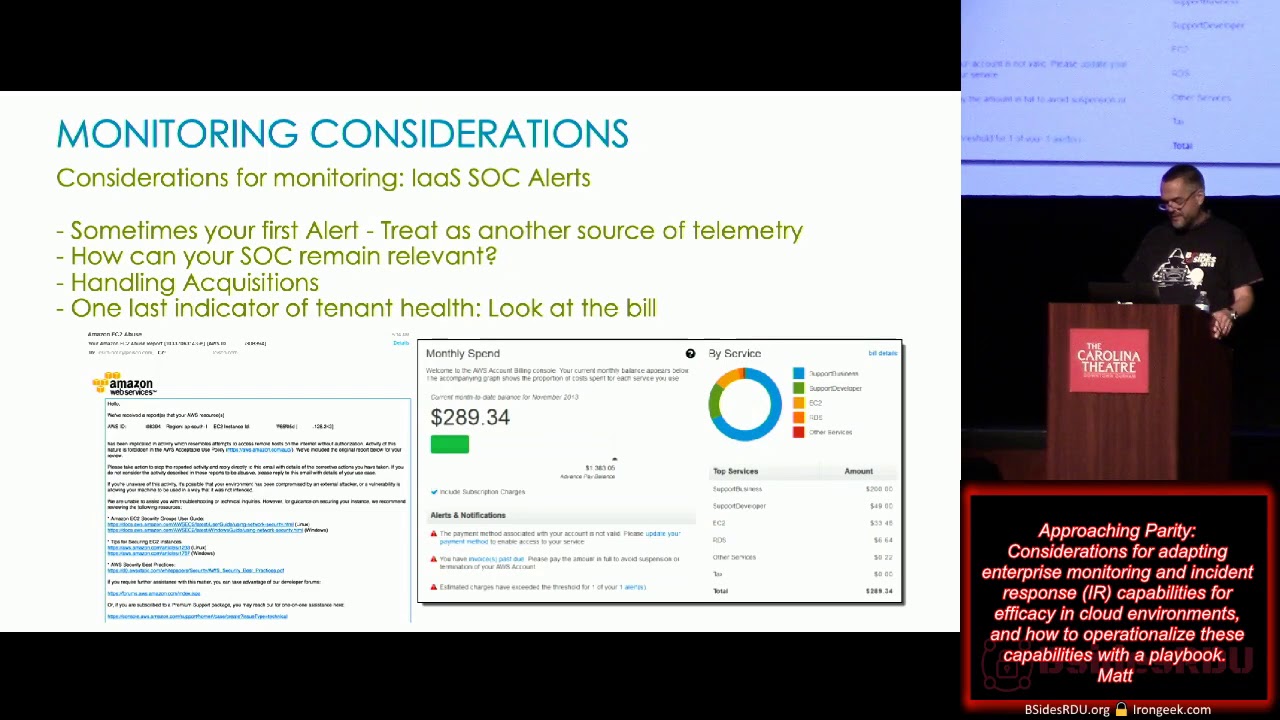

to go along with it and then one other thing to prepare for is the infrastructure-as-a-service sock may alert think AWS ec2 abuse notifications for those who are familiar with these but the sock will sometimes lack the attribution in context that you that you know about anything that's organization's specific that can give that important context around those events and let you decide whether that's just an event or an actual incident but I will say that until you've deployed your monitoring solution these alerts can be used as another layer of telemetry it in either case be prepared because the sock will contact you and you at a minimum need to have some response capability and then one last

consideration is is the bill it's a great it's a great quick check if if you know what your typical monthly charges are this can be a great indicator so for example if your bill goes from five hundred bucks a month to fifty thousand that's that's a pretty good sign that something's changed and you just have to figure out what and why so now let's switch gears and I'll talk a little bit about how the AWS environment that c-cert monitors so this is a simplified picture and version of the story continuous security buddy is an internal tool set that we use to monitor our tenants we came about a year ago to have multiple company owned tenants this

adoption happened fairly rapidly over the course of a few months and at that time Cesar was working on our initial AWS monitoring solution based mainly on cloud trail logs and billing logs and a little bit more that I'll get to in a moment and we were fortunate in that our kit was a by our InfoSec group at large and became a core component of the continuous security buddy monitoring so the the kit initially had a button click aspect which everyone here knows that if you need to ask your users to click a button you're already behind the game so the initial version was suboptimal we don't want our telemetry or kit deployment to rely on the tenant taking action so

there is a caret-- component for those who have may have multiple tenants to roll up under a corporate account and to the tune of discounted pricing that's something you need to talk to your infrastructure as a provider or representative of course about but the kit could be installed via the command line or via browser simply put the solution uses template stacks in order to deploy roles the roles that are installed include an investigator role that can be activated on a temporary basis and it looks for the kit also looks for and reports on permissive s3 policies provides VM and other best practice notifications so once this gets installed were able to capture cloud trail locks were able to capture VPC

flow and OS query which you can think of those is it's it's an open source that will give you inventory information on the local machine so yeah cloud trail is the AWS name for the infrastructure logs stealthWatch cloud will ingest the VPC flow and provide alerting and then as I mentioned the OS query captures host level inventory so but capturing just these three things is it's just a start there are so many other log sources that we may want to use every practitioner knows that the more telemetry that the the more layers of telemetry that you have available the better equipped you are to detect and respond to security incidents again recall when making decisions about what

sorts of logs you'll go after that the vendor native capability surveys will really help guide your efforts on which tool is best to use in your environment so so we're ingesting lots of logs right just like this dog trying to drink from the sprinkler but but how do we make sense of so much so much log data and it's a good question right so now that I have this mountain of data what do I do with it and so what I'd like to touch on now is a short overview of the c-cert playbook and then just stepping back from that for a moment if you think in terms of a recipe for an incident response shop these are sort of the

ingredients that we think are required to bring it through Incident Response capability to bear right you have to have management support you have to have the technology in place you have to have people who know what to do with the with with with the technology and then you have to have process written around that otherwise everything is has to be done manually and so we use a playbook that is the process by which we automate our monitoring response and we also use continuous feedback to on the place to help ensure efficacy so the question is well first of all playbook is a talk all into itself and the question often comes up what does our PlayBook look like so I

and I will show an example here but if you would like it the discussion some of the members of our sea sir family have written about our playbook approach so a a lot of people are surprised to learn that we don't use a traditional seam monitoring solution for the work that we do and some of them are also surprised that to learn that even though we are a Splunk shop we don't rely on Splunk Enterprise security rather our approach is that we write each of our own plays internally and so what you see here are the this is the development process and brief behind each play and it's each plate that we write or looking

through Splunk and we're trying in order to garner the results but each one of those queries is written based on the questions that you see here what am I trying to protect right what are the threats against it how can I detect them and then how do we respond when we see these things happen so here's an example play an actual Splunk query from one of our plays we have over 200 plays deployed to monitor and protect our networks this example play is based on AWS cloud trail logs and it alerts us to the application of overly permissive s3 bucket policy which everyone knows if that's unchecked or unintentional can can result in data loss and I'm probably

going really fast here so I apologize I want to touch on IR containment and a little bit about mitigation next so what's the model for IR in the cloud the the providers commonly describe a shared model for security and it goes something like this they the the provider does the things like securing the data center right they secure the electricity the facility the infrastructure the heating and cooling etc and then you take responsibility as you get nearer to the virtual machine instance a storage bucket or the the service layer right but really what all this means is that you the tenant owner is ultimately on the hook for security and as an aside the c-cert is that I

work for is a small shop and in our case we are neither intended nor are we resourced to perform the tenant support function so likewise when an incident is detected the first action is typically to contact the tenant owner for additional context containment and then ultimately remediation activity so a little bit more on the shared responsibility essentially it's the cert working incidents with the tenant whenever possible to ensure that the tenant mitigates and contains detected security incidents as appropriate so this includes things like using tenant context in order to get the correct response if you think back to my example about a machine right we before we isolate it we would really like to know

as much as possible about it is it development is it production is it a machine that generates revenue for the company it's important that we bring the correct response to bear so and how do you really know in environments where you're responsible for mold monitoring multiple tenants or what function that host provides in absence of that context right now of course we can read to that environment as needed and assume the role that allows our investigators to come in and value exactly

fix a family member's laptop right who doesn't know anything about computers once you've done that you now own everything that goes wrong with that thing from from then on also as a cert we don't generate our revenue by selling security products rather we demonstrate our value to the organization by helping mitigate risk and this value is can be expressed in terms like in numbers right yes so you can talk about things like time to detect and time to contain generally speaking one way to gauge the efficacy of your service by using these numbers and so this is an example timeline of an incident response you know you have what the stages where the analyst determines a compromise has

occurred the analyst notifies the tenant and the tenant takes a mitigating action right so that brings you from detection to containment and that sort of encompasses the timeline now of course there may be examples where the numbers will turn out differently of course shops can often bring forensic capabilities to bear if the case warrants warrants it but that doesn't happen for every incident that we detect in some cases it's simply remediate get the tenant back to a known good configuration and then drive on but think about this timeline for the next examples that I'll share with you so this is that I our shared model when it works as expected so in this case we

detected validated contacted the tenant and advised and the tenant remediated and notified us of such the impact was not disruptive and we closed the case all this took place in under a day and again you the tenant are responsible for you to cloud security most public cloud environments oh no I think we've already covered this yeah I don't want to chase that any further so one thing to think about is without attribution without knowing the tenant contact to get in touch with to look into this you know what what sort of problems can you encounter it could be you know problems mitigating problems it could have a negative business impact runs the whole gamut that was one that

shows when it works as intended but also I will share a case where them in States gap and this is a case where you know due to a mixture of attribution problems potentially unresponsive tenant and a daily continued influx of new abuse notifications really lasted a lot longer than there's ideal so but it raises a good question because we come across this and the question is what happens when the tenant contact is unresponsive you will occasionally run across I called them derelict tenants the lights are on but no one answers the door so in that case you your sock investigator may have to invoke a privileged role and take action right my suggestion there is that in that case

when you have to do that it's invasive make sure that you document your actions in case your actions have to withstand scrutiny after the after the fact and then one other point to this end where possible work to include this mitigation and containment functionality in a central spot your analysts again well thank you they don't necessarily all want to become familiar with the inner workings of how to get into a tenant through the CLI and contain an ec2 with an ingress policy so if you can wrap that around and wrap that into a webpage then that will help put you ahead of the game and then finally the worst case scenario if the keys to the kingdom and by that I

mean if the tenant root credentials are gone you may have may have no other recourse to remediate than invoking vendor support okay so with that I'd like to touch on just a few retrospectives and a little and close on some some root cause so we've moved everything to the cloud right and everything is different now than it was in our data center right it would raise their hand and say that well it turns out that you know the more things change the more they stay the same and so I just wanted to share with you here are three conditions that we frequently frequently see in cloud that have been the root cause for security incidents that we've worked and

obviously the idea here is that by preventing these mistakes you can reduce the risk to your cloud environment we want to do things like harden our cloud environments by reducing the attack service wherever possible and prevent the conditions that lead to compromised these are all the sorts of opportunities that we can take before the fact improve the overall security posture of our cloud presence so first that think overly permissive network ingress think of overly permissive networking policies as potentially careless firewall policies right that can let anyone on the internet access your cloud host I mean obviously there will be situations where a permissive ingress policy is desired for example when you're running a publicly accessible web

server but ingress policies otherwise should be scoped according to the principle of least privilege allowing network access only from those hosts who require it and then you could use you could draw the same analogy for storage buckets like s3 or elastic block storage it's the same idea here so right and unless there's a specific need like a public document repository for example avoid setting bucket permissions to be world readable the risk here obviously is an intentional exposure of sensitive data and then number two as with permissive ingress policies leaked credentials are another pitfall to a secure cloud environment and can lead to data loss the common vector here is credential leakage and access key is

committed to github or other publicly accessible repositories such that they can be acquired and unauthorized users can simply go to github and use those credentials to access your environment same thing that you've probably heard a thousand times again avoid this by not hard coding credentials in the source if there's no way around that sanitize those credentials from code before commit and then another step is to make sure that you're restricting access to your code repository to only the people that need it and then finally unsecure machine instances these are the images that are either vulnerable from a configuration standpoint or a software perspective and then when they're on up they're run either with retweet credentials or sometimes with no

credentials at all the lack of strong credentials particularly when combined with an overly permissive network ingress policy can make these hosts an easy target for hackers these machines exposed to the Internet and this weak security configuration once they get popped there they can quickly be subverted and used to distribute and to do all kinds of naughty stuff distribute illicit files distribute malware and participate in distributed denial-of-service campaigns and can generally have a negative impact on on your organization's reputation so that is that is my talk and one last thing I was advised by a colleague who previewed this talk that the presentation lacks pictures of clouds so so here you go thank you [Applause]

[Music]