Don't Run Six Checklists: A 25-Minute Sane Guide to AI + Healthcare GRC

I just wanted to give a quick background about myself and let you all know where I'm coming from. I'm Pran Mata, a senior security and compliance analyst at Headspace, the mental health app. We offer meditation, therapy, and psychiatry in one app. Prior to this, I worked at other organizations such as Fair Financials, Engaged Learning, and PCS, with a focus on healthcare compliance and privacy. I hold a master's degree in cybersecurity and a doctoral certificate in business administration. When I'm not running checklists, I love watching soccer (Liverpool is my favorite team), and I like going on hikes whenever it's sunny outside.

So let's begin. Let's talk about the challenges we face in the
healthcare industry right now, specifically in GRC. I keep seeing LLMs introduced everywhere across healthcare workflows. Product and leadership teams want to add LLM and AI features to their products, and because of that, security teams face a lot of pressure to give a quick yes or no on features that are about to enter care workflows. On top of that, different frameworks arrive from different stakeholders. Legal might come in and say, "Hey, this workflow touches PHI, so you need to be compliant with HIPAA." Another team wants to be compliant with NIST, another with ISO. And all of this brings a lot of
engineering deadline pressure as well. The fourth problem I keep seeing is that we treat these frameworks as separate projects and end up answering the same questions about access control, logging and monitoring, and backups multiple times in multiple formats. This duplication really slows down delivery and still doesn't give many security teams confident results. The goal today is to replace that with a shared language and to make decisions that can travel across teams.

So what happens when leadership gets involved in these decisions? The conversation becomes really simple. They don't ask about subcontrols or
framework questions. In one way or another, they ask these four questions: Are we protecting PHI? Is this secure enough to launch? Who is accountable for the risk and the decision? And if we move forward, are we going to regret this later? A good GRC approach should answer these questions directly, and in a way that works even when no one at the table is a framework or subcontrol expert.

Next, I want to talk about where LLMs usually show up in care workflows, based on what I've seen at organizations out there. The first is patient chat, where LLMs show up for support and triage. The second is
note assist where models help draft summaries and write clinical notes. The third is around care operations where models help route tasks and automate a lot of follow-ups and help with administrative stuff. All of these depend on a service layer that performs inference, applies guardrails, and generates logs. So if you're not protecting the service layer, we are not really protecting the data around it and we are not really protecting any care workflows. So let's talk about the framework pile. This is like the alphabet soup that a lot of infosc and compliance team have to deal with. We have HIPPA, we have NIST CSF, we have HHS guidelines, we have executive orders as well. And we have AS specific frameworks like the AI

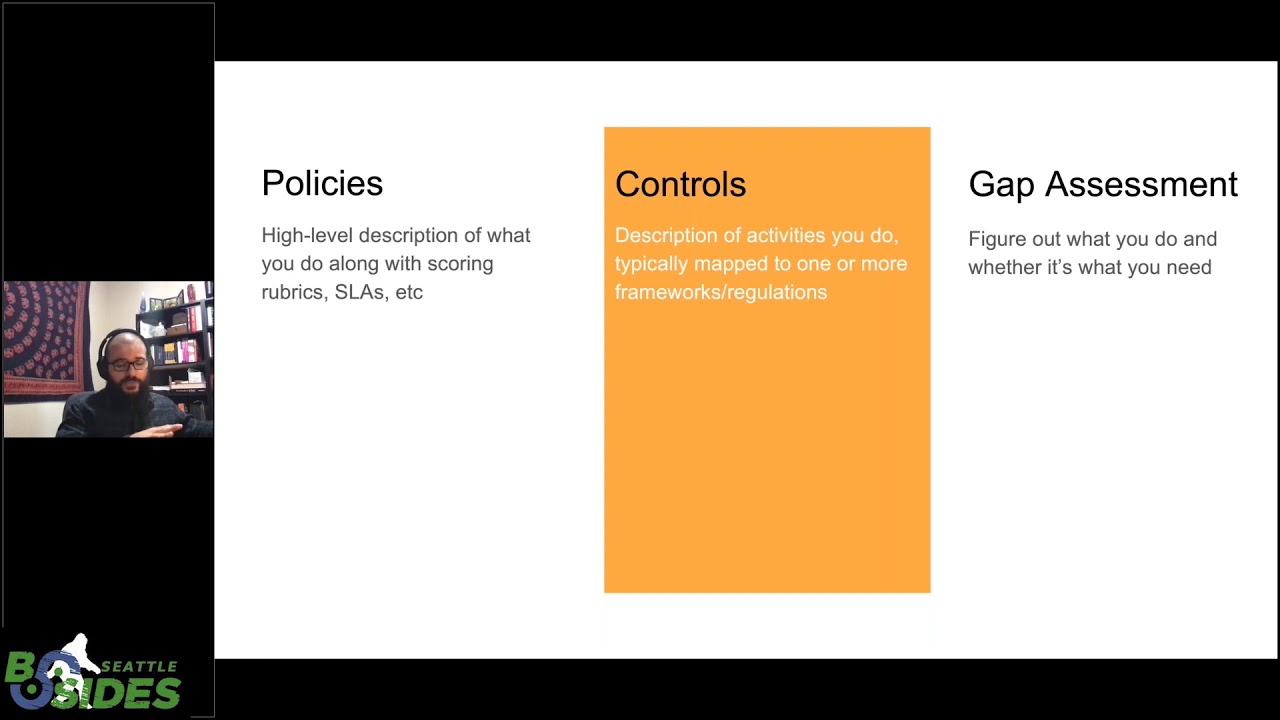

NIST RMF and ISO 4201. I'm not going to dive deep into each of these. Uh you might already know them. The point is not what they are, but the point is that they all show up at once. Each one uses a different terminology and structure, but their intent really overlaps very heavily. The mistake is that we keep treating these as six different checklists and require six different work streams. In reality, they overlap very heavily and they often want the same outcome just with different labels. So instead of running six different checklists, I say we can run them by intent and group them by intent. So as I said previously, uh instead of directly operating HIPPA, NIST or ISO

and everything else, we should take a step back and ask a simple question: what are these frameworks actually trying to solve? When you zoom out, they are all aiming at the same thing: reduce harm, protect sensitive data, and ensure accountable decisions. So rather than memorizing subcontrols, we should translate them into shared outcomes. This matters psychologically as well. When you go to an engineering or product team and say, "Hey, we need to be compliant with ISO subcontrol X or NIST subcontrol C," that's when they disengage. But when you tell them, "Hey, we need to protect PHI and ensure safe output," that's
when they'll start engaging with you and working toward a common solution. So the map becomes a translation layer between compliance and engineering, and it bridges the gap between different product teams as well. Once you have shared outcomes, governance stops being a document factory. Instead, it helps the governance team make clear, consistent decisions and lets product and leadership teams ship responsibly.

Now I want to talk about the five shared outcomes we can align these frameworks into. The first is protect PHI and privacy. This is an obvious one, but it's worth saying out
loud. It means confidentiality and integrity, but it also means appropriate use. We are clear about what data is allowed to go in and what is not, and that keeps us aligned with legal expectations like HIPAA and with any contractual commitments.

The second is strengthen security and resilience. This is classic security posture work, just applied to a different workflow: access control, encryption, logging and monitoring, and incident response. Resilience means we can detect problems, contain them, and recover without chaos in the environment.

The third is accountability and transparency. This is where I feel a lot of AI projects get stuck. Nobody can answer basic
questions like: Who owns this feature? Who signed off on it? Which risks were accepted, and why? Transparency here doesn't mean exposing company trade secrets. It means we can explain how the system works at a reasonable level and defend our decisions later on.

The fourth is safe and effective AI use. This is the AI-specific dimension: whether the output is reliable enough for the use case, what failure modes we can expect, and whether we have guardrails against misuse in the workflow. It includes robustness against manipulation, bias concerns where relevant, and making sure the system actually does what it's
supposed to do in the real world.

The fifth is govern and improve continuously. This is the part that makes it sustainable. It means monitoring what the system is doing, reviewing periodically, updating controls when the model or the feature changes, and having a clear path for responding if something goes wrong. AI features are not set-and-forget, so their governance can't be either.

Here's why this framing works. When someone brings a new AI feature, you walk through these five outcomes and ask two simple questions: which of these does this feature impact, and what do we need
to prove for each one of these outcomes?

I also want to talk a little bit about the foundation. Before we start with threats and scoring, we need to start with visibility. Visibility is step zero: you cannot govern what you cannot see in your environment. The first piece is an inventory of every LLM endpoint, internal or external, and every one of those endpoints needs an owner. That includes AI APIs embedded in your third-party SaaS tools. Shadow AI is still AI, and it creates a lot of risk in the environment. The second piece is PHI flow. You need to know where PHI enters, how it's transformed, where it's stored, and where it exits. That includes prompts, outputs,
logs, everything. The third piece is the vendor path. Many healthcare teams assume that if they've signed a BAA, they're secure. But if the third-party vendor's LLM endpoint sits with a subprocessor, or in a region it's not supposed to be in for your environment, those assumptions can be wrong. I feel most AI governance problems are really visibility problems in the end.

This is the harmony table idea. Different frameworks use different labels, but they overlap a lot on outcomes. HIPAA, NIST, and HHS guidelines cover PHI and resilience. The AI RMF and ISO frameworks bring explicit governance and AI safety language. Executive orders emphasize visibility and secure practices. If you squint, these are different
structures, but they all have the same intent. The mistake is running a HIPAA risk analysis and then separately running an ISO mapping or an AI RMF mapping. Instead, run one system-level assessment and map it to the shared outcomes I showed earlier. For example, your data flow diagram can satisfy HIPAA documentation requirements, AI RMF MAP expectations, and ISO documentation requirements at the same time. Do the same for other controls, and this is how you eliminate a lot of duplicate work.

Now let's look at some best practices. A lot of AI governance conversations get abstract very quickly, so we should bring them back to defensible guardrails. These
low-effort guardrails reduce a lot of risk in your environment. Region pinning reduces regulatory and contractual ambiguity. Turning training off and minimizing retention reduce exposure and attack surface. Data minimization reduces the blast radius. Least privilege limits who can see sensitive data, including the inputs and outputs. Log hygiene ensures you can detect misuse and abuse in your environment. These are not exotic AI controls; they're basic security practices applied to a new workflow. And if they're in place, most of the frameworks we listed earlier get satisfied at a structural level as well.

Now let's look at some AI-specific risks. Prompt injection is not
theoretical. It's essentially input manipulation that can override instructions and trigger unintended tool use. The practical mitigation is constraining tools and validating outputs before you take any action. Jailbreaks attempt to bypass guardrails; the mitigation is testing prompts and monitoring outputs. Context leakage is especially sensitive in healthcare. If session boundaries fail, PHI can cross contexts, which creates a lot of risk and potential harm. The mitigation is tenant isolation, context scoping, and keeping unnecessary sensitive data out of your prompts. If you notice, none of these require an exotic AI research team. They require discipline, architecture, and monitoring.

Now that we have shared outcomes and baseline guardrails, we need to make
decisions quickly and consistently. This is where the lightweight security risk analysis comes in. Scope means defining the feature boundary: what data enters, what data leaves, and who owns it. Score means using a structured but simple method to estimate the risk. Decide means committing to a go, pilot, or hold decision tied to launch checkpoints. The goal is not to produce a massive report, but a repeatable engine backed by a small evidence packet. Every AI feature should run through the same path, and over time, that consistency builds a lot of trust. Let's walk through the three stages: scope, score, and decide.
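
Because every AI feature is supposed to follow the same scope, score, decide path, that path can be enforced in whatever tracker the team already uses. A minimal sketch, assuming a small Python helper: the `Assessment` class, the stage names as strings, and the evidence file names are all hypothetical, not something the talk prescribes. It only captures the two properties described here: stages happen in a fixed order, and no stage counts as done without an evidence reference.

```python
from dataclasses import dataclass, field

# The fixed path every AI feature goes through, in order.
STAGES = ("scope", "score", "decide")


@dataclass
class Assessment:
    """Hypothetical tracking record: one per AI feature."""
    feature: str
    owner: str
    # stage name -> reference to the evidence for that stage (diagram, grid, sign-off)
    evidence: dict = field(default_factory=dict)

    @property
    def next_stage(self):
        """Stage that must be completed next, or None once all three are done."""
        done = len(self.evidence)
        return STAGES[done] if done < len(STAGES) else None

    def complete(self, stage, evidence_ref):
        """Record a stage, refusing skipped stages and stages without evidence."""
        if stage != self.next_stage:
            raise ValueError(
                f"{self.feature}: expected {self.next_stage!r}, got {stage!r}"
            )
        if not evidence_ref:
            raise ValueError(f"{self.feature}: stage {stage!r} needs evidence")
        self.evidence[stage] = evidence_ref


if __name__ == "__main__":
    note_assist = Assessment("note assist", owner="clinical product lead")
    note_assist.complete("scope", "note-assist-dfd-v1.png")
    try:
        note_assist.complete("decide", "go")  # skipping "score" is rejected
    except ValueError as err:
        print(err)  # note assist: expected 'score', got 'decide'
```

When the feature is done, `evidence` holds exactly the small packet the talk describes: one reference per stage, collected the same way for every feature.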

Stage one is scoping the PHI flow. When I say scope, I literally mean drawing the flow on a whiteboard. If you can't draw it, you're not ready to assess the risk. Identify the feature, trace where PHI moves, and identify who is involved. Keep the flow simple, for example: patient app → API → prompt builder → LLM service → clinical review, and include logs and monitoring as well. Then ask three critical questions. Is there PHI in the prompts or outputs? Is there data retention anywhere in the path, including logs and embeddings? And is it region-pinned or otherwise controlled? If you cannot answer these questions confidently, you are not ready to score.
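
The three scoping questions can be turned into a mechanical check over the whiteboard flow. In this sketch, all names are hypothetical, including the `"us"` region label and the true/false values for each step, which are illustrative rather than real settings. Each box on the flow records whether it touches PHI, whether it retains data, and where it runs, with `None` standing for "we don't know yet". Any unknown blocks scoring, mirroring the rule that you are not ready to score until every question has a confident answer.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FlowStep:
    """One box on the whiteboard flow; None means "we don't know yet"."""
    name: str
    handles_phi: Optional[bool]   # Q1: is there PHI in this step's inputs or outputs?
    retains_data: Optional[bool]  # Q2: logs, caches, embeddings, vendor-side storage
    region: Optional[str]         # Q3: where this step runs


@dataclass
class ScopeResult:
    """Answers to the three questions, plus anything still unanswered."""
    phi_steps: List[str]
    retention_steps: List[str]
    out_of_region_steps: List[str]
    unknowns: List[str]

    @property
    def ready_to_score(self) -> bool:
        # Not ready while any of the three questions lacks a confident answer.
        return not self.unknowns


def scope_flow(steps, allowed_regions):
    """Answer the three scoping questions across every step of the flow."""
    unknowns = [
        f"{step.name}.{attr}"
        for step in steps
        for attr in ("handles_phi", "retains_data", "region")
        if getattr(step, attr) is None
    ]
    return ScopeResult(
        phi_steps=[s.name for s in steps if s.handles_phi],
        retention_steps=[s.name for s in steps if s.retains_data],
        out_of_region_steps=[
            s.name for s in steps
            if s.region is not None and s.region not in allowed_regions
        ],
        unknowns=unknowns,
    )


if __name__ == "__main__":
    # The example flow from the talk; values and region labels are placeholders.
    flow = [
        FlowStep("patient app", True, False, "us"),
        FlowStep("API", True, False, "us"),
        FlowStep("prompt builder", True, False, "us"),
        FlowStep("LLM service", True, None, "us"),  # vendor retention not confirmed
        FlowStep("clinical review", True, True, "us"),
        FlowStep("logs and monitoring", True, True, "us"),
    ]
    result = scope_flow(flow, allowed_regions={"us"})
    print(result.ready_to_score)  # False
    print(result.unknowns)        # ['LLM service.retains_data']
```

An out-of-region step is treated as an answer (a finding that feeds the score), not as an unknown; only missing answers block scoring.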

Scoping is where I feel 50% of AI governance gets solved.

Stage two is scoring. You use a simple 4x4 grid: likelihood on one axis, consequence on the other. Likelihood is about exposure and ease. How often does this feature run? How easy is it to misuse or exploit? How many users are exposed? Impact is about consequences. If something goes wrong, are we talking about a minor operational issue, PHI exposure, or, in some workflows, even patient harm, which is worse? Here is where I feel some teams get it wrong. They try to be as precise as
possible, scoring something as a 2.4 or a 3.2 on the risk scale. Instead, I would use bands such as low, medium, and high, or L1 to L4, and keep everything explainable. One of the most important things is to write down the assumptions behind your score. For example, if retention is on by default, likelihood increases because the exposure window gets longer. Or if the feature generates clinical suggestions without any review, impact increases because unsafe output can cause real harm. Now you're not arguing about opinions; you're documenting assumptions. And here's where the risk matrix gets more powerful. You
don't rescore because someone is nervous about the score or because there's a new stakeholder in the meeting. You only rescore when a control changes. That consistency speeds up decisions and makes them defensible to leadership and auditors. The grid doesn't eliminate risk, but it makes risk explainable, and explainable risk lets you move forward much more responsibly.

Stage three is deciding, and I would say this is where governance becomes empowering rather than blocking. A go means the controls are sufficient and the evidence exists. A pilot means limited exposure, extra guardrails, and a defined review date.
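
A minimal sketch of the 4x4 grid, the L1 to L4 banding, and a go / pilot / hold step, assuming 1 to 4 scales on each axis. The band cut-offs on the likelihood x consequence product and the mapping from band to decision are hypothetical choices for illustration, not thresholds from the talk. Because the score is a pure function of its inputs and carries its written-down assumptions, the band can only move when an input moves, which is the "rescore only when a control changes" rule in code form.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskScore:
    """One cell of a 4x4 grid plus the written-down assumptions behind it."""
    likelihood: int    # 1 = rare / hard to exploit / few users .. 4 = frequent / easy / many
    consequence: int   # 1 = minor operational issue .. 4 = patient harm
    assumptions: tuple = ()

    def __post_init__(self):
        if self.likelihood not in (1, 2, 3, 4) or self.consequence not in (1, 2, 3, 4):
            raise ValueError("likelihood and consequence must each be 1-4")

    @property
    def band(self) -> str:
        """Hypothetical cut-offs on likelihood x consequence (range 1-16)."""
        product = self.likelihood * self.consequence
        if product <= 3:
            return "L1"
        if product <= 6:
            return "L2"
        if product <= 9:
            return "L3"
        return "L4"


def decide(score, evidence_complete, unresolved_high_issue):
    """One possible go / pilot / hold policy; the thresholds are assumptions."""
    if unresolved_high_issue or score.band == "L4":
        return "hold"
    if score.band in ("L1", "L2") and evidence_complete:
        return "go"
    return "pilot"


if __name__ == "__main__":
    retention_on = RiskScore(3, 3, ("retention on by default: longer exposure window",))
    retention_off = RiskScore(2, 3, ("retention disabled and verified in vendor settings",))
    print(retention_on.band, decide(retention_on, True, False))    # L3 pilot
    print(retention_off.band, decide(retention_off, True, False))  # L2 go
```

In the usage example, the only difference between the two scores is the retention control, and that change is the only thing that moved the band and the decision.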

Pilot is not a soft yes; it's a control decision on a timer. A hold means there's an unresolved high issue that either requires leadership input or sends you back to the drawing board to redesign the workflow. Then you layer these decisions onto launch checkpoints: design, pilot, and production. At each stage, you define what must be true before you move forward, and you make that visible to everyone up front so there are no surprises at the end. The point is to turn risk decisions into a staged rollout plan, so you can learn safely in pilot and only graduate to production once the right guardrails
are in place. This gives engineering clarity: they know what must be true before moving between stages.

And lastly, if you don't do anything else, at least produce these three artifacts. First, a one-page data flow diagram. This gives everyone (the security team, compliance, engineering, and auditors) a shared visual understanding of where PHI enters, where it moves, and where it exits the system. Second, a retention and region proof. This provides concrete evidence that the data handling settings are configured the way you claim, and it reduces ambiguity around storage, training, and geographic compliance. Third, an access and logging snapshot. This demonstrates who can see the sensitive data, including the
inputs and outputs, and it shows that we are actively monitoring and retaining logs in a way that supports accountability and incident response. These three artifacts eliminate a lot of risk and a lot of the repetitive questions that auditors and leadership teams ask. So collect them once and reuse them across teams and frameworks.

Yep, that's it. I've started a Substack where I post about telehealth, so if you'd like, you can join; I'll be posting more on it. That's my talk. Thank you.