A Comprehensive Guide to Post-Quantum Cryptography - Panagiotis (Panos) Vlachos


Welcome, everyone. Hello. My name is Panos. I'm an information security engineer at Mastercard and a PhD researcher at Queen's University Belfast. Today I'm here to give you an overview of post-quantum cryptography, as part of the research I started at the Centre for Secure Information Technologies back in October. Our agenda is quite high level, so you don't have to be a cryptographer to follow, but time will be pressing, so please keep any questions for after the presentation.

The outline for the presentation is as follows. First, we'll do a quick refresher on conventional cryptography. Then I'll introduce the concept of quantum computing, how it differs from what we know, and the quantum threat, but only the absolute basics, so no physics required here. At this point, post-quantum cryptography will come to the rescue, responding to the quantum threat. Then we will explore the current situation with post-quantum cryptography, and finally take a quick look at some of the most interesting areas where it is applied.

So, conventional cryptography. First, some basic terminology and refreshers. Cryptography is the practice and science of ensuring the confidentiality, integrity, and authenticity of information by transforming it into an unreadable format, which allows us to communicate privately over insecure media. Plaintext is the term we use for the normal, human-readable form of a message in its original format.

Cipher text is the encrypted plain text. So it's the result of the encryption algorithm. And finally, post quantum sorry cryp analysis is the science of analyzing and breaking cryptographic codes and ciphers. And finally, postquantum or quantum resistant cryptography to which I will be referring to as PQC from now on refers to cryptographic methods and algorithms designed to remain secure even against potential attacks by quantum computers while still functioning efficiently with classic computing. Now to visualize this we can imagine that we have two parties Alice and Bob that want communicate over an insecure channel. So Alice will have her original message in plain text form and she will use encryption to transform it into something unreadable cipher text. She

will send that message across and both upon receiving it can't do much because it's encrypted. So with the use of uh the decryption process and the secret key he can decrypt the cipher text back into its original readable format and the message. Um at the same time if we assume a third party who is dropping on that communication we will call that third party Eve they cannot do much with the cipher text other than analyze it trying to break it and bring it back into the original uh plain text form without any further knowledge and this is what we defined as cryp analysis. So this very basic illustration here give you a visual example of what cryptography and

crypto analysis is. Now there are two categories of cryptography. The first one is symmetric cryptography where the same key is used is used for encryption and for decryption. And in the previous example you can go val and bob share the same secret key. It basis is the algorithms that transform plane to cipher text using the secret key. And these algorithms are also called ciphers. Its security assuming strong cipher mostly um relies on keeping the secret key actually secret. The main cryp analytic attacks in this uh type of cryptography are root force attacks where a combination of key is tried until the right key is found and crypalytic attacks aimed directly the underlying cipher. something that would

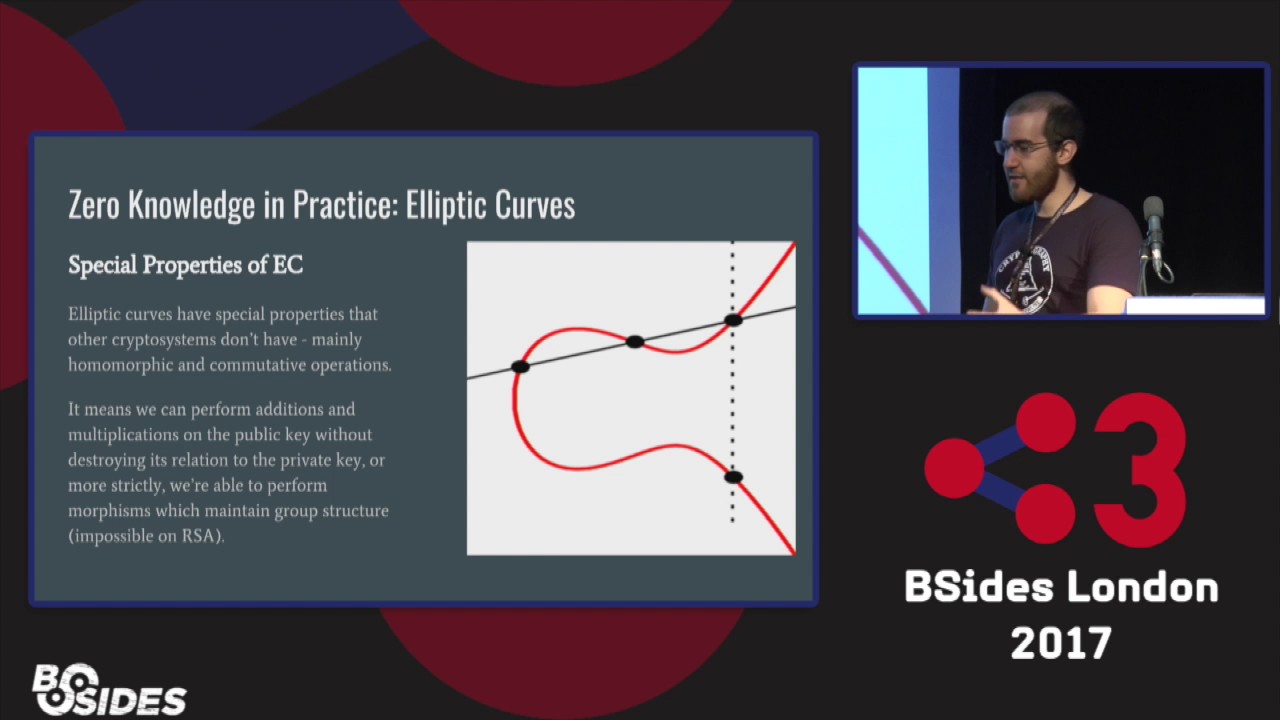

make the whole cryptograph family. The second category of cryptography is asymmetric cryptography. And here we have pairs of keys public and private. So Alice has her own set of keys. B has his own set of keys and they use that for encryption encryption. Its underlying mechanism is the mathematical problems that they are based on with the most common being large null factorization and discrete logarithm problem and uh the security of this uh type of cryptography relies on the computational difficulty of performing certain mathematical functions. [sighs] Main cryptonalytic attacks uh in this category are mathematical attacks and quantum attacks. >> [snorts] >> Now we will introduce quantum computing into the mix and its most basic principles.
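
Before moving on to quantum computing, the two categories above can be illustrated with a toy Python sketch. The symmetric half uses XOR with a random key as long as the message (a one-time pad) purely as a stand-in for a real cipher such as AES; the asymmetric half is textbook RSA with tiny primes and no padding, the usual classroom example. All names are illustrative and none of this is secure. The last part shows why asymmetric security rests on a hard mathematical problem: once the public modulus is factored, the private key can be recomputed.

```python
import secrets

# --- Symmetric (toy): one shared secret key both encrypts and decrypts. ---
# A one-time-pad XOR is used only for illustration; real systems use a vetted cipher.
def xor_bytes(data: bytes, key: bytes) -> bytes:
    return bytes(d ^ k for d, k in zip(data, key))

message = b"meet at noon"
shared_key = secrets.token_bytes(len(message))        # held by both Alice and Bob
ciphertext = xor_bytes(message, shared_key)           # Alice encrypts
assert xor_bytes(ciphertext, shared_key) == message   # Bob decrypts with the same key

# --- Asymmetric (toy textbook RSA): public key encrypts, private key decrypts. ---
# Tiny primes and no padding: insecure, for illustration only.
p, q = 61, 53
n = p * q                   # public modulus (3233)
e = 17                      # public exponent
phi = (p - 1) * (q - 1)     # 3120; easy to compute only if you know p and q
d = pow(e, -1, phi)         # private exponent (modular inverse, Python 3.8+)

def rsa_encrypt(m: int) -> int:
    return pow(m, e, n)

def rsa_decrypt(c: int, priv: int = d) -> int:
    return pow(c, priv, n)

c = rsa_encrypt(65)
assert rsa_decrypt(c) == 65

# --- Why the hardness assumption matters: factoring n reveals the private key. ---
def factor(n: int) -> tuple[int, int]:
    # Trial division: instant for 3233, infeasible classically for 2048-bit moduli.
    # This is the step a quantum attack (Shor's algorithm) would make efficient.
    f = 2
    while n % f:
        f += 1
    return f, n // f

p2, q2 = factor(n)
d_recovered = pow(e, -1, (p2 - 1) * (q2 - 1))
assert rsa_decrypt(c, d_recovered) == 65   # Eve can now decrypt
```

Trial division only works here because the modulus is four digits long; the security of real RSA depends on that factoring step being out of reach for classical computers.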

In traditional computing, all processing is reduced to binary values, either one or zero. This basic unit of information is called a bit, and the value of a bit can only be one or zero at any given time, because it represents the presence or absence of an electric current or voltage. With this property, traditional computers can explore a single path at a time. This approach, also known as binary computation, forms the foundation of classical computing.

Quantum computing, on the other hand, is fundamentally different and relies on the peculiar laws of quantum physics. Here the fundamental unit of information is called a qubit, or quantum bit, and unlike a classical bit it can exist in multiple states simultaneously. This unique quantum property is known as superposition, meaning a qubit can be both zero and one at the same time. How can that be? Think of a spinning coin: is it heads or tails? It's actually both until you stop it to see. Additionally, qubits exhibit entanglement, a phenomenon where the state of one qubit is directly linked to the state of another, even if they are physically separated. You can think of it as information telepathy. Even though the term is not very serious, it describes how one qubit might telepathically affect the state of another qubit or of a group of other entangled qubits. These properties allow quantum computers to process complex problems more efficiently than classical computers, leveraging probabilities and interconnected states that are impossible with classical bits, and this way they can explore multiple paths at once. All this makes quantum computers fundamentally different. As Professor Martin Albrecht has rightly put it, quantum computers are not faster; they are just weirder.

So let's now examine how exactly quantum computing affects our current cryptography. First, we have Shor's algorithm, created in 1994 by the mathematician Peter Shor, which demonstrates the potential of quantum computers to solve mathematical problems such as large-number factorization and the discrete logarithm exponentially faster than classical algorithms running on classical computers. This directly targets the asymmetric cryptography we currently use, as its security is based on the hardness of those mathematical problems. Then we have Grover's algorithm, developed two years later in 1996, which is designed to search an unsorted database, or solve unstructured search problems, on quantum machines with a quadratic speedup compared to classical algorithms. This increases the efficiency of brute-force attacks against symmetric keys and thus directly targets current symmetric cryptography.

That's all great and a bit scary, so what can we do about it? Regarding symmetric cryptography, the quadratic speedup that Grover's algorithm offers essentially halves the security level of symmetric cryptography. That means that, as a temporary measure, we can just double the key length to maintain the same security level. But as you can imagine, this is only a temporary measure, because it takes a toll on the performance of our existing systems: it requires more memory and increases latency and energy consumption. In asymmetric cryptography, on the other hand, things are not as simple, because Shor's algorithm attacks the underlying mathematical problems, which does not allow a quick fix like the one for symmetric cryptography.

This is where post-quantum cryptography comes into play. Remember from the initial slide: this is a kind of cryptography that relies on different hard mathematical problems that keep it secure today, but also in a future with quantum computers available. We saw that quantum computing threatens traditional cryptography by attacking the underlying mathematical problems, so the solution is to focus on different mathematical problems that are hard to solve for both classical and quantum computers. Based on those underlying problems, which constitute the hardness basis for the cryptographic algorithms, we categorize post-quantum cryptography into different families, each with its own trade-offs.

First, we have lattice-based cryptography, which is the most widely studied and considered highly promising, with the majority of the standardized PQC algorithms falling under this category; we will see what that means in a bit. Then we have code-based cryptography, which is well established and has some historical background, so it's well researched with proven security, but it takes a greater toll on existing systems because it often requires larger key sizes. Then we have multivariate polynomial cryptography, which has proven very efficient for certain applications, but one of the algorithms in this category has been proven insecure even against traditional computers. Then there is hash-based cryptography, which relies on the security of cryptographic hash functions; these schemes are simple and robust but can be slower for large-scale use. Lastly, there is isogeny-based cryptography, which is characterized by compact key sizes, with the downside of computational intensity. Similarly to multivariate cryptography, the algorithm that was under consideration in this family has been proven insecure. All these categories are based on different mathematical problems, and since we don't have quantum computers to actually test how secure these algorithms are, we use mathematical proofs of security and advanced computational models to assess that.

It's great that we have that in place, and now we will explore how PQC is standardized. Let's talk about the US National Institute of Standards and Technology, NIST, as most of us know it. This is a US government agency that develops technology standards and guidelines to ensure the security of new technologies in various fields, including cryptography. Even though it is a US agency, it is globally recognized and referenced, especially around cybersecurity. NIST initiated the standardization process, which included the following steps. First, a public call for submissions, where researchers from all around the world submitted their algorithms along with their mathematical proofs of security. Then the evaluation period began, with the global cryptographic community, experts from NIST, and members of the public having the chance to submit feedback on the submitted algorithms. Lastly, the most promising candidates were either forwarded to the next round or selected for standardization.

But what exactly do we mean by standardization? You can think of it as the official recipe to follow if you're trying to implement a vetted, secure, and efficient algorithm and want it to work as advertised. This whole process started back in 2017, and we're currently in round four, with two algorithms being actively evaluated.

Let's take a closer look at the algorithms themselves. In this table I've summarized the algorithms that have been standardized, selected for standardization, or are under evaluation. As you can see, three out of the four candidates at the end of round three belong to the family of lattice-based cryptography, which led NIST to go for a fourth round focusing on other families, in case advances in cryptanalysis render lattice-based cryptography insecure. So the fourth round is focused on non-lattice-based algorithms, with the vast majority in the family of code-based cryptography, which, as we said earlier, has been studied extensively in the past. The current status is that we have three standards, two lattice-based and one hash-based. There are also two more algorithms that have been selected for standardization, the second of which was announced just a couple of months ago. And finally, two algorithms are currently being tested and evaluated. I want to make a special mention of the last one in the list. This algorithm, SIKE, was proven to be insecure, but NIST decided to include it in the fourth round for academic visibility because it had gained significant traction while it was being evaluated. An algorithm not making it into that table doesn't mean it is insecure; it might simply not have been the best option among all candidates. One such example is FrodoKEM, which was not selected in the NIST standardization process, but the German Federal Office for Information Security and the International Organization for Standardization have considered it for standardization.

We already have some post-quantum algorithms standardized, but now we need to implement them in our systems and start the migration process. For this reason, governments and agencies around the world have started drafting and publishing plans to migrate to PQC. In the USA, apart from NIST, which is leading the standardization process, federal agencies are required to implement PQC by 2030, a similar timeline to that of the Australian government. In Europe, the European Union Agency for Cybersecurity and the European Commission are leading efforts to ensure a synchronized transition across all member states, with guidelines, strategies, and campaigns, and some states have already started pilot migrations. Two months ago, the UK's NCSC published a roadmap requiring full migration to PQC by 2035 for critical sectors, and they already have detailed guidelines and awareness campaigns. In Asia, Japan and South Korea are leading the way with similar steps, guidelines, and roadmaps focusing on critical sectors. South Korea is a very interesting example because they don't only have timelines in place; they also have a specialized research group running a more focused standardization process than NIST's, based on the industry and the unique national strategies and policies of South Korea.

That's a lot of text, and I'm sure I could find more to add, but the main message I want to pass on is that this is a serious concern. The fact that policymakers and governments around the world are drafting implementation guidelines is very useful, because in the end the global policy landscape will motivate even the more reluctant organizations. So it's very important to have that support in place.

The migration to post-quantum cryptography is not easy, so it needs to be taken in steps. Proofs of concept and experimental implementations are very useful for that, as they shine a light into the unknown and allow us to gather feedback on what works and what doesn't. However, they are not stable enough to be used in production environments or on critical systems that are used by thousands of people every day. What we can do instead is use post-quantum cryptography on top of, or in combination with, conventional cryptography; these are known as hybrid implementations. This way, the targeted systems are protected not only by the post-quantum algorithm itself but also by conventional cryptography that has stood the test of time. Hybrid implementations also allow for a gradual transition, by not completely replacing or reconstructing existing systems but rather adding a post-quantum implementation as a strengthening measure. In complex and legacy systems, it's often very difficult to update the cryptographic primitives they use; the ability to do so is known as crypto-agility, and its absence might become a major obstruction in the transition to post-quantum. Hybrid implementations allow organizations to become quantum-secure faster without having to shut down critical functions. Lastly, hybrid approaches respect the other systems involved, ensuring interoperability, as they don't abruptly stop supporting the existing cryptographic algorithms. Of course, this is not a permanent solution but rather a hotfix to ensure that our systems, and the data they process, store, and use, are safe from attacks such as store now, decrypt later.
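
One common way to build the hybrid key establishment described above is to run a classical and a post-quantum key exchange side by side and feed both shared secrets into a single key-derivation function, so the session key stays safe as long as either component holds. The sketch below assumes exactly that pattern: HKDF-SHA-256 (RFC 5869) is implemented with Python's standard `hmac` and `hashlib`, while the two shared secrets are random placeholders standing in for, for example, an elliptic-curve Diffie-Hellman exchange and an ML-KEM encapsulation. The function names `hkdf_sha256` and `combine_hybrid` and the `info` label are hypothetical, not taken from any specific protocol.

```python
import hashlib
import hmac
import secrets

def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256: extract a pseudorandom key, then expand it."""
    if not salt:
        salt = bytes(hashlib.sha256().digest_size)   # RFC 5869 default: HashLen zero bytes
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()           # extract step
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                      # expand step
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]

def combine_hybrid(classical_secret: bytes, pq_secret: bytes) -> bytes:
    # Hypothetical combiner: concatenate both shared secrets and derive one key.
    # Assuming the KDF behaves like a random function, an attacker who recovers
    # only one of the two secrets still cannot compute the session key.
    return hkdf_sha256(classical_secret + pq_secret, salt=b"", info=b"hybrid-demo v1")

# Placeholders only: random bytes standing in for real key-exchange outputs.
classical_ss = secrets.token_bytes(32)
pq_ss = secrets.token_bytes(32)

session_key = combine_hybrid(classical_ss, pq_ss)
# The key depends on both inputs: replacing one secret yields a different key.
assert session_key != combine_hybrid(classical_ss, secrets.token_bytes(32))
```

The same "require both" idea applies to hybrid signatures: a document is accepted only if the classical and the post-quantum signatures both verify.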

A very simple example is digital signatures, and I'm going to go over a simplified version. In a hybrid approach, you would use a standard digital signature in combination with a post-quantum one, and to verify the authenticity of a document you would have to verify both. As you can understand, this adds extra steps to signing and verification and increases the size of the signed document itself, so it's neither ideal nor a permanent solution.

So we covered the quantum threat, how PQC comes to the rescue, how it's being standardized, and some basics of the migration. Now we will explore some emerging technologies where PQC is needed. This section is particularly interesting to me, as it bridges academia and industry, demonstrating how large-scale experiments and pilot systems pave the way for innovative products and services.

The first example I want to go through is a project that's being worked on, among other places, here in Dublin by a team at UCD, and it's about 6G, particularly confidential 6G. I have added a link to the project in the presentation slides, which you will find on the website after the event. I won't go into too much detail because this is not my project, but what I want to stress is that a large part of the infrastructure will be based on 5G. That also includes general-purpose protocols like TLS and IPsec, which are the foundations of the core infrastructure and how it operates. The thing is that this infrastructure needs to be respected operationally, so any changes should not break existing functionality, but it also needs to be secure from the quantum threat. The challenge is that by the time this research started, the designs were already at a late stage and standardization was still in its infancy. So the research team decided to follow a layered security approach, combining conventional and post-quantum cryptography to secure the infrastructure in a hybrid way until the relevant bodies issue official updates to the general-purpose protocols. The research team focused on areas that they could fully develop, such as a stronger, quantum-resistant identity concealment mechanism that protects the identities of mobile users. Instead of making TLS or IPsec quantum-resistant, which is crucial but which they didn't have the resources to do, they focused on designing 6G in a way that is fundamentally secure for everyone, and left IPsec and TLS to the technical people and bodies that have the resources to focus on that. This example is of particular interest because it shows how hybrid approaches can be used as a temporary measure, how synergies between the technological and academic communities help drive innovation and technological progress, and why they are so important.

The last example I want to talk about is space missions. Currently, space missions rely heavily on symmetric cryptography, but that will change over the coming decades as satellite constellations and deployments become more frequent. That means the cryptographic protocols used in such scenarios will need to be scalable and flexible enough to allow parties to be added on an ad hoc basis. Space missions have lifespans that can be decades long, and if you compare that with the rate at which quantum computing, PQC, and technology in general advance, it makes sense to have PQC in place now because of the long operational lifespan of space systems.
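
The lifespan argument above is often summarized as Mosca's inequality, a framing due to Michele Mosca that the talk itself does not name: if x + y > z, where x is how long the data or system must stay secure, y is how long the migration takes, and z is how long until a cryptographically relevant quantum computer exists, then data protected with today's asymmetric cryptography is already at risk, for example from store now, decrypt later. The sketch below encodes that check; the function name `at_quantum_risk` and all year figures are hypothetical, chosen only to contrast a long-lived mission with a short-lived session, and are not estimates from the talk.

```python
def at_quantum_risk(secure_for_years: float, migration_years: float,
                    years_until_quantum: float) -> bool:
    """Mosca's inequality: at risk if x + y > z.

    x = how long the data/system must remain secure
    y = how long the migration to PQC will take
    z = how long until a cryptographically relevant quantum computer exists
    """
    return secure_for_years + migration_years > years_until_quantum


# Hypothetical figures, for illustration only.
YEARS_UNTIL_QUANTUM = 15
mission = at_quantum_risk(secure_for_years=20, migration_years=5,
                          years_until_quantum=YEARS_UNTIL_QUANTUM)
session = at_quantum_risk(secure_for_years=0.01, migration_years=2,
                          years_until_quantum=YEARS_UNTIL_QUANTUM)
print(mission, session)  # True False
```

A decades-long mission trips the inequality even under generous assumptions about z, which is the intuition behind starting the PQC work for space systems now.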

This will ensure that both the safety and the security aspects of space missions are protected from future threats. The space segment of satellite communications carries not only operational commands sent to the satellite but also very sensitive, mission-specific data, which may range from military uses to everyday ones like navigation. So PQC needs to start being implemented for space missions.

That's great and hopefully interesting. Let's see what you should take away from this presentation. I hope that at this point you have a basic understanding of what all this is about, and of how PQC is cryptography based on different mathematical problems. With that in place, keep an eye out for the latest advancements in all relevant areas, such as quantum computing and PQC; stay curious and up to date, and read the guidelines from the relevant agencies. Lastly, if you have a technical role, depending on the organization you are at, try to maintain a cryptographic bill of materials, or at least a smaller list of your assets and the cryptography they use, because this is the first step in migrating to post-quantum.

With this, and this beautiful photo of the Titanic Quarter in Belfast, I want to thank you for your time and your focus, and thank you to the organizers of the conference; it's been a very nice day. I hope you learned something and found it interesting. If you have questions or want to discuss this topic further, you can find me later, or you can connect with me on LinkedIn or via my academic email. Thank you very much for your time.