Making Sense of Splunk Enterprise


Hey everybody, good afternoon. Thank you, Mike, for the kind introduction and for putting on this awesome event with all the help and support of the community. Let's go ahead and get started, because it's time to have some fun. The title of this talk is Making Sense of Splunk Enterprise. Again, my name is Jonathan Singer, and I'm a Splunk practice lead in the Southeast region at GuidePoint Security. A little bit about our agenda for today. We'll talk about who I am, right? Why do I deserve to talk about this stuff, and what's my background? We'll talk about the history of logging. I'm a big fan of building a foundation and understanding, right, where

the current technologies that we're using today come from. What's the history behind them, where did they come from, why are we doing things the way we are? We'll talk about what Splunk is, right? I mean, it can be a lot of things. We'll find out who uses Splunk. I think that's also really important, because oftentimes you look at case studies of companies that are using a product, and you want to know what it's doing for them and how they're applying this set of technology. And then my fun facts on how to get the most out of Splunk. I've been doing this for a while, and I've learned quite a bit about what to do, what not to do, and how to make

the most of the tool. And then I want to remind everybody that getting into this stuff is free. I don't work for Splunk, I work for GuidePoint, but this is what I enjoy and this is what I talk about. And then finally I'll actually talk about what I do at GuidePoint Security, just to kind of round it off, if that sounds good. So, who am I? I already mentioned I'm the Splunk practice lead down here in the Southeast; we're a cybersecurity company. Say hi, we're hanging out, I'm in the chat. Thanks for the comment on the cool background; it's just hacker stuff. Prior to working with GuidePoint and doing

all this stuff, and even this Splunk stuff, I worked in data centers, Red Hat, web application security, and IR, but I spent a lot of my time reading logs with grep, and that taught me a lot about how to appreciate Splunk and similar SIEM tools today. I've got a bunch of letters: GCIA, GPEN, blah blah. We love letters, right? I do have a master's in cybersecurity; I'm a big advocate for punishment in higher education. And then finally some fun facts, right? I lead the local OWASP chapter here, I co-founded BSides Orlando eight years ago, and I love talking at conferences, so just add this one to the list. And then I'm a badge maker; that's one of my

hobbies. I've made badges at DEF CON, I make badges for BSides conferences, all kinds of fun stuff like that. In fact, I'm a goon at DEF CON, so if anybody ever sees me roaming the halls in a red shirt yelling at you, it's out of love, I promise. So let's talk a little bit about the history of logging, right? I love history. Fun fact: the syslog protocol was originally designed in the '80s, and it was put together by the Sendmail team. If anybody's familiar with old-school Linux, Sendmail is just this classic application for email transmission and stuff like that. The Sendmail team wanted to log their application, and so they put together the

initial standards for the syslog protocol. A lot of developers back in the day were a very tight-knit community, developing all these applications for the Linux environment and Unix and things like that, and so other applications at the time started to adopt this published standard that Sendmail was putting out there. They said, hey look, this is how we're doing things, and other people said, that's very clever, we're going to do it the same way you're doing it, right? Reuse a little source code, sounds great. And from that point it started to grow, and it just kind of became the standard

for any kind of Unix or Linux logging, right? If your application was generating log data, it was with this syslog protocol put out by the Sendmail team. In fact, it was just assumed for a long time that this was a big, publicized, well-documented standard. But surprisingly, for several decades the syslog protocol was being used and added to different applications, and it wasn't until 2001 that it finally made it into an RFC, and that's when it was initially documented. It was actually standardized in 2009. When you look back and put things in perspective, that's almost three decades of using a protocol before somebody actually said, hey, you know,

I think we have something here, there seems to be a lot of traction with it. So it's actually quite impressive how long these things existed prior to being published and documented as something that people would want to apply as a standard. If you want to read RFCs, that's totally cool with me; there are a couple of good ones out there. It's a lot of documentation, if you ask me. One of the cool things that I did find, though, while pilfering through the RFCs for my history story, is that they decided syslog can relay in a lot of different ways, and what's

interesting here is these different architectural models that allow data to be transmitted from where the log data is generated, the application, to where it is ultimately collected for review. They wanted to be flexible with that, right? In fact, if you look at this wonderful archaic ASCII art display here, it kind of looks familiar, especially if you're already familiar with any kind of similar logging tool. The concept of generating a log in one place and then relaying it through another system or application, whether that happens multiple times or even splits the data, has been well understood and well conceived for quite a while. And so, in my

opinion, this is the basis of how almost all modern logging and SIEM technologies are built today, and this is from two decades ago when it was published, and the idea is even older than that. So this technology doesn't even really look like it's changed much from when it was originally designed and architected, but it's kind of fun to see where a lot of these tools today came from. And that brings us to the concept of centralized logging, right? Why do we do this in the first place? Well, we collect these logs from all these different systems and applications, and they're put in one place. Okay, so you put all my logs in one place. Now what?
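The generate, relay, and collect model described above can be sketched in a few lines of Python. This is only an illustration of the architecture, not the syslog wire protocol: the `Collector` and `Relay` classes, the `originate` helper, the host names, and the message text are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Collector:
    """Final destination that stores every message it receives."""
    name: str
    received: List[str] = field(default_factory=list)

    def receive(self, message: str) -> None:
        self.received.append(message)


@dataclass
class Relay:
    """Intermediate hop that forwards each message to all downstream targets."""
    name: str
    downstream: list = field(default_factory=list)

    def receive(self, message: str) -> None:
        # A relay may fan out ("split") the data to several targets.
        for target in self.downstream:
            target.receive(message)


def originate(host: str, text: str, first_hop) -> str:
    """Generate a log message on a host and hand it to the first hop."""
    message = f"{host}: {text}"
    first_hop.receive(message)
    return message


# Two collectors fed through a chain of two relays (hypothetical topology).
central = Collector("central")
archive = Collector("archive")
core_relay = Relay("core", [central, archive])
edge_relay = Relay("edge", [core_relay])

originate("web01", "sshd: failed login", edge_relay)
originate("db01", "disk usage at 91%", edge_relay)
```

Because a relay only needs a `receive` method, relays can be chained to any depth, and a single relay can feed more than one collector, which is the flexibility the RFC diagrams are getting at.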

Right, well, you can store them long term. Oftentimes space was very scarce on systems back in the day, and it's not like the log itself has really changed much; it's a string, right? But a one-gigabyte hard drive back in the day was kind of a big deal, versus today, when we laugh at one terabyte. So offloading the logs, putting them in a central place, allowed you to save a lot of space on the host or system generating the data. And from there it just adds benefits, really. If something happened, you used to have to log in to every single box. So

imagine you're in a data center. We called them crash carts: we had to roll the monitor up and plug into every single system just to look at the data. Now you can review it all from one centralized place, no matter where it comes from, and you can search for values across multiple systems very quickly. For instance, you can look for an IP address and find out how many different systems logged that IP. So, very cool stuff, in my opinion, from back in the day. So let's talk about the shining star of today, I guess, right? What is Splunk? You have Splunk here at the top, and

it's an architecture of different moving components, right? You have your searching capabilities, which is that magnifying glass layer. People use the search console to look at the data and to build searches in the SPL language. Then we have what's called the indexing tier, and that's where these little green database-looking symbols are; that's where your data is stored. It comes into Splunk, it's parsed, and that data is then indexed. It's not quite like a database; it's a little bit different. There are some fun algorithms around it, and there are different types of searching, Bloom filters, and things like that, but the idea

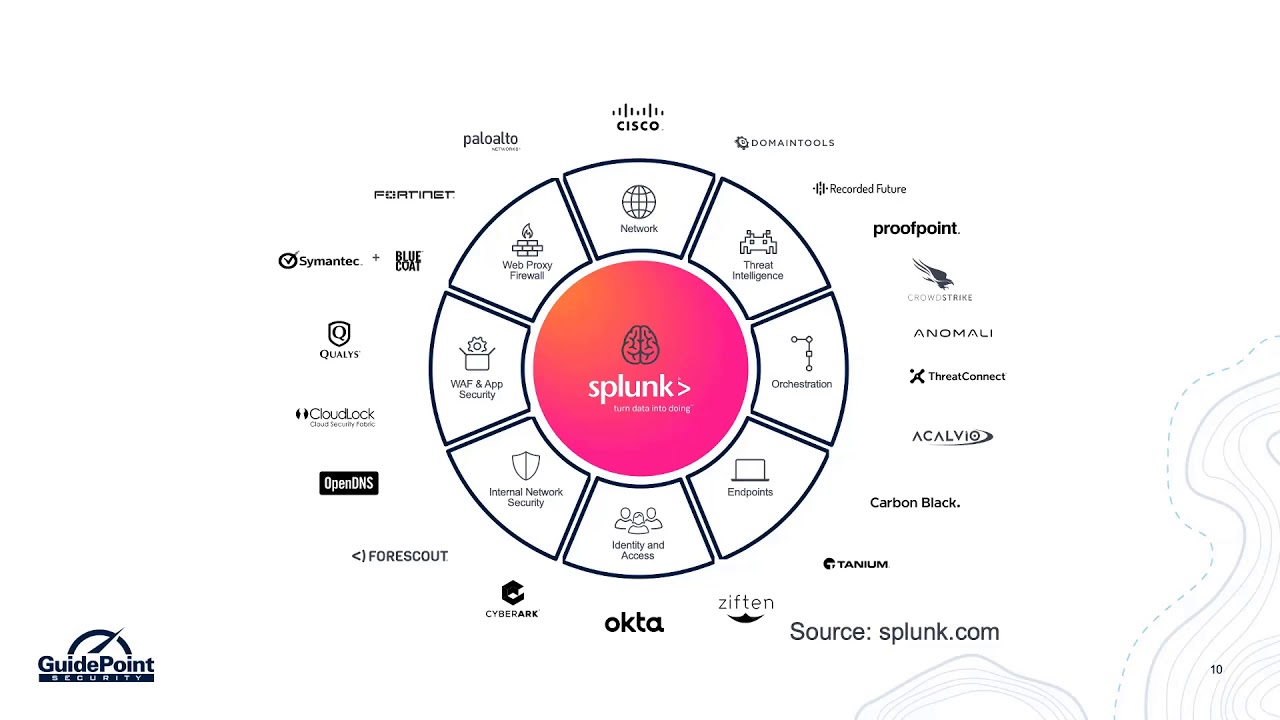

is that the data is stored so that it's quickly searchable based on all kinds of different keywords. And then finally, that third one, the gray area with the little Tux penguins there, that's what we call the forwarders, right? That's ultimately where the data is sourced from. So in that order: we source the data, we send it to our indexers, and then those indexes are searched by our search consoles. And that data that we're forwarding, that we're bringing in remotely from all over the place, we're talking about all your different systems and your applications and your databases and your virtualization and your firewalls, right? I mean, really, it comes

from a vast variety of places across your organization. Just to name a few, there are a lot of big names out there, and they've all worked with Splunk, and other SIEMs too, to get familiar with their logs and their log types and be able to send that data. So you have to look at these SIEM tools as something that has to have arms into every aspect of your enterprise. If you're not collecting data from everywhere, you can't paint the full picture, right? People today are just much more clever, as far as the different ways a business could be compromised,

whether it's your website, or directly into your network at a firewall, or some kind of API with a partner, or an insider threat, right? Or your endpoints, with somebody checking email and clicking the wrong links. There are many different attack vectors in an organization, and so being able to collect all that data and make sense of it is what that bigger picture is trying to achieve. All right, enough of that, let's talk tech. What is a log anyway? Why is this important, why do we care, and how does Splunk actually make use or make sense of this data? We call it machine data in the vernacular of Splunk,

but let's just throw this example out here, right? We have a log, and there are quite a few fields. Back in the day, I mentioned, I used grep. Grep is a very simple-to-use, regular-expression-based searching tool: either something is there or it isn't, and it shows you where it is. Yeah, there are a lot of other things to it, but you get the idea. But when Splunk looks at this log, it does a lot more. These green highlighted fields are actually Splunk parsing out the data. We've parsed a date and a time, and we've also been able to parse out bytes and command and department,

and customer name and result and PID and process. So there are a lot of rich fields, key-value pairs, in these logs that we can parse out with Splunk, and suddenly all of these variables become individually searchable. So instead of saying, I just want to search our customer logs in general, it's, I want to find logs where the sshd process was identified as a key-value pair. Suddenly we're becoming more granular and more specific in our searches, pivoting off of specific variables or key values rather than a larger string. And just like that, we've made our haystack a little bit smaller. It's always going to be a haystack

somehow, some way, but if you can shrink the size of that haystack, you will find that needle quicker and more effectively, right? The worst would be a needle in a needle stack, but that's a story for another day. So along with those fields that are broken out from a raw log when it enters Splunk, Splunk also takes a moment and tacks some, what I call, metadata on top of it. Every log has to have these three fields. The first one is host: where did this log come from? That's either the hostname resolved by DNS or an IP address. So even if an application on a system generates

the log, the host still had to be a computer sending that log to Splunk somewhere down the chain. So, what system did this come from? Now, source. Source helps define where the log originated on that system. A source could be a particular path to a file, so if we're reading a log file, that's what the source is. Now we know what system it came from and what file on that system it came from. And finally we have something called source type, which is the data structure of the log. So I know what system it came from, and I know what file on the system it came from, but do I know what type of data it

is? Now, that's very helpful, because classification and identification of data is going to help you quickly parse out what all those fields are. There are characteristics to each type of log. Furthermore, it's going to allow you to isolate: I only want to see the logs from my Palo Alto firewalls, or I only want to see the logs from my Checkmarx application security review. You get the opportunity to focus on a specific type of data, because you may have multiple types and multiple sources from a single host. So what do I mean by logs looking different? No, like, really, logs look different, right? We have an example of three different vendors here.
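As a rough illustration of the key-value extraction and the three metadata fields (host, source, source type) described above, here is a minimal Python sketch. It is not how Splunk is implemented internally; the sample log lines, the file path, the `sshd_syslog` and `cron_syslog` source type labels, and the helper names are all hypothetical.

```python
import re

# Matches key=value pairs; values may be bare tokens or double-quoted strings.
KV_PATTERN = re.compile(r'(\w+)=("[^"]*"|\S+)')


def extract_fields(raw: str) -> dict:
    """Pull every key=value pair out of a raw log line."""
    return {key: value.strip('"') for key, value in KV_PATTERN.findall(raw)}


def make_event(raw: str, host: str, source: str, sourcetype: str) -> dict:
    """Combine the extracted fields with the three metadata fields."""
    event = extract_fields(raw)
    # Metadata is applied last so it cannot be overwritten by log content.
    event.update({"_raw": raw, "host": host, "source": source,
                  "sourcetype": sourcetype})
    return event


# Hypothetical log lines in a key=value style.
events = [
    make_event(
        '2020-08-15 14:02:11 process=sshd pid=4242 customer="Acme Corp" result=failed bytes=512',
        host="web01", source="/var/log/secure", sourcetype="sshd_syslog"),
    make_event(
        '2020-08-15 14:02:15 process=cron pid=991 result=success bytes=64',
        host="web02", source="/var/log/cron", sourcetype="cron_syslog"),
]

# Search on a specific field instead of grepping the whole string.
sshd_events = [e for e in events if e.get("process") == "sshd"]
```

The point of the sketch is the last line: once `process` is its own field, the search matches only events where the process really is sshd, rather than any line that happens to contain that string.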

A FortiGate, right? That's a firewall, for some people. This FortiGate calls it source right here, srcip. But Palo Alto doesn't even give it a variable, a something-equals term; it just throws it in the log. So I need to know that that position in the log, that comma-delimited position, that field, is a source. That's where the source type definition comes in: being able to say, okay, this log looks like this, and this is where the data exists, and that log looks like that, and this is what that data is. And then finally something like Apache just throws that IP right up front. So each one is defining it a little

bit differently. Splunk is going to build a commonality across different source types using the Common Information Model, building these aliases or comparisons. A source IP in one log is going to look different than in a different log, but that doesn't mean we can't determine that they both serve the same purpose. That way we can actually search for one single value for a source IP specifically, not a destination IP or anything else, across different types of logs. So suddenly we are operating more efficiently in our searches, we're shrinking that haystack, and we're getting results quicker. Super cool stuff, right?
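One way to picture the aliasing idea is a small lookup of per-source-type extractors that all write to the same field name. The sketch below is a simplified Python illustration, not Splunk's Common Information Model itself. The sample lines are made up, the source type labels are hypothetical, the `src_ip` field name is an illustrative choice, and the comma position used for the Palo Alto-style line is an assumption rather than the vendor's real layout.

```python
import re


def src_from_fortigate(raw: str):
    """Key=value style: the source address is labelled srcip."""
    match = re.search(r'\bsrcip=(\S+)', raw)
    return match.group(1) if match else None


PALO_SRC_POSITION = 2  # hypothetical position in the comma-delimited line


def src_from_paloalto(raw: str):
    """Positional style: the source address has no label, only a position."""
    parts = raw.split(",")
    return parts[PALO_SRC_POSITION] if len(parts) > PALO_SRC_POSITION else None


def src_from_apache(raw: str):
    """Access-log style: the client address is the first token."""
    parts = raw.split()
    return parts[0] if parts else None


# Source type label -> function that knows where the source IP lives.
EXTRACTORS = {
    "fortigate": src_from_fortigate,
    "paloalto": src_from_paloalto,
    "apache": src_from_apache,
}


def normalize(raw: str, sourcetype: str) -> dict:
    """Attach a common src_ip field regardless of the original format."""
    return {"_raw": raw, "sourcetype": sourcetype,
            "src_ip": EXTRACTORS[sourcetype](raw)}


events = [
    normalize("date=2020-08-15 srcip=10.0.0.5 dstip=8.8.8.8 action=accept", "fortigate"),
    normalize("2020/08/15 14:02:11,TRAFFIC,10.0.0.5,8.8.8.8,allow", "paloalto"),
    normalize('10.0.0.5 - - [15/Aug/2020:14:02:11 +0000] "GET / HTTP/1.1" 200 512', "apache"),
    normalize('192.168.1.9 - - [15/Aug/2020:14:03:00 +0000] "GET /a HTTP/1.1" 404 0', "apache"),
]

# One search term now works across all three formats.
matches = [e for e in events if e["src_ip"] == "10.0.0.5"]
```

Note that the destination address `8.8.8.8` never ends up in `src_ip`, so a search on that field for a source address does not pick up destinations by accident.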

So how do we search in the first place, right? What is this that we're dealing with here? Searching is based on this language, the Search Processing Language, called SPL. There are over 140 commands, and there's all kinds of crazy stuff when it comes to it. But what's cool is that it is based on Unix and SQL. If you're already familiar with stuff like bash and you're already doing day-to-day stuff with SQL, any of the variants, the concept is a combination of the two. So pipelining your commands and doing Boolean-based logic is going to really accelerate learning SPL, so that you can be an efficient searcher. And so some

of those commands allow you to do stuff like filter, modify, manipulate, enrich, insert, and delete. If you're an old-school Linux fan like me, we used to call those cut, sed, and awk. But you don't have to do all those things separately; you can actually do it all inline. So part of that searching process is manipulating and actively working with the data. You can even create variables, you can do algorithmic manipulation, and you can even do testing, so if statements and cases and things like that. So it's very, very deep, and honestly I don't use all the commands, but there are a lot out there. And if somebody were to ask, what's your

favorite command? Transaction. It's pretty cool, check it out; maybe at the end I'll tell you more. So now that we understand that Splunk is bringing in our logs, parsing that data, identifying those fields, and allowing us to make those fields pivotable and searchable, we've gotten to the point of, okay, I'm ready to start performing my searches. But how do I really get value here? We invested in this expensive tool, or I'm at home experimenting with it, because it's free: you can bring in up to 500 megs of log data a day, and you can just run this at your house for fun, just to see what's going on in your network.
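The pipelined, Unix-plus-SQL style of searching described above can be mimicked in plain Python with chained generators. This is an analogy only, not SPL and not how Splunk executes searches: the `where`, `add_field`, and `count_by` helpers and the sample events are hypothetical stand-ins for filtering, creating a field with a conditional, and counting by a field.

```python
from collections import Counter


def where(events, **criteria):
    """Filter step: keep events whose fields equal all the given values."""
    for event in events:
        if all(event.get(key) == value for key, value in criteria.items()):
            yield event


def add_field(events, name, func):
    """Enrich step: compute a new field from each event."""
    for event in events:
        yield {**event, name: func(event)}


def count_by(events, field_name):
    """Aggregate step: count events per value of a field."""
    return Counter(event.get(field_name) for event in events)


events = [
    {"process": "sshd", "result": "failed", "bytes": 512, "host": "web01"},
    {"process": "sshd", "result": "failed", "bytes": 2048, "host": "web02"},
    {"process": "sshd", "result": "success", "bytes": 128, "host": "web01"},
    {"process": "cron", "result": "failed", "bytes": 64, "host": "web01"},
]

# Each stage feeds the next, like commands joined by a pipe.
failed_sshd = where(events, process="sshd", result="failed")
sized = add_field(failed_sshd, "size",
                  lambda e: "large" if e["bytes"] > 1024 else "small")
counts = count_by(sized, "size")
```

Each stage only sees what the previous stage let through, which is why filtering early keeps the later, more expensive steps working on a smaller haystack.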

You'd be surprised; your router makes a lot of logs. And so the big seller in the business space and the enterprise space is the dashboard, right? It's a visualization of that data. How do I present my data in such a manner that anybody can make sense of it? In fact, I threw up this executive dashboard here. Why? Because executives love dashboards: percentages, numbers, definitive answers, right? Is it a yes or a no, is it working or is it not, am I making money? And this is a great example of just taking data from different departments. We have IT operations, we have security, we have application delivery, business analytics, and IoT, and all of these

different systems are actively logging. You bring that data back, centralized, into Splunk, and then you make sense of it, right? So we can do trends and graphs and analytics, and we can determine the state of different things, all based on the log data that's generated. If we wanted to go down the security route, we absolutely can. Stuff like our firewalls and our VPNs are constantly generating data based on connection attempts. Where in the world are they coming from? Are people failing, are people succeeding? Or are we seeing ten failures in a row and then a success within a certain small time frame? Is that a brute force? The idea is,

if you can collect the data and you understand what it means, you can start to make sense of it. You can make it alertable, actionable, displayable, and then you can build what we call correlations, right? On the bottom left we have what are called notable events. That's a series of conditions that are predefined in a search query, so that when that event pops up, it creates an alert, a notification about it. So: SQL injection attack detected. Well, in theory, what does that look like? We would maybe look at our application logs that are web-based or public-facing, and then we would look for particular characters that are often

used in SQL injection, so stuff like single quotes, right? Why would that ever need to be in a query that's user generated? We're talking about the parameters here. And stuff like JOIN and things like that; those are often used in SQL injections. So you can say, hey Splunk, if these logs from this type of system have these kinds of fields in them, let me know. Just let me know when we're under attack. And so working with the data is much easier once it's in and once it actually makes sense, right? Oh, and by the way, I am watching the Discord, so if there are any questions

along the way, please feel free to shout out. I did see crcs mentioned the cool background, so you can also just say hey. Finally, here, a lot of people think, Splunk, it does security, right? But everybody's going to hate me when I say this: Splunk is not a SIEM out of the box. You can make it a SIEM, but the best part, in my opinion, is the versatility to make it whatever you want. For your organization, it's a tool that can be used by different teams. Let's take this example, for instance. IT operations has a web application here, so we have stuff like Apache and MySQL. That log

source can still be used by other people. Let's just take the Apache log as an example, for a web application. It can be used by security to look for SQL injections and other types of web-based vulnerabilities. It can be used by IT to monitor application health, response, uptime, 404 errors versus 200 successes. Are there pages missing? Are there experience and UI issues from the user space? And then finally it can be used by the marketing team to determine which pages are most popular, and when people land, where do they click next? There are a lot of different departments and teams within an organization that can use the one single

log source for multiple different purposes. And just to put it out there, on the pricing model for Splunk: I hate to talk about money and business and stuff like that, but once data is in Splunk, there isn't a charge to use it. So if you can figure out how to maximize the data that you already have in Splunk and get the most people using that same data set, that's free value, that's added value right there. Getting the data in is where the billing takes place. How you use this data, and how many people you can get enriching it and making sense of it for their departments, is entirely up to you, and that's one of the things that I love to

support. So, who uses Splunk? At the end of the day, we talked about how data comes from your security and your network and your databases, right? It's all these different things. Really, if something generates data or generates logs, Splunk can make sense of it and use it. So now that we know that, and we know that it can be used by different members of our organization, let's take a look at some examples here. Sorry, I wish I had my water somewhere. So, Domino's Pizza. I'm just going to call them out like that, right? More than half of Domino's orders today are digital. We do not call; nobody picks up the phone anymore. I

don't understand these millennials. We live in a different world: we are digital, we are operating online, and we expect it to work the first time, right? We're just kind of privileged like that. So it's used by their IT and their security, but what they're doing is monitoring that experience health, right? Is the app responsive? Is the website responsive? Because if a website doesn't work, you are bound to go somewhere else. If you log into a website and there's suddenly an error, you don't often continue to just try, like, well, maybe it'll start working. No, if your experience is

terrible, you lose business, right? It is very important for their stream and flow of successful transactions to constantly work and be very fast. In fact, Domino's most important day is the Super Bowl, and if they have an outage on the Super Bowl, that's what I call bad business. So Splunk is one of the many ways Domino's takes this stuff very seriously and uses it to make a better business. I hope everybody participated in the US Census, because it makes the country better. This year is the first digital census, all right? So it's every ten years, and this is the first time they're actually letting you go online and do this stuff, and the

US Census Bureau is making sense of that data. They're breaking down the analysis with all of that rich information, and so now they can start pivoting off of it by state, by county, by all the different statistics and factors that are part of the census. It's fantastic what you can do with this much data, as far as what it really means and how to analyze it quickly. We're not crunching paper and numbers anymore; the computer's doing the work for us. So it's super, super interesting how that is happening. Now here's another really cool one: Carnival Cruise Lines. They are using Splunk to look at every single system on the physical cruise

ship. What does that mean? I'm talking about the HVAC system, so the air conditioning, then the navigation system, and the water purification, so that when you open that sink or that shower, it's not salt water, right? They're monitoring the whole cruise ship from an IoT perspective, and this data is critical to the safety and security of these ships and all of the passengers on board. Another cool thing is they have all these new apps now, and so they can use Splunk to monitor the wireless experience, because the program is now in an app on your phone. You can see all the activities for the day, and based on your location in the

ship, they can suggest things that are nearby. So they're now, yes, from a business perspective, tracking you wherever you go on the ship, but that really does help your experience, because they can make things flow better so that you're not walking long distances all the time to get to the different areas you maybe want to go to next. They get a good understanding of how people interact and flow around the ship, to build better future experiences for you. And so all of this data, again, can be analyzed, parsed, put together, and tabulated so that you can determine the best way to handle things. Whoo, okay. On to the next. Let's talk a

little bit about value, all right? And I'm not talking about how to throw money at Splunk; I'm talking about how to make it pay for itself. The first one is Splunkbase. This is the de facto app store for Splunk. You can go onto the website, and this is where you download the apps and modules for Splunk. Let's be honest, at the end of the day there's only a handful, I think about five or six, of paid ones, and the rest are free. This is where you see the partnerships between major vendors and Splunk. So if you run a particular piece of hardware in your environment, type it in the search and download that

app that's going to plug into Splunk for that particular log type. That way, when you bring in the logs, Splunk is already familiar with them. This is the trick to making Splunk understand your logs and parse out those individual fields that we talked about. Now, you can go to the Splunkbase website, or you can use the Splunkbase that's built into Splunk. This is actually in the Splunk web interface: you simply browse more apps, it's the same searching functionality, and you can click install. With a one-click deal, you're importing these different parsers and apps right into your Splunk environment. Now, what's cool about Splunkbase is all these apps that are up there,

nearly 2,000 apps. I just checked a little bit earlier, before this talk, and yes, there are something like 1,970 apps and add-ons available on Splunkbase today, right? These different apps are going to help you make Splunk successful. Splunk by itself is a data parser, and you have to tell it, I'm getting logs from XYZ vendor, and here's the app that makes it parse those logs. So these apps are valuable, in my opinion, to making Splunk do what you were sold on, what you read about, what it's supposed to do from your perspective, in your view, right? Now, there are some apps that entirely just

demonstrate and visualize data. There are apps from certain vendors that show what is possible as far as visualization and processing of logs from their source, and then other ones are truly just background parsers. But the key here is that there is cooperation between the vendors and Splunk to process that data. When you get the app from XYZ vendor, you're now prepared to receive logs from that system, and it will make sense of them, right? It will break out those fields that you can search on. One of the other cool tools that I love is Answers. This is a Q&A forum that uses an upvote system

for the answers people provide, which is actually kind of gamified. Users can log in and participate and supply answers, and if theirs is voted the right answer to a user's question, they get points. There's actually some cool stuff that happens at the larger national conference if you're a big participant in this kind of thing. Oftentimes you start out here by searching: is somebody having the same issue, or how do I do this? And you might find an answer. Nine out of ten times I do find exactly what I'm looking for right here in Answers, but if you do not, that's okay too. You can ask, and the community will

respond. So, cool stuff there. And then finally, I made a little joke about RFCs and docs earlier, but will someone raise a hand if you've ever read the Microsoft Windows documentation thoroughly? I didn't think so. Splunk docs are actually pretty good. They are very well written, and they give you a lot of examples, visually, step by step, all kinds of different things like that. You would be surprised: if you just pop over to the docs and search for what you're looking for, you'll be provided a command-line version of it, a web GUI version of it, what it does, and the different caveats,

and they even have examples of different parsing code. Really, I'm absolutely impressed with the documentation of Splunk, and I don't say I like reading the docs of most things, but these are good. Oh, something else that's really cool: there is this Splexicon, the Splunk lexicon. There are so many words that are unique to Splunk architecture or vernacular that understanding what all of these different key components are, and defining them, is going to help you when you're talking to support, when you're talking to professional services, or even when you're talking to other Splunkers. Using the language of Splunk, you can get your message across appropriately. And so what are

some of those things defined as, right? Well, we have stuff like a search head; that is the name for the web search console. There are the indexers. And oftentimes this is comparable to other SIEMs, right? Splunk does it one way, and another SIEM does it similarly and just calls it something a little different. Universal forwarders, right? That's the agent, which can also be called a collector in other environments. A heavy forwarder is a Splunk system dedicated to receiving from other forwarders, so it's like an intermediary point. And then finally stuff like a deployment server; it's a really fancy term for an agent management console. But again,

learning these particular words and understanding where they play a role within the Splunk environment is going to help you talk to other professionals and get your point across, so that you're all on the same page. And especially if you're going to do any kind of education and training, which is free, you want to know what these different key components mean. So today you can go on splunk.com and take Fundamentals 1. This is free, self-paced training where you can get the lay of the land. It's stuff like navigating Splunk, and creating and using the different fields that we talked about being broken out, right? You can

get statistics from your data. So these are great examples of, okay, now I have my data, how do I start using it? It walks you through some of the capabilities and examples, like creating reports, dashboards, and alerts; that's the executive win right there. And did I mention it's free? So this is a great starting point, and it's actually the training required to get your first Splunk certification, which is called Splunk User certified. So when I'm working with a customer and they're telling me, oh, we have about ten analysts that will be using this Splunk suite, I say all ten of them need to go and take Fundamentals 1 right now. I don't think

they're allowed to log in until they've completed Fundamentals 1 because they're gonna have a million questions how do I do this how do I do that Fundamentals 1 is gonna cover all of those things and so this is your opportunity to get free training to your employees and save time and time is money from asking a million questions that may already be answered in this training material now Fundamentals 2 is the next step it's gonna get a little bit more advanced searching and reporting commands right we talked about getting into those SPLs and creation of knowledge objects and knowledge objects are those rich things the dashboards and those units we talked about transforming commands I mentioned

transform was my favorite visualizations right there's a lot of value there and then I think you have filtering correlating and data models the point is this is the next step now although it's not necessarily free to the public and everybody well Splunk does have a really good program for veterans and so if you are a veteran this second set of training is also free and so I would highly suggest you know taking advantage of this if you are in an enterprise that Splunk is already at why not take the free training if you are looking to learn more about Splunk thank you hours yes sir for posting up that link uh if you're looking to learn more about it

these are things that you can put on your LinkedIn right this is marketable stuff it's one thing to say that I've seen Splunk it's another thing to say that I took some training in it and I got a certification and that actually does mean something when you're looking at opportunities as like an analyst check it out all right so some closing remarks I always gotta shill just a little bit here but in my professional opinion the key to success is you get data in so we locate that source and we tag it with the appropriate vendor stuff out of Splunkbase then we validate and verify is the data correct and can you perform

basic searches on it okay you always trust but verify uh then step three is gonna be build those dashboards and reports this is where you prove worth this is where you make the investment into a piece of software actually pay for itself make Splunk yours and then finally let's deliver that success to management let's get some huge wins here all right let's show them why this tool or you know really anything like it is going to make sense of your data and bring value to your company hopefully even protect you too and alert you when bad things are happening so what the hell do I do at GuidePoint right well I do professional services I run an awesome team of

talented individuals and we do all kinds of cool stuff we help you out remotely we don't really do on-site right now nobody does um but uh once things come back we'll go back on site but projects and staff augmentation and we focus on you know Splunk itself we also focus on some of those premium modules like Enterprise Security ITSI UBA there's Splunk Cloud users and Phantom for automation right and so what are the things that I'm dealing with and yes you know I have to talk about what I do but I want people to also be inspired to seek out a profession in Splunk and Splunk Enterprise

and Splunk engineering because there's a lot of fun doing this stuff right so I'm doing architecture and I'm doing migrations installations upgrades tuning and helping other people you know take all these massive amounts of big data and parse it and process it and enrich it such that it makes their business better right it is such a cool thing to see the impact that you can have on a corporation um you know when I walk into an environment I try to look at it from a health check perspective so what is going on in your architecture what do your configurations look like what's your licensing are you clustered so all kinds of different

things and then I you know present that as this is where we're at today this is your baseline how do we get to that next step and let's build that road forward so that you are happy with the software you're happy with the product and you feel that experience and that value is really you know adding to your company so I wanted to take this opportunity you know as we wrap up here to take the next five minutes before our next kind of thing here to see if there's any questions out there and I want to again say you know thank you to the BSides Greenville team I live down in Florida but I have been to

Greenville it is a wonderful city and again I mentioned I have a big background in cybersecurity and BSides as well so love to be here but I see a question coming from Discord here you've been given access to Splunk and you want to watch over your digital flock any other recommendations on where to start beyond the Fundamentals 1 class especially schema searching good books references etc um so the big trick is I think like anything else the more you use something the more you get familiar with it the more you'll get the opportunity to learn about the little nuances and quick tricks and tips along the way so really look at what other people are doing in

your field by bringing in some of those apps with example dashboards when you download the dashboard for instance for Palo Alto it is full of what is capable with Palo Alto data and so you're not left on your own to try to determine it it's like okay great I've got the logs now but how do I you know where do I start what's the first thing I should be looking for right I need some kind of direction here and so those apps are often going to help you build out some examples of potential directions you can go and you may find that those examples are very useful to ultimately building out your ideal dashboard of my Security

Operations Center my command center there's a ton of great books out there about Splunk commands and I also do this stuff regularly so I'm out and about I do training sessions and webinars and so you know hit me up say hi tell me a little bit about what your challenges are and I'll do my best to point you in the right direction um you know and by that I'm not saying like I'm trying to sell you something I'm literally gonna say check this out you know look into these different things here's some other resources that are similar to kind of what you're ultimately trying to achieve um so you know I don't see any other questions

right now at this time resources also there's Splunk Answers and the community built around that there's even a Splunk public Slack so hop in there too you can track it down online right so again thank you so much my name is Jonathan Singer GuidePoint Security you can find me on LinkedIn and on Twitter and reach out if you have any other extenuating questions after this