GPT-3 and Me: Large Language Models for Defensive Cybersecurity

We have Younghoo Lee and Joshua Saxe from Sophos, and the topic today is "GPT-3 and Me: How Supercomputer-Scale Neural Network Models Apply to Defensive Cybersecurity Problems." Please welcome Younghoo and Josh.

Okay, can everybody hear me without straining to understand what I'm saying? Yeah? Okay, cool. I'll try to talk close to the mic, which is a little awkward because I'm tall. Okay, oh, the other thing was, is there a laser pointer here? Remind me what button.

Oh yeah, oh, awesome. Okay, that's great. So I'm here with Younghoo Lee, and we're going to co-present. First I want to say thanks to Younghoo: he flew here from Sydney in the last 20 hours and just arrived like an hour ago, so I'm happy he got here on time, and I'm looking forward to presenting together. Our talk today is entitled "GPT-3 and Me: How Supercomputer-Scale Neural Network Models Apply to Defensive Cybersecurity Problems." A little bit about us: I'm Josh Saxe, Chief Scientist at Sophos. If you like what you hear today, you're welcome to check

out our book, Malware Data Science. I wrote this with Hillary Sanders; it's about malware data science and security data science more generally. I've been doing cybersecurity machine learning research for a long time now. Younghoo, you want to introduce yourself quickly? Yeah, good afternoon everyone. My name is Younghoo. I'm a senior, sorry, I was recently promoted, I'm a principal research scientist. Yeah, thanks. Okay, so I'm going to lay out right off the bat the main arguments of this talk. First, and those of you who came to my morning talk heard me hint at this a little bit, I think two ideas that are new or gaining popularity in machine learning,

self-supervised learning and model scale, are fundamentally changing machine learning and what its capabilities are. I'm going to talk about the implications of that for security today, and so is Younghoo. Second, for those of us who are security data science researchers, I think an important new topic on our research agenda should be figuring out how large, self-supervised trained models apply to security. I think that's a problem for us to figure out over the next one to five or ten years, and hopefully I'll convince you of why, and so will Younghoo, in this talk. Third, I think the proofs of concept that Younghoo is going to show that are

built on large language models, and specifically GPT-3, really show promise and really prove out the thesis that large models are going to be important for our field. And lastly, this is not so much a thesis as a caveat: what we're presenting here today is early work meant to whet the appetite of our community around the potential impact of large models. Let me ask, how many of you know what GPT-3 is when I use that acronym? Okay, not everybody raised their hands, so: this is a kind of iconic large machine learning model that's

been built by an organization called OpenAI. It can't be run on commodity systems; you need a very large supercomputer to run this model. But it's showing some really novel capabilities with respect to natural language processing, and you'll learn more about it in the course of this talk. And then finally, by way of summarizing up front what we're going to say in this talk, Younghoo is going to demonstrate a couple of key results in our research. First, he's going to show that experiments we've developed at Sophos, where we both work, demonstrate that GPT-3 can do something

fundamentally new, which is successfully execute a small reverse engineering task: take command lines like this, which SOC analysts at Sophos and many other places spend their days looking through, and translate them into clear and accurate natural language descriptions, like this description on the right. If you just introspect and notice the cognitive load of trying to parse a command line like this, you'll notice that even for those of us who look at a lot of command lines like this, it takes some exertion to figure out what they mean. And it's significant that we found a method for using a model like

GPT-3 to translate that into clearly written text which explains what's going on. If you imagine the job of a SOC analyst as looking through hundreds of these suspicious command lines a day, it's significant that a large language model can be put to use in translating that into intelligible English. The second result that Younghoo is really going to deep dive into, which I think is really significant, is in a context in which you have very few training examples. So let's say you are attempting to detect phishing emails on a customer network and you have just a handful of benign examples and a handful of email examples from a malicious campaign;

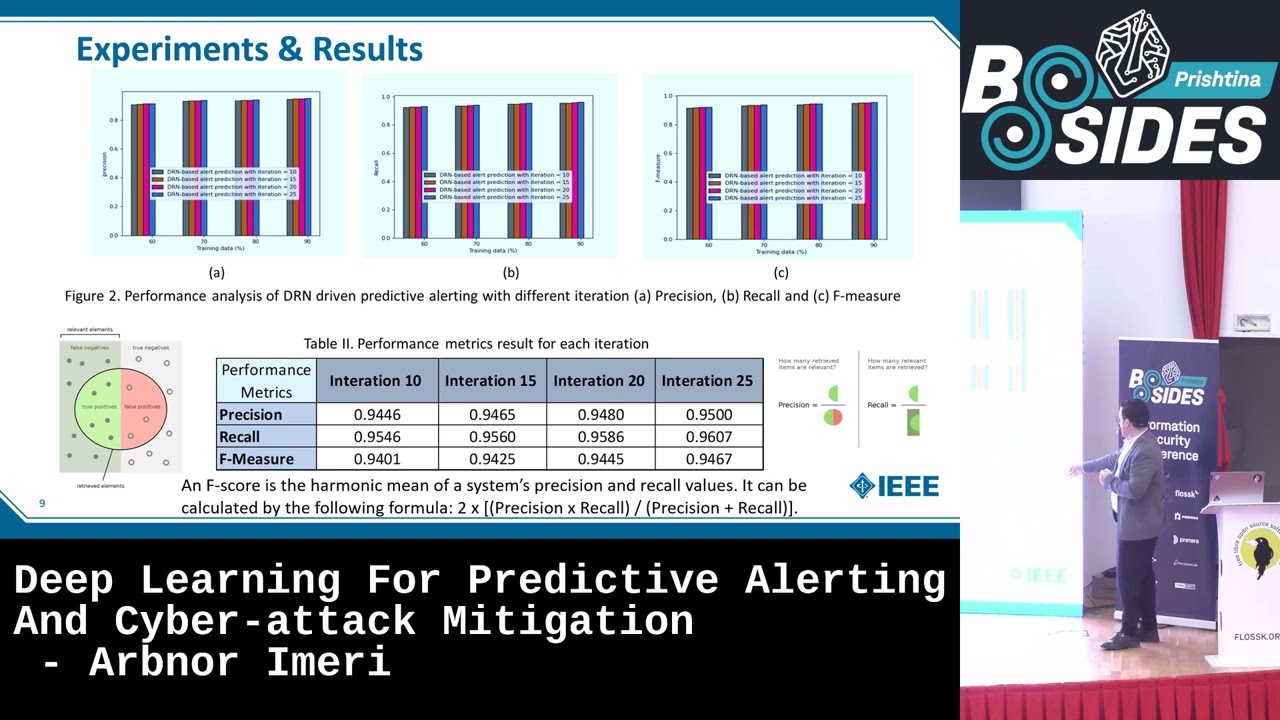

we can use GPT-3 to achieve a deployable level of accuracy in detecting other malicious emails. Younghoo is going to drill into this more deeply, but basically, on an accuracy metric like F-score, we can achieve something like a 0.9 or 0.95 result with a tiny handful of training examples, whereas traditional machine learning is just a non-starter here; it achieves a non-usable detection accuracy. So really these two key results are meant to provoke interest in applying large language models to defensive cybersecurity problems, and I think they suggest that more investment in the space is necessary, and I think they are significant in their own right in terms

of what we're building in data science at Sophos. Okay, so the format of this talk is that I'm going to give a bunch of background on large models, and then Younghoo is going to drill into the examples that I just talked about. Okay, so here's an important trend. I got this from the website Towards Data Science, which tracks a bunch of interesting metrics; it's a sort of meta-analysis of the scientific literature in machine learning. What this plot shows is that over time (on the horizontal axis we have time here) machine learning papers are proposing larger and larger models. This was sort of a log-linear

relationship between time and model size for a long time, and now all of a sudden we just have this explosion in large models being proposed by researchers. So just hang on to that fact: models are getting larger, and they're getting larger at an accelerating rate. I'm going to come back to that, but I want to point to another trend here as well, which is that we're also seeing these models being trained under a newly popular regime, a self-supervised regime. I'll get to self-supervised learning in a second, but basically its reason for being is that it helps to exploit

the internet-scale data sets that are becoming available to machine learning researchers. You have this non-linear trend in the amount of data being generated by computing devices and available on the internet. Self-supervised learning helps solve a problem for machine learning researchers, which is that most of those data are unlabeled, so we can't use traditional supervised machine learning approaches to take advantage of them; self-supervised learning allows machine learning models to improve even while the data are unlabeled. So here's what self-supervised learning is. Basically, what a self-supervised learning approach does is take unlabeled data and create a kind of artificial

prediction task on that data. For example, one approach to taking advantage of large image data sets where we don't have prediction targets, where we don't have, say, labels that say "this is a picture of a primate," is you just create a game: remove a patch from the image and then have the machine learning model try to fill in the pixels that we've removed, and then just repeat that over and over again for a given image. So, remove a patch here and predict it, remove a patch here and predict it, remove a patch here and predict it. And by training a machine learning model on web-scale image data sets

to solve this sort of artificial task of filling in the missing pixels, it turns out that the model learns useful representations of images. Because if you think about it, to solve the problem of predicting the missing patch here (maybe this is a better example, the primate's face) the model really has to learn a lot of useful semantics around what images actually mean. Really solving this problem of knowing what those pixels will look like means understanding what an ape's face looks like and inferring the relationship between the body of the ape here and the pixels in the ape's face. And it just turns out

that a model trained in this way on self-supervised data can then be used downstream to solve image classification problems, for example, better than a model that wasn't trained in that way. Okay, so outside of security, we're getting all these models that are very large and trained in the self-supervised fashion. Today's security data science models really live in this kind of size range. On this axis we have size, expressed in parameters. The models we deploy within the data science team that I manage at Sophos are all really within this range, and that's notable because what's happening in the research community outside of security is that folks are

finding that these models in this much larger size range are more efficacious. So this raises the question: how can we take advantage of self-supervised training, large models, and web-scale data in order to get the boosts in results that researchers in the image domain, the audio domain, and the language domain outside of security are getting? That's what this talk goes towards. We are experimenting with GPT-3 in this talk, which is about this size, so it's really up there on the vanguard of model scale in terms of models being used in the machine learning community. So I want to dramatize just a little bit

more what I mean when I talk about large-scale models trained on self-supervised learning tasks getting better results. Here I'm going to show some results from a model trained by a team at Google. This is called the Parti model; it's very large scale and was trained on web-scale image data sets. What this model was trying to do was, given an image caption, like the alt text that you find on images on the internet, predict the pixels in the paired image. So here we've got the caption: a portrait photo of a kangaroo wearing an orange hoodie and blue sunglasses standing on the grass in front of the Sydney Opera

House holding a sign on the chest that says Welcome Friends. To be clear, this image doesn't actually exist on the internet. What these researchers were trying to do with the sentence is see whether or not the model had learned so much about the relationship between text and imagery that it was able to generalize and create a convincing portrait of a kangaroo with all these attributes and the Opera House in the background. Now here's the interesting result. This model was trained in the self-supervised way, just to play the game of predicting image pixels based on their alt text. And with a model at the scale of 350

million parameters, and this is about, I don't know, 15 or 20 times larger than any model that we've deployed within Sophos products, it was able to do a pretty decent job. I mean, this looks sort of kangaroo-like; it's got the sunglasses in there; it's got a sign. It doesn't have the Welcome Friends text on the sign, but there are some letters on it, and it's got a vague visual gesture at a hoodie. It didn't really solve the problem, but it's impressive nonetheless. Now if we scale up to three billion parameters, it gets more interesting. This is unmistakably kangaroo-like, this creature here.

It's got the sunglasses, and the letters are actually getting closer to what the researchers asked for, which is Welcome Friends, which is remarkable. I'll just say, even at this level of success, the fact that a model merely trained to predict an image based on its alt text actually learned to write, actually learned to write some letters all on its own, I think is really remarkable. But still, we're pretty far off from completely solving the problem. Now once we get to 20 billion parameters, and this is at the scale where, you know, you need to fill up this

room with compute, with a distributed sort of GPU setup, in order to run a model of this scale, the model solves the problem. Here we really do have a kangaroo with blue sunglasses, the model has learned to write out Welcome Friends on its sign, and you've got the Opera House in the background. What's noteworthy is that these capabilities just emerged from the model playing this game of predicting the pixels from the alt text. Additionally, what's noteworthy is that the researchers here didn't introduce any clever new mathy ideas into their model;

they just scaled it up. The model, just by virtue of having more connective tissue between its artificial neurons, gained these capabilities: it gained the capability to write, gained a sort of memory of what the Sydney Opera House looks like and what kangaroos look like, and also learned to compose all those concepts together in an image. I showed this in the talk I gave this morning, but here's just another example: that same model, given the prompt "a map of the United States made out of sushi; it is on a table next to a glass of red wine," doesn't do

well in a low-parameter regime, but as you just scale it up without changing anything else, it learns to create a map made with sushi, with the wine glass, and learns to put a table in the background. So this is really significant, I think. It's significant for machine learning that we can now prompt models with natural language text and generate coherent imagery that really aligns with the semantics of the text. But I think the question we should be asking ourselves as security practitioners is, how does it apply to security? And the question that Younghoo and I are asking ourselves is, how does it apply specifically to the defensive

problems in security? I think it's easier to see how it applies to offensive security: if you want to generate fake social media content or fake Facebook profiles, you can use models like this to generate that kind of content. But how do we use it in the SOC, and how do we use it when we're defending real networks, is the question that we're asking here. I want to say, by way of summary, I'm showing sort of qualitative results around what happens when we scale models up. There's this very famous paper that came out a few years ago, "Scaling Laws for Neural Language Models," which came out of

OpenAI and shows this really beautiful relationship between scale and error rate. Here they're showing what's called a power-law relationship between model size and model error, where in this case they looked at predicting the next few characters in a document based on the characters that the model has seen so far. They're able to show that the error rate of models that are doing this autocomplete problem just goes down in this really smooth fashion as we merely scale up the size of the models, and this is a robust result that's been shown in a number of different domains. So, to sort of hone in a little bit

more on why this matters for cybersecurity, here's an experiment I did with the GPT-3 Codex model, which was trained on lots and lots of code, like GitHub-scale code from the internet. I wrote out this Python function signature and docstring. The function is called compute mean and standard deviation, so two standard summary statistics to compute, and then the docstring says, "compute mean and standard deviation on the input data in pure Python and render the result in flashy HTML on a page titled Don't Trust Summary Statistics." And then GPT-3 autocompleted and wrote the code correctly, which I think is interesting because

it's sort of like the kangaroo example, in that if mean and standard deviation are the kangaroo, and the HTML part, you know, "Don't Trust Summary Statistics," is the hoodie, the model sort of knew both of those concepts and composed them together into a function that will actually execute and do what I asked it to do. So we can see that these large models can also understand the technical domain of Python code when they get to a sufficient scale. This model is about 200 billion parameters in size, so very large, and has very high capacity. And then, I apologize to folks who

were in the morning talk, because I already showed this there, but here's an example of using GPT-3 to classify domains. Here I gave GPT-3 the prompt "berkeley.edu maps to education, Amazon maps to shopping, Netflix maps to entertainment," et cetera, and my goal was to get it to classify a new domain that it had never seen before accurately, just based on the five training examples I gave it in the prompt. So I gave it this new example, shootingrange.com, and asked it to complete the pattern, and it gave "weapons" as the answer. I think that's really significant for security. There are practical challenges to actually doing this right now, given how big

and costly it is to use GPT-3, but I think it's useful to think ahead. In a SOC, if you want to recognize new examples of a pattern that you're seeing, the fact that you can just type out five examples and then GPT-3 will sort of recognize conceptually equivalent content in the future is significant. You could write something out like this in natural language to go categorize all the domains that your users have looked at over the past day. And the tactical power of this over traditional machine learning, which requires tens of thousands or millions of examples, is really dramatic. The ability just

to write out a pattern and deploy a model very quickly, I think, is significant. Here's another example of using GPT-3 in this way, where it's given security examples of good domains and bad domains, like this PayPal sort of phishing URL or the Citi card phishing URL, and then it's able to detect, given a new domain, that it's bad, just given a handful of training examples. Just to emphasize: using GPT-3 to make detections in this way requires orders of magnitude less training data than using traditional machine learning. Younghoo is going to talk about that more

later, but we would need tens of thousands of examples to get a machine learning system functioning as well as we're getting GPT-3 to function with five training examples. So in contexts where you have just a few examples of a bad kind of observation that you need to detect, I think these large models are really significant. Now, in these examples I've been teaching GPT-3 to detect malicious observations using a method called in-context learning. In-context learning is just a fancy way of talking about giving a model like GPT-3 a prompt and then asking it to autocomplete. So here, for data scientists in the

audience, there are no gradients, there's no stochastic gradient descent, there's no backpropagation; literally we're just writing some text, asking GPT-3 to autocomplete, and getting our answer in this way. There's a whole body of research now around in-context learning and prompting large models in the way that I'm showing here in order to get predictions. Now, there's another way that you can adapt large language models to solve a problem like detecting malicious domains, which is called fine-tuning, and that does use the traditional deep learning optimization methods like backpropagation and stochastic gradient descent. Basically the idea there is, when we're fitting

a large neural network like GPT-3 to data, we initially fit it using a self-supervised learning procedure, like predicting the next few letters of a document given the characters in the document up to the point at which we're looking. We play that kind of self-supervised learning game with the model, and we use traditional neural network training, backpropagation and stochastic gradient descent, to get ourselves to a good region of the parameter space, so we're tuning all of our neural network weights in that way. And then we do a little bit more backpropagation and stochastic

gradient descent in order to solve a downstream task like detecting malicious domains. What we're finding is that a combination of in-context learning and fine-tuning tends to get us the best results; Younghoo will talk more about our experiments there. Okay, so just wrapping up: this is my last slide before I pass it over to Younghoo. Hopefully I've whetted your appetite for thinking about the significance of these large models and their significance for cybersecurity, and maybe folks, even now or subsequent to this talk, will have some ideas about where they might apply within cybersecurity.
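To make the in-context learning idea described above concrete, here is a minimal Python sketch of how a few-shot domain-classification prompt might be assembled. It only builds the prompt text; sending it to a model is left out. The function name `build_few_shot_prompt`, the exact "maps to" line layout, and the full domain spellings `amazon.com` and `netflix.com` are assumptions for illustration, and only three of the five examples mentioned in the talk are named, so only three appear here.

```python
from typing import List, Tuple


def build_few_shot_prompt(examples: List[Tuple[str, str]], query: str) -> str:
    """Build a 'domain maps to category' prompt that ends with an open slot.

    The layout is an assumption for illustration; the talk only says the
    prompt listed a handful of examples followed by a new domain.
    """
    lines = [f"{domain} maps to {label}" for domain, label in examples]
    # Leave the final label blank so the model is asked to complete the pattern.
    lines.append(f"{query} maps to")
    return "\n".join(lines)


# Example pairs named in the talk (domain spellings partly assumed).
EXAMPLES = [
    ("berkeley.edu", "education"),
    ("amazon.com", "shopping"),
    ("netflix.com", "entertainment"),
]

prompt = build_few_shot_prompt(EXAMPLES, "shootingrange.com")
print(prompt)
```

No gradients or weight updates are involved here: the "training data" is just the text of the prompt, which is the point being made about in-context learning.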

Here are some areas that we're thinking about. I've already mentioned using large language models to detect previously unseen attacks better than we're currently able to. Clearly, interesting capabilities emerge when we scale neural networks up to the level that I've described, and I think that's an area that we can be thinking in. Younghoo actually has done a bunch of work around building capability on top of GPT-3 that translates a natural language query, like "show me all outgoing SMTP connections on my network that are connecting from our machines in Australia to machines in Belarus." Basically, he's developed technology that allows us to

ask a question like that and have GPT-3 translate it into a structured query against a database. We've had some success there; I think that's a really interesting area. Obviously we're going to be talking about models that help reverse engineer obscure and obfuscated code. There are also "autocomplete on steroids" models for security operations: in cases where security operators are typing command lines, could we auto-suggest a menu of actions they might take using GPT-3? And I think there are a lot of other possible applications; I just wanted to throw those out in a brainstorming kind of way. So I'll pass it over to Younghoo now to talk in detail about the experiments we've been

doing with GPT-3. Yeah, thank you, Josh. The kangaroos, especially the 20 billion parameter one, were very impressive to me, especially as I'm from Australia. It was a long trip from Sydney to Las Vegas, but it is great to meet you wonderful people here. In the second part of our talk, I will talk about two cybersecurity use cases where you can apply a large-scale language model. Actually there are many different large language models, but we use GPT-3 as our large-scale language model. Usually it is too large for you to use directly unless you have a supercomputer, but luckily we can use the OpenAI API to

build a powerful application. Let me show you how you can build such a powerful application. Our first application is spam detection with GPT-3. When you start a new ML project, you will probably start with few samples. We had the same problem: we had just collected a few real-world malicious spam samples, but we wanted to build a new spam detector with those samples. Traditional machine learning models usually do not work with few samples. It's something like when you train a small puppy: you probably need to show him a lot of examples to teach a good skill. A smart dog with a lot of experience, however, will learn quickly with few

examples. So our large-scale pre-trained language model can do a great job with few examples, and I will show you how we can do that. As Josh mentioned earlier, we achieved impressive detection accuracy with our model. As you can see, using GPT-3 with only two examples, one ham and one spam message, we achieved about 90% accuracy, and with eight additional samples we achieved about 95% accuracy. It is really amazing. However, with one of the popular traditional models, the random forest model, which is a tree-based model, with only two examples you get only slightly better than random guessing. This is the

power of a large-scale language model. So I will talk about the details. These are our data sets for our evaluation and the model details. Teaching GPT-3 is quite simple. When you design a large language model, the architecture is quite complex; however, utilizing the language model is quite simple. It is as simple as designing the prompt data, and then GPT-3 can typically do an amazing job. For example, this is our example: we are going to translate movie titles into emoji icons. We provide some examples with a simple instruction. First the instruction, which says we will convert movie titles into

emoji icons, and then we provide some examples: Back to the Future with the relevant icons, and then Batman and Transformers. Then we ask about our custom input, Star Wars, and we get the correct answer. So this is really simple, but we can build a powerful application with the same approach. This is called prompt design or prompt engineering, and this is the step we will follow today. So let's design our prompt to detect spam messages. We start with a simple instruction: classify the message as spam or ham. This is really simple. Then we provide some examples; here we select one spam and one ham from our training

data. Then we add our test sample; the test sample says "free" something. With this input, the model can easily understand what the task is about: the task is to classify a message as spam or ham, and we've already provided the relevant information, so the model will easily return "spam" as our output. So this is a really simple step. We will show you some other examples from the model. With the previous prompt, we now test new messages. The message says "horizontal" something, so this is obvious spam, and our model detects it as spam correctly. And the next one, yeah, I can

see it is not malicious, so it is not detected as spam. This is a screenshot from OpenAI's Playground website, where you can test your input and output with some settings. One of the most important settings here is the model. We set the model to text-davinci; this is the largest language model OpenAI provides for text generation. So we used GPT-3 to detect spam messages. This is a simple use case that demonstrates the power of a large-scale pre-trained language model. The next use case is more complex than the previous simple one: we are going to generate a human-readable

description from malicious command lines. Josh mentioned earlier the SOC analysts who analyze a lot of malicious commands every day, and it's a really hard job. As you can see, we have one malicious command. It is really long and hard to parse, so it is really hard to understand the actual hidden intention of this one. Our goal is to translate this one into a human-readable description. So our question is: can large language models like GPT-3 make our analysts' lives easier by describing this command in simple language? Our answer is yes, it is possible.

So you can see the description generated by our approach. The command was complex, but now it is simple to read. The command creates a .bat file that has some malicious content, executes it, and deletes it immediately. This is one of the malicious behaviors attackers employ to avoid detection by file-based signatures. So we will talk about how we can generate these impressive descriptions. GPT-3 is actually a family of large-scale language models, and it comes in two different versions. The first one is the text generation version; these models have been trained with large-scale text data, so they can write interesting stories. The second version is

called Codex. These models have been trained with a lot of publicly available source code repositories, including GitHub, so they can write code in many different languages, including Python, JavaScript, and PowerShell script, as well as many other programming languages. Codex is our choice for our second use case, and we already used the text version for our spam detection. Okay, let's start with our simple prompt. We provide the command as it is in a command section, and in the second section we ask for a description. We carefully design the prompt so that GPT-3 can autocomplete the description, as GPT-3 is a

pre-trained language model, and a pre-trained language model is trained in the pre-training step to predict the next word. As you can see, this will guide GPT-3 to write a description of the command. Let's have a look at the result with this prompt. Now we can read it: the command will copy the exe binary to a temp folder. So it generates a valid description of the command. But actually the command is malicious, because it copies a system binary into a temp folder as Adobe.exe, so it is hiding some malicious activity here, but the model didn't catch that. So we are going to add some additional information to improve the quality. How can we do that?
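The two-section prompt described above (the command as-is, followed by an open description section for the model to complete) could be sketched in Python as follows. The section labels `Command:` and `Description:`, the function name, and the example command are hypothetical; the talk does not show the exact prompt text, and the command below is a harmless stand-in, not the one from the slides.

```python
def build_description_prompt(command: str) -> str:
    """Place the raw command in one section and leave the description open.

    Because the model is trained to predict the next word, ending the prompt
    on an empty 'Description:' section nudges it to write the description.
    The section labels are assumptions, not the speakers' exact wording.
    """
    return f"Command:\n{command}\n\nDescription:\n"


# Hypothetical stand-in command, used only to show the prompt shape.
example_command = r"copy C:\Windows\System32\example.exe %TEMP%\renamed.exe"

description_prompt = build_description_prompt(example_command)
print(description_prompt)
```

The prompt deliberately ends right after the description label, so whatever the model generates next is taken as the description.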

Our approach is quite simple. Usually those malicious commands will be detected by signature-based rules; we can use Sigma rules or YARA rules to detect them. In this case the previous command was detected by one of the Sigma rule signatures, and the detection name was Windows suspicious copy System32. The signature name carries a lot of important information about the command, so we are going to use this information to improve the previous description. So let's have a look at the output now. Oh, sorry, I forgot about the second version of our prompt. In this case we still use the same command

section, but we add one additional section we call tags, and we use the signature names as the tags. If one command is detected by multiple signatures, we add all of them as a comma-separated string. Then we ask GPT-3 to write the description. Now we have a better description: you can see that the command will copy the binary from the system folder to a temp folder as Adobe.exe, where attackers can use the binary to perform malicious activity. So now it correctly recognizes the malicious activity as well, and we have a much better description. Previously we set the model to the text

Davinci, and now we are using the Codex version, which is the code Davinci model. Also, as you can see, there are some other parameters, like temperature or top-p, the top probability parameter. When you change these parameters with the same input, you can generate multiple descriptions, maybe just slightly different. For our next step: we are not happy with this one yet, and we still want a better description. In practice we can generate multiple descriptions from the same input by changing the temperature or the top-p parameter, and then we select the best one out of the multiple candidates. So our approach is using back

translation. Back translation I can explain like this: say we train an English-to-French translation model, for example one that translates "hello" to "bonjour". It is not easy to directly judge the quality of the French output. But if we translate the French back to English, now it's clear: we can easily assess the quality of the French by comparing the original "hello" with the back-translated English "hi". We can apply the same mechanism to our problem: the command is one language and the description is another language. So we can generate the description as we did before, and then we can also generate the description, ah, sorry, the command

from the description. Now we can compare the original command and the back-translated command to select the best description. Okay, let me show you... oh, sorry, this one shows the overall steps. In step one, we generate multiple descriptions from one command using different settings of the model. Then we generate a command from each generated description. Then we rank the descriptions by a similarity score between the original command and the back-translated ones. This is our new prompt for the back-translation step: we provide the tag information and the description generated in the previous step as input, and then we ask the model to

complete the command. This way we can generate the back-translated command from the description. If the description has enough information, the model should be able to regenerate the original command. Also, if you do not provide any part of the command, you will probably get some random strings, so that's why we provide just the first word of the command in the prompt. So these are some examples. From the previous command we now have two descriptions, the first and the second one. The first one did not mention the exact file name, it just mentioned temp, so it failed to regenerate the command. As you can see,

the output file was not adobe, although it has the same command name. The second one, however, correctly mentioned temp and adobe in the description, so the model can regenerate the original command correctly. This way you can easily compare the quality of descriptions using the back-translation step.
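The prompts and the back-translation ranking described above can be sketched roughly as follows. This is a minimal illustration, not the speakers' implementation: the section labels (`Command:`, `Tags:`, `Description:`), the helper names, the example command, and the use of `difflib.SequenceMatcher` as the similarity score are all assumptions, since the talk does not say which layout or measure was used. The calls to the language model are left out; the back-translated commands are assumed to have been produced by the model already.

```python
from difflib import SequenceMatcher


def build_description_prompt(command, tags):
    """Forward prompt: command and tags, ending where the model writes the description."""
    return (
        f"Command: {command}\n"
        f"Tags: {', '.join(tags)}\n"
        "Description:"
    )


def build_back_translation_prompt(description, tags, original_command):
    """Back-translation prompt: tags and description, seeded with the command's first word."""
    first_word = original_command.split()[0]
    return (
        f"Tags: {', '.join(tags)}\n"
        f"Description: {description}\n"
        f"Command: {first_word}"
    )


def similarity(a, b):
    """Stand-in similarity score in [0, 1]; the talk does not name the actual measure."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def rank_descriptions(original_command, candidates):
    """Rank (description, back_translated_command) pairs, best match first."""
    scored = [
        (similarity(original_command, back_cmd), description)
        for description, back_cmd in candidates
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored


# Hypothetical command and candidates, made up for illustration only.
original = r"copy C:\Windows\System32\cmd.exe C:\Temp\adobe.exe"
candidates = [
    ("Copies a system binary to the temp folder.",
     r"copy C:\Windows\System32\cmd.exe C:\Temp\tmp.exe"),
    ("Copies a system binary to the temp folder as adobe.exe.",
     r"copy C:\Windows\System32\cmd.exe C:\Temp\adobe.exe"),
]
ranked = rank_descriptions(original, candidates)
best_score, best_description = ranked[0]
```

The description whose back-translated command is closest to the original ends up first, which mirrors the selection step in the talk: a description that omits the file name cannot reproduce the command and so scores lower.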

So this is another example of our description generation from a command. This one is quite interesting. Can you guess? And here you have the answer: this command will search files in the desktop folder and its subfolders, and then find files containing "password". So actually it is finding your passwords in the desktop folder, and you can easily understand the malicious activity in this one. So the second application also demonstrates the power of large-scale pre-trained language models: you can build powerful spam detection with just a few samples, and you can also create an impressive application which can turn a complex command line into a human

readable description. So, do you remember the title of our talk? The title of our talk was "GPT-3 and Me". It's our story. Now it's time for you to create your own wonderful application, so maybe the next chapter will be "GPT-3 and You". Thanks for listening.

If you guys have questions, we can both take turns answering. Yeah, please go ahead.

Yeah, we also tried to detect malicious URLs with the same approach. Oh, I'm sorry, so the question is: spam or malicious messages can include URLs and random strings, so is it possible to use those random strings to detect them? We also tried malicious URLs with the same approach. Usually malicious URLs have some random strings, for example something like Facebook but with some other random string attached. It still works: the model recognizes the random string as the malicious part. Yeah. In the examples that you explained about

so I understand that you use the GPT models, the GPT-3 models, for language and for code, but how did it work with URLs, meaning something that is not code and is not a language? And furthermore, if we want to use this approach for things like other malware detection, is there a simple, or not very complicated and expensive, way to do that? Yeah, so I think you guys heard the question. Thanks for speaking into the mic. I mean, I think it's really a problem for experimentation to know where GPT-3 will shine and

that said, my intuition is that GPT-3 and models like GPT-3 will do really well in cases in which the self-supervised problem they were trained on has sort of forced them into a really useful representation of the domain. So, I think the reason it did so well on that... oh, that's funny, you guys are seeing my social calendar here. So you guys are all welcome to come to Topgolf later. So I think the reason it did so well in that domain categorization example I gave, for example, is because it was trained on a web-scale text corpus and it was forced to learn,

quote unquote, that shooting range dot com is semantically quite similar to Firearms Warehouse, or whatever example I had in there. Now, if I was just to give it a bunch of hex from some software binaries, I wouldn't expect it to do particularly well at all on that, because it hasn't been trained in that way. Thank you. Yes, please go ahead, we have another question.

Yeah, go ahead. Oh, he's asking why we didn't use a text description rather than just the tag. So I think your question was: why are we just using a fairly non-descriptive tag, as opposed to a description, you know, a full-sentence description that is mapped onto the tag, but

anyway, right, yeah. So I'll answer, and then Younghoo, add whatever you like. I think the question is: what's the intuition behind the tag, and what's the model doing with the tag? So, first of all, the goal of this is not to detect bad versus good. It's, once you know something is suspicious, to have the model unpack all the obscure text in the command and give a clear English description of what's going on. Our intuition about the tag, and

this was entirely Younghoo's idea, was that if you give the model the tag in the prompt, you'll get the model thinking along the same lines that the analyst is thinking about the command, and then it'll write an auto-complete that incorporates the information in the tag. You might wonder, well, can the model really understand an abbreviated tag that's like "win underscore sus underscore password"? What we found is that yes, it seems to internalize something about what that means and reflect that in the description. Yeah, please go ahead.

like the logic like the rule matching logic

Yeah, I'll repeat the question. Regarding the tag: actually, the reason we use the tag as our additional information is that we only had that information. If we had a text description of the actual signature, it would probably be better to provide that, but we didn't have that information at the time, so we just used the tag as is. But as I also mentioned, I showed you some examples, like the previous one going from movie titles to image icons: we just provided two or three examples, but the model can do that. So actually, during

the pre-training, we provide, well, not us, I mean OpenAI provided a lot of data to the model, so it can recognize not only those complete malicious commands but also all the relevant information around them, because in source code there will be some tags as well as descriptions. So it can recognize all that information. Yeah, I mean, I'll just say this is part of a larger pipeline. You weren't seeing the pipeline in which we're matching signatures; that's just outside the scope of the research. But as a security company we match command lines against

in this case it wasn't YARA, it was Sigma, but, you know, rule-based systems. Oh, please go ahead. Yeah, did you fine-tune any of your models, or were you just using off-the-shelf models here? And secondly, I'm interested in how far away you think we are from super-large neural nets being used in production to improve security. Yeah, actually, for the previous spam example and the second one, command-line description, we only used few-shot learning, not fine-tuning. But we also tested fine-tuning with spam detection: with about 1,000 samples you get about 99% accuracy when you fine-tune the

model. So you can improve the product with fine-tuning. And what was the next one? It was, how long is it going to take? Oh yeah. The problem is, you can improve the performance, but the model is really slow, so it is not a good idea to use it for a real-time detection problem. But as you mentioned, in our case we collect a lot of samples, we cluster them, and then we select some representative samples. Let's say one cluster has 1,000 samples: we can select one command line and generate a description for that one, so it will be

easier for analysts to analyze it with the human-readable description. So it has some value, but still, in terms of the runtime, the speed is a problem. I mean, I think I'm agreeing with Younghoo here, basically. I think that some of this stuff, like what Younghoo demonstrated with the descriptions, could be used in the short term. Again, the question is volume and cost. I don't know how much we spend, probably something like a thousand a month on GPT-3, so we give OpenAI, you know, 12,000 a year, annualized, right now just to do this research.

And that's just us messing around in our research group, not deploying it at Sophos scale, where we've got, you know, half a million customers. Deploying at scale, all of a sudden the costs become really significant. What that means, I think, is that it's not that we can't use these models, but we need to use them in a discriminating way. A good analogy is malware sandboxes: you couldn't possibly run every email attachment that you receive in a customer base in a sandbox, but you use the sandbox very, very selectively in cases where you

already know something is quite suspicious. In the case of the description generator, you'd only use that in the context of new, previously unseen alerts that we think are really suspicious and that have big command lines attached to them that are hard to parse. You just have to work out the economics of it. So I think it's possible we deploy stuff like this really soon, but we have to use it very sparingly, and then in the future, hopefully, costs will come down. So I guess we can take one last question, and then I think we need to wrap it up.
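The selective-use idea just described (run the description generator only on new, clearly suspicious alerts with long command lines) could be sketched as a simple gate in front of the model call. This is only an illustration under stated assumptions: the `Alert` fields, the suspicion score, and both thresholds are hypothetical and are not taken from the talk.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    command_line: str
    suspicion_score: float  # hypothetical 0..1 score from upstream detection
    seen_before: bool       # True if an equivalent alert was already described


def should_describe(alert, min_score=0.8, min_length=200):
    """Return True only for alerts worth spending a model call on.

    Both thresholds are made-up defaults; in practice they would be tuned
    against the available budget and alert volume.
    """
    return (
        not alert.seen_before
        and alert.suspicion_score >= min_score
        and len(alert.command_line) >= min_length
    )


# Hypothetical alerts, for illustration only.
long_command = "powershell -enc " + "A" * 300
alerts = [
    Alert(long_command, suspicion_score=0.95, seen_before=False),  # new, suspicious, long
    Alert(long_command, suspicion_score=0.95, seen_before=True),   # already described
    Alert("whoami", suspicion_score=0.95, seen_before=False),      # short, easy to read
    Alert(long_command, suspicion_score=0.10, seen_before=False),  # not suspicious
]
to_describe = [a for a in alerts if should_describe(a)]
```

Only the first alert passes the gate here, which is the same economics as the sandbox analogy: the expensive step runs on a small, pre-filtered subset.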

Sorry, it's not really an exciting one, but have you tried this with sentiment analysis? I mean, you gave an example of... Yeah, yeah. Tried it with sentiment analysis? Can you say that again? So, I mean sentiment analysis: NLP, natural language processing, but more for figuring out how someone is feeling, or the emotions behind a post. Yeah, yeah. Instagram, Twitter, or something like that. So, in case anybody didn't hear, the question is: have we tried this on sentiment analysis? So if you have a document, you categorize that document in terms of the kind of sentiment reflected in it.

Yeah, I haven't tried those use cases, because I can only use our money for security applications, and we only have a monthly budget of, yeah, two thousand dollars. Anyway, you can find an example on the OpenAI website; there's one example of sentiment analysis showing how you can do that. Yeah. Well, I think we're out of time. Thanks for your questions and comments, everybody. Much appreciated.