Uncovering Azure's Silent Threats: A Journey into Cloud Vulnerabilities

So, hello everyone. My name is NES, and I welcome you to "Uncovering Azure's Silent Threats: A Journey into Cloud Vulnerabilities." A little about myself: I'm from Sikkim, India, and I'm really honored to be here at bide zabad. I work at Trend Micro with a focus on cloud and container threat research, so mostly studying attackers by building honeypots. I've been in the threat research space for around two and a half years now, and I'm also a member of null, an open security community. A fun fact: I wrote my first song in 2018 and my first exploit in 2009, when I wanted to win at a game

called Project IGI, like the host said. This is a picture from my last trek, which I went on with my friends: a 4 km trek, very small, but I have an ACL tear, so it was a very big thing for me. But why are you here today? Today I'll be talking to you about a journey of exploring the iceberg of a cloud-based machine-learning-as-a-service offering. To make things simpler, we'll go through a few episodes: an introduction to what we are going to talk about, the issues that we uncovered while exploring the service, and then the conclusions and takeaways. This is an expanded version of my

previous talk from Black Hat USA this year, and it is about the security issues that we found in the hottest machine-learning-as-a-service offering right now, Azure Machine Learning service from Microsoft. So let's begin the journey. There has been some buzz around cloud service providers fixing vulnerabilities reported in Jupyter Notebook-based services across Azure, AWS, and GCP. So is there something off, or something fishy, with notebooks in general? I pack my bags and head to the Azure portal, searching for services that are based on Jupyter, and I come across one interesting result that talks about running Jupyter notebooks in workspaces. And folks, this is where it all started.

In 2019, a Gartner report predicted a fivefold increase in the usage of cloud-based AI services by 2023, and in 2023 that kind of makes sense: companies are seeking to use AI in their products and services, they want to create ChatGPT-like chatbots, and they want to train LLMs on custom datasets. Even when ChatGPT was released, I was a little curious to know what the underlying platform looks like and how one can leverage the same platform, and this video clip from May this year kind of makes sense now. The AI offering in Azure looks something like this: on the top we have certain

problem-solving services, and at the bottom, like the Cognitive Services, we have machine learning models as a service. Everything that you see in the highlighted box is actually built on top of Azure Machine Learning service. AML is a mammoth of a service, so we'll go through some basic terms first to understand the issues that were found. It's a machine-learning-as-a-service offering, and it uses a mix of user-managed and Microsoft-managed services to function. In AML, you basically create a workspace, which is a centralized place to manage all your machine learning activities. You can access the workspace using a browser, the VS Code IDE, or the Azure ML CLI. But what do you

do in a workspace? You basically work: you create Jupyter notebooks, run your experiments, create and import datasets, and create training and inference environments, and you can also deploy these machine learning models as endpoints. You can do all of this just from the browser itself, so that's pretty cool. The workspace depends on certain Azure services on the user's end: when you create a workspace, resources for services like the storage account, Key Vault, and Application Insights are created in the user's subscription. The exception is the container registry; it's not mandatory to have an ACR, an Azure Container Registry, configured to create a workspace. So in AML, users or data

scientists basically perform MLOps, and these are the various compute targets that AML provides for a user to run Jupyter notebooks and training scripts or to host machine learning models as endpoints. You have compute clusters, which are clusters of virtual machines, so you can scale them as per your requirements. You can attach your on-premises Kubernetes clusters or AKS as well. You have attached compute, so you can attach any virtual machines that you have and run your scripts on them. Specifically for this talk, we focus on compute instances. Compute instances are basically managed Ubuntu virtual

machines that reside in a Microsoft subscription. By managed, we mean that these resources are maintained by Microsoft: patching of the host OS, and patching of any issues found in it. The OS image contains a lot of tools used by developers, like Jupyter, VS Code, Docker, Python, and various deep learning libraries. Basically, this is where you run your code and perform your model training. Each workspace has a storage account, which contains your machine-learning-related entities. To access the data in a storage account, you use something called a datastore, which is basically a reference to the storage

account for the Azure Machine Learning workspace. Using datastores, you can avoid storing access keys and SAS credentials in your scripts; you simply use the datastore to refer to the storage account. So it's just a reference. There are file shares and blob containers that are used to store notebooks, datasets, logs, and models; this is basically where all your stuff is stored. We can browse the storage account using Azure Storage Explorer and view the file shares and blob containers that are referenced by the datastores as well. These are certain default datastores. On the left,

you see workspaceartifactstore, which is the name of the datastore, and the type of storage it references is Azure Blob Storage. On the right, you have a screenshot from Azure Storage Explorer; just take note of the blob container names, azureml and azureml-blobstore-<UUID>. Similarly, here the datastore name is workspaceworkingdirectory, the type is file share, and the file share it references is defined in the box below, along with the account name. That's all fine. The datastores in Azure Machine Learning service support

credential-based authentication for file shares. This basically means that if you want to work with file shares using datastores, you use a combination of a username and a password, where the username is the storage account's name and the password is the storage account's primary access key. Additionally, multiple users can create compute instances in a workspace, and by design there cannot be two owners for a compute instance. Also by design, any user can access other users' files in the same workspace; it's a shared environment. The compute instances share the workspace storage account's file share, as the file share is mounted on all the compute instances in a workspace. So with this background and a

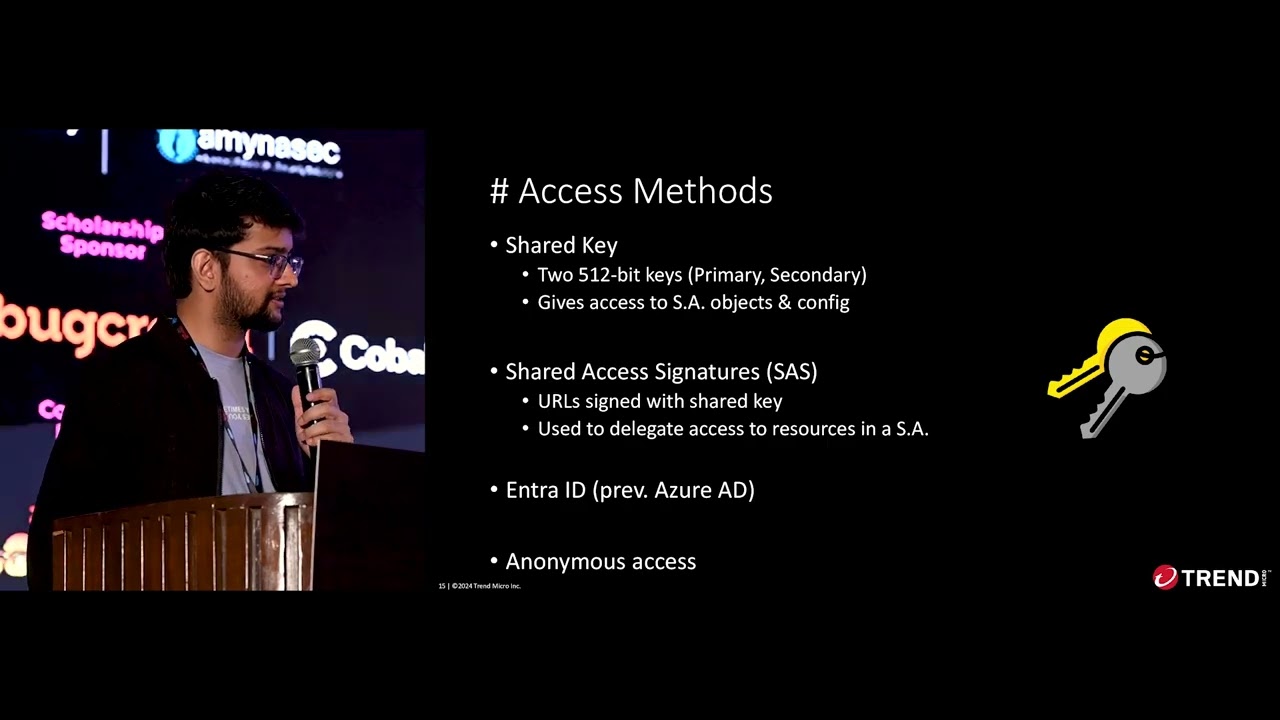

very simple, basic introduction to Azure Machine Learning service, we begin with the first episode, where a data scientist is looking for the keys. While examining the different folders and files on the compute instance, we come across a directory structure that looks something like this: applications, fsmounts, shared, startup, volatile, workitems. This directory structure belongs to Azure Batch, which is a different service, so we take a quick look at what Azure Batch is. Using Batch, you can run large-scale workloads in Azure with autoscaling and monitoring. For example, if you want to process images at scale and you want to have your 100

nodes, then you can do that using Azure Batch. These are some basic terms to understand as we proceed, because it's a journey, a journey of cloud vulnerabilities: we need to take certain pauses, get a little uncomfortable, and then learn again. Compute nodes are Linux or Windows virtual machines, and a group of nodes is called a pool. Jobs are collections of tasks that run on a pool of compute nodes, and you can think of a task as an individual run of a job. For example, if you are testing the output or the performance of a script, you run it 10 times, you

compare the metrics, and then you figure out whether the script is doing fine or not. That's a job, and each individual run of that job is a task; you can monitor and download the output logs for tasks. In Batch you also have the concept of a start task, which runs when a node starts up. The files, the configuration, and the output of the start task are all stored in this path in blue, and the output of these tasks is written to standard out and standard error log files. As we saw previously, the file share is mounted on a compute instance.
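Because a start task's output ends up in plain standard out and standard error files, auditing those files for anything secret-looking is cheap. The sketch below is a minimal, hypothetical audit helper (the function names are made up for illustration). It assumes the start task directory is passed in or read from the `AZ_BATCH_NODE_STARTUP_DIR` environment variable that Azure Batch sets on its nodes, and that the output files are named `stdout.txt` and `stderr.txt`. The two regular expressions are illustrative heuristics only: a `password=`/`PASSWD=` style option, and an 88-character base64 string ending in `==`, which is the usual shape of a 64-byte storage account key.

```python
from __future__ import annotations

import os
import re
from pathlib import Path

# Illustrative heuristics (assumptions), not an exhaustive secret detector:
# 1) password=/passwd=/pass= style options, e.g. in a CIFS mount command line
PASSWORD_OPTION = re.compile(r"\b(password|passwd|pass)=([^,\s]+)", re.IGNORECASE)
# 2) an 88-character base64 string ending in "==", the usual shape of a
#    64-byte Azure storage account key
ACCOUNT_KEY = re.compile(r"(?<![A-Za-z0-9+/])[A-Za-z0-9+/]{86}==(?![A-Za-z0-9+/=])")


def redact(line: str) -> str:
    """Mask secret-looking values so the line could be logged or shared."""
    line = PASSWORD_OPTION.sub(lambda m: f"{m.group(1)}=****", line)
    return ACCOUNT_KEY.sub("****", line)


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, redacted line) for each line that looks like it leaks a secret."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        if PASSWORD_OPTION.search(line) or ACCOUNT_KEY.search(line):
            hits.append((lineno, redact(line)))
    return hits


def audit_start_task_logs(startup_dir: str | None = None) -> dict[str, list[tuple[int, str]]]:
    """Scan a Batch start task's stdout.txt/stderr.txt for secret-looking lines.

    Hypothetical helper: assumes the start task directory is given explicitly
    or via the AZ_BATCH_NODE_STARTUP_DIR environment variable.
    """
    base = Path(startup_dir or os.environ.get("AZ_BATCH_NODE_STARTUP_DIR", "."))
    findings: dict[str, list[tuple[int, str]]] = {}
    for name in ("stdout.txt", "stderr.txt"):
        path = base / name
        if path.is_file():
            hits = find_secrets(path.read_text(errors="replace"))
            if hits:
                findings[name] = hits
    return findings
```

On a node, calling `audit_start_task_logs()` with no argument would scan the start task directory; the `redact` helper mirrors the kind of masking that a fix for credentials in command lines would apply before anything is written to a log.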

So we had a question: how is the Azure file share being mounted on the compute instance? Since it uses credential-based access only, it would be interesting to examine whether any credentials are being logged or stored in clear text on the compute instance. We found that the output of the start task is logged in standard error and standard out files in this path, and we saw that the storage account credentials were being logged as-is in the standard error logs. Since the command runs with sudo, it also gets logged in the authorization logs. We reported these two instances to Microsoft and waited. A few days later, we came across an

instance where the password field of the entire command line was masked. Note that the commands executed in the start task on compute instances are also authored by Microsoft. This fix left us wondering whether there were more instances of credentials being logged that we could report to Microsoft, so we started looking for more. Azure Machine Learning uses certain agents to manage compute instances. These agents are basically services used to diagnose, monitor, and make sure that the compute instance is healthy, or to troubleshoot things. They are located in the working directory under the start task, and they need certain configurations to

perform actions; they don't act on their own. These configurations are provided in the form of environment variables, which are in turn stored in files under the DSI folder in the working directory. We found that the configuration for two such agents, named DSI Mount Agent and DSI Idle Stop Agent, contained the storage account's primary access key. The environment variable was named PASSWD and contained the storage account key, and we wondered why it wasn't named something else, like password or storage account. It turns out that the password argument for the mount command can be supplied as an environment variable named PASSWD, and

this issue was found while trying to understand how the previous issues were fixed. Nevertheless, all the instances of storage account credentials being stored or logged in clear text were fixed after our reports. Since credentials being logged and stored in files had been acted upon and fixed, we had a thought: are credentials coming in to the compute instance at all? And that brings us to chapter two, "Wait, is that my token?" A user can access the compute instance from the browser using Jupyter Notebook, JupyterLab, or the browser terminal. These requests are actually proxied to the compute instance by a load balancer/proxy in between. So if you want to access JupyterLab on a compute

instance named AML in the East Asia region, the URL would look something like this. We do not have access to the load balancer's logic here, because it's maintained and owned by Microsoft. This is how the JupyterLab UI looks when you access it from the browser, and this is how the browser-embedded terminal looks in Azure ML studio. And yes, the default user, azureuser, can assume root privileges without needing a password; we'll use this bit later on. These browser-based terminals can be accessed by users with the proper role-based access control permissions, and the authentication flow for a client looks something like this: the client

logs into Azure AD, or Entra ID (maybe it's not a good idea to call it that just yet), and is presented with an ARM token, which is the Azure Resource Manager token. The client uses the ARM token to talk to the Azure Machine Learning service, which in turn generates a new token, the AML token, scoped to the workspace to perform AML actions, and this token is used from then on. All incoming requests to the compute instance are received by an NGINX proxy listening on a fixed port, 44224. It has SSL enabled, and certain certificates are used from the

highlighted paths above. Client-side certificates are validated by this proxy configuration, and there are certain checks on the subjects and issuers as well. The proxy checks whether a header named X-MS-Target-Port contains a numeric value; later, the value of this header is used to forward the request to the appropriate service. For all incoming requests whose URLs contain Jupyter-related APIs, the request is upgraded to a WebSocket, and requests are forwarded based on the X-MS-Target-Port header in the proxy_pass directive. So basically, an incoming request on the compute instance has this X-MS-Target-Port header populated, and the request is received by

the NGINX service listening on port 44224. Based on the value of this header, here 8888, which is also the default port for Jupyter, the request is forwarded to localhost, I mean 127.0.0.1. The interesting bit here is that in a default configuration, NGINX also logs your accesses and any errors that are generated. Cool. We found an issue using our good old friend tcpdump, viewing the network capture of what happens when you terminate a browser-based terminal. When you click close and hit terminate, certain requests are sent: a GET request, followed by a DELETE

request. If you notice, the GET request has a URL parameter named token, which contains a JWT. And whom did the token belong to? It belonged to the user; it was the ARM token. It turns out that the JWT of the compute instance owner was being logged in the NGINX access logs, because it was in a URL parameter. While trying to figure out why this was even happening, we saw in the Jupyter Server documentation that there are three ways to authenticate to a Jupyter server: one is to use an Authorization header, another is to pass the token as a URL parameter, and the third is to specify

the password in the login form. Since NGINX logs access attempts, the access log actually contained the JWT. We reported this issue to Microsoft, and they promptly fixed it. Now, after all, these are just credentials being logged or stored in files on compute instances; what could go wrong, and what is so risky about it? Developers have been observed sharing error or debug logs from their systems on public platforms like GitHub, often unaware that these artifacts may at times contain credentials, and in clear text at that. That's a silent risk they are being exposed to. In this example, we see certain folks discussing on GitHub; they are

requesting Batch error logs, which in our case contain the storage account access key in clear text. However, this instance is from 2018, so it's a pretty old one; let me show you something new. Does anybody recognize this URL? I cannot see the audience, but I'll assume no. We have a storage account name, and this is a SAS URL being used to access a file named fgvc-aircraft-2013b.tar.gz. So what is happening? We have a storage account name starting with robustnessws; the types of services that can be accessed on the storage account, that is, blob, file, queue, and table; the APIs that are accessible for

the storage account, that is, the service-, container-, and object-level APIs; and the permissions, which are root on the storage account, all from this URL alone. The expiry of this URL, that is, of its SAS token, is 2051. This URL is related to a recent finding by researchers from Wiz, who found an overly permissive shared access signature (SAS) token for a storage account in a public Microsoft GitHub repository. It allowed them to access 38 terabytes of data: because the token was so over-permissive, you could look into the entire storage account, which also contained backups belonging to two former employees, and

it was all over the news some time ago. By default, the storage account in Azure Machine Learning service is publicly accessible. And this is just speculation, but if you notice the blob container names, azureml-blobstore and azureml, those are similar to the container names that we saw for the storage account we were exploring in the introduction. Mistakes happen, and it's pretty human, but we need to keep learning and try not to repeat them. We have a couple more findings related to SAS tokens themselves that we'll hopefully be presenting sometime. Dependency confusion attacks with attacker-controlled code have been

known to exfiltrate credentials from files after gathering them. This is the case of a popular library called PyTorch: the nightly build of PyTorch was compromised in December last year, and the malicious binary would basically exfiltrate credentials from scripts and environment variables. It primarily targeted machine learning environments, because PyTorch is mainly used in that context. So if an attacker is able to compromise a file share, they can very trivially compromise the entire storage account and the entire workspace too. Let me show you how. Consider a scenario that could well happen: a workspace with three data scientists, each having one compute instance. By design, we know that each

workspace user's files are stored in the same Azure file share. If an attacker can get hold of a user (again a very high degree of compromise, but that's how you threat model things), or a compute instance that has these credentials stored or logged in clear text, or the file share holding your Jupyter notebooks and the scripts that run on compute instances, compute clusters, and everywhere else, then the entire workspace should be considered compromised. Now picture this alongside a scenario where users could view each other's JWTs being logged in the NGINX access logs: suddenly the issue becomes massive because of the design of the environment. According to the docs,

datastores provide a way to secure your connection information, such as storage account access keys and SAS tokens; using them, you don't need to store these credentials in your scripts, and that's a very cool feature. However, if the storage account key itself is being logged in error logs, authorization logs, and environment variable files on the compute instance, that changes things. And the default user, azureuser, can easily assume sudo privileges, so user privilege is not really a boundary here. The same credential-logging issue may have been present in your environment if you were using Azure Machine Learning service, but on Valentine's Day this year Microsoft released a CVE for this finding. Now the

JWT is not observed to reach the compute instance, and the storage account access key is not logged or stored in any of the files on the compute instance. Some takeaways: be it logging or storage, credentials in clear text are bad, even more so in shared user environments like Azure Machine Learning, and given the design of such environments we need to be aware of the risks as well. While using open-source tools, we also need to be aware of their configurations: the user's JWT reaching the compute instance is still fine, but logging the token in a file probably is not. Sensitive information should not be shared as URL parameters, as you never

know where there are inline proxies or where the request is getting logged; researchers pointed this out a very long time back. Additionally, developers need to be aware of the possible security implications of sharing information on public platforms. Credential leakage can turn out to be very critical, especially when users may not be aware of it, as we saw in the accidental leak of 38 terabytes of data by Microsoft AI researchers. With that being said, we now embark on the third and almost final chapter, "Spying the Scientist." In Azure ML, you can create compute instances in virtual networks; you can specify the virtual

network and the subnet during the creation of the compute instance. This is seen in scenarios where the training environment needs access to or from other Azure services via private networks rather than over the internet, and using VNets is also recommended by Microsoft in their documentation. A simple environment would look like this: a virtual machine lies in a virtual network in the user's subscription. Remember that the compute instance is a managed compute that lies in Microsoft's subscription, and it is on the same VNet. Let's say the virtual machine on the user's end gets compromised: what could an attacker do from the virtual

machine against the compute instance? Were there any ports open? Let's find out. We fired up our next favorite tool, nmap, checked for open ports, and came across port 46802. Since we have access to the compute instance, we are able to figure out which process is listening on this port: it's called DSI Mount Agent. The process runs as root, with high privileges, and it is written in Go. It is closed source, so it's not like WALinuxAgent or OMI, which have been talked about before, and we don't find any references to it in the public domain. But we have some debug information to understand what the binary is doing, as

the binary is not stripped, so we have the debug symbols. We crack open the binary in IDA to understand what is going on, and we come across a function that starts the DSI API service. This service exposes certain HTTP endpoints on that port, and just a reminder: no authentication is required for network-adjacent resources to access these URLs. Perfect. We start looking at the different URLs and try to figure out what they actually do. The first one executes the sync command on a file, the second one executes the mount operation, and the third one lets us view the compute instance's image version: nothing specific,

nothing interesting. But the fourth one lets us view the service statuses on the compute instance. Examining the services endpoint, one could list the status of each service installed on the compute instance, and we also see that we could view the logs of any service installed on the compute instance. So a network-adjacent attacker could list the installed services, the status of each one, and the logs for any of them, and this information could help them with reconnaissance for further lateral movement once they are in the environment after initial access. Okay, that's cool, but as an example of what could be exposed, here's a short

little demo of what a network-adjacent attacker could really see. While exploring the installed services on the compute instance, we see that Jupyter is installed as a service, and this is the case across any compute instance you use in Azure Machine Learning service. For each installed service, remember, we can view the logs from a network-adjacent position with no authentication required. It turns out certain commands were being logged by the Jupyter service, and folks, this is where I went, "Hmm, what just happened?" We saw commands being logged in the Jupyter service, and instead of Jupyter it could have been some other service as well. Simply put, let's

say an attacker gets onto a virtual machine that is part of a virtual network, and on the same virtual network you have a compute instance. The compute instance runs this agent called DSI Mount Agent, which fetches the systemd logs and exposes an API on a hardcoded port, 46802, across all interfaces. If there is a user, a data scientist, who uses any form of Jupyter, be it JupyterLab, the Jupyter terminal, or the browser-based terminal, to run commands with sudo, an attacker on the virtual machine could view the commands executed by the data

scientist. Now, if you remember, the mount command in the first episode was being run with sudo, so that's just one example of how this could be exploitable. This is a short demo of hacking the data scientist; we named this vulnerability MLSee. On the top, we have a compute instance where the data scientist runs sudo cat /etc/shadow, and on the bottom we are just fetching the logs for the Jupyter service from an attacker VM, and as you can see, we can view the logs. Now I will run the command to mount a file share on the compute instance, and the same command will also

get logged on the network-adjacent attacker VM. This issue was fixed, as a network-adjacent attacker could see the actions performed by a data scientist on their compute instance without needing any authentication. So this was a fun information disclosure bug. Now the service does not even expose the port on all interfaces; all traffic reaching the compute instance comes via the NGINX proxy listening on port 44224, and you need client certificates to get through the proxy. Okay, some takeaways: secret agents may lead to secret bugs, and hence an expanded, invisible attack surface, which is also a silent threat. Cloud service providers should also look into the

attack surface introduced by such agents in managed services like AML, where these agents may leak sensitive information. There's a need for focused threat modeling of agent features, because certain features may be very helpful for the cloud service provider but may add vulnerabilities even in secure configurations. Plus, practicing zero trust is hard, but it's crucial for cloud security, because the essence of zero trust is: do not assume that just because something is in your environment, it should have access to other resources in your environment. Additionally, simulating attacks in secure configurations (using VNets, for example, is one of Microsoft's secure recommendations) can at times help you uncover vulnerabilities

in the service itself. So the fourth chapter is "Can you really see me?", and I had to put John Cena up there, because why not. Let's say we have a virtual machine and a compute instance, and we want these Azure resources to access certain other services. One way is to hardcode credentials, but that's not recommended, so Microsoft recommends using something called a managed identity. The logic behind managed identities is that if you want your application to access a resource, the application requests a token for that resource from a managed identity service, and the returned token is then used to interact with the resource you want to interact with.
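To make the token request concrete, here is a minimal sketch of how code on an Azure VM typically asks for a managed identity token through the documented Instance Metadata Service (IMDS) endpoint, `http://169.254.169.254/metadata/identity/oauth2/token`, with the required `Metadata: true` header. It only builds the request and parses a sample response (the sample values in the usage are made up), and the helper names are hypothetical. It illustrates the general VM flow, not the compute-instance-specific path, which goes through a local responder process instead.

```python
from __future__ import annotations

import json
import urllib.parse
import urllib.request

# Documented Azure Instance Metadata Service (IMDS) token endpoint; the
# link-local address is only reachable from inside the Azure resource.
IMDS_TOKEN_URL = "http://169.254.169.254/metadata/identity/oauth2/token"


def build_token_request(resource: str, client_id: str | None = None) -> urllib.request.Request:
    """Build, but do not send, a managed identity token request for `resource`."""
    params = {"api-version": "2018-02-01", "resource": resource}
    if client_id:
        # Picks a specific user-assigned identity when more than one is attached.
        params["client_id"] = client_id
    url = f"{IMDS_TOKEN_URL}?{urllib.parse.urlencode(params)}"
    # IMDS requires the Metadata header; requests without it are rejected.
    return urllib.request.Request(url, headers={"Metadata": "true"}, method="GET")


def parse_token_response(body: bytes) -> tuple[str, int]:
    """Return (access_token, expires_on as epoch seconds) from an IMDS JSON response."""
    data = json.loads(body)
    return data["access_token"], int(data["expires_on"])
```

On a VM with an identity attached, `urllib.request.urlopen(build_token_request("https://management.azure.com/"), timeout=5)` would return the JSON body; from outside the resource the endpoint is not reachable, which is why no credentials need to be hardcoded in the application.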

The managed identity service can only be reached from within the resource, similar to the instance metadata service, so you as a developer don't need to hardcode credentials in your applications or scripts, which is pretty cool. You can assign privileges and roles to the managed identity you create, get a token based on that, and use the token to talk to the service you want to talk to. Managed identities are of two types: user-assigned and system-assigned. A user-assigned managed identity can be assigned to multiple resources in Azure, so it can span multiple resources, and a system-assigned

managed identity is tied to the resource itself, so it cannot span resources, and only the Azure resource can request tokens for either kind of identity. Cool. On a compute instance, one can sign in with the managed identity using the Azure CLI. But when one runs az login --identity, I was curious to know what the traffic looks like and what happens in the background. So we ran tcpdump, checked for any outbound traffic when running az login --identity, and got this GET request with some

interesting headers, including a secret header, but the request is still being sent to a local IP and port, as we can see from the Host header. From the port information, we pull out the process listening on 46808: it is named identity responder daemon. The process belongs to a service called the Azure Batch identity responder daemon; it uses a bunch of files with various environment variables, and it logs output and errors to syslog, which is helpful. But, responding identities? Hmm. While understanding the binary, we found that it fetches the MSI endpoint and MSI secret from a particular environment file under /etc. But even now, the request is still being

sent to a local interface. I was curious to know where the final request is being sent: is there some public endpoint for this? When it came to analyzing the binary, just for fun, I couldn't decide whether to dump the process, check the logs for the service, or reverse the binary, but since this is our game and we set the rules here, let's just go all in. Here we have the syslog entries for the identity responder daemon, and as you can see, it reads a file at /mnt/azmnt/.nbvm and fetches certain API endpoints, like one to get a new token from an East Asia regional API, and

so on. Basically, it fetches environment variables from these two files and talks to a public endpoint. The final request to fetch the Azure Machine Learning JWT looks something like this: we have the compute instance name as the instance ID in the POST body parameters, the certificate thumbprint, and the resource for which we are fetching the token, and certain certificates and private keys are used from this hardcoded path. So the identity responder daemon talks to this endpoint using a certificate and private key pair and fetches the AML JWT. Now consider a scenario where the certificate and the

key combination has been exfiltrated after a compromise. The thought is: can an attacker use these certificates from non-Azure environments to fetch the JWT? It turns out the attacker gets a 401 Unauthorized. So the assumption is that the certificate and private key are tied to the compute instance and cannot be used from outside it, and that's a good thing. But does the story end here? Not yet. Have you played the game Return to Castle Wolfenstein? This is my version: Return to Castle DSI Mount Agent. Please laugh. So now we take another look at our good old friend, DSI Mount Agent. The service

fetches its environment variables from the DSI Mount Agent .env file under the DSI working directory. This agent runs as a service on every compute instance, and its environment variables look quite interesting: keys, certificates, and some XDS endpoint. What is all this about? Checking the system log, we figure out that the DSI Mount Agent checks whether the file share of the workspace's storage account is mounted on the compute instance, and it does so every 2 minutes (every 120 seconds). But to work with file shares, we need credentials: the storage account name and the storage account access key.
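The mount check can be pictured as a simple polling loop. This is a hypothetical sketch, not the agent's code: `mount` and `is_mounted` are injected callables (the latter defaulting to `os.path.ismount`), and only the 120-second interval comes from the talk. In the real agent, the mount step is the one that needs the storage account name and access key.

```python
import os
import time
from typing import Callable, Optional

CHECK_INTERVAL_SECONDS = 120  # "every 2 minutes", per the talk


def watch_share(
    mount_point: str,
    mount: Callable[[str], None],
    is_mounted: Callable[[str], bool] = os.path.ismount,
    interval: float = CHECK_INTERVAL_SECONDS,
    max_checks: Optional[int] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Check the mount every `interval` seconds and remount when it is missing.

    Runs forever when max_checks is None; otherwise returns the number of
    remount attempts made during max_checks checks.
    """
    remounts = 0
    checks = 0
    while max_checks is None or checks < max_checks:
        if not is_mounted(mount_point):
            # Mounting an Azure Files share is where credentials
            # (storage account name + access key) are required.
            mount(mount_point)
            remounts += 1
        checks += 1
        if max_checks is None or checks < max_checks:
            sleep(interval)
    return remounts
```

Injecting `sleep` and `is_mounted` keeps the loop testable without real mounts or two-minute waits.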

It turns out the agent talks to an endpoint defined in the DSI Mount Agent's environment variable file, using the certificate and private key from the same path we saw earlier. While examining the outbound network traffic from the compute instance, and since we were able to craft this POST request ourselves, we only need to focus on the POST body, that is, the request type and whatever else the body contains. In this case, the request type is set to get workspace. From now on, the POST request URI and headers all look the same, so we'll focus just on the request body itself. By reversing the binary, we figure out that the function

that sends this request type is get workspace info. The response contains metadata about the workspace and can be considered the whoami of the AML workspace: you get the resource IDs for the storage account, key vault, Application Insights, and container registry, plus some metadata about the AML workspace itself. Later, while debugging the agent to figure out what other request types it could send, we found one more candidate, called get workspace secrets. What secrets are we talking about? This looks very promising. In the response, we get the storage account name and the storage account

access key, encrypted in the form of a JWT; more precisely, a JWE, the JSON Web Encryption format. Since we already have the storage account name in the clear, and the agent needs the key to mount the share, it's highly likely that there is some way to decrypt this token. Let's find out. After working out how the token is decrypted, this is how it looks in a nutshell: two environment variables defined in the environment variable files, an encrypted symmetric key and a private key, are used to derive the decrypted symmetric key, and that decrypted symmetric key can decrypt the response we saw on the previous slide to get the storage account access key.
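A JWE in compact serialization (RFC 7516) has five base64url segments: protected header, encrypted key, initialization vector, ciphertext, and authentication tag. The sketch below only splits and decodes those segments so the header can be inspected; the actual decryption with the recovered symmetric key (for example AES-GCM through a crypto library) is deliberately left out, and nothing here reflects the agent's specific algorithm choices.

```python
import base64
import json
from typing import Any, Dict


def b64url_decode(segment: str) -> bytes:
    """Decode unpadded base64url, as used by JOSE (RFC 7515/7516)."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def split_compact_jwe(token: str) -> Dict[str, Any]:
    """Split a compact JWE into its five parts; does NOT decrypt anything."""
    parts = token.split(".")
    if len(parts) != 5:
        raise ValueError(f"expected 5 JWE segments, got {len(parts)}")
    header, encrypted_key, iv, ciphertext, tag = parts
    return {
        "header": json.loads(b64url_decode(header)),   # e.g. "alg" and "enc"
        "encrypted_key": b64url_decode(encrypted_key),  # empty when alg is "dir"
        "iv": b64url_decode(iv),
        "ciphertext": b64url_decode(ciphertext),
        "tag": b64url_decode(tag),
    }
```

Reading the `alg` and `enc` header values first tells you which key-management and content-encryption steps a decryptor would need, before touching any key material.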

For this, I thank my colleague David, who helped me with the reversing. So, with the certificate, the private key, and the environment variables: if these three files are stolen, anybody could fetch the storage account access key from non-Azure environments. If these jewels are compromised, an attacker can fetch the credentials. But Azure Storage lets you rotate the access key, so does rotating the key help? We'll find out. I go to the Azure Machine Learning workspace, and there's a storage account associated with this

workspace. If I scroll down to the access keys, I'll first copy the access key that's already there. On the right you see a terminal on my laptop, where I have the certificates and environment variables already exfiltrated from a compute instance, and as you can see, I can fetch the decrypted storage account access key from a non-Azure environment (my laptop) using the certificate. Now I rotate the access key and synchronize my workspace to use the rotated key. I'll first copy it, just to show you that it's the same one, and then run the synchronization command, so basically

this will update the storage account key used by the Azure Machine Learning workspace. Now the synchronization has completed, so I'll rerun my PoC, and we can see that we got the rotated storage account credential using the same set of certificates. So if your jewels are stolen, even rotating the access keys doesn't help, and this is quite persistent (post-compromise, obviously). Is there a way to track the usage of this certificate and environment variable combination from non-Azure environments? We'll get to that in a bit. First, let's find out what else we can do: since we had get workspace info and get workspace secrets, can we find something new?
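Because the URI and headers stay the same and only the POST body's request type changes, the two request types seen so far can be modeled with one builder. In this sketch the endpoint URL, the header, and the `RequestType` key and its values are placeholders chosen for illustration; the real schema is whatever the agent's request-schema function generates.

```python
import json
import urllib.request
from typing import Dict


def build_xds_request(endpoint: str, headers: Dict[str, str], request_type: str) -> urllib.request.Request:
    """Build (not send) a POST whose body carries only the request type (hypothetical schema)."""
    body = json.dumps({"RequestType": request_type}).encode("utf-8")
    return urllib.request.Request(endpoint, data=body, headers=headers, method="POST")


# Same URI and headers; only the body differs between the two requests.
ENDPOINT = "https://xds.example.invalid/api"  # placeholder, not the real endpoint
HEADERS = {"Content-Type": "application/json"}
info_req = build_xds_request(ENDPOINT, HEADERS, "getworkspace")
secrets_req = build_xds_request(ENDPOINT, HEADERS, "getworkspacesecrets")
```

Treating the request type as the only variable is what makes enumerating other request types (next) a matter of finding which strings the binary knows about.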

The function get workspace secrets actually calls another function, generate XDS API request schema, which the same binary uses to generate the POST request body. So the idea was to look at all the cross-references to the function that generates the POST request body, which makes sense, and we got some candidates: get ACR token, get ACR details, get Application Insights instrumentation key, and so on. We found one of those functions, called get AAD token, in one of the binaries. To figure out what its request body looks like, we debugged the agent to find the arguments

being supplied, and we found resource, client ID, API version, and so on. So the request finally looks like this: the request type is set to get AAD token, and the request body contains nested JSON with the resource for which we are trying to fetch the token. That makes sense: you fetch a token for the resource you want to access. In the response, you get the Azure AD token of the system-assigned managed identity of the compute instance. This part was a little out of scope for the talk, since it's a recent development, but you could also specify the client ID in the request body itself.
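The nested-JSON shape described above, plus a quick way to see which identity a returned token belongs to, might look like the following sketch. The `RequestType`/`RequestBody` keys, the `getaadtoken` value, and the `client_id` key are assumptions. Decoding a JWT payload without verifying its signature is fine for inspection but must never be used to trust a token; standard Azure AD claims such as `aud` and `oid` show the audience and the identity's object ID.

```python
import base64
import json
from typing import Any, Dict, Optional


def build_aad_token_body(resource: str, client_id: Optional[str] = None) -> str:
    """Outer body whose RequestBody is itself a JSON string (hypothetical keys)."""
    inner: Dict[str, str] = {"resource": resource}
    if client_id is not None:
        # Selecting a user-assigned identity by client ID; the key name is assumed.
        inner["client_id"] = client_id
    return json.dumps({"RequestType": "getaadtoken", "RequestBody": json.dumps(inner)})


def decode_jwt_claims(token: str) -> Dict[str, Any]:
    """Return the JWT payload claims WITHOUT verifying the signature (inspection only)."""
    payload = token.split(".")[1]
    return json.loads(base64.urlsafe_b64decode(payload + "=" * (-len(payload) % 4)))
```

Omitting `client_id` corresponds to the system-assigned case; supplying it corresponds to the user-assigned case discussed next.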

That way, you could obtain the user-assigned managed identity's token from non-Azure environments. As you saw earlier, a user-assigned managed identity can span different resources, and at times it may be overprivileged. So the compromise of the certificate, the key, and the environment variables can also lead to fetching the Azure AD token of the managed identity, and you can later use that token to move laterally, depending on the privileges assigned. But why does all of this matter? While skimming through the documentation, we came across this statement: by design, only the Azure resource can use

the managed identity to request tokens from Azure. In our case, though, we are using the certificates and everything else from non-Azure environments, impersonating the managed identity or the resource, which deviates from what was expected. Quick recap: the DSI Mount Agent talks to this Batch XDS endpoint using a certificate and private key pair, and you could fetch the whoami of the AML workspace, the storage account's primary access key, the Azure AD token of any assigned managed identity, and a lot more. An attacker would need the certificate and the private key, which again is a very high degree of compromise, but we are just trying to

understand why this issue existed at all, because for the identity responder I was getting a 401, but for these requests I was getting a 200. So this is a demo of fetching tokens for system-assigned and user-assigned managed identities from non-Azure environments. Here we have the certificates and environment variables exfiltrated from two compute instances, which have user-assigned and system-assigned managed identities. First, I'll fetch the workspace's storage account credential. I'm doing this from my GitHub Codespaces instance, and as you can see, I'm able to fetch the storage account key as well. Now I can fetch the storage

account, I mean, the AML workspace's metadata: this is the whoami of the AML workspace. This is followed by SAMI, the system-assigned managed identity, and this is a very recent demo. We are fetching the system-assigned managed identity token from a non-Azure environment using the certificate and private key, and we get the JWT in the response. This was a deviation from the design and from the expectation that we had. Similarly, we do the same for the user-assigned identity: in this case, we just fetch the JWT as is, and as you can see, we can fetch the token, deviating from the documentation and the

expectation of how managed identities are supposed to work. This issue is still under analysis by Microsoft, but the issues were reported via ZDI, which has a strict disclosure policy of 120 days, I believe, and it has been over 120 days. So how did the logs look? You may be thinking: if the certificates and the private key get exfiltrated, I can still figure it out from the logs. Let's see. Here is the legitimate activity, where we fetch the JWT of the managed identity assigned to the compute instance, and here we have the malicious activity, where the attacker has already gained access to your credentials, that is, your

certificates, and we fetch the token from a non-Azure environment. Well, the logs were not very different. This is the diff between the log generated when the attacker does it and the one generated when we do it from a compute instance, and the activity details don't have any sign-in information: there's no IP address to tell whether the request was made from a non-Azure environment or from within Azure. So the observation was that the logs are almost identical and the location information is missing. Moreover, the certificate we saw is valid for two full years, and to invalidate a stolen certificate you need to delete the compute instance.
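The near-identical log records described above can be compared field by field. The sketch below assumes each record has been exported as a flat dictionary; the field names in `ORIGIN_FIELDS` and in any sample records are hypothetical, not the exact Azure log schema.

```python
from typing import Any, Dict, List, Tuple

# Fields that would reveal where a request came from (hypothetical names).
ORIGIN_FIELDS = ("callerIpAddress", "location")


def diff_records(a: Dict[str, Any], b: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {field: (value_in_a, value_in_b)} for every field that differs."""
    return {
        key: (a.get(key), b.get(key))
        for key in sorted(a.keys() | b.keys())
        if a.get(key) != b.get(key)
    }


def missing_origin_fields(record: Dict[str, Any]) -> List[str]:
    """List origin fields that are absent or empty in a record."""
    return [field for field in ORIGIN_FIELDS if not record.get(field)]
```

If the only differences are incidental values such as correlation IDs, and every origin field is empty in both records, the logs alone cannot separate the legitimate request from the attacker's.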

If there are any overly permissive identities, this can open up avenues for lateral movement, privilege escalation, anything you can think of. So, some takeaways from this fourth episode: you need to have your cloud service logging enabled and in place, because it is the most important artifact you can leverage to figure out what happened in your environment, and the logging for managed identities could be done better. You need to scope your identities following the principle of least privilege. I know we hear this a lot, but when you think of attackers who are quite persistent, who want to stay stealthy, and who look for issues like this, you need to follow these principles and have your basics in

place. Defense in depth for cloud environments is very important and a very good win, and you can definitely threat-model your environments for possible compromise scenarios, which can uncover such differences in the design itself. We found some bugs that rarely make the news and that got fixed silently, and we tried a few angles as well. So where do we go from here? You need to set up your environment using VNets, private links, and private endpoints. For issues like the storage account access key getting logged, the JWT getting logged, and the agent exposing sensitive information, having your defenses in place can help you reduce and

eliminate these vulnerabilities at times. You need to focus on and follow secure deployment strategies and, obviously, go defense in depth. It sounds pretty generic, but it's very important. In fact, Microsoft made it very easy to be secure by default by offering network isolation options when creating an AML workspace in the Azure portal itself. You need to monitor your cloud environments for changes, such as storage account keys getting rotated or public or anonymous access being enabled. You need to perform regular audits as well, and set up logging using cloud-native solutions. You can leverage frameworks like the Azure Threat Research Matrix and the threat matrix for storage services. Trust but verify: you may all love this, but you need to

verify the integrity of Jupyter notebooks and scripts, because the threat landscape for machine learning systems looks a little different from what we have observed before. You need to examine managed services to uncover silent threats. This is also for researchers and bug bounty hunters: just take a look, bring your web application skills, and maybe you'll find something cooler than what I found. And obviously, you need to implement the principle of least privilege. Specifically for machine learning environments, you can leverage the MITRE ATLAS framework, which is like the MITRE ATT&CK framework for machine learning. As companies tend to move towards leveraging such services, it's important

that we don't mess this up. There is a page with a threat matrix for storage services, which covers just the storage offering from Microsoft and documents well-known tactics, techniques, and procedures that adversaries use against these types of accounts. These are some resources to begin with. ATLAS also lists various case studies of simulations and incidents focused on ML environments that can help you understand and secure your own deployments. Finally, the acknowledgements: I really want to thank my family, friends, and colleagues for helping me out in times of doubt, and the Zero Day Initiative for triaging and for the smooth communication of the bugs with Microsoft.

There are a few more high-impact bugs in disclosure that we are excited about for next year, so stay tuned. Thank you, David and Magno, for all the help. It's not a question of if a vulnerability like this gets exploited someday; it's a question of when. We need to secure our present first. And with that, thank you so much for attending this talk.