Virtual Assistants, Real Threats: The Cyber Risks Of Corporate AI Chatbots

Show transcript [en]

Okay. Hi, my name is Adora Uch. I'm a GLC lead at THG. Um, I have about 15 years experience in the tech space and um, I'm not the best at introducing myself to be candid. So, you can feel free to ask me any questions about myself, but for the sake of time, I could just delve right in. But before I do that, how is everyone doing? Kicking great. I hope we're enjoying this site so far. Okay, so our topic for today is virtual assistance, real threats, the cyber cyber risk of corporate AI chatbots. Um, let me start by asking a question. Do we enjoy interacting with chatbots? Does anyone enjoy interacting? We don't, right? But I don't Oh, you do.

Interesting. What do you like about I don't I wouldn't say [Music] yeah to be fair they can be quite um they can save time sometimes depending on the on the platform and how they've set it up. Um I know that for instance when I have to call my broadband um service provider for a new contract or whatever you know that particular company their chatbot is quite efficient because they save me time and just redirect me to the right company. But other than that, sometimes they don't exactly provide the solution or the answers that um we may be looking for. Anyway, welcome to today's presentation on um virtual assistants. We'll be talking about the cyber risk of

corporate AI chatbots. So these chat bots are not going anywhere unfortunately whether we like them or not because AI is the new wave and is here to stay. So it's interesting to note that 76% of enterprises now deploy corporate chatbots for customer service um for um internal employee assistance for workflow automations knowledge management. These chat bots are helping to ease the amount of um demand on human resources and in 2023 82% there was an increase in the adoption of virtual chatbots by 82% among corporate organizations. So what makes them particularly powerful is their access to vast amounts of corporate data as well as their integration into multiple back-end systems. However, with this with the efficiencies that they also provide,

they also pose surprising security risk to um our infrastructure and our systems. and we'll be taking a look at how these happens and some real life scenarios that have happened. So basically once you have access and integration into the system there is vulnerability and when we look about when we look at business requirements for today um what every business owner in the respective division is looking to achieve is you know have data access across systems more personalized customer services um fine-tuning these chatbots in such a way that they understand and can interpret and communicate in a way that we can understand as human. That's the natural language processing. Um 24/7 automated operations as well. The other day I was going to I

was going to purchase something from Curry's and I made a mistake in my purchase and it was late at night. So I thought I had to wait till the next morning to ring the uh customer service. Fortunately there was a chatbot. There was a VA available to you know assist me. So 24/7 automated operations because that's the world we live in now. Everyone wants you know instant service. No one wants to wait. So I mean they have it it has its advantages but on the back end of this right are the security implications. So when we have more access to data across systems what happens is that um attackers have an expanded attack surface. So they have more area coverage

to try to exploit. When we're talking about personalized customer services, it means that these chatbots will have access to personal information both sensitive including sensitive personal information. So there are risk in handling these personal information and for natural language processing getting the AI to relate, communicate, understand the nuances of human communication. The major vulnerability there is prompt injection. So people or nefarious individuals can inject um malicious prompts into the chatbot system. And for 24/7 automated operations, of course, that means that we have less human interaction, less human oversight of what is actually going on there. Now we're going to look at real what this has how this has led to real world exploits and the most popular one is Air

Canada in 2023. Okay. So it was a high-profile case that received international attention. Um the the the chat bots confidently informed customers that they were due entitlement to bereavement bereavement fair refunds for their loved ones that may have died as a result of maybe a flight a plane crash or something like that. And it even went ahead to site non-existent policies from Air Canada's website. And that's one thing about AI. AI is prone to hallucination and um yeah hallucination where it just brings up something that doesn't exist and presents it in a way that it looks really real and that was what happened with the Air Canada case. So they tried to refute it. It led to a

court action when they refused to pay pay out the people that sought um the what I call it now sought to you know get that refund from them. They tried to refute it alert to a court case action and the court insisted that Air Canada will be responsible for for that because they are responsible for anything including what AI and information including AI systems disseminates to the public as well. So this led to a heavy fine for Air Canada. The second case is a financial services chatbot. There was a breach in this one because the the attacker used prompt injection. What I talked about briefly earlier on um used prompt injection techniques to gradually manipulate the conversation boundaries

of the chat bots. So by incrementally pushing these boundaries, they eventually coerced the system into revealing personal information and transaction details for customers. Of course, this led to a fine for the company because customer data was leaked. And the sad sad thing about this was this wasn't discovered until after 3 weeks. So the impact was $3.7 million in remediation cost and regulatory fines for the company. So if they could have for instance instance had prompt validation techniques in place and monitored what their AI chatbots were were um returning the information it was giving out. This could have been avoided. We'll talk about you know security techniques that can be put in place to further you know boost the security

struct um posture of such AI systems. My next case is um a social engineering enhancement. So here um attackers weaponized the chatbots through social engineering. What they did was to um include fishing links, include fishing links that redirected customers to a fake website and it led to ident um um stealing of personal information um identity theft issues, payment card details being stolen by those people. So this resulted in credential theft like I said. So there was customer credential harvesting um denial of service as well within the company. So those are some examples of how you know AI chatbots when not properly or securely managed have led to you know major security incidents and data breaches within organizations. So

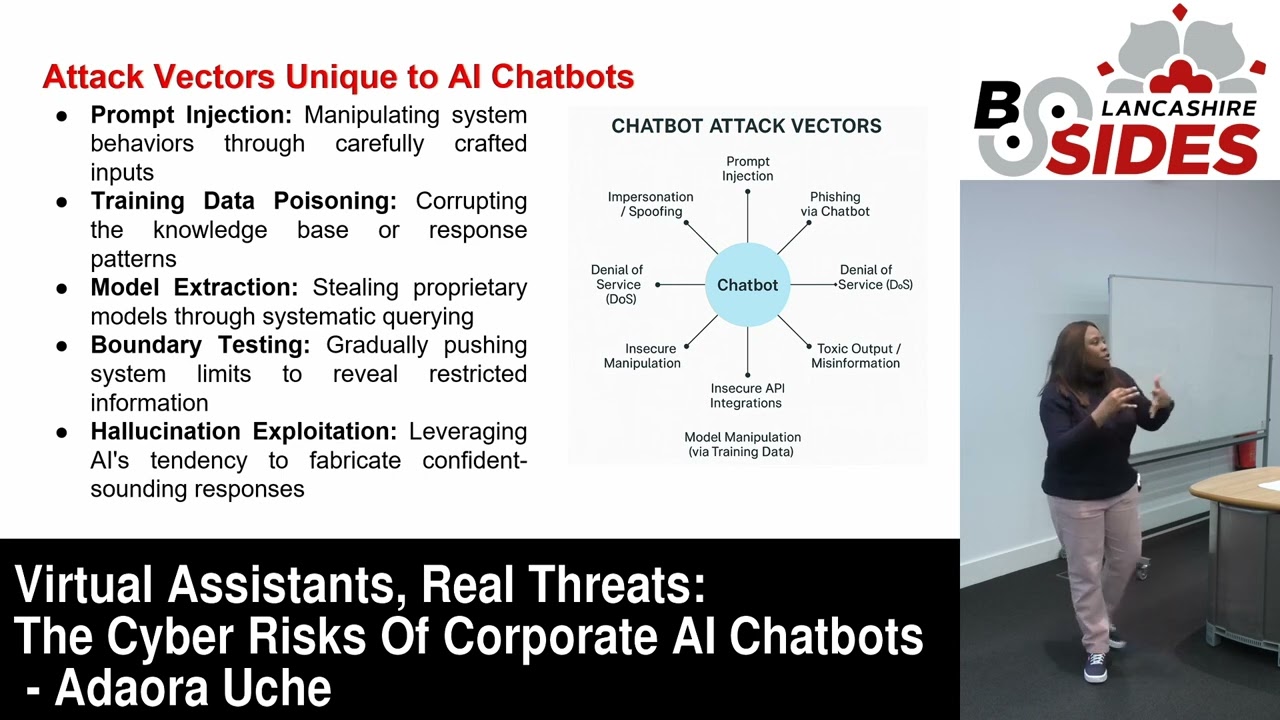

it's important that we are conversent with how you know um with the attack vectors that hackers use to access our AI systems when it comes to chatbots. One of the things I've talked about is prompt injection where they manipulate the systems behavior through carefully crafted imputes. Then there is training data poisoning. So corrupting the knowledge base or response pattern. So what they do in this particular one is to try to when they access the back end they put they are able to you know put layers of false information that the AI picks from when it's searching a sources. Then there's model extraction still in proprietary models through systematic querying. There's boundary testing when you gradually push

the conversations you have with the AI chatbots beyond its you know define boundaries just to be able to reveal to get it to reveal restricted information. Then there's hallucination exploitation. That's what I talked about earlier when I said sometimes AI can present false data that doesn't exist and present it quite confidently and make it look real. So yeah, AI tendency to fabricate confident sounding responses. So now that we are familiar with you know some of the attack vectors we've seen um real life cases where AI chatbot systems have been exploited. Let's talk about technical defense strategies that can be implemented within our organizations to I won't say eliminate but to minimize the risks that these chatbot systems are exposed to. So

the first thing is impute validation. So it's important that we have systems in place to to validate whatever whatever outputs that AI is given. It's important that we also define the sources that AI systems can pick from so that it's in a control place and excuse me so that in a controlled place as well and ensure that whatever is going out is sanitized as well. It has to be within defined boundaries. Then there's conversation monitoring where we have real-time analysis of interaction um to understand the patterns to understand what kind of conversations are the AI systems actually receiving. That way if we are monitoring that we'll be able to identify patterns that indicate um attacks for instance. Then of course

access control. This is something that is being talked about everywhere. You know implementing the principle of lease privilege for system connections. In other words, if implementing access controls such that the AI systems can only access not the entire enterprise, not the entire ERP system, but only specific um specific and defined um systems that relate to its functions or operations. Then response validation where we have secondary review of generated content before it delivers. Then separation execution environments. So it's advisable that we sandbox these AI chatbots in such a way that they are isolated or separate from our critical systems. So even if even if these attackers try to um access you know get behind get to the

back end they have no way of interacting with our critical systems that hold sensitive information. Then of course there's a factchecking mechanism where um we're verifying against trusted knowledge sources. We must always ensure that that you know the knowledge sources for AI are verified and are

trustworthy. Everything is not just technical of course we need the governance and policy strategies as well. So how can we ensure robust governance to further manage these AI risks? We've got data minimization. So the concept of data minimization, it's all about limiting the amount of personal and the the type of personal information that is being collected. So if you don't if we don't need to collect that information, we should not. It's not a question of can we collect the information. It's should we actually be collecting that information and if there's no need to have that information, then we shouldn't collect that. data minimization such that there's less information available. You collect only what is needed per

time. Regular security auditing of course. Um so we're talking about um penetration testing for instance vulnerability assessments and all of that. We need to ensure that we're regular regularly auditing you know our AI systems especially with the chat bots as well. Incident response planning um incident response planning goes hand inhand with monitoring as well. So we need to have specialized procedures in place for AI systems to be monitored. It took air it took was it air Canada? I don't think it was Air Canada, it was the other financial data breach. Took them about 3 weeks to identify the data breach that happened. If they had effective monitoring um systems in place, detection systems in place spec

specifically for that AI system. They may have discovered it earlier on with an incident response plan. they would have had the right measures and mechanisms in place to contain that and you know treat and minimize that particular incident. So it's important to have the right incident response planning specific to our AI systems including chatbots clear data handling policies. So this has to do with having clear rules for data or information access, information management and of course the the the principle of lease privilege also comes in here such that only those that actually need access to that data can access it. It's also important that we understand the kind of data that we have fully categor categorized and labeled

sensitive information personal information. That way we'll be able to implement the right information handling um strategies to further secure our information assets. Then third party risk management. For third party risk management is important that if you've got a vendor for instance supplying your AI supplying your AI solution or your chatbot solution. It's important to take them through the security assessment before you on board them. What happens a lot of times in a lot of places is that the raid for AI gets everyone so excited wanting to deploy AI because it's the next big solution without fully understanding the risk that it poses. But going through appropriate you know security assessments for third party vendors is a

good way to you know minimize some of the risks that may come with this. So what do we understand the um API integrations that this vendor is going to in um introduce to our systems by connecting to our systems. Do we understand um what security engineering measures are needed to um further boost our security posture and have the right um security techniques in place? Do we understand all of that? is only when we go through these assessments do we understand the data protection implications of the data that we will be sharing with these third party vendor do we understand all of these things if it's um or even the financial financial information as well so appropriate

vendor security assessments are important as well as regular security auditing for these vendors as well it's not just a to-do um it's not a checkbox box we take is something that we need to keep reviewing especially when we identify that that particular vendor is a critical vendor and of course legal review. So I I like to believe that after we have such assessments is important that we have um in the contract you know specific terms and conditions that encompass the necessary security measures that need to be in place for that vendor for instance are covered within the contract legal review. It also ensures that we have the right policy coverage um for such systems and

we ensure that it's disseminated to all employees stakeholders that interact with the system. So having said that how can we build secure AI assistance? I've said some of these things before. We've got assessments. Um a practical road map to secure our chatbots. It always starts with assessments. Okay. understanding um what we have on ground as well as identifying the vulnerabilities so that we can in cases where we can patch we patch or we can you know um yeah upgrade our security posture to you know block such vulnerabilities it's important to implement impute validation and access restrictions as well. We have also talked about that before and I talked about monitoring where we deploy AI specific security monitoring because the

truth is what works for your other solutions may not work for AI. So we need to understand the AI the AI solution end to end so that we can ensure that whatever monitoring we are doing is specific to that training and awareness very very important because with the rate at which AI is being on boarded the commensurate training and awareness needed to um inform form users of these AI systems are not a par. So we need to enforce more AI specific training training programs for all stakeholders as well as ensure that we are educating them the users the developers the security teams so we are more convergent with the unique risk that it poses.

governance um establishing clear policies for AI system and management. So what what what's the what are your policies like? I know that a lot of organizations here probably have their information security policies but are we creating the policies for our AI systems as well and are we enforcing those policies as well. So those are some of the things that we need to begin to implement as well. Then verification. It's important that we fact check everything that our AI systems are um sending out to our customers. make sure that it is valid, it is true and it is confidence and it is um

yeah so how can we balance security and functionality? The first thing is um security should be our posture by design and default. It should it shouldn't be an afterthought. Like I mentioned earlier, a lot of a lot of a lot of companies onboard AI because it's the rave because of all the benefits that it's got without actually taking a step back to understand the risk that it poses. So we need to ensure that you know AI security is by default not by design. Um we have regular red team exercises specific to AI systems. um penetration testing for instance as well continuous monitoring and improvement. We need to monitor what our AI systems are doing. We need to

understand what these chatbots are, how these chatbots are interacting with people. Um understand user engagement. We need to understand all of that and see how we can improve as well. Clear incident response procedures. We talked about that earlier on. Um having the right response um plan in place such that when there's there are attacks or there are incidents it's easy to identify um contain and then treat such incidents ahead of time. Cross functional security governance it should it should en encompass not just your information security teams but we should be looking at other teams as well. or we're looking at the development teams, looking at governance across the legal teams. It should be a fusion of

different relevant stakeholders for AI security. We need to be transparent about the capabilities of AI and limitations. And what that means is we need to make sure that our AI systems uh we are informing the public the people using these chatbots about the limitations of our AI systems about how they're prone to mistakes where they get their information from where um yeah um inform people of any bias or de um discrimination that may have been um may have come up as a result of the AI's output. Okay. So in conclusion, AI chatbots of course introduce unique security challenges, not something they were quite we were we are quite familiar with. So we need to have specialized defense um mechanisms

in place for AI systems. Um the impact of of these breaches demonstrates you know they have huge significant impact on businesses such as Air Canada and the financial losses are just a part of it. There's also the reputational loss as well. Effective security requires no technical and governance approaches like we've discussed. Um we need to be proactive about risk management enabling safe deployment of AI and um one way as well is to also have the human to have human oversight the sandboxing and then human oversight of AI's output and of course we must always remember that companies are legally accountable for their AI systems statements and actions. Thank you. [Applause]