BG - The Fault in Our Metrics: Rethinking How We Measure Detection & Response

Hey y'all, thanks so much for coming to my talk. I've worked in detection and response for the last decade, and I've made a lot of mistakes, especially when it comes to metrics. This is the talk I wish I'd seen. Today you'll get three things: a framework I built to help you build much better metrics, a new maturity model that I've been using to describe and measure detection and response capabilities, and lots of examples. So my story with metrics starts on a Monday morning. I'm only a few months into a new job and I get a message from my boss. He's like: yeah, you know, the board of directors meeting's coming up and I'm

looking for updated program metrics. And you can tell I'm new to senior management: I'm eager to please, I don't ask any questions. So I send a message to my new team and I ask them, "Hey, what have we presented in the past?" What's the response? "Oh no, bad news: the last manager just made those up." And good news: I'm going to do so much better. How many of you have had this happen, where you inherit someone else's metrics? Yes, this is often our starting place: metrics that haven't been well thought out or, maybe even worse, fudged to avoid questions or more work. So I did what you probably did: I Googled it, and then I ended up

just copying the metrics I used at my last job, and that's led me to using a lot of bad metrics. But so what? Why should we care about metrics? Well, you all attended a talk that had metrics in the title. Why do you care about metrics? It can drive change. It shows you're doing things. "What would you say you do here?" Budget. Yeah: I need more money, I need more headcount. One reason might be that metrics are supposed to drive improvement. Karl Pearson, a late-1800s, early-1900s guy widely viewed as the founder of modern statistics, has this quote he's famous for: that which is measured

improves. Sounds like a great plug for metrics, but there's an implied warning in that message: what if I'm measuring the wrong thing? There's this paper written by two guys out of MIT, Hauser and Katz, called "Metrics: You Are What You Measure!", and they talk about how, as you pay more attention to metrics, you start to make decisions and take actions to improve those metrics. The metrics you choose are improving, and over time you'll become what you measure. Metrics also help us communicate what we do and why people should care. Edward Tufte, who by the way teaches one of the greatest classes about presenting data, is not cybersecurity or

infosec related whatsoever. He's got an entire section that talks about terrible PowerPoints, so it's a very fun class. And he's got a quote that says metrics reveal data: metrics are a tool that enables us to present the greatest number of ideas in the shortest time with the least ink in the smallest space. And why? Well, if we're being honest, because we need budget, we need headcount, and metrics are usually the tool we use to communicate that. So why are security metrics hard? Why do we struggle with security metrics? We don't know what success looks like. What else? I've heard people say it's because we're trying to prove a

negative, right? Like, nothing happened: success. In my personal experience, security metrics are hard because I'm a security person; I don't care that much about metrics. Here's a much less famous quote: "Metrics are an annoying PowerPoint I need to update every month." That's me. A bit about me: I'm a senior engineer, I work at Airbnb, I work on fun things like enterprise security, threat detection, and incident response, and I really love my job. I live in Austin, Texas with my wife and three-year-old son, Liam. Here he is, little smile there. I really love being a dad and a husband, and there's one thing I'm really good at as a husband, as a dad, and as a security

engineer: I'm really good at making mistakes. This is the point of the talk where I'm supposed to gain some credibility with all of you, tell you about my accolades, my 15 years of experience, but really I've just been making mistakes. Let me tell you about five of them. The first terrible mistake I've made is losing sight of the goal. How many of you are on call or working an alert queue of some kind? Yeah. How many of you are on call right now? Yes, what a terrible idea, right? Those are the tired people in the room, by the way. This year marks my 10-year anniversary of being on call, and for those of us that spend our days

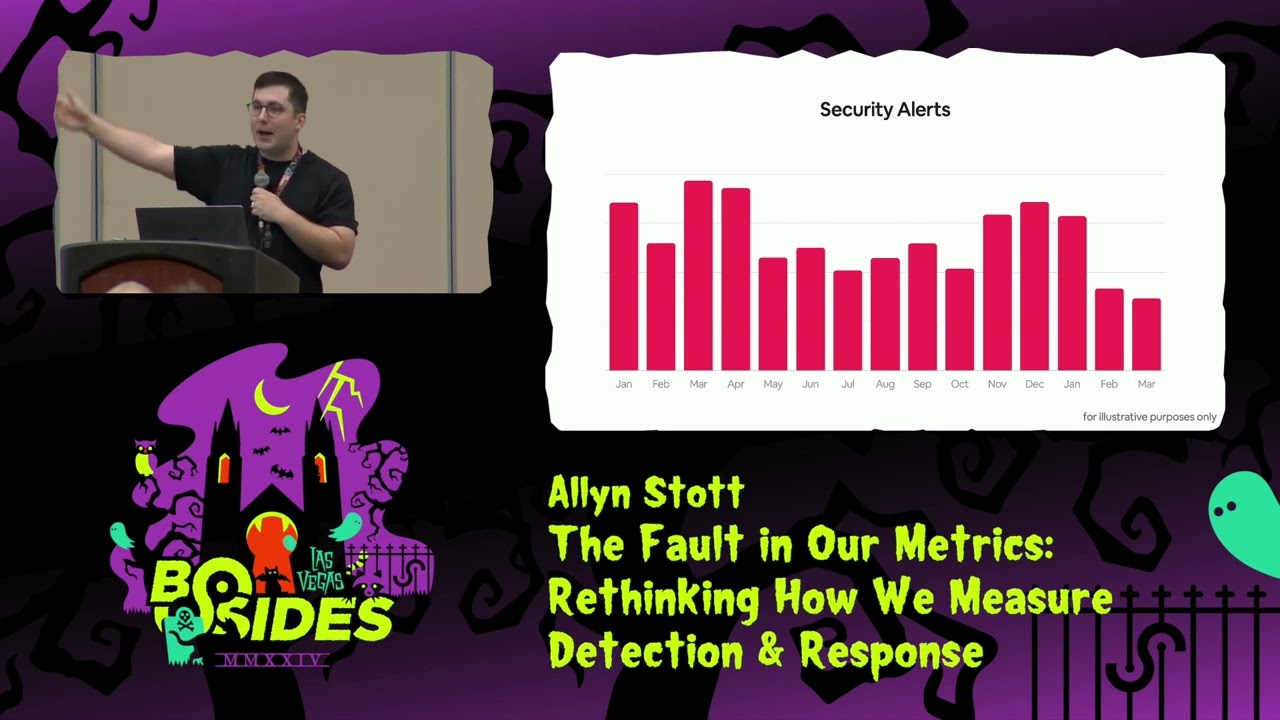

triaging alerts and responding to fires, it can be really easy to lose sight of the goal. And so we end up describing that frontline operational work with metrics like this one. Here's a metric that shows the number of security alerts per month. How many of you have seen this metric before? Yeah. How many of you have this metric today? Cool. If you take a closer look, you can see that in the past year March and April had the most alerts. My boss will ask a question about that. And if we keep looking at it, it looks generally like alerts are trending down. Did we do that? Did we stop logging something in February? Did I get really

mad at the IPS alerts and was just like, we're turning them off? Alert count has become the heartbeat metric for security operations. Instead of rooting back to the goal of detecting threats and responding quickly, we've reduced ourselves to cries for help. I've come to call this metric the operational burden we've inflicted on ourselves. You'll notice this graph has no numbers on the y-axis. If it said 5,000 versus 500 versus 5 million, does that mean anything to anybody, especially the people that aren't working the alert queue? Another title for this might be "We're doing things" or "It's crazy out there." Maybe it's fear driven: scare leadership with a bunch of alerts. And sometimes we try to make it a

bit better: we break it down by true and false positives. I've been proud of myself for doing this, but if I'm honest, I'm not really sure what I was trying to say with this metric. That we have a lot of false positives? So what's a good true-to-false-positive ratio? Is it the same for every alert type? If I reduce this number, would it mean that I have decreased my visibility into threats? If I have too many false positives, does it mean that I'm possibly missing true positives? And so the first problem I'm running into is I don't know where to start with metrics. Detection and response has significantly matured as a field, but I'm

stuck here making metrics about alert volume. So I needed a starting point, and to give you a starting point, I thought about what in detection and response we could measure to help us make decisions and see if we're improving. The acronym here is not great: it's SAVER. The S is that we want to show that we're streamlining our operations by improving our efficiency and accuracy through automation, better tooling, and better processes, so that's one area for metrics. We want to raise awareness about what we're learning from threat intel and share things like what threats and trends we need to be prepared for. We want to measure our vigilance: how prepared are we for those top threats?

Can we detect them? And as we learn about new threats and trends, how is that guiding our threat hunts? As we explore our networks, what are we finding? And when our detections fire or our threat hunts turn into incidents, what's our readiness? How quickly are we able to organize and respond to incidents? How complete are our playbooks? So when you're thinking about your own metrics, think about which SAVER category the metric should fall under; this can help you tie it back to an outcome. I like to start with just one metric in each category. We often get asked to make a lot of metrics, and that doesn't help us focus. And so for

each metric we should ask: what question does this metric answer? So what question were we trying to answer with this metric? I think it was: are false positives taking up too much of our time? Or, phrased a different way: do I have enough time to properly investigate my true positives? Another important question to ask when you're looking at a metric is: how do I control this metric? So how do I reduce false positives? Alert tuning. How's that going for you? Yeah, about that. And if I map this to my SAVER categories, this is maybe a streamlined metric, and streamlined metrics usually answer questions about efficiency, accuracy, and

automation. So I have two big problems with this metric. First, this metric doesn't tell us where we're spending most of our time. We think it does; intuitively, it makes us feel like we're spending most of our time on false positives. Second, the only control I'm rewarding is tuning, or turning alerts off. So how can we make it better? Here's a graph of time spent on false positives. For now I've completely removed the true positives, because for now I'm okay if we spend time on those, and instead of tracking how many false positives there are, I'm tracking how much time we're spending on them. Now, how do you track this? That

could be as simple as measuring, in whatever alert system you're using, from when the alert gets created to when it either gets assigned or triaged. There's an inherent problem with that. If your team's anything like mine, you know: if I'm on call, I'm doing the triage, and I've got, you know, 10 alerts sitting there in the queue, what's the first thing I do when I see all those? Do I go to each one individually and start working on them? No, I select them all and I assign them to myself. And why do I do that? What metric? Response time, time to triage: our SLAs. It's important, when you're thinking about a metric, to

remember that you've got a bunch of hackers, and they're going to figure out how to make this metric improve regardless of whether what they're actually doing is improving the process. So I recommend not measuring it for a while. You want an accurate time for how long an alert triage takes, and measuring something that inherently makes people do the thing that gives you poor data doesn't make any sense. So how do I control this metric? How do I improve this metric? Well, automation, maybe. And as we get more automation tools, the number of events may not even equate to how much time we're spending on false positives. And as you automate, you can carry over

the manual time you were spending on what you automated. This lets you do something really cool: you can actually speak to the number of human hours your automation efforts are saving you. So now folks aren't just incentivized to tune or turn off alerts; they're incentivized to find out where we're spending the most manual time so that we can automate it. My second mistake is using quantities that lack controls or, more simply said, measuring the things you can't change. Mean time to recover is a classic incident response metric; it'll be in your Google search. In this example, you'll see that recovery time was lower in September and October, and then it grew in November and December. But then the

team pulled together, we did some real good process management, we improved our tools, we worked really hard, and we got those recovery times back down. Or maybe there are two, three, four major holidays in November and December. It's funny: I've spent the last year researching metrics for detection and response, and I've learned something. We're obsessed with speed in incident response. The vast majority of results when I search for detection and response metrics are about mean time: time to detect, time to respond, time to contain, time to recover. I'm not going to argue that speed isn't important, but using time as the sole measurement across incident phases completely ignores quality and effectiveness. But my big problem with

this metric is that security incidents have a lot of variability, especially the further you get downstream in the response process. There are a lot of dependencies from event start to recovery, and not all of them can be controlled, especially by our teams. So a graph like this doesn't help me make decisions, because I don't know what's controllable here. I don't know what my team needs to do. I don't even know if this is good or bad. And what happens when your teams don't know how to improve a metric? You stop caring about it, because you can't affect it. So instead I've broken out the response times across all the different phases, and here I have filtered out any

built-in time that I need for quality. I like to do this where every response playbook I have has some expected built-in time. Sure, as you mature your capabilities that built-in time will come down, but that's not the focus for this graph; here we're looking at what we can control today. Eric Brandwine from AWS gives this talk called "The Tension Between Absolutes and Ambiguity in Security," and in it he says that when you look at a metric it should immediately answer: what do you want from me? What do you want me to do? And one of the easiest ways to do that is to make the answer zero

if there's nothing to do. So here I've filtered out all the time we can't reduce right now, so if there's nothing for us to do, we've made the answer zero. Now I can look at these metrics and I know exactly what they want from me: go look at the incident in December and figure out what happened in the remediation phase. Then we can either filter out more of that time because it needs to be built in, or we can make improvements in our playbooks. My third mistake was thinking proxy metrics are bad or, more simply, choosing amazing metrics that are insanely expensive to create when all I really needed was a metric that was good

enough. So here's a great example. A long time ago, my team and I decided that we wanted to know what our MITRE ATT&CK coverage was, and this was before this was the cool, sexy thing to do. We determined that we needed to write tests across the entire framework, and once we got going we figured out: okay, we don't need just one test per technique, that won't tell us much, and also we've got Windows, Mac, and Linux, so we're probably going to need a couple of tests for those. And so, after years of developing tests and investing in tooling, we finally had the data to visualize our ATT&CK detection coverage. Side note: I saw a really great tweet the other day.

It said we need to do a better job of mocking vendors that claim 100% MITRE ATT&CK coverage. For lots of reasons, but most importantly, it's silly. I've seen the carnage that 100% MITRE ATT&CK coverage is, and it's alert fatigue like you wouldn't believe. Anyway, we spent years gathering all this data, and it is very cool, but at the end of the day all we really wanted to know was where to prioritize detection building. So do this instead: rather than trying to measure your detection coverage across the entire ATT&CK matrix, start by finding the top five threats you care about the most. Don't overthink it. Look at your external threat intel and think about what industry you're

working in and what type of environment you have. Then look at your incident trends: what kinds of events, what kinds of incidents are recurring? And then link those back to your organization's security risks. What would be a really bad day for your company? If data was exfiltrated, what data would make your chief privacy officer cry the most? It's a great metric; you can visualize it by a growing tear as well. Once you've got your top five, prioritize your detection development from there. I like to workshop these as a team, where everyone takes one of the top five threats and then we use ATT&CK to derive all the different techniques and sub-techniques. And as you

write tests and detections, you'll slowly end up building yourself this prioritized MITRE ATT&CK coverage map of things you actually care about, but without all the alert fatigue and without having to build this super costly metric. Plus, now you might be best friends with your chief privacy officer. My fourth mistake was not adjusting to the altitude, and as someone who has floated back and forth between management and individual contributor, I'm very guilty of this one. Who here has tried to explain all the different columns of the MITRE ATT&CK framework to a board of directors? Yeah, I see a couple guilty hands. I have. Sure, why not, let's do it. Detection coverage is actually one of the better new metrics that we've come

up with, but wow, we've done a bad job of explaining it at the leadership level. I've seen one of those MITRE ATT&CK heat maps from a specific vendor just slapped into a board of directors deck as if it meant anything to them. So we need metrics at every altitude, and the higher the altitude, the less it becomes about the specifics of detection and response and the more about the impact to the business. It's helpful for me to think about it like a pyramid. For the business, the impact we make is reducing the cost of an incident or a breach, or, another way to think about it, how costly we make it for an attacker to

cause impact. And so the metrics at the top of our pyramid are about mean time to detect and alert the organization about a threat, and how quickly we can respond and get business back to usual. Under that top layer is our coverage and effectiveness: can we detect the top threats to the business? Do we have playbooks for the attacks most likely to happen? Do we have the visibility we need? And then under that layer: how well do our tools perform? How much time do we spend trying to figure out what logs we need to search, and how long does it take to search them? Organizing your metrics in a pyramid can help you connect the lowest

layers to your North Star metric and speak at the altitude that's appropriate for your audience. Organizing them in a pyramid can also help you connect your metrics to the rest of the security organization. It turns out detection and response isn't always the best strategy. If your metrics show that mean time to respond is trending up because of a repeating type of incident, sometimes the best way to reduce the cost isn't improving your streamlined or your readiness metrics; it's putting a new control in place to prevent that type of incident from even occurring. I really like to do this, especially because I get to work across lots of different teams. I get to work across detection and response and then I

get to go and visit all the teams that do prevention, and you would be surprised how little we're telling those other teams. They have no clue what's happening over in detection and response. They don't know what prevention controls they should be prioritizing based on what's happening in the real world, and it's our job to help inform them so that our lives get a lot better. My last mistake was asking why instead of how. My natural inclination is to ask why: why didn't we detect that malware sooner? Why are we still missing those firewall logs? And as a dad I have a lot of why questions. Why did we bring the car seat

when we only took one taxi ride the entire trip? Why do we need four suitcases? Why didn't we bring the stroller? And why can't Liam walk by himself? Why can't you walk by yourself, Liam? But in all of these examples, why is not helping. So instead I've learned to move straight to the how and start figuring out what needs to be done. Often, answering how allows you to identify the underlying problem much faster and from a much more positive perspective, especially from your spouse, I mean coworker. How can I carry Liam, a car seat, and two suitcases through the airport? How can we detect these types of threats sooner? How can we respond

faster? When I interviewed with my current VP, she asked me: how do we build a modern detection and response program? How do we get there? One-question interview. And it made me think about maturity models. My first exposure to maturity models was the Hunting Maturity Model, HMM. Who here is not familiar with the Hunting Maturity Model? Okay. So it came out in 2015. It was created by David Bianco, who's also the creator of the Pyramid of Pain. HMM was great when it came out and it's still great today, because it helps describe the different levels of maturity for a threat hunting program: what do we need to get to the next level of

maturity? How do I get there? What specific indicators would put me there? What type of activities would be expected at different levels of maturity? Maturity models are useful in that they give us, as security practitioners, a common language to answer where we are now, where we're going, and how we're going to get there. So I created the Threat Detection and Response Maturity Model. The TDR maturity model builds off of the Hunting Maturity Model and expands it across all the different areas of detection and response. There's a lot to it, so at the end I'll provide a link to the full maturity model that you can use. The first pillar I thought about

when measuring maturity was observability: do I have the tools and logs to get visibility into our entities and user activities? Can I enrich it so it's contextualized and searched quickly? Then proactive threat detection, where we focus on collecting threat intel and prioritizing the detections we build and buy and the hunts we perform. And then finally rapid response, where we prepare playbooks and automations so we can move from triage to analysis and respond with all the forensic capabilities we need. We can use these pillars and these 14 capabilities to describe and measure where we are today and where we want to go next. And for each of the 14 capabilities in the framework, you'll

score four different areas: process, tools, documentation, and testing. You'll rate those from Initial all the way up to Leading. I've provided some general guidance on how to rate the maturity of each area, but in the framework itself there's a lot more specific direction for each category and capability. So, for example, if we rate our detection engine capability, we think about what processes we have: do I have a process for creating a detection that looks for first-time occurrences? Do I have a process that defines the optimal way to determine those thresholds? Then we rate our tools: are the detections we have managed from a central location? And then documentation or, what's been the case

for most of my career, the lack thereof. And then finally there's testing, and we all know what happens when you don't test things: well, bad stuff happens. As you go through each of the capabilities and rate them, I like to rate them individually and then afterward rate them together with your team, because once you start talking about the different capabilities you'll hear things that will change your mind or confirm your own rating, and you can have a really great discussion about where you are with each capability. Then you can take those ratings and show at a high level where you are today across the three pillars and where you plan to be by, say, the end of the year,

based on the projects you're planning and the initiatives you're doing. I like to use this tool because it's a very simple message for leadership, but there's a lot of underlying detail that backs it up that you can zoom into depending on your audience. I also really like to use it because it shows whether the work that you've planned is going to have impact or not. If you've done all your project planning and you're like, cool, I'm going to work on this, and then you're projected to not move at all, maybe you should rethink the things you're prioritizing. What's also really cool is that a number of companies and folks have been starting to use this, and I can now start

to baseline where different sectors are and understand where I should expect myself to be and where folks have struggled in moving their maturity. This is the nice thing about using a framework: we're talking about the same capabilities and problems using a common language, and we can talk specifically about what went well and what didn't in our journey to get there. As you do this work, you'll need metrics to show whether you're getting better, and here's where SAVER comes back in. For each metric you create, you'll put it into this structure. You want to avoid my first mistake, losing sight of the goal, and ask: what question does this metric answer? What's the outcome we're

looking to achieve? And then, what SAVER category do I tie it back to, to help drive that outcome? You want to avoid my second mistake, using quantities that lack controls: make sure it's a metric you can actually control. And don't forget, make it zero: filter out what you can't control today, so when you look at a metric you know exactly what it's telling you to do. And then, if you have control of a metric, what risks could this measurement reward? I was talking to a buddy of mine, and he runs one of those really big SOCs, like the kind with the huge room and the monitors on the wall and the pew pew map. I haven't been in a big

operational SOC in a really long time, and I'm happy to say the pew pew map is alive and well. Anyway, we were talking about metrics and he was telling me that their time-to-analyze metric was one of the biggest pain points in his SOC. Overall, analysis time was way above what they expected. So he brought the metric up to the team: hey, time to analyze, we've got to find ways to bring it down. So guess what? You won't believe it: the team brought it down. And guess what else went down? Quality of analysis. So then guess what went up? True positives missed. So when you introduce a new metric, think about: hmm, what risky behavior am I

rewarding? It might not be a bad metric, but you might want to create metrics that balance it out, because remember, you become what you measure. Then there's metric expiration: when is this metric not needed anymore? When my only lever was alert tuning, it might have made more sense for me to track alert volume, but now, as I automate more and more of my alerts, maybe it's time I expire the alert count metric or at least remove it from my leadership decks. And then data requirements: how much data will this metric require? How much new effort are we going to need to improve this metric? And how much time does it take to collect this metric? Don't come

away from this talk telling people, "Allyn said I should spend all my time working on metrics." That's not the reality. The reality is that we rarely have enough time to work on metrics. So don't make my mistake number three, where you come up with this amazing metric that's going to take so much time to build and to improve. Think about the fact that I have very little time; I don't get new headcount just because I invented a metric. Take that into account when you're choosing your metrics. Anytime I talk about metrics, I always get asked: how do I change the bad metrics I'm already presenting? And I get it, change is hard. Leadership does not like surprises, and

they often have expectations that I'll be updating last month's slide deck. But I have a tip that's worked really well for me. Here I've convinced my friend Dexter (he's still my friend) to get in near-freezing water, a little under 40 degrees Fahrenheit. Dexter's first reaction was shock: his heart rate spiked when his body hit the water, and he gasped. Liam thought this was pretty funny. He had to work through the hyperventilating, but then suddenly it all made sense to him: this is great. And it's the same when you change your metrics. It's not going to be fun immediately. People will go into a state of shock. Those metrics have been around a while, and they've

gotten very, very used to them. But my tip is: embrace it, push through the change, because they'll soon have clarity. When you bring it all together, you're about to tell a story that's complicated, it's technical, and you don't have a lot of time to tell it. So here's my pitch. Up front and center is our maturity model, using the TDR maturity model; it shows the maturity of the program, where we are today, and where we're targeting by the end of the year. And then we use the SAVER categories to tell the rest of the story. We're streamlining our operations by looking at what's taking the most time; that's what we're automating. We

looked at our threat intel and incident trends, and we're raising awareness about these top five threats to the company. We're focusing our time this quarter on building detections for these threats; here's where we're tracking. We've been exploring gaps in security controls relevant to those top five threats, and we found three new gaps. And from a readiness perspective, we have one type of recurring incident with a really long recovery time, so we're working with our security team to implement new controls that'll prevent these from ever occurring. So now, instead of making wild guesses about whether you're improving and whether the tools you're buying are making a difference, you can use the TDR maturity model to measure your

capabilities. Instead of using volume counts, fear tactics, and tired emojis, you can use SAVER to get to the core of a metric, ask better questions, and map that to something you can control. And instead of focusing on 100% MITRE ATT&CK coverage, you're focused on the threats that matter the most: you've found your top five and are working on having detection coverage with real impact. So hopefully this talk is your wake-up call. Take a cold plunge. It's time to rethink your detection and response metrics. Thank you very much. [Applause] And this is my Linktree. It has my contact info, it has a copy of the slide deck, and then there's the complete TDR maturity model. I also write a very

infrequent newsletter (very infrequent, I have a toddler) called Meoward. It has an adorable cat that people love; the security info is half decent. I've got lots of stickers to hand out, so if you see me after, please come and grab them. And I think we have a couple minutes for questions. We've got a couple minutes for questions, so raise your hand and I'll walk around with the mic. [Audience question] Thank you so much for this, by the way, I thought this was really useful. I had a question regarding the program maturity model that you were talking about. Can you talk a little bit about what you use to fill out that graph? I'd like to

understand that better, to have examples. [Speaker] Yeah, absolutely. I gave a talk last year about building a modern detection and response program, and in that talk I took a step back and dove into all the things that we do in detection and response. Then I thought about what those outputs are, and what I found was that a lot of the outputs that we had internally, things like threat intel, were really not getting shared at the right level. They were, you know, informing our other tools internally, and that was about it. There's a link in this Linktree that has that full talk, and in there I talk about all the different

areas of detection and response I think we need to be able to succeed, and I use a lot of additional research that folks have done into how we build good programs, how we think about programs, and what matters the most. So check that out; that'll answer a lot of questions for you. [Audience question] Great talk. Two questions. How long does it take for a big SOC with those pew pew maps to get this going? [Speaker] All right, how long does it take? Well, I guess it depends on your bravery. I have had folks I've talked to that are like, this is going to take me years

to make that change. It really does depend on your leadership's willingness to shift. I really think that if you're not telling the story of what you're doing, all you're really doing is continuing a narrative that doesn't inform anything. One thing I've found works really well is asking back: what question are you trying to answer with the metrics we have today? And spending that time to bridge that gap, because a lot of times the questions they have in their head aren't actually being answered by the metrics we're putting in front of them. You can use the SAVER framework to find where their question really is and map it to something you can really show that

makes that change. I'll come back to you for your second question later. [Audience question] How do you go from counts of detections in a MITRE bucket, say, to coverage of that MITRE bucket? [Speaker] Say that again? [Audience] So there was MITRE ATT&CK coverage in there for, like, a tactic or procedure. How do you go from the counts of the detections that you have to deciding how much of that is coverage? [Speaker] Coverage is really tricky to know, and this is partly why I think a lot of the MITRE ATT&CK coverage maps that specific vendors and tools pump out don't tell us a lot, especially if we don't understand

that, you know, a MITRE ATT&CK technique could have 50 different kinds of examples, and if you can detect one of those, does it really tell you that you'd be able to detect that technique day to day? There's a weighting system that I've used in the past where, for every test you write and every detection you correlate to those tests, you rate how good that test is and how good the detection is. So if you can think of 50 different ways to do something, will your detection catch a percentage of those? This is what I think helps us build detections that are a lot better, versus if somebody's like, oh, I'm going to detect all of MITRE ATT&CK, you

could write some very simple binary-type detections, or you can improve to, all right, this is looking at behavior that doesn't normally happen here and it's extracting some sort of data analysis from that. I think having a maturity scale, from basic all the way up to, you know, this is actually going to detect a lot of different abnormal behavior, will help you weight what percentage of that technique you say you're covering. And so, assigning a weight to each of those: there's a really great talk, I'll have to look it up, where they talk about a framework for weighting the tests that you have for each MITRE technique against the

weight of your detection, and they've done a really cool analysis of tools that actually do this. So come find me; I'll try to find that talk or that paper. I think one more. [Audience question] So if you're just starting out with some of this, what would be the top things that you prioritize first? [Speaker] From, like, the metrics I create? Yeah, all right. I would start with what you're doing today. So if you don't have a threat intel program or you're not even thinking about threat intel today, don't start with that metric, because you've got to do a bunch of work first. I really think you generally don't have a

lot of time for metrics, so think about: what can I actually measure today that would help me make better decisions today? If I think about SAVER, I like to start on the readiness side of things, because that's the stuff that happens regardless. You have an incident; you could have created the detection for that, but the reality is you just might need to be able to respond to those things. And so I like to start from response, the readiness area; I think that's a good place to start. Cool, I'm out of time. I'll hang out in the hall over there, and I've got lots of

stickers, so thanks everyone for attending.
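
A minimal sketch, in Python, of two calculations described in the talk: hours spent on false positives per month (rather than a count of false positives), and per-phase response time with each playbook's expected built-in time filtered out, so a phase with nothing to fix reads as zero. The record fields (`created`, `triaged`, `verdict`), the phase names, and both function names are assumptions invented for illustration; they do not come from the talk or from any particular alerting tool. As the talk warns, created-to-triaged timestamps are easy to game with bulk self-assignment, so the output is only as trustworthy as the process that produces the timestamps.

```python
from collections import defaultdict
from datetime import datetime


def false_positive_hours_by_month(alerts):
    """Sum hours between creation and triage for false positives, keyed by YYYY-MM.

    `alerts` is a list of dicts with hypothetical keys:
    'created' and 'triaged' (ISO 8601 strings) and 'verdict'.
    """
    hours = defaultdict(float)
    for alert in alerts:
        # True positives are deliberately ignored: time spent on them is fine.
        if alert["verdict"] != "false_positive":
            continue
        created = datetime.fromisoformat(alert["created"])
        triaged = datetime.fromisoformat(alert["triaged"])
        month = created.strftime("%Y-%m")
        hours[month] += (triaged - created).total_seconds() / 3600
    return dict(hours)


def controllable_hours(actual_by_phase, built_in_by_phase):
    """Per incident phase, subtract the expected built-in time and floor at zero.

    A zero means "nothing to do here"; anything above zero is time the team
    could plausibly reduce today.
    """
    return {
        phase: max(0.0, actual - built_in_by_phase.get(phase, 0.0))
        for phase, actual in actual_by_phase.items()
    }


if __name__ == "__main__":
    sample_alerts = [
        {"created": "2024-01-03T09:00", "triaged": "2024-01-03T09:30", "verdict": "false_positive"},
        {"created": "2024-01-10T14:00", "triaged": "2024-01-10T15:30", "verdict": "false_positive"},
        {"created": "2024-01-11T08:00", "triaged": "2024-01-11T09:00", "verdict": "true_positive"},
        {"created": "2024-02-01T10:00", "triaged": "2024-02-01T10:15", "verdict": "false_positive"},
    ]
    print(false_positive_hours_by_month(sample_alerts))

    actual = {"detect": 1.0, "contain": 4.0, "remediate": 30.0}
    built_in = {"detect": 2.0, "contain": 4.0, "remediate": 8.0}
    print(controllable_hours(actual, built_in))
```

Flooring at zero is the design choice that matters here: a phase that finished faster than its built-in allowance shows no bar at all, so the only bars on the chart are the ones that ask for action.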