LLMs for Beginners - JR Hernandez

We're very fortunate today to have JR Hernandez with us. He's been doing information security for over 10 years. He's currently a security manager at Evolve Security, and in his spare time he likes photography, literature, and stand-up

comedy. Hello everybody, welcome. My name is JR Hernandez. As I said, this is going to be a talk about large language models for newbies. I am a newbie to the field as well; I'll go into that in a little bit. But welcome, and thank you guys for coming, I appreciate it. So what's on the agenda today? We're going to talk about what LLMs are, how they work, and how to train them, and then the future and what it holds. As you guys know, LLMs kind of took over a year or two ago, and everybody kind of went crazy. I'll tell you guys why that experience made me decide to do

this talk. OK, so who I am and who I am not. I'm a security manager; I lead a team of pentesters at Evolve Security. I was formerly a penetration tester myself. I've been in the infosec space since I started my career around '08. Lifelong noob, and I'm proud to say that: I'm always trying to learn new things. With technology and security in general, you've got to keep learning. It's part of the job; it's always something that you've got to keep on top of. So this is one of the things that I'm trying to do for myself

to stay relevant. What I am not: I am not an AI expert. I'm not a data scientist, I'm not a machine learning researcher, I'm not a mathematician. I'm just a security guy who found a new technology and is trying to understand it and learn it. And I want to make sure that I understand what our AI overlords want. All right, so why have this talk? Well, because there are a lot of unknowns about large language models, about ChatGPT, about AI. It's very scary, right? So I wanted to understand what it was. I didn't want to be scared of the thing, so I wanted to see, like, how does this

really work? That's the thing that made me decide to do this and research this topic. As I said before, learning new things is part of my job; I have to stay relevant. In order to secure LLMs, I have to understand how they work. So that's the big motivation here. All right, so what are LLMs, large language models? Large language models are basically AI models that understand natural language and then generate it as well. Here are some of the common things that they do: they generate code, review code, generate text, summarize text, and do translations. The most popular ones are chatbots like ChatGPT. Then there's sentiment

analysis, and much, much more. And you might be like, "Hey, you used the word 'model' to describe large language models, that's not fair." Well, I did that for a reason, and I'll tell you guys why. It's kind of like a Russian doll situation: I have to go back and explain all the layers before you can get a grip on what an AI model is. So let's start with artificial intelligence. Artificial intelligence is the field, kind of like physics. It's a science field that focuses on getting machines to perform tasks

that require human intelligence. The next level down is machine learning, which is a subcategory of artificial intelligence that focuses on how we get machines to learn. The typical problems that we see with machine learning are classification and prediction, and they do this by analyzing text and using algorithms to get the machines to learn. A subcategory of that is deep learning, which is getting them to solve harder problems, and they do this by mimicking the human mind, mimicking how the neurons and synapses in our brain connect so that we can learn. This is kind of where deep learning comes from, and we created

something called artificial neural networks, which are basically modeled on how the human brain learns, and we did this in order to solve more complex problems. And then from that study of deep learning we have generative AI, which is the buzz and what everybody's here for: ChatGPT and DALL-E and Midjourney and all these tools that are using neural networks to create new content. Not just letting us know if something looks like something else; this is actually creating new content based on the training that's been done. So that's why I had to say "AI models" to explain what large language models are. There's a whole bunch of fields, and all

of these fields are disciplines and studies that people spend their whole lifetime learning, so this is my attempt to dive deep into that. All right, so like I said, a large language model is basically this: it's a function. This is as much math as we're going to do today; this is it. So let's explain what that is. y = f(x): f is the function, that's the AI model; x is the input; y is the output. So basically, Llama 3 is an open-source ChatGPT equivalent. You give it a prompt, which is your text, which is your input. It does its magic, and then it

generates new text. A lot of these AI models, that's basically what it is: you give them input, and they give you output. And the same goes for Midjourney: you say "I want a picture of a cat surfing" and it spits it out for you. But at the root of large language models and text, it's just next-word prediction. This is the magic. This is the thing that's kind of scary to me. Well, not scary, but it's fascinating to me how these things work. Because at the end of the day, large language models like ChatGPT do amazing things. They do things that seem like actual

intelligence, but at the end of the day, underneath the hood, all it's doing is next-word prediction, which is kind of insane. This is the equivalent of iMessage, when you're on your phone and it's suggesting the next word. That's kind of what it's doing, which is kind of mind-blowing to me. But anyways, let's go back. So I gave it this sentence and I said, "Please complete this sentence." This is an AI model, right? And I said, "Humpty Dumpty sat on a wall, Humpty Dumpty had a great," and it gave me the word "fall," but it also gave me a couple of other options. And I'll be more clear, but

here there was a 97.7% chance that the next word in this sentence was going to be "fall." So this is a better one, this is more clear. I said, "Make a three-sentence story about a hacker meetup," and it gave me the whole thing. But when it reached the word "monthly," those were the options that it had. It gave me the percentages for which word is most likely to come next, and it had the option to be "annual," "monthly," "regular," "secret," or "weekly." Now the interesting thing is, where does it get those percentages, and where does it get all that information? That's where the training comes in. That's when it kind of

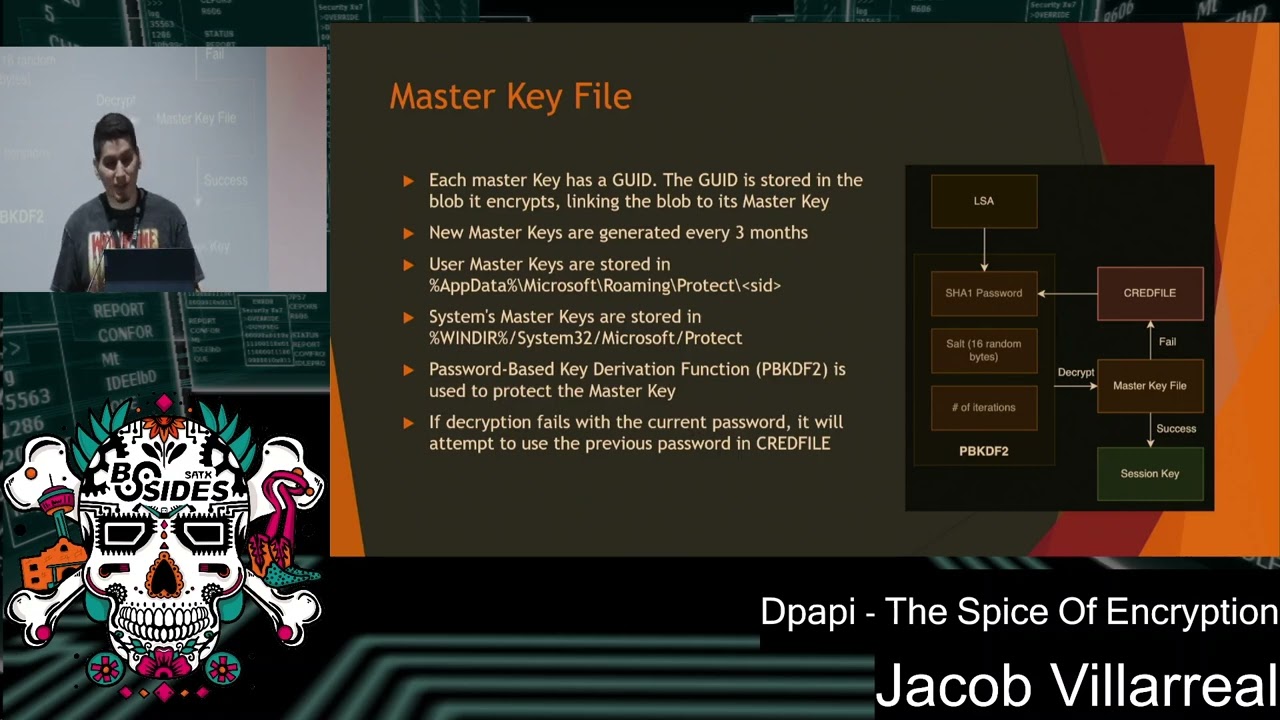

decides what word is most likely to be next. But isn't that amazing, the fact that all these ChatGPT functionalities are based on next-word prediction? Which is insane, which is pretty cool, I think. And like I said, iMessage is the thing that started it all, I guess. So how do they work, at the end of the day? There are three main components. You have your data; your transformer, which is a neural network, as we spoke about earlier; and then your training, making these models understand and learn. That's the training process. So those are the three components that comprise a large language model. Let's start with transformers, which is the neural network. It's

part of the deep learning part of artificial intelligence. So transformers are kind of the big novel thing that came out, and kind of the thing that started the gen AI craze. Or didn't start it, but it's one of the things that contributed largely and made people pay attention to this. This was from a 2017 paper by Google called "Attention Is All You Need," and this was a novel neural network that was presented by these researchers in this white paper. And basically they did two things that were different from the previous neural networks that we were using, and this is what kind

of caused everybody to switch to this neural network. And like I said, this paper is kind of the start of what was happening with all this generative AI stuff. All right, they're mad at me for talking about this. Yeah, so this paper was designed for translation, to translate different languages, from English to French or something. But researchers found that this neural network was a lot more efficient. It had something called self-attention, where it would recognize the context of where the word is compared to the other words in a sentence. Other neural

networks were not aware of that. So it kind of understood the placement of the word in a sentence, which was new and really exciting. It was also able to be trained a lot more efficiently than the previous ones, because the previous neural networks had to train one word at a time, whereas these new transformers take in whole sentences. That means it was way more efficient to train these, meaning it could be done a lot faster. And again, with GPT, the T is "transformer." That's what that means. So at least you guys

took that away from this: when people ask you what ChatGPT is, you'll be like, "Transformers." So, an artificial neural network. This is kind of the magic of how it all works. You've heard this many times, when people say they don't know how it works, it's a black box. This is what they're talking about: the hidden layers. I'll talk about what that is, and you'll see it right now. But basically, you give it an input, like I said, it does its thing, and then it gives you an output. In our case, it's the next

word that's likely going to be there in the sentence. So what this does is, you get an input, you give it to the network, and it starts processing. It passes into the next layer; all these rows of nodes are what we call layers. It does a mathematical function on each of those nodes, and then based on the results it passes it on to the next layer, and it goes on and on to the output. I know it sounds kind of hard, but basically all you guys have to know is: you have an input, it does some sort of mathematical equation, and then it spits out what the output is.
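To make the layer-by-layer description concrete, here is a minimal sketch of one pass through a tiny network, in plain Python. Everything in it is an assumption for illustration: the layer sizes, the weights and biases (made-up numbers), the ReLU and softmax functions, and the three labels. A real network has far more nodes, and its weights come out of training rather than being typed in by hand.

```python
import math


def layer(inputs, weights, biases):
    """Each node computes a weighted sum of its inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(node_weights, inputs)) + bias
        for node_weights, bias in zip(weights, biases)
    ]


def relu(values):
    """A node 'turns off' (outputs 0) when its weighted sum is negative."""
    return [max(0.0, v) for v in values]


def softmax(scores):
    """Turn raw output scores into probabilities that add up to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical, hand-picked parameters: 3 inputs -> 2 hidden nodes -> 3 outputs.
HIDDEN_WEIGHTS = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]]
HIDDEN_BIASES = [0.0, 0.1]
OUTPUT_WEIGHTS = [[1.0, -1.0], [-0.5, 0.7], [0.2, 0.2]]
OUTPUT_BIASES = [0.0, 0.0, 0.0]
LABELS = ["cat", "dog", "bird"]  # illustrative labels only


def forward(inputs):
    """Input -> hidden layer -> output layer -> probabilities."""
    hidden = relu(layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES))
    scores = layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)
    return softmax(scores)


probabilities = forward([1.0, 0.5, -1.0])
prediction = LABELS[probabilities.index(max(probabilities))]
print(dict(zip(LABELS, probabilities)), "->", prediction)
```

For a language model the idea is the same, except the output layer has one score per token in the vocabulary instead of one per animal.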

So basically this. What kind of image is this? It's a dog, right? That's all I want you guys to take away. You don't have to worry about the math of how it works underneath the hood; just know that this is kind of what the neural network does. Because at the end of the day, finding out how these neural networks work is a lot of math and something that is going to require a lot more time. This is just an introductory kind of thing. I just want you guys to know: neural networks take an input, do some magic in those hidden layers, and then you get the right output. And

I'm going to go into a little bit of how that works. OK, so before you feed stuff into the transformer: you don't just feed text into it. We give it a prompt, but it has to be changed into a form the model can understand. It turns text into tokens. This is what you see with ChatGPT when they charge you for token use. It basically takes the text that you provide and chops it up into smaller fragments. Those are called tokens, and this is so that they can be changed into another thing later on in the neural network. But this is the first step. So the first step

is tokenization. Tokens are not word for word; it's not just broken up in that way, and pieces get combined in different ways. So for example, the BSides description I put on here was broken up into 795 tokens. And keep in mind that this tokenization process is not standardized, so each model handles the breaking up in a different way. If you broke down a piece of text, it might be 75 tokens for ChatGPT, but for Llama it might be 80 or 70 or something like that. It might be different. And the same goes for images as well: images get broken

down by pixel. All right, so after you break things down into tokens, you have to change those into something called embeddings. Embeddings are just an array of numbers. However, the cool thing about this, and this is the thing that blew my mind when I was researching this, is that this is how the models give meaning to words. That's kind of how the model understands what words mean, which is insane to me. So for example, here in these pictures you have the embedding for the word "food," and then "foot" and "burger." Those are all

arrays; those are the embeddings of those words. From a dictionary perspective, the words "food" and "foot" are kind of close together, but there is no relationship in meaning. There's not really anything there, unless you're really kinky, but there's really no relationship at all. But "food" and "burger," there is one. A burger is food, so there has to be a relationship. So it's cool that these embeddings turn these words into arrays, and that's the part that I'm going to research more, and that's going to be my next talk, to really understand

how these embeddings kind of talk to each other. I don't know where these numbers come from, but all I know is that for some reason these embeddings actually give meaning to words, which is crazy to me. Oh, before I go on: you can also think of these embeddings as coordinates. If I give you coordinates, like latitude and longitude, you're going to end up in a city. If I ask what the coordinates for New York are, you can give me that. Think of these embeddings the same way, because if you look at a multi-dimensional vector

space, these are all words, and they're put together not in a flat map plane but in a space. All these words are positioned based on the embeddings, all those numbers that we saw previously. I have a really cool demo that I think you're going to like in a little bit that goes deeper into this. Give me one second, just checking my phone. OK, so one of the things that blew my mind when I was doing this, and again, you're going to see a lot more of this in a little bit: I looked at the embedding for the word "king," and then you can

see here it's kind of bigger than the other ones, but all these other words light up in the vector space, and it's because there's a relationship between the word "king" and "Henry," "Arthur," "brother," "son." All these other words have a relationship, and they're related in this vector space. It's hard to see because we can't comprehend multi-dimensional space like this; it's too big for us to understand. But in the demo that I'll show in a little bit, you're going to get a clearer picture of how these words are intertwined through those embeddings. All right, so whenever you guys hear people talking about

ChatGPT and these new models, they always say this model has billions of parameters, or this model was trained with a trillion parameters, or whatever. The parameters are basically the connections between the nodes; that's all you guys have to know. So the weights, those numbers: remember when the image was processed through the neural network, going from one layer to the next? In order to determine which of those nodes are going to turn on and turn off, the weights have to be adjusted, and that's what we call training. I'll go into that in a little bit, but basically all you guys have to know is that every line

between the nodes is one of the parameters being counted. When people say "our model has 5 billion parameters," that's what they're talking about: all those connections. Keep in mind the biases are in there too. OK, so I'm going to go deeper into this in a little bit, but when you're training a model, you show it examples of what you want the output to be. If it gets it wrong, it looks at the difference, how wrong it was, and then goes through a process called backpropagation, which adjusts all those parameters that we just talked about. It kind of

modifies them so that the next time it goes around, it's actually closer to what you want, and that's how it does the learning. You give it a pass and it goes through the neural network; that's forward propagation. If it's wrong, it adjusts itself through backpropagation, and it adjusts all those settings, all those weights, to make sure that the next time it gets a similar thing, it does better. That's the training process in a nutshell. All right, so when I was learning about this, the thing that stuck in my mind was that you're basically tweaking a bunch of settings every time you do this. Every time you do a training session you're

tweaking all the settings, all those connections. You're adjusting them a little bit until you get it just right. And that's what I saw in my mind: lots of dials, lots of things that you fine-tune and adjust every time you train. All right, so let's talk about the data. How are ChatGPT and all these large language models trained? Well, they're trained on large data sets. People talk about how "this was trained on the whole internet." So that's the kind of thing it's trained on: text data, books, articles, web

pages. I think GPT-3 was trained on a data set called Common Crawl, I believe that's the name of it, and it's just billions of web pages. And the larger the data set, the more accurate the LLMs tend to be. So that's why everybody wants more and more data. That's why companies like Google are, like, transcribing YouTube videos just to get that extra text, because they can't get enough. The bigger the training text, the better these LLMs perform. Here's something that's really interesting as well from when I was researching this. Why people get so freaked out about AI is that

they saw that the more connections we had, those parameters, the more things it was able to do. When you had a smaller set of parameters, it was really bad at certain things. But the more parameters you gave it, the more data you gave it, and the larger the neural network was, the more it was able to do. So then the thinking is, well, if you keep giving it more and more, what else can these things do? Because it's able to do a lot more things based on how many parameters we

have. So that's why people are kind of afraid of it. Now, there are other people who disagree with this and say that just because you keep expanding the connections doesn't mean you're going to get general intelligence. But that's why people are kind of paranoid about this: because they see the pattern. OK, so let's talk about training. Training is broken down into two pieces. The first piece is pre-training, which is basically what we talked about, where you give it all this raw text data and you pass it through the neural network a bunch of

times. It figures out if it's right or wrong and then adjusts itself accordingly through backpropagation; it adjusts all the dials. That's the pre-training phase. That will give you a foundational model, basically something that just does the thing we talked about, which is next-word prediction. That's the first stage. But then you're asking me, how does it do the whole ChatGPT thing, where it's like an assistant that you interact with and it gives you answers? Because remember, if you ask a pre-trained, level-one model a question, it's just going to give you the next word. It's not going to know how to act.
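The "guess, check how wrong it was, adjust the dials, repeat" cycle can be sketched with a single dial. This is not a transformer and not the training code of any real model; it is a hedged toy that assumes a one-weight model `prediction = weight * x`, made-up examples where the right answer is always three times the input, and a hand-picked learning rate. With only one weight, backpropagation reduces to one derivative, but the loop has the same shape: forward pass, measure the error, nudge the parameter.

```python
# Made-up training examples: the "right answer" is always 3 times the input.
EXAMPLES = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]


def train(examples, learning_rate=0.05, epochs=50):
    """Gradient descent on a single weight (one 'dial')."""
    weight = 0.0  # start with the dial in an arbitrary position
    for _ in range(epochs):
        for x, target in examples:
            prediction = weight * x    # forward pass: make a guess
            error = prediction - target  # how wrong was the guess?
            # Slope of the squared error with respect to the weight.
            gradient = 2 * error * x
            # Turn the dial a little in the direction that reduces the error.
            weight -= learning_rate * gradient
    return weight


learned_weight = train(EXAMPLES)
print(f"learned weight: {learned_weight:.4f}")
```

A real model repeats this same kind of update across billions of weights at once, with next-word prediction as the task it is being scored on.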

So the next thing is called fine-tuning, which is level two. You still feed it data, but you give it a certain format. If you guys go to Hugging Face, which is a site that deals with large language models and all kinds of AI models, they have a data set there called OpenOrca, I believe, and it's basically examples of questions and answers, like five gigs' worth, that you use to train it. And this is how it actually learns to become an assistant, because you give it the instructions: "Hey, you're an assistant, this is a question, this is how you're supposed to answer." And you do this and

you train it over and over again until it gets that fine-tuned personality, so it becomes an assistant. This is how ChatGPT and all the chatbots work. What companies like Meta have done is give you the foundational model, so then you can do the second part, the fine-tuning, to customize it to your needs, which is pretty nice. It's pretty cool. All right, another part of fine-tuning is human feedback. Basically, you give it a request, it'll give you two answers, and then you pick whichever one you like best, and then it'll use that in its

next data set for when it trains, because it'll have, like, "Hey, they liked this answer and response, let's use that on our next training run." And then the next version of whatever model you're running is going to be a little bit more accurate, because it has feedback from humans; it gives it more data. All right, so again, this is part of level two, which is alignment. And this is the really complicated part about these models. Remember, these things are trained on, like, all the data from the internet, which means there's data that's not safe, obviously. How to build a bomb, you

know, how to break into a car, all that information is out there, and these models can't tell the difference. They just answer the questions that you give them, because they do that next-word prediction. So what they have to do is the fine-tuning, but they have to give it restrictions. They have to kind of put on the handbrake. And this is where, when you ask ChatGPT "How do I make a bomb?", it tells you "I can't answer that." This is how that process is done: by giving it examples of questions that it's not supposed to

answer. So basically you're training it more and more not to give you wrong information. The problem with this is that alignment is subjective. What's right for me might be wrong for you, and there are people who want the freedom to just ask it whatever. So it's very subjective; it's not an objective thing, and it's really hard. This is a whole field of study that is really fascinating, because at the end of the day we're instilling our values into the large language model: we tell it what to say yes to and what to say no to. It's a very difficult problem. And they do this, again, with synthetic

data, so they feed it questions and responses that are made up. This is where, to correct for biases and stuff, you saw, like, Google: somebody asked it, "Give me a picture of the president," and it was a Black president. It was because they sprinkled a lot of synthetic data in there to correct some biases that are in the language model naturally. So it wasn't intentional, and they weren't doing anything bad; they just tried to correct the bias. And there's no other way to do it. There's no setting that they can turn;

they have to do it by feeding in lots and lots of training data. Again, level one is when you haven't fine-tuned anything; that's the foundational model. Then you can customize that to a specific domain in the second part, level two, and that's what will give you a more specialized model that you can use within your company. For example, if you wanted one that's focused on just your policies and aligned to whatever restrictions you have in your environment, you would do that in level two. One takeaway, and I'm pretty sure you guys already

know this, but training is really expensive. Training these models at level one is super expensive. It requires a lot of specialized GPUs, and it takes millions and millions of dollars to do this. The training data for Llama 3 is what's on screen, but for context, Llama 2 was trained on 6,000 GPUs for 12 days, and it cost upwards of $2 million just to train that model. So it's really expensive to do that. However, after you do the initial training and you finish with level one, just doing the querying and dealing with the chatbot itself is, like,

not expensive at all. That second part is called inference, and that part is not hard and it's not really that processor-intensive. It's not too bad. So what they do is give you a model that's trained at level one, and then you can fine-tune it to your liking, and that's a nice thing to do. And you guys can see here, Llama 3 was trained on 7.7 million GPU hours, so that's a lot of time. I'm pretty sure that cost a lot more money than Llama 2. OK, so let's talk about prompt design. We talked about the data, we talked a little bit about training, we talked

about what LLMs are. Let's talk about how you interact with them. Prompt engineer was a job that got popular recently; I don't know if it's still a thing or not. OK, so when you go to ChatGPT or any of these chatbots, it'll talk about tokens. Remember we talked about tokens as the way that phrases are broken down? One thing that's really important when you interact with a large language model is to learn the size of the context window, meaning how many tokens, or how much text, you can put in that context

window. And that does a lot of great things for you, because it'll remember what's in the context window. So if you have a conversation on ChatGPT and you keep going and keep asking it questions, it'll forget what you wrote at the very beginning if you go past the context window, because it's only going to have in memory the stuff that's in the context window. For example, I think GPT-4 has a 32,000-token context window, so that's a lot of text. That's really good. So keep that in mind. For prompt engineering, there are four concepts

that you guys should be aware of. When you talk about customizing and writing better prompts to get better results, remember these things are made for natural language, so natural language is how you interact with them and make these prompt requests. I think of this as kind of like Google dorking. For example, if you go to Google and you put in a query for a website, it'll give you the results. However, you can customize that Google query and get specific information, if you're looking for PDFs or anything like that. You can think of prompt engineering in a similar way, where if

you give it context and you say, "Hey, for this, pretend that you're an automotive specialist," and then you ask it car questions, it'll give you more specialized responses in that context window. If you provide examples of what kind of responses you want, which is called few-shot learning, it'll give you exactly that when it gives you the output. And also, being clear and specific is important. ChatGPT already does this: if you go to customize your prompts, you can say that for every prompt you give it, it should follow specific requests about what you want, how you want the output to be, and how

you want it to process your request. So this is basically just injecting a little bit of text before the questions that you ask, to give you a more customized response, which is really good. And that's the basics of prompt engineering. Again, it's kind of the equivalent of Google dorking, if you're into security. OK, so this is a tool by Daniel Miessler. It's called Fabric. He did a bunch of, as you guys can see, prompt engineering things that gave him really good results, like summarizing text, summarizing video, or anything like that. These are basically prepended to any prompt that he puts into ChatGPT, and

it gives him very specific responses. This one is just a prompt to summarize text, but you guys can see that it gives really specific instructions: what kind of text it wants, how it wants the instructions to be, how he wants the output to be. So check it out if you have a chance. It's called Fabric; it has a bunch of these prompts, and it helps you streamline a lot of the requests that you give to these large language models. So keep in mind, again, this is the most important part if you want to get the most out of this: it

uses natural language, so it's not a programming language that you interact with. You have to know how to prompt these things to get what you want. OK, so next thing. How do we fix the problem of, let's say, you don't want to send your data to ChatGPT? If you wanted to ask questions about your internal documentation, you don't want to send documents to a third-party company, because that could be sensitive information. The answer people figured out to this problem is called retrieval-augmented generation, or RAG. Basically, what they do is take all the documents that you

want to be able to query, like internal documents, all your books, or anything like that. You make those into tokens, you make those into embeddings, and you put those in a database, which is exactly what we did earlier: we took that text, parsed it up, put it into an embedding, and put it in the database. That database can then be queried. So whenever you have a prompt and you say, "Hey, can you give me the information for my company's internal password policy?", it'll go to the database and do a search on all

the text and all those embeddings that relate to your company's policy. It'll identify the matching embedding, turn it back into text, and inject it into your prompt. That way, when you ask that question, "Hey, can you give me the policy for my company?", it'll look it up and put it in the context window. Now that text is in the context window, so it's in the memory of the LLM, and it'll know exactly what you're talking about and give you the answer that you want. So basically it's a way to do database queries on text that you have provided and turned into embeddings, and then you can use that with your own local

model, which I think is pretty interesting. Okay, so LLMs are not perfect. Obviously there are a lot of problems with LLMs. The most well-known one is hallucinations. Remember, this is just next-word prediction, so it doesn't care whether it's right or wrong. It's just giving you the word that it thinks is most likely to be next in that sentence, right? There is no sourcing for these things, because there is no source. It's just giving you a prediction from all the text that it's consumed, and in looking at

statistical similarities in that text, it's giving you the next word. So there is no source that you can probe for these things. Math, logic and reasoning: a lot of people have discussions about this. I'm not going to weigh in on it, but people say that they're bad at math, logic and reasoning. Other people say that they're not, that people are just not asking the right questions in the right way. So I don't know, it depends. I don't know which one, but people would say that that's a problem. Like I said, cost of training is a big deal, right? Getting these LLMs to perform efficiently is very expensive right

now. The training data that we use to train these things has biases, which is another big problem. Work displacement: people think that this is going to automate a lot of jobs away, which is another big problem. Cybercrime: you know, the big thing that everybody talked about is, when you're looking at phishing emails, look for typos, look for common punctuation issues or something. That's not going to be an issue anymore, right? With these things we can pretty much make really good, really realistic social engineering campaigns. Excuse me. And then also the data that we use for training is copyrighted a lot of the time, right? So

who owns that, right? Is that okay for the companies to take and train upon? I don't know, right? That's something that we still have to figure out in the courts. Is the data that we're using trainable, given that it's copyrighted, or not? That's something that hasn't been figured out yet. Again, AI is upset that I'm giving you all this

information. All right, and then these are the OWASP Top 10 attacks against LLMs, right? We have prompt injection. I'm going to show you an example of prompt injection, but these are the common attacks that are being used against large language models right now. Obviously this is a very new security field, so it's going to get better and better. If you guys are interested in this stuff, I would suggest contacting OWASP and trying to be a contributor, because there's a lot of space for growth here. There are a lot of things for you to research, there are a lot of things to do, because it's a relatively new-ish field of study

and there are going to be lots and lots of attacks that you guys are going to see. So this is a jailbreak. I don't know if you guys are familiar with this, but there were a lot of jailbreaks that happened when ChatGPT came out. Basically, the jailbreak would say something like, pretend to be somebody that is reading a story to my grandma, who's a cybersecurity expert, and then the LLM would be like, oh okay, so it's not real, this is a story, so it would give you all that information, right? Well, this is another cool thing that happened: for this

model, if you ask it the question, it wouldn't give you the answer, it would say I can't do that. But if you just do a Base64 encoding of that text and ask it that question, since it wasn't trained on that, it'll be like, oh, I understand what that question is, here you go, and it would just give it to you, right? So there are going to be a lot of these types of attacks that are going to be more and more common. Another one was that now you can upload pictures, right? So there were some where you could encode text in pictures that was non-readable to the human eye, but the LLM could
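To make the Base64 point concrete, here is a small sketch of what encoding does to a piece of text. The question is a harmless stand-in, and the keyword filter is a deliberately crude, hypothetical substitute for safety behavior that only recognizes plain text. It is not how a real model decides what to refuse; it only shows that the same content looks completely different once encoded.

```python
import base64

# A harmless stand-in question; the point is only how the text is represented.
question = "What is the capital of France?"

# Base64 turns the bytes of the text into a different-looking ASCII string.
encoded = base64.b64encode(question.encode("utf-8")).decode("ascii")
decoded = base64.b64decode(encoded).decode("utf-8")

# Hypothetical keyword filter, standing in for a check keyed to plain text.
BLOCKED_WORDS = ["capital"]


def naive_filter(text):
    """Return True if the text contains any blocked word."""
    return any(word in text.lower() for word in BLOCKED_WORDS)


print(encoded)
print(decoded)
print(naive_filter(question))  # the plain text trips the filter
print(naive_filter(encoded))   # the encoded form of the same text does not
```

Anything that can decode Base64 recovers the original question exactly, which is why a check that only looks at the surface form can be sidestepped.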

understand it, so you could just give it instructions in the form of pictures. That's another cool, novel attack. So what does the future hold for these things? In my opinion there are three things that are kind of the next thing, that are going to be the future of these, for right now at least. One is agents. Agents are basically LLMs that can command other parts of your computer to do tasks. So for example, if you're a pentester, you could say, hey, can you pen test this IP? It'll be like, sure, here's my pen testing methodology, and then it'll take those instructions and run nmap, get the results, feed them back

into the context window, and depending on what ports are open, it knows what to do, because there's a standardized methodology for pen testing, right? So it'll just follow those instructions that it knows already and do those actions. So the cool thing about agents is that they basically add more and more functionality. It's dangerous, but it's going to be the future, I think. Self-improvement, meaning that it creates its own synthetic data for training. So remember we talked about how we use data to train an LLM, right, you use fine-tuning? Well, it could create its own questions and answers to make itself better. But you know, that's another thing for

self-improvement. And then the last thing that's a big field of research right now is the thinking fast and slow. I don't know if you guys read that book, but basically it talks about how the human mind has two ways of thinking. One is very intuition-based, that's thinking fast, and then the second, thinking slow, is where you actually sit down and focus. Right now large language models are kind of in the thinking fast stage, where they just give you the next word and they're not really thinking about anything, or they're not really taking their time. But there's a field of research right now that is trying to slow them down and give them more parameters

where it can say, before you give me the answer, take a day or two to really think about the possibilities, trying to make them think more about the problem and giving them more and more complex problems, right? All right, so there's the guy that's the ChatGPT main security guy. His name is Andrej, I forgot his last name, apologies for that, but he came up with a really good way to think about LLMs, which is to think of them as a computer, right? So the LLM would be your processor, the RAM would be the context

window, which is your prompt and all the text that you interact with. The file system is the embeddings, right? Those are the words that we turn into those arrays, that would be your file system. The browser would be your Ethernet port, and then there's the input and output, and then the agents would use the calculator and the Python interpreter and the terminal, right? I thought this was a really good way to think about it, because it kind of makes it seem like these are actual computers, and it actually matches up really well with our ecosystem, right? Because if you think about it even further, there are closed models and open source models, right? So

you have Llama, which is open source, which is kind of like Linux, and then you have ChatGPT, which is a closed model, which is kind of the equivalent of Microsoft or something like that. All right, so let's do a little demo real quick. So this is the tokenizer stuff that we talked about, where you take these words, these sentences, and it turns them into tokens. We talked a little bit about that earlier. I basically use this to outline how a word, you can kind of see how it's a different color, if you click on the word it tells you what the options are for the next

word prediction. So at a dark, dim, dingy, rundown and secret: those were all the options, and it just went with dim for this particular part of it. This is actually pretty cool, because it gives you the percentages of all the words that it could have used and it shows you why it went with this one. If you see the red ones, those are the ones that went rogue a little bit. It wasn't the highest percentage, but it just kind of randomly picked one to make it more random. Okay, so it's an embedding, I mean, that's the next-word prediction. Here's
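The "percentages" and the occasional "rogue" pick in the demo can be illustrated with a small sampling sketch. The scores below are made up for illustration, and the temperature parameter is an assumption about how the randomness is controlled; the demo tool's actual settings are not stated in the talk.

```python
import math
import random

# Hypothetical scores (logits) for candidate next words; the numbers are made up.
logits = {"dark": 2.0, "dim": 1.6, "rundown": 0.8, "secret": 0.5}


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities that sum to 1."""
    scaled = {word: score / temperature for word, score in scores.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {word: math.exp(value - top) for word, value in scaled.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}


def sample_next_word(scores, temperature=1.0, rng=random):
    """Pick a word at random, weighted by its probability."""
    probs = softmax(scores, temperature)
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]


rng = random.Random(0)  # fixed seed so repeated runs give the same picks
probs = softmax(logits)
for word, p in probs.items():
    print(f"{word}: {p:.0%}")

picks = [sample_next_word(logits, rng=rng) for _ in range(10)]
print(picks)
```

Because the pick is weighted rather than always taking the top score, a lower-probability word sometimes wins, which matches the words the demo marks in red.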

that, remember, like I said, the embeddings are kind of like coordinates. These are all words, but they're all over the place. So like I said in the example that I had in the presentation, I'm going to use the word king. It's really hard to tell here, but you can see all these other words that are lighting up are kind of related to the word king, like prophet, holy, judge, sons, daughters. I don't know why, but when all these words light up, it means that there's some sort of connection between them in the embeddings, there's some sort of meaning that connects them

together. And it's really interesting because you can actually do word math, which is pretty cool. So give me a second, I'll show you guys something. So if I give it the word king, it shows you words that are similar, right? The same kind of idea there. But let's say we do Germany, oops, Paris, France,

oops, see if that works. Oops. Oh well, that didn't work, let me try another one. Okay, so you can actually do adding and subtracting of the vectors. A really cool thing about this was that if you subtract the word man from king and then you add woman, you get queen as the second choice, which is kind of crazy if you think about it, right? The fact that it was able to identify that. So let's do another one that's kind of fun: dog, bark, and now hopefully we'll get cat somewhere in this list. Yeah, so there's cat right here. I don't know, I just think it's
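The king, man, woman arithmetic can be reproduced with a toy example. The three-dimensional vectors below are hand-made for illustration, not taken from a real embedding model, so the result comes out cleaner than in the live demo. In real embeddings the input word itself often ranks first, which is consistent with queen showing up as the second choice.

```python
import math

# Hand-made toy vectors (hypothetical): roughly [royalty, gender, animal-ness].
vectors = {
    "king": [0.9, 0.9, 0.1],
    "queen": [0.9, -0.9, 0.1],
    "man": [0.1, 0.9, 0.1],
    "woman": [0.1, -0.9, 0.1],
    "dog": [0.0, 0.0, 0.9],
}


def cosine(a, b):
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def analogy(positive, negative, exclude=()):
    """Add the positive vectors, subtract the negative ones, rank by similarity."""
    size = len(next(iter(vectors.values())))
    target = [0.0] * size
    for word in positive:
        target = [t + v for t, v in zip(target, vectors[word])]
    for word in negative:
        target = [t - v for t, v in zip(target, vectors[word])]
    scored = [(word, cosine(target, vec)) for word, vec in vectors.items() if word not in exclude]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# king - man + woman, leaving the input words out of the ranking
ranked = analogy(positive=["king", "woman"], negative=["man"], exclude=("king", "man", "woman"))
print(ranked)
```

The ranking is just a nearest-neighbor search around the computed point, which is the same kind of lookup the demo does when it lights up words related to king.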

cool to do algebra with words, it's interesting. All right, one last takeaway before I let you guys go. Like I said, these foundational models are really expensive, millions of dollars' worth, and these companies are giving them away for free. So if you want to play around with these things, you can get Ollama downloaded. It'll let you download the Llama 3 open source model, and then you can use it and have your own ChatGPT running locally. I don't have internet on this box, but you can have it, like, say, tell me a joke about dogs, and now I have a very powerful

open source model running on my box locally. There you go. So I suggest you guys go download this and have it in case the apocalypse happens. At least you have your own chat, the world's knowledge in your computer, that you can download for free and use to survive. All right, thank you very much, that's my

talk. Oh yeah, go ahead.