Cloud & Containers: The Security Puzzle That Locks Tight


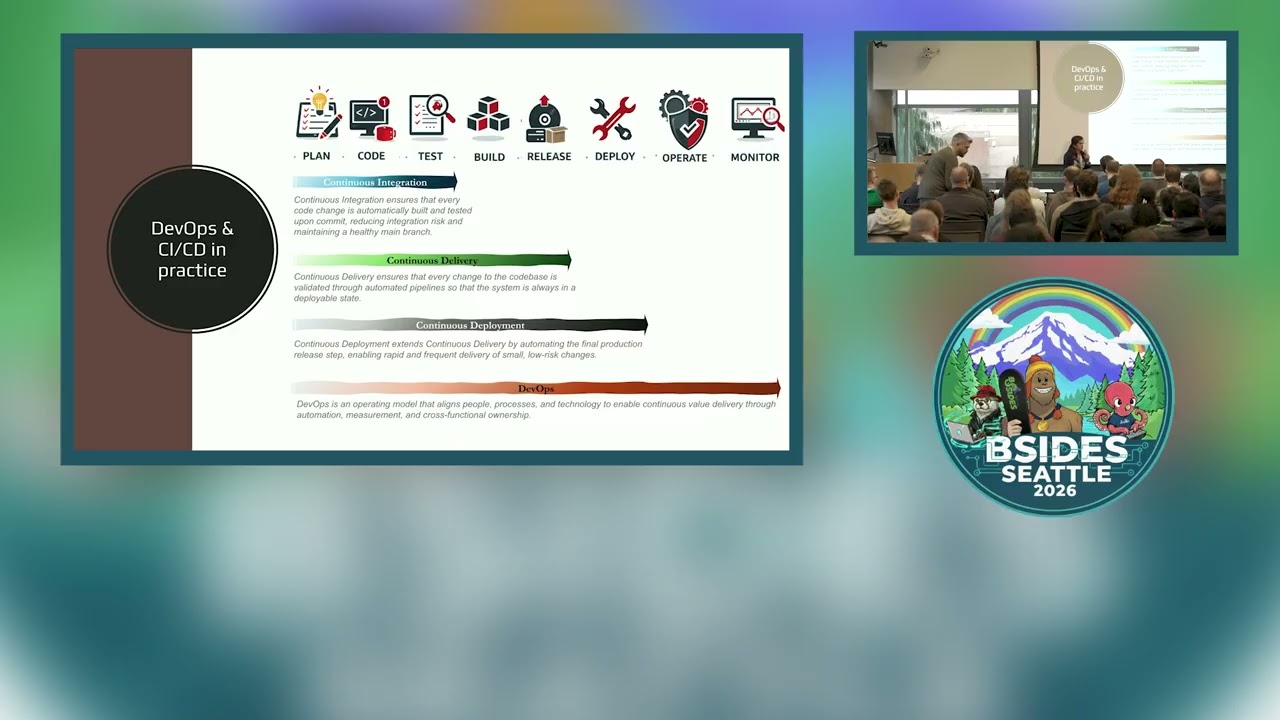

Good afternoon. My name's Ashley. Thank you ever so much for coming. Hope everyone's had a good lunch. This is a bit of a talk around cloud, containers, and the puzzle pieces that interlock them all. So, starting with just basic concepts: a lot of this builds on Liz Rice's book, the second edition of which she published about a week after I put this talk in. So it's building on top of some of the stuff that she's just republished. What's the general problem when we talk about containers? Well, containers themselves, we can secure them. We can look at the different pieces around that. So we've got things like the CI/CD pipeline. We've got things like our guardrails, our assurance. We've got

security logging and monitoring across the board. We've got all these different elements that we should be weaving together. Typically, most of those sit as independent silos, or we're looking at one or two of those pieces, not everything. One of the things that stood out was, I think it was Exabeam, said 82% of cloud breaches come from misconfiguration and human issues. Well, that's a huge number that we're working with, especially when we then say, from CNCF, they reckon there's about 15 and a half million cloud native developers, of which something like 80% are using containers in production in some way. That's a huge attack surface that we're working with. So what we need to do is start shifting from: we've got individual

silos and individual bits working on their own, to having very much an interconnected mesh of defense, and then really trying to focus on how we make these things measurable enough to scale across the board. So, diving into the problem in a bit of detail. Hopefully this is something some of you are familiar with, but what we're looking at is very much what happens underneath the hood of containers. So most people, if you're an application dev, you might be looking inside the application at the top, so within the pod. Then as you start to come down, you get very much into: well, now where's it running? So what we're actually seeing is everything starts to

run in the kernel. Everything is shared at the kernel level rather than being isolated. So what's the core problem? Even if we've got individual processes running, individual containers running, each one of those is accessing the same kernel. Now, where does this take us? If we're using this, there are the CVEs. So, the three runc CVEs that came out earlier this year: they used race conditions and looked at the mounts. What that enabled an attacker to do is exploit the mount from one container, then move laterally across into others and start moving across the grid. Where we need to start to move to is that actually the kernel and the syscalls need to be defended, as well as all the layers

above it and below it. So it's not just the containers on their own: the bits running at the top, the application, need defending; the kernel itself needs defending; and the hypervisor and hyperscaler it sits on need defending. So how do we do that? It's some quite simple things, like we want to make sure no one's using root. There's no reason for people to be using it, because root's actually an aggregation of 40-plus different privileges that Linux provides. You could go for something like CAP_SYS_ADMIN, which is nearly root, but again it's far more privileges than you need. So where should we be going? Let's start to move away and take away the really, really basic stuff. Let's start to take away: do

we need root? How do we use namespaces? Not just the ones that are in the Kubernetes system, but things like the Linux namespaces. So under the hood, that's your process namespaces, your user namespaces, networking namespaces. So now we've talked about the container and system at a high level. On the left of everything, most people will talk about CI/CD. So, how do we actually build an application within a container and deploy it? Most of the time, we think that's nice and safe because we trust our developers. Now, this is nice because most developers are good, but not all of them. And as we've seen, there's been an awful lot of supply chain attacks going

on, both from the inside of things as well as from the outside, whether it's poisoning of OSS or it's stepping-stone attacks. We need to really take a step back and think about: where do we get all of this from? How do we use it all? And where's it all tied together? A really good use case for this was the 3CX attack. So this targeted a specific build environment to produce signed malware. So this was targeted into someone's CI/CD. It compromised the CI/CD via the supply chain and produced signed malware that was then distributed publicly. The problem is that it actually passes every test. Now you can have validly signed malware. It can pass your SAST. It can pass your

SBOMs. It can pass various other stages. But where we need to take a step back to is: even though we've got all these different components and it passes, is this right? So this is where two key elements start to come in. The first is Supply-chain Levels for Software Artifacts, otherwise known as SLSA. So a core part of SLSA is it's looking at the provenance and the attestations of all your content. So specifically it's looking at: where did you pull it from? When was it built? What is the composition of these things? And then that runs within your build pipeline, ideally using something like ephemeral, hermetic runners. The idea being that they're not persistent. They're spun up, spun down, and you do your standard checks.
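The provenance questions above (where was it pulled from, when was it built, what ran the build) can be sketched as a simple check. This is only an illustration: the `Provenance` record and its field names are hypothetical and deliberately simplified, not the real SLSA provenance schema, and `check_provenance` is an assumed helper rather than part of any SLSA tooling.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class Provenance:
    """Simplified, hypothetical provenance record (not the real SLSA schema)."""
    artifact_digest: str       # digest of the artifact the record describes
    source_uri: str            # where the source was pulled from
    builder_id: str            # which build system produced the artifact
    built_at: datetime         # when it was built (timezone-aware)
    ephemeral_runner: bool     # runner was spun up and torn down for this build
    hermetic_build: bool       # build did not reach outside its declared inputs
    dependencies: list[str] = field(default_factory=list)


def check_provenance(prov, expected_digest, trusted_sources, trusted_builders,
                     max_age=timedelta(days=30), now=None):
    """Return a list of problems; an empty list means every check passed."""
    now = now or datetime.now(timezone.utc)
    problems = []
    if prov.artifact_digest != expected_digest:
        problems.append("digest does not match the artifact being deployed")
    if not any(prov.source_uri.startswith(prefix) for prefix in trusted_sources):
        problems.append(f"untrusted source: {prov.source_uri}")
    if prov.builder_id not in trusted_builders:
        problems.append(f"untrusted builder: {prov.builder_id}")
    if not prov.ephemeral_runner:
        problems.append("build runner was persistent, not ephemeral")
    if not prov.hermetic_build:
        problems.append("build was not hermetic")
    if now - prov.built_at > max_age:
        problems.append("build is older than the allowed maximum age")
    return problems
```

Returning a list of problems rather than a single boolean keeps the reason for a rejection visible. Note that a check like this only inspects what the record claims; it does not establish that the record itself is genuine.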

So you do your DAST, you do your SAST, you generate your SBOMs. All of those different elements get fed into an in-toto attestation, and SLSA then starts to mandate the structure of that. That on its own only says that you've done the test. It can tell you which policy was run. It can then tell you whether it passed or failed against the policy, but there's no real way to then say: was that good? Was that what we expected? So that's where the next point comes in, which is around VSAs, so Verification Summary Attestations. The idea is you take all of those attestations and you put them all into a central repository, and there's logic that sits there and looks at the

attestations to say: is that right? Is that (a) the structure we expected? Is it (b) the result we expected? Now, a really, really common one is we talk about guardrails and coding standards, which probably most people are aware of. We don't want specific vulnerabilities or root. We don't want critical CVEs. Now, if that's all within a policy within, say, your scanner, if you're using Trivy or Checkov or whatever your favorite tool is, you can extract that and then check it within your VSA. The cool bit then being that if it all looks good, you digitally sign it. So you sign it with an X.509 certificate that then says the attestations are correct, this looks like what it should be. And there's two

versions of this. There's the public version, which is where you can submit it externally, and there's the idea that you can build it yourself. So for those of you coding your own internal applications that aren't necessarily out with users, or something you're doing yourself, you can do the same thing: sign it with X.509, so Cosign or something. Then the next stage, shifting to the right again, is: well, now that we know that we've tested it and it meets all the standards, should we actually deploy? And that's where the attestation controllers come in, which is again a different caveat, because as we start to go through, how many developers like to be able to go and test things, potentially run it with root in a

sandbox? Versus in production, you're not going to run it with root. There may be things in production that need to run with root, like NGINX: a few years back, NGINX in production had to run with root. Therefore you had to make an exception. So as you go through the different stages into different environments, you can slowly increase those guardrails, using your VSA attestations and using attestation controllers there to start making sure that everything aligns. So, coming on to this one: we've talked about the code, but now let's talk about the environment it sits in. So what I've done here is mocked up a very, very simple network. Actually, most people's networks are going to be a lot more

complicated than this, but there's two layers. You have the cloud layer at the bottom, then you've got your virtualized container layer that sits on top. Now, part of the jigsaw puzzle is, whilst I'm focusing on the containers, it still means we need to look at the cloud layer. We still need to be using good access control. We still need to be using ingress and egress controls. We need to be using organizational controls so that someone can't just spin up a GPU or another cluster when they don't need to. So even at this layer we have to think above and below, across all of it. One of the key things on here, or one of the key premises, is zero trust. I don't

think you get away with giving a talk without mentioning zero trust in some way, shape or form. And the idea is we don't trust anything within the network. We're not trusting what is there. We're verifying absolutely everything. We don't trust the users. We check them. We don't trust each connection. We check it's got the right certificate, the right authentication.

So coming into this, you want to be looking at things like service meshes. Make sure that when you're looking at your network, you start to put in a service mesh, ideally. And if we can't get to the level of service meshes because it's difficult and complicated, let's take a step back. What can you do around your logical namespace? What can you do around your standard Kubernetes configurations? So things like using network policies as barriers, making sure that you have ingress and egress controls, and starting to lock down within that namespace. Namespace one can't talk to namespace two. Even inside the namespaces: can your front end talk to your mid tier, talk to your back tier? Well, do we have anything on the back tier that's running at all? Similarly, deny by default is always really helpful. And then come back to the attestation controllers. If it's not there, let's make sure that we're using the admission controllers to actually say what our policies are. What do we want to see that's good? What do you want to see

that's bad? Make sure that we can't deploy those bad things. And similarly, when we're talking about handling of secrets: no more secrets in code. Everything needs to come from an external secrets manager that's injected into things like tmpfs. So it's not actually going to be sat there in code. We put it into the file system, or the memory, sorry, and then that actually runs through. We're never putting those in code. We're calling them in real time. Then possibly the most awkward one of all is talking about runtime security. So specifically looking at our containers and thinking about where they run. Most of us are used to doing, say, application security in the top layer, or talking about how you put the logging and

monitoring around it. But what that means is, under the hood at the kernel, there's an awful lot of things that you can't see. So things like NGINX spawning a shell: that wouldn't typically be seen by your top layer. You're having to look under the hood at the syscalls that are going on in the kernel. Similarly, living-off-the-land attacks, any of these behavioral anomalies: unless you're starting to go through all of the different kernel controls and what's happening at that level, there's going to be a lot of challenge there. So things like: you want to be monitoring syscalls, file access, networks. How do you do that? That's the extended Berkeley Packet Filter. So eBPF,

that is a really good way. You can look at what's going on in the kernel. You can put it across your network as well. So you can tell what's happening with the network connections, because it's looking at the telemetry at the lowest level possible. You then start to combine that with behavioral baselining of what it can see. So similar to what we've done in SOCs for a long time: you start to run a baseline of what's normal, what's happening with my common telemetry, my common process IDs. It's the same thing, but we can now do it at the kernel level. And then we also want to be able to use it to say when we have

configuration drift. So anything at all that's starting to look like configuration drift: unauthorized access to /etc, to /bin, to /lib, any changes to containers, tear it down. I will caveat that we need to think about how we then do forensics and capture the logs of that, if we need to be able to do that. But a lot of the time, if you've got configuration drift, there's no reason to hold that and keep it running. Then finally, going on to assurance and visibility. So eBPF gives us that probe level. It gives us the probe at the kernel, and then we can start to layer it up with things like OpenTelemetry. So using things like Falco, we start to take

on the Kubernetes API audits. We start to look at application traces. You start to correlate all of the information around that to be able to say: is this what we expect it to be? Should this be happening? And actually, we want to move a step beyond that, which is to say: does what we're seeing match what we expect from the assurance? So, from an assurance side, we often talk about things like DORA; we could talk about things like NIST or ISO. We need to map those into our individual controls and start to map them into policy as code, rather than necessarily having just onsite GRC. So I'd really say, for things like your attestations

for your CI/CD pipeline, look at what your assurance and standards in GRC are saying, then map it back into what you want to see on the ground. And then three things that you can do tomorrow, which hopefully wouldn't cause too much hassle. So, first thing: take a look at SLSA. Take a look at what that means for your trust and your pipelines. Second, take a look at what you can do around containment. So things like dropping root, making sure you've got cap all, sorry, cap drop, locked in. Look at what you're doing with your network telemetry and where you can really start to reduce that attack surface. And the third one is: think about deployment visibility. So

think about how far down the stack you can actually go. And I thought to finish off with: I did put a cheat sheet up. So there's a cheat sheet, and thank you ever so much. [applause]
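The containment point from the talk (drop root, drop all capabilities) could be sketched as a small check over a container definition. This is a minimal illustration under stated assumptions: `containment_findings` is a hypothetical helper, the input is assumed to be a plain dict shaped like a Kubernetes container spec with its `securityContext`, and pod-level settings are ignored for simplicity.

```python
def containment_findings(container: dict) -> list[str]:
    """List containment gaps in a dict shaped like a Kubernetes container spec.

    Simplification: only the container-level securityContext is inspected;
    settings inherited from the pod level are not considered here.
    """
    ctx = container.get("securityContext") or {}
    caps = ctx.get("capabilities") or {}
    dropped = {c.upper() for c in caps.get("drop", [])}
    added = {c.upper() for c in caps.get("add", [])}

    findings = []
    if ctx.get("privileged", False):
        findings.append("privileged container")
    # Root is possible if UID 0 is requested or non-root is not enforced.
    if ctx.get("runAsUser") == 0 or not ctx.get("runAsNonRoot", False):
        findings.append("may run as root")
    # Treated as allowed when the field is unset.
    if ctx.get("allowPrivilegeEscalation", True):
        findings.append("privilege escalation allowed")
    if "ALL" not in dropped:
        findings.append("capabilities not dropped with drop: [ALL]")
    if "SYS_ADMIN" in added or "CAP_SYS_ADMIN" in added:
        findings.append("adds SYS_ADMIN, which is nearly root")
    return findings
```

A check like this would more usually be written as policy as code and enforced by an admission controller; the Python form is only meant to make the individual checks explicit.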