Cryptography and Failure


much and coming back so I'm madly as they asked me to give a talk and they didn't ask me to talk about anything in particular so I'm gonna talk for a little while about not much in particular but kind of broadly what we've learned as hackers and what we're good at and what we're bad at and so I decided to call this as pornography and failure because we're good at both of those things and in particular where we've actually gotten pretty good at you know doing cryptography and that's actually something that's changed during you know my professional lifetime we've gone from a world in which we really didn't know what we were doing to a

world in which the civilian non NSA crypto community you know it is surprisingly sophisticated and and good at what it does and yet security is probably worse now than it ever was so somehow we've managed to get good at security and good at failing at the same time so I'd like to just spend a minute talking about you know where we fail and giving an example of where we failed so we throw in a number of different ways and probably the most prominent way we look for failure is in algorithms and and protocols right I mean we we do things like say oh well there's cryptography involved and there are progressive protocols involved and those

are written down really formally and you know let's let's really look at them carefully and see if there's something wrong with them and you know interestingly you know an example of our success is that we sometimes fail there you know we sometimes find things that are that are wrong there but it's the exception rather than the rule it's pretty rare to see a cryptographic zero-day in a fielded system you know now when it happens it's very exciting because it tends to apply to many many different things but it's it's still more the exception than the rule um and so of course as particularly as an academic but even as a as a non-academic research or at least as the

sort of person who likes for published papers in spite of the fact that that's probably the least fruitful place to look for problems that's where we spend most of our time right I mean that's where we that's what we reward people for writing papers and that's where we we encourage people to to spend all of their time looking for new cryptographic and protocol weaknesses and it's you know it's a lot like that old that old joke about the guy who's standing on a street corner looking down and you know somebody finally comes up to him says what are you looking for can I help you yeah I'm looking for my keys I lost them and oh you know where do you lose them

about two blocks down and while you're looking here well there's that streetlight the light is much better here and you know we know how to look for problems in crypto protocols so that's what we do and it it and almost ever works but then there's failures of engineering and implementation and you know there we often see failures and we you know that that is we have bugs in software we have design flaws in systems and you know we have architectural failures of design and that actually occurs you know probably a factor of ten times more often at least than cryptographic failures but we don't really know how to formalize it quite as well right I mean we we have some notion

of correct programming but it boils down to comparing one program or maybe the specification of a program to another program and actually that's one of the very few things in computer science we can prove we don't know how to do so it's it's you know it's hard and it's hard to know whether about the specification right in the first place and so we we fail a lot there but when we fail there we often get to say oh well whatever went wrong it was somebody else's fault and so the system was fine even though it work and then finally we have failures of systems as a whole and I'm not even sure how to how to characterize that and failures of

the security provided by a mechanism to match the security that is required by the application and in fact almost all of our failures fall into that category and that category is both the most fruitful to look for vulnerabilities in and the most difficult to even nail down and so you know with that lesson you're sort of well learned and I think people anybody who looks for vulnerabilities and systems knows this intuitively and extremely well um we still spend our time looking under the streetlight where the light is you know at the crypto algorithms and protocols so I'm going to give you an example of where I've been guilty of this in in my group and it may

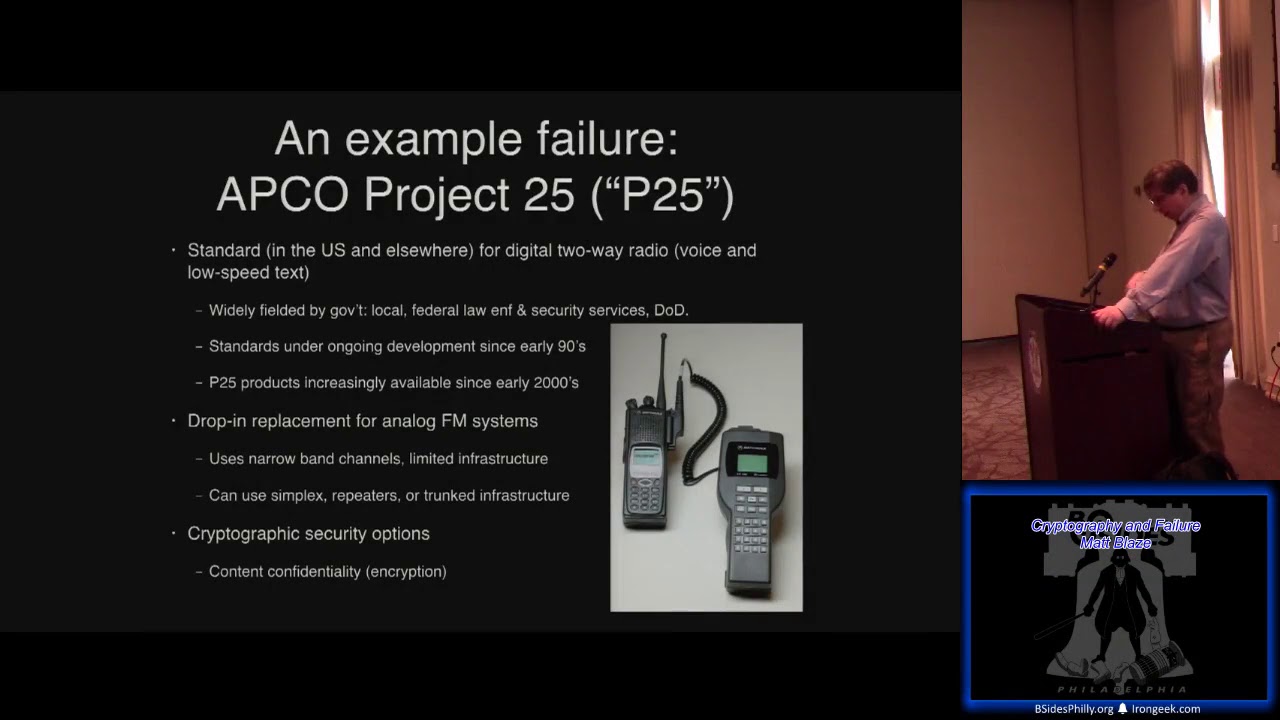

be some work that that you're familiar with but I'm gonna go over it very briefly and then I'll talk about what we can learn from that for some current public policy debates where computer security and cryptography and you know the government are smashing into each other head-on so the example that I want to give of a failure and I'm talking about both a failure in terms of the system and also a failure in terms of the way that we went about analyzing it was a system that I looked at a couple of years ago with my grad students called aapko project 25 so echo project 25 is this two-way radio standard that was developed in the early 1990s as a

successor to something called APCO Project 16. (I'm not quite sure I understand their naming scheme all that well.) It was intended to be a digital drop-in replacement for the analog FM narrowband two-way radio used by public safety and similar land mobile radio users. It was designed in the early 1990s, and it really started to take off in the late 1990s and 2000s, when equipment started to become available for it. The federal government went kind of wild buying equipment for it, and at about the same time that the equipment became available and the government started buying it, they started to graft security standards onto it.

Because this is digital two-way radio, it's much easier to add encryption and other kinds of security features to it. Once something's digitized, it's just plain old cryptography on digital data. Securing analog voice is much harder, generally doesn't work all that well, and degrades quality. Encrypting digital data doesn't degrade quality at all, and it's very straightforward to do because of the layered model that you get with digital communication.

This was a heavily constrained problem. In particular, the P25 system is intended to be spectrum compatible with its predecessor, which means it has to use exactly the same frequency allocation model as analog systems. With a digital system there are all sorts of things you could do that might be more spectrum efficient, more resistant to various kinds of interference, and might work better, things like spread spectrum. But you couldn't do that with this system, because it was intended to replace the individual narrowband channels that carry voice in the analog systems it replaces, and it had to be able to coexist with those systems. This would be like saying, "Okay, everybody, all at once, stop using IP." We can't really do that; we have to come up with something that's incrementally compatible.

So how does this work? The channels have to fit into a narrowband channel, a 12.5 kilohertz-wide allocation of spectrum that has to carry voice, which is the same bandwidth as a standard analog voice channel for land mobile radio. That basically means you can fit about 9,600 bits per second comfortably into that channel under the constraints of radio, and they do that with an encoding of 4,800 baud: 4,800 two-bit symbols per second. The voice is encoded using a standard vocoder called IMBE, which gives surprisingly reasonable speech quality at that bit rate: pretty good telephone quality, or maybe a little bit worse. The audio sounds a little different from analog FM, but it's still pretty good. It's also fairly computationally intensive and generally needs specialized hardware just to implement the vocoder itself and the decoding.

The vocoder divides speech up into 180-millisecond-long frames, and if you do the math, that's 1,728 bits per 180-millisecond frame, sent out one after the other. So a voice stream basically consists of these 180-millisecond, 1,728-bit chunks of data sent in a train, one after the other.
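The rate figures quoted here fit together. Here is a minimal Python sketch that checks them, using only the numbers stated in the talk (4,800 baud, two bits per symbol, 180-millisecond frames). The helper names are hypothetical.

```python
def bit_rate(baud: int, bits_per_symbol: int) -> int:
    """Channel bit rate in bits per second: symbols per second times bits per symbol."""
    return baud * bits_per_symbol


def bits_per_frame(rate_bps: int, frame_ms: int) -> int:
    """Bits carried by one frame of the given duration at the given bit rate."""
    # Integer arithmetic avoids floating-point rounding: 9600 * 180 / 1000.
    return rate_bps * frame_ms // 1000


rate = bit_rate(4800, 2)
frame = bits_per_frame(rate, 180)
print(rate, frame)  # 9600 1728
```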

There are a couple of details here. There are two different kinds of these frames, which carry different metadata, but in each of them the bulk of the 1,728 bits is IMBE-encoded voice, which might or might not be encrypted. The rest, a few other bits, is metadata saying, basically, "This is the type of frame it is; it's a voice frame; it's encrypted or it's not encrypted; here's the unit ID that's sending it," and so on, so that the receiver can receive it.

The important thing to remember is that this is digital communication, but it's very different from the vast majority of protocols that we design for things like the Internet. In particular, although this is ostensibly called two-way radio, it's in fact an almost completely one-way protocol. The transmitter broadcasts, and receivers are entirely passive. No acknowledgments are sent back, and there's no notion of a session in the way we tend to design and analyze protocols. The transmitter puts stuff out over the air, and it's entirely up to the receiver to be able to receive it.

Another property is that this is going over radio. With wireline digital protocols, errors tend to be pretty much all or nothing: either your packet or frame got corrupted, or it got through perfectly. So we tend to design protocols where the model is to accept completely or reject completely. Over radio, that would work horribly. In the nature of radio there are transient fades, multipath, and other effects that will corrupt a few of the bits with very high probability on almost every transmission. So the protocol has to tolerate low-grade errors without just stopping completely, because low-grade errors are really normal; a nonzero bit error rate is normal.

So it makes heavy use of forward error correction, and the vocoder itself can tolerate some dropped bits. The more dropped bits there are, the lower the quality of the audio, but if you kill ten percent of the bits, it'll still sound pretty recognizable. That's an important property. The metadata used when you decode these frames is also forward error corrected, so that if a few of those bits are corrupted, you can still recover the actual payload bits. In fact, there are about 64 bits of important metadata in each frame, and each piece of it is separately error corrected.
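To make forward error correction concrete, here is a minimal Python sketch of a Hamming(7,4) code. It adds three parity bits to every four data bits and can correct any single flipped bit in each 7-bit codeword. This is a textbook stand-in chosen for brevity. P25 uses its own, stronger codes, which this sketch does not reproduce, and the function names are hypothetical.

```python
def hamming74_encode(d: list[int]) -> list[int]:
    """Encode 4 data bits as a 7-bit codeword laid out p1 p2 d1 p3 d2 d3 d4."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(c: list[int]) -> list[int]:
    """Correct up to one flipped bit, then return the 4 data bits."""
    c = list(c)  # work on a copy so the caller's list is not modified
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3  # 1-based position of the bad bit, 0 if none
    if syndrome:
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


data = [1, 0, 1, 1]
codeword = hamming74_encode(data)
received = list(codeword)
received[5] ^= 1  # a radio fade flips one bit in transit
print(hamming74_decode(received) == data)  # True
```

A code like this assumes errors are scattered and random. It offers no protection against someone deliberately wiping out a whole field.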

Okay, so my graduate students and I analyzed this protocol because we were bored, basically. We'd gotten some grant money to look at GSM and other mobile cellular phones, and we discovered that the equipment we needed wasn't quite available. So what were we going to do? We decided, well, let's look at some other protocol and hope nobody notices that we didn't look at the one we were supposed to look at; it's radio, it's sort of the same, nobody will care. So we looked at the protocols, and we did that by getting copies of the standards. We had to get them by interlibrary loan from Canada, because the standards cost so much that buying them would have eaten our entire budget, but we found a library in Canada that was willing to lend them to us.

We looked at the standards, and our first reaction was, oh my god, the security standards for this look as if somebody from the NSA showed up at the first meeting and then stopped showing up. They had all of this good terminology, things like "key material" and "crypto variables," all this fairly NSA, spooky-looking terminology, and then almost every actual cryptographic decision they made was unbelievably bad. And I mean not unbelievably bad like some subtle secret backdoor, but unbelievably bad like, "The security guy isn't here, what do we do? Oh, let's just keep going." And it turns out that's actually what happened.

So we found numerous weaknesses in the protocols. First of all, everything is unauthenticated, even if you've got key material, which among other things means that parts of the protocol are vulnerable to replay attacks. In particular, there's a subprotocol called over-the-air rekeying that becomes extremely vulnerable because of this. There's a very slightly clever replay that you can do that will wipe people's keys out remotely, and when you look at the standard it becomes immediately obvious what it is.

Metadata is not encrypted, and that includes the unique unit identifier and, when the option is turned on, location. This appears to have just been an error in the specification: it lists protecting this as one of the requirements, but then they just don't actually encrypt any of the metadata. What that means is that if people have location services turned on, an adversary can create a map of where everyone is in a system even if they can't decrypt the voice. If you've seen the Harry Potter movies (which, by the way, I haven't), my students tell me this is just like the Marauder's

Map from Harry Potter, where you can see where your adversary is at all times. So when the surveillance people are following you around, you can see where they are.

And we discovered a remarkably terrible susceptibility to denial of service because of the way the framing is arranged. I'm going to talk very briefly about that, because that was where most of the light we applied to this protocol went. There's aggressive forward error correction, but they don't forward error correct the entire 1,728-bit frame. Instead, they forward error correct individual fields, so each individual field of the frame is separately error corrected.

Why is that good, or why is that bad? It's good in the sense that you can apply different types of error correction to different parts of the frame, and that might be a little more efficient. But it also means that an adversary can select an individual subfield to jam. If they've got good timing synchronization, they can pick out an individual field and blot it out, without bothering to blot out other fields, if they only care about jamming one of them. Well, one of those fields is a 64-bit field that actually contains 16 bits of payload identifying the type of frame. In particular, it identifies whether the frame is encrypted or in the clear, and whether it's a voice frame. Those 64 bits out of the 1,728 are essential for the receiver to be able to demodulate the rest of the frame; without them, it can't do anything with the rest of the 1,728 bits.

What that means is that a jammer with good timing synchronization can turn on its transmitter for just 64 bits out of the 1,728. That's conveniently easy to do, because the field comes right after a synchronization field in the frame, so it should be very easy, at least in principle, to build a piece of equipment that does this timing. By doing that, the jammer can achieve something that is unprecedented in the history of jamming.

The way you measure the jamming resistance of a radio system is by comparing the energy required by the jammer to the energy required by the user. If you do nothing and just design a kind of dumb protocol, it's roughly 50/50: whoever has the most energy wins. If you're designing a system to resist jamming, you want to make the jammer use a lot more energy than the system it's trying to jam, maybe 10 or 20 dB more at a minimum. Things like spread spectrum achieve that in different ways; in particular, if the spreading sequence is kept secret, you can get really good resistance to jamming in terms of that energy comparison. Again, if you do nothing, it's just strongest wins; might makes right. But this protocol manages to go exactly backwards. Because of the ability to jam individual subfields, the jammer actually enjoys a 14-decibel power advantage, because it only has to turn on its transmitter in short bursts. What that means is that with a couple of AA batteries' worth of energy, you can build a fairly powerful jammer that'll last for days and days against a fairly high-powered system, in principle.
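The 14 dB figure follows from the duty cycle. A jammer that transmits only during the 64-bit field of each 1,728-bit frame is on for 64/1,728 = 1/27 of the time. Compared with a jammer running continuously at the same instantaneous power, it spends 27 times less energy, which is 10·log10(27) ≈ 14.3 dB. This minimal Python sketch rests on a simplifying assumption: the comparison is purely energy per frame, and synchronization overhead is ignored. The function name is hypothetical.

```python
import math


def duty_cycle_advantage_db(frame_bits: int, jammed_bits: int) -> float:
    """Energy advantage, in dB, of jamming only `jammed_bits` of each frame
    instead of the whole frame at the same instantaneous power."""
    ratio = frame_bits / jammed_bits  # how many times less energy the burst jammer spends
    return 10 * math.log10(ratio)


adv = duty_cycle_advantage_db(1728, 64)
print(f"{1728 / 64:.0f}x less energy, about {adv:.1f} dB")  # 27x less energy, about 14.3 dB
```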

Okay, so why is that bad? Well, these systems are used for public safety and surveillance, and by the Secret Service and the FBI and all of those agencies. But in particular, if you do this, you can interact with the security part of the protocol in really mean ways. The scenario that we thought of was very selectively jamming to teach the users that the encryption doesn't work. You'd do that by jamming only encrypted transmissions, so that they learn to turn off their encryption in order for their messages to get through. That's an interesting scenario in which the encryption works, but it doesn't actually work for the users, and so they're forced to turn it off. And again, conveniently for the attacker, the part that says this is an encrypted transmission comes right before the part that you have to actually jam, so you could build a jammer that synchronizes and recognizes this very easily.

Okay, so how hard is this to do in practice? One of my grad students at the time was a fellow by the name of Travis Goodspeed, who I'm sure you all know. He is probably the most dangerous person I've ever had in my lab, in all respects, because he kept

ordering all these dangerous chemicals. The mail room stopped accepting his packages because of all the scary stickers on them. He kept saying, "Hey, can I use a fume hood?" and I said, "Well, I don't have a fume hood," and he said, "Okay, never mind." Then he's doing this stuff in the lab, there's smoke, and things start corroding. So Travis said, "Oh, I know how to build one of these." And I'm thinking, oh god, what's this going to cost? We're going to have to actually build this precisely synchronized radio equipment. "Oh no, there's this thing called an IM-Me."

The IM-Me was a toy marketed at preteen girls in the 2000s. It has a chipset in it that turns out to be exactly compatible with the way that P25 transmissions are framed. And of course it has a little JTAG interface inside, so if you solder in some wires, you can reprogram the chip into a little recognizer that synchronizes to frames and turns the transmitter on. It conveniently takes not two but three AA batteries, and if you soup it up it can put out a surprising amount of power, even more if you transmit in really short bursts. And they turn out to be really cheap, because the service they used went out of business, so they're kind of useless for their original purpose, and you could buy these things for about $8 each on eBay. The chip itself would have cost about $25, so it was cheaper to buy it in this little pink box than on its own. So we bought a whole bunch of them and programmed them, and this became a sort of nice "My First Jammer."

So we were very proud of ourselves, and we did what we do as academics, which was not to deploy these around the city and go on a bank-robbery spree, but to write

a paper. But before we wrote the paper, we wanted to know: we're smart, but we're not the only smart people around. Have the bad guys figured this out? Are people actually deploying this? So we decided to build a network of receivers to monitor sensitive over-the-air P25 transmissions, like those of the FBI, the State Department, the Secret Service, and all of these agencies. We wanted to see whether we could recognize attack transmissions like the ones we'd built, in case somebody else had figured this out and was doing it to these people.

To make a long story short, it was easy to identify the frequencies they're using for sensitive stuff, because they're the ones with encrypted traffic on them. It turns out there's also metadata, the unit IDs, that lets you tell which agency is using a channel. So it took a little exercise in traffic analysis to reliably identify who was transmitting on what channel, but we figured it out: okay, this is the Secret Service, this is the State Department, this is the Post Office, and so on. Then we said, okay, let's record this stuff. As we were configuring it, we noticed that we were getting a whole bunch of clear traffic on the encrypted channels. After some investigation we thought, aha, somebody has scooped us and is doing exactly the stuff we discovered. Wow, there's a zero-day out there in this protocol. But no, we couldn't actually find any evidence of malicious activity. We would just see all this clear traffic on these otherwise encrypted channels. It turns out that none of the attacks were actually necessary; there was no need to bother looking where the light was and analyzing this protocol.

In 1995, I attended a conference that I go to called Crypto, the conference for

cryptographers. It's held in Santa Barbara every August; it's very nice, right on the beach. In 1995 they had invited as their keynote speaker one of the head cryptographers at the National Security Agency, a fellow by the name of Bob Morris. He's the father of another Robert Morris you may have heard of, and he worked at NSA. He promised at the beginning of his talk that he was going to reveal the NSA's first rule of cryptanalysis. So I made sure to get up early and go to this talk, because I wanted to learn the NSA's first rule of cryptanalysis; that might be useful. I was kind of surprised he was revealing it. I figured it must be really good, that he was going to say something like, "If you use Gaussian elimination on the S-boxes, then you get the cleartext." So he said, "Here's the first rule of cryptanalysis, and it's very important, because it works about 50% of the time. Rule one of cryptanalysis: look for cleartext."

So we did that with this P25 system. We found all of the channels they were using and configured a network of receivers in a number of metropolitan areas, Philly obviously being one, plus other large metropolitan areas that you can imagine. Basically everywhere we had friends, we said, "Hey, can you put this box in your apartment? No, don't ask any questions. Put the antenna near the window." And every night it would upload everything it got on the sensitive government channels. We also checked with our lawyers to make sure it was legal, and it turns out it is, amazingly.

What we discovered was that on the channels that carry sensitive traffic, the traffic is mostly encrypted, but on average there's about 30 minutes a day of clear transmission. It just starts at some point, out of the blue, and then goes back to being encrypted after a while, or some fraction of users

just don't have encryption turned on. What's going on here? And some of this is sensitive stuff, I mean really sensitive. Things like, "All right, I have the informant in the car with me" (they say "CI" because it sounds cooler), "he's the guy in the green shirt." That's information that might be kind of useful if you're the target of that investigation. And the sensitive traffic they use when they're doing executive protection for people like the President just goes out in the clear.

Interestingly, we picked up sensitive clear traffic from every agency we had heard of, plus a few agencies we hadn't heard of and had to track down. But one agency never, ever transmitted any clear traffic; they were always 100% encrypted. And you're thinking, obviously, the NSA. No, actually, the NSA security force is in the clear all the time. It was the postal inspectors. Don't mess with the postal inspectors; they understand crypto. I ended up actually talking to the postal inspectors' information security guy, and he said, yeah, basically there was this guy, since retired, who just drilled it into everybody to get the crypto right. But almost everybody else sends traffic in the clear. So why?

Well, what we discovered, first by analyzing metadata and then by actually listening to the transmissions, was that frequently different users within a network didn't have the same keys. You could tell this pretty easily by analyzing the metadata: the key identifiers would sometimes just get out of sync, and if you don't have the keys, the only way to resolve that is to switch the radio into clear mode.

It turns out there's a protocol for over-the-air rekeying that the federal government uses every month for most agencies and every week for the really sensitive ones. In it, radios are sent new keys, and the new keys erase the old keys. This is done radio by radio. So suppose your radio isn't in range at the time of the over-the-air rekeying broadcast, and you forget to request that new keys be sent to you. Your radio will, with high probability, still have the old keys in it, but your colleagues' radios will have the new keys. So this protocol, intended to keep keys up to date, in fact helps ensure that keys won't stay up to date, because of the way it actually works. It's not a synchronized protocol where everybody updates at the same time.
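The failure mode just described can be sketched as a toy model in Python. Its assumptions are spelled out in the comments: each radio holds a single key identifier, a rekeying broadcast reaches only radios that happen to be in range, and two radios can talk encrypted only if their key identifiers match. Everything here (the `Radio` class, the function names, the key IDs) is hypothetical. The model illustrates the radio-by-radio update problem; it does not implement the actual P25 over-the-air rekeying protocol.

```python
from dataclasses import dataclass


@dataclass
class Radio:
    unit_id: str
    key_id: int  # identifier of the traffic key currently loaded


def rekey(radios: list[Radio], in_range: set[str], new_key_id: int) -> None:
    """Deliver a new key radio by radio; only radios in range receive it.
    The new key replaces (erases) the old one, so there is no fallback key."""
    for r in radios:
        if r.unit_id in in_range:
            r.key_id = new_key_id


def can_talk_encrypted(a: Radio, b: Radio) -> bool:
    """Toy rule: encrypted voice only works when both radios hold the same key."""
    return a.key_id == b.key_id


team = [Radio("A", 1), Radio("B", 1), Radio("C", 1)]
rekey(team, in_range={"A", "B"}, new_key_id=2)  # C was out of range this time

for x in team:
    for y in team:
        if x.unit_id < y.unit_id and not can_talk_encrypted(x, y):
            # In this model, the only way these two can still talk is in the clear.
            print(f"{x.unit_id} and {y.unit_id} must fall back to clear")
```

Calling `rekey` more often, as weekly rekeying does, simply gives an out-of-range radio more chances to fall behind.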

It's done radio by radio, and so, ironically, if you're one of the really sensitive agencies that rekeys every week, there's a much higher probability that you'll actually end up in the clear, because people don't have the same key material. That seemed to account for about half of the clear traffic we saw. In fact, any time we saw an over-the-air rekeying transmission happen, we'd say, "Oh boy, we're about to see a bunch of cleartext," and that would last for a few days, or sometimes weeks, before things went back to being mostly encrypted. So we learned that the first Wednesday of the month is the time to listen for some cleartext. That was yesterday. Well, the day before yesterday, by the way.

The other thing is that the users don't actually know how to turn the crypto on and off. You could say that's the users' fault, but I think we can call this a pretty good usability failure. Let me give an example of the user interface for these radios. This is a Motorola XTS 5000 radio, which is probably the most common of these radios used by the federal government. It's an expensive walkie-talkie that looks roughly like a brick. It's very well built; you could hurt

somebody with it. This is what its user interface looks like. At the top of the radio there's its front-panel display, since it's a walkie-talkie and you hold it up to your face. This is what it looks like when it's configured in clear mode, and this is what it looks like when it's configured in secure mode. The difference, you might notice, is this little switch on the top of the radio next to the antenna: one position is encrypted and the other is clear. They also show that logo in the middle of the display, so you'd hope users would notice it before using the radio.

Listening to these transmissions was great, because it was like having our own private version of The Wire; we'd hear these little surveillance operations going on. We noticed that very often the first thing we'd hear was, "Okay, I'm in secure mode," and quite plainly, no, they're not. And sometimes you'd hear somebody explaining to somebody else, "Now, to get it in secure mode, you have to put it to the little circle; the circle with a line turns the encryption off," which is a perfectly valid guess. You could make the argument either way. One is closed and the other is open, and that seems to be the metaphor they were going for, but it could just as well be off and on, which is sort of the opposite. There's widespread disagreement about what these symbols mean, but the manufacturer, in this case Motorola, thinks that this is what secure mode should look like.

So basically, we discovered some incredibly subtle cryptographic failures, and Travis did some great electrical engineering work making this thing synchronize in order to force the radios into the clear. But it turns out they would go into the clear without our help, and it was just

really easy to do.

So where does that leave us? This is a failure at the systems level. There were failures a little bit at the cryptography level, more at the implementation level, but completely at the systems level. This suggests we just aren't good at building systems to do complex things like this. I went to the standards group that put this together. First I went to the federal government and talked to them, and they were very friendly and concerned about it, and then they said, "You should talk to these people in the P25 security group." So I went there, and it was absolutely the most hostile group; I was lucky to get out with my life. They basically said, "You don't understand; there's nothing wrong with this system." They spent a couple of hours yelling at me and explaining, "You don't understand decibels, you don't understand radio, this system is fine." This was before that cartoon of the dog sitting in the burning room saying "This is fine" came out, but that's now what I think of.

So what I take from this is that building complex systems that are in fact reliably secure is a really hard thing to do. We could laugh at the designers of this, but they have a point: every component of the system can point to some other component as the cause of the failure. There's no one thing wrong with it. It's wrong in combination, even when the components are actually pretty strong.

So where does that leave us? I'm going to spend a quick five minutes (and then you can yell at me and explain why I don't understand decibels) talking about a current public policy debate and what this sort of thing might tell us about it. In 1992, AT&T introduced

this device, the TSD 3600, which is a telephone security device. It was basically a phone encryptor that would work on a wireline phone, at fourteen hundred dollars apiece. As they announced it, this product would use DES and a Diffie-Hellman key exchange, and would display a hash of the key on the phone. It was actually a very clever design, but at fourteen hundred dollars, and with two of them needed to encrypt a call, they sold just about as many of them as you could imagine. The federal government, though, caught wind of this and utterly freaked out. They were worried that this would cause wiretaps to become obsolete: criminals would buy these, and wiretapping capability would go away.
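The key-agreement idea described above (Diffie-Hellman, then a short hash of the resulting key shown to each user) can be sketched in a few lines. The point of the displayed hash, as I understand the general design, is that the two callers read it to each other: a man in the middle would end up with a different key on each leg of the call, so the two displays would almost certainly disagree. This is a minimal sketch under toy assumptions, not the TSD 3600's actual parameters: the modulus `2**127 - 1`, the base `3`, the use of SHA-256, the 4-hex-digit display, and all function names are illustrative choices, and the DES voice-encryption step is omitted.

```python
import hashlib
import secrets

# Toy group parameters (assumption, illustration only): the Mersenne prime
# 2**127 - 1 as the modulus and 3 as the base. Real systems use vetted, larger groups.
P = 2**127 - 1
G = 3


def make_keypair() -> tuple[int, int]:
    """Pick a random private exponent and return (private, public)."""
    private = secrets.randbelow(P - 3) + 2  # exponent in [2, P - 2]
    return private, pow(G, private, P)


def agree(my_private: int, their_public: int) -> int:
    """Diffie-Hellman: each side raises the other's public value to its own secret."""
    return pow(their_public, my_private, P)


def display_code(shared: int, digits: int = 4) -> str:
    """Short hash of the agreed key, meant to be read aloud and compared by the callers."""
    digest = hashlib.sha256(shared.to_bytes(16, "big")).hexdigest()
    return digest[:digits].upper()
```

In use, each phone calls `make_keypair()`, sends its public value, and computes `agree(...)` with the other side's value; both arrive at the same number and so display the same code. A longer display makes an undetected man in the middle less likely, at the cost of more digits for people to read aloud.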

They convinced AT&T to take out the DES chip that was built into it and replace it with a new chip called Clipper. Clipper basically introduced a system called key escrow, which would send an encrypted copy of the session key as part of the initialization vector that you would send during the key exchange. The decryption key for that encrypted session key would be held in escrow by the government, so that if there was a wiretap order against somebody who was using one of these, they could recover the session key by getting the key out of escrow. So that was controversial, and it got much more attention than this phone would otherwise have gotten at

this price. The government, by the way, agreed to buy a whole bunch of these in exchange for AT&T's redesign, and those phones ended up sitting in a warehouse somewhere and then got released as government surplus; you can even buy them for about 40 bucks right now. So Clipper had problems. I had actually discovered some of the problems in it, and that killed off this particular idea, but we spent the 1990s arguing with the government about whether this idea, broadly, was a good idea. By 2000 the government basically gave up and said, you know, actually encryption is important; we want to encourage the community to encrypt things, because that is a national security issue.
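The best-known of the published Clipper problems was that the escrow field sent during key exchange (the LEAF) was checked by the receiving chip using only a 16-bit checksum. A rogue device could therefore keep trying random fields, using a chip as the oracle, until one passed: on average about 2**16 = 65,536 tries. The call would still work, but the field no longer held a recoverable copy of the session key. The sketch below illustrates both the escrow idea and that weakness. Everything in it is a hypothetical stand-in: the real chip used the classified Skipjack cipher, per-chip unit keys split between two escrow agencies, and a different field layout. Here a SHA-256-based XOR stream plays the role of the cipher, and `ESCROW_KEY`, `FAMILY_KEY`, `checksum16`, and the other names are my own inventions.

```python
import hashlib
import secrets


def toy_xor(key: bytes, label: bytes, data: bytes) -> bytes:
    """Toy stream cipher (NOT Skipjack): XOR data with a SHA-256-derived keystream."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + label + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))


ESCROW_KEY = secrets.token_bytes(16)  # hypothetical: decryption key held in escrow
FAMILY_KEY = secrets.token_bytes(16)  # hypothetical: secret shared by every chip


def checksum16(body: bytes, session_key: bytes, iv: bytes) -> bytes:
    """Hypothetical 16-bit check binding the escrow field to this session."""
    return hashlib.sha256(body + session_key + iv).digest()[:2]


def make_field(session_key: bytes, iv: bytes) -> bytes:
    """Honest chip: escrowed copy of the session key plus a 16-bit check, wrapped."""
    body = toy_xor(ESCROW_KEY, b"escrow", session_key)
    return toy_xor(FAMILY_KEY, b"family", body + checksum16(body, session_key, iv))


def chip_accepts(field: bytes, session_key: bytes, iv: bytes) -> bool:
    """Receiving chip: unwrap the field and verify only the 16-bit check."""
    plain = toy_xor(FAMILY_KEY, b"family", field)
    body, check = plain[:-2], plain[-2:]
    return check == checksum16(body, session_key, iv)


def escrow_recover(field: bytes) -> bytes:
    """Wiretapper with an order: unwrap the field, then decrypt with the escrowed key."""
    body = toy_xor(FAMILY_KEY, b"family", field)[:-2]
    return toy_xor(ESCROW_KEY, b"escrow", body)


def forge_field(session_key: bytes, iv: bytes, field_len: int, max_tries: int = 1 << 22):
    """Rogue sender: try random fields until the chip accepts one (~2**16 tries expected)."""
    for tries in range(1, max_tries + 1):
        candidate = secrets.token_bytes(field_len)
        if chip_accepts(candidate, session_key, iv):
            return candidate, tries
    raise RuntimeError("no acceptable field found")
```

With an 80-bit (10-byte) session key, `make_field` produces a field that `escrow_recover` turns back into the session key, while `forge_field` returns a field that the chip accepts but that decrypts to unrelated bytes. The 16-bit check is the design choice that matters here: each extra bit of check doubles the expected search, which is why such a short one was a problem.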

This key escrow stuff is not going anywhere, they said; go forth and encrypt. The crypto war ended in about 2000, when most of the export restrictions on encryption products were lifted, and we lived happily ever after, until Crypto War 2 (we didn't know to number the first war). Now we're in Crypto War 2, which started in about 2011 or so, about five years ago. It's summarized by FBI Director Comey; this is a typical quote from congressional testimony: "The law is not keeping up with technology... those charged with protecting our people aren't always able to access the evidence we need to prosecute crime and prevent terrorism, even with lawful authority... we often lack the technical ability to do so." And this

has been a recurring theme; they call it "going dark." Interestingly, this is fairly alarmist wording from 2014. If you look at the wiretap report from 2015, which lists when encryption was encountered in legal wiretaps during 2014, there were a total of 41, or rather 22, cases, and in two of them officials were unable to get the plaintext. So this actually doesn't seem to be as large a problem as claimed. If you look at the federal government, you add another two cases, so there have been a total of four cases where encryption encountered in a wiretap could not be gotten around. But let's be optimistic and assume that's just because we

haven't figured out how to deploy crypto enough yet, and that eventually we will succeed at deploying crypto. Okay, so what do we do? Well, what they've advocated for is basically a return to key escrow, and I think we can take some of the lessons of failure we've learned to analyze whether key-escrow-style backdoors are likely to prevent crime or cause crime. I think you can make an argument that backdoors are both dangerous, that is, they make cryptosystems weaker, and also ineffective. I think it is probably unarguable, and I'm going to use the c-word just once, because you're allowed to use it in Washington, that we are

in a national cybersecurity crisis. I think that's unarguably the case: we are seeing more data breaches than ever before, we are seeing systems failing left and right, and our ability to secure systems seems to be outpaced by our ability to build weak systems. Every time, we take one step forward and two steps backward, and there's really no end in sight to this. We really only have two proven tools that can help secure complex systems like the ones we use for our infrastructure. One of those tools is crypto, because it means we have to trust fewer components, and we actually

know how to do crypto reasonably well. The other is making systems as simple as possible. We're terrible at doing that, but when we are able to do it, we know it gives us a pretty good chance of working. Unfortunately, the backdoor concept makes systems more complex, so it attacks our ability to make them simple, and it also attacks the crypto, in particular by making the crypto more complex and increasing its attack surface. So the backdoor concept attacks head-on really the only two security mechanisms we have with a proven track record of success in real systems. And worse, backdoors are easily evaded, right? I mean, crypto software is

available. There's this thing called the Internet; you can download software from it, and people even write books and teach classes about how to do these things. The secret is out. So if we mandate that Apple and Microsoft and so on incorporate these mechanisms, building encryption on top of them that doesn't have the backdoor would be a fairly straightforward thing to do. So we're stuck with two potentially irreconcilable problems: first, we can't afford more security vulnerabilities, and second, lawful access either is or will be getting harder. That is where we are today, in a world in which we are doing a terrible job securing our

systems, and the only people who seem to think that computer security is actually too good are at the FBI. So with that, thanks very much for coming back from lunch. [Applause] So I think I have a couple of minutes; you can yell at me. Yeah? Yeah, well, the people who did the over-the-air radio stuff and the people who did the security were different people, and it was a bit bolted on. The standard was deployed with the idea that there would be hooks to do security in it, so it wasn't inherently as bad as that sounds, but literally, when I went to the meeting I

said, you know, the standard looks as if somebody from the NSA showed up for the first couple of meetings and then stopped showing up, and they said, oh yeah, that was Roger, he died, and they never replaced him. So literally, the assumption that I made turned out to be exactly right. Yeah, that would be shocking to me. And whose Big Brother, right? The nice thing about Big Brothers is that there are so many of them out there, so if you trust one, that doesn't mean you should be trusting all of them. And oh yeah, don't get me

started on the certification mess that we're in. Yeah, okay, well, anyway, thanks very much. See you soon. [Applause]