Securing Frontends at Scale: Paving our Way to the Post-XSS World

Good morning everybody. Welcome back to BSides Las Vegas Ground Floor. This talk is "Securing Frontends at Scale: Paving a Way to a Post-XSS World," and our speaker is Aaron Shim, right there. >> Hello. Thank you. >> A few announcements before we begin. We'd like to thank our sponsors, especially our diamond sponsors, Adobe and Aikido, and our gold sponsors, Dropzone AI and runZero. It's their support, along with our other sponsors, donors, and volunteers, that makes this event possible. These talks are being streamed live, and as a courtesy to our speakers and audience, we ask that you check to make sure your phones are set to silent. If you have a question, you'll be

using the audience microphone that's in my hand. I'll be handing it around so that YouTube can hear you. As a reminder, the BSides Las Vegas photo policy prohibits taking pictures without explicit permission. So please do not take pictures, even of the screen. These talks are all being recorded and will be available on YouTube in the future. With that, let's get started. Please welcome your speaker. [applause] >> Hello. Hello. Can you all hear me? Great. Well, thank you for that warm introduction. So, welcome to this talk. Today, we will be talking about securing frontends at scale and how the lessons we learned by doing this with all of the different web apps at Google have influenced how we imagine

the post-XSS world. As you may have heard, cross-site scripting, or XSS, is a really hard problem to solve, but this will be a tale of a journey through tools and runtime mitigations to show how we can get to a vision of a post-XSS world. So before we start, how many of you are security professionals? Great. Developers, do you consider yourselves developers? Great. We have a few. And how many of you have web apps at your organization that you have to secure? Great. That's what we like to see. So hopefully this is a message that you can take back to your developers, to use these tools and philosophies to make some of your

devs' lives easier and to ship the best security we can to our customers. As a note, this is also a shorter version of this talk, so we're going to move quite fast. Let's buckle in. And as a disclaimer: a lot of this work is what we do on our team, but the opinions are my own and not the company's or the team's. Great. Thank you. So to introduce myself, my name is Aaron. I work at Google in the New York City office. I've been there almost eight years. My team primarily focuses on deploying web security mitigations across the entire fleet of Google's web

apps, across hundreds of web apps and billions of users. And before security, I worked on some product-side teams. So I try to approach a lot of this security work with a focus on empathy for the developer. At the end of the day, we are all on the same team. We want to ship the most secure product to our users. So how can we work together and make life as easy as possible for the developers who are our partners in this journey? So, a quick agenda of what we will talk about today. We're going to do a quick intro to cross-site scripting so that we're all on the same page. We

are going to talk about our strategies for writing more secure code at scale. We'll talk about our runtime, browser-side mitigations, so that we can complement this safe coding approach with runtime mitigations to make sure we've really caught everything. And then we'll talk about an extension of these mechanisms and how that leads to our post-XSS world. Great. So XSS is something you've all probably heard about before, but let's recap and talk about why this is still relevant in 2025. Google runs a VRP, a bug bounty program, and this is a visual of the payouts from about five or so years ago. As you can see, half of these are

web issues. Makes sense. Google has one or two web apps. You may have used them before. And you can see in the diagram just how many of these individual vulnerabilities were some form of cross-site scripting. So this is really a massive problem for us, and we were inspired to develop techniques to remediate these at scale. Before we get into the mitigations, let's briefly recap what XSS really is. The web is dynamic by design. You want your websites to be interactive and react to your users. What is dangerous is that amongst all of these moving pieces, data and code can get mixed up with more powerful APIs, and

it's really not clear sometimes what is data and what is code, and this confusion leads to injection attacks. This could lead to a user having more control over the site than they really should. In this particular example, there is a bit of string interpolation we want to do in the markup. We assume it's a bit of text and that it's safe, but it could very well be a bit of malicious markup that executes JavaScript. And if this is user-controlled, we have no guarantee that only safe inputs will make it through to the interpolation. But why is this kind of injection dangerous? Well, whenever

there's an XSS, an attacker can essentially run code in the context of a user's session. This could be anything from stealing credentials to installing malicious loggers. We really call this the client-side, browser version of RCE, remote code execution, because they share a similar concept: anything that the victim was authorized to do, the attacker now can as well. Usually when we do proofs of concept for this, we pop an alert, and this is going to show up in some of the code examples as we go along. So now that we've talked about the theory, what does this

look like in a bit of code? This is an example of DOM XSS in the code snippet. See that we take the variable foo right out of the address bar, and then later on in the code we insert it into the DOM. Because anything can be typed into the address bar, it could inject whatever the attacker wants. Now we might say that no one really codes like this anymore, right? Especially with modern frameworks that isolate components and give you nicer APIs for dealing with refreshing the DOM. But obviously assumptions get broken. Just because a framework can abstract away the complex DOM operations doesn't

mean that it can prevent you from using unsafe DOM APIs directly. And these issues are really easy to introduce. It really takes a developer one bad day, or one reviewer missing a line in the review, and once it's introduced it's really hard to remove systematically from your codebase. So do we stand a chance? We think we do. So let's talk about the philosophies of removing entire classes of web injection vulnerabilities at scale. As a quick plug before we begin: a lot of these concepts cover more than what we have time for today. So here's a blog post written by someone else on our team. It is on the Google security

engineering blog. So feel free to check out this article and many others like it on that blog. Another way to think about the protections that we deploy is that they are really like pillars that support our complete defense-in-depth mechanism. They work together to uphold security in each aspect of the development life cycle. We will now talk about each of these individual parts and give examples of how we add this to our engineering process. So on to our first idea: frameworks that give you security superpowers. The big idea is that the basis of our at-scale defense against cross-site scripting comes down to relying on

frameworks that have context-aware templating, have built-in compatibility with security headers and runtime enforcement mechanisms like CSP, and are secure by default. We'll show an example of context-aware templating in a few slides, but very quickly, it means that the templating system knows whether a string is being interpolated as HTML, as part of an attribute, or as a script, and does the right escaping and sanitizing to make sure that context is not broken. And this is really a part of the safe coding philosophy at Google, and this is a term that we'll use a lot throughout this section. It's

giving developers tools that take away the uncertainty about how to use and configure these security features, and that also make these features hard to misconfigure in an insecure way. And we really want security here to not get in the way of shipping great features to our end users quickly. So we want to work with frameworks that do not work against the developer as they try to write code that is vibrant and user friendly. Hence a framework built with these security-related features in mind, with compatibility with security features like CSP, but also with a focus on API design and

emphasis on developer experience, is really key. You might say this is possible at Google because we have a lot of control over our internal frameworks, give guidance to our in-house developers on what the best practices are, and can control that culture tightly. But what if you aren't building web apps inside Google? How can you get some of these benefits? Thankfully, our colleagues have worked hard to ensure compatibility with these powerful security features in some other frameworks as well. For instance, all the work that we did in-house for Angular and Lit is also available open source, so that the open source ecosystem can benefit

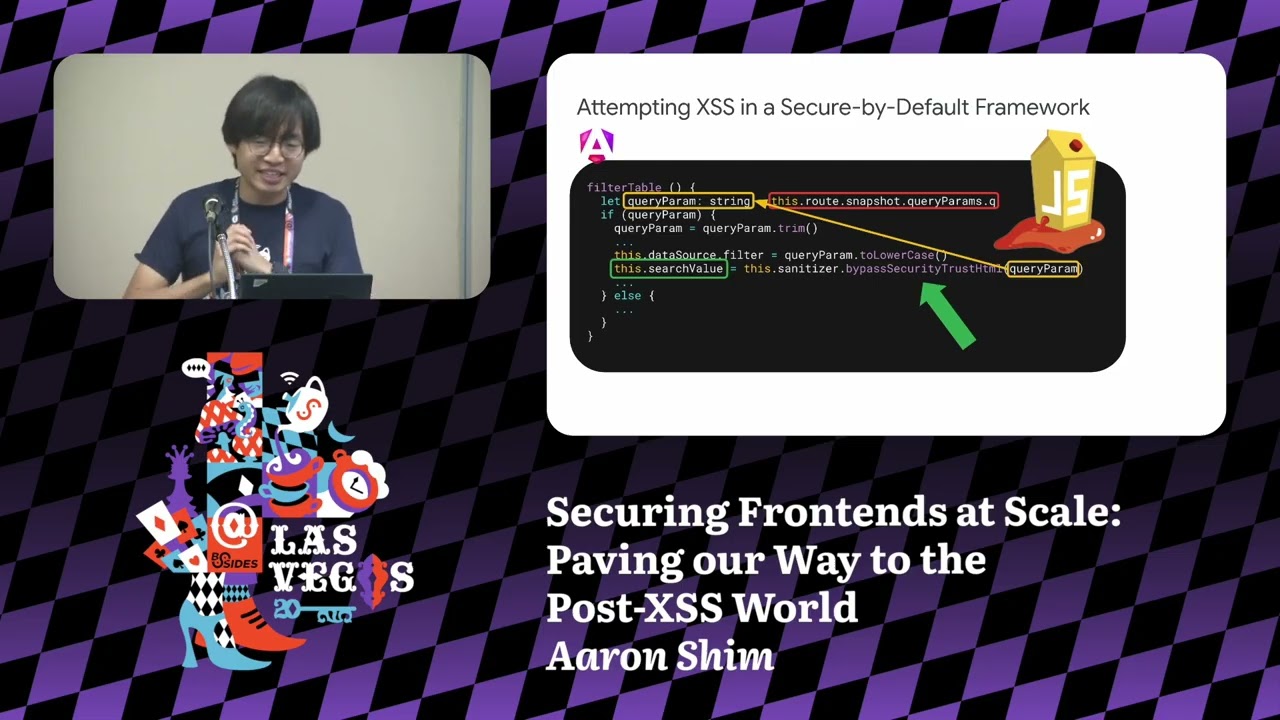

from the work that we've done there. But our contributions aren't only related to Google-sponsored frameworks. Our friends at Meta have also worked hard to make React compliant with their web security strategy, so it has a lot of goodness baked in, and we've also submitted PRs to some React-based frameworks like Next.js to work well with some of these philosophies. So here is a concrete example of some secure-by-default features at work in one of the frameworks that we just talked about. The code example here is from OWASP Juice Shop, an intentionally vulnerable web app designed for training and education, written using a modern web stack and modern

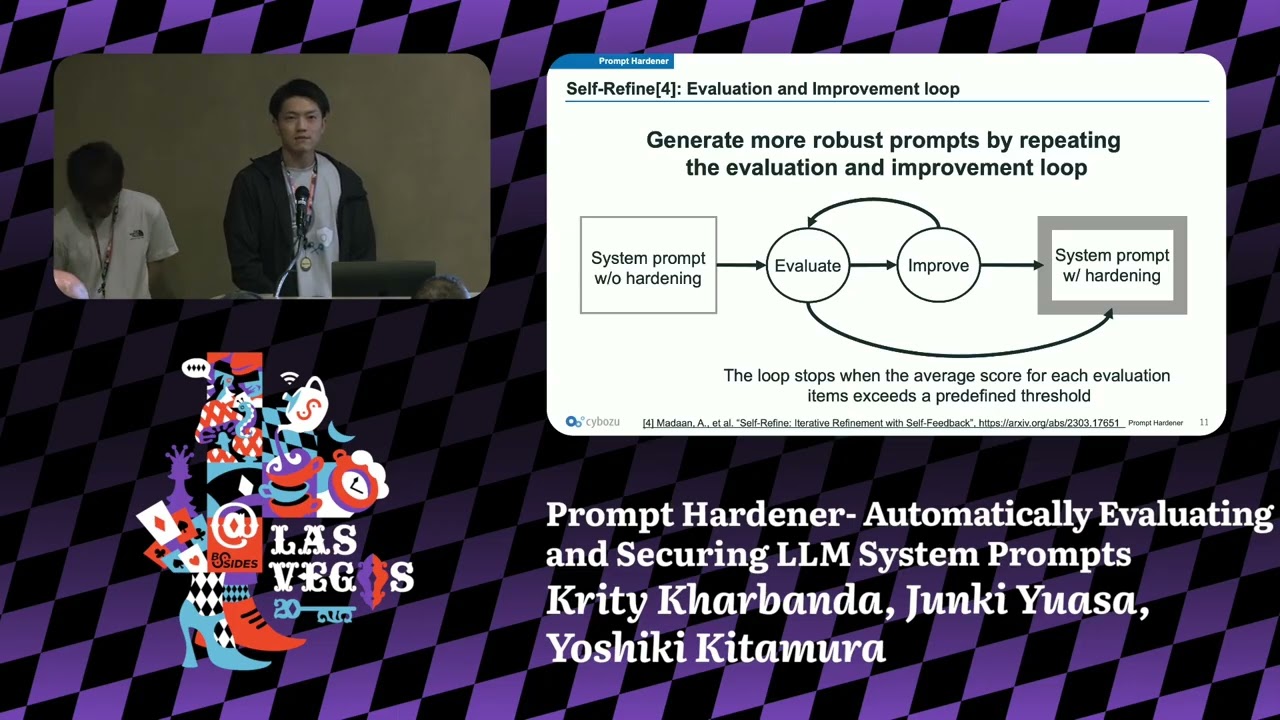

frameworks. We'll just note that we are using Angular here because of the availability of the code snippet, but the philosophies described here are not framework- or company-specific. We really hope to see more of these propagate throughout the ecosystem. So this particular component is a part of the Angular templating for the piece of the page that is going to pop the XSS. Here we see that there is something bound to the innerHTML property inside that node, and it is a property, searchValue, that we're going to poke around and find in the controller to see where it comes from. And let's keep in mind

that whatever is bound to innerHTML here will be treated as markup. So it'll be inserted into the page, and it can run as code. In the controller we can see that the searchValue property is being set, and it depends on this variable called queryParam, which depends on the URL of the page. So there is a user-controlled string that is being interpolated into the DOM through innerHTML in this bit. But do you also notice this lengthy function called bypassSecurityTrustHtml? This really unwieldy name should give us a clue that something out of the ordinary is happening, and this is

the core of how Angular's API design works. It's telling us that we are bypassing security with an input that is clearly user-controlled. This is supposed to make you feel a bit uncomfortable, and it's supposed to make you check this bit of code a little more carefully. By contrast, what if we didn't have that very bulky function in the way? What if we just set this user-controlled string directly to the property? Then Angular's auto-sanitizer behavior kicks in. Since we know from the template that this is being bound to the innerHTML property, this needs to

be treated as a bit of markup. So Angular will apply the default HTML sanitizer to the value before putting it in the page. So the XSS will not happen if the code is written this way. And this is really the core of the secure-by-default framework strategy: doing the secure thing is easy; making the mistake is hard and uncomfortable. As you can see here, you can read more about the best practices in the official Angular documentation, which goes into a bit more depth than what we're covering here. We also want to stress again that while we use Angular as an example, since this was a code example we had, none of these

philosophies are locked down to the framework, and we'd really like to see more frameworks adopt similar approaches in the future. Great. So now we will move on to our next idea. We have the building blocks, the frameworks, secured. We want to shift even more left and think about the application code that is being written by our developers in-house and how to enforce some best practices there. At Google, we have a monorepo where we keep all of these different web apps, and in this monorepo, we have all of our JavaScript pass through what we call a compilation pipeline.
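Before going further into the pipeline, the secure-by-default pattern from the Angular example can be sketched without any framework. This is a minimal illustration and not Angular's implementation: the names `BypassedHtml`, `unsafeBypassSecurityTrustHtml`, `escapeHtml`, and `html` are all hypothetical, and the sketch only escapes for an HTML text context, whereas a real context-aware template system also handles attributes, URLs, and scripts, and sanitizes rather than escapes when markup is expected.

```typescript
// Hypothetical, framework-free sketch of "secure by default" templating.
// None of these names come from Angular or any other real library.

/** Markup that a developer has explicitly vouched for. */
class BypassedHtml {
  readonly value: string;
  constructor(value: string) {
    this.value = value;
  }
}

/** Deliberately unwieldy name, so the opt-out stands out in code review. */
function unsafeBypassSecurityTrustHtml(markup: string): BypassedHtml {
  return new BypassedHtml(markup);
}

/** Escapes the characters that let text break out of an HTML text context. */
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

/**
 * Tagged template: the literal parts are developer-authored and kept as-is;
 * interpolated values are escaped unless they were explicitly bypassed.
 */
function html(
  literals: TemplateStringsArray,
  ...values: Array<string | BypassedHtml>
): string {
  let out = literals[0] ?? "";
  values.forEach((value, i) => {
    out += value instanceof BypassedHtml ? value.value : escapeHtml(value);
    out += literals[i + 1] ?? "";
  });
  return out;
}

// Stand-in for a value read from the URL, as in the Juice Shop example.
const queryParam = '<img src=x onerror="alert(1)">';

// The easy path is the safe path: the payload is rendered as inert text.
const safeByDefault = html`<p>Results for ${queryParam}</p>`;

// The unsafe path is possible, but it is loud and easy to grep for.
const explicitlyUnsafe = html`<p>Results for ${unsafeBypassSecurityTrustHtml(queryParam)}</p>`;
```

The design choice to notice is that the two call sites differ only by the long function name, which is exactly the thing a reviewer or a linter can look for.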

It's like a giant bundler and linter. And this allows us to insert ourselves there for static analysis, linting, and checking for best practices. At its core, what we call conformance is the idea that we want to automate, with a technical control, the checking of the things that a security engineer might call out in code review. At Google, it extends to more than just security features. It's for other properties that maybe aren't top of mind for most developers, like performance, accessibility, code health, etc. It's really a way to make best-practice enforcement sustainable at scale. There isn't really a 100% good analogy for conformance

outside of the Google monorepo, but you can get most of its effects by combining tools that are readily available. TypeScript does type checking that already corrects a lot of really silly type mistakes that we used to have in JavaScript. ESLint enforces best practices, coding standards, and certain code patterns with more AST-based analysis. You can insert any of these as a part of your CI/CD, like presubmit hooks, to make sure that you don't push code without doing some of these checks first. And we'll also talk about some of the tools that we've written on our

own, which we will introduce in the later slides, where we offer some of these benefits as open source, like this one. This is one thing you can use today. We took the most effective checks that we have in our internal linter and static analysis tooling and built them on top of ESLint, so you can run it as a part of your CI/CD, your IDE setup, etc. This project is called safety-web, and it's under active development. As you can see in the example here, it'll detect unsafe or risky APIs, like the innerHTML assignment in the code, and then call it out. If you have it as a part of

your IDE setup or CI/CD pipeline, it'll really yell at you as you're writing the code, so that while you still have the context fresh in your mind you can go refactor it and fix it. But this also raises a question: once these tools warn you about dangerous code patterns, how do we refactor them? That's our next big idea, which is: how can you shift even more left than conformance? Can you make good security decisions an effortless part of the development process itself? Our potential vulnerabilities start with code being written by developers, so we should be able to shift even closer to when the developers are writing the code. And I think

it's easier to show this philosophy in action with a code example. So let's walk through this motivating example. Here's an example application with three different sections. I know they're all on the same page, but you can imagine that they're in very disparate parts of the codebase, maybe with work done by three different teams. In this application, let's just say that we accept user input and then try to format it and display it back to the user. So we have three layers. A storage layer, where the piece of HTML that you don't trust enters the system and gets stored. We have the formatting layer that tries to do the formatting and

create new markup. And we have the actual browser layer, which runs on the client side for your users and actually executes the code. And here are the three locations that the user input passes through. It's obvious here, because it's all on the same page, that it is passing user input through. It's less obvious if these three lines are very far apart from each other in the codebase. And obviously if we have an error message like that, that's going to pop an XSS. So let's think about how we can replace these three lines. Should you be fixing it before we store it into the storage layer? Should you try to fix it as you

format it? Should you try to make sure that nothing dangerous gets inserted into the DOM at browser runtime? Should we be doing all of them? And if these three places have work done by different teams, how do we ensure that any one of them has been treated? Here we rely on the TypeScript type checking system to offload some of that mental work for us, by creating this wrapper type called SafeHtml. We want the TypeScript type checker to check that we are only passing safe input through. And let's see how to actually correct the compiler errors that this will create, because now

we've changed some of the strings to SafeHtml. But before we do that, let's talk a little bit about this wrapper type. The important thing here is that there's really no equivalence between a string and a SafeHtml. As you can see in this example, if you want to make some markup by interpolation, there is no guarantee that interpolating a random variable like name is going to end up safe, because it could just as easily be this, and we'll pop another XSS. So of these constructors, which ones might guarantee a safe construction of new markup and which might not? If you said the first two, those are the ones that are not safe. This is because the first one

is trying to equate the string type with the SafeHtml type. And that's not good, right? We want to create a new type so that the TypeScript type checker can check for it. The second one is a little more nuanced, but it is also not great, because it's just a wrapper. We want to make sure that some transformation happens to the string, so that it becomes safe markup, before we turn it into a SafeHtml type. And that is what these two are focusing on. Great. So back to our code: now that it has the correct TypeScript type annotations, will it compile, and how do we fix it? Well, we could either fix

it there, but that might not work, because you want to sanitize rather than escape: if you just escape, then we might break the markup that we are making. Great. As you can see, we also have to use a special wrapper to make sure that the browser-side code doesn't use the raw API either, but asserts that we are receiving trusted markup before inserting it into the page. Great. So now we have one more piece to talk about, the runtime enforcement, but we are running out of time. So maybe we'll just do a very quick review of CSP. I'm sure enough of you have heard about CSP before that we can be a little

faster through it. The idea around runtime enforcement is that while these safe coding methodologies help us write safer code, at runtime you also want to block anything that may have gotten through, because JavaScript is infamously dynamic. Great. So I'm just going to fast forward through a bunch of the slides so we can get to the conclusion. But essentially, we recommend that when you set up a CSP, you use nonce-only CSPs, because compared with URL-based allowlists this is a lot more granular and less prone to injection. We also use strict-dynamic to propagate trust. And another cousin

of the nonce-only CSP is a hash-only CSP, which is great if you don't want to do the template, backend, and frontend coordination to pass the nonces around. This is also great for static single-page applications, because during your bundling process you can transform something that looks like this into an auto-hashed CSP page, with the meta tags containing the hash-based CSP. We even have libraries that do this for you. We also have Trusted Types, which is a formalization of the idea that we showed before with the safe coding example and the SafeHtml type, but in the browser, to make sure

that you don't have assignments that might break this, because there are a lot of different DOM APIs that can take strings and turn them into markup on the page. Great. So I'm now going to skip a couple of slides to the end, where we can talk a little bit about this and end the talk. We saw how these steps interact to really form a web of protections. But instead of these pillars, we can also think of it as a pipeline, where we have code flowing from the devs to the environment that is running in the users' browsers. And

at each step of the way we have a different protection to catch what hasn't been caught in the step before. Now the real insight here is about the runtime enforcement, CSP and Trusted Types: what if we lock it down even more? What if we lock down the runtime enforcement so that instead of just being the last layer to catch whatever is left over, we have a guarantee that dangerous APIs are completely turned off? And then what if we use that as a basis to ship new APIs in the web platform that will feed back into the first part of this pipeline? This is a future we hope to get to.
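For reference, the runtime enforcement described here maps onto response headers that exist today. The following is a minimal sketch, assuming a hypothetical helper named `buildStrictCsp` and a server that generates a fresh, unguessable nonce for every response; the nonce value in the usage line is a placeholder for illustration only and must never be hardcoded in practice. The directives themselves (`script-src` with a nonce and `'strict-dynamic'`, `object-src`, `base-uri`, and `require-trusted-types-for`) are standard CSP and Trusted Types syntax.

```typescript
// Sketch of a strict, nonce-based CSP combined with Trusted Types enforcement.
// buildStrictCsp is a hypothetical helper, not part of any library.

function buildStrictCsp(nonce: string): string {
  // Shape check only: 22 or more base64 characters is roughly 128 bits.
  // Generating the nonce from a secure random source is the caller's job.
  if (!/^[A-Za-z0-9+/_-]{22,}={0,2}$/.test(nonce)) {
    throw new Error("nonce should be a base64 value of at least 22 characters");
  }
  return [
    // Only scripts carrying this response's nonce may run; 'strict-dynamic'
    // lets those trusted scripts load further scripts (trust propagation).
    `script-src 'nonce-${nonce}' 'strict-dynamic'`,
    // Disable plugin content and <base> tag hijacking of relative URLs.
    `object-src 'none'`,
    `base-uri 'none'`,
    // Make injection sinks such as innerHTML reject plain strings.
    `require-trusted-types-for 'script'`,
  ].join("; ");
}

// Placeholder nonce for illustration; generate a new random one per response.
const exampleHeader = buildStrictCsp("q3f0mJ1kQ0m8Zl2nB7xT4w==");
```

The resulting string would be sent as the value of the `Content-Security-Policy` response header, with the same nonce placed in the `nonce` attribute of each script tag the server intends to allow.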

It is one where we can safely lock down access to all of these dangerous DOM APIs, along with a suite of new platform APIs to replace those operations in a safe-by-default manner. We call this approach Perfect Types, and we see it as an evolution of Trusted Types and the safe coding approach. Well, thank you. And if you're interested in this topic, please join the conversation at the W3C SWAG group, where we discuss how to further the topic of web security amongst developers. And thank you for coming to this talk. [applause]
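As a recap of the safe coding approach that Trusted Types builds on, here is a minimal sketch of the SafeHtml wrapper idea from the three-layer example earlier in the talk. It is not the API of any real library: the class shape and the names `fromText`, `template`, `formatGreeting`, and `render` are assumptions made for illustration. The sketch only escapes plain text; a real library would also provide a sanitizer for input that is meant to contain markup, which is not shown here.

```typescript
// Hypothetical sketch of a SafeHtml wrapper type; names are illustrative only.

class SafeHtml {
  // The private field makes the type nominal: a plain string, or an object
  // literal with the same shape, is not assignable to SafeHtml.
  private readonly markup: string;

  // Private constructor: only the builders below can create instances, so
  // "just wrap the string" is not available to callers.
  private constructor(markup: string) {
    this.markup = markup;
  }

  /** Transforms untrusted text so it can only ever render as text. */
  static fromText(text: string): SafeHtml {
    const escaped = text
      .replace(/&/g, "&amp;")
      .replace(/</g, "&lt;")
      .replace(/>/g, "&gt;")
      .replace(/"/g, "&quot;")
      .replace(/'/g, "&#39;");
    return new SafeHtml(escaped);
  }

  /**
   * Tagged template: literal parts are developer-authored markup, and every
   * interpolated value must already be a SafeHtml, which the compiler checks.
   */
  static template(literals: TemplateStringsArray, ...values: SafeHtml[]): SafeHtml {
    let out = literals[0] ?? "";
    values.forEach((value, i) => {
      out += value.markup + (literals[i + 1] ?? "");
    });
    return new SafeHtml(out);
  }

  toString(): string {
    return this.markup;
  }
}

/** Formatting layer: builds new markup around untrusted input. */
function formatGreeting(name: string): SafeHtml {
  return SafeHtml.template`<b>Hello, ${SafeHtml.fromText(name)}!</b>`;
}

/** Browser layer: the sink accepts SafeHtml only, so a raw string fails to compile. */
function render(target: { innerHTML: string }, html: SafeHtml): void {
  target.innerHTML = html.toString();
}

// Stand-in for a DOM element, so the sketch runs outside a browser.
const fakeElement = { innerHTML: "" };
render(fakeElement, formatGreeting("<img src=x onerror=alert(1)>"));
```

With this in place, passing a raw string to `render` is a compile-time error rather than a runtime XSS, which is the mental work the type checker takes over from the three teams.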