New Face, Who Dis? Recent Adversarial Approaches to Facial Recognition


Like most good, hopefully good, facial recognition talks, this one starts with naval warfare. This is how naval battles used to be fought: two fleets across from each other in two lines, attacking each other at near point-blank range until one gives up or sinks. In 1805, Lord Admiral Nelson changed this with a new naval warfare tactic that basically just consisted of going straight at the enemy, as you can see here. What do you think happens when you take one huge fleet of ships and fling them directly at another huge fleet of ships? Yeah, that's right: a hot mess. Friend and foe mixed together, close range, fog of war, really hard to identify who is who, identify friend and

foe. And Nelson knew this, and so legend has it that he painted all of his ships in this particular color scheme, now known as the Nelson Chequer. As an authentication scheme this was nearly perfect, at least in the first battle. Why? Because it was accurate: only his ships were painted in this scheme. And it was easy to use: at a single glance every ship, every gun crew, every individual sailor could look up, say friend or foe, and take immediate action. As you might imagine, it led him to actually hoist his message to his fleet, and instead of giving directions, you should go there, go here, as you normally would, he just lifted a message to his

entire fleet and said, look, do your job, take responsibility, I've enabled you, take action. After that first battle, however, which was a success even though he died (we'll gloss over that part), this scheme faltered a bit. Why? For the same reasons: it suffered problems with accuracy and ease of use. Accuracy, because people aren't stupid: the other fleet immediately started painting themselves to mimic Nelson's fleet. And then, secondarily, ease of use: it doesn't take a genius but about 10 minutes to realize, oh look, all the enemy ships are painted a particular way, and they could use the same scheme as well. Facial recognition has the same give and take. When it works well, and by that I mean

more of a one-to-one scenario (classic example: think of facial recognition on your mobile device, where it's comparing against the image that you voluntarily recorded in a secure enclave), it's pretty great, right? It's accurate and it's easy to use. It identifies you, gives you some strong auth, and you're going to look at your phone anyway; you're not going out of your way to do anything. But just like Nelson's scheme, it ran into problems as soon as the ball game changed a bit, when it went from one-to-one to one-to-n. Now, instead of comparing single photos, there would be a still from a video or from a surveillance camera or some such, and it'd be compared

to a database, and that would return a top-n number of suspects, or possible candidates if you will. And just like Nelson, it ran into issues as soon as it started using this scheme: issues with accuracy and ease of use. First, accuracy. In 2018, Dr. Joy Buolamwini at MIT realized facial recognition systems could not identify her own face because she was a dark-skinned female. That led to lots of research by her Algorithmic Justice League and documentation of false arrests. Three or four men in Detroit were falsely arrested based off of video facial recognition systems, because these systems were being sold to law enforcement agencies to identify suspects, leading to

potential false arrests. The work of the Algorithmic Justice League and adversarial research like Dr. Buolamwini's improved the bias scores and the bias issues at some of these major enterprises, and some enterprises, like IBM, decided to leave the technology altogether. But it wasn't just bias and accuracy; it was also ease of use that presented a challenge. Think of all the photos of you or people you love out on social media. Pretty soon companies like Clearview AI and PimEyes started farming all of these photographs, building a massive database, and then in turn selling it to law enforcement agencies or neighborhood associations. Or, on PimEyes, you could go out and search for yourself, or for people you know, right now

and see where they've been on the internet and in the real world. The database was 20 billion for Clearview, and this is like two or three years old; it's much larger now. So the issue is that it was too easy to use, in a way: photos that were uploaded for personal or private use were now being weaponized against the very people that uploaded them. So, accuracy and ease of use. We saw examples of enterprises realizing the danger, trying to address bias and taking care of some of their facial and biometric data, and we've seen the government try to step in: in Illinois with BIPA, and GDPR, and the Artificial

Intelligence Act in Europe, which was just trying to limit sharing of facial data between nation states in Europe. But we can't rely just on enterprises and the government to help people protect their privacy. We need to enable individuals to play an active role, because privacy is a team sport. Adversarial research has been moving from addressing bias, like I talked about before, to trying to deal with that ease of use, that universal access to photographic data online, basically asking: how can we give people tools to protect themselves that can be used in their normal flow? I'll cover five or six different approaches that have emerged since 2001, most of them in the last year, and we're

going to start with one that I ran into in 2019. I wrote a Spartacus app that tried to protect the privacy of your social media accounts, at DEF CON and Black Hat 2019, and as part of that I needed a fake identity. So I made one wholesale, and I needed a photo for my fake identity to give her authenticity online. This is the photo I chose. A couple of days after I did this, my friend called me up and said, Mike, you can't use this woman. I was like, why not? She was open stock photography, you know, she's public domain. He said, have you done a reverse image search on her? And I said, no. How many sites is she

on? Ten thousand? Four thousand? No: 25 billion hits. She was everywhere. She was a mystery novel writer in Des Moines, she was a lawmaker in DC, she was a diplomat in Amsterdam, she was a sex worker in Vegas. She was everywhere and she was nowhere at the same time. So one potential approach, if you chose to take it, to protect your facial data would be to put yourself into every stock photography collection you can find. I'm not saying it's recommended, but like her, you would be everywhere and nowhere. Onward to academia and the more realistic approaches. In 2001, researchers started to experiment with pixel modification, basically finding the gradient in changes in images and facial

data, inserting geometric patterns or even single pixels in key areas, throwing off facial recognition systems. Facial recognition systems caught on very quickly, as the cat and mouse game goes on, but it was an interesting start. As we go through these (and these slides will be available later), I'm categorizing each approach in five areas. For example, this pixel modification: is it fast? Sure, inserting a pixel is pretty darn quick. Is it on mobile? It was not at the time, in 2001. Is it dynamic, meaning is it per image? That checks that box as well. Is it transparent, in other words, have I modified this photo so much that I

wouldn't want to share it with family and friends and my own mother? No, it's pretty transparent. And then protective: there are two phases for facial recognition, face detection and face matching, or classification, and this one basically messes up the ability of the system to detect faces. So far so good; we'll build up this graph. Next up is generative adversarial networks. Most people have seen this on the thispersondoesnotexist.com website; that's the technology it's using behind the scenes. It keeps your high-level attributes and modifies things so it doesn't quite look like you. The issue here is that it's basically your avatar, it's not you, but a lot of people would use it one

place, everywhere, and it's fairly expensive. It has come to mobile: everyone who's used Toonify or anything else, the recent app, I can't remember the name of it at this point, FaceApp, there are others that made your own little avatar that everybody used. Same kind of thing here. Is it fast? It can be, but in the past it's not been when it's done to photorealism standards. It's not dynamic, because it's just this one image that you reuse. Transparent? Certainly not, because again, it's not you really at all. And then really it's still identifiable as a face; it's just not recognizable as you if you put it out there. 2020: now we're going to start to get

interesting. There's a paper and a demonstration Android app, a little wonky, but you can still get it to run on today's devices, called Camera Adversaria. What this does is take your default image and place procedural noise on top of it, a fancy word for random noise laid over the top of the image, lightening and darkening it pixel by pixel. The same kind of technique is used to create landscapes for video games. It's adjustable. You can see that originally the system identified it as a screwdriver; once the noise was created and injected, it identified it as a quill, which isn't that impressive until you see it in real life. Obviously, far left, this is not my face,

this is me. It's identifying that it's a suit, right, not a face, but we'll just use that as a placeholder. And then as I increase it, because you can increase the protection, I've been identified as wearing chain mail, possibly corn, and my personal favorite: I like to think it's that I'm wearing a turtle, but maybe you thought it was a turtle. Either way, as you can see, it becomes less usable over time. Still fascinating, and it's kind of nice because it's super fast; you're just laying noise over the top. It's already in a mobile format, so easily corruptable, open source by the way. Is it dynamic? Yeah, you can do it picture by picture; you can generate new

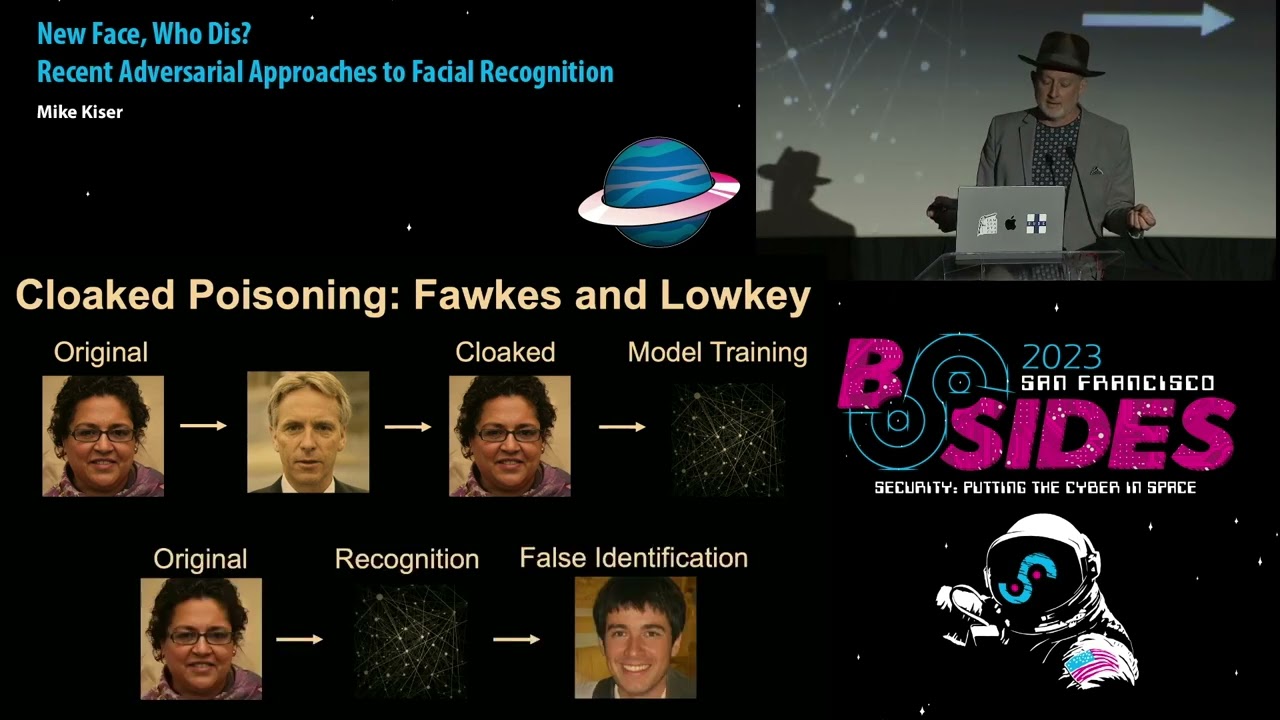

noise for every photo. Is it transparent? Sort of. I put yes: if you don't dial it way up and make me look like a turtle, then you're probably in business. And again, it's that detection, is there a face, is there not a face, not so much the face-to-face matching technique. Now we're into the more sophisticated stuff. I call this cloaked poisoning because it's easier to understand if I use that phrasing. There were two academic papers that came out at about the same time in 2020, Fawkes and LowKey, at different academic institutions. The idea here is you would take your original photo, take a stock of photos, and pick the one that doesn't

look like you, mathematically, and then it would combine the feature sets so that your original photo was modified slightly: hopefully enough to thwart facial recognition, but not so much that it didn't look like you. Then it would be put out into the wild, the idea being that these commercial systems would pick it up, enter it into their database, and be poisoned by their own training data. As a result, when the original, say a video or whatever else, came back out, it would do recognition on you and it would still identify your face, but it would misidentify who you were. It's starting to sound a little more like the ideal we're looking for.
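
To make the cloaking idea concrete, here is a minimal Python sketch. It is not the Fawkes or LowKey implementation. It assumes a toy linear feature extractor `W` standing in for a face-embedding network, flat lists of floats standing in for images, and a simple per-pixel bound `eps` standing in for the perceptual budget those tools use. The names `features` and `cloak` are made up for illustration. The loop nudges the photo's features toward a dissimilar target while never changing any pixel by more than `eps`.

```python
def features(W, x):
    """Toy feature extractor: a linear map (assumption; real systems use deep nets)."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def cloak(x, target, W, eps=0.05, steps=100, lr=0.1):
    """Shift x's features toward target's features with a bounded perturbation.

    x, target: flat lists of pixel values; W: list of rows (feature weights).
    Every pixel of the result stays within eps of the original pixel.
    """
    target_feat = features(W, target)
    delta = [0.0] * len(x)
    for _ in range(steps):
        cloaked = [xi + di for xi, di in zip(x, delta)]
        diff = [f - t for f, t in zip(features(W, cloaked), target_feat)]
        # Gradient of the squared feature distance w.r.t. delta: 2 * W^T * diff
        grad = [2 * sum(W[k][j] * diff[k] for k in range(len(W)))
                for j in range(len(x))]
        # Gradient step, then project back into the [-eps, eps] box
        delta = [max(-eps, min(eps, d - lr * g)) for d, g in zip(delta, grad)]
    return [xi + di for xi, di in zip(x, delta)]


if __name__ == "__main__":
    W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
    me = [0.2, 0.8, 0.5]
    someone_else = [0.9, 0.1, 0.5]
    print(cloak(me, someone_else, W))
```

The `eps` knob plays the role of the protection dial: a larger budget moves the features further from you but leaves more visible artifacts. The sketch does not clamp pixels to a valid range, which a real tool would need to do.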

A couple of things. This is the more-you-know piece, the graphic from the academic paper. They used a loss function called the structural dissimilarity index (DSSIM). Basically it's trying to minimize how well a commercial system can recognize you while keeping the image maximally usable, something you would show other people. It was a Mac and Windows application, it was open source, and they have it for M1 Mac silicon at this point. It's pretty great, but to run it on a couple of images took on the order of two to five minutes. So if our goal is to give people the same experience as Face ID: how do you take pictures? Do you take a

picture and then wait five minutes before you upload it? Certainly not. LowKey is very similar, with a different loss function. This one's Learned Perceptual Image Patch Similarity, which is why you see them abbreviate it to LPIPS, because that's just fun. It comprehends the entire pipeline, which is slightly different than Fawkes. Fawkes was doing mainly just classification; this one, the way the math works out, takes in both detection and classification. Think about this one, though: it's a hosted web service, it's not open source. You submit your pictures to a web service and then they email you back the results, so there's something there. What does it look like?

Again using this person as a sample: far left, you can see that my original photo is there, in the same park, same outfit, conveniently. As we dial up the protection, I start to see some artifacts, but by the time you get to the high protection over here, it kind of starts to look like someone has beaten me about the head and shoulders with a lead pipe. If I put this out on social media, I'm going to get a phone call from my mother within about seven minutes asking me if I'm okay. So there's a balance to be had here. Effectiveness, which we haven't talked about as much, is also important, because one of the

things you want to know is, if I'm applying these protections, how much am I really protected, at least in the moment? You can see the Fawkes protection scheme right there. As reported by the authors themselves, they tested against the commercially available systems. They did really well against Azure until they didn't: in January of 2021, Azure did something, they're not really sure what, that improved how Azure performed against their protection system. So they went back and rewrote some algorithms and got a lot of that protection back. LowKey: I like their reporting better, because they report against the top-n results. Remember, I said the name of the game is to not be in that top five,

top ten, top 50 results. That's what's going on here. You'll also note that LowKey is throwing a little bit of shade on Fawkes here, saying that, well, they did an okay job, but LowKey does a lot better. If you're thinking about these approaches: they're not fast. It's really hard to tell how long LowKey takes, but the email doesn't come back in a 30-second time frame. They're not on mobile. They are dynamic; they're picture by picture. They're transparent for the most part, as long as you don't turn the protection up too high. And depending on which one, they're protective of both phases or just classification. Both of these had a lot of publicity: New York Times, Wired,

all that kind of stuff, back in 2020. There's one last research paper of note, and this was called One Person, One Mask. It's come out in this last year or so. This is actually pretty fascinating. It's using that procedural noise concept, except running some training on it so that it's customized for your face. So it's a procedural noise that shifts the feature set away from you specifically, and then you would just apply that mask to any photo after that. Without any training or poisoning or anything else of these commercial systems, the idea is it would misidentify you. This is more of a class-wise protection, and you can see

them call that out. In other words, instead of image-by-image protection, this is just a set of noise you would put over any picture that you put out there. Advantages and disadvantages, as always. If you're being pursued by a nation state, I wouldn't trust any of this. Your mileage may vary: if you're a normal-ish person, you're going to get some routine 33 to 66 percent, depending on the day and the picture and these types of things. The cool part about this approach is it starts to use PyTorch, which means you can actually do it quickly enough, and apply this procedural noise mask quickly enough, to inject it in

front of your face in video on a frame-by-frame, near-real-time basis, because that's going to be the next step. Going back to our chart: yes, it's fast. No, it's not on mobile; I'm working on that part. It's dynamic in a way: it's class-wise, not image by image. It is transparent; it's not even half as intrusive as what we saw with Fawkes and LowKey. It's also not quite as protective, as we saw in the stats, but it does try to disturb both pathways, detection and classification. You'll note there's one more column, and you'll note that most of these don't have mobile options. In 2019 I started playing

with this stuff, and in 2020 Fawkes and LowKey came about, so I tried to incorporate those into a mobile app, kind of a proof of concept just to see what was possible. I was limited by a couple of things. There was no training for TensorFlow Lite or the PyTorch-equivalent machine learning systems; there was no training on device, or at least there wasn't at that point. With TensorFlow you can now do it on device. That meant any training, any protection generation, those types of things, would have to be done off the device. So I did what I could with the technology we had, which was two things. One was fast style transfer, which you see here at the top.

Basically you take an image, then you take a style you want to modify your image into, and then you decide how much influence you want the original content versus the style to have. Again, if you look at some of these examples, they get kind of horrific as well, and unusable. The other one, which was more interesting, was procedural noise: generating that and placing it over the top. And so this is the general flow of that open source app, which is still out on GitHub: it applies a style change to your image, puts procedural noise over the top of that, and then checks to see if it can detect a face or not. I

didn't have time to put in classification, a comparison against a known face, on the mobile device itself. But after it does that check, to say do I see a face or not, it goes back and allows you to modify your approach, more or less protection, and then saves to a dedicated album so they can upload it later on. The whole idea here is to give people agency and to fit into as much of the normal process that they go through as possible. There's a demo; I can show you some screenshots. I'll blow through this demo; it's an area demo and nobody has time for that. So, like I said, I'm not a designer, and if

you notice that. But basically, either from the camera roll or, in this case, from an image you take (oh look, it was the same day), it takes the picture. Then, after you choose to use it, it shrinks it down into a slightly smaller format; most of these approaches need a 384 by 384 image before it can scale it back up. Yeah, and this is why you don't do this part.
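
The procedural-noise step of the app flow can be sketched roughly as follows. This is an illustrative pure-Python version, not the app's code. It uses simple value noise (random values on a coarse lattice, smoothly interpolated), a simpler relative of the Perlin-style noise used for game landscapes. The function names, the grayscale list-of-rows image format, and the `strength` knob are all assumptions made for the example.

```python
import random


def value_noise(width, height, cell=8, seed=0):
    """Smooth pseudo-random field in [-1, 1].

    Random values are drawn on a coarse lattice (one per `cell` pixels)
    and bilinearly interpolated up to full resolution.
    """
    rng = random.Random(seed)
    gw, gh = width // cell + 2, height // cell + 2
    lattice = [[rng.uniform(-1.0, 1.0) for _ in range(gw)] for _ in range(gh)]
    field = []
    for y in range(height):
        gy, fy = divmod(y, cell)
        ty = fy / cell
        row = []
        for x in range(width):
            gx, fx = divmod(x, cell)
            tx = fx / cell
            top = lattice[gy][gx] * (1 - tx) + lattice[gy][gx + 1] * tx
            bottom = lattice[gy + 1][gx] * (1 - tx) + lattice[gy + 1][gx + 1] * tx
            row.append(top * (1 - ty) + bottom * ty)
        field.append(row)
    return field


def apply_noise(image, strength=0.1, cell=8, seed=0):
    """Lighten or darken each pixel of a grayscale image (rows of 0-255 ints).

    strength is the fraction of the 0-255 range the noise may shift a pixel.
    """
    height, width = len(image), len(image[0])
    noise = value_noise(width, height, cell, seed)
    amplitude = strength * 255
    return [
        [min(255, max(0, round(image[y][x] + amplitude * noise[y][x])))
         for x in range(width)]
        for y in range(height)
    ]


if __name__ == "__main__":
    flat_gray = [[128] * 32 for _ in range(32)]
    protected = apply_noise(flat_gray, strength=0.1, seed=1)
    print(protected[0][:8])
```

Using a new `seed` per photo gives the picture-by-picture behavior the chart calls dynamic, and raising `strength` is the dial that trades usability for protection.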

Okay, and then you choose the style; classic art examples here. Once you apply it, well, actually before you apply it, you can do a detect-face on the mobile app, and you can see whether or not it identifies a face and some of the features. And then after you apply it, obviously if you jack it way up it's not going to find a face at all, but you can manipulate it until it's an appropriate level of protection, and then you can save it off to a dedicated photo album on the device. So that's the general idea. So, ideally all yeses in this last column. I'm kind of hesitant to say that; I think once One Person, One Mask gets

in, I think it would be a lot more viable. You should be asking yourself at this point: is this practical? Would anybody really use this? I'd say yeah, no, yes and no. In other words, I've got lots of friends who, if you ever ask them, do you want something like this, say, you bet I do. But they're not going to go out of their way to take extra steps. And as well, the technology is only just arriving on mobile to do things like Fawkes and LowKey and One Person, One Mask. So I think it's coming, but I think it's more to raise awareness and to grant people agency, and that's what this

is all about: protecting privacy, especially of biometric information, is a team sport. Enterprises, governments, and individuals have a role to play, and it's when we all play that together that we can be like Nelson, to throw back to the beginning: we can raise our flag and say, look, everyone has a responsibility in this process. Thank you. [Applause] Questions, concerns, complaints?
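
The one-to-n search from the talk, where the goal is to stay out of the top-n candidates, can be illustrated with a small Python sketch. It assumes faces have already been reduced to embedding vectors and that similarity is measured with cosine similarity. The three-dimensional vectors and the names in the gallery are invented for the example and do not reflect any vendor's system.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_n(probe, gallery, n=5):
    """Return the n gallery identities most similar to the probe embedding."""
    ranked = sorted(gallery.items(),
                    key=lambda item: cosine(probe, item[1]),
                    reverse=True)
    return [name for name, _ in ranked[:n]]


if __name__ == "__main__":
    # Hypothetical enrolled identities and their embeddings
    gallery = {
        "alice": [1.0, 0.0, 0.0],
        "bob": [0.0, 1.0, 0.0],
        "carol": [0.9, 0.1, 0.0],
    }
    unprotected_probe = [1.0, 0.0, 0.0]   # looks like alice
    protected_probe = [0.1, 1.0, 0.0]     # features shifted away from alice
    print(top_n(unprotected_probe, gallery, n=2))
    print(top_n(protected_probe, gallery, n=2))
```

A protection scheme counts as effective in this framing when the true identity no longer appears in the returned list, which is the top-n style of reporting described for LowKey.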

The photos that we've seen so far from your slides looked very artifacty, even with the lowest settings, and I know normal people don't want to appear like that on social media; they want to look good to their friends and family. So it makes the usability of a platform like this questionable for the general public. My concern is: do you think it will ever become usable for the general public, who do want to preserve their looks while also having some privacy and agency? I think as a technology it'll be a cat and mouse kind of thing. In other words, what I've seen is they get better: the algorithms get

better, and as the technology, especially on device, gets better, I think that One Person, One Mask and those types of techniques, where it's protecting you as a class, will be better. I think it's too costly to be more refined. I agree with you: if you look really closely, you can see artifacts. It depends on what the use case is. Especially these days, with the trend toward video, that's going to be especially key, and we're not quite there yet.
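
The difference between class-wise and image-by-image protection can be shown with a short Python sketch. It assumes a per-person mask has already been trained elsewhere (the training itself is not shown), and it simply adds that same bounded mask to any photo. The function name, the `eps` bound, and the grayscale list-of-rows format are assumptions for illustration, not the One Person, One Mask code.

```python
def apply_class_mask(image, mask, eps=8):
    """Add one fixed, per-person perturbation to a grayscale image.

    image: rows of 0-255 ints; mask: rows of numbers with the same shape.
    Mask values are clamped to [-eps, eps] so the change stays subtle,
    and results are clamped to the valid 0-255 pixel range.
    """
    if len(image) != len(mask) or any(len(r) != len(m) for r, m in zip(image, mask)):
        raise ValueError("image and mask must have the same dimensions")
    return [
        [min(255, max(0, px + max(-eps, min(eps, d)))) for px, d in zip(row, mrow)]
        for row, mrow in zip(image, mask)
    ]


if __name__ == "__main__":
    # Hypothetical pre-trained mask for one person
    my_mask = [[10, -10], [3, 0]]
    photo_a = [[100, 100], [100, 100]]
    photo_b = [[250, 5], [0, 255]]
    # The same mask is reused for every photo (or every video frame)
    print(apply_class_mask(photo_a, my_mask))
    print(apply_class_mask(photo_b, my_mask))
```

Because applying the mask is a single addition per pixel, with no per-image optimization, this kind of scheme is the one that could plausibly keep up with video frame by frame.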

Hi there, thank you for the talk. Are there any retroactive steps you can take if your facial data has already been scraped? Everyone in this room is in trouble. No, not particularly. What you could try to do is poison the cache of the data it has on you, but it depends on how much is already out there. Most likely you are already in a Clearview database, and you're already in the PimEyes database; I know I am. So that's an ongoing challenge right now. There's also the side effect that if I take a picture or a video and I put it up someplace

online, you saw the local park behind me: if you were good enough at open source intelligence, you would know where I live even if you didn't know me. So there are side applications besides just not being identified by law enforcement. If you're being stalked or being pursued or otherwise harassed, there may be side benefits as well to privacy in the moment, or as you upload new content. It's a great question, though.

You don't have any more time. Yeah, well, I'll be around. So thanks, y'all. Have a good day.