Response in Action: Lessons from the Digital Frontlines


Ah, thanks. Thanks, everyone. Sorry for the technical difficulties. This is why you don't change things minutes before you present. But okay, here we go. So, as I mentioned, I'm Ivan. I'm going to be talking about some of the incident response we do. But first, a bit more about myself. I work for a company called Blue Vision. I have the title of head of innovation, research and development. It's a fancy title that means nothing, really. We are a small company of about 10 people, so everyone does everything, and everyone gets a fancy title. Moving on with life. So that's not that great, but the thing I'm more involved with is

community building. Hence I'm one of the organizers for this event. I like building communities, teaching people different things, and building up skills and capabilities. Hence I'm quite involved with building CTFs and stuff like that, and with information sharing through Hex Coffee and Hexcon. So these are the things I'm interested in doing. It's not really what I do in my day job. My day job tends to be consulting, but more on the incident response and monitoring side. But in this talk I'm going to talk about one of the services that we as a company provide. So our company motto is

officially "practical cyber security". Our boss is very adamant that we don't just want to give people advice. We want to make that advice practical, something they can actually implement. But as the company started up, we came up with a far more accurate slogan for us: stumbling into success. When we started the company, we didn't plan to do any incident response. It was just something that we lucked ourselves into. We got involved with a couple of fairly large breaches. And because most of us who started the company had worked in research and also had some

consulting activities, we got involved as consultants within those sessions. So we started building up a capability where we would act as incident response managers. If a breach happens, we come in and help you manage the incident, help orchestrate things, and help make sure that everything is actually working as planned. This led one of my colleagues to refer to us as the Ghostbusters: if something goes wrong, call us and we'll try to help you from there. Hence our many, many slogans, and we'll discuss some of them today. As for why Ghostbusters, I fall far more into the stumbling-into-success camp. That's

what I feel is more accurate for our company. So in this talk I'll discuss what an incident is: when is something actually classified as an incident, as opposed to just something that is abnormal? Then I'll discuss some of the frameworks that are out there. There are multiple frameworks that can help guide your process for dealing with an incident, and depending on which one you follow, it might dictate where you put more emphasis within your process. Then I'll discuss three case studies. I made a bad judgment call this morning: I decided to swap out one of the case

studies for a new one this morning. So those slides will probably be quite error-prone, but hopefully they're interesting, and I made pretty sure that there's no sensitive information in there. We'll see how that goes. Then we'll discuss some of the common pitfalls: the common trends we see in what goes wrong during incident response. Then, for individuals who want to get into this field, I'll discuss some of the tools, what training is available, and what resources there are for you. And then, from an organization's standpoint, if you want to

build out your own IR team, what are possible tactics you could use to build up that team? And then I'll discuss some actual steps you can take, because that's a good way to end the talk: hey, now go do something. So that's basically the outline of my talk. Let's start off by clarifying terms, because that's how all presentations go: you start with a glossary and you explain words. Usually, when we talk about an incident, we know about it because of events that we monitor. An event can be anything. It can be someone logging into a system, someone switching off a

machine, someone starting up a script, someone installing new software. It's just a loggable activity: something where you can record who performed the action, what it was performed on, what the result was, and at what time it was done. Then you get alerts. An alert is not necessarily a malicious thing. It's just something that is outside the operational norms. It could be someone logging in from a place they don't usually log in from. There are many things it could be, but it's not necessarily malicious. It's just something that is outside of the norm. When you start getting into things

that start breaking your confidentiality, integrity and availability, your CIA triad, then you get more into the concept of it being an incident. We like to classify some incidents as lowercase incidents. These are smaller things that usually affect one user and are quickly correctable. And then you get the incidents where it's: cool, we need to call a war room, we need to get all the people in, get all the vendors in. Now it's time to cancel all your plans; your next couple of weeks, or hopefully only days, will be dedicated to recovering from this incident. This kind of incident usually impacts the business processes themselves, so it will have a

business impact. The lowercase incidents tend to be smaller things. An example might be someone forwarding an attachment to someone they're not supposed to. This might lead to unintended data disclosure, but if you catch it quickly enough you can correct it. Depending, obviously, on the data that got leaked, it's a correctable action that could easily be fixed with training. You don't necessarily need to deploy a full IR team and EDR systems and go all hog wild and crazy. So, when it comes to frameworks, the two most popular options for this are

either you go the NIST route or you go the SANS route. We as a company are CREST accredited to provide IR services, and as such we lean more towards NIST. NIST focuses far more heavily on policies, documentation and processes. It's more governance oriented. So corporate companies and governmental organizations, which we as a company deal with a lot, tend to prefer the NIST standards, versus SANS, which is far more practical in my opinion. It's far more about what tools you use, how you use those tools, and what capabilities they give you. Even the training is far more hands-on,

I would say. But if you look at the steps they define for dealing with an incident, for the most part there's significant overlap. Both frameworks have very similar steps. They just vary in what they focus on, and some of their terminology is similar but not exactly the same. For example, the second stage of NIST is detection and analysis, whereas in SANS it's just identification. In NIST, the analysis part might include things such as reverse engineering malware. There's a bit more in there, so that you

have better decision support when you come to the point where you do containment, eradication and recovery. You'll see that both of them have those three steps, because that's where you start taking actions. The first two steps are far more about gaining information, so that you have proper decision support: when you switch off a server, is it the right thing to do? What is the root cause of the attack? So a lot of your pre-steps are about giving you enough information to make an informed decision when you actually do your action steps. And then the one thing that's very

important, and that most people forget about in IR, is the post-incident phase. What are the lessons learned? What are the post-incident activities? What should you do after the fact? Because our company is more NIST focused, I'm going to go through each of these in a bit more detail according to the NIST version, but keep in mind it's fairly similar for SANS, just with a slightly different focus. So, the preparation phase. This is usually when you make your plans for how you intend to act on certain incidents. I say "plan" because there's a famous quote by Mike Tyson: everyone has a plan until they get punched in the face. So that's the

thing: you have a plan. It's well documented. It's exactly according to your corporate document structure. It's been approved by several auditors. Happiness. Now something actually happens, and none of that actually works, or it doesn't work as you intended, or it's too specific, or it's too vague. You oftentimes run into this type of problem, where your plan doesn't actually meet reality. But it is important to have a plan. Benjamin Franklin has the other quote on this: failing to plan is preparing to fail. So you need to plan, but you also need to be

quite cognizant that this plan might need to be tweaked at a moment's notice when the actual incident happens. From our perspective, because we provide incident response as a managed service, we oftentimes don't own the infrastructure and we don't make the plans. We do sometimes consult on plans that are being made, but usually by the time we get called, something has already gone wrong. We ask: okay, cool, what is your response policy? And the company usually hands us its response plans and the steps it normally follows. I say "usually" because oftentimes the company just says, oh, we never expected this type of attack to

happen to us, so we're not prepared for it. So, yeah. One of the most important things within these plans is clearly defined roles and responsibilities because, as the previous speaker mentioned, oftentimes in such cases you get the bystander effect. Everyone knows something happened. Everyone knows something must be done about it. But unless it's clearly defined, nothing happens. Okay, something happened on the firewall: you, you and you, go find these things. We need someone to investigate the EDR logs: you, you and you, go do those things and report back. You need clearly defined roles and responsibilities: who's going to do it, and to whom they should report those

findings. And that brings us to the point of communication channels. Most companies say, "Oh, we just use Teams as our communication channel." That works well until your Teams gets compromised. You need to have backups of backups of communication channels. We had one incident where, during the investigation, we realized that the Teams account of one of the users in our war room Teams channel had been compromised. We could never verify this for sure, but the attacker theoretically could have had direct access to the channel and could have seen the communications coming in as we were trying to stop him from progressing further within the network. So hence you

can't rely on a communication channel that might be compromised during the attack. Have alternative communication channels. Related to that, we've had cases where the entire HR portal gets taken down. So say you usually contact this guy via Teams, and you now need to get hold of him at midnight on his cell phone. Nobody has his number. You need to have that in writing somewhere that isn't tied to your infrastructure, somewhere easily accessible and easy to pull up, so that if your infrastructure goes down you can still reach the people who need to be notified. Also, usually when we

start our IR process, we try to find out from the client: cool, what security infrastructure and what security tooling do you have? As the previous speaker mentioned as well, there are hundreds of security vendors, different EDR solutions, different firewall solutions. We then try to figure out, with the mixture of solutions the client has, what we can use to narrow down what happened. What logging is available? Is that logging centralized? How can we gain access to it? Is there vendor support if we need custom scripts deployed or custom analysis done, and who's the vendor contact for those types of things? Also, as part of our retainer

service, we provide training through attack simulations. Again, you don't want your first actual attack to be the first time that you run through this process. You want to run through a couple of scenarios before an attack, so you have an idea of what to expect during one of these incidents. There's a QR code there for those who need it for the scavenger hunt. So, the next phase is the detection and analysis phase. This is usually where we look through the logs, if they are available. Oftentimes, depending on where your logs are stored, your logs might also be

encrypted due to ransomware, but we try to see what is there so that we can start fleshing out a picture of what happened during this incident. This could be, as I mentioned, SIEM logs. It could be an alert from users. Sometimes it might be someone sending in a support ticket saying, "Hi, I no longer have access to the system," or "This is acting slower than usual." These are all indicators that we can use to figure out where stuff started going wrong. We can also compare that to common indicators of compromise associated with certain threat actors. So this is where we

start gathering evidence and working out: okay, we now suspect there's an attack. What type of attack is it? Is it the only attack that's happening? Because another thing that often happens with response planning is that you assume you'll only be attacked in one way at one time. But you might have a phishing attack that leads to a ransomware attack that leads to credential disclosure. It doesn't mean you have just one attack at any given time. And then it becomes quite important to know how to prioritize. What is the thing that is most sensitive to your business? What are the crown jewels? What is the thing that will bring your organization

down? Because that is the thing that will need to be addressed first. It is important to address the other things too, but you need to already know, from your information, what would have the greatest impact. Okay. So then we get to the containment, eradication and recovery phase. There are several things to consider when deciding how to contain an infection. This might be isolating a device by taking it off the network. For forensic purposes, you'd most likely not want to switch it off. Depending on whether you want to track the attacker live as he's going through, you might not want to completely sever their internet connection, but you want to limit their

connection to the rest of your network. Several EDR systems have capabilities to sandbox a specific user: they can still access the internet and think that everything is fully working, but you limit their exposure to the rest of your network so that they can't spread or move laterally. And then eradication can be anything from patching the vulnerability to removing access accounts, anything that gets the threat actor out of your environment. This is important because, if you want to recover your data, you don't want to start recovering it while the threat actor is still in there,

because then you're just going to get breached again and you're just going to get encrypted again. And then comes the recovery process. You need a way to rebuild, in a secure environment, the environments that have been compromised beyond the point of recovery, or else just fix the issue. Hence you need to have mechanisms in place. This is where your data recovery plans come into play. And it's also important to validate these steps. We've oftentimes heard people say, "Yeah, we've got a backup plan. We haven't tested it in 10 years, but in theory it works." Or: we've got backup tapes, but we've

got nothing that can read the tapes that we've backed up on. We had one case where the client had to wait over a year to get the correct read head so they could read from the backup tapes they'd created. Yes, it's backed up, but they can't get the data off because they only ever wrote data to it. So those are the types of things you need to practice and train for. And then comes the post-incident phase. This is where you've stopped the bleeding. You've made sure that the critical servers are back online. They've been

recovered. You've made sure that they haven't been compromised again after recovery. Now you need to decide what lessons you've learned. Where did the security fail? What went wrong? Try to identify the root cause of the problem. And this is oftentimes where you get the blame game problem, where people say, "Oh, it's this guy's fault. Let's get HR involved and pin it on this guy." Once you start playing this blame game, you get far less responsive and far less accurate answers from users about what they did. Did they accidentally click the link? It's human to make mistakes. But them hiding a mistake

that they made, so that you have to spend several hours finding it while the attacker gets that much extra time in your environment, is far more costly than them just being able to own up to the mistake without fear of repercussion. Okay. Then there's also the matter that you now need to responsibly disclose the breach and how it happened. There are certain steps for this, and usually you need someone who is fairly well trained in media relations to relay what was breached without giving out too much corporate information and without causing further reputational damage to your organization. These post-incident activities usually get

overlooked, because once the bleeding stops most organizations go, "Okay, cool, you're patched up, go on." That's also the period when a lot of the security funding suddenly dries up. During a breach you've got all the funding in the world and can deploy whatever security tool you want. Once the bleeding stops, it's, "Okay, no, we think things are fine now. We're not going to deploy these extra tools." It's also important to check the measures that you deployed to stem the bleeding and stem the attack. Are they still working that way a month from now, two months from now? Are those extra security controls that

you put in place specifically for this incident actually still relevant a year from now? I'll get into that a bit later. So, I'm going to talk about three case studies: three incidents, and these are real events. As I mentioned, I'm pretty sure I removed all the identifiable information from here. We'll see. So this first one happened about two years ago, in June of 2023. It was a BlackCat ransomware attack, so ALPHV BlackCat ransomware, and there's a lovely ransom note. A fairly stock-standard type of attack. We established a timeline of the events: when they got into the

system, what new security tools were deployed. We tried to build up a timeline for the client during the investigation. So I'm going to go through some of the things that we observed during the attack. The initial alert was raised at 20 minutes to 1 on June 29th, 2023. The SOC operators informed us that an impossible travel event had been observed. I see I made a mistake here: it wasn't a failed login, it was a successful login. A specific user was logging into the company's VPN from various countries throughout the world within mere minutes or seconds of each other. This is known as

impossible travel, because however fast you want to be, you can't log in from various countries at the same time. So this was raised as an alert. The SOC team contacted the networking team, and the networking team tried to see if they could limit this while they were still figuring it out. Again, this was around 1:00 in the morning, so the response wasn't the greatest, but eventually they figured it out and saw that they needed to block this. But by that time the user already had access; they only noticed him once he logged in multiple times from impossible locations. He had already been logging into the system a couple of

hours before this alert was even raised. And they realized that the attacker was using a local VPN account, so it wasn't anything managed by their Active Directory. Eventually they figured out that this specific account belonged to a contract worker at one of their service providers. He had needed to do something quickly on the firewall, so they quickly gave him VPN access. It was meant to be a weekend task. This contractor has long since resigned from the company he did this specific patch for, and nobody ever knew that this local VPN account was there. This account also had very high

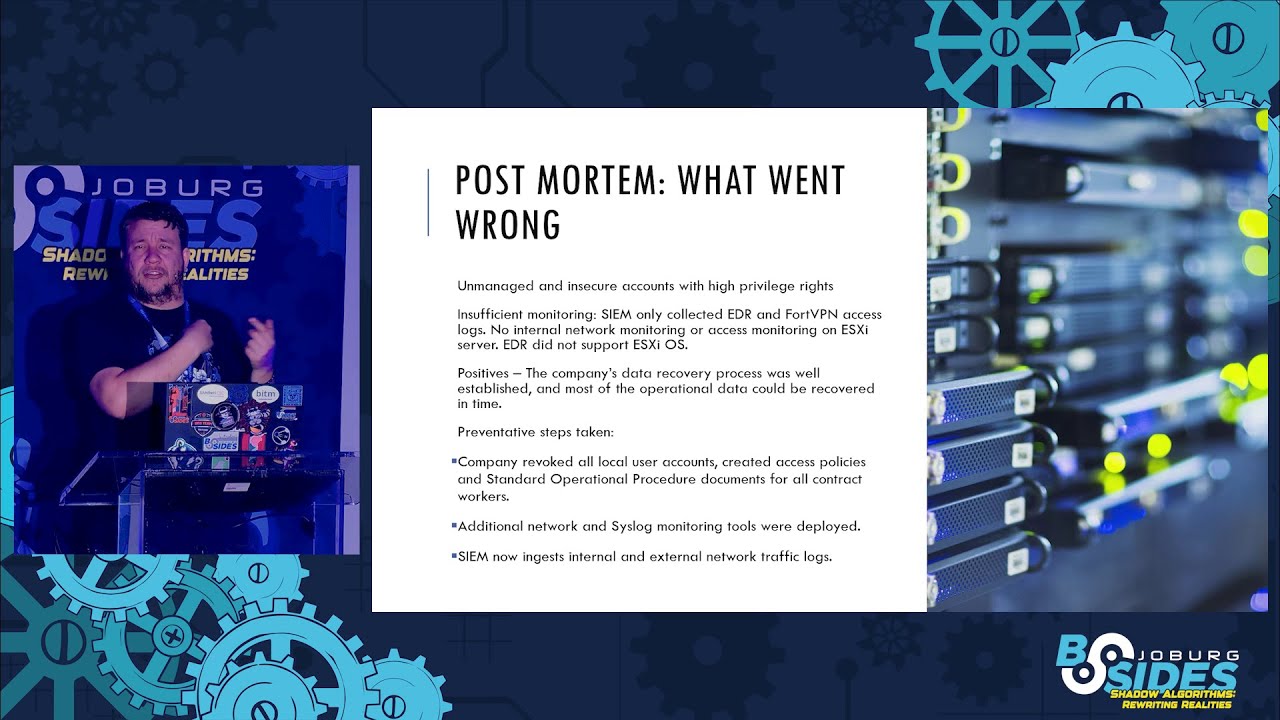

privileges, and it was never monitored and never enrolled in two-factor authentication. There were no AD logs of it, nothing. So what the attacker eventually did was use this access to snoop around the network, up to the point where they found the service provider's VMware ESXi server. This server hosted the VMs of all the critical systems, and they decided: we're just going to encrypt this. They used ALPHV BlackCat ransomware 2.0 Sphynx, so that's the specific variant. That's what they used to encrypt it, and the SOC team's first alert of this was when

suddenly they received no more telemetry from any of the EDRs or any of the systems. They had lots of monitoring on the servers, but on the ESXi server that was hosting them all they had no telemetry, so they suddenly saw their SIEM getting zero logs from anything. Then, just after 1:00, we got called, lots of fun, and we got involved. We started investigating what happened, and we realized: okay, this was an unmanaged account with very high privileges. And we realized that the client didn't have any monitoring on the ESXi host. They had EDR deployed all throughout the rest of the estate, so EDR was there,

they were monitoring things, but their specific EDR vendor was not supported by VMware. You need to do very specific things to actually collect EDR-type logs from it, or you can write your own sensor, which tends to work a bit easier, because ESXi has a proprietary OS, so there are a lot of EDRs that don't necessarily work on it. One of the good things about this company is that they were very good at data recovery. They were able to recover all the servers within two or three days. They had good backups of everything, and they had tested their backups, so everything worked quite well. The reason this

worked quite well is that they had been breached about 6 months prior. Pretty much the same type of attack, but they learned from their previous mistakes and could recover quickly. What the company then did was start a process of going through all endpoints to find any local accounts that weren't bound to Active Directory. They created a standard operating procedure document which everyone, including contractors, had to sign whenever they created new things, and they created procedures to stop anyone from creating new accounts like this. They also deployed a lot of new networking because, as I mentioned, when you have a breach, funding suddenly becomes available.
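The account sweep described here, looking for local accounts that are not bound to the directory, could be sketched roughly as follows. This is only a minimal illustration under assumptions: the `Account` record, its field names, the `audit_accounts` helper and the 90-day review threshold are all hypothetical, standing in for whatever inventory export the client's own tooling produces. It is not the procedure the client actually used.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple


@dataclass
class Account:
    """One row of a hypothetical account inventory export."""
    name: str
    device: str
    directory_managed: bool          # True if the account lives in the central directory
    mfa_enrolled: bool               # True if two-factor authentication is enforced
    privileged: bool                 # True for admin-level access
    last_reviewed: Optional[date]    # None means nobody has ever reviewed it


def audit_accounts(
    accounts: List[Account],
    today: date,
    max_review_age_days: int = 90,   # assumed threshold, tune to your own policy
) -> List[Tuple[Account, List[str]]]:
    """Return (account, reasons) pairs for every account that needs attention."""
    findings = []
    for acct in accounts:
        reasons = []
        if not acct.directory_managed:
            reasons.append("local account, not bound to the directory")
        if not acct.mfa_enrolled:
            reasons.append("no two-factor authentication")
        if acct.last_reviewed is None:
            reasons.append("never reviewed")
        elif today - acct.last_reviewed > timedelta(days=max_review_age_days):
            reasons.append("review overdue")
        if reasons:
            findings.append((acct, reasons))
    # Privileged accounts first, then the ones with the most problems.
    findings.sort(key=lambda f: (not f[0].privileged, -len(f[1])))
    return findings


if __name__ == "__main__":
    # Made-up inventory; the second entry mimics the forgotten contractor VPN account.
    inventory = [
        Account("jsmith", "laptop-01", True, True, False, date(2023, 6, 1)),
        Account("vpn-contractor", "fw-edge", False, False, True, None),
        Account("svc-backup", "srv-02", True, False, False, date(2022, 1, 1)),
    ]
    for acct, reasons in audit_accounts(inventory, today=date(2023, 7, 1)):
        print(f"{acct.name} on {acct.device}: {'; '.join(reasons)}")
```

Running a check like this on a schedule, rather than once after the breach, is what keeps a forgotten account from quietly reappearing.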

So they deployed a lot more network monitoring tools and started ingesting network traffic. Previously they only had the access logs from their Forti VPN being pushed to the SIEM. They didn't track any network activity internal to the network, anything like that. So they started monitoring additional stuff. Again, once you get a breach, you get funding. Lovely. So those were some of the corrective steps they took, and luckily, because they had such good backup processes, we could recover them fairly quickly. Or, well, they could recover themselves fairly quickly. We tend to not get involved with recovery,

someone else's problem. So that was case number one. Now we've got another ransomware case. This was an attempted ransomware attack, because it worked or didn't work depending on whether you restarted your machine, but I'll get into that now. Again, this was an alert that came into the SOC. This was in June of this year, so fairly recently. I intentionally left some of the evidence for this case vague or left it out, because some of it is still under investigation. Anyway, users noticed that they were receiving a ransomware note on their desktop, but their machine was still accessible. The machine

wasn't encrypted. They couldn't understand why, but they had a ransomware note. So a lot of users just ignored it. But eventually someone said, "Hey, SOC, something's probably wrong here. Why are we getting a ransom note when we can still access things?" Eventually, through the log analysis, they could figure out that there was a suspicious WMIC process copying this ransomware note, and that the process was initiated by the DC01 server. So that's the domain controller pushing out ransomware notes, which is not something a domain controller is usually known for doing. Okay, this was the... yeah, there are no IPs on here. That

is okay. This is fine. This is fine. So we were eventually able to get some of the logs, because again this company had EDR and a lot of tools, and we could actually see the exact attack pattern. We could see the commands that were run. Again, I removed a couple of these because they point directly to who it was that got breached. But basically what it did was, again via WMIC, disable a bunch of notifications and block some of the EDR tooling, and anyone who got infected then started pushing out this ransomware note, the readme.txt that you see at the bottom. And after it did

all of that, it would, well, basically just install BitLocker. In one of the previous steps it would get the BitLocker key, so the attacker would know what the key is and you wouldn't. So basically they did you a favor: they installed BitLocker for you. They just didn't tell you what the key is. So that's what they did. That was lots of fun. So now it's: how did this happen? I mean, we've got EDR installed. There are things monitoring. How did DC01 start doing this? How did DC01 get infected? Why did the EDR not pick it up? The

specific client had network traffic monitoring, but it wasn't logged. So we needed to investigate and try to piece together all these different disjointed breadcrumbs. So this is what went wrong. The client was in the process of changing out one EDR vendor for another, and they had no clear record of which endpoints had EDR installed and which ones didn't. Also, this client works within the mining sector. So for a lot of their systems, they would have a laptop that was there for observation purposes and locked in a cabinet for 6 months. So it's not uncommon for the EDR management system to not see it

checking in for 6 or 7 months. There was no monitoring of this, and there was no collaboration between the two EDR vendors because, obviously, they're competitors. It wasn't "we've disabled this EDR, you can now install that EDR". It was sort of a hard push: we now remove one, we now install one. We also noticed that the company was in the process of enforcing BitLocker on all servers, so it was a known policy that they wanted to roll out. And previously one of the SOC analysts saw the script and said, "Oh, no, look, the company is rolling this out," and whitelisted it. They said, "Oh, cool. You're doing BitLocker. We expect you to do BitLocker."

It would be weird for the company to do BitLocker installs in the middle of the night, but the SOC analyst went, "Yep, they said they're doing this. We saw it coming. Happened." Okay. So this was one of the many problems. And then one of the big problems we had at that point was that we had very limited data, because not all the systems had EDR. We could partially piece together how the individual moved through the network because we would see secondary logs from other EDR agents. If devices that didn't have EDR installed were used to scan devices that did have EDR, we could trace back via

those logs and try to piece together how it all came together. The problem is that the initial machine, the one we found to be the main culprit, was stuck at the bottom of a mine shaft, and by the time we got to it, it had BitLocker on. Again, this happened around the 10th of June, last month, and during that long weekend, the weekend of the 16th, all the mine workers went home. So we had to wait till Monday for someone to be willing to go down the shaft to actually get this laptop. And obviously by this time patient zero was no more. And we now start with the

problem: cool, we found the earliest known victim. We don't know if it's patient zero, but this is as far back as we have any evidence of someone attacking this specific endpoint. It hasn't connected to the EDR management system since approximately November last year. So we had limited data. Currently that specific laptop is going in for forensics, and there's a bunch of other legal processes around that, so I'm not going to go into that right now. I'm just going to talk about what we've managed to determine so far. From some of the firewall logs that we were able to obtain, we realized: oh, the attacker, they

connected in via VPN, again using unmanaged local admin credentials. There was no two-factor authentication on those accounts. Nobody knew about them. They exploited weaknesses in some of the unpatched workstations, because a lot of these workstations don't connect to the internet often, so they are several patch versions behind. We could see from how the attack spread that they would target specific vulnerabilities in those boxes, and because they weren't patched, because they weren't connected to the internet fairly often, that's how the lateral movement occurred. And we realized that from that specific laptop that was down in the mine shaft, that's where they were able to get the

DC admin access, and from there they could get to DC01, and from there they could spread this [unclear]. But plot twist: case study number one and case study number two are the same company. So again, if you remember, the first time they also had the problem with the VPN with unmanaged accounts. So they had corrective controls that were deployed after the first case, the first time it happened. But after two years of "okay, things are fine now, we can go on as we are", it just got recompromised. A completely different attack pattern, completely different targets: they were no longer targeting the ESXi server specifically, and it wasn't a specific ransomware strain, but

they got breached in pretty much the same way two years later. This is the one I added this morning because I thought it was just funny, let's add this one in. So yeah, that shows you it's good to have the post-incident actions, but then you must actually make sure that those actions are maintained and that they are actually observed. One of the things we also noticed is that we started getting in the firewall logs after the first incident, but the vendor changed the way that they log; basically the data structure of their logs changed, and that broke a lot of the

analysis, or rather the analysis team's queries. The analysis team never picked that up. So we could never see that lateral movement, because the queries were still running off outdated data structures, data that we weren't getting in. We were still getting in data, so all the things that checked "are we getting data in from the data sources" were still working. We were just not getting the right data in, which meant our queries weren't working. And we had a false sense of security. Well, not we, but the people that were running this. They had a false sense of security about what their exposures are. So okay, I'll also quickly discuss the third case study. So this one

is not the same as the first two. It's not always the same company, but this is specifically related to mass phishing. So this is also something that happened slightly earlier in the year. I think the first instance we saw of this was the 3rd of June. And this was the most obvious of obvious phishing campaigns in the world. So they got a very suspicious email. I'm not going to put the text here because there's a lot of identifiers in the actual email. But then they got told, "Oh, click here. Your company has very important details to share with you." And they get shown this very basic

document, uh, document information for telco, for example. So this would target multiple companies. So they would claim to be from, they were mostly South African companies. But yeah, if you click on it, you got given a lovely goofish link. It is not even trying to hide it. It is as obvious as humanly possible. But the way that they actually managed to spread this is that with the first person that clicked on it, they would then log into that person's email account after the person put in their credentials, and they would create an email forwarding rule for all their business contacts and all the contacts in the address book. So the next person, they would receive a

seemingly legitimate email from someone that they most likely contacted last, because if they're in your address book, you probably know them. It passes through all the security checks, because it's coming from someone that passes all their DMARC and all their little security checks, because it's coming from a legitimate email account and it's someone that you've communicated with before. So it's not a new communication channel, because some security tools might warn you: okay, you've never contacted this guy, why are you contacting him now? So yeah, this is how it sort of spread. In this specific case, for one of the people

that clicked on it, we saw that they attempted to send it to over 3,000 people in their address book. Luckily, Microsoft is not completely useless. It stopped it after 400. Microsoft doesn't allow you to send so many spam emails at once. After 400, it sort of goes, well, maybe we'll wait. So that was at least some good news. So I say the first instance we saw of this was about the 3rd of June, but throughout the start of June we saw about three or four different companies all contacting us about this specific thing. So multiple companies got hit by this. Multiple companies at different

seniority levels basically got hit, and we saw a couple of these. Now again, I'm not going into too much detail, because it's a fairly recent, fairly active thing. And what this led to is that people now sort of get fatigued, because we're getting so many alerts that you don't know if it's real. You don't know if it's active. The tier one guys were so afraid of missing anything, because the one company got breached 57 times, the same company, over a span of about 9 days. So the tier one analysts got to the point where if they see any email forwarding rule, they'll

immediately flag an alert and say, listen. And at some point everyone just started getting this fatigue of, like, you've cried wolf too many times, nobody wants to respond to it anymore. And as one of the previous talks also mentioned, this is where you start ignoring potentially really serious attacks because you are so overwhelmed with very basic things. One of the alerts we got, I think on Monday, was literally a person who set a mail forwarding rule for their own internet provider, but the person that did that for them, their surname has the word "pay" in it. So, because there's an email rule that was set up and the word "pay" is in it. It

was just so... So this sort of highlights where you need to customize your rules. You need to make sure that your rules aren't just flagging on anything. And like I said, the tier one analysts, it's not completely their fault. They got so afraid that they might miss something that is actually critical that they would rather just forward it to us. So I'm running short. Ah, okay. So I'm going to speed-run through some of the stuff. So common pitfalls that occur: lack of logging. What we saw with the ESXi server is they weren't logging the ESXi server. They

were logging all the other endpoints through EDR, but they weren't actually properly logging what was happening on the ESXi. So they only realized when all the other servers got taken down. Communication issues: as I mentioned, if people don't get assigned specific roles, they might not actually action it. It becomes the bystander effect. Another issue is people become over-reliant on tools. People were so used to "oh, no, the EDR will pick this up, or the networking tool." They never check: does the EDR do what it's supposed to do? Is there anything whitelisted on the EDR that shouldn't be? Another common thing is that there's no practicing of the IR

plan. Oftentimes the IR plan is seen as an auditing exercise, but it never actually gets followed through on. So if you're an individual and you want to get involved with this type of stuff, how I got started is I like playing with command-line tools. I got used to log analysis through grep and awk and sed and wc, and there are so many useful basic utilities in Linux that you can just use for these types of things and that can teach you. If you're more of a Windows person, which I'm clearly not, you can use some of the security event logging tools. But TryHackMe, they have some very awesome new tools that they've

released in the last month or so. They've got a tool for SOC simulation, so if you want to get into the SOC environment, if you want to do tabletop exercises, the CSR SOC simulator is fairly good for that. If you want to know how an EDR works but you can't afford your own EDR, there's a couple of free options available. My first EDR that I really got used to is Velociraptor. It's not a full-fledged EDR, but you can do the basics with it. It can teach you the basic principles of incident response. Yeah. Okay. Unfortunately, I ran out of time. Sorry. Uh yeah. Any questions?
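For anyone who wants to try the kind of basic log analysis mentioned above without a shell pipeline, here is a rough Python equivalent of a grep, awk, sort, and count chain. It is only a sketch: it assumes OpenSSH-style "Failed password" lines, the sample log lines and addresses are made up, and the function name is hypothetical.

```python
import re
from collections import Counter

# Matches lines such as:
#   "Failed password for root from 203.0.113.5 port 4242 ssh2"
#   "Failed password for invalid user admin from 203.0.113.5 port 4243 ssh2"
FAILED_LOGIN = re.compile(r"Failed password for (?:invalid user )?(\S+) from (\S+)")


def count_failed_logins(lines):
    """Count failed SSH logins per source address (the grep + awk + uniq -c step)."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1  # group 2 is the source address
    return counts


if __name__ == "__main__":
    sample = [  # invented log lines for illustration
        "Jan 10 02:11:01 host sshd[101]: Failed password for root from 203.0.113.5 port 4242 ssh2",
        "Jan 10 02:11:03 host sshd[102]: Failed password for invalid user admin from 203.0.113.5 port 4243 ssh2",
        "Jan 10 02:12:40 host sshd[103]: Accepted password for alice from 198.51.100.7 port 5000 ssh2",
        "Jan 10 02:13:10 host sshd[104]: Failed password for alice from 198.51.100.9 port 5001 ssh2",
    ]
    for address, count in count_failed_logins(sample).most_common():
        print(count, address)
```

To run it against a real file, pass an open file object instead of the sample list, since the function only needs something it can iterate over line by line.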

Oh, sorry. I can't see a thing.

>> You did say that the attacker was able to compromise user accounts. Was this done through the credentials used, or was it that there were other vulnerabilities present, such as the lack of multi-factor authentication?

Okay. So with the first couple of instances we detected, like I said, it was about a 9-day period. The first couple of them completely relied on there not being multi-factor authentication. So the first couple of ones got compromised by not having multi-factor authentication. Then, I think by day five or so, we started seeing that there was a mechanism that they were starting to deploy, to ask them to enter their two-factor authentication in exactly the same very dodgy website that you saw earlier. They would prompt there to say, listen, please enter your two-factor, and they got your two-factor authentication via that. Um

yeah so so yeah so it started off very basic and then it got more complicated as the incidents went on. Uh yeah. Yes.
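The forwarding-rule alerts from this campaign are a good example of why rule tuning matters: an alert that fires on the letters "pay" anywhere in a rule will also fire on someone's surname. Below is a minimal sketch of a slightly stricter triage. The rule dictionary shape, the keyword list, and the domain names are assumptions for illustration and do not correspond to any particular mail platform's API.

```python
import re

# Hypothetical keyword list; matched as whole words so "Payne" does not hit "pay".
SUSPICIOUS_WORDS = ("pay", "payment", "invoice", "bank")
_WORD_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(word) for word in SUSPICIOUS_WORDS) + r")\b",
    re.IGNORECASE,
)


def domain_of(address):
    """Return the lowercased domain part of an email address."""
    return address.rsplit("@", 1)[-1].lower()


def triage_forwarding_rule(rule, internal_domains, known_safe_domains=frozenset()):
    """Return a list of reasons this rule looks suspicious (empty list means no alert).

    rule is a dict with "name" and "forward_to" keys (an assumed shape).
    """
    reasons = []
    target = domain_of(rule["forward_to"])
    if target not in internal_domains and target not in known_safe_domains:
        reasons.append("forwards to external domain " + target)
    if _WORD_RE.search(rule.get("name", "")):
        reasons.append("rule name contains a finance keyword")
    return reasons


if __name__ == "__main__":
    internal = {"corp.example"}
    rule = {"name": "Set up by J. Payne", "forward_to": "me@isp.example"}
    # With the ISP listed as known-safe, this rule produces no reasons at all.
    print(triage_forwarding_rule(rule, internal, known_safe_domains={"isp.example"}))
```

Returning reasons instead of a yes/no lets tier one see why something fired, and lets you decide separately how many reasons are needed before an alert is escalated.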

>> Okay. >> Mhm. >> Okay. So once the attacker had VPN access, and again, this is as far as we can ascertain with the evidence that we currently have, that laptop gets switched on from time to time because they do some [unclear] measurements, they test the soil, and I can't remember the correct word for that. Whatever. So what that attacker was doing is they were using their VPN access and just scanning that network constantly, and they were looking for vulnerable machines, and as they got onto certain vulnerable machines they would see where else this machine has access to. And on that specific laptop, at some point someone with high privileges was logged

into there, and they could use Mimikatz to, basically, as far as we can understand based on how we saw the other attacks or the other lateral movement work. So we suspect that is how they moved from there to the DC, but we don't have exact evidence, because unfortunately that machine was encrypted at the time. But yeah, so the attacker would use the VPN to scan for vulnerable machines and pivot to one machine; if it can't get any useful credentials, it pivots to another machine and will try and find where there's a useful machine for it to eventually get access to. Yeah. Yeah. Okay.
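The constant scanning over VPN described in this answer tends to leave a simple fingerprint in firewall logs: one source address talking to an unusually large number of distinct internal hosts. The following is a minimal sketch of that idea, assuming the logs have already been parsed into (source, destination, port) tuples; the function name, the threshold of 50, and the addresses are hypothetical.

```python
from collections import defaultdict


def find_scanners(connections, min_distinct_hosts=50):
    """Return {source_ip: distinct_destination_count} for sources at or above the threshold.

    connections is an iterable of (src_ip, dst_ip, dst_port) tuples, an assumed
    shape that your own firewall log parser would need to produce.
    """
    targets = defaultdict(set)
    for src_ip, dst_ip, _dst_port in connections:
        targets[src_ip].add(dst_ip)
    return {
        src_ip: len(dst_ips)
        for src_ip, dst_ips in targets.items()
        if len(dst_ips) >= min_distinct_hosts
    }


if __name__ == "__main__":
    # Made-up traffic: one VPN address sweeps 60 hosts, another talks to two.
    sweep = [("10.8.0.5", f"10.0.1.{i}", 445) for i in range(1, 61)]
    normal = [("10.8.0.9", "10.0.1.10", 443), ("10.8.0.9", "10.0.1.11", 443)]
    print(find_scanners(sweep + normal))
```

This sketch ignores time entirely; in practice you would count distinct destinations per source within a window (for example per hour), and exclude known scanners such as vulnerability management tools.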

Yes. Yeah. Yeah. No, no. Oftentimes we have no idea how they got in, so it becomes a complete investigation. We involve a lot of forensic teams also to assist us with this, especially in the cases of ransomware, because a lot of your raw evidence gets lost. So we've got a couple of things to try and pinpoint as close to patient zero as we can, but there have been cases where this is as far back as we can go, and beyond this we don't know. In those cases we try and do the best monitoring we can. We sort of update the EDR to look for

specific patterns that we observed of how the person spread, and try and see if we can see how they leveraged their initial access. If we have no evidence of how they got their initial access, at least we can see, cool, these are their TTPs when they actually try and spread to other systems, and so we basically make rules for that. Okay. Do you have a question? >> Okay. Oh, sorry. Yeah. So you mentioned that there was

something. So again, based on the evidence we have so far, they could use a vulnerability that was on there to get remote code execution on that box, because that was sort of the vulnerabilities that they were looking for. So with all the vulnerability scans, all the specific attacks they did with other machines that they were able to pivot onto, we could see that they were mostly looking for anything that could allow them any form of code execution. And from two of the systems that we could see, once they got code execution, they would run Mimikatz and they would do stuff to try and get any

additional credentials. So we suspect that is how they got further. Again, we don't have any evidence, but that is how we suspect that they got there. Okay. Happinesses. Okay. Thanks, guys.