Graph Based Detection and Response with Grapl


Hello, good morning. My name is Lu style. I'm here to introduce our next guest speaker, Colin O'Brien, and he's gonna be speaking about graph-based detection and response. All right, hi everyone. As mentioned, my name is Colin O'Brien. I'm gonna be talking about a project I've been working on for a couple of years now called Grapl. Grapl is a graph analytics platform that really tries to target detection and response workloads, so finding and removing attackers from networks. Before I get started talking about Grapl, I want to give a little bit of context. I have been working in detection and response in some form or another for about five years now, my entire career,

but I've had a lot of really different jobs in that time. I started off at Rapid7. I was hired by the InsightIDR product team, so that's their detection and response service, and my first job was basically to embed myself onto a data science team. I was going to be the sort of security engineer for that team. They asked me what I wanted to do, and I thought machine learning sounded cool, so they gave me that job. What I found was that we did very little machine learning ultimately, and really we spent the vast majority of time looking at broken or just garbage data. For whatever reason, sources were out of date, and data would age really poorly, so

security data in particular just ages really terribly, because, say, a virus scan that happened a week ago is different data from a virus scan that happened a month ago or today. So I saw these security-minded data scientists digging into these massive data sets and really managing to pull tons of signal out of what looked like the worst kind of noise you could imagine, and I learned a lot about the techniques that they leverage, the tools, and the ways that they work together to do this. After about a year on that team I started working on the IDR product more directly, so I worked on the

detection team, building out attack signatures for our numerous different customers. These attack signatures had to work across pretty much arbitrary customer environments, since you can never really predict who your next customer is gonna be. They had to have low false positives, and they had to catch real attackers. I learned that the engineers who were building these detections leveraged code as their primary weapon, so all of the alert logic was handled the same way that our service code or any other code would have been: it was checked in, it was code reviewed, and it had unit tests. For the sort of latter part of my time at Rapid7, which was roughly two and a half years, I

actually worked a lot on reliability, software engineering, and building services out. At the end of that time I decided that I wanted to get a little more into detection and response. I wanted to stop being sort of the ivory tower vendor that looks down and hands down detections, and actually do the job day to day. So I left Rapid7, I went to Dropbox, and for two years I was working on their detection and response team, so actually engaging with the red team and triaging alerts day to day, seeing what sort of problems were being faced. What is interesting is that it was a lot of the same problems that I had seen in that vendor space. I saw security

experts being tasked with dealing with what, to me, were data or engineering or operations problems: dealing with log sources that were incorrect or that weren't being parsed correctly, dealing with log volumes that they weren't necessarily in the right position to scale to. I tried to help that team out for about two years, and a lot of the lessons learned went into how Grapl works. One of the big things that makes Grapl somewhat more interesting than other products is that it is a graph-based system, and so today I'm going to talk about why I think that a graph-based approach is so important for security in general, but especially detection and response, and hopefully you can

walk away with some understanding of why that's the case. Okay, so before I talk about Grapl, I think it makes sense to just go over what a graph is. Graphs are a data structure comprised of nodes and edges. Nodes are usually things like entities or nouns, so a person might be a node. Edges denote relationships between those nodes. Graphs are a really powerful and generalizable data structure, and I think one of the easiest ways to demonstrate that is to take a really basic graph like this. There's almost no labels, there's no explicit data in a graph like this, but despite that fact I can say a lot of things about this graph. I can say that

the green node has a relationship with the blue node, the blue node has a relationship with the purple node, and the green node has a path to the purple node with a length of 1 that crosses over this blue node. The reason why I can say these things is because graphs have this really powerful property of encoding relationship information directly into their structure. This is why we see graphs used a lot in visualization, but these relationship-heavy workloads actually come up all over the place. As some examples, Google search is powered by their Knowledge Graph, and this is what allows them to, instead of taking just a plain-text search approach to a search engine, start giving you

related information. Facebook's Graph API underlies their entire public API. You can imagine why this would be useful: you might want to ask questions like, given a user on our platform, who are their friends, what are the posts that they like, and then we can recommend those posts to that initial user. This is another very relationship-heavy query. Graphs are in some more niche areas, too. Graphs underlie machine learning libraries like TensorFlow. TensorFlow is an example of something called dataflow programming, where the computation that you build in your tensor graph is represented as a sort of graph traversal. And then graphs also tend to really just emerge from systems without any purpose, right? We end up building graphs without

really meaning to, and an example of that would be BGP and the internet. You have all of these routers acting as nodes, with neighbors that they know how to talk to, and that's how packets manage to get from one system to another, traversing this massive graph of the internet. A little closer to home, in the financial security space, graphs have been used to great effect for quite a while now to detect things like credit card fraud or scam businesses. They do this by querying and building models on top of these graphs to understand things like commonality about locations that credit cards are used, which users have in the past worked with a business, and then why

is this new, strange user talking to this business. They're sort of a staple in that world at this point. In the information security world we're starting to see graphs gain a lot more traction, unsurprisingly, given all these other communities that have been leveraging them. John Lambert at Microsoft wrote a post a couple of years ago about his opinions on graphs in infosec, and in this post he makes a statement which many people may have heard at this point. He says, "Defenders think in lists. Attackers think in graphs. As long as this is true, attackers win." In the post he goes on to talk about an example of this list-based and graph-based thinking. John

talks about how, when defenders are tasked with protecting a network, one of the first things they'll do is start creating lists. They'll create lists of privileged users like domain admins, or exposed services, or high-risk assets that contain maybe user data, and they'll start to prioritize the work that they do based on those lists. This is really different from how attackers go about doing their work. Attackers are going to opportunistically land on some asset. They'll compromise whatever they can, probably something unprivileged and exposed, and they're gonna leverage the capabilities that they gain from that asset to start moving around your network. This can range from things like lateral phishing, where an attacker might compromise your co-worker's email

and then they'll send you a PDF and say, like, "hey, look this over," and all of a sudden they're taking over new users and assets on other people's computers across your network. Or, as John talks about, using tools like ProcDump or Mimikatz to dump credentials from memory and start moving around laterally. What the attacker is really doing here is abusing trust relationships. The attacker is starting off on this network, and they don't have these lists of users or assets ahead of time, no lists for prioritizing. They're doing discovery. They're moving across the network in a very graph-oriented way. At the end of his post John says to manage from reality, because that is the

prepared defender's mindset. I think there's kind of a deeper meaning here, at least that's something that I draw from this post, which is not that graphs are necessarily better than lists, or lists are better than graphs, or anything like that. It's really that when you have information that so clearly maps to one model, one data structure, and you force that information into some other model, you lose something inherently. So when we as defenders take graph information, like a network or like how an attacker behaves, and we pull that out and we force it into lists, we lose actually the most important thing, which is what the attackers are taking advantage of: those trust relationships.

One tool that I think really demonstrates a shift in approach is BloodHound. BloodHound is a tool that both attackers and defenders can use, and I think that really goes to show that it's sharing this common model. BloodHound allows you to query and visualize Active Directory as if it were a graph, and from a defender's point of view this is much closer to how attackers are thinking about and taking advantage of your systems. This allows you to move away from asking questions like "who are my domain admins and how do I protect them?" and instead start asking questions like "how would I go from an unprivileged user to a domain admin?" and start cutting off those paths.
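To make the "unprivileged user to domain admin" question concrete, here is a minimal sketch in Python. It is not how BloodHound itself is implemented or queried; it only illustrates the idea of treating trust relationships as a directed graph and searching it for a path. The edge data, the node names, and the `shortest_attack_path` helper are all invented for illustration.

```python
from collections import deque

# Hypothetical trust relationships: an edge A -> B means "control of A can be
# used to gain control of B". Every name here is made up for the example.
TRUST_EDGES = {
    "user:alice": ["host:laptop-17"],
    "host:laptop-17": ["user:helpdesk"],
    "user:helpdesk": ["host:jump-01"],
    "host:jump-01": ["group:domain-admins"],
    "user:bob": ["host:laptop-22"],
}


def shortest_attack_path(edges, start, target):
    """Breadth-first search; returns the shortest path as a list, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in edges.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None


alice_path = shortest_attack_path(TRUST_EDGES, "user:alice", "group:domain-admins")
bob_path = shortest_attack_path(TRUST_EDGES, "user:bob", "group:domain-admins")
```

In this toy data `alice_path` walks through the laptop, the helpdesk account, and the jump host, while `bob_path` is `None`. Removing any single edge on Alice's path is the "cutting off those paths" step a defender would take.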

Another tool that I think demonstrates this in a different way is CloudMapper. This is built by Duo and Scott Piper. CloudMapper is a tool that allows you to visualize and graph out all of your AWS resources and policies, and this lets you move away from thinking about individual policies and how they might have individual issues. They might look fine when you're just glancing at one of them, but when you start to combine them together the way an attacker would, as they chain attacks across different assets in your environments, you can start to find privilege escalations or leaks that you didn't expect. So in the detection and response world, the model and the primitive that we leverage

is the event, and we do this by collecting logs, which are some sort of representation of these events: things like process execution logs, file creation logs, network instrumentation, that sort of thing. What we do is we collect billions of these every single day, and we store them in what is effectively a massive index or a big list called a SIEM. We run queries against these, and we look at things like individual events as they occur, and we try to write queries against them to find something suspicious. If we do find something suspicious, we go back to our SIEM and we write subsequent queries looking at more logs. The thing is that when you take some of these logs down and you know

what you're looking for, you can start to see that there's actually a lot of relationships hidden in between them. A process ID in one log might be a parent process ID in another log. A file name might be shared across them. When we start to pull these relationships out and make them more explicit, I think that we can start honing in on more complex attacker behaviors rather than isolated events as they occur across our environment. And so Grapl really tries to demonstrate what we've just seen. It tries to demonstrate that when we can move from thinking about logs as our fundamental primitive, and instead more as a building block into an abstraction that matches what attackers

are doing more closely, we can be a lot more effective as defenders. So Grapl will ingest raw logs. You can send it Sysmon or any other data type that it supports. It's gonna parse those into a graph representation, so a process execution log might generate a graph that has a parent node, a child node, and an edge between them. These graphs go through an identification process. I'll talk a little bit more about this later, but essentially what this means is that we don't want a million different nodes representing the same entity. If we have a thousand logs coming up about Chrome doing some action, we really just want one node for that Chrome entity, so

that's what identification is going to do. These identified graphs get merged into Grapl's master graph. So in real time, as these graphs are generated, they're getting pinned up into this massive graph database that's going to represent all of the entities and behaviors occurring across your environment. As this is happening, on every single update to this master graph, Grapl will orchestrate your attack signatures, or what it calls analyzers. They're gonna look for behaviors, they're gonna look for suspicious patterns, and when they find these patterns and you've deemed this a risky enough situation to start investigating, you have a tool that's really gonna exploit the ability for graphs to pull in context quickly. Everything's joined together sort of

inherently, and Grapl calls that an engagement. I'm gonna talk about how all of these things work, both in terms of a sort of generalized graph-based approach and then how Grapl implements it. When I talk about the master graph in Grapl, it's really what you see here. There's actually more nodes than just what's here, and there's also sort of plugin nodes, so you can add your own models. But nodes are pretty much what you would expect. They have properties, so a file has a path, a process has a process ID or process name, and then there are these special properties, the edges. So a process has children, and a process can create files.

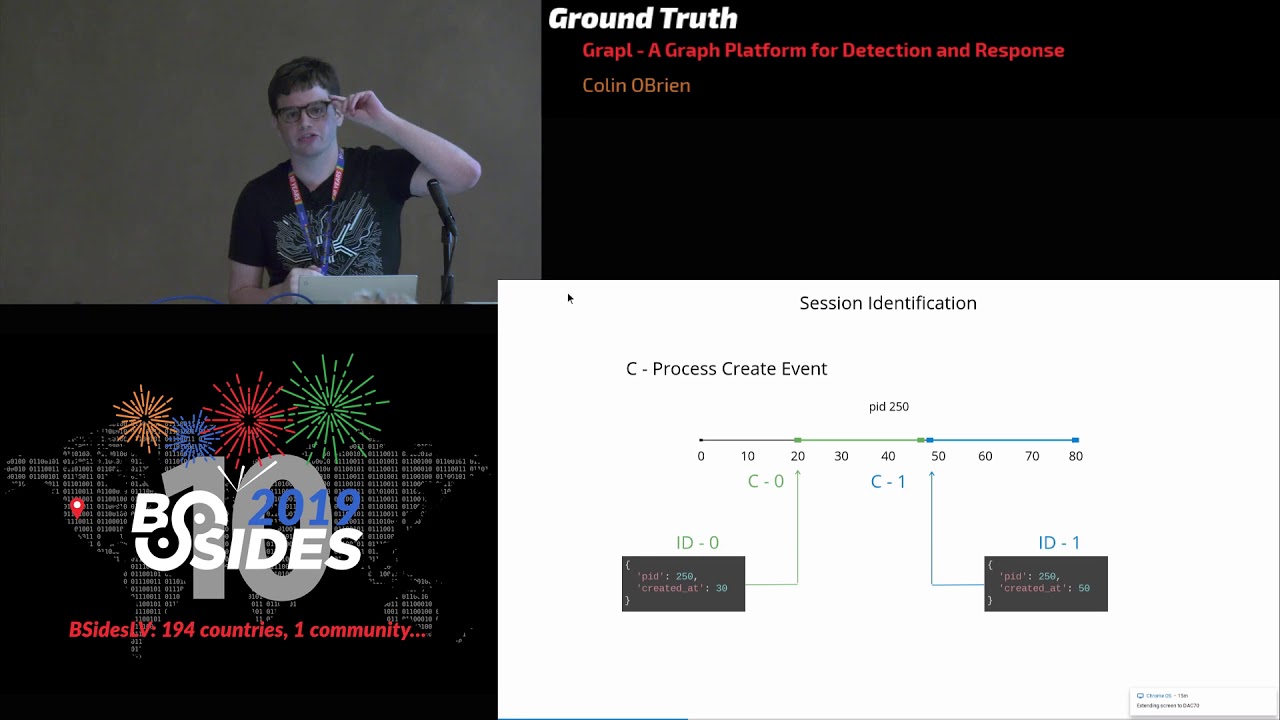
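The node-and-edge model described here can be sketched with a small Python data class. This is an illustration of the idea only, not Grapl's actual schema or API: the `Node` class, the `node_key` field, the edge names, and the sample values are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical graph node: plain properties plus named, outgoing edges."""
    node_key: str                      # assumed unique identity for the entity
    node_type: str                     # e.g. "Process" or "File"
    properties: dict = field(default_factory=dict)
    edges: dict = field(default_factory=dict)  # edge name -> set of node keys

    def add_edge(self, name, other):
        # Edges are stored as the keys of the nodes they point to.
        self.edges.setdefault(name, set()).add(other.node_key)


# A process that has a child, and a child that created a file.
parent = Node("proc-1", "Process", {"pid": 100, "process_name": "word.exe"})
child = Node("proc-2", "Process", {"pid": 250, "process_name": "powershell.exe"})
dropped = Node("file-1", "File", {"path": "C:\\Users\\example\\payload.exe"})

parent.add_edge("children", child)
child.add_edge("created_files", dropped)
```

Keeping edges as named sets means that adding the same relationship twice changes nothing, which matches the idea that an entity should appear in the graph once no matter how many logs mention it.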

Network connections will connect out to their destinations. So identity is really important, not just to Grapl but in general. It's why tools like Sysmon try to package identities for things like logins or processes, baked in as much as possible. Grapl is gonna do something very similar to Sysmon, but it's going to do it on the back end, so it's gonna be generalizable to really any source type that you send up, and it's gonna provide an identity for every single node type, so not just processes but users, files, network connections, anything that it can understand as a node. The problem with logs without identity is that you might see information like we have here, right,

three events that all share a process ID across some series of time. You might think that this is all referring to the same entity, but that's not necessarily the case. Process IDs can be recycled, and they're recycled actually with quite a good amount of frequency, so if you don't have some way of resolving this it's gonna be really painful in any kind of investigation. So Grapl is going to use context from these logs, the timestamps and the event metadata, to disambiguate what these entities are and provide a canonical identity. When you combine that identification process with the graph-based approach, you can go a lot further than something like an instrumentation tool like Sysmon. We can

coalesce all of that information that comes up across thousands of logs onto just that one entity, that one node, using its node key, which is the identity, and we can throw away a lot of redundant information. This helps Grapl scale sublinearly. So you might send a million logs to Grapl. In my experience, across a million logs maybe ten to thirty percent of that information is actually unique. The vast majority of it is just the same thing over and over again, the same logs containing the same PIDs and process names. If it's something like Sysmon, they're really, really heavy, so it's gonna have the process GUID, it's gonna have parent

process information. We can get rid of a lot of that in Grapl, and this can compound really quickly. So if we have a master graph with just a single node, it's got a PID of 100, and an event comes in where there's a process execution and PID 100 executed a child process, we can immediately throw away some information. We can throw away the PID, and then that type information is actually encoded directly into the graph. If the child process creates a file, again we're throwing away more and more information, and if that child process then reads that file, you can see how this really quickly compounds. We're storing almost none of the information in that log,

we're just adding a new edge with a timestamp. This really goes towards a lot of what I've seen in the field as people fighting with their data. I see this in security, I see this in really every discipline where you're dealing with a large amount of data. Scaling linearly or worse with your data sets puts you in a position where you have to start throwing data away every year, or you have to ask yourself, "do I really want this data source?" I don't want people to have to ask that question. I want you to be able to put as much data in this thing as possible. Okay, so detection is really where I think, in terms of a log-based

approach, SIEMs and logs push us in the wrong direction. I see a lot of detections that are looking at properties of an attack. The reason for this is that it's a little difficult to look at multiple logs, especially across log sources, in most tools today, and logs don't really tend to encode a lot of behavioral information. They tend to just have properties. This makes logs pretty good for things like searching for hashes or IP addresses, like those low-level Pyramid of Pain IOCs, but when you want to actually talk about attacker behaviors they start to fall apart. Joining these logs together is usually pretty difficult, so what I end up seeing is

people building signatures based on attacker-controlled information. If I want to ask the question "where is exfiltration happening on clients?", I can't really represent that in a generalizable way using logs, at least not very well. What I'll end up writing is something like, you know, curl and wget are used for this a lot, and these are the command-line parameters that I expect, and if I see these file names show up then that's my signature. But that's really, really brittle. Every aspect of that signature that I've written is based on attacker-controlled information, and I see these signatures all the time, not just for exfil, really for everything. And so you might try to

put some, like, globs in there, or use multiple queries with ORs and build this kind of monster query that tries to be robust, but ultimately I think it's a losing game. One way of demonstrating this is if we take two process execution logs. This is Word and PowerShell executing, and there's no properties to go off of. Maybe if the PowerShell log had command-line arguments I could play that losing game of trying to parse them and say, like, "oh, this base64-encoded data being passed in is suspicious for whatever reason," but as I said, that's attacker-controlled information. That's not what we want to focus on. Similarly, Word and PowerShell

are benign, you know, digitally signed, valid programs. They're executing in the vast majority of environments, and they're doing so legitimately. So we can see here that logs are hiding what I would say is the most important piece of information. We have this PID sort of implicitly joining these logs together, and we can see that it's got nothing to do with Word or PowerShell executing. It's really that Word and PowerShell are executing together. There's this relationship between the two that we don't expect. We want to start focusing more on behaviors and relationships as opposed to isolated events and properties, and we can generalize this further. It might not be Word, it might not be PowerShell, right?
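The point about the PID implicitly joining the two logs can be shown with a short Python sketch. This is not Grapl's analyzer API. The log records, field names, the `COMMONLY_TARGETED` set, and the `suspicious_children` helper are all hypothetical, and the sketch deliberately ignores PID reuse, which a real system would have to resolve first.

```python
# Hypothetical, simplified process execution logs. Field names are invented.
LOGS = [
    {"pid": 100, "ppid": 4, "image": "winword.exe"},
    {"pid": 250, "ppid": 100, "image": "powershell.exe"},
    {"pid": 300, "ppid": 4, "image": "powershell.exe"},
]

# Assumed list of commonly targeted parent applications.
COMMONLY_TARGETED = {"winword.exe", "excel.exe", "acrord32.exe"}


def suspicious_children(logs, targeted=COMMONLY_TARGETED):
    """Return (parent image, child image) pairs where the parent is targeted.

    The relationship is recovered by joining each log's parent PID against
    the PID of another log, rather than by inspecting any single log.
    """
    by_pid = {log["pid"]: log for log in logs}
    hits = []
    for log in logs:
        parent = by_pid.get(log["ppid"])
        if parent is not None and parent["image"] in targeted:
            hits.append((parent["image"], log["image"]))
    return hits


hits = suspicious_children(LOGS)
```

Neither PowerShell log looks unusual on its own. Only the one whose parent PID resolves to Word is returned, and the check never looks at command-line arguments or other attacker-controlled fields.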

There's a number of ways to get code execution on an endpoint. It might be Excel loading a macro, it might be Adobe Reader getting owned, and they might shell out or they might call out to a JavaScript or Python interpreter on your system. So what we really care about is tracking the fundamentals of what attackers do. Attackers execute processes. We should be tracking that sort of information, not trying to hone in on overly specific signatures that attackers can almost accidentally bypass. When we start to represent our attack signatures as graphs, and when we stop trying to deal with specifics of attacks and start to think more in terms of fundamentals, I think we can represent

attacks much more creatively and much more powerfully. We can still leverage properties. As an example, we might still want that signature for Word or Adobe Reader or Excel, these commonly targeted applications, with non-whitelisted child processes. We can leverage that just fine. Graphs expose properties perfectly well. But we can also start asking much more complex questions, like "what are the dropper-like behaviors in my environment?" A dropper is something that I've defined as a process that has reached out to an external network, creates a file, then executes that same file. We're talking about multiple composite behaviors. You would have to probably join at least four or five logs from different source types, combine them together, and then analyze

them in a sequential order, right? But in a graph this is really straightforward. So what I really want to do here is basically start painting our master graph with risks. We want to start labeling our massive data set, and anyone who's done data science work knows that this is essentially the entire job. You start off with some murky data set, you get it into a clean format, and you start labeling it. And so for that I would introduce the concept of risk. Another problem I see with security teams is drowning under false positives. It's a losing battle of constantly whitelisting. Anyone who's done security at a tech company in particular will note that developers and

attackers do almost exactly the same things every single day. Their motivations are basically the only thing that differs. You will have developers scanning your network. You will have them basically doing privesc all the time, running Python, running things you've never seen, and so we don't want to have to constantly be chasing down developers doing their job. Instead we can start to model things in terms of a risk gradient. I can say a unique parent-child process, that's a low-risk event. It's definitely something an attacker does, and I want to track that, but it's nothing like that commonly targeted application one. That's a much more finely tuned detection that I'll probably try to maintain a whitelist for. So what we have

here is what I would call local correlation. These are tightly coupled attack signatures, but we want to take this labeled information and feed it into a model. That's not going to be a machine learning model. It's just a way for us to view these risks together and get a sort of arbitrary level of correlation. And so here I introduce the concept of a lens. This is actually inspired by a talk by [inaudible], who gave a talk in 2012 that talked about sort of an arbitrary correlation lens. I really like that idea, and I built it into Grapl. The idea is that you have these

correlation points, like an asset, a user's laptop, or their user name, and through that lens you can view all of those otherwise disjoint risks. Now I don't have to write signatures like "a unique parent process relationship with a unique network connection." I can write those individually, view them through a risky lens like the asset, and see that they overlap automatically. And now we can build a risk score that takes all of this into account. Risk in Grapl is very simple. There's no fancy statistics. It will count all of the risky nodes and take the sum of their scores, and for every node that has an overlapping risk, so it's involved in

more than one risk at a time, we add an increasing percentage, so 10% for the first node, 20% for the second, that sort of thing. What this is going to allow us to do is push down things like, you know, silly anomalies, like a parent-child process that just happened to be unique, but it's an update that just happened on a computer. Those are gonna fade into the background, and all of the risks that correlate together are gonna start lifting up a lot higher. The way that these signatures are represented is in Python. My experience with all of the various domain-specific query languages out there is that they were built for one specific purpose, and

as soon as you try to move away from that you start running into problems. A lot of the time they can do things in one or two lines that's really, really cool and powerful, and then you want to do something like a join, or you want to really do anything that it wasn't designed for, and you're gonna start running into, like, huge performance barriers and correctness problems. You'll run into the query sizes exploding and that sort of thing. So Grapl just uses Python. It's general purpose. Python is adopted by the data science and security communities. I have confidence that if in five or ten years attackers start changing up their tactics, while other query languages might start to falter,

Python is gonna be able to scale in expressivity to whatever they're doing. And so this is what an analyzer in Grapl looks like. Here we have that commonly targeted application. This is a process query where we're saying it has one of these process names and any unconstrained child process. We have no whitelisting on the child processes right now. Grapl's gonna take that query, it's gonna do client-side query optimization, and it's going to ensure it executes in constant time, so it doesn't matter how much data you have. These analyzers will always run in real time, in constant time. They'll be batched up and parallelized. There's a ton of work to make sure that you don't have really painful

operations work to deal with. If this signature ever hits, that on_response method gets called next. The on_response method can do things like contexting, as I'll show in a minute, or subsequent alert logic, but really it's just going to label the data with some kind of a risk and a name. I think where Python starts to really show some power is when we want to do things that are a little more out there. It sounds silly, but counting efficiently is not something that I've seen a lot of existing systems do very well. Here we're leveraging a parent-child counter abstraction, and this provides a specialized interface. It hides things like caching. The vast

majority of the time this isn't even going to hit our data lake, so it's gonna stay really nice and efficient. This signature here is looking for living-off-the-land type attacks, so processes with parent processes where the process binary is in System32 or 64. We're going to look for any execution of processes that match that, and we'll use our parent-child counter abstraction to say, "has this happened a few times or less?" If it has, that's going to be something that we want to track. I think probably the best part about using a standard tool like Python is that you get to follow standard practices. If you were to Google your SIEM's name with "unit tests", you

would probably not find great results. If you found any, they would probably tell you that it's just not supported. At least that is what I have repeatedly found. You contrast that with Python: there's a number of tools for making Python correct. There's linters for style. You can put it into code review and store it in GitHub. You can run things like mypy, and Grapl is statically typed, so you can avoid errors before it ever runs. And most importantly, you can just import the unittest library from the standard lib and write unit tests for your alerts. So when you're whitelisting or you're tuning over the next couple of years, when the

people who wrote the alert are no longer there and someone else is maintaining it, they can be confident that their alerts and their detections are still working properly. I see this as a really significant problem at a number of companies. They have hundreds, I've actually seen thousands, of individual detections, and they don't actually have a way of determining that those work. They might have some end-to-end, like, attack system that tries to test the entire pipeline, but coming from a vendor, we just wrote unit tests, and so I wanted to kind of give that capability to the average security team. I think investigations are probably where log-based systems really start to

fall apart, to the point where attackers take advantage of it. So I'm gonna do sort of a strawman investigation of an attack using a log-based system. As the incident responder, I come into work, I've got an alert, and there's some process execution log. It went off because some threat intelligence feed has told me that the hash is evil for whatever reason. It doesn't give me much other information. In a situation like this there's usually two things that I want to do. First, I want to just quickly see what this process has done. I'm not gonna do, like, a fully scoped thing; that's something that I'd bring the rest

of the team in for. So I'll just see what it's done, and I'm gonna trace it backwards and try to find where this thing came from. We'll open up a search window, probably like four or eight hours depending on when this thing happened. I'm gonna look at, like, the last business day or so, and I'm gonna start pivoting. I want to take information that I have and I want to gain new context, new data. So I'll search for the PID. I want to see what this process has done, and I get all these logs back. Some of them are interesting, and some of them tell me that this thing may have shelled out a

couple of times, and this is notable enough that I'm not immediately discounting this as being benign. So I want to start tracing it backwards, and I'll search for that parent process ID, and what I get back is a ton of logs, because the parent process, it turns out, was something like cron or launchd or the scheduled task manager. What this tells me is that the attacker has probably been on this asset for weeks or months, and they scheduled their final payload to execute in the future because of this exact workflow. They know that this is going to really mess with me, and so that's why they do attacks like that. They know that the data that I'm looking

at is going to be severely disconnected, in terms of time, from the data that is gonna tell me where this attacker actually started off. So at this point I've hit kind of a dead end with processes, and I have to pivot off of things like the hash or the filename. I want to see where this payload was actually created. I don't get anything for the hash, and I don't get anything for the file. Clearly the attacker is outside of my search window. I really only have one choice at this point: I'm gonna extend my search window out, and this might be days if I'm lucky, more likely weeks, and if I'm really unlucky they've been here for a couple

of months. This is starting to give me the results I want. I'm getting the image name, the creation maybe, but I'm paying really serious prices here. For one thing, if I've extended my search window from four hours to a month, every single search from this point forward is going to be hundreds of times slower. I could try to play the game of managing a whole bunch of different search windows in, like, 10 different tabs, but there's just no winning with a system like this. What's worse, to me, is that I'm probably gonna start running into PID collisions. Maybe you're lucky and your entire investigation can be done with something like Sysmon and using

process GUIDs that's never been my experience even on Windows systems I end up looking at the event log I end up looking at something that has a PID without a process GUID and PID collisions will happen over this period of time for a client laptop running any multi-process architecture program like Chrome or Firefox or all sorts of things at this point users are gonna reuse PIDs a number of times in a day so when we're talking about weeks or months I will have many PID collisions to resolve and that's gonna suck for me it's gonna really slow things down especially coupled with those slower search times a third problem that's a little harder to

see is that the fundamental thing I'm trying to do here is pivot off of my data but I don't really have clear questions I'm sort of just doing like raw text searches for whatever information I have and I could try to constrain those by searching specific fields but it's just very murky in terms of what I'm looking for and what I'm getting back so Grapl takes a really drastically different approach and at the heart of this approach is the Jupyter notebook so this is again me stealing ideas from the data science community because they've been doing this sort of work for years and it seems really silly that we wouldn't like look at what the experts in data

analysis are doing right that's a huge part of this job and what they're doing is they're using Jupyter notebooks these are these sort of super powered Python environments so if you've ever opened your terminal and typed in Python you may have started writing code and it gives you some immediate feedback right these are a lot like that but you can organize your code into cells replay them modify them you can also do things like shell out to the command line really easily you can embed images markdown and these are sort of the tool of the trade at this point for investigating large amounts of data we're starting to see Jupyter notebooks in security a little more so I believe Azure

Sentinel is like pushing notebooks as how they want people to do investigations so it's definitely gaining traction as an idea so I mentioned those lenses earlier so as the incident responder looking at your Grapl homepage you'll see a list of lenses right these are gonna be your correlation points so different assets or usernames we're just gonna see which one is riskiest and I don't have to worry about like having a thousand false positives I have a set number of hours in the day that I can put towards incident response and I'm just gonna apply that to lowering the risk as much as I can right and I think this kind of goes towards fleas talk a little bit as

well you don't want to be like spending all of your time gatekeeping and like hammering things down you want to just sort of manage risk you want to move towards a risk management approach otherwise you're gonna burn out your on call so if we click on one of these lenses we get a graph this is going to in this case contain three nodes with some relationships between them we can click on any of these nodes and we can see any of the properties for things like these processes or files or anything that's in here this is a pretty data rich graph this may have taken in this case probably tens of logs so probably like more than a browser screen

worth of logs but because we can coalesce all of that data with that identity we can create a very like dense and compact representation if we click on the cmd.exe here you can see that the analyzers involved are that we've never seen anything called dropper execute cmd.exe before so there's a signature in Grapl that just looks for unique parents of cmd.exe right that's a pretty fundamental thing that attackers do they shell out anytime you're in Python or another language and you try to popen it's gonna go through a shell and then we have a much more constrained signature which is that we maintain a whitelist of valid svchosts
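
The two signatures described here, and the way their risk stacks up in a lens, can be sketched roughly as below. This is a plain-Python illustration and not Grapl's analyzer API: the function names, the risk values (10 and 50), and the idea that the svchost whitelist is a list of permitted parent process names are all assumptions made for the example.

```python
from collections import defaultdict

# Everything here is a hypothetical illustration, not Grapl's analyzer API.
# Assumed allowlist: parent process names permitted to launch svchost.exe.
SVCHOST_ALLOWED_PARENTS = {"services.exe"}


def unique_parent_risk(parent, child, known_parents, watched_child="cmd.exe", risk=10):
    """Return `risk` the first time `parent` is seen launching `watched_child`."""
    if child != watched_child or parent in known_parents:
        return 0
    known_parents.add(parent)  # remember it so repeats are not re-scored
    return risk


def svchost_allowlist_risk(parent, child, risk=50):
    """Return `risk` when svchost.exe is launched by a parent not on the allowlist."""
    if child == "svchost.exe" and parent not in SVCHOST_ALLOWED_PARENTS:
        return risk
    return 0


def score_events(events, known_cmd_parents=()):
    """Sum analyzer risk per asset, mimicking how a lens accumulates a score.

    `events` is an iterable of (asset, parent_name, child_name) tuples.
    """
    known = set(known_cmd_parents)
    lens_risk = defaultdict(int)
    for asset, parent, child in events:
        lens_risk[asset] += unique_parent_risk(parent, child, known)
        lens_risk[asset] += svchost_allowlist_risk(parent, child)
    return dict(lens_risk)


events = [
    ("host-a", "explorer.exe", "cmd.exe"),
    ("host-a", "dropper.exe", "cmd.exe"),
    ("host-a", "dropper.exe", "cmd.exe"),
    ("host-a", "dropper.exe", "svchost.exe"),
    ("host-b", "services.exe", "svchost.exe"),
]
scores = score_events(events, known_cmd_parents={"explorer.exe"})
```

In this sketch the asset with both hits ends up with the higher cumulative score, which is the property that lets a responder sort lenses by risk instead of triaging each alert on its own.
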

attackers like to pretend to be legitimate services like svchost and so we're just gonna track that sort of thing and thanks to the lens these automatically overlap and we get this cumulative risk score so in my Jupyter notebook I'm gonna create an engagement engagements are like a special type of lens where you control what nodes are brought into it and I'm gonna pull in that first node I'll pull in the svchost right and I'm just gonna pull it in by its identity and this is in the Jupyter notebook so you would probably have like two browser panes or even two monitors one with a Jupyter notebook and one with your live updating graph

visualization at this point pivoting is really efficient and really simple I don't have murky questions I want to ask what this process did so the first thing I'm gonna say is what children processes were executed from it this is extremely efficient there's no search windows to deal with it doesn't matter if these two processes occurred across wildly different timelines this could be months apart it's gonna be a constant time expression it's gonna pull it in a millisecond so I say get children or get the parent process in this case to start expanding it out and that pivoting is nice and efficient and clear so to sort of replay a similar situation as before we would start off with our

Jupyter notebook we have this sort of root of our investigation right that's our svchost and we're gonna just do our pivoting behavior right I want to find out what has this process done I say get the children processes immediately that's gonna show up in that graph visualization on the left as I'll show you and it's also gonna let me display and play with the information in the notebook itself so I know this thing is probably not great it's shelled out three times that's pretty sketchy I'll do that pivoting behavior again I'm going to climb backwards up the process lineage right really simple really efficient we can see that there's a dropper.exe we can see

that there's this chrome.exe so this is telling a really really simple story right a user was probably phished through Chrome they executed something called dropper that dropper if we were to expand this out further reached out to S3 pulled it down and all of these logs are available for you guys as I'll talk about later and we're gonna have this graph that looks something like this now this graph again is going to contain probably tens maybe low hundreds of logs worth of data this amount of data would probably take up multiple pages in your SIEM to go through but here we have a really nice compact representation so you end up with a workflow that looks a

lot like this at the end of any investigation you will have two really powerful artifacts you're going to have on the one hand a graph visualization this is going to be really great for showing teams that don't want to pore through thousands of logs you probably want to interact with other security teams you might want to show an executive or a manager depending on what's going on they're not gonna want to look at logs graphs are nice they're compact they're visual and even like a big attack across your environment isn't gonna be like too unruly of a graph to visualize the other thing that we have is our investigation and it's in code the fact that it's in code opens up tons of

potential the obvious one is that we can replay it right so if another investigation is taking place and it's a you know a very similar situation to this where we want to take a look at the children processes and then trace it backwards we could move that to a function we could just replay it in line the idea here is you should be accelerating as you investigate so one investigation should feed into the next one right you should be getting faster and faster every single time this could also be training material or runbooks so you have a new person coming into your team maybe you had a red team recently go ahead and like start a new

engagement have them play through your last notebook step by step and explore that data Grapl has a plug-in system and I've managed to apply Grapl to a lot of different problems and in ways that I don't think I ever would have even tried after being burned before and taking clusters down and that sort of thing with other systems so here you can see an inter process communication node which is implemented as a plugin and this allows me to detect things like SSH hijacking where a process is performing IPC to an SSH agent or SSH daemon we can do process tree analysis so unique parent unique grandparent processes again this is applied to SSH we can see like a

Python process shelled out to SSH the nice thing with Grapl is that it's going to stack unique parents and unique grandparents automatically in those lenses right so that correlation ends up building up really really quickly the more unique your process tree is you can build recursive queries which I don't actually think other systems even support but if they did I think it would be extremely slow but in Grapl we can ask questions like given a process trace that process tree backwards and if we see a user ID change in that process tree we're gonna call that a privilege escalation right so a simple case of this would be calling sudo and changing users but if an attacker had

some sort of an exploit where they were able to privesc and spawn a child process as long as that relationship is there we would be able to catch that so I'm gonna really quickly go over how to set Grapl up it's designed to be really simple so you can clone the repository Grapl's open source it's free to use you pull it down go to the Grapl cdk folder you have to fill out a .env file with just a bucket prefix your org name is probably a good idea something unique and run the deploy all script and that's really it at this point it's gonna set up all of the infrastructure for you there's really

only one more step and it's pretty simple go to the AWS console go to the SageMaker notebook section Grapl will have already created a notebook for you so you can just open that up upload or use the Grapl provision notebook and just hit the play button and it's gonna set up the database schemas it's going to set up a user for you and you're good to go at this point you can send up the demo data it's in the repo as well and that's really it so I'm working full-time on this now Grapl's open source I'm certainly curious to hear any feedback that people have there's a Slack channel or you can just hit me up on Twitter

always happy to hear feedback about it
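
The pivoting workflow from the investigation walkthrough in the talk (get the children of a process, then climb back up its parents) can be sketched roughly as follows. This is a toy in-memory graph, not Grapl's Python library: the class, its method names, and the node keys are hypothetical. The point it tries to show is that each pivot follows an edge between nodes with stable identities, so a reused PID or a long gap in time does not get in the way.

```python
class ProcessNode:
    """Toy process node keyed by a stable identity rather than by PID (hypothetical)."""

    def __init__(self, node_key, image_name, pid):
        self.node_key = node_key      # stable identity assigned at ingest (assumed)
        self.image_name = image_name
        self.pid = pid                # may be reused by unrelated processes
        self.parent = None
        self.children = []

    def add_child(self, child):
        """Record a parent -> child execution edge and return the child."""
        child.parent = self
        self.children.append(child)
        return child

    def get_children(self):
        return list(self.children)

    def get_parent(self):
        return self.parent


def lineage(node):
    """Climb the parent edges from `node` and return image names, nearest first."""
    names = []
    while node is not None:
        names.append(node.image_name)
        node = node.get_parent()
    return names


# Rebuild the shape of the example from the talk; note the deliberately reused PID.
chrome = ProcessNode("key-1", "chrome.exe", pid=4242)
dropper = chrome.add_child(ProcessNode("key-2", "dropper.exe", pid=5100))
svchost = dropper.add_child(ProcessNode("key-3", "svchost.exe", pid=6200))
for i in range(3):
    svchost.add_child(ProcessNode(f"key-cmd-{i}", "cmd.exe", pid=4242))

shelled_out = [c.image_name for c in svchost.get_children()]
history = lineage(svchost)
```

Starting from the svchost node, one call lists the three shells it spawned and one walk recovers the dropper and browser above it, with no search window involved.
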

we got a couple minutes for your questions cool so I went over here yes I don't think I could probably say a lot more but yeah there's definitely interest from everyone has these problems vendors have these problems security teams have these problems lots of vendors are drowning under their data the same exact way that security teams are and there's definitely interest there yeah yeah so with the right instrumentation we could definitely trace it if the data is not being provided to Grapl then you might have to do like a little work to say like show me all of the possible places where the cron may have been set or where that may have come from but the

nice thing is that if you just fit that instrumentation there you'll automatically get that right you don't have to do any manual work it'll just sort of be there yeah question over there

yeah so there's two what's that oh sure so the question was I showed a lot of like Windows stuff what other data sources does Grapl support I think that's like a yeah so right now Grapl the version that's in the repo supports two data formats one is Sysmon so it'll just natively work with Sysmon the other is just a generic JSON format so if you can map your logs to that format first and send it up you'll get things like process and networking and file work all for free if you wanted to have custom parsed data you can build parsers in Grapl you can extend the whole thing arbitrarily so if you wanted to do that we should

definitely talk but I have done like the SSH stuff was on Linux my colleague is working on an AWS plug-in that'll let you ingest things like CloudTrail so really it's arbitrary you can bring your own model yeah and we've got one in the back over there

yeah yeah so cycles are fine they're probably so the graph the Python code you saw is gonna compile down to a graph query language and it's gonna handle resolving cycles for you so if there's a cycle in there it'll detect it but cycles are natural right a process might create a file which executes as another process which IPCs back to that parent process right so it has to handle stuff like that and there's work in there to do it yeah any other questions oh yeah
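
To make the cycle point concrete, here is a minimal sketch of the recursive "trace the tree backwards and flag a user ID change" question from earlier in the talk, written so that it terminates even when the graph contains a cycle. It is ordinary Python over dictionaries, not the graph query that Grapl compiles to; the function name, the `parents_of` and `uid_of` structures, and the sample nodes are assumptions for illustration.

```python
def find_uid_changes(start, parents_of, uid_of):
    """Walk backwards from `start` and report edges where the user ID changes.

    `parents_of` maps a node to the nodes that lead to it (execution, IPC, ...).
    `uid_of` maps a node to its user ID. A visited set keeps cycles from looping.
    Returns a list of (predecessor, node) pairs with differing user IDs.
    """
    findings = []
    visited = {start}
    stack = [start]
    while stack:
        node = stack.pop()
        for parent in parents_of.get(node, ()):
            if uid_of[parent] != uid_of[node]:
                findings.append((parent, node))
            if parent not in visited:  # skip nodes already expanded
                visited.add(parent)
                stack.append(parent)
    return findings


# shell -> exploit -> rootshell, plus an IPC edge from exploit back to shell,
# which makes shell and exploit predecessors of each other (a cycle).
parents_of = {
    "rootshell": ["exploit"],
    "exploit": ["shell"],
    "shell": ["exploit"],
}
uid_of = {"shell": 1000, "exploit": 1000, "rootshell": 0}

changes = find_uid_changes("rootshell", parents_of, uid_of)
```

The visited set is the whole trick: every node is expanded at most once, so the walk finishes regardless of how the edges loop back on themselves.
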

so Grapl's built off of a graph database called Dgraph which has a very like GraphQL-like query language it's at least inspired by it I think they now support GraphQL natively so you could if you wanted to build your own like Grapl analyzer library you could build it on top of GraphQL if you want to just use like pure GraphQL you could do that in Grapl I believe today I think it's on a version new enough to support full GraphQL yeah I think that's it cool thank you thank you thank you for speaking [Applause]