Bias Is the Bug: How Analysts Get It Wrong (And How to Fix It)


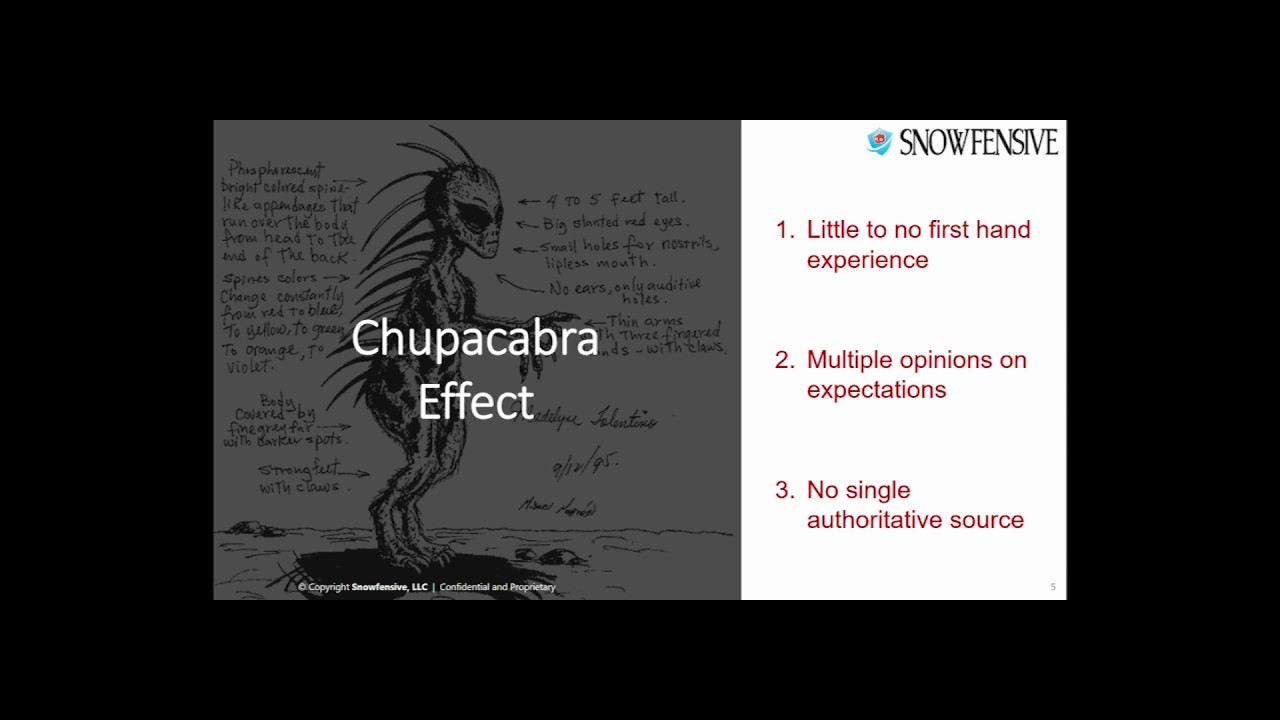

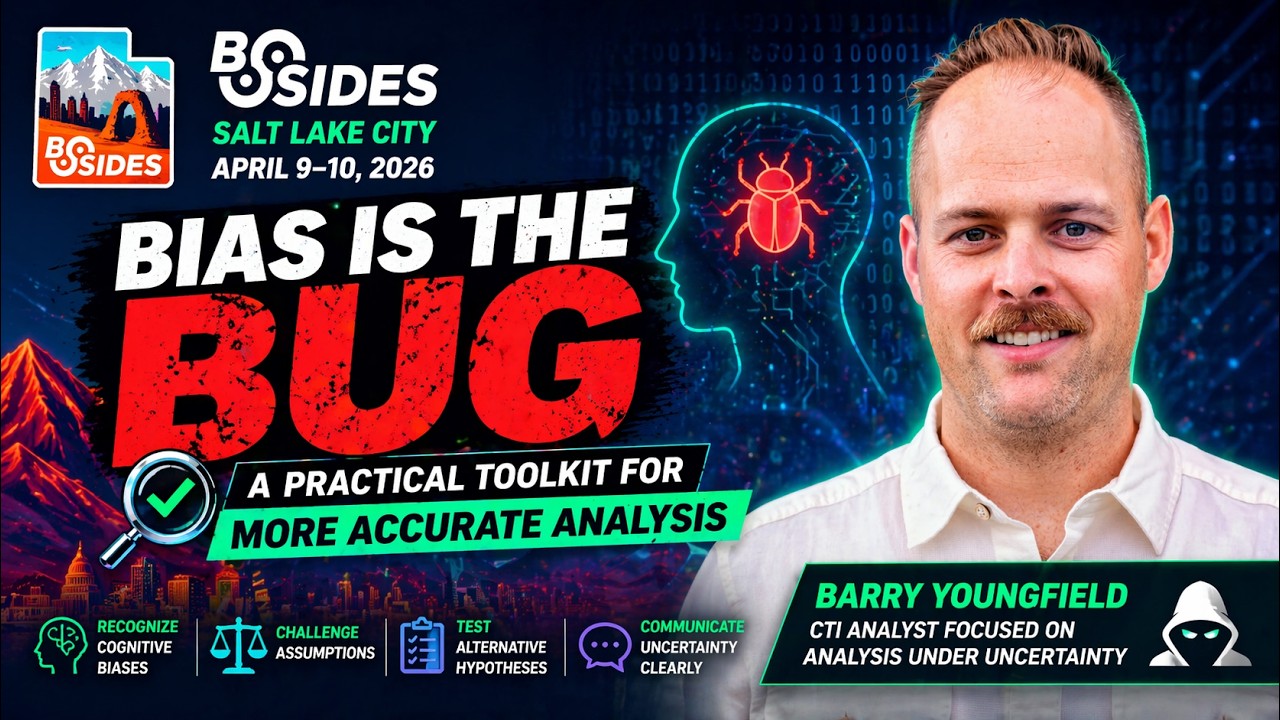

Hello, everybody. Can you hear me? Great. Welcome. I'm probably going to wander around a little bit because I tend to wander, but we'll jump right into it. This talk is "Bias Is the Bug," and I'm going to give you a practical way to get to more accurate analysis. So, this is me. What's on this slide is really just saying that I know things and that I like things. If anybody has any good space sci-fi recommendations for me, TV, reading, whatever, I would love them. And the second thing is, if you want to debate your favorite energy drink with me, I'm

happy to do that. So, with that, I'm going to lay down a little bit of groundwork, because this talk might be a little bit different from what you've seen here at BSides. It's a little bit out of the ordinary. I'm not going to talk about how I've done some cool hack or found some vulnerabilities or created an AI army to do my day job, which is something I'm still working on. I want to talk about the human programming that can interfere with the decisions that we make. So let's add a little bit more context

here. Who is this talk for? It's really for anybody, whether you're an engineer or an analyst or an admin or a VP or a developer. I guarantee that you'll get something from this talk, because each of us is making decisions with limited information. Analysis, I posit, is how we bridge the gap between what's known and what's unknown: as we claw back the unknown to find the thing that's known, we have to do analysis to get there. That brings me to this quote, which is from Richards Heuer, who is really famous in the intelligence analysis

world, in the intelligence community. There's a fantastic book that he put together, and basically all of your three-letter agencies have their intelligence analysts read it. What this quote really gets at is that we live in an age where data is available to everyone. There's all kinds of data in your environment, from firewall logs to EDR logs to a SIEM or an NDR or OSINT for enrichment. We live in a data-rich world, but we are also decision-poor. So we rely on our tools a lot to give us the answers, and that is when we can run into some issues. So we're going to do

something fun here. This is a little exercise. You can say it out loud, but not too loud: say the color the word is written in, not what the word says. I know, this kind of broke my brain as I was putting it together. What it's really meant to show is that your brain works against itself. There are different forms of thinking, fast and slow, and your brain creates shortcuts to make decisions faster. Those shortcuts build up over time, and they are what's called cognitive bias. This is a definition here from psychology,

which this talk is not about. I could spend a whole semester's course worth of material talking about psychology. But what it really means is that cognitive bias is the automatic and systematic way that your brain processes data and makes decisions, and how those decisions affect how you perceive the world around you. That's really what cognitive bias is about, and it is a field of psychology. As you can see up on screen, this is a little bit overwhelming. There are 180 of these cognitive biases that are studied throughout psychology, and I'm not going to get into all of them.

Obviously, we don't have time, and that's a lot of information. You probably didn't come here for that. What I do want to do is look at this through the lens of a common, everyday security task. So let's picture this. It's a normal day in the security operations center. You just started your shift. You have five alerts in the queue, and this is the first one that you pull up: a suspicious process that was run on one of your workstations. Digging in just a little bit, because you don't want to spend too much time when you're triaging this alert, you see that similar alerts like this have

fired on the same machine over the past six months. Every other analyst who has investigated it has deemed it benign. Since it's likely the second or third or maybe fifth time that you've seen this particular alert, you're inclined to mark it as a false positive. After all, there are four other alerts in your queue waiting for you. If you decide to dig into this one, it could eat up your time, and you might miss more critical alerts. But let's pause as you think about this scenario. Do you think that there are cases where your

cognitive bias shows up, where your brain is already making those automatic decisions about what you see here? There certainly are, and that's what we're going to talk about. I have five biases here that we're going to introduce real quick, and then we'll move on to a toolkit. The first one is anchoring bias, and "this static lead" is the tagline for this one, because anchoring bias shows up when we stick to a specific reference point, something that we've seen. That might be an initial data point, or it might be one that comes along further down the line, but oftentimes it could be totally irrelevant to

that initial question or the subsequent conclusion. In security this happens in a number of different ways. One common way: as a vulnerability management team, you might initially triage vulnerabilities based only on their severity score, looking only at the criticals and disregarding the medium-severity ones, instead of putting more thought into the context of your environment or actual attacker activity. You could end up disregarding a low-ranking vulnerability that matters. Second, a security analyst might incorrectly attribute attacker activity to a copycat actor in a subsequent investigation if they anchor too tightly onto a specific point. So when we fail to pivot from

the information that we gather and take in, and rely too heavily on singular data points, especially ones introduced early in the process, that's when we fall victim to anchoring bias. The next one is availability bias. Availability bias has to do with our brain's ability to recall information. You can think of it as your brain reaching for whatever is easiest to imagine in a given circumstance. That could be something that happened recently, or something that evokes a visceral feeling or strong emotions, or maybe it just reminds you of a

core memory, something along those lines. Regardless, it's when your brain uses whatever is most readily available to make the decision. A common way this comes up in security is when organizations build around the most visceral threats they've experienced. If your organization had a massive breach, it might make decisions based on what happened in that breach regardless of the current threat environment. That's availability bias. The next one is kind of a flavor of availability bias, but it's based on time. The important distinction here is

that, while both deal with information that is available in our brains when we make decisions, recency bias is when we give disproportionate weight to the most recent events simply because of their proximity. A classic example: whenever there's a plane crash and you're traveling, you might be more afraid of plane crashes because one happened recently, despite the relative safety of flying versus driving. In security, this happens when an organization takes reactive security stances and builds defense strategies based on the most recent attacks, the things that come down the line. And I think, you know,

cue the AI comment here, but this is something I've noticed quite a bit recently in my day job: everyone in the industry is scared of AI attacks, of how AI is going to do some novel kind of attack or enable attackers to move more quickly. But they worry about this while having a poor security posture and not taking care of the low-hanging fruit in their environment, focusing on AI because it's the most recent thing. Okay, another interesting one is survivorship bias. Story time here; you can see an airplane up on the screen. During World War II, Allied

forces were analyzing damage patterns on bomber planes that returned home safely, trying to find ways to make sure that more planes could return home just like these ones. During the analysis, it was proposed that the red areas, which indicate bullet holes in the planes, be reinforced, because if those areas were reinforced then more planes would return safely. But what they failed to account for was that the planes that returned home were the planes that survived. The planes that didn't survive probably had holes where you don't see holes on this plane. And so just

meaning that, for us in security, as we look at the things that happen in our environment, we shouldn't discount attacks that are blocked, for instance. Just because something was blocked doesn't mean you're not still being attacked, or that a motivated attacker isn't trying to find different ways around it. So when you're doing analysis, also consider the things that didn't make it through the initial filter into the data that you're analyzing. The last one that I want to talk about here is really the granddaddy of all biases, which is

confirmation bias. Confirmation bias is where we choose things that support our own beliefs. A core mechanic of this bias is that we fail to test the negative. For instance, I don't try to disprove a hypothesis: if I'm looking at some type of information, I'm not actively trying to find evidence that might prove it false. So we need to see both sides of the information that we're processing. In security this can surface in a lot of different ways. For instance, we're trained to start with the end in mind. So, if you're analyzing

something only to complete a specific task or objective, you're likely letting confirmation bias take over. Perhaps you're doing an audit and you're looking for those check marks on your list. Or maybe you're conducting a threat hunt and you come across an early hit, and that hit supports your initial hypothesis, aligned to maybe a vendor article that you're reading, but you miss everything else. At the end of the day, when it comes to confirmation bias, if you're not actively trying to disprove the theory or hypothesis, you're missing information

and certainty. By only confirming what you know and not challenging it, you're simply guessing. Okay. So, we've learned a little bit about biases. Let's talk about a toolkit that can help us overcome them. The first step to fixing the problem is admitting that you have a problem. We need to know that biases exist. We need to expose ourselves to different viewpoints and alternate information. And the most surefire way to overcome our biases is by implementing systems to combat them in our thinking. With that, I have put together what I have deemed the Bias Buster loop. It has four steps: observe, hypothesize, test, and

communicate. In the observe phase, you're trying to discern what you know, what you're guessing, and what you're inferring about the situation. It's important to distinguish the evidence you actually have from the places where you're drawing conclusions. This will help you in the communicate phase, after you've tested the accuracy of each of these. Once you have gathered what you know about the situation and put it into these buckets, you create some hypotheses, where you say, "I think that this explains what I have observed." And it's important to come up

with multiple hypotheses to get a more complete picture. This will help you prove or disprove your conclusions about those observations. It's important that we acknowledge confirmation bias here: that we look for something that could disprove what we're testing, that we're testing the negative as well. Testing is where you get down to business: you go collect the data, you find supporting points and supporting information, and you put your hypothesis to the test against them. You document where it fits and where it doesn't. Later this can be revisited

by you or others when you re-evaluate or recreate your analysis. The communication part is where you communicate what you know, what you found, how that influenced the decisions you made, and where you have gaps. In that way, you're communicating all the way through your analysis: I've got biases, I can overcome them by doing these things, and this is where we might need more information. It's a system that you can apply as you come across those biases. The second thing is a bias checklist. On this slide you'll see a number of questions that overlap with the Bias Buster loop, but it's designed to be direct questions that can

be used as is: they're direct questions you can ask, versus areas to explore. Each time we ask one of these questions, it can lead us to reevaluate our hypothesis. It can lead us to run through the loop again as we ask the question about some set of information we have. It might lead to more questions that we run through the loop over and over again. Together, these things are really how you overcome bias, or how you mitigate the bias that you have. You can make great strides by implementing the systems that we've discussed here and really come

through your analysis with a clearer picture of the root causes. So, my final thoughts on this: in our world today, with technology, with information, with AI, there's so much information that we really need to think critically about what's given to us. We're all analysts here, trying to make the best decisions with limited information. Don't let your brain go astray. We saw that we have biases. We can acknowledge them and put systems in place to overcome them. And then, just in closing, trying to overcome your biases is a core

component of critical thinking, which is probably the most critical skill that we can have in the world today. So acknowledge your limitations and identify areas to improve. I'll just open it up for questions, if anybody has questions.
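The Bias Buster loop described above can be captured as a small record-keeping structure. The following is only a sketch, not the speaker's tooling: the class names, fields, and the example alert notes are all hypothetical, and the two checks in `gaps()` (more than one hypothesis, and an explicit attempt to disprove each one) are one reading of the observe, hypothesize, test, communicate steps.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Hypothesis:
    statement: str
    supporting: List[str] = field(default_factory=list)
    contradicting: List[str] = field(default_factory=list)
    disproof_attempted: bool = False  # did we "test the negative"?


@dataclass
class Analysis:
    # Observe: keep evidence, inferences, and guesses in separate buckets.
    known: List[str] = field(default_factory=list)
    inferred: List[str] = field(default_factory=list)
    guessed: List[str] = field(default_factory=list)
    # Hypothesize: more than one explanation for the observations.
    hypotheses: List[Hypothesis] = field(default_factory=list)

    def gaps(self) -> List[str]:
        """Test/communicate: list what is still missing from the analysis."""
        problems = []
        if len(self.hypotheses) < 2:
            problems.append("only one explanation considered")
        for h in self.hypotheses:
            if not h.disproof_attempted:
                problems.append("never tried to disprove: " + h.statement)
        return problems


# Hypothetical triage notes for the suspicious-process alert.
triage = Analysis(
    known=["process alert fired on workstation", "similar alerts closed as benign"],
    inferred=["the process is probably the same one as before"],
    guessed=["earlier analysts checked the process hash"],
    hypotheses=[Hypothesis("false positive", supporting=["prior benign verdicts"])],
)

for gap in triage.gaps():
    print(gap)
```

Run against the alert scenario from earlier in the talk, the sketch flags both problems at once: the "false positive" verdict is the only explanation on record, and nobody has tried to prove it wrong.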

yeah right other questions comments

I don't see any phones. It is similar to the OODA loop, which is something the Air Force uses: observe, orient, act... what? No, decide and act. So it's similar, yeah, just with a little bit of cybersecurity flavor there. So, thank you guys very much. This is just a feedback survey here, so you can scan that and tell me how great I did or how awful I did, or tell me your favorite, I don't know, whatever. Thank you guys. I appreciate you coming. Yep.

You want to come?

Get thrust.

Well, it looks like it just rolled around to 2:30. So, uh, first off, this is my first time presenting at a conference. I was on a panel, not quite the same thing before, but I'm really excited to have this opportunity to be here. Um, I dragged one of my friends in to help me out with this. So, this is my Clangler. I got Jared. You got some introduction slides. So, I'll let Jared pitch his. You are. He comes with 4K. Yeah. Getting old, guys. So, I flew all the way into Mer from Maryland to present to you guys, which is totally misleading because I live in Salt Lake. So, here we are. Um, Jerry Jacobson. I'm a crypto head. I've been

doing this for a really long time. How many of you have a background in crypto? Josh. Okay. Sure, our manager here. So yeah, I've been in this community for a really long time, hence the glasses. We're excited to be here presenting. This is my second time, I think, presenting at a conference. So, I'm Mike Lingler. Jared and I both got our degrees at BYU. That's where we became friends back in the day. I started out as a penetration tester, I was a SOC analyst, and now I've been at L3Harris working in cybersecurity for the DoD. It's been a lot of fun. I became a grandfather

yesterday. My wife just called me saying, "I'm going to visit the grandkid. I'll see you after that." So, it's great. I have a picture on my phone if you want to see it afterwards. Super cute. Looks like a burrito. Generally, that's the way they come. And the funny thing is I am a landlord, and one of my prior tenants is in the audience, which is really funny, but I'll talk with her later. Okay. So I've been wanting to present for a while, and one of the areas that we're trying to dig into at our company is understanding quantum cryptography. What does it mean? Where does it work? How do we use it in our

embedded products? For the stuff we do at work, we build modems and radios for the military. Sounds really boring, but it's really not, because our modems will send data at hundreds or thousands of megabits per second to targets that are hundreds or thousands of miles away: on spacecraft, on tanks, on airplanes, all sorts of stuff. But the problem is that they try to stuff them in the tiniest places, so they resemble IoT devices more than anything else. Quantum cryptography is new, and it's a challenging environment, so we're trying to understand what that looks like. We figured we'd talk to you guys about some of that to help you

understand what quantum crypto is all about. We've got a lot of stuff we can talk about, a lot of stuff we don't fully understand yet, and some of the results that we've come up with so far. So, first off, I felt it was important to differentiate the terminology. There are a couple of different things. We have classical cryptography. Its purpose, of course, is cryptography. It's done on classical computers, meaning like the one I have in front of me right here. It's well established. We've been doing crypto for 20, 30 years. Bruce Schneier wrote the book. We've been living the dream for many years now. Then we have post-quantum, which is what we're going to talk about

today. These are algorithms that are resistant to attacks based upon quantum computers. This also runs on classical computers. I'm not talking about quantum computers. This is crypto on computers like the one in front of me right here. And where is it at? NIST released standards for these about a year and a half ago, and people are starting to implement them. You have some of those algorithms in the latest versions of OpenSSL right now. Then you have quantum cryptography. This is something completely different. This is an evolving standard. This is where you're using quantum mechanisms for cryptography. We're not talking about that here. Then you have quantum key distribution, which you think, hey, it's kind of related,

and it is, but it's not. Quantum key distribution is using physics to transmit keys securely. It's really interesting, fascinating stuff. There's some commercial availability, but we're not really going to talk about that here. So the main takeaway is that post-quantum cryptography is done on classical computers, but it's not subject to the same attacks that classical cryptography is. Yeah. So who knows what cryptography is? Who uses cryptography? >> Yeah. Yeah. Everyone. Have you used a phone today? That was cryptography. It's on there. Everywhere on the internet is filled with cryptography. That wasn't the case 25 years ago. You know, who's heard of Telnet before, right? That's the evil word. If anyone says you've got

Telnet in your system, auditors will go crazy. You can't use Telnet. They hate it because it's not encrypted. So you pull up your phone and you say, "I want to get online." You click into the captive portal, and you've got HTTPS to the captive portal. That's public key cryptography. Okay? You do DNS over HTTPS. That's public key cryptography. You connect to google.com. You download the certificate. That's public key cryptography. It's everywhere. It's the reason we trust looking up stuff on our phone, because we know we're sending the stuff to our bank or to our workplace rather than to the random hacker who's got an evil twin spoofing attack going. Other common

uses: SSH, PGP, S/MIME, VPNs, Signal, secure boot, software and firmware authentication. All of those use public key cryptography. Does anyone have crypto? You don't have to raise your hands, but all of that is based upon classical public key cryptography. In fact, I contend that Bitcoin being hacked will be one of the first signs, whether we're aware of it or not, of a quantum computer breakthrough that breaks all of this. Okay, so here's the mathematical basis for why public key encryption like RSA is safe. It's based upon the difficulty of factoring large composite numbers. You take two very large prime numbers, you multiply them together, and it's hard to figure out what the two original numbers were. And how do you factor a number? You start

with: does it divide by two? Does it divide by three? Does it divide by five? Does it divide by seven? Does it divide by 11? There are lots and lots of these prime numbers, so it's really hard to do. In fact, the biggest number that has ever been factored was an 829-bit number, which is well short of the 2048-bit RSA keys we're using today. Now, the math is on the screen there. What is called the general number field sieve is the fastest algorithm we have for factoring composite numbers, and that is on the order of operations shown here. We've kind of got this chart; I'll explain it just a little bit. The more items you have in

your list, the harder it is to do these things. Some things are linear, where the time grows in step with the number of items; other things get more complex the longer your list is. The worst case is n factorial, where you multiply the number of items by every smaller number: you get to about 17 items and you run out of what the computer can do. But the general number field sieve is subexponential, meaning it doesn't take quite as long as something like two to the n. If you have 20 objects and you do two to the n, that's already about a million, and it doubles with every object you add, so it quickly gets really hard to count that high

for a computer in the first place. And so that's why it's mostly secure. 2048-bit RSA keys are safe against classical computer attacks because we'll run out of energy before we finish counting the number of tries we need to get the solution. So what changes? Shor's algorithm and Grover's algorithm. We'll talk a little bit about Shor's; I don't have Grover's illustrated here. Peter Shor, in 1994, before quantum computers were even a reality, said, "Hey, I think I've got an algorithm for how to factor numbers quicker," and we believe it to be correct. In fact, the biggest number we've factored with it right now is 21. I think most of you

can do that in your head right now. So that doesn't seem to be that big of a deal, but they did it on a quantum computer, which means the technology was working, mostly. That was 2012, going on 14 years ago, so things have changed a little bit. But Shor's algorithm changed the factoring complexity dramatically, and now it's merely, oh, what's the term? Polynomial: it can be solved in polynomial time, which is a lot easier for a computer to count to. That's what's changed: we have a more efficient way of factoring those composite numbers. Now, it's speculated that to factor an

RSA 2048-bit number, it's going to take between 4,000 and 10,000 logical qubits. And that's kind of weird: what's a logical qubit? We'll talk about it. So, physical qubits: that's what we have right now. A physical qubit is a single bit of actual hardware used in a quantum computer. They're noisy and they're subject to error. You've got to keep them super cold. I don't really know; I don't have one. I've never played with one. I've never seen one. But because they're so sloppy, you need massive redundancy, and that's what a logical qubit is: you take a bunch of physical qubits and have them error-check each other. And I can't explain

it. I'm not a physicist. I apologize. I'm just trying to deal with the cryptography side. But you need many of those physical qubits in order to make a logical qubit. In fact, I couldn't find anyone claiming they really have logical qubits on the market yet. We'll look at some stuff on the next slide. So, the development of these quantum computers is still underway. IBM has a Condor product, released in 2023, which they say has more than 1,000 physical qubits. Quantinuum's Helios project claims to have 98 physical qubits with 99.9% fidelity. What does that really mean? It's hard to say. It's kind of squishy, because people are still trying to figure out what this stuff means.
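To make the earlier factoring discussion concrete, here is a rough Python sketch. `trial_division` is the try-2-then-3-then-5 approach from the talk (it tries every odd number rather than only primes, which is simpler and yields the same factors), and `gnfs_log2_work` evaluates the standard heuristic running-time formula for the general number field sieve with the o(1) term dropped, so its output is only a ballpark. Both function names are made up for this illustration.

```python
import math
from typing import List


def trial_division(n: int) -> List[int]:
    """Factor n by trying 2, then every odd number up to sqrt(n)."""
    factors = []
    d = 2
    while d * d <= n:
        while n % d == 0:
            factors.append(d)
            n //= d
        d += 1 if d == 2 else 2
    if n > 1:
        factors.append(n)  # whatever is left over is prime
    return factors


def gnfs_log2_work(bits: int) -> float:
    """log2 of exp((64/9)^(1/3) * (ln n)^(1/3) * (ln ln n)^(2/3)) for a
    modulus n of the given bit length (o(1) term ignored)."""
    ln_n = bits * math.log(2)
    exponent = (64 / 9) ** (1 / 3) * ln_n ** (1 / 3) * math.log(ln_n) ** (2 / 3)
    return exponent / math.log(2)


print(trial_division(21))    # the number factored on a quantum computer
print(trial_division(3233))  # a toy RSA-style modulus, 53 * 61

# Trial division on a 2048-bit modulus could need up to about 2**1024 candidates.
print(len(str(1 << 1024)), "decimal digits in 2**1024")

for bits in (829, 2048):
    print(bits, "bits: about 2 **", round(gnfs_log2_work(bits)), "operations")
```

Under these assumptions the estimate for a 2048-bit modulus comes out far above the 829-bit case, which is the gap the talk points at. Shor's algorithm replaces this subexponential cost with one that is polynomial in the bit length.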

All the people getting PhDs in physics are solving these questions. But the day when we have a cryptographically relevant quantum computer is projected to be here pretty quickly. We don't know exactly when; no reliable estimate exists. And if, for example, China got a cryptographically relevant quantum computer, would they tell us? Probably not. If the NSA got one, would they tell us? Probably not. So we may not know when that day really happens, but it's going to happen, and we need to make sure we're prepared. So, here are some slides from intro to quantum.org that talk about who has which computers and when they become relevant. You know, we've got this trend

line here from IBM. Looks like they're growing a little bit. You have Google coming in there. Quantinuum's got some in there. And if you take a look at when those will hit the mark of being useful, I mean, we don't know exactly how many qubits we need to factor RSA, nor do we know when quantum will happen, but most people agree it's going to happen sooner rather than later. I was talking with a gentleman who does research down at UT Austin, and he says there's nothing that says this won't happen eventually. In all the research he's seen, the fundamental objections are kind of broken. It's going to happen. The question really comes down to

when. In addition, cryptographic attacks only get better. No one says, "Hey, I found a new way to break this that's less useful than what we already know." Here's a new one that came out a couple weeks ago. The Jesse Victor Gabriogi. He's saying, "Hey, I found a new way of doing factoring. It's going to be way quicker than Shor's algorithm." Why isn't it big news? Because it's all really fuzzy math, and everyone has to verify those conjectures and try it again. So research is ongoing. However, the other problem is store now, decrypt later. If I log into my bank and I send it my bank information, and someone is writing down all those bits,

later on, they may be able to hack it 10 years from now and say, "Hey, we broke the RSA key that Mike was using to talk to his bank, and we're able to see all these transactions happening." And what if it was something clandestine? Maybe I was buying something I shouldn't. I don't know. Maybe I was paying someone off that I shouldn't have. That could be exposed 10 years from now. Now, it's not likely I did that, to be honest. Just ask my son. He's right here. But, you know, maybe you're the military, maybe you're the government, maybe you have these secrets that you're trading with your partners and your allies, and you can't risk that. So, you

have to be really careful about store now, decrypt later, because if your secrets are still worth keeping secret 10 years from now, then this is a concern today.
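One common way to reason about store now, decrypt later is Mosca's inequality. It is not from the talk, but it expresses the same idea: if the time your data must stay secret plus the time you need to migrate exceeds the time until a cryptographically relevant quantum computer (CRQC) exists, data captured today is at risk. A minimal sketch follows; the function name is hypothetical, and the years-until-CRQC value is purely a what-if input, since no reliable estimate exists.

```python
def exposed_to_harvesting(secret_lifetime_years: float,
                          migration_years: float,
                          years_until_crqc: float) -> bool:
    """Mosca's inequality: at risk when lifetime + migration > time to CRQC."""
    return secret_lifetime_years + migration_years > years_until_crqc


# What-if scenarios; every number here is an assumption, not a forecast.
for guess in (8, 15, 25):
    at_risk = exposed_to_harvesting(secret_lifetime_years=10,
                                    migration_years=3,
                                    years_until_crqc=guess)
    print(f"CRQC in {guess} years -> at risk: {at_risk}")
```

The point of running several guesses is that the answer flips depending on an input nobody can pin down, which is why long-lived secrets push the decision toward migrating early.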

for you. >> I can't remember that often. I have password fatigue. It's like a circular buffer for my >> passwords.

Let's talk really quickly about the standards. All of the post-quantum crypto algorithms are based on a different kind of math, and that's why they're secure against classical computers and against quantum computers as well. We don't create these things in a vacuum. All of the post-quantum crypto algorithms are still classical, because they run on classical computers, and they can't be defeated by classical attacks either. Okay. So if you have a post-quantum algorithm, it's not just post-quantum secure, it's also classically secure. These are some of the ones that NIST has standardized over the last few years, and we're starting to see rollout of some of the

more recent ones. The Leighton-Micali signature, in NIST SP 800-208: that one's been out for a little while longer. It's based on hash trees, and it's great as a signature algorithm with one small caveat. With the classical algorithms that we have, you can sign many, many things before they start to significantly lose security. With the hash-based algorithms, you have a fixed number of signatures that you can perform with a given key over the lifetime of that key, and then you have to roll to a new key. Okay, you can imagine the logistics problems here. If I'm deploying software and I'm using this to sign my software, I can make 1,024 different signatures and then I have to

roll to a new key. Now I have a new public key that I have to distribute or have in my trust store. So there are a lot of logistics challenges that come with this. And the signatures are all unique: each one uses a piece of the key. You can't reuse the same part of your key for another signature or you lose security. All right. FIPS 204 is ML-DSA, and then there's SLH-DSA, which is SPHINCS+. These were recently standardized; they came out in 2024. They are much more flexible, and they map much more neatly to your existing public key signature schemes. Okay.
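The fixed signature budget of a stateful hash-based scheme is mostly a bookkeeping problem, and it can be sketched without any real cryptography. The class below is a toy model, not LMS itself: `StatefulKeyState`, `KeyExhausted`, and the tree height of 10 (giving 2^10 = 1,024 one-time indexes) are assumptions chosen to match the numbers in the talk. It only shows the two rules that matter operationally: never hand out the same index twice, and stop when the key is used up.

```python
class KeyExhausted(Exception):
    """Raised when every one-time index of the key has been used."""


class StatefulKeyState:
    def __init__(self, tree_height: int = 10):
        self.capacity = 1 << tree_height  # 2**10 = 1024 signatures
        self.next_index = 0

    def remaining(self) -> int:
        return self.capacity - self.next_index

    def reserve_index(self) -> int:
        """Return a never-before-used index, or fail if none are left."""
        if self.next_index >= self.capacity:
            raise KeyExhausted("roll to a new key pair and redistribute it")
        index = self.next_index
        # A real signer would persist the new counter before releasing
        # the signature, so a crash or restore cannot reuse an index.
        self.next_index += 1
        return index


state = StatefulKeyState()
used = [state.reserve_index() for _ in range(1024)]
print(len(set(used)), "unique indexes used,", state.remaining(), "left")

try:
    state.reserve_index()
except KeyExhausted as err:
    print("signature 1025 refused:", err)
```

The refusal on the 1,025th request is where the logistics begin: a new key pair has to be generated and its public key pushed into every trust store that verifies the signatures.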

But all of these mechanisms have some trade-offs, and they have some impacts on systems that we're not used to, um, mostly in terms of key sizes and signature sizes. What makes them hard to crack involves a lot more data than you have with elliptic curve Diffie-Hellman and elliptic curve DSA, which are the two kind of, uh, most popular public key algorithms right now, classical algorithms. Okay. Um, those have very small key sizes, which is what makes them great. Here are a couple other standards. Um, all the ones on the previous slide were signature algorithms. ML-KEM is the only currently standardized, uh, post-quantum public key exchange mechanism. Um, there's one more that's, well, I guess

there's Classic McEliece. I have no idea how to say this thing. Um, but that thing has enormous keys, so it's not practical for any real use. Um, ML-KEM has much more reasonable key sizes, and when I say reasonable, that's reasonable for, like, your desktop PC. We did some investigation on a pretty capable microcontroller, um, that you'll see a little bit later, and you'll see that even with that pretty capable microcontroller it takes some time to do these things. So your little 8-bit microcontroller that you're going to use for, uh, what is it, Zigbee type of applications, in your, uh, cars, CAN buses, that kind of thing, where your premium is

on low power, like we do a lot in the military because, uh, we're tactically deploying these things and they have to live for days, weeks, years on a battery, right? Um, we have some problems trying to run these on very small processors that don't have a lot of power. So, I talked about the key and signature sizes. Here they are. Um, and you should note that some of these are ranges, right? Um, with RSA and ECDSA, the signature sizes tend to be very consistent. ECDSA will give you a very slightly, uh, different size depending on how it's encoded. Um, but for the most part, what you see is like a tenfold expansion in terms of your

signature size and/or your key size for some of these algorithms. Some of them have very small key sizes, like SLH-DSA. Um, but this has an impact on everything you do, guys. Like, if I'm standing up a web server and I have to exchange data to set up a key exchange, to set up my session key to protect my information, I now have a lot more information that's being exchanged, and you're paying for all of that. And maybe it doesn't matter too much if you have a gigabit connection. On some of our data links for the military, we're talking about bits per second, literally single-digit bits per second. So you can imagine how

long it's going to take to send a 282,120-byte key over that interface. So the size of the keys and the size of the signatures matters. And when you talk about key chains, right, where, um, my bank has... so I don't know how much you guys know about PKI. Any PKI experts in here? Yeah. Okay, cool. Um, PKI starts with a trusted root certificate. There's a certificate authority that issues what's called a self-signed, uh, certificate: it contains a public key and it's signed by the private key associated with that same public key. So what trust can you place in that? Not much, except that they're very careful with their key and they have a certificate practice

statement that, um, determines how they handle their key material, right? But they use that to sign, usually, other certificate authority keys, which are then used to sign other certificate authority keys, which are sometimes then used to sign the certificates that they hand out to regular websites and people. Okay, these chains get very large when you start talking about these 17,000-byte signatures, because every one of those certificates has a signature in it and every one of those certificates has a public key in it. So now you're talking about lots of kilobytes of data to do a single key exchange, and so your web servers are going to have a lot more burden. Okay, so these are all things to

think about as you're provisioning your systems. I think that's everything I wanted to talk about here. Guys, if you have questions,
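The size arguments above are simple arithmetic, and a rough sketch makes the scale easier to see. The 282,120-byte key and the 17,000-byte signature are the figures quoted in the talk; the link rates, the chain depth of three, and the 32-byte public key are assumptions chosen only for illustration, and the function names are hypothetical.

```python
def transfer_seconds(payload_bytes: int, link_bits_per_second: float) -> float:
    """Time to push a payload over a link, ignoring protocol overhead and retries."""
    return payload_bytes * 8 / link_bits_per_second


def chain_crypto_bytes(certificates: int, signature_bytes: int, public_key_bytes: int) -> int:
    """Bytes of signatures plus public keys carried by a certificate chain.

    Names, extensions, and encoding overhead are left out, so real chains are larger.
    """
    return certificates * (signature_bytes + public_key_bytes)


if __name__ == "__main__":
    key_bytes = 282_120  # key size quoted in the talk
    # Assumed link rates: a single-digit bits-per-second link, a slow radio link, gigabit.
    for rate in (8, 9_600, 1_000_000_000):
        hours = transfer_seconds(key_bytes, rate) / 3600
        print(f"{rate:>13} bit/s: {hours:.6f} hours")

    # Assumed chain: root, intermediate, and leaf, each with a 17,000-byte
    # signature and a 32-byte public key.
    print(chain_crypto_bytes(3, 17_000, 32), "bytes of signatures and keys")
```

Even this simplified count ignores the rest of each certificate, so it understates what actually crosses the wire during a handshake.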

Yes, exactly. Okay. So, yeah, um, you're going to fragment, is the short answer. Um, so for TLS, which is over TCP, it's not such a big deal because TCP handles all the fragmentation stuff for you. Um, a lot of the protocols that we work with in the military are over UDP, and they assume a 1500-byte packet is your maximum. We now have a problem with all of those, uh, protocols. It's a big problem. Um, DTLS, they actually foresaw this coming some years ago, and so they have a fragmentation sort of system built into DTLS, which runs over UDP. Um, okay. So, we took a Raspberry Pi 4A,

which as microcontrollers go is pretty solid. I mean, you can run a home theater on this thing and it doesn't blink at it. Um, compared to some of the microcontrollers that we run, that's a supercomputer. Um, and these are some of the times that we see performing a signature with some of these algorithms. And as you see, SLH-DSA, which had small key sizes, which is great, some of those take a very long time to compute. And just looking at that axis on the left, right, 4,000 milliseconds, that's 4 seconds to compute a single signature. It's a long time. Okay. And if you're on a web server that's under load, better have an accelerator for that, guys.
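Going back to the fragmentation answer a moment ago, here is a minimal sketch of how many datagrams a large signature or key needs when a protocol assumes a 1500-byte maximum packet. The 28 bytes of header overhead (IPv4 plus UDP, with no options) is an assumption, and `datagrams_needed` is a hypothetical helper, not part of any protocol library.

```python
import math


def datagrams_needed(payload_bytes: int, max_packet_bytes: int = 1500, header_bytes: int = 28) -> int:
    """Number of datagrams needed to carry a payload.

    header_bytes defaults to an assumed 20-byte IPv4 header plus 8-byte UDP header.
    """
    usable = max_packet_bytes - header_bytes
    if usable <= 0:
        raise ValueError("headers leave no room for payload")
    return math.ceil(payload_bytes / usable)


if __name__ == "__main__":
    # A 64-byte classical signature versus the 17,000-byte signature size
    # mentioned in the talk.
    for size in (64, 17_000):
        print(size, "bytes ->", datagrams_needed(size), "datagram(s)")
```

A protocol that was designed around one message per packet has nowhere to put the extra datagrams, which is why the fragmentation support in DTLS matters.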

Um, here we were signing 10-kilobyte messages. We did it with 100-byte messages and one, uh, megabyte messages, and the results were very similar. Most of the computation time is in the signature, not in the hash that's used in the computation of the signature. Okay, so very capable. Here are the signature verification times. The good news here: these look an awful lot like the classical algorithms that you're used to now. So this is good news, but it's the only good news. Um, and part of what makes this good is that for your lower power devices that are out, uh, reaching out to a website, as long as

you're not using client authentication, um, all you're doing is a single, uh, signature verification. So, your low power devices are going to be okay-ish on this. Okay, getting close to done. IoT devices tend to be very low power and not patched very often. That's another problem that we can talk about another day. But, um, they also tend to be kind of difficult to update, and because they're low power and difficult to update, people don't do it very often. Um, whenever your, uh, so your smart thermostat, for example, gets a new update, it has to do a signature verification on that update. I hope. If they're not doing that, we have

problems. Um, so that can take a very, very long time on these low power microprocessors. Uh, some concerns: like if you're in the medical field and you're doing things that are embedded inside a human, um, those have to run off of a battery for a very long time, right? Because you don't want to be opening people up to change them all the time. Um, and your communication protocols are very low bandwidth. So, um, the implications for the IoT space are kind of frightening. Um, also another thing to think about: a lot of, uh, modern microprocessors have accelerators for these things built into them, even the tiny microcontrollers, but right now they only have that, like, signature

verification built in for classical algorithms. So, we're starting to see vendors express an interest in this, but there's nothing on the market yet. And if there's nothing on the market yet, guess when it's going to get fielded? So they're working on it. Figure two to three years for the development cycle for a lot of these processors. Um, and then it's going to take developers time to get these in their hands and start writing code for them, right? So you're talking about a 10-year thing. And what we're seeing in the literature related to quantum is that around 2030 things start getting risky for these, uh, classical algorithms breaking. So if that doesn't scare you now, it probably should. Also, if your company deploys

software and sells it, you need to be thinking about your quantum-resistant signatures. If you deploy software to other places... I don't know if you were here for the guy's talk two ago. Um, but your Fortinet firewall, when they do their update, yeah, it should be signed with one of these, um, every time they do a new deployment. Oh, yeah. Okay. So, I think that was our last slide. Oh, it just wasn't going. Okay. So, hybrid approaches. Um, for TLS and DTLS there's a big standards effort that has to happen here to get these things into the standards. Fortunately, for TLS, uh, the consortium and the Internet Engineering Task Force that looks at that has already done some of that work,

and the scheme that they've come up with... because the post-quantum algorithms haven't been out for all that long. We're talking maybe a decade. We don't tend to trust security things, cryptographic algorithms, until they've been tested for 30, 40 years, right? Um, because catastrophic breaking could be a thing here. We haven't been hitting on them. Cryptanalysis of them has not been a thing for a long time. Um, and so to mitigate some of that risk, they're developing hybrid schemes that do both a classical signature and a post-quantum signature, a classical key exchange and a post-quantum key exchange. That's great for security. That just makes everything bigger. And we already have problems with the bigger, right? Um, so it's good for

security, not so much for the other. So here are some of the challenges. We're getting really tight on time here. Um, we'll fly through some of these. We've brought a lot of these up as we talked through it, but protocols have to be updated. People have to update their hardware. Look through your supply chain, guys. If you write software and you send it to people and you want signed updates, this needs to be in your system. Um, I was talking to one of our developers the other day. Several years ago we saw this coming and we bought some HSMs, uh, hardware security modules, that could do the signature for us. We made sure that they had post-quantum

algorithms, LMS, and that we could integrate it into our system. Um, I was talking to one of our devs the other day, and he was like, "Oh yeah, we should probably get that into our package manager so that we can do our deployments." I'm like, "Yeah, we better, right?" Um, so it takes time to work these systems and these processes into your corporate structure. So we need to start thinking about this now. The standards have been released. It's our mitigation period today. If you remember Y2K, people talked about it for years before, and we were ready to go. But this one's going to catch us by surprise if we don't start acting. The hardware is

not ready yet. We need to push on our vendors to get these supplies ready for us so we can purchase them and deploy them into our systems. Um, and here's our key takeaway. The quantum threat is real and approaching. We can't see any reason why this won't get there eventually. New algorithms will resist the classical attacks, but they have a trade-off. They have a cost associated with them, and we need to understand that now rather than later. Also, we're looking for good engineers. So, if you're looking for a job and you like to talk about crypto, come talk to me later, or Josh over there. We'd be happy to chat with you about that possibility.

>> Not that crypto, the other. >> We don't do money. >> Oh, sorry. Not cryptocurrency. Thanks, Jared. Yeah, we're focused on, uh, protecting secrets, not trading money. Okay, I think we have like maybe one minute if we've got any questions.

>> Yeah, they're expensive. So, you're not going to see, you know, uh, your teenager in somebody's basement out buying one of these. They're also expensive to run. Um, so it's going to be large organizations. It's going to be nation states. Um, there are some other uses for these, uh, quantum computers other than breaking cryptography. Breaking cryptography gets a lot of press because it's kind of a big deal. So, um, you'll see organizations start to pick up on them as a kind of supercomputer for certain problems. Um, but you're not going to put one in your garage, at least not in the near term. As the technology matures, who knows? I mean,

people said that we would never have one of these in our garage either. And now we have 500 of them in our house. >> Well, thank you so much for coming to our presentation.