No Silver Bullet: Multi-Contextual Threat Detection via Machine Learning

Show original YouTube description

Show transcript [en]

up next uh we have rod soto and joseph zade from splunk turn it over to you guys hey good morning and uh i'd like to thank the besides organization for having us we actually did this presentation earlier this year besides new york we have updated it with um new information new data and hopefully we'll have a bonus for you it will give you a preview of an open source tool that we're going to release today at blackhead arsenal if you were previously on on on the keynote you probably heard a lot of truths of uh about the mishaps of machine learning we're not here to preach you on machine learning as a panacea but we definitely believe it is as a an

enhancer and i would say a battlefield enhancer when you are fighting against cyber crime we're still in the early stages though so who are we my name is rod soto and i am a researcher with splunk uva usual behavior analytics former caspida this video was acquired by splunk over a year ago and we were a startup that was using machine learning for user behavior analytics i used to work at akamai technologies i was a the senior the principal researcher for prolex and plxtory i like do great things palm botnets and i play a lot of cdfs and i develop a lot of ctfs as well then i have my partner in crime here jefferson he is a senior data scientist

at splunk uva and we've been working together for over a year or so um and we do have a for those who are here interested in this in this area and either have a data analytics or data science profile and security it's usually good when when both of them mix and they can talk to each other a lot of times and this is sort of like a tangent in the industry you'll find somebody that's a pure data scientist and they don't understand what what malware is for example they see it as numbers or you have people that are high quality security researchers but don't understand the concept of the statistics so we've been able to have that synergy because we

both have survey a a gradient where we work at one point and we're prior are interested in the hacking community so in order for us to talk to you about machine learning obviously we have to talk about the big data big data as we all know is um it's something that is described a it's a bunch of a variable very large and fluid amount of data that cannot be processed by normal means right so with the environment of big data we had things like hadoop hadoop is actually in my opinion a a revolutionary technology is still advancing but it gave us a way to use commodity hardware to do distributed processing and analysis of all this

big chunk of data that otherwise would not be possible to analyze and and make conclusions or assertions on it um so we're going to talk about uh the machine learning applied to security workflows and how it can help and it's limitations and we're going to describe a central nervous system approach to behavioral security we're going to talk about the lambda defense architecture so as i said before big data is obviously defined as a very large number of data that is very uh it's fluid it says it changes constantly and is very large in size so it's usually very difficult to process to process on its own and it requires lots of processing power in order to be

tackled so right now the way we see this uh and and and serve a putting this together with current technologies and defense technologies we have enterprises where there's there's a plethora of devices with lots of logs and alerts that are constantly producing data and this data is distributed in too many places and the analysis is usually slows and uh a lot of these companies are using same products which they definitely allow us to put a lot of data together but in certain cases or in cases where decisions have to be made pretty quick they fall short because of their limitations and processing power so like i said before sim definitely makes life easier but it sort of gets to a

certain point where it's almost a stagnant and that's why in technology such as hadoop provide an advantage to process this data and to apply machine learning technologies such as algorithms and we're going to talk about that we're going to talk about you know how you train algorithms how you know when the algorithm is ready you know machines are like babies right so i hear sometimes when somebody says yeah i can beat a machine yeah you can also be my eight-year-old daughter in chess right why because she's not trained once that machine is trained it has the knowledge and the experience that you have we we it's going to give you a good run for your money right so sometimes

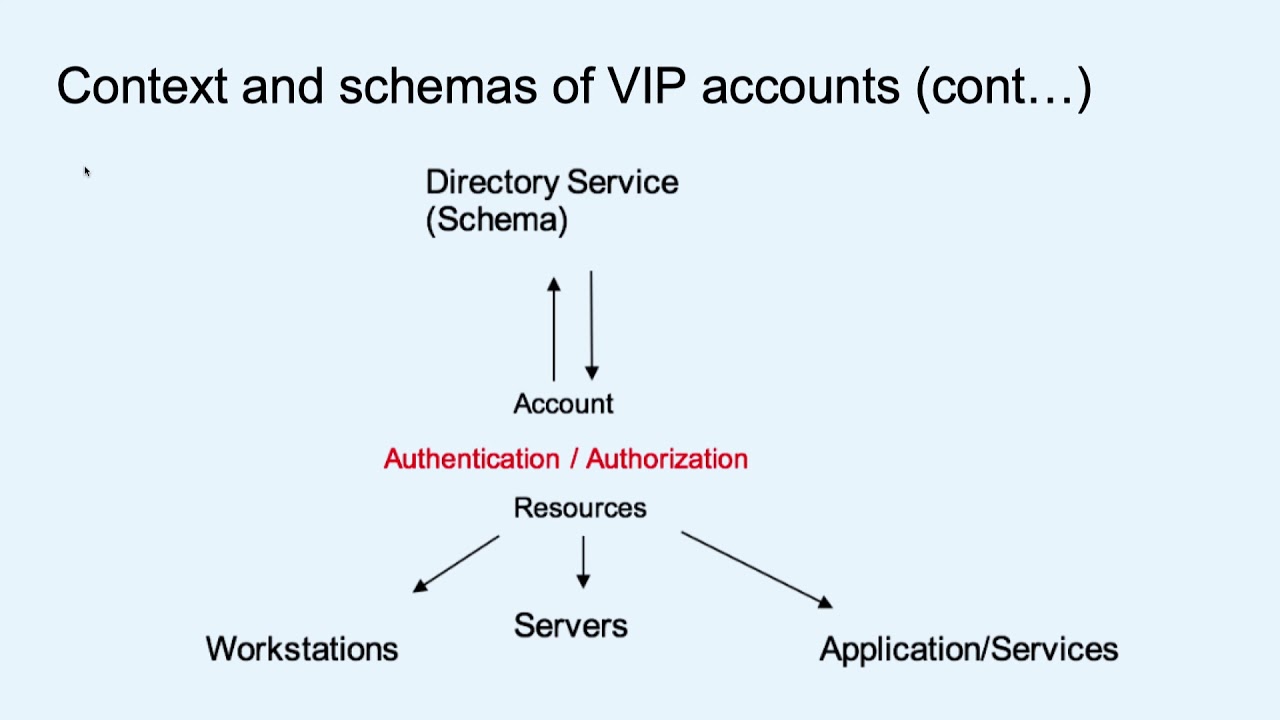

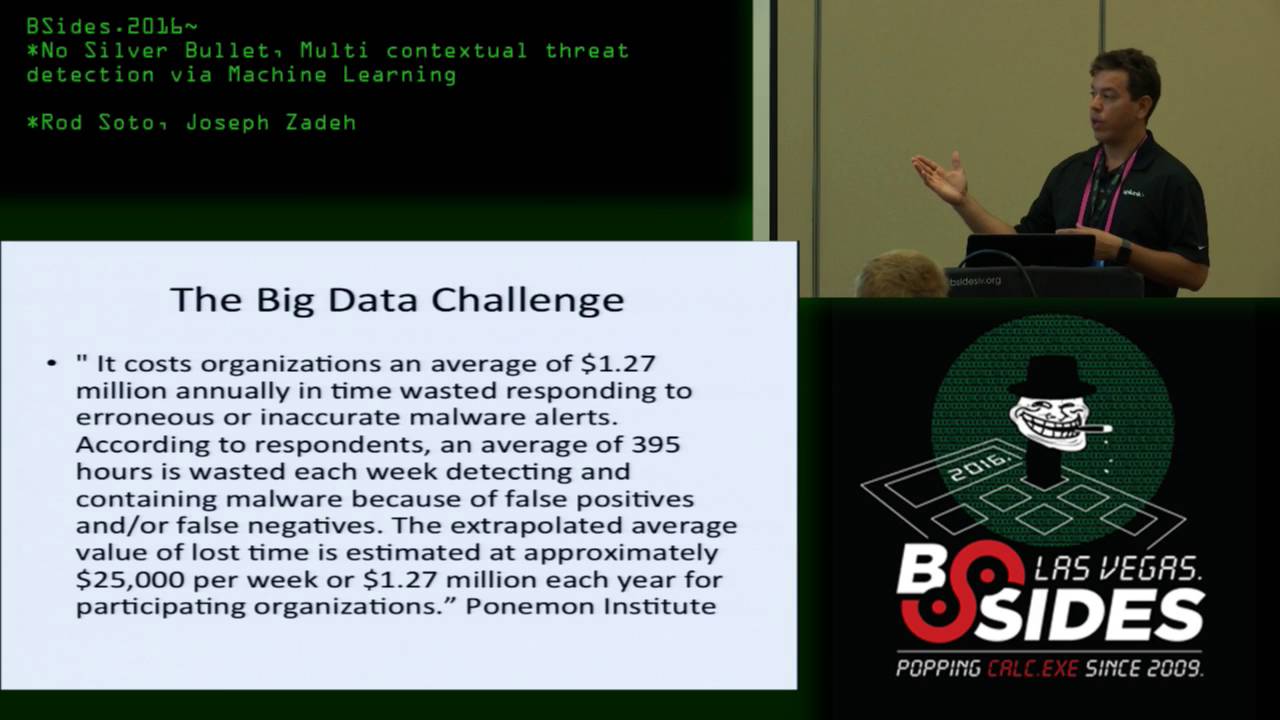

seeing machines i know sometimes we feel somehow nervous of anxious about the the machines basically coming over to the workplace and probably taking over a lot of our work functions so one of the things that we believe is that for example stock one positions at one point will be taken by machine learning technology and you'll see that as we move and we'll show you the architecture however we shouldn't be that anxious this this is a technology that is still its infancy this is a technology can be understood it can be managed and so far it can be controlled so here's for example here's a one one example of the utility of these technologies right it cost an

organization an average of 1.27 million annually and time wasted um responding to erroneous or inaccurate malware alerts you know according to respondents an average of 395 hours is wasted each week detecting containing malware because of false positives or false negatives the extrapolated average value of lost time is estimated to be approximately 25 000 uh per week 1.2 me seven million each year for uh participating organizations there was a survey that was done and here it brings back what i said you have a an organization is full of this wonderful defense technologies you have your pants your fire eyes your snorts your you name it and all that data is going to the scene right but then you

need this balances with people that are highly skills and almost like intuitively knows what to look for right if you ever work i worked at a stock for 48 people and it would take us between six to eight months to get somebody up and running and if you ever worked in a stock you know that the turnover is around a year and a half right so we're talking about somebody you train for six seven months it lasts another six months and then it says uh-uh i'm going somewhere else right so this is definitely a challenge um and these technologies can definitely help to to improve the texture and to provide us with means to tackle these issues

so i just said that about how stocks are challenged they require training um and to be honest uh until we train the machines and we get to a point where this technology evolves is it comes down to people versus people right people versus people means you as a skilled person as somebody that can put yourself in the mindset of the attacker against the attacker um and we will talk about that in the adversarial drift and how that becomes a problem and it's a challenge that can be tackled with machine learning

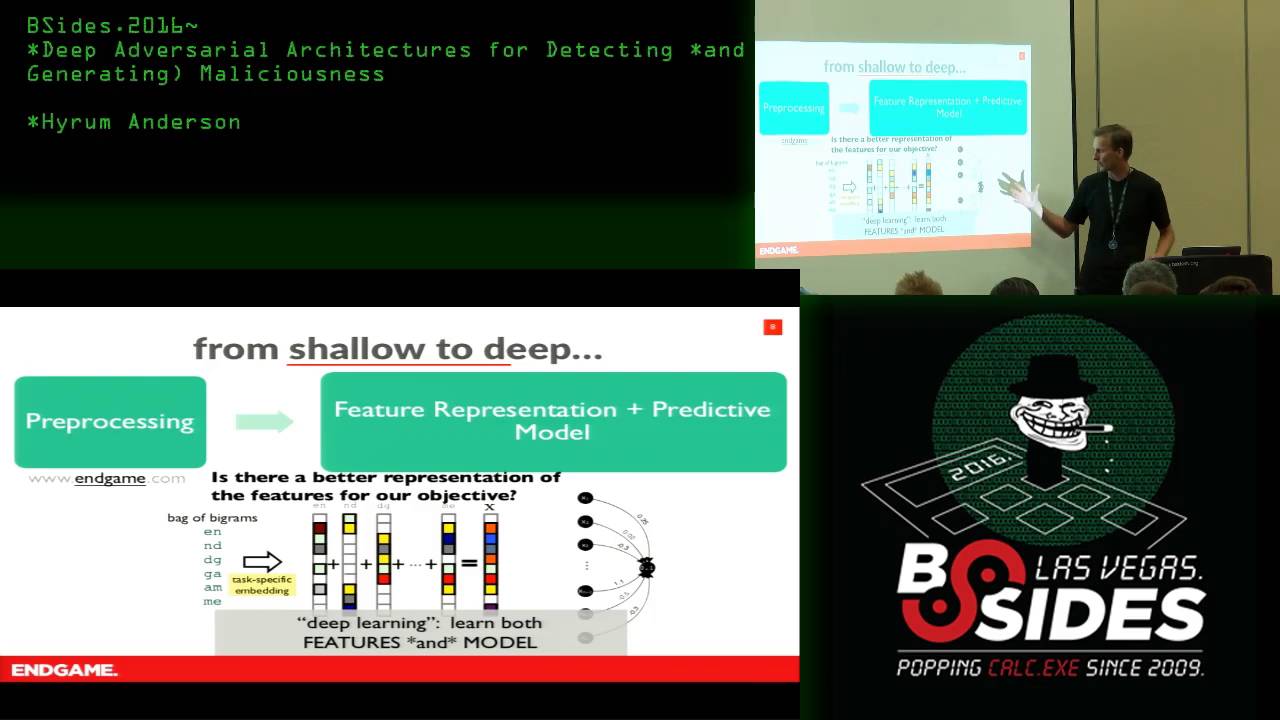

so machine learning is basically a subfield of computer science that basically evolved from the study of pattern recognition and computational learning theory in artificial intelligence basically with machine learning we have the ability to process very large sets of data through distributed computing plus the ability to apply algorithms that can learn based on these large datasets and this provides analysts with more meaningful detection and actionable items and you you're about to see that so when we talk about algorithms or learners that's how they are known in machine learning when somebody says learner it means they're talking about an algorithm so here's some some some generic definitions so it's a process a set of rules to be followed in calculation or

other problem solving operations especially by a computer these algorithms or learners can be designed to develop on scale against all the sources of data which is what we're about to show you one of the the the advantages of this is that um by applying machine learning you you can complement static based defense technology so far we have been depending on on a static signature based defense technologies i'm telling you that because i i i when i worked at akamai i would write snow rules all the time and when we were looking at malware it was the same thing we were looking at static stuff the attackers either ddos or developing uh any type of malware will obviously

realize that we were creating signatures and then they would change it and this presents a challenge sometimes during campaigns you have a window after 10 or 15 minutes to come up with a signature that they know they're going to change so definitely it is a challenge and we can use these new technologies to tackle this problem so by applying these learners you can also build models and these moles can approach threats from a multi-contextual dynamic perspective so we go beyond the static-based security technologies so um with that i'm gonna introduce my uh colleague and he's gonna tell you a little bit about sequencing the security dna can you guys hear me okay um so uh

just a kind of brief intro about me i'm i'm a data scientist so i take a lot of like the security language and i try to translate it to code basically and um part of the part of the challenge in this problem space is usually when we're kind of talking about this stuff um and particularly these slides are just sort of translations of how we a lot of like conversations that we've had with customers and basically pivoting back and forth between three languages which is sort of the security centric language mapping these languages to an architecture or platform and also um kind of expressing them using some some ideas like statistics and machine learning and stuff so um

so kind of how this presentation fell together is we we actually had to put put some papers together for a conference called kdd which is like a machine learning conference so there's so there could be it might be a little bit dense um definitions and stuff in here but uh the goal is to sort of translate some of these things these these terms that are kind of floating around a lot of as a lot of buzzwords particularly machine learning and map and kind of mapping back to your security intuition um and so one of the most interesting parts of working on like analytics or building like a big data application in cyber security or more more generally in any problem space

where you're modeling an adversary it's really it's sort of a really interesting like quarter case for artificial intelligence because essentially what what artificial intelligence is in some ways is just is um taking uh taking an algorithm that's really good at generalizing information about known data or label data and so what what a like a label is is a really kind of key piece of input for for any of these algorithms we're going to be talking about and in in security particularly uh you run into the problem of not having very many labels uh that's already sort of like just just kind of a a an accepted part of the the space and um the other thing is which is more complex

is the labels change over time and this this happens in sort of different scale so i spent a lot of time modeling like um just kind of covert channel type backdoor communication c2 the like malware beacons over the last few years and and it's really interesting watching how like when we used to model h tran and and some of these sort of classic rats um you could kind of rely on on a steady sort of assumption of of some kind of callback behavior over time it might be randomized might but basically there's going to be a footprint on the wire at some point nowadays like the in-memory malware um that sort of persisted from from the perimeter at web shells like

this really interesting um i guess it's a couple years old now but it's a crowdstrike talk called uh uh beyond the indicator and and i suggest you guys like check it out if you're interested in some of these sort of like the the the drift in some of these behaviors that that back in the day you could just rely on on on on a back door kind of leaving a network footprint at some point now they're just becoming much more stealthy in the sense that once the malware is is basically it's residing in memory and once the op is done they can just drop it and there's not even a file system footprint um so there's just this is

what we call kind of adversarial drift over time the behavior of the model changes and then the other kind of thing to be practical about so this is this is a slide i took from one of our security researchers uh named monzy and and he uses this to talk to a lot of to talk to customers about what it means to do defense at scale um across like a a complex threat surface so so so part of the issue when you're doing like enterprise security is is really we're talking about many things we're defending against we're talking about all kinds of different attack vectors all kinds of different like depending on if your health in health care you got to

worry about you know biomedical devices get getting compromised if you're in like um like the the electric industry now we're worried about iot and there's just so depending on kind of where you're positioned in the enterprise or in a particular sector um there's there's many many types of threats you have to worry about and then and then the way it kind of helps to to deal with this both from a modeling standpoint and just from a security posture standpoint is mapping mapping kind of your your security your defensive posture and your point solutions or whatever defensive components you have to a model of the adversary and i find this really useful in a very kind of mature way um to to

sort of uh kind of break the space down a little um and and and so uh and that hopefully that's kind of one of the good takeaways i'm going to walk you through sort of what it means to like unfold a little like machine learning algor analytic as a just a real simple proof of concept and and the idea is to show you that when we're running these these algorithms they're almost like hashes of information um you take a big piece of it like labeled data and the output is maybe a set of coefficients or a hash of some encoded information that that we then use to do predictions on and so in that sense they're they're the brittle by

definition so um analytics machine learning all these buzzwords we hear i would say you know i would like the nation state and we here at apt and some of these advanced threat actors that's a game of human intelligence much more than it is automation we want to augment we want to augment the human analysis as much as possible but at the end of the day um it's much easier to build a machine learning analytic on like exploit kit samples like that's what we we've been doing for for black hat we grabbed like a uh 400 um labeled exploit chains and and we have the actual like payload delivery and i and i and it's because

it's generalized across many exploit kits you know there's there's some invariant behaviors in this in this um in this sort of threat vector and so i kind of think it's it kind of falls a little bit more in here where i'm where we're not um we're not dealing with adversaries who have like lots of resources who can kind of change and and really stealthily hide their tactics so um so anyway uh just to sort of like dive into like what what we're doing here it's basically just trying to um accelerate some classic workflows like i've been kind of doing this um off and on more or less at from like a consulting standpoint just building custom

uh solutions on top of like uh uh first generation sem data when when the sem data like like what happened with first generation sem like qradar uh envisioned some of these big systems that were sort of classic inputs to a bunch of different data sources this is sort of like how the sock in the last last decade or so was uh basically landing all all the uh the data for from a normalization standpoint and and kind of triaging and escalating incidents in a day-to-day workflow so kind of spending time in the sock you you sort of realize there's a lot of these pain points and rotary touch on them part of it is just sort of like like if you have an

incident like oftentimes to get a full picture of like doing the digital evidence collection you have to touch a lot of different data sources you have to correlate across different data feeds you know pivot off an ip address and you know look at the subnets around and there's just depending on kind of what what problem you're looking at there's sort of a search space you go through as an analyst and and this the search space is pretty it's pretty creative in some sense that's why one of the heart this is a hard problem to map to like an automated solution because really depending on like the piece of evidence the use case we're talking about um we

you know we we sort of adjust our kind of search patterns and what it means to to to verify uh an event is a true positive or false positive essentially um so but but there is benefit like we like i think that the point here the message we're learning is is for one um certain problems kind of will will naturally be be helped by uh by some kind of algorithm uh that that is essentially what what i like to look at is is like an optimization really so so um so uh so so basically so here's we're just gonna kind of walk through before we sort of show you like how we're applying these algorithms to sort

of like a central nervous system approach at scale um this is sort of like what's what's happening with any one of these algorithms if we talk about it in some sense um so so uh basically this is kind of like a really hacky way to describe like a whole you know body of knowledge um but but but there's this idea in machine learning like formally called vc dimension and kind of so this is sort of like if you're interested in the definition like mathematically this is sort of what we're talking about here basically if you think about what what it means to learn something and we do this in our brain because you don't want

to learn like something's dangerous every time uh you encounter it like like it we we take hashes of the information and then we're able to do quick look up so that i know oh a lion is going to kill me if i see it you know instead of having to sort of to reprocess that and so that's kind of what happens with data too with with anything you know in in a learning workflow is is you take a bunch of input data and and there's some some brain or some algorithm that processes the input and it sort of creates like an encoding of what what that input really means in some generalized sort of feature space

and so kind of what to just really quickly um basically what i just did here is i took uh sort of like r the the this this sort of open source um statistical programming environment and basically i just these are like default data sets in r so so all we did here was take a an input data set something to do with like cars uh it just has a bunch of different stats about cars and basically what we did was we fit a model this is about as simple as a model as you can have in in kind of these these predictive models and it's called it's just a linear model so it's a regression you're basically

fitting a line given a couple of predictors so so these are sort of the variables we are saying is basically saying we want to model miles per gallon as sort of a linear combination of a couple of key variables which is weight displacement and a cylinder number um but at the end of the day what the point is what we're trying to show is we take some input we we model it with an algorithm and the output of this this this whole process is basically like these coefficients describe the line that we learned so now when i take a new data point and i predict using this model i'm not using this whole this big

data set anymore i'm just basically reading off this hash and saying oh yes are we on the line are we over it are we off so you can you can get a lot of information from this those little i mean there's more information than here there's metadata there's sort of like have to kind of like assign significance and p-values and stuff but in any case like it's just sort of like a real kind of um hacky way to explain uh the learning compression duality so it's just sort of like there's a lot of ways you can think about machine learning and it's a buzzword that's thrown around a lot but from from a security standpoint and from

any problem space where you're dealing with uh sort of changes in time over the underlying ground truth and uh and more and also like adversarial drift then i would say this is this is like something we have to use very carefully in because it's a brittle process from this from these slides oh and and so here um you know if we have time i'll show you the code that we were writing for this black cat project and basically what we're using it's kind of like well known that random forest it's just a type of learning algorithm you basically give it some input and just like the the linear model it's going to give us a prediction that this

is sort of what we're going to use because it's kind of best in breed if you know all other things being equal if we don't know anything else it's sort of what i tend to try so i don't have a lot of time um and and a lot of this stuff we're doing just kind of like as a side note is is i mean although basically the last two years since since we've been part of the startup in splunk we've been leveraging um a lot of open source apache projects so all these things we'll be talking about are essentially built with um either apache storm or apache spark and some other things like ml lib

um so okay okay so here's this might be a little bit dense but this is sort of basically a uh this came from a a conference called kdd which is like a machine learning conference so this is already kind of really academic um and i did i did my like grad school and stuff and math so this is like some of the definitions here come from like they're not security at all like particularly probably uh well yeah i mean the space of possible behaviors is a continuum at this point like i i like i say this a lot um to to customers and it's probably a worthless statement but in some sense like um there's a lot of depth to this because

this sort of represents of the pattern we've been using to our to to to take an enterprise and decompose their their threat surface into actionable units of of kind of like ttps essentially or or just a set of patterns that we want to model with an analytic or just augment a point solution signal and so this is sort of how we kind of express this this pattern in a really high level workflow that was meant for a paper but uh that's essentially what i'm trying to break down in in some sense so but basically for from a high level we we take a threat surface and what i mean by threat surface just really quick is that let me find it

so like a threat like that's kind of a bad picture but like for a particular like environment like i used to show this slide to a couple like like large fortune 500 companies when they would talk about like the 30 use cases they want wanted to model and and and and kind of handle from just from like where their sock didn't have visibility like oftentimes like a great like question that comes up is like how do we get more visibility in ssl traffic or how do you know we were we have like um you know uh some kind of covert channels over ssl or how can we better find um pass the ticket or golden

ticket just depending on the use case um it turns out that that you want to sort of enumerate the threat surface and then just start assigning either models or um or uh the way it's kind of described in this this workflow is is a family models so um so yeah so in any case if anyone's sort of curious about this paper or something like it's it's sort of a work in progress but you know come talk to me afterward i'll give you a copy of it and stuff it's it's a little bit dense though um but basically kind of like what what ends up happening from a practical standpoint is what we're mapping um essentially there's two computational

workflows especially in these big data systems they're called like real time and batch workflows um and and it turns out that if you sort of take any arbitrary behavior basically you can kind of break them down into two sets of things essentially that sequential behaviors and non-sequential behaviors and and and if you if you sort of expose this in the right way there's uh there's basically like a system of uh of uh workflows that map like that map out how these how these behaviors are computed and it sort of becomes like an architecture question at this point so essentially what what i've been doing from a modeling standpoint is tape taking like a a real-time engine like in in this

case it's apache storm and then blending it with a a batch engine and and then what happens is in this in this batch computational framework like the thing that the reason we're sort of structuring it this way is all these sort of projects like are highly scalable on commodity hardware so you know basically the number of clusters or the number of um vms i have is sort of like what will kind of scale linearly across the number of users i'm analyzing in a whole environment and so we're sort of like what we're doing is like in first generation sem there was basically bottlenecks because because these things were stood up on on on relational database back ends so you

can't do like a complex analytic query like a good example is like page rank like an algorithm like page rank or some of these algorithms that are really graph heavy that process like really they're basically large combinatorial optimization problems or just large problems where they they don't scale well across a rel relational database and and so we're kind of taking that that that pain that sort of has been felt in the enterprise for the last few years and we're trying to kind of optimize around it so so when you kind of decouple the mutability of a transactional related relational database in the backend of these architectures then it lets you scale out much more complex

analytic computations across individual users so now essentially what i what i do per per user is i'm able to assign like a thread a thread of memory or state per user in in sort of a in a highly parallelized way and and um and uh i just wanted to jump around a little because it's more like i kind of jumped ahead because probably i should have started with this picture because this is sort of like what we're getting after is for a particular m for particular use case like i mean sometimes it's not users it could be ip addresses it could be anything that's sort of a primary key but basically i want to study their like

a particular entity's behavior over time either over over sort of a batch workflow or a sequential workflow so in this case this is sort of a real-time computation where we're doing um the analysis of exploit chains and basically what an exploit chain is in in some ways is just a a set of like interesting requests that will happen in in a short amount of time and if you start breaking down like the mime types and looking at i think there's an example in here yeah so here's an exploit basically an exploit sequence that happens in a really short amount of time i think this comes from like a some some kind of exploit kit sample from contagio

but uh the the the point is here like if we look at the mime types yeah so it's a text uh here's like the flash exploit they're dropping a payload and this might even be c2 at this point so like basically this happens in a really small amount of time if you're a firewall and you're sort of like sitting in line like looking at all these logs well the the problem you have is that like there's gonna be other people kind of in between stuff in between here so you haven't sort of sequenced the right way like basically like it's hard for firewalls to keep state across multiple users at the same time or anything in line because basically

hardware only has so much memory so what happens is instead of like letting the hardware the point solutions have to maintain a bunch of state for every user in the environment we just land these logs you know to a sam or for you know basically like a data warehouse and we're able to kind of um uh uh just re like essentially group by and sort i mean that's that's all that's sort of happening here but basically this this this sort of sequence of flows now lets us get a really simple picture and and and so this is like essentially one use case and maybe um in in a bunch of different uh models that are focused on the

sequential side of things so that's kind of like um i mean that that's more or less sort of like the the moral of the the story at a high level here what we're trying to convey just a comment about that all this is done by centralizing remember what i said at first that how we have all these similar sources of data right and then we you had this tons of logs like what we just saw in the in the uh getting the sequence or the explo the display chain you can't you really can't do that if you have a a stock analyst you have to look at multiple sources right he has to go to a firewall has to go maybe a tcp

dump maybe a fire eye whatever it is but it's it's very dissimilar by us concentrating this and um sometimes you have to do some etl and i'm not sure if you you guys know what etl is it's called extract transform and load so basically we're getting all these similar data sources and put it in into something that can be understood and then processed by doing that then we can apply the algorithm to it pooling for all this multiple sources right so we just don't depend on just a firewall anymore or uh or even an expert eye the x prime you needed to train the algorithm which he can tell you a little bit and you'll see how the algorithm

sometimes you have to feed them with benign data so then they can tell what uh malicious data is so that's the context that that we're looking uh in that a slide where we can actually the algorithm say look um there is the sequence of mind types you have the text html flash or pdf and then you have octet stream and then you have some survey outbound or even ssl or sometimes you have door that happens a lot on exploit change so so if you you can combine that with other things such as you have av locks for example and you you get a lot of a bunch of uh 40 um i think it's 46 24 which is uh you

should relate to past the hash or any other type of ad event where that would spike at the same time that this was happening or or sequentially then this gives you a strong indicator that something is going on whereas when i have all these dissimilar resources or i just have a saying which had to constantly query and it's based on a relational database like he just said there are limitations on getting to it so i don't know if you want to add a little bit of the training on the algorithms yeah and that's essentially you know so part of what we're i mean i jumped around a little but basically the the the the goal here is describing

a system that from like uh if we're just talking about like silicon valley terms what this is sort of reference to in the valley is called like a lambda architecture which is a reference to um a term nathan mars coined when he was working on the storm project at twit twitter and um there's sort of a whole interesting history here this is really where the the the big data in some sense is very bleeding edge and basically we're taking advantage of the fact that there's first generation bottlenecks that are kind of well known in security workflows we're kind of decoupling those bottle bottlenecks by by by leveraging immutability in these different workflows in a very very um

nice way so so the mutable side of all the state happens in this kind of real-time path and and and because of that a lot of things become real really clean when you got to scale it so so one of the things that happens on these systems is is their their learning system so then now we have to answer from an engineering standpoint like what does it mean when i'm not i don't have rod like or an analyst sitting down and and kind of telling models to do things like kicking off a job having a model to relearn so we have to answer all these questions from an automated from from from an api perspective basically

and um and we we have to implement something that that this whole platform is monitoring so i think even the system we're working on that's a commercial application there's about 50 models right now and that that's really like the power of these systems that like that the the only way they're going to do anything for an enterprise is if you you augment it like existing signals with with with the power of many kind of analytics that are blended blended together essentially and so all these models talk to each other they're first-class citizens we use scala so they're it's a very expressive language and basically all these like so when i write a command and control model i can

kind of subscribe to the output of other models that that are are focusing on on similar use cases or just maybe tracking users and and and combining the output of of the peer groups for different users with kind of what what a typical um covert channel looks like gives you just basically augments lots of signals together um and so there's i mean there's there's an api that does all the learning for us if anyone's it's kind of like this is for like another dense topic um but basically we sort of have a a whole for any model how how we update it in parallel is basically like a map and reduce kind of a workflow so so if

anyone's really interested in these like really technical details just come come uh come talk to me this is part of this paper i i can send you if you're interested um you are a little better oh yeah yeah because i want to see if we have time to show the tool too okay so this is something that obviously the community is very interested in okay so so what is that the the extent of this uh you you hear things such as overfitting or underfitting overfitting is for example do you see that sequence of mind types where it says okay there's flash and then there is there's a text then there is html there is flash there is uh sometimes there's

adobe then there's an octet stream well overfitting would be for example if you have sometimes like a cdns cdns become a problem because some of them behave like botnets and if you actually look at the sequence of connections um then the algorithm starts saying hey there's an exploit here so we have that's one of the challenges oh it's called overfitting right so i see a certain sequence and start uh basically transferring that so sometimes certain benign traffic like cdm or video streaming or sometimes when you have users that are using certain apis and and this apis have ui strings that suggest there's commands and sometimes that becomes a challenge for the algorithms to say okay well this looks

like an exploit so that's called overfitting underfitting is the the opposite of it which is the model is not trained enough right so like we said before before we get you get those models ready you have to be able to produce a behavior a benign type of a benchmark so the model knows what is normal and then you have to train on what is my line and that's how eventually the algorithm can tell okay this is good or this is bad but yet you still have to tackle challenges and those are principally for for detection they're very important the overfitting of their fitting because then what happens is and this is from personal experience the the

overfitting starts giving you a lot of false positives and then once you start getting the false positives then people start saying okay well you're like ex-brother or not i mean there's i guess we can put a name to each of them right which is it's full of uh like somebody told me my knock was getting so many alerts from x product that we stopped looking at them right so that is a challenge right there that we're trying to tackle all this so what is the response to that the response is to refine the algorithm like for example we have a model that checks with the http one is what over 24 type of indicators micro behaviors

oh yeah i mean it's like 60 features there's like 60 features in every single http connection it looks at 60 features and checks checks check checks and then it does on a statistical calculations it's likely this is benign or likely this is when this is done in real time that's why like i said before things like hadoop is is wonderful because it's what has allowed us to get to this point um the technology obviously as we have said it this is not a panacea this is like we you saw in the uh the actual screen about once we go to apd this pretty much is is very difficult right uh even by um by applying that

statistical logic right because you just certainly say you had to feed a bunch of data so if you have a once occurrence how would you know if it's a targeted operation if it's a binary has never been seen anywhere no iprep no virustotal see there are limitations that we obviously have to tackle but the the there is what we're looking at at this point is at this point is we're in early stages and we're looking more like the basic behaviors of detection uh stock one that we believe that eventually this in five years will probably replace stock one so if you are at stoke one right now you may want to start transitioning to suction

uh because soak two stock three you're gonna have to learn and take advantage from this from this technology but definitely i i i'm telling you it's coleman and not only in just the log area but in the network area and many other uh decision type uh defense uh or uh detection technologies uh the operation operationalization do you do you have any comments about that the as far as uh well you know i call hadoop sometimes magical right like you look at it and you know there's so many kings and quarks that you really need a level of skill and and hadoop and is a hundred thousand pieces that you had to put together in the hadoop ecosystem in order to make

it work so this is not something that we just can github clone and that's it or docker and it's up and running so that is definitely a a challenge in things like aws and cloudera in my opinion are a positive uh provider of a way of streamlining and using this technology uh and obviously we had like we showed the adversarial drift where you know just like they learn like we learn from them they learn from us and they will continue uh adapting and improving their their attacks however it isn't our opinion that as we train the algorithms and as we are able to gather more data and apply it and improve our algorithms the detection

rate will improve would you like to show uh can we show the uh a little bit of the code or oh yeah yeah sure so basically if you saw what you saw before we we show a like a sequence of exploit chain and what we will show you now is it's a tool that we we're going to release uh this afternoon that basically you will apply this to pickups and then it will tell you what's the likelihood that there is an exploit chain and run somewhere in it we will publish it so that we want to give it away to the community so the community can work on it and improve it and one of the nice things that we did

was from the output of this tool basically we created a script that uh ssh um i had i have a domain uh a laboratory where i have the main controller basically you can grab the the for example the name of the executable um create a powershell conlet ssh into the domain controller put your gpo and then update the gpo and the gpo will prevent fraud executing that malicious uh executable that you will get just from because you just have to feed in the pickups and then the two scripts would do it so it's like an active defense script yeah so so basically what we're going to kind of show is um part part of the

the biggest like the most painful part of these workflows from like a any analytics application is creating this label right here so like off like what we had to do basically and this is kind of what we're open sourcing oftentimes the hardest part from like a security research standpoint for like big data is getting data that has the labels here so so we we had like some security researcher basically had to go through each pcap or each you know like call back or essentially kind of layer 7 http flow and kind of label you know good or bad malicious or benign so that's basically once we this once once you have these labels that's when when um kind of

machine learning becomes really applicable but even when you don't have it like like it's and that's why it's for me oftentimes it's like an optimization because like we sat down this is sort of like a like a um the the full uh kind of compute that we do per domain didn't fit on the slide so this is just kind of like a couple examples but we compute like a bunch of things i'll show you a little bit in the code but basically these were sort of hand tuned by uh some mania guys some really you know and raw like just security researchers who are sort of mapping their intuition what they hunt hunt for to kind of

lightweight code and um what we ended up doing at first was just designing an algorithm that weighted some of these manually so we didn't use any machine learning we just use a heuristic and we said okay you know a domain is bad if agds for like a classic kind of computation here if we see sort of randomly generated domains that's kind of risky if you see like a high number of null web referral high number of you know user agents if you see kind of weird mime type extension pairs just a bunch of little things that you can kind of just adds the overall risk um so so just real quick so basically what we're kind of like on

this repo i'll show you uh we just have a bunch of different data sets like some of them are just rod uh uh just doing benign traffic and us labeling it and then having the algorithms you know the algorithms can use that as input along with um the exploit kits okay so let's see here um it's the best way oh you're not even on that yeah okay see here oh sorry so just i'll just kind of show you very quickly like what does it mean to build these like little um oops sorry okay

so like at the end of the day um actually let me just fill up a better example i just i'm going to make this full screen i just got to find one little thing here so at the end of the day sort of what what what's like what's what's the value of doing this just versus using like a regex or kind of a snort rule or something um oh man sorry um so okay this is kind of hard to see um but basically like what we're sort of able to do so kind of like what i've shown you with this like this like call of this row of each domain is we we basically have these rules for different

like different behaviors we're computing about a particular use case and and the thing is i can just for exploitation for uris i kind of studied the data i looked at these exploit samples and just derived some simple features and basically are mapping this in in a workflow that now this is sort of what um the learning algorithm is is keying in on and and and the power here is this is very expressive i can write down anything i want it doesn't have to be a regex it doesn't have to be a snort rule but i can add like you can add snort rules in i can say did i match on some level of criticality we match on like we blend

everything we blend palo alto rules like semantic av hits these all kind of come in so so you get sort of a holistic view of the world and um and so just you know from a demo perspective let me just show you sort of what what the output is uh okay here it is

sorry

okay one last thing

so this is basically like so i'm just at the jar here and i'll just kind of show you like like the um see okay so basically if you if you clone this repo what's the most valuable here is what's in in data so this is um this is the the majority of the work here the code is just as proof of concept some of these ideas it shows an end-to-end end-to-end thing but but the the real value here is sort of already kind of going through this and labeling this stuff um and then so what we do you guys see this okay is it big enough okay basically i mean what i'm going to do is just run through

a demo oh man i forgot to put one of the brakes in there but basically what it's doing right now is the first step of like a learning algorithm is learn from data so i just point it to a directory um and it's just gonna gonna like crawl the directory and and in this case it's taking each labeled exploit exploit sample and kind of deriving some of those those features that i showed you in the kind of previous like um sorry in the in the development environment and then so we take some some uh good data or should say malicious data there's only three 350 or so samples and then we we um we learn from that and then we learn

from labeled benign traffic samples and we build a model out of both those and then and then when we want to score something this is a little fast but um what happens is kind of here's the key here's the key prediction step so i just passed a new model it was basically a new it was like a ransomware sample that someone gave us there's like the it had the exploitation in it and and it's just kind of goes to show that if you sort of deployed this in line um the output is actually like an ioc of json so when we see a good prediction like okay this is bad then give us some some indicators we're just grabbing like

the ips the domains and the files in the in this in the um pcapp right now and then we're passing it to like another upstream workflow that's kind of part of this like sexy uh ideas and automated response in this case we just created automated gpo object so that's essentially sort of like you know basically if you have like 50 or 60 of these workflows that's that's and you're blending them all together that's sort of when these things become you know practical for enterprise um but otherwise it's it's sort of hard to to um scale some of these technologies down but uh i think we're we're right yeah

you know i think an older version is like on on our linkedin um and and maybe the old b-sides they'll be yeah we can we can upload it to the the github and this will be uploaded is it is it is it uh available now or yeah maybe we'll put it on the github so we'll put it on the github we'll put the tool we're gonna put the white paper so you can read a little more technical where we got all these indicators from and then we're going to give you the uh the power points as well after we're done with the with black hat arsenal today so you just saw a preview of it

no you know i wanted to really give this to visas because i think this is super cool and an amazing conference so uh if you don't have any questions i'll we'll thank you very much yeah sorry there's we didn't have a link in the other slide deck but that's where most of the stuff is living yeah here so yeah yeah let's let's try this oh sorry

that's it can you guys get that so if you don't have any questions then uh thank you very much

you