Cluck Cluck: On Intel's Broken Promises

See, these are the hecklers. Okay. Good afternoon, or almost good evening, ladies and gentlemen. Welcome to Breaking Ground, the last presentation of the day in this room. Just a quick note before we begin: please respect the speaker and put your cell phones and other devices in vibrate or silent mode, whatever your preference is. The floor is yours. All right. Thanks for skipping a little bit of bar time to come out and watch my talk. My name is Jacob Torrey. I work for AIS, and this is my talk, Cluck Cluck: On Intel's Broken Promises. Though I was just talking to an Intel guy who took offense to that, and so I

had to put in this little disclaimer that it's actually any system that supports PCI Express that is vulnerable to this virtual memory breakout. So before I put you to sleep, or before you slip out to get a gin and tonic, I just want you to know that the crux of this talk is that the PCI Express specification allows software with sufficient privileges to break out of virtual memory and then access any hardware and any memory of any process as well. I'd prefer this to be a discussion. This is some technical stuff, so if you have questions, please don't be afraid to jump in. If you

can't hear me, just put your hand up or go like this. And obviously any rude comments are mine only and not those of my employer. I work in Denver. Shameless plug: we're hiring. I lead the low-level systems group. We've built a custom hypervisor for Mudge and the CFT program, which I'm talking about at Black Hat on Thursday. I've built my own BIOS in C and assembly, and I like the outdoors. So real quick, we're going to cover some background. There's actually quite a bit of background, because it's a pretty technical CPU bug. I'm basically going to be presenting the problem and what

we're trying to accomplish, and then why it's called Cluck Cluck. Then the solution, why this happened, and then what I think are probably the best parts, the future work. So if you leave early, you're going to miss out. Really quickly, and this is probably very basic for a lot of you: virtual memory. Using paging and the page tables, operating systems can partition memory so that each application has a consistent view of it. So each application appears to have, say on a 32-bit system, 4 GB of flat memory with no other programs in it, and that program cannot overwrite other processes or the

operating system kernel. Back before paging, on DOS, if you had an application that was running, it could go berserk and overwrite your kernel. So that's virtual memory: it's addressing that doesn't directly hit RAM. It's run through the processor and the memory management unit before it hits RAM. It's controlled by the MCH, which is the memory controller hub, and that acts as a bridge from the CPU to all the devices. So PCI, PCI Express, and USB are all run through this memory controller hub. In the newer processors this has all been integrated onto the die,

but in previous ones, so pre-Nehalem, Core 2 Duo and older, you'll actually see a separate chip and sometimes even a separate heat sink. Memory-mapped I/O: that's if you want to talk to a hardware device as if it's just memory. It will show up as a region of physical memory, and there are some defined registers there. A graphics card is a great example: it has a region of memory-mapped I/O, and you just put your bitmaps there and they get drawn onto the screen. So it's faster, it's newer, and that's kind of the way everything is going. Before that was port I/O, or programmed I/O,

in which the CPU was involved in every single write of either a byte, a word, or a dword to a device. So this is a lot older. This is how you used to talk to a keyboard controller and poll to get one keystroke at a time. This is being phased out because of that. I'll talk about DMA a little bit later, but you can actually offload a lot of the memory-mapped I/O to a separate controller so that your CPU is free to actually do work. TLB, the translation lookaside buffer, really quick: it's just a CPU cache that stores virtual-to-physical memory translations. PAE, physical address extension,

is a dirty hack. We can kind of skip over it. Really it's just a way that allows a 32-bit OS to address 36 bits of physical memory and so access more. It was kind of a stopgap measure before 64-bit OSes became popular. PCI configuration space: this is a set of registers and a memory region that allows you to control your device. So if you want to tell your USB controller to eject a device, you can go through PCI configuration space. PCI Express, and this is probably the most important of all these terms, came out with something called the ECAM, which is the enhanced

configuration access mechanism. PCI config space you used to access using port I/O. You would load into one port register the address you wanted, and then you would either read or write the data through a second port. So it required two port I/O accesses, which take about a millisecond. Imagine how many clock cycles you're wasting in one millisecond. PCIe ECAM is all memory-mapped. It takes all the PCI configuration space, throws it into a place in physical memory, and you can just access those registers as if you're accessing memory. CR3 is a register on the CPU. It tells the processor where to go and look for the base of the page table. So when it wants to translate a virtual

address to a physical address, it takes the address in CR3 and then part of your virtual address, which is up at the top where it says PDE. That first part is an index into your page directory. So that's what PDE is: page directory entry. And then there's an offset into the page. Each PDE, when you're using a large page, points to a 4-megabyte page. You can add in more levels of indirection, so you could actually have a PDE point to a page table of page table entries. But for now, we'll just simplify this. It can get really complicated. I think with virtualization and extended page tables, you can go all the way to seven or nine

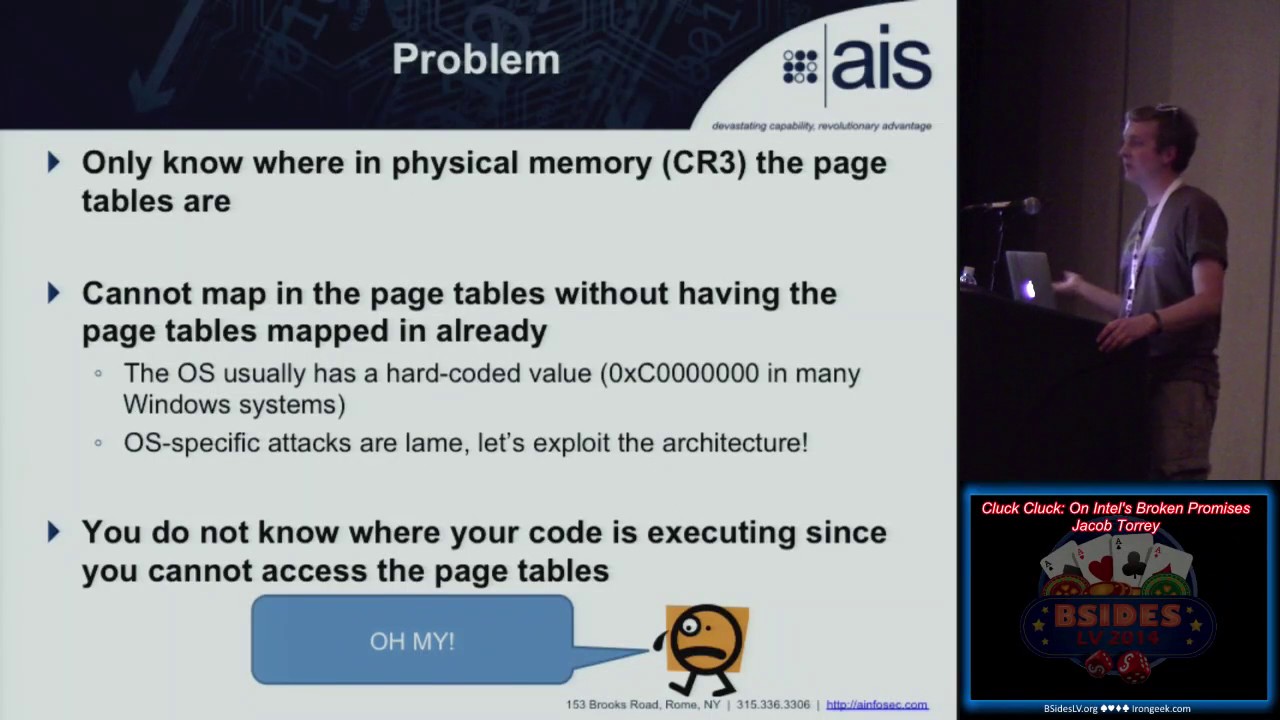

levels of indirection. So that's why the TLB is there, to cache these very slow lookups. So why is virtual memory good? And why is it considered a promise that Intel is going to break later? It's a protective feature, or promise. The first code in when you boot up can set up paging and memory however it likes, so that your applications can't access kernel memory, your applications can't access other applications, and you can go in and defend the system. Unless you have access to the page tables, you can't access the page tables. So unless you know the virtual address for those page tables, and that's been mapped into your process space, you

can't change the mappings for other ones. So this is the chicken-and-egg problem. It's a catch-22: unless you know how to add mappings to the page table, you can't add mappings to the page table. And it protects against a number of attacks. Say you want to access memory-mapped hardware that's not mapped into your space. If you're a process and you don't have access to the memory-mapped I/O for the graphics card, then you can't access that and maybe do an attack on the GPU. So the goal: we wanted to be able to map in arbitrary physical memory. This requires modifying page tables,

which is that chicken-and-egg problem. We need to know where they are in virtual memory. We can read the CR3 register and figure out where they are in physical memory, but that doesn't help us. And so we, as the attacker or the good guy, are executing as kernel shellcode, live memory forensics, or maybe a hypervisor. We have ring zero access, so we might have found a bug in, say, the Linux kernel, but we're confined to whatever mappings Linux defines, and so we can't access those memory-mapped I/O devices like I mentioned. And the coolest part of this is that it's OS independent, so

this is just pure x86 assembly. You know, Windows has a mapping to the page table at a known virtual address, 0xC0000000, so 0xC and all zeros. So if you're in this situation on a Windows system, you just go to that address and you don't have to worry about any of this. But this is an architectural flaw, so to speak. I said most of this. Oh, and you don't know where your code is executing, since you can't read the page table. So that's another one. Some people have tried switching to real mode and executing there, where there's no protection, setting things up how they want, and then switching back,

but you don't know where you're executing, and so when you jump back out of real mode, or you turn off paging, you're going to jump to some random instruction and you're going to be in big trouble. So the crux of this problem is that you need control over just 32 bits. Once you have that, you can set up a recursive mapping. It has to be at a known physical address so that you can set your CR3 there. Conveniently, PCI Express has more configuration space per device. Port I/O is slow, so they needed a way to make access faster. So this ECAM shadows device configuration space into a physical memory location.

The base address is stored in a register on the MCH.
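The two ways of reaching PCI configuration space mentioned so far can be compared with a little arithmetic. The following is a minimal sketch in C, not code from the talk: it only computes the value that would be written to the legacy address port (0xCF8) and the physical address at which ECAM shadows the same register. The function names and the example base address are illustrative assumptions; on real hardware the ECAM base has to be read from the chipset.

```c
#include <assert.h>
#include <stdint.h>

/* Legacy configuration mechanism: the address goes to port 0xCF8,
 * the data is then read or written through port 0xCFC. */
#define PCI_CONFIG_ADDRESS_PORT 0xCF8u
#define PCI_CONFIG_DATA_PORT    0xCFCu

/* Value to write to PCI_CONFIG_ADDRESS_PORT to select a register.
 * Bit 31 is the enable bit; the low two bits of the register
 * offset are dropped because the access is dword-granular. */
uint32_t pci_cf8_address(uint8_t bus, uint8_t dev, uint8_t fn, uint8_t reg)
{
    assert(dev < 32 && fn < 8);
    return 0x80000000u
         | ((uint32_t)bus << 16)
         | ((uint32_t)dev << 11)
         | ((uint32_t)fn  << 8)
         | (reg & 0xFCu);
}

/* Physical address of the same register in the memory-mapped ECAM
 * window: each function gets a 4 KiB page of configuration space.
 * ecam_base is hypothetical input here. */
uint64_t pci_ecam_address(uint64_t ecam_base,
                          uint8_t bus, uint8_t dev, uint8_t fn, uint16_t reg)
{
    assert(dev < 32 && fn < 8 && reg < 4096);
    return ecam_base
         + (((uint64_t)bus << 20)
         |  ((uint64_t)dev << 15)
         |  ((uint64_t)fn  << 12)
         |  reg);
}
```

For example, with a made-up ECAM base of 0xE0000000, `pci_ecam_address(0xE0000000, 0, 0, 0, 0xDC)` gives the physical address of register 0xDC on bus 0, device 0, function 0, while `pci_cf8_address(0, 0, 0, 0xDC)` gives the port I/O selector for that same register. The point is that one register is reachable both ways.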

So our basic solution: we're going to construct a page directory entry that will map in the CR3 of the operating system page tables. Then we're going to mark it as present, readable, and writable, and then, using PS, which is the page size bit, we're going to make it a large page so we don't need an intermediary page table entry. We then want to use port I/O to insert this new page directory entry into PCI configuration space, which basically allows us to modify what the CPU thinks is physical memory through port I/O. We can then determine the physical location of the place we just wrote to using this PCI base address, which we can

access through port I/O again. The only problem here is that the page directory entry has to go somewhere, and you can't just overwrite a random PCI configuration register, otherwise the system may crash. So conveniently, Intel has provided us with the scratchpad data register. I kid you not, it says this register is for software use only and has no functionality. So it's almost as if they were helping me out with this. That's 32 bits of beautiful storage right in the memory controller hub itself, device zero, function zero, and now you can use port I/O to write to that physical memory through the scratchpad register. Then you read out that PCI

EXBAR I mentioned already, and now you know where your page directory is in memory. The next step is to change your CR3 register to point to your PCI configuration space. Kernel code is marked as global in the page tables, so its TLB entries won't get flushed when you make that switch, your box won't crash, and you can still run the code. And the CPU doesn't know that it's doing anything wrong, because in its view it's just accessing memory. It has no idea what's there, or what the semantics or the meaning of that memory are. And the MCH doesn't know what the CPU is using this memory for, so it thinks everything is okay. So it's kind of a

disconnect between the MCH and the CPU. Any questions about this so far? Yeah. Sure. Okay. So the operating system page table base, the physical address, is always stored in the CR3 register. You can read that out, but you don't know where that's mapped into your virtual address space. And so what we're doing right now is creating a virtual memory pointer that points back to that CR3 value so we can access the main operating system page tables. Which one? So the scratchpad data register is where we are putting our page directory entry that points to the operating system page table. So basically what we're doing is we're treating PCI configuration

space as a page table. And we have to be very careful when we access it, because there's only one entry that's valid, and there are some alignment issues: you can't have a page table pointing to an arbitrary memory address. It has to be aligned to certain boundaries. So the scratchpad data register is a PTE, or page table entry, that is then mapping in the CR3 value of the operating system. The scratchpad? It's just because I know from the specification that it won't muck with any hardware. If I were to use, say, a graphics card one, it might throw crap up on the screen. So, good question. Any

others? Okay. So now we have a virtual pointer to the operating system page tables, or page directory I should say. Now we can scan the operating system's page directory, the real one, for an empty entry, and then we can put in a recursive entry that points back to the page directory the operating system is using. This is called recursive mapping. We'll save where that entry is, and then we'll have a known virtual address. We switch CR3 back, and again, since we're on global pages, the TLB won't flush us out, so we can continue working. And then, all in a few lines of assembly, we have a virtual pointer at a

known location that points to the operating system CR3. So we're breaking out of virtual memory, essentially. A couple of caveats. Alignment I mentioned. In the CR3 register, the bottom couple of bits are more like status and configuration bits, so they get ANDed off. So you need some math and bitwise operations, because wherever your register is, you're going to have to figure out how that virtual address works out. You're going to need some PCI configuration registers that are okay to be trashed. There's the MCH scratchpad register. There are a lot of other ones if you just look through Intel's specifications and do a Ctrl-F for scratchpad. There are some on

the GPU. There are some on the SATA controller and the LPC. So there's a whole bunch of them. I'll talk a little bit later about extending this to, say, a 64-bit system, where you're going to need another level of indirection, and so you're going to need multiple recursive pointers. And then the big one is that this requires ring zero and global pages. When this talk got put up online, I got a frantic email from Intel saying, "What did we do? What's wrong? What's going on?" We went back and forth, and basically their argument is that once you get ring zero, all bets are off. And so, even though there

are a lot of cases in which, if you find a little exploit, getting this is a kind of pivot point to insert yourself. So we're actually going to get rid of that restriction to be in ring zero, and we're going to move on from that. But we'll go on from there. Any questions? Good. Okay. All right. So how did this happen? It's kind of a classic feature creep example. Intel made some assertions back in the day when they brought out their virtual memory and their paging protections, and then down the road they said, "Okay, well, now we have these PCI Express

buses that are moving data extremely fast and have huge amounts of configuration. This is too slow doing it all over port I/O, so let's throw this in." And then they violated those assumptions without going back and verifying them. This has happened before. There's the SMM caching bug, where they added a new feature without realizing that it forked everything up. There are some virtual machine side channels, where they went for performance over security, etc. As I said, Intel reached out. I spoke with Rodrigo Branco, who's actually in the other room right now. He's also speaking at Black Hat, so try to bump into him if you

see him. His thoughts, basically: this is an impressive attack in the sense that it makes something OS independent. But really there's no point if you know what your target operating system is, because either the Linux kernel is identity-mapped or, on Windows, it's at a known virtual address. This is just a cool flaw in the x86 architecture. And if you already have ring zero, you're already pwned. So it's not really a big deal, but they're going to go and provide some education to the OS developers so they realize what can happen when you have these flaws. And it's already possible for targeted operating systems. So Cluck Cluck is not target specific. If you know you're

hitting an x86 system, regardless of what operating system is running, this x86 assembly will run and work. If you're trying to target, say, a Windows 7 machine, then you would just use the target-specific attack. So this is more of a cute weird machine or an Easter egg rather than a zero-day. Hopefully that's not a letdown for some of you. So if you're out building a new system, these are some suggestions I would provide to make sure that you don't have a similar issue. Codify and verify what your platform is guaranteeing, what it does and what it doesn't do. If you're taking input, formally parse

it, so that you can reject bad input rather than going into some unknown state. That's kind of the idea behind ROP, where you don't formally parse the input, and that input is return addresses, which are essentially an assembly language for an ad hoc virtual machine. So you need to codify what your platform or your design is doing, what guarantees it's making, and what assumptions you're making, for example that the adversary can't do X, Y, and Z. And then when you add a new feature, go back and review everything. One thing, you know, Alloy is a tool from MIT to do model

verification. In a couple hundred lines of code you can write a formal model for an architecture, and it can check a whole bunch of different cases that you wouldn't be able to check by hand. So before you start writing a single line of C code or Python, you can make sure that your design is sound and there are no invariants that can be broken. Then you just update this model and run it again whenever you add a new feature. And obviously maintain an adversarial mindset. This is probably the perfect audience; you guys already have an adversarial mindset. But if you're building a system, don't forget that. Don't think,

you know, okay, I'm just going to worry about my class diagram. Think about how you would attack the software you're building. And then ask whether those assumptions are a reliable or a safe bet when you're thinking about your

adversary. A couple of use cases. This came up originally as a forensics technique. You can plug a PCI device in. Actually, there's an NSA Playset talk at DEF CON about exfiltrating data over PCI, and they're doing it at the PCI bus mastering level. This is for when you can plug something in and get software to run. You don't know, or you can't trust, the OS API. So you can't just execute code in kernel space and say, okay, Windows, MmMapIoSpace my PCI device, because you don't trust the operating system and you don't want to alert it that you're doing some kind of forensics. You need full memory access if you want

to get the whole dump, and then you're going to need memory-mapped I/O to whatever device you're exfiltrating through. So you want to get direct access to the NIC, and that way you can send your data off over the network without using the operating system APIs, which might get picked up by some kind of host-based protection. So that's why you can use this. It's basically a memory create attack where you're directly accessing it rather than going through OS APIs. Hypervisors: it provides an OS-independent way to map in memory. So if you're writing a thin hypervisor, you want to do some introspection, and you don't really want

to care about whichever operating system you're virtualizing. So something like Blue Pill, as a rootkit example, would be able to go in and use this to map in memory regardless of whatever operating system it's subverting. And then the last one is kernel shellcode, for whatever reason you might have, where you want to be able to pivot from a little blob of x86 shellcode that doesn't call any specific kernel functions to full system memory access and memory-mapped I/O. Once you have that, you can inject yourself anywhere. You can get a lot of rootkit persistence. You can hook the IVT or the IDT, and you can really, you know,

completely own the system. All right, any questions before I go on? Okay, cool. Future work. These are all theoretically possible; I just haven't written them up yet. You can extend this to work on PAE or 64-bit. You're just going to need to find larger scratchpad registers, or more of them, so you'll have a page directory entry that maps to a page table entry which is also in PCI config space and then maps to memory. So it's more of an engineering task. It would be really nice if you could remove the global page requirement so that you could execute anywhere as long as you

had kernel privileges. And then remove the ring zero requirement. This is the big one. This actually happened when I was emailing with Intel and they said this attack is not really that impressive. So I went back and said, okay, well, now I've removed the ring zero requirement. So an application running in user space with I/O privileges, such as the X server, which runs on a number of systems, or if you're running on BSD with securelevel, can actually have full memory access from ring 3. So don't tell me my attack is pointless, is the outcome of this. This hasn't been implemented and tested, so it's

future work, but in theory everything should come together. A little bit more background, and then our goal is basically to get from ring 3, so a user space application with I/O privilege to talk over port I/O, to ring zero or arbitrary physical memory access. So why would you care about this? You need root to get IOPL. And on BSD systems with securelevel, you can get root, but root doesn't have access to the kernel. You can't load a kernel module on a BSD system with securelevel set, so this is a way to break out of that and get into the kernel. And it's also kind of a neat

trick. So really quick, the overview for the technical ones amongst us. We're going to use DMA. This is actually a DMA attack to overwrite either kernel code or the IVT. We're going to set up a table in the ECAM pointing to the target memory addresses we want to overwrite. We're going to write a payload to disk, and then we're going to use port I/O to tell the DMA controller to read from that block, or have it read from memory first so we can do a patch and then write back. This essentially gives us access to arbitrary physical memory. So a little bit more background. DMA requires a few things. The

reason you can't do this without this trick is that DMA requires a buffer, the PRDT, or physical region descriptor table, which has to be stored at a known physical location to tell the DMA controller which blocks to move and where they need to go. And then you also need port I/O. Up until now there was no way to write this PRDT at a known physical location. So even if you had exploited the X server, you couldn't do this DMA attack. Basically, it needs to be at a known physical address. If only there was a way to write physical memory using port I/O at a known location. Conveniently, I just showed you that. So

you can set this table up and store your target memory address in it. I would recommend reading out the IDTR, which is the pointer to the interrupt descriptor table, and then maybe modifying that. So then DMA. This is the old-school DMA, ATA DMA. You can use port I/O to tell the DMA controller to read and write. You've got to give it whether it's a read or a write, start and stop, you don't care about the status byte, and then a pointer to the table we already set up. And then you use port I/O to communicate with the ATA controller. And then you can

communicate over the standard ATA or ATAPI spec. You could also use a CD drive. I don't know how many CD drives have shellcode on them, but you could if you wanted to. And then there are the different read DMA and write DMA bits. So you now theoretically have full read and write access to the entire memory space. What you do with that is left as an exercise to the reader. Some caveats as well. Newer drives, mostly the SSDs, are in what's known as AHCI mode, which is something you can set in the BIOS, and they won't respond to these ATA commands, and so this won't work

in AHCI mode. And then there's Intel VT-d, which is the extensions for hypervisors that provide directed I/O capabilities and prevent a DMA attack against a hypervisor. It would allow an operating system to do the same if it used this capability. As far as I know, that's not happening right now. All right. So I'm going to let you out early so you can get drinking. But a couple of conclusions. As I said, it's not really a security hole in itself, because you need to have some privileges. It's a nifty trick for sure, and x86 is full of weirdness. Sergey Bratus is up front in the audience, and one of his

students showed that the memory management unit in an x86 processor by itself is Turing complete. You can simulate any Turing machine by setting up your page tables and never actually executing an instruction. So basically this is such a complicated architecture that there's a whole bunch of weird things you can find. A new architectural feature creates a broken invariant from the past. Intel's pretty cool; I hope they can take some jabs. And you'll be able to read more in the latest PoC||GTFO, which is coming to a printer near you, or on Friday you can grab it from the No Starch Press booth at DEF CON.
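To recap the bit arithmetic behind the core trick from the solution part of the talk, here is a minimal sketch in C. It is not the speaker's code, which is described as a few lines of x86 assembly, and it only computes values; it does not touch CR3 or any hardware. It assumes 32-bit non-PAE paging with 4 MB pages enabled, and the helper names, the scratchpad offset (0xDC), and the example addresses in the usage note are illustrative assumptions that vary by chipset and system.

```c
#include <assert.h>
#include <stdint.h>

/* Page directory entry flag bits for 32-bit non-PAE paging. */
#define PDE_PRESENT   (1u << 0)
#define PDE_WRITABLE  (1u << 1)
#define PDE_PAGE_4MB  (1u << 7)   /* PS: entry maps a 4 MB page directly */

/* The 32-bit value to place in the scratchpad register: a large-page
 * PDE covering the 4 MB region that contains the OS page directory.
 * Only bits 31:22 of the address are kept for a 4 MB page. */
uint32_t make_large_pde(uint32_t os_cr3)
{
    return (os_cr3 & 0xFFC00000u) | PDE_PAGE_4MB | PDE_WRITABLE | PDE_PRESENT;
}

/* Temporary CR3 value: the 4 KiB-aligned page of configuration space
 * that contains the scratchpad register. scratch_phys is the
 * register's physical address in the ECAM window (hypothetical input;
 * it must lie below 4 GB for this 32-bit scheme). */
uint32_t temporary_cr3(uint32_t scratch_phys)
{
    assert((scratch_phys & 3u) == 0);   /* PDEs are dword-aligned */
    return scratch_phys & 0xFFFFF000u;
}

/* Virtual address at which the OS page directory becomes visible once
 * the temporary CR3 is loaded. The register's offset within its page
 * decides which PDE slot it occupies, and therefore which 4 MB
 * virtual window it maps. The low 12 bits of CR3 are flag bits and
 * are masked off, as mentioned in the caveats. */
uint32_t os_page_dir_vaddr(uint32_t scratch_phys, uint32_t os_cr3)
{
    uint32_t pde_index = (scratch_phys & 0xFFFu) >> 2;
    return (pde_index << 22) | (os_cr3 & 0x003FF000u);
}
```

As a worked example under those assumptions: with the scratchpad at physical address 0xE00000DC and an OS CR3 of 0x1F4B5000, the PDE written to the scratchpad would be 0x1F400083, the temporary CR3 would be 0xE0000000, and the OS page directory would appear at virtual address 0x0DCB5000. This is the "math and bitwise operations" step: the register's offset, not the attacker, picks the virtual window.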

That's it. Any questions? Perfect. Just the response I was expecting. Oh, one question.

So, can you go back to the solution slides and comment on which invariant they broke? Sure. And what were they thinking they were preserving? Yeah. So I would say that the promise they broke is this: currently, when you bootstrap a system, you start off in real mode, which is completely unprotected. Any software that runs in real mode has full power over the system. And so an operating system immediately bootstraps itself to protected mode, and then it can set everything up so that applications can't access kernel memory, can't access this device or that device. And so that invariant of basically whoever's the

first in, whoever's the king of the hill, can then defend against everyone else. That invariant has been broken now. This is basically a catapult that allows you to smash your way into the castle, take over, and kick the operating system out if you wanted to. You could then create a little region of memory that the operating system can't even read. So even if there was some kind of active operating system defense, it wouldn't be able to read the region of memory you're hiding yourself in. Does that answer your question? Okay. So you have this

PCI configuration area where you put the table, which is great. This is lovely. I wish I'd thought of that. But at what point does that get inserted into, say, the boot process? This can happen at any time. I can actually show you. Oops. Let's see here. My code is a Windows kernel module that you could load at any time. So this can happen after the operating system has completely set up its defenses and is assuming that even if you were to get access to ring zero from some kind of driver exploit, you wouldn't be able to

kind of pivot from that. And so this brings it back so that your attack can then access anything as if it was in there first. So the invariant seems to be that they took the knowledge of the location of the page table as a sort of capability. Exactly. Yeah. Right. So as you mentioned, they said that, well, if you already have ring zero... Well, sort of. The capability model, yes, is based on needing to know this magic number. Exactly, yeah. Even though you can write all over memory,

but you need to know this magic number to do so purposefully. Yes. And so I think there are two. There's that, which is probably the crux of it, and then the other one is that up until now there's been no way to write to physical memory at a known location. And so virtual memory was this perfect inescapable jail: you could have 4 gigabytes of memory in your application but only have 2 gigabytes of physical memory. You're not sure where that memory is going. It's just off somewhere, managed by the operating system. And so that prevented you from doing a lot of stuff and talking to hardware, because

hardware usually has both a port I/O and a memory-mapped I/O component. The memory-mapped I/O component was kind of a protection capability: unless you had access and had it mapped into your address space, it was essentially hidden from you. Yeah, it's interesting what other leaks you might be able to find with this. Yeah. So my weekend projects have been trying to get rid of the ring zero, or rather the I/O privilege, requirement. That would be a fully generic privilege escalation on any operating system. So that's hopefully what I'll be talking about if I can have some results in the next year or so.

All right, any other questions? Okay, thank you very much. Thank you. [Music] [Applause] This is the end of the line for Breaking Ground tonight.