The IoT And AI Tech Nobody Asked For But Cybercriminals Love


hello everybody thanks for joining over lunch as well choosing us over lunch is a big thing so obviously in case you'd missed it AI became accessible to the masses in 2023 don't think anybody's mentioned it allowing almost anybody to use it and the topic of IoT and AI and its impact on our lives I think is getting a lot of excitement and fear across the tech world and I think this is being especially felt in the cyber security space on one hand there's kind of a need to enable businesses to be market leaders through the use of this kind of technology on the other hand well our IT security teams are faced with an

enormous challenge as defenders of our organization's infrastructure and data and of course the customers so to help navigate this conflict we're going to take you on a journey today to understand the wearable the sharable and why we think sometimes it's pretty unbearable especially since it's giving the cyber criminals an advantage we really cannot afford them to have and who is we when we're talking about this it's everyone in this room it's us that are supporting our teams to build and create these tools and it's also we as consumers of these tools consumers either knowingly or unknowingly and so today we're going to discuss the rampant tech adoption of AI and wearable devices the erosion of

what we are calling the social contract and the proliferation of the quantified self and also lots of examples of AI and wearable misuse so why is this a problem for us in the tech industry well I mean the field of cyber security kind of evolved from corporate IT which has mature protocols architectures and security controls however the Internet of Things and AI are quite a different story security policies and implementation in this field are pretty immature and attackers are starting to take advantage of that and this is exacerbated by the fact that organizations are being blindsided by the fact that AI is being used heavily in their organizations already this adoption is actually a bottom-up

revolution with individual adopters and employees rather than top down as a more traditional leadership mandate and this stealth adoption is happening with little oversight of the potential security exposures so basically the genie is out of the bottle when it comes to this and we in tech cannot just sit back and let it happen but the other thing is we can't just block or ban it either as much as some of us would like to so the only thing for us to do is to embrace it embrace it in the spirit of adventure but embrace it with obviously lots of guardrails and you know as many of you in this room who will be

supporting teams build this software we need to think about the customer journey more we need to think about how we support our colleagues that are actually building this to help them become more security aware when it comes to these tools and it will very much be a team effort it's not just something that the cyber security team is going to lead on now a little disclaimer here this talk is going to be a Debbie Downer talk because you know it's AI we recognize however that for every bad use of AI there is a good one as well but this talk isn't about that because we haven't got time to talk

about all the great stuff that AI is doing at the moment as a side note as well this topic is moving so fast that since we first started doing this talk in February we have now changed it six times because people keep making immense cock-ups when it comes to AI and it's nice to keep up with them so let's introduce ourselves Jeff thank you I'm Jeff Watkins I'm the CPTO at CreateFuture what called until last week thought I'd slip up there a 500 strong tech consultancy headquartered in Edinburgh with offices here in Leeds Manchester and London I finished my cyber security MSc last year and I've just started an MSc in artificial

intelligence in January so I'm kind of a bit of a glutton for punishment so as somebody with an interest in both cyber security and AI I think this kind of subject really floats my boat and I'm also one half of Compromising Positions with my co-host that's me Lianne Potter I am head of security operations for a major retailer as the day job when I'm not doing that I'm also known as the cyber anthropologist because my background is anthropology and basically what cyber anthropology is is looking at why we do things from a cultural perspective around technology and particularly cyber security so if you're finding lots of insecure behaviors proliferating in your organization try and think maybe it's

something to do with the culture around us and as Jeff mentioned there is a little plug for our podcast Compromising Positions that slide's wrong we should have changed that it's the award-winning podcast Compromising Positions last week we won the award for best newcomer we are the only cyber security podcast that I know of that only interviews non-cyber security people about cyber security so we've had the wonderful Beck on an episode so far I hope you caught her talk earlier so we've had psychologists on other anthropologists people working in UX developers data anyone that's not a cyber security professional coming on the show and talking about how cyber security makes them feel we also did an AI miniseries as well so if

you like this talk you'll probably like that now that's the plug over let's get back to the show so we'll start off with a recommendation if you're interested in this kind of thing it's a book called Robot Rules by Jacob Turner it's kind of part law analysis part philosophy and it's a really important read on the topic it's quite dense but I would recommend you chew through it and it's informed a lot of this talk through the various narratives that we've built over the decades and some might say centuries if we include things like the Mechanical Turk which was a chess playing machine from the late 1700s which wowed people by being able to play

chess seemingly at the grandmaster level against a human player except it was eventually revealed that there was just a wee man in the box controlling it indeed Amazon actually give a nod to this by calling their product MTurk instead of Mechanical Turk because they're down with the kids and they describe it as a crowdsourcing marketplace that makes it easy for individuals and businesses to outsource processes and jobs to a distributed workforce and they highlight that the service works because there's still many things that humans can do much more effectively than computers which it turns out is really kind of what they were doing with their Just Walk Out stores oops I find it kind of amusing

and strangely dystopian in equal measures which I think is a good vibe to set as we talk about all the robots around us every day so the average person underestimates how many IoT and smart devices they have in their home their work and in their businesses and indeed the surroundings around them and last year the number of voice assistants in the world reached 8 billion and that's more than the human population and by 2025 that's supposed to exceed 30 billion so it's going to be big and their proliferation lies in their ease of use often plug and play straight into the network their cost they are often really quite cheap to use their inconspicuousness once they're in you

kind of don't notice that they're there you get used to it really quickly and because they're convenient and by convenient it's the shiny promises that these wearables and IoT devices are making about making our lives much easier but when I think as a security professional when I'm trying to enable my devs to build these wonderful tools I have to think actually when I talk about security it kind of goes against what they're trying to achieve because security is not easy to use it can be very complicated to implement it can be really costly to actually embed security controls it's highly conspicuous like think about things like MFA and passwords you literally have a

process to actually get to a secure position and it's very inconvenient if anyone has ever forgotten their password and things like that so how can I compete with that and it's a conversation that we're going to have to keep having amongst ourselves because these challenges are not new to us in cyber security because we're always having negotiations with our engineering teams about you know what is nice and easy to use but what is also very secure so there's always going to be discussion around usability functionality and security and if all the breaches lately are anything to go by I don't think we're doing very well in that negotiation so it's not a unique challenge to us in this space but is the

adoption of smart devices complicating things further well we think it is I mean the growth of this second internet which is what the IoT movement is I think has changed the way we live and work and relies on different operating systems and protocols ones that are quite unfamiliar to most of us and we've seen an explosion of wearable devices smart watches medical devices smart glasses etc which are often collecting and sharing data coupled with the fact that these devices are smaller more numerous and often inconspicuous it makes them difficult to secure or even know they're there so the sharables we refer to in this talk can come from the sharing of data from a wearable device

but also includes things like data being transmitted by other IoT devices not necessarily worn such as smart bulbs people hack those security cameras personal assistants like Alexa or sharable can mean apps like MyFitnessPal which can collect data manually or using a wearable and then well whether it's wearable sharable or both they have the potential to impact our privacy change the social contract and blur the lines between our personal corporate and civic worlds which makes it kind of a complex and ever-changing unique security challenge and a lot of that change actually does take place in the erosion of the social contract and if people aren't familiar with the social contract a guy called Thomas Hobbes in the

1600s described it as when we as individuals give up some of our freedoms and submit to an institution the government hospitals the church for example in exchange for protection of our remaining rights and the maintenance of social order and he said without this system there really is chaos you know we need this system the stability of our culture relies on it because by our very nature we're quite self-centered beings and this doesn't lend itself to the altruism that is needed to keep things ticking along nicely so does the same social contract apply today well yes however with some changes in the 20th century we saw the rise of what we would call the

individual or individuality rigid social norms like not questioning the government started to fall away and in its wake we see what we now refer to as something called freedom of speech which we think is very very normal and a human right now because of the rise of the individual society began not only to question the rules around them but actually challenge them and so this study from McKinsey in their paper The Social Contract in the 21st Century they said that the rise of the individual actually materialized in response because the government could not provide socially in the way it had done before so traditional institutions are becoming unable to sustain their

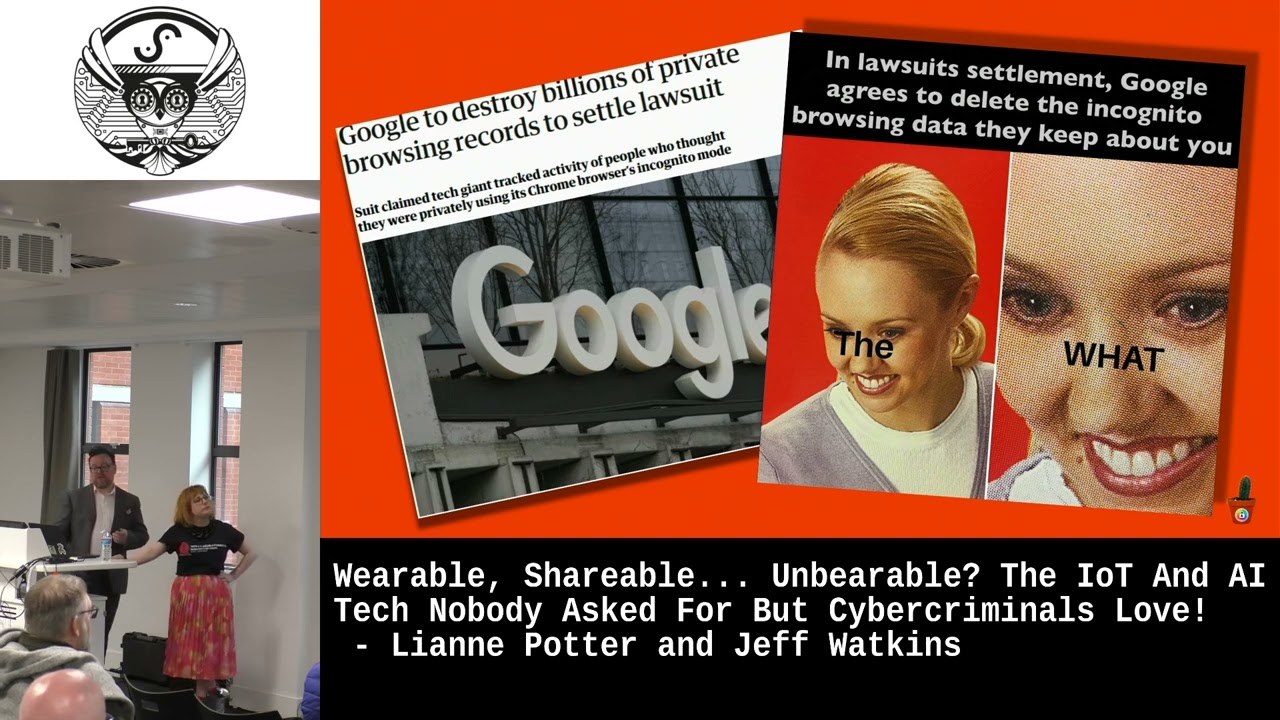

population so think about everyone's pension age going up that's an indication of the breakdown of the social contract which has resulted in society pulling away from the social contract the traditional social contract but in its wake a new type of institution has emerged one that fosters control algorithmically so your Meta X Google all these big companies and more and you might even work for some of these big institutions the new institutions with these institutions we literally sign a contract terms of use it is literally a social contract we sign with them we don't often read those terms and conditions and terms of use but as you're aware and I guess a

lot of us now have made our peace with if you're not paying for the product then you are the product so why is this the new social contract well because most developed countries cannot function without tech touching almost every element of daily life so the current social contract can be said to be one where you give up your data rights for your ability to participate in a modern society but the cost to us the consumer is the erosion of our privacy and security in unprecedented very intrusive ways I mean considering quite a few people were a bit uppity about doing the Domesday Book and when we do the census it's a bit rich that we're

happier to share all our personal details with Google for example than with the census yeah doesn't end well that yeah with every device or platform that we connect ourselves with we give up a little part of ourselves and because a good number of these devices are with us all the time that puts aspects of our lives the private the public which includes work and the social at risk but alongside that erosion of the social contract it means we've put a newfound reliance in tech and these guys are really good at making addictive products in this new age of permissive sharing the number of people using wearable devices has reached an all-time high while these

devices offer convenience and connectivity they also come with risks that can't be ignored one of the major concerns is a lot of these devices end up getting connected to corporate resources which adds another layer of risk I mean the lack of data loss prevention when cameras and microphones are in every device further complicates that situation and it puts organizations at risk of data breaches privacy violations and other cyber attacks not that DLP is a silver bullet we haven't quite figured out a way to actually stop people taking a screenshot with their phone of their screen until recently that was pretty arduous but nowadays with you know picture deskewing and image to text it's actually

become really simple an advantage of AI making this really really easy and then you add in wearables with cameras no not that one but stuff like what Meta has built in partnership with Ray-Ban a little bit more stylish this presents a new set of challenges for the defenders of data which we all care about whether or not we work in cyber security I mean the idea of an always on lifestyle is what a lot of companies are really persuading us we want but we should ask the questions really did we ask for this and do we really want this imagine a world where these innocuous sunglasses they're very stylish are worn not just out and about but in courtrooms in changing

rooms in private moments where you know things are supposed to be kept quiet maybe it's not a pair of glasses I mean I'm certainly not cool enough to wear a pair of sunglasses indoors and I'm just going to look around you have all made the same fashion decisions I don't think that stopped you from being very cool you've just decided not to wear sunglasses indoors but what about a less obvious device like the Rewind pendant it records everything you've seen or heard for under $60 for the hardware and the actual subscription is under $20 a month for the cheapest subscription I mean don't worry though they take your privacy really seriously but is it actually people like Rewind we really

need to be worried about maybe not I think it's the actual users that we kind of need to be cautious about that are going to be using these devices like Josh for example what happens when he takes all the intellectual property out of the business when he leaves or Randall being in a much better position to be able to pinch all our clients when he gets a job with our competitors or what would be absolutely unbearable in my eyes is if on the rare occasions where I go into the office for a bit of office gossip I find out it's being recorded by one of these things no Mike not cool or worse if

you've ever had a conversation with your partner that starts with something like I've already told you about that imagine if they could actually pull the tapes and say yes remember I have actually told you about this could AI and wearable devices bring about a rise in breakups and divorces but somebody at Microsoft obviously thought this is a really great idea since it practically replicated every element aside from the cool bit which is the wearable aspect of the Rewind pendant bearing in mind that Rewind's R&D themselves said that customers reported that something that just recorded screens on its own would be considered really weird why's my clicker stopped working there we go so and as we've seen in the last few

weeks it hasn't been going very well for Microsoft you know they've got into a little bit of hot water about it you know it's never good to kind of catch the eye of the ICO but Recall gets recalled so what are we going to do about that well you know in less than two weeks getting recalled which is really bad because I was really excited about Recall because of all the potential memes it got me really really excited because I'm a massive fan of the film Total Recall and this is very much my life at the moment so it was a gift it was a gift that they said they were going

to recall Recall in two weeks so I can keep using the two weeks meme what I'm curious about in all this is actually in the early parts of tech really we were moving away from the real world and into cyberspace now we're actually walking back into the physical space there's something that's referred to as the cyber-physical convergence this refers to when the IoT movement introduced things like sensors and robotic automation that can observe and interact with the real world bridging that gap then you add AI to the mix and they start to learn and modify their behavior based on these kind of interactions so as our wearables and sharables become unbearable a breach that originates in either of these

worlds so that's the cyber and the physical can result in a breach in the other and that makes the threat landscape really really complex and what it requires now is that we're going to have to do a convergence of both to be able to be secure the convergence of the stuff we know from cyber security to keep us safe and the stuff we know about physical security to keep us safe so that's the policies the tooling and our approaches we seem to be really captivated by these devices though as you've seen some of these here so captivated we've accepted an average of 22 of these devices into our homes as of last

year another allure of these devices is a more accurate experience one where IoT and AI do some of the remembering and thinking for us and that's a really dangerous game to play I mean these small form factor devices like the Rewind pendant and the Humane Pin feel a bit to me just like spy devices of old just rebadged as assistants remember these assistants aren't just recording your voice and what you're seeing but also your location your browsing history purchases and also the people around you so what boundaries are there in public in the workplace at home technology is moving much quicker than things like legislation in this area we might not even know when other people are treading

on our boundaries a recent AI app that matched CCTV images to Instagram photos was a really chilling example of how little privacy we actually have in public spaces the prevalence of smart AI assistants camera glasses and other always on devices means that what happens in Vegas stays in Vegas no longer applies Chatham House rules will just be let me take everything off turn off the phone and have a conversation so how far do we go pulling this social contract before these personal assistants might become acceptable to some but off limits to others it's a discussion again we're going to have to keep having amongst ourselves because as this talk title suggests I think a lot of these products

are being built without any consideration or consultation with the intended audience and the intended audience is all of us if going by that it records everyone and therefore it will be unbearable to some now these posts on here might be in jest but I think the ick factor is something that might help us when we're building these tools and devices but who knows these AI wearables might just implode on themselves because although they are wearable and sharable maybe the market has actually now deemed them too unbearable but I could be reading too much into this we in tech must enforce boundaries for the uses of these devices in our organizations and unfortunately the legislation as Jeff said is really

lagging behind in addressing these concerns and big tech companies and the AI startups are not doing much to help and our organizations probably won't do anything until we're told to so we're kind of in a security development limbo at the moment but we better get to it fast because we're going to start getting too comfortable with these infringements of our data privacy and our security well I think a lot of these vaguely dystopian AI assistants will kind of boil dry when the services are baked into the operating systems and phones and that's been hinted at recently with OpenAI's GPT technology being put into the upcoming version of iOS iOS 18 one of the other fears

about sending our personal data to services is one of privacy and there's the much-used quote often attributed to Joseph Goebbels but it's not him it says if you've got nothing to hide you've got nothing to fear yeah heard this elsewhere as well and that's what happens when security and privacy kind of get conflated life is really not quite that simple the basic human right to privacy according to the Human Rights Act of 1998 is not just about things like criminal acts or intent when it comes to our personal and corporate interactions to counterpoint that last quote I think there's another quote here more fitting to this talk attributed to Cardinal

Richelieu this time actually accurately see what we say do or believe can be used against us even if it's entirely legitimate what about wearables when wearable devices become part of the social contract well the truth is that the quest for the quantified self is one of the other parts of the allure of these devices it's not a new concept we've been tracking our steps our calories and other metrics for some time now however the question is who's using this information why are we willing to give away more information when a leaderboard is involved there's no real prize except for maybe the criminals because really this is just kind of narcissism in data form why do you think all of this is so

valuable it should be shared with everybody what happens when you don't actually get to choose whether your data is being shared so when you don't get to choose the dark side of the quantified self is when someone else is quantifying us now unfortunately we don't have time to go into too much detail on things like bossware and workplace time and motion tracking which are all very awful but we would like to look at the bigger picture the UK government recently trialled GPS tagging for immigrants coming to the UK which drew much ire from Privacy International who found that basically the people subject to this suffered great physical and mental health impacts imagine having to account for everything

you do every hour of the day every day of the week potentially having that used against you especially when there's a lack of transparency on what data is being collected and how it's being used in most cases people didn't even know how to actually challenge the decision of being tagged in the first place and there was a range of practical problems with them they didn't work very well they didn't charge they broke and this year only a few months ago the ICO ruled that the Home Office had breached UK data protection law not really our finest hour there so another point millions of people use menstrual tracking apps every day

they use them for lots of reasons like predicting periods for tracking health menopause knowing when to get pregnant when to use contraception things like that Flo and Clue are the two biggest players in this area and both have 50 million active users each so big deal and health data I'm sure we can all agree in this room is extremely sensitive data but in the wake of the Supreme Court decision to overturn Roe versus Wade in some states privacy experts began to be increasingly concerned about how that data is collected and how it could be potentially used against people in the future particularly penalizing anyone that might have had an abortion now privacy experts were on

edge about apps like Clue and Flo because this data whether it be subpoenaed or sold to a third party could be used to prosecute people this is really something people are very concerned about at the moment in America and with our perpetually recorded life what's to say something that I did that was acceptable years ago won't be used against me today it's very much in line with the Chinese social credit system which showed that data can be used to exploit people outside of the law so frightening stuff so so far we've talked about things like the types of device entering the ecosystem and how this social contract is changing forcing us to choose between participating in a

technology driven society and things like our right to privacy and how it could be used against us in the future but how much data sharing is going on these days well let's have a quick look at cyber crime by generation before I reveal it can I see a show of hands combining three types of cyber crime so raise your hands if you think Gen Z have the most incidents how about millennials Gen X how about baby boomers right okay so baby boomers got the vote okay this is consistent so CybSafe's Oh Behave report shows that actually millennials aren't faring too well on cyber crimes are you surprised by this the most tech savvy generation is also the

experiencing the most incidents now it could be that these generations are also the most online according to McKinsey Gen Z only 1% of them read the Ts and Cs they're the most likely to take risks online most are okay giving access to personal information in exchange for some kind of nice cookie but actually Gen Z are also doing things like buying VPNs and taking other protective measures but I have to wonder is it a coincidence that what McKinsey calls the most permissive of generations is also leading quite a lot of these startups and AI companies could it be the fact that they are so permissive that they don't feel the ick factor as much as

other generations might do potentially I think more research needs to be done but there's a correlation what it does say I think is we can't do one-size-fits-all security because it's quite generational so I think we're actually almost at time so should we skip to the end we can talk about the digital panopticon come and talk to us about the digital panopticon later if you want to well let me tell you this story though go back so it's not just things I'm wearing it's not just my computer recording everything I'm typing on it's happening all around us and there was a survey done by this organization called Big

Brother Watch and they published a study and it's under our very noses with things like billboards and they uncovered that millions of people's movements were being recorded all the time with these electronic billboards and that's including children and it's for targeting ads specifically and if you watch it long enough you will see some of these billboards actually especially on the sides of buses change for the individual slightly and what they're saying is it's really intrusive surveillance techniques where they are literally watching you to understand all the demographic details what's your age what's your gender they call it dwell time how long are you looking at this ad

how do you feel when you're looking at this what's your facial expression and they're taking all this data without consent and they turned this in and there's a cute news story that kind of came alongside this in which a shopping center decided to use the electronic billboards and it was to foster a dog and so what they had is this dog you would come into the shopping center and this dog would pop up and would follow you around the shopping center again without consent this is the social contract in full force so what are we going to do about it well as I say government legislation isn't there yet so it just leaves the

burden in one place us us building the products us having to work on securing these devices and also us consuming them so just to recap easy to adopt tech tends to be the hardest to secure because of its ubiquity and the ease with which it enters our environments and our habits we're kind of on our own when it comes to government legislation on tech because it's slow so it's up to us to influence good tech practices from our positions in these new institutions we need to make sure we're enabling business to only collect what's needed keep that GDPR close so it can't be used against us eventually one size fits all never works in security

and it's certainly not going to work in this new tech landscape we need to follow the whole chain and not all cyber incidents are financially motivated in my mind it's a call to action to us really not something to fear it just means we've entered into a new era of technology and that means our methods will need to change accordingly and we should all be champions of the responsible use of this technology acknowledging that it will be used where we work in the home in public and obviously in the physical workspace as well and we'd go as far as to say that security teams should fully own things like AI and IoT and smart device implementations not to be a gatekeeper

but to actually enable it safely and to do that we need to start talking more about how we can change to meet this new era and so if you fancy that there is this it's free online it's called PLOT4ai it's a really great tool which you can use in your teams it's a bit like a threat modelling activity and if you are interested yeah there's a little QR code there yeah and as a side note if you actually enjoy the kind of awful AI then there's actually a GitHub repo that's keeping tabs on AI I would recommend you have a read of some of that it's really great yeah

so in the world of the machines knowing what it is to be human is going to be very important it will be important for the architects of these devices and products to make sure that the services make it irresistible for us to consume it'll be important to those who want to use what makes us human against us and it will be important to us to make sure that they don't cross that line of what is wearable sharable unbearable thank you very much for your time giving us a little bit of your love