Bringing Red vs. Blue to Machine Learning

Speakers

About this talk

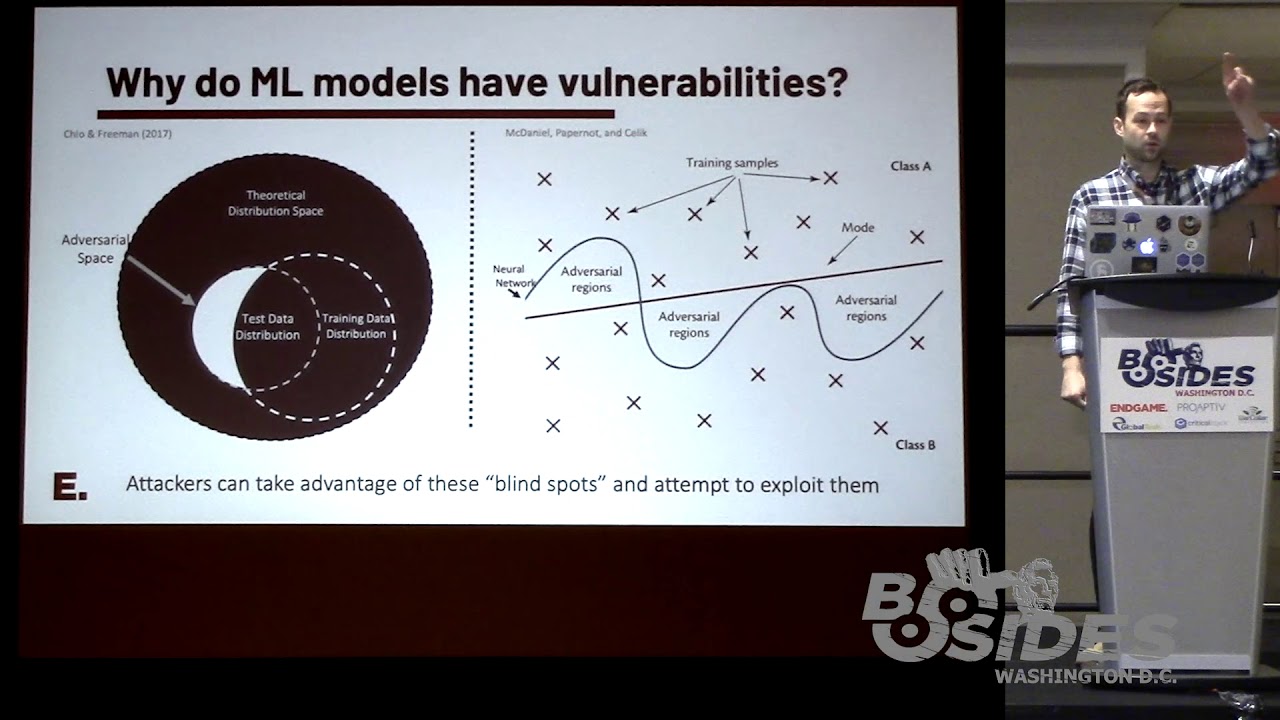

Machine learning (ML) has introduced novel techniques designed to identify malware, recognize suspicious domains, and detect anomalous behavior, even in the absence of previously observed data. As ML-based security platforms gain popularity, the models they rely on can introduce evasion or bypass vulnerabilities. In fact, security is at best an afterthought for most machine learning models. This creates an interesting dynamic in which ML models both enhance defensive capabilities and expose new threat vectors for attackers, making them a novel challenge in Red vs. Blue exercises moving forward.

In this presentation I will briefly introduce adversarial machine learning as a concept (how these models can be attacked) while demonstrating how defenders can develop countermeasures or resiliency. I will also explain why defenders should not view ML as an invulnerable panacea, but as one component of a defense-in-depth stack that requires the same rigor as every other component.

I will first demonstrate adversarial machine learning through an example-driven approach focused on the use case of attacking EMBER, an open-source malware classifier. With that foundation established, attendees will learn how an attacker might implement strategies for attacking ML models using three common approaches: black-box (no knowledge), gray-box (limited knowledge), and white-box (perfect knowledge). For red teamers, these attacks are executed in much the same way as attacks on software with a buffer overflow vulnerability: attackers need only determine how to manipulate an input to maximize the likelihood of a bypass, based on their knowledge of the target model. Demonstrations will show how attackers can “fuzz” a binary to generate small manipulations to the file that can lead to a bypass of a malware classifier. Moreover, I will demonstrate how these attacks can often transfer to previously unseen models.
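The black-box "fuzzing" idea described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: `toy_score` is a hypothetical stand-in for a classifier (it is not EMBER and does not parse PE files), and `fuzz_until_bypass` is a hypothetical helper that only appends bytes to the end of the input, a manipulation often treated as leaving a binary's behavior intact. It is not the method used in the talk's demonstrations.

```python
import random


def toy_score(data: bytes) -> float:
    """Hypothetical classifier stand-in: fraction of 0xCC bytes in the input."""
    if not data:
        return 0.0
    return data.count(0xCC) / len(data)


def fuzz_until_bypass(sample, score_fn, threshold=0.5,
                      max_queries=200, chunk=16, seed=0):
    """Black-box search: the attacker sees only the score returned per query.

    Appends random printable bytes and keeps a change only when the score
    drops. Stops once the score falls below `threshold` or the query budget
    is spent. Returns (candidate, score, queries_used).
    """
    rng = random.Random(seed)
    candidate = sample
    best = score_fn(candidate)
    queries = 1
    while best >= threshold and queries < max_queries:
        padding = bytes(rng.randrange(0x20, 0x7F) for _ in range(chunk))
        trial = candidate + padding
        trial_score = score_fn(trial)
        queries += 1
        if trial_score < best:  # keep only manipulations that help
            candidate, best = trial, trial_score
    return candidate, best, queries


# Toy "malicious" input: 48 suspicious bytes followed by 16 filler bytes.
original = bytes([0xCC] * 48 + [0x90] * 16)
evasive, score, queries = fuzz_until_bypass(original, toy_score)
print(f"score {toy_score(original):.2f} -> {score:.2f} after {queries} queries")
```

The original bytes are left untouched and only the tail grows, which mirrors the "small manipulations" framing: the attacker needs no knowledge of the model internals, only the ability to query it repeatedly.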
I will also discuss the ramifications of modifying the PE headers of x86-64 binaries as one method of evading an unknown classifier. For blue teamers, I will provide an overview of how defenders must gain a deeper understanding of model blind spots, vulnerabilities, and potential model defenses to best combat novel attacks. I will give examples of model hardening that improve defense and reduce the attack surface of classifiers.

Bobby Filar (Principal Data Scientist at Endgame)

Bobby Filar is a principal data scientist at Endgame, where he employs machine learning and natural language processing to drive cutting-edge detection and contextual understanding capabilities in Endgame’s endpoint detection and response platform. Previously, Bobby worked on a variety of machine learning problems focused on natural language understanding, geospatial analysis, and adversarial tasks for a research non-profit. Bobby has given talks at several industry conferences, including O’Reilly Security, AISec, and SANS.