BSidesSF 2025 - Lessons from Running a Product Security-Focused... (Aditya Saligrama, Joey Holtzman)


Please welcome Aditya Saligrama and Joey Holtzman, presenting "Lessons from Running a Product Security-Focused Cybersecurity Clinic." For all Slido questions, please reference bsidessf.org and select theater 9. Cool. Hi everyone, and thank you so much for coming. We've been running a product security-focused cybersecurity clinic at Stanford for the last year and a half or so, and we're super excited to share more about that with you. For some quick intros, my name is Aditya Saligrama. I'm a recent graduate of Stanford; I graduated back in December. At Stanford I led Applied Cyber, the student cybersecurity group that hosts the security clinic. I competed in CCDC and CPTC for several

years, including podium finishes at nationals. Once I graduated, I joined Formal as a senior software engineer. We empower security teams to enforce least-privilege access to production data and infrastructure resources, and we're a sponsor. Hello everyone. I'm Joey, and I'm currently a second-year student studying computer science at Stanford. I've taken Aditya's place as the president of Stanford Applied Cyber, and I'm excited to share this talk with you today. Cool. So we're going to start with some framing. As it turns out, security clinics are not a particularly new concept, and many universities have started their own clinics over the last eight years. And actually, if you

go down a couple of theaters, you can find a talk by Sarah, who is the leader of the consortium of security clinics, an organization that is host to a number of clinics. There's a clinic at Berkeley, for example; there's one at MIT, one at CMU, one run by a series of colleges in San Diego, one in Nevada, one in Texas, and elsewhere across the country. They've been popping up over the last decade or so, and they're mainly started somewhat top-down by universities that want to give their cybersecurity graduates an experience of improving the security posture of organizations in the real world. So

they're going to partner with local organizations like municipal governments and local businesses to help improve their security posture. Now, they're typically corporate security-focused: for example, things like making sure everyone is set up on SSO, enforcing MFA, looking at Windows Active Directory configurations, coming up with incident response policies, and all sorts of other fun corporate information security stuff that is vital to improving the overall state of security in these localities. The thing is, at Stanford the prototypical local organization isn't necessarily a municipal government or a small business. It's usually a tech startup. At Stanford, as you might know, there's a significantly

entrepreneurial environment in which many students are interested in starting startups, and many students do go on to found startups, sometimes after they graduate but increasingly often, especially with this AI boom, while they are students at Stanford. And so we've encountered a lot of young startup founders who start building their startups long before they take a formal security course, which means that often they are building things without necessarily having a security mindset or knowing how to secure the products they develop. At the same time, the products they develop do host a significant amount of user data. The specific kind varies over time with what students

are interested in building. Obviously right now it's applied AI, but over time these products have tended to host more and more student data. As a result, there's been a significant challenge with student startups hosting all of this student data and then potentially having it exposed by security vulnerabilities. That's the problem from which the security clinic started: we observed this growing issue but also realized that we weren't going to be able to stop students from building startups. Neither should we. It shouldn't be necessary to

quash entrepreneurial spirit. Yet we need to help these student entrepreneurs, as well as everyone else in the Stanford community, build tech products securely even if they don't necessarily have a formal security education. In the environment in which this began, we substantiated some of these findings empirically. In fact, I started my security career at Stanford almost by identifying security flaws in student startups: literally finding security vulnerabilities and then just sending out vulnerability disclosures. Probably not the best-advised way to do this, nor the safest. In fact, I even ended up at the wrong end of a lawsuit

threat once from a student social media startup at Stanford as a result of this. I didn't learn my lesson. I kept doing this, kept filing more and more disclosures, with better reception from the startups over time, thankfully. But I also realized that this wasn't super sustainable. Literally finding startups and sending disclosures is maybe not the best or most efficient way to protect student data at Stanford. So we decided instead that it was better to be proactive and host this somewhat more formally, letting startups come to us and partner with us to let us help them with their security posture,

maybe keeping some of these same elements of vuln finding, but while they were in the room with us and with their full initial cooperation, rather than just receiving a disclosure out of nowhere. To level set a little bit before we talk more about the clinic itself, I also wanted to discuss the common vulnerabilities we've seen before and during the clinic. The broadest category of these is probably misconfigurations of backend-as-a-service platforms like Firebase and Supabase, in which the overwhelming model is that a client, like a web or mobile app, can make arbitrary queries to the

database. As a result, it is often incumbent upon developers to write static security rules or row-level security policies to prevent the client from seeing data that it shouldn't be able to see. In Firebase, this is literally by natively using the SDK: you can just query for somebody else's data and often get it if they've misconfigured their security rules. In Supabase, it's because Supabase helpfully autogenerates a REST API for you over your Postgres database, and as a result any client can make arbitrary queries, especially if you don't have row-level security rules set up for that.
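As a conceptual illustration of what a row-level rule enforces, here is a minimal Python sketch. It is an in-memory model only, not Firebase or Supabase syntax; the `Row` type, the `TABLE` contents, and both query functions are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    owner_id: str
    email: str


# Hypothetical table contents, for illustration only.
TABLE = [
    Row("alice", "alice@example.com"),
    Row("bob", "bob@example.com"),
]


def query_without_rules(requester_id: str) -> list[Row]:
    """Misconfigured backend: any client can read every row."""
    return list(TABLE)


def query_with_row_rule(requester_id: str) -> list[Row]:
    """Row-level rule: a row is visible only to the user who owns it."""
    return [row for row in TABLE if row.owner_id == requester_id]


if __name__ == "__main__":
    print(query_without_rules("alice"))   # includes bob's row
    print(query_with_row_rule("alice"))   # only alice's row
```

The point of the sketch is that the filter runs on the server side of the trust boundary: the client supplies a query, but the rule decides which rows can come back.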

The second broadest class of vulnerabilities is authorization and authentication confusion with APIs, where these APIs, especially custom APIs, often only check that you're logged in before they let you make arbitrary queries and take arbitrary actions on other people's accounts. For example, you can cause two other people to block each other on a social media platform, because the endpoint takes their user IDs as input but only checks that you're logged in before allowing you to take that action. A couple of other issues include, for example, unprotected GraphQL endpoints, in which you can just request the schema from GraphQL, and that gives you all the information you need

to start querying for actual data, and other basic API security issues such as IDOR and flawed input sanitization. And now over to Joey to talk more about the security clinic. Awesome. So, we get a bunch of startups, and they're usually at different levels of product maturity. Some of them started maybe a couple of months ago; some of them have been around for a few years. So I'm going to show you the structure of how we handle these different types of startups to get the most out of the engagement. First, before the engagement, we'll give the client a

worksheet as well as some legal docs; these legal docs are just a mutual NDA and an MOU that we have. The worksheet that you can see here on the right allows the client to detail the tech stack of their app as well as what it actually does. This allows us to better understand what their product is, so during the engagement we can focus more on the actual security of their product rather than on understanding what it is in the first place. Second, once we get to the actual engagement, we first do a short walkthrough,

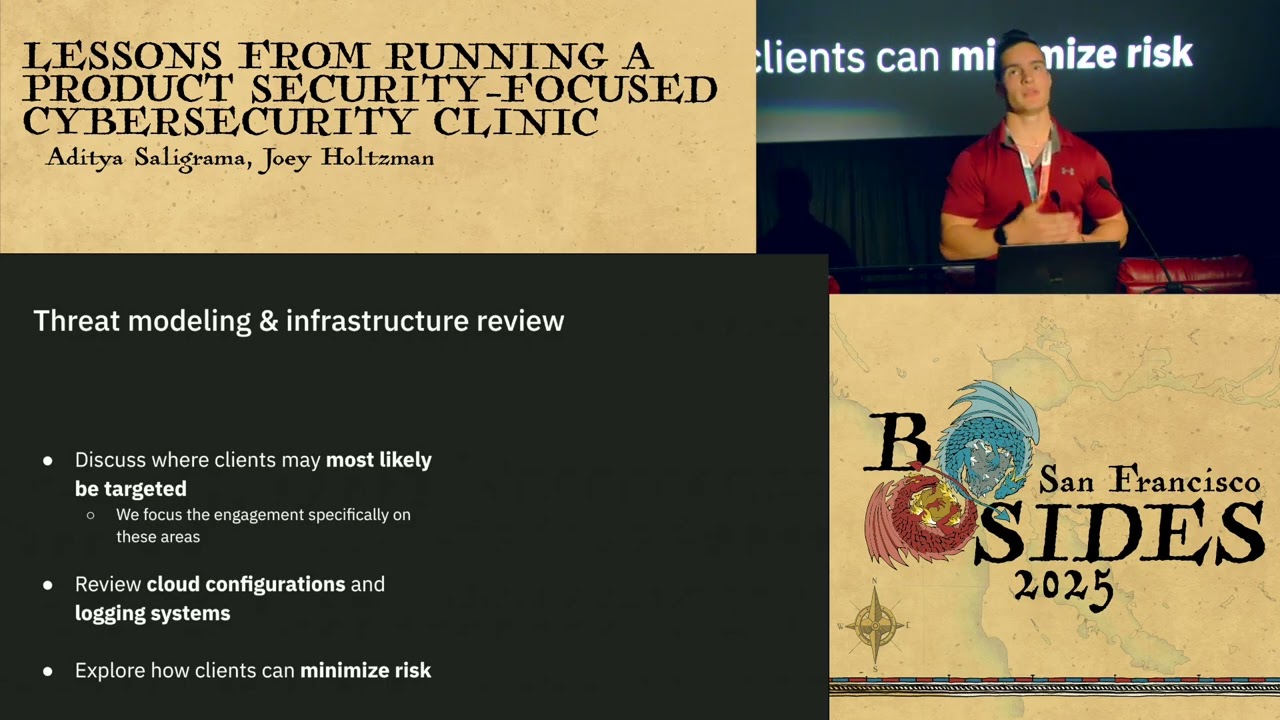

having the client detail how a typical user would use the app so we can get a better understanding of that. Then we'll go through a threat modeling and infrastructure review. This means finding out where the clients could potentially be targeted the most by attackers, as well as going through any of the cloud configurations that they use, because really all of the startups that we have seen use the cloud. So we'll go through their AWS, their Supabase, anything like that, and see what types of misconfigurations there are. Next we'll go through a data security

consult. Most products will have some sort of sensitive data that we want to make sure is secured, so we'll go through and see how the users are able to communicate with the database and the back end. Last, we'll go through my favorite part, which is the live security testing of their product. Typically the first couple of steps take the first hour of the engagement, and we leave the last hour for the actual live security testing. Afterwards we'll go through a debrief, and later on we'll send them a write-up based on the

vulnerabilities that we have seen as well as any remediations that we recommend for them to fix in their product. Now I'll detail steps three through five a little more. First, in the threat modeling space, we want to discuss with the clients where they're most likely to be targeted by attackers. Take, for example, a social media platform: we want to ensure that the clients understand where the attackers may focus specifically. Maybe this is exposing the sensitive information of user accounts, such as emails and phone numbers and whatnot. So

that's just an example. We'll also go through all their infrastructure configurations, because there are many configurations in these cloud platforms, and we want to ensure that any misconfigurations are identified and that the clients can then fix them. We also want to ensure that if a breach or an incident happens within the product, the clients can find out how the attackers actually got in. They can do this through logging systems and alert systems, so we ensure that they have these in place and that they're also

configured correctly. Okay, now a little more on the data security consult. We'll go through with them and find out the type of data that they're storing and how they're storing it, as well as how users actually get their data through the backend, and maybe authorization middleware, seeing how that is laid out right now: whether it's necessary for users to be directly connected to the database and have access to that data, and what data those users should and should not have access to. We will also advise clients on how to

protect the sensitive data that we have identified in the best way possible, so that in case of an incident the resulting risk is as low as possible. And now to the live security test. This is where we'll go through all the potential misconfigurations and things that we've noted down previously in the engagement, and we'll actually go into the product, test whether these systems are secure, and try to find any vulnerabilities. We'll also verify that what the client has said about their security thus far in the product

is what is actually happening, because many times we've had clients say, "Oh yeah, we have this authentication for this page," or whatnot, and that's actually not the case. So we'll go through and ensure that it is in place. At the end of the security test, we'll discuss what we found as well as any remediations that we recommend. Okay. So now I'll talk about some success stories that the clinic has had within the last couple of years that it's been running. We have definitely seen many different types of startups, in terms of the different domains

that they are in as well as the maturity of their product. We've seen some startups come to us that have only been in the startup space for a couple of months, and we have some startups that have been there for multiple years. In basically every single engagement that we've had, we have identified some major flaws in the security of their product. That's helped vindicate our initial motivation for starting the clinic as well, and we're able to find these flaws before an actual attacker can do so and take advantage of them. The main kinds of startups that we

have seen are, first, public interest tech startups: one was a startup that would help journalists; another was a nonprofit that helps nonprofits manage their grants. We've had edtech startups as well. The second main kind of startup that we've seen is B2B applied AI startups. If you look at the current Y Combinator batch, you'll see that most of the startups going into that batch are actually B2B agent AI, so it's understandable that we get a lot of those startups as clients as well. And then last, we've

also seen a couple of fintech startups, one example being a startup that helped with international trade, specifically within Latin America, and also some biotech startups, one of them being a startup that provided computational tools and a web interface for scientists to develop proteins, molecules, and enzymes. Okay. Recently we have actually expanded a little bit beyond startups. We worked with a Stanford internal development team, and throughout the engagement we evaluated their website security to see whether their WordPress websites were securely set up. We also discussed a lot of

their plans in case an attacker was actually able to breach the systems. We were able to go through that with them in many different scenarios, like potential ransomware or DDoS attacks. We were also able to identify some vulnerabilities in their systems, one being a DoS, and looking through their logs we saw that there were a lot of script kiddie attacks, so we also gave them some ways to help mitigate those and overall make Stanford's infrastructure stronger. Okay, and I'll hand it back to Aditya. Yeah. And now is part of the fun

part, which is detailing some war stories and vulnerabilities we've caught in the startups that we've hosted at the clinic. One of our very first clients had an edtech startup with an admin dashboard that was protected only with client-side access control. That basically means you could load, say, app.com/admin, and it would block you because there is some sort of access control there, but then you hit the back button in your browser and it exposes the entire admin dashboard, where you can make changes and see sensitive data. So that was kind of fun. Another startup, and

this startup came to us in an interesting way: whereas most of our clients email us via our main contact page and ask for an engagement, with this startup I got a Slack message saying, "We are actively under attack. Could you help us out, please?" As it turns out, as we did this incident response process live with them over the next hour, we found that they had accidentally exposed their AWS API keys in the front end, and that some crypto-mining bot scanner had found these API keys and spawned a number of container clusters that were mining crypto in

their AWS account and sending the proceeds back to the crypto-mining group. We had to help remediate that: shut down the IAM roles that the bot was using to create those clusters, shut down the clusters themselves, and then go through their AWS account, see how else they'd exposed resources, and shut everything else down. We also helped them communicate with AWS to try to get the $6,000 bill that they had racked up in two days waived. So we definitely do some very diverse work here at the clinic. The same startup also had a couple of

other issues, including the fact that they had a Python Flask endpoint that could spin up machines to do various biotech jobs. As it turned out, there was command injection, where you could get remote code execution on their main web server via that endpoint. Another issue, which we found in a public interest tech project (not quite a startup), involved a feature for AI analysis of PDFs. You could submit a PDF, or a URL to a PDF, and have that fed into an LLM to get your PDF analyzed. Clearly

there wasn't proper validation of these URLs, because one thing you could do is ask for the EC2 instance metadata service, which would then give you back the AWS IAM role keys that you could use to take over the AWS account. But a more clever and fun exploit that we did with this endpoint came from realizing that TinyURL lets you make predictable shortened links. You could enter a custom TinyURL link into this app and then have that TinyURL link redirect back

to app.com/index?url=tinyurl.com/mycustomlink. With this loop, the app would just keep visiting the TinyURL link and then itself, over and over again, until it finally ran out of resources and crashed. So that was something pretty significant we found. A couple of other miscellaneous ones: a fintech company whose entire business we could have wiped out with a single web request, because they had an API endpoint for withdrawals of money that allowed you to enter negative numbers to withdraw. There are numerous startups with

their OpenAI API keys exposed in the front end, so you could rack up more big bills that way. And then a last fun one was an admin panel in another startup that they claimed to have gated behind a VPN; that is, if you're not coming from a VPN IP address, they're going to kick you out. We found that the way they were enforcing this was with a Django plugin called admin restrict, with a CVE from several years ago in it, where it just blindly trusts the X-Forwarded-For header, which is supposed to be set by a load balancer, but of course you can set it to whatever you want.
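To make the header-trust flaw concrete, here is a minimal Python sketch. It is not the plugin's actual code; the allowlisted range, the load balancer address, and all function names are hypothetical. It contrasts a check that believes the client-supplied X-Forwarded-For value with one that honors the header only when the direct TCP peer is a known proxy.

```python
import ipaddress

# Hypothetical values, for illustration only.
ALLOWED_NETWORK = ipaddress.ip_network("10.8.0.0/24")    # the "VPN" range
TRUSTED_PROXIES = {ipaddress.ip_address("192.0.2.10")}   # the load balancer


def naive_client_ip(headers, peer_ip):
    """Flawed: believes whatever the client put in X-Forwarded-For."""
    forwarded = headers.get("X-Forwarded-For")
    if forwarded:
        return ipaddress.ip_address(forwarded.split(",")[0].strip())
    return ipaddress.ip_address(peer_ip)


def safer_client_ip(headers, peer_ip):
    """Honors X-Forwarded-For only when the TCP peer is a trusted proxy."""
    peer = ipaddress.ip_address(peer_ip)
    forwarded = headers.get("X-Forwarded-For")
    if peer not in TRUSTED_PROXIES or not forwarded:
        return peer
    # Walk right to left: the rightmost entry was appended by our own proxy,
    # while entries further left may have been supplied by the client.
    for hop in reversed([h.strip() for h in forwarded.split(",")]):
        addr = ipaddress.ip_address(hop)
        if addr not in TRUSTED_PROXIES:
            return addr
    return peer


def is_allowed(client_ip):
    """True when the resolved client address is inside the allowlisted range."""
    return client_ip in ALLOWED_NETWORK


if __name__ == "__main__":
    spoofed = {"X-Forwarded-For": "10.8.0.5"}
    print(is_allowed(naive_client_ip(spoofed, "203.0.113.7")))
    print(is_allowed(safer_client_ip(spoofed, "203.0.113.7")))
```

In this sketch a request arriving directly from 203.0.113.7 with a spoofed header passes the naive check but fails the safer one, because the safer version falls back to the real peer address whenever the request did not come through the trusted proxy.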

And so as a result you could find their allowlisted IP ranges, set the X-Forwarded-For header to something within that range, and ta-da, you now have an admin panel. So we've caught a diverse range of vulnerabilities of very different types here at the clinic. It's been a very fun experience and also very helpful for these clients, who, if they had deployed these apps in the real world, would be finding out about these vulnerabilities in a much more difficult way. And now back to Joey to

talk about some lessons learned. Yeah. So, there are definitely a lot of lessons we've learned over the last couple of years in the clinic. The first, as I talked about earlier, is giving the clients the worksheet and the legal docs beforehand, because this allowed us to prepare for the engagement by reading through the worksheet and understanding what the client's tech stack looked like. That way we didn't have to do that during our time with the client, and we could focus more on the security and configuration of their app. Also, and clients were really good about this, they gave us test accounts and test

data before the engagement. So when we did the active security test, we were able to use a staging environment rather than the actual production environment, and we didn't have to compromise any actual users of the app. Now for some tips for those of you working with early-stage startups, or other clinics doing that. The first is that with these startups we see a lot of commonly used software, such as Supabase and GraphQL, and checking for misconfigurations in this software is pretty

tedious to do manually, so developing automated tools to check for these security misconfigurations can be a big help and save a lot of time during the actual engagement. Another is that these startups differ a lot in their needs, depending on the maturity and the domain of their product, so refining those methods before the engagement can really help it run smoothly and ensure that the clients get the most out of it. Last, something that we have been doing a lot is emphasizing to our clients that early-stage

startups are actual targets for attackers. I think many people think that the attackers are after big companies like Google and Meta, but really, as Aditya pointed out earlier, these attackers are going after early-stage startups a lot too. We want to emphasize that these founders should take the security of their products seriously, and that'll help them exponentially as they grow as well. All right, thank you very much for coming to the talk, and we'll take any questions now. [Applause] Thank you guys. Starting with our first question: When would you consult with professionals regarding your recommendations? How much experience did you have before the clinic? And how

confident are you in what you do given your relative experience level? Yeah, I can take this one. That's a great question. I can answer initially about the experience level: we've been finding vulnerabilities like this in startups at similar stages to the ones we take in for several years. And of course one thing that we emphasize is that what we find is often not an exhaustive enumeration of the vulnerabilities that a startup might have in their app. The obvious constraint beyond our experience level is

actually just the fact that all of these engagements are two hours, including all the review and not just the pentest. So we definitely find it appropriate to consult with professionals, and we have done that before. But what we often find is that for the startups we deal with, the attack surface of their app is limited because they're very early-stage startups, so we're able to do an 80 to 90% pass at it within that two-hour session. Obviously, yes, there can be more vulnerabilities that are more

complicated to discover. Often what these startups are looking for initially is the first and most obvious vulnerabilities. Thank you. You mentioned some common vulnerabilities found in your clients' apps. Do your clients generally have a good idea of the principles of secure design, and do you think it's a problem at the education level? Yes. I do think that a lot of our clients do not have very much experience with security design principles, and one of the things that we try to do in our sessions is elucidate some of the most basic things that they need to know about these security principles. I do think it is an

issue at the education level. Our friend Jack here in the audience has written in the past about the need for universities to teach security as a mandatory part of CS curricula. The thing here is that a lot of the founders that we encounter are very, very early in their CS careers. Many of them are freshmen in their first or second quarter at Stanford, which means that even if security were a required part of CS, they just wouldn't have gotten to it at that point in their educational careers. So yes, a lot of this can be helped at the

educational level. Some of it cannot, just because of how early these people are. What kind of engagement do you have with other organizations to review and improve your own processes for consultations? Yes. We've talked to the consortium of security clinics in the past, and we've actually gotten a number of recommendations from them on improving our own processes. The idea of having our clients sign legal docs beforehand was actually surfaced by them, and they were helpful in providing us templates for the documents our clients sign. And our last question: do you consider privacy reviews and compliance with privacy laws

such as GDPR or CCPA when reviewing startups' apps? I think we try to look at some of the principles that these regulatory frameworks are based on and try to apply the standards that make sense for these startups. The thing is that a lot of these regulations only really kick in once your app has 50,000 or more users, and the apps that we're dealing with by and large have at most a thousand users. Some of them have more, but none of the apps we've dealt with are up to the 50,000-user level at which these regulatory frameworks would

kick in, so we don't emphasize that as much. I will say that despite these apps having a small user base, the damage that they can do to these users is pretty significant if they host sensitive data. So our main priority is a pragmatic review of how they're hosting the data, what their data model looks like, and what they consider sensitive data exposure, and trying to guard against that. And that's all for our questions. Thanks, Aditya and Joey. Thanks so much.