BSidesSF 2023 - NLP for security log analysis : Learning to crawl before you run (Arjun Chakraborty)

Show transcript [en]

hey everyone uh my name is Arjun and welcome to my talk titled NLP for security log analysis uh learning to crawl before you run um so let's just start off with a sort of a brief introduction to myself uh I'm a detection engineer at databricks I've been kind of in the ml security domain for a while uh and some people would call it a specialized skill I'm having a full-blown existential crisis that's me on the side not part of any of these groups so hope you'll get some at some point I'll be there somewhere in the middle but yeah that's kind of been like my whole career basically so I thought this was an appropriate way to sort of put

myself out there uh so we have quite a few things on the agenda so um most of my talk is focused on embeddings and I will talk uh about what embeddings are and what they sort of represent but the idea is like uh can we actually use NLP models to sort of build out embeddings and then sort of use them Downstream for detection engineering tasks so I think this particular sort of diagram or or chart is like very familiar to a lot of people who work on the detection engineering side of things uh you know you have like a bunch of data that sort of comes in you're trying to do detection you're trying to do

correlation uh and then you're sort of feeding in alerts to the sort of the IR team uh now the sort of the idea is like this is not sort of restricted to just security you know I feel like the idea of extracting sort of signal from noise uh is sort of relevant across a lot of different domains and that's kind of what I was thinking when I was thinking about using NLP techniques like it's it shouldn't be just restricted to security it shouldn't just be restricted to other fields so why not security because the problems are the same security logs I believe are a language of Their Own uh probably harder than a lot of other

languages and NLP I feel like has been specifically designed to help with context and I do believe context is very important in security so that's kind of where um sort of things built up for me when I was sort of thinking about this and when I was working on the project associated with it as well um we'll now sort of get into the detection engineering side of things on the left here are sort of um examples of kubernetes audit logs I took like kubernetes as an example because I feel like I spend a disproportionate amount of time staring at them and they are my favorite logs I feel like by now so the idea is like when I'm building

out detections like how do I go about doing it like I do believe like static rules are very important so you know you're looking at maybe user agents maybe you're looking at resources looking at a bunch of different fields uh the problem with these sort of logs sort of arises is the fact that um schemas become very complicated uh cardinality of certain features becomes really difficult to manage so that point of time you know you're kind of playing whack-a-mole with your your static detection rules and most of the times you know if you just have those rules you're going to miss out on something not to say that they're not important so having sort of worked on sort of the ml

detection side of things I do believe that sort of there is a lot of um uh I would say there's a lot of advantages to using sort of a narrow approach which is sort of associated with static rules and then there is um you know you have sort of umbrella models as I like to call it with with either you're using machine learning or you're using other techniques to sort of get like a more holistic view of your detection sort of engineering use case uh which is kind of why I have these options here so you know your option one is your static rules then you have your embedding or other ml techniques but the

best way to sort of use them I believe is using a combination of all these options um so what are our embeddings and this is kind of like the core of my talk um embeddings are nothing but numerical representations of a word or a phrase um they're widely used in uh pretty much every other field that has text associated with it you know recommendation systems are something that pops out that you know use a lot of a lot of embeddings very very successfully so it's basically you know the ability to sort of map this word or phrase or in this case a security log to a vector of numbers this could mostly be in a higher dimensional space so what

it basically means is you know you have your audit logs which are mostly and completely just text uh you have like an embedding model and then you end up building these features which you can then use Downstream so embeddings are basically your core of all the other Downstream tasks that you're sort of trying to build out but what I really like to call it is it's essentially essentially building a representation of logs so it's trying to incorporate context as well and which I'll talk about later but the fact that this representation can be really powerful in terms of doing a bunch of other Downstream tasks I think that's where sort of the the core of uh the

embedding side of things uh sort of lie in um just a quick pointer to like sort of this um uh this diagram here um you know I wanted to take an example of like two different user agents and I wanted to point them out that you know if you actually sort of build out the embeddings associated with them and sort of uh display them on a three-dimensional plane you'll see that the distance between them is not just the fact that you know they have different strings associated with them embeddings actually help you sort of differentiate between logs which is which is so important when I believe like when you're sort of dealing with detection engineering tasks because

you're trying to sort of you're spending a lot of time sort of trying to um like kind of find the needle in the haystack so it's a really important to figure out methodologies that would help you do that so I've kind of mentioned uh context a couple of times and I wanted to sort of elaborate a little bit on that uh on the left is a completely fake user agent I'm pretty sure this doesn't exist but uh I wanted to point out the word Linux right because it's that's really common um as detection Engineers we spend a lot of time you know building out uh parsing agents or writing complex regex is sort of to build out these

features or detection rules now the idea is that when you have this word Linux the strings around it matter as much as Linux itself you may have many Linux based user agents so how are you going to differentiate between those so the idea is yes you can write a lot of regaxes you can write a lot of complex projects as well or you could use something on the or you could use something on the NLP side of things that will help you differentiate uh sort of between them the idea is like there are a lot of modern NLP techniques and models that have come up but as an example for this talk that do help sort of

figure out the contextual part of it it's called attention or self-attention in this case for for Bert and the idea is you can use these techniques to figure out like if there was another user agent with maybe like a different version name or a different string that follows Linux the fact is that context is incorporated and you could use embeddings to sort of differentiate between them or you can just keep writing reg access that's all up to you um so um yeah welcome to the AI hype cycle of our times um I felt like I had to put this you know after a conversation with my manager and I couldn't fit in all the models so

there are like four of them but you know a few hundred probably came in the last month as well but the idea is uh you know the model that I use is sort of right down at the bottom the first one uh Bert which sort of came in in 2018. um needless to say I you know llms are obviously the in thing right now and you know everyone's building one and not to say that they're not important uh but I do believe that there is a lot of value in smaller models as well especially because security is such a such a closed domain uh you know every Enterprise has a certain set of logs every Enterprise has

uh subtle differences in the way they have their logs and it's very hard to find a one model that beats them all in an insecurity unlike unlike other use cases where you know you're just talking about text generation or you're talking about chatbots or I don't know you're trying to make ice cream uh you know all the other things that are associated with it you can have one big model but uh but with security I feel like because it's so constrained and because Enterprise has differentiate so much from from one to the other you know you'll end up having to make uh make custom models uh the fact is llms haven't just come in since November of 2022 they have been in

use for a while now um you know I bring up the point of distilled models because digital models are the models that are sort of smaller in size but have comparable performance that have been used across the open source Community for a while now so you know one of my favorite bird models is distilbert which is basically using maybe 60 of the parameters that that bird uses or more optimized models such as Roberta as well and they become very popular they they work really well they're not as uh compute heavy so I do feel like there is a lot of scope for using such models within security and and not just uh not just bird models you know

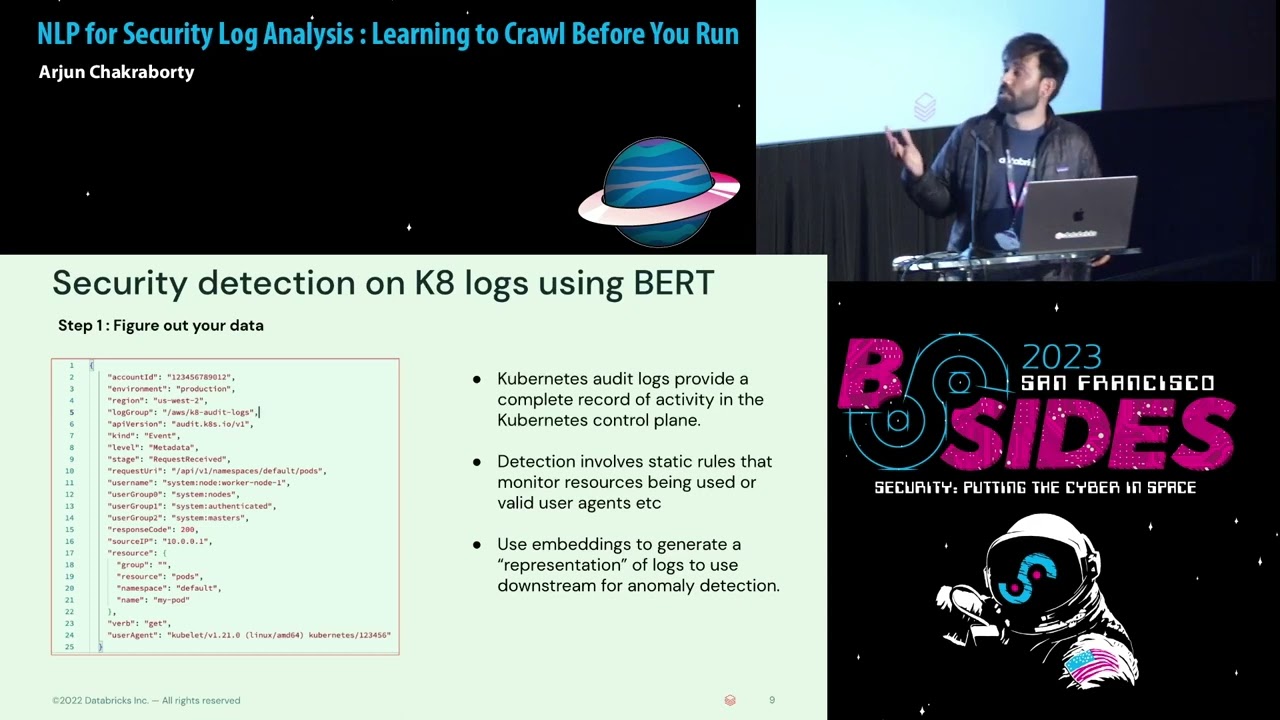

classical machine learning I do believe still has a place in insecurity but um you know we live in the times where we're talking about bigger and bigger models so uh I think there's something to be said there so come on to like the security detection side of things um and I wanted to sort of walk everyone kind of like through the process of of security detection itself um I picked kubernetes logs again because uh they're right now they're my favorite log Source but as you can see uh on the left that uh they are pretty complicated um I'm not a kubernetes expert by by any stretch of imagination but the idea is that when it's sort of providing you uh

sort of very detailed information about uh whatever sort of happening on your on your control plane and that is very important for detection engineers in most cases uh you know uh Whenever there are services that are sort of associated with with kubernetes you can have like a bunch of different logs but audit logs are generally the one that people sort of sort of walk in and on and try and build our detections they're also very useful for for like operationalizing things um in most cases when you're building out these detections uh It's associated with with static rules right uh you'll probably end up uh maybe looking at the user agent you'll try and figure out whether there

are user agents that that haven't sort of been part of the system for x amount of time um you'll probably end up excluding a bunch of usernames uh and you'll probably reach the point where you've excluded everyone so that your rules don't generate any noise and that can get frustrating right because if you go on the other end of the spectrum uh you're flooding your IR team team with alerts so while static rules can be used precisely for certain use cases I do believe that you need something um that is sort of uh wider or like or sort of a more umbrella based approach as well so the idea here is uh you know we're

going to be using embeddings to generate like what is a representation of of logs such as this okay so um before we just go on to the previous slide itself um I do put in Step One is figure out your data which is at a minimum understand what each of the fields mean at least um you also need to be understanding uh sort of how it sort of fits in within your system when you're sort of using NLP based techniques I feel like the second step is um kind of like the most important one so this is called like the tokenization process so you have like a bunch of text and you are converting them into a bunch

of numbers uh this is because neural networks do not understand anything but numbers as inputs now the process of tokenizing itself is can get very complicated um you could sort of build your own tokenizer but building your own tokenizer also requires you to build your own vocabulary and then build out a tokenizer on top of that and building out a vocabulary is is difficult um there are a lot of sort of pre-built vocabularies available and free build tokenizers available which you can use to sort of convert text into the tokenized values in most cases you know even with the smaller models and I say small sort of very lightly but a bird model with say 300 million

parameters which is by comparison nothing compared to maybe charge GPT or GPD 3.5 which is like 170 billion training those models from scratch is hard so you know I would advise anyone who's sort of looking at Text data for security and trying to build their own tokenizer uh good luck that'll take a lot of time so I would say you know use the pre-built once because pre-built ones have enough information within the Corpus to sort of incorporate uh the things that you need the third step is sort of trying to figure out your your embedding model itself now I have this example here as the bird model itself so sort of going into like the like the

specifications of the bird model is sort of beyond the scope but the idea of of sort of these these powerful NLP models is sort of twofold you could you could either pre-train the models or you can find you in the models now the pre-training part is sort of an unsupervised uh semi-supervised way of sort of learning the language representation when you're pre-training about model you are going to take like a bunch of logs a lot of logs and you're trying to sort of and to some degree tree in the Train the bird model from scratch and then you're going to find your net on a specific task uh in a lot of cases and in this case as

well you know I used a pre-trained bird model there was that was already available that had been using the English language Corpus and then I sort of fine-tuned it on a specific task so in this case my task was as you can see it's a classifier up there and I was trying to predict for each each specific set of logs like what the verb type is uh the idea was I would take this embedding model have it predict what the verb type is and then sort of extract the embeddings out those embeddings would be my representation of the logs itself now of course like I said you're welcome to sort of uh pre-train your bird model

and then find tune it on your on your son on your own objective um but from what I've seen that haven't been too many pre-trained cyber security specific models I think maybe a couple of them but on the other hand if you see a lot of other fields you'll see a lot more because just sort of more viable to sort of build them out but so this is kind of like the story for now in the sense that you know I have a model I'll be predicting the verb types and I'll be extracting the embeddings and seeing sort of what comes out of it and this slide sort of talks about the embedding results themselves

um I have a point there about embedding sort of evaluating your embedding results aren't the same as evaluating your model um you know it's sort of a little bit more ambivalent when you're trying to figure out how to evaluate your embedding results what I did was I trained the model on approximately 7500 K8 log samples and they aren't that many but you can always you can always expand them but my idea was to sort of take the embeddings generated by the training set and then sort of display them on a two-dimensional representation and as you can see uh sort of each each particular verb type has a sort of specific spot in the cluster which kind

of shows that the embeddings were successful in sort of breaking each of these verb types into like these separate clusters and that's kind of like an indication that hey you know what like these embeddings worked these are a representation of your logs uh based on based on your sort of your objective function when you're training the model itself it's uh it's sort of a more straightforward sort of multi-label uh multi-label classification model so you know you're just going to use something uh sort of more traditional like an F1 score or something of that sort so we're going to take these embeddings and then use them Downstream so the idea is I've trained all these uh trained on

all these logs I have a good representation of my logs which is based on this particular plot and now I'm going to use it Downstream um so um credit to the stratus rat team who sort of um have excellent documentation on a lot of red team use cases so I picked up one of their use cases which is you know can we detect if within a K8 cluster if an attacker tries to dump all the secrets uh within the within the K8 cluster itself now ideally would you have a rule for it yes uh uh there are there is a very high possibility you don't as well because hey that's just the way things work and

the detection engineering world um should you have our back control probably but again you may not have it but the goal of uh but the goal of this thing is you know not to sort of point out who's going where on the detection engineering side of things it's about me sort of taking this this log and simulating this on my sort of tester validation data set and seeing if like my embedding model can sort of find this particular log um so that's kind of what I did I had a bunch of uh validation data I simulated the presence of this particular log uh sort of the main parts are you know if you can see like uh like the

attributes and the objective resource you know there are secrets and that person is basically asking to kind of dump all the secrets and list them all the method does become does become pretty relevant because what I'm going to do is I'm going to take all the verb list types and sort of see if I can find this particular log within uh sort of my embedding process so that's kind of what I did um and as you can see what I did was I generated like the embeddings for the verb type list in for the malicious and that single benign uh data point um the blue data points out here indicate benign data points and that

single one gigantic orange data point indicates the log which was used to list all secrets um my hypothesis was that it would be isolated to some degree and it does work like that of course certain caveats are the fact that the model itself was trained on not too many logs so I would say that you know I would have needed sort of more examples of the warp type list to have like a better representation but the idea was that machine learning models that are using that you're sort of using for cyber security are not meant to find um or sometimes are not meant to find like specific instances of this going wrong or that going wrong right it's supposed

to be used in correlation with your detection rules so that if you can see with even if even if you did not have this rule if you had that particular set of points that was sort of a more isolated you could at least sort of be like okay you know what this is isolated this particular data point is something that we should uh we should investigate um this exercise in itself was more of a validation process where I could be like okay if we have if we simulate this will this be caught by the model and it does uh challenges in looking ahead I think is um uh pre-standard I would think um you know ml is is most definitely not

a silver bullet uh to the security problem uh I'm sort of a strong believer of of sort of going wide and then going deep as well and I think ml does help you sort of go very wide but you still have like a bunch of rules uh that would help you sort of go deep and then you'd have like a bunch of correlation efforts as well which can sort of help you face that problem uh another point is an anomalous event is not always malicious uh so you know in the real world the way things work that orange data point won't be visible to you that may or may not have been malicious maybe someone's your maybe your system

is okay with someone dumping all your secrets in a K8 cluster I'm not sure about that but but maybe uh interpretability is a definite problem especially with embeddings uh but I think a lot of that sort of comes up to um you know working with your IR team you know I think a lot of cases are where we struggle to sort of give the right information to IR so it's not so much about embeddings or numerical Vector representations it's it's just about answering the right questions when it comes to ir and giving them the right information to sort of operate when you're using uh ml models uh looking ahead I think within security I don't see one model sort of ruling

them all anytime soon um so I'll talk to you guys next year maybe um but I do see like a lot of fine-tuned bird models can be very useful because of just the fact that security is no different I believe from like any other field which is like very tax driven so we should be sort of using um uh you know bird-based models a lot more than we actually end up doing I think and the third part is of course you know um you know I work on the on the blue team side of things when it comes to using machine learning and security but you know you should be using the right platform you should be making sure that

uh you know the packages that you use or or the platform that you use is trustworthy and you know I know there are a lot of talks here associated with um sort of attacking AI models as well so you know I do believe like that's something that should be uh kept under consideration as well and lastly shout out to my detection and response teams who sort of uh knowingly and unknowingly have sort of helped a lot with this talk and questions [Applause] you know so what mode of these large language models very important

yeah distal bird was is just trained on mlab so I was using just a mass language model yeah yeah

I think you can do a lot of different things initially I wanted to just shove a isolation Forest onto it and be like okay you know what we can just have a contamination and see what sort of comes out yeah yeah uh you can use a cosine metric as well I think that's a really good idea you can I certainly believe like it's kind of hard to do that with uh clustering is great for visualization where I don't think it can be used as an anomaly detection tool so I do believe like a similarity metric like a science yeah let's give her a round of applause for a presenter if you have any questions you can ask

him any questions outside