Service Mesh Security: Shifting Focus to the Application Layer

Hello, welcome to our penultimate session here today in theater 14. It's my pleasure to introduce Daniel Papiscu, who's going to take it away with service mesh security. Thank you. Thank you. Hey everyone, I'm Daniel Papiscu. I'm the group tech lead for security at Yelp. Today I'm going to walk you through a multi-year journey to secure service-to-service communication at Yelp. You'll see what worked, what didn't, and how we ultimately found success by shifting our focus to the application layer. Here's the agenda for today. We'll start with a bit of background on Yelp's infrastructure and how we initially approached this problem. Then I'll walk you through some of the early attempts we made to secure that

communication and how those efforts didn't go quite as planned. From there, we'll dive into the solution we ultimately landed on, the trade-offs we had to make, and the realities of deploying it at scale. I'll wrap up with the results we've seen so far and some key takeaways that I hope you can apply to your own environments. So, that's me. I'm Daniel, like I said. I recently hit my 9-year Yelpversary. As the security group tech lead at Yelp, I help guide technical strategy across multiple teams, especially when it comes to security architecture and infrastructure. And while I have my fingers spread across all facets of security at Yelp, I realized while writing this presentation that

I've actually spent 75% of my time here at Yelp, in calendar time anyway, thinking about this problem of securing service-to-service communication. That means I've had a front-row seat to how this problem has evolved over the years and how we've tried to solve it. That's why I'm here today, and I'm excited to share this story with you. My hope is that you'll walk away with some ideas or insights, or at least feel a little less alone if you've faced similar struggles. Before we dive into the technical details, let me give you a quick sense of the environment we're working in, because the scale and structure of Yelp really shapes the kind

of security challenges we face. Yelp's mission is pretty simple: to connect people with great local businesses. We've been doing that since 2004, and over the years it's grown into something massive. As of the dates called out on the slides, we serve on average 29 million unique devices every month, and we've accumulated more than 308 million reviews. That's a lot of people relying on us and a lot of data to protect. Behind the scenes, we run hundreds of microservices built and maintained by dozens of teams. These services power everything from search and recommendations to business tools and mobile experiences. At this scale, service-to-service communication is a foundational part of how Yelp works. And

securing those connections reliably across hundreds of services and teams is no small feat. So, how does one manage all that communication and ensure that we secure all this critical data? That brings us to our first topic, the service mesh. So to get on the same page, let's quickly define what a service mesh is. A service mesh is a dedicated layer that handles how services talk to each other. It manages things like service discovery, retries, load balancing, and observability. Things that are essential when you're operating at scale. And in many modern implementations, the service mesh also provides security features like encryption, identity, and access control. Without those features in place, you're putting your data and your

infrastructure at risk of misuse. But when we first started exploring the service mesh space, the ecosystem was still in its early stages. Most tools focused primarily on basic routing and discovery, and if you wanted any features beyond that, like observability or security, you had to build them yourself. Of course, we wanted all of these benefits, reliability, observability, and security, right from the start. Who doesn't? But as is usually the case, we had to prioritize based on what the platform needed most at the time and our business needs. So now let's take a quick detour into psychology. This is Maslow's hierarchy of needs. It's a psychological framework that organizes human needs into a pyramid, starting with the basics like

food and safety at the bottom and moving up to higher-level needs like esteem and self-actualization at the top. Now, you might be looking at this pyramid and asking yourself, "What the heck does this have to do with service mesh security?" That's a great question. As it turns out, this model maps surprisingly well to many facets of life, including general software development and how we approach prioritizing things that need to be done. Here is my adaptation of Maslow's hierarchy of needs for the service mesh. At the base, you have to establish basic connectivity, things like service discovery and routing. If services can't find each other, nothing else really matters. Do you even have a service mesh

at that point? Next, there's reliability, then observability, then adoption. And finally, at the very top, security. Not because it's the least important, far from it, but because we can't implement it effectively until the rest of the foundation is solid. I know this order might feel a little backwards to the security-first folks in this room, but in practice, it's really the only way we could make meaningful progress. And as with all pyramids, you've got to start from the bottom. So let's rewind a bit and take a look at how we first started building out our service mesh, what the early setup looked like, and how we got things off the ground. The year is 2015. At the time,

our container workloads were running on Mesos, and we adopted an open-source service mesh called SmartStack from Airbnb. The focus back then was on the basics: service discovery, reliability, and observability. Security, however, wasn't part of the picture yet. Not because we didn't care, but because we didn't have the resources, the infrastructure maturity, or frankly, the immediate pressure to prioritize it. And honestly, that was a reasonable trade-off and the right decision at the time. We were focused on building a stable foundation. But as time progressed, the lack of built-in security became harder to ignore. Feature teams were building new services and user experiences. And naturally, they started coming to us in

security and asking us over and over again, "How do I protect my service from unauthorized requests?" Totally valid question. Unfortunately, it's one we did not have a good answer for. We had long-term plans to build security into the service mesh, but those plans were still very much "coming soon" (TM), and our existing infrastructure with SmartStack and Mesos just wasn't built for that. It didn't support pluggable security features, and we didn't have a clear, supported path for teams to follow. So, when this question would come up, we'd try to explain the complexities. We'd offer some guidance. We'd warn about the dragons they might encounter if they tried to build something custom on their own, but we

couldn't offer a simple paved path. So, teams were left to figure it out on their own. Some teams tried building custom solutions, with mixed results. Others gave up and left their services unprotected, not out of negligence, but just because doing it right was too hard. We knew this was a problem. I think we all understand this. The real challenge was figuring out how to fix it within the constraints of the infrastructure we had. For the longest time, we just didn't have the right tools to do it. But that all changed when some new players entered the scene. Now it's late 2018. We were beginning a major infrastructure migration to move away

from Mesos and onto Kubernetes. As part of that migration, we introduced Envoy, a cloud-native proxy that had become a common choice for service meshes in Kubernetes environments. For the longest while, we were in a mixed mode, running Mesos and SmartStack alongside Kubernetes and Envoy, in many different combinations of those four. This mixed-mode complexity really wasn't ideal, but the silver lining is that this new service mesh technology with Envoy opened up doors for us. Envoy comes with built-in security features, things like mutual TLS and authorization policies. And for the first time, we saw a real opportunity to integrate these security features into the service mesh itself. Our infrastructure teams were

supportive, our timing was right, and the tooling was finally mature enough. So we decided to go for it. This was our first real attempt to build a centralized, robust solution for service-to-service security. And so we did that. We built a system using native Envoy constructs and the Open Policy Agent, or OPA, to handle authentication and fine-grained authorization. A primary goal of our design was to take the burden off of individual service owners. They didn't need to modify their application code or redeploy their services. Security was enforced entirely at the infrastructure level through Envoy and OPA. It was beautiful. That included traffic encryption via mutual TLS and fine-grained authorization, all handled

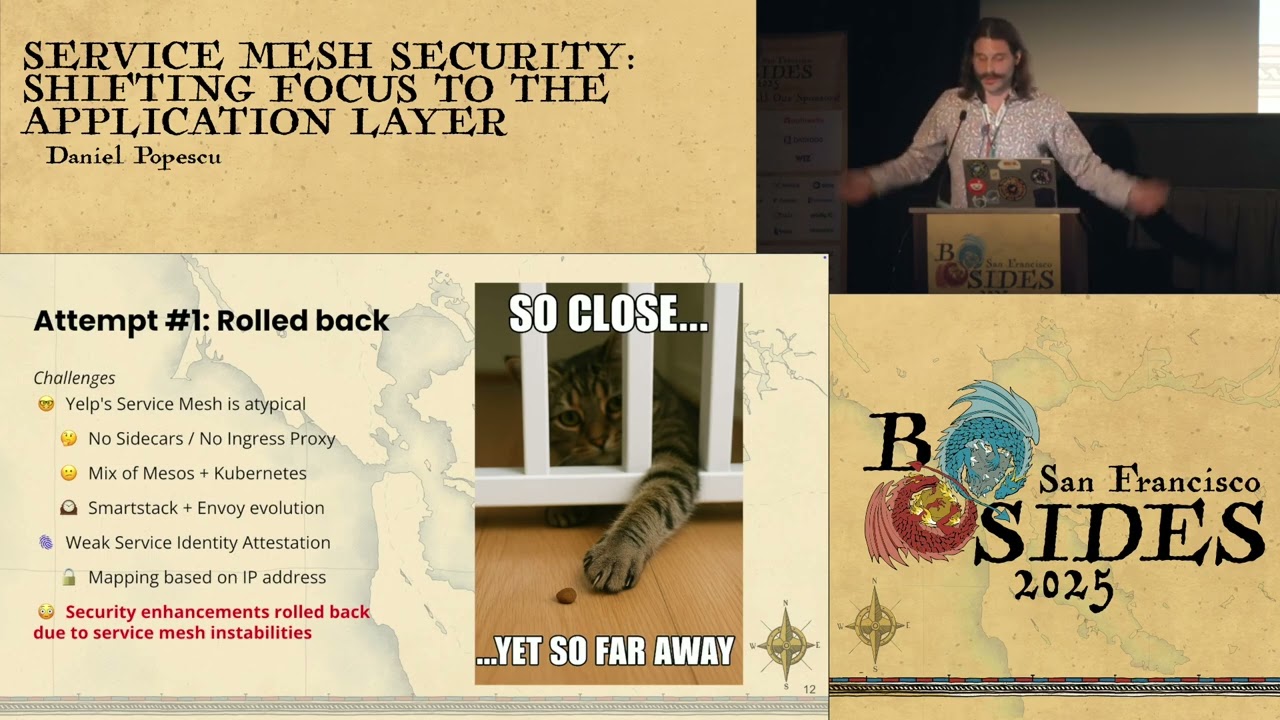

transparently. And even better, it was centralized and it was language agnostic. We even presented a talk on this project at KubeCon in 2019, and we were beyond excited, and it felt like we had finally solved this puzzle that had perplexed us for so long. We were stoked. On paper, the solution checked all the boxes. But yes, there is a but. There was a problem. Despite all the planning, all the excitement, and even that KubeCon talk where we touted how great it was, we had to roll it back. The issue wasn't the security logic itself; it was the infrastructure that we tried to build it on. Yelp's service mesh was, let's call

it, atypical. We don't have sidecars and we don't have ingress proxies, and those are the two main enforcement points that most service mesh security models rely on for authentication and authorization. So to make our solution work, we had to bolt on dedicated ingress proxies, and we had to somehow figure out how to resolve identities without the presence of egress sidecars. And while what we put together technically worked, we added a bunch of complexity, and the complexity from these custom components ended up introducing instability into the service mesh. And when reliability is on the line, these security enhancements had to go. This was incredibly frustrating for all of us. We had

a solid design and fantastic execution, but we were building it on a shaky foundation. We were so close, and yet so far. So now we're back to where we started, with no security features in the service mesh. So of course we went back to the drawing board and started exploring other options. At this point, both the infrastructure and security teams had a desire to replace the existing service mesh with something more modern, robust, and future-proof. And in each case we tried to bake in our security requirements from the start. We looked at AWS App Mesh, which is AWS's managed Envoy solution, but its feature set was too limited and its roadmap didn't align with ours, and in hindsight

it was a great call not to move forward with that, as it was recently announced that AWS is actually discontinuing this product offering. Next we evaluated Istio, which had all the features that we wanted, but as you all know, it was incredibly complex and it was difficult to integrate with our systems. Then we looked at Cilium. Cilium is really cool tech and looked really promising, but it only supports layer 4 authorization, at least the way that we were going to have it set up, and we really wanted layer 7 authorization policies. Honestly, at that point, we were even willing to compromise on that. We needed something. But unfortunately, just like Istio, Cilium came with a high operational cost and we

didn't move forward with that either. Every one of these efforts took real time, effort, and emotional pain, with months of prototyping, testing, and integration work, and none of them ever made it to production. Eventually, we had to ask ourselves, is this dependency on the service mesh even the right strategy? Even if we picked a new one tomorrow, aren't we going to have to roll it out across hundreds of services, two orchestration platforms, and dozens and dozens of teams? That's going to be a multi-year effort. So after all that, those months of evaluations, dead ends, and the looming reality of a multi-year migration, we took a step back and asked

a different, possibly naive question. What if we stopped trying to solve this problem at the infrastructure layer? And what if instead we shifted our focus to the application layer? I know if you're like me, this feels like a step backwards. Application layer security is often seen as harder to scale, harder to enforce, and more burdensome for developers. But we also realized that perfection was starting to become the enemy of the good. We didn't need a flawless solution. We needed one that we could actually deploy. After all, the developers were still asking us how to secure their services, and we didn't have a paved path to offer them. And this idea actually had some real advantages. It would be decoupled

from infrastructure changes, safe to deploy and roll back in a controlled fashion, and it would give us full control over the authentication and authorization logic. Of course, there would be trade-offs. It would require code changes and redeployments. It wouldn't be language agnostic, and we had to be mindful of performance. But given our constraints and the track record with these infrastructure-based attempts, this pivot felt like the most pragmatic path forward, and so we decided to move forward with it. But we had to be really clear about our requirements. By evaluating what was truly important for our infrastructure, we landed on these four core security requirements. Authentication: every request needs to have a strong, verifiable identity. That means no

shared API keys, no long-lived tokens. We need short-lived, cryptographically secure credentials that can't be spoofed or tampered with. Authorization: we didn't want every service to reinvent the access control wheel. We wanted a centralized policy enforcement system that could enforce fine-grained rules, things like read versus write or role-based restrictions, in a consistent way. On the observability front, we need comprehensive logs and telemetry to understand how the system is behaving, detect anomalies, and support incident response. And finally, usability is critical. If security ends up being hard for people to adopt, it simply won't be adopted. Integration had to be simple, intuitive, and require as little effort as possible from service owners.

Now, the keen eyes in the room may notice that encryption and confidentiality are explicitly not on our requirements list. That's intentional, because traffic between services already runs on hosts with strict least-privilege access controls, and the risk of someone sniffing traffic on the wire was outside of our primary threat model and not the problem that we were trying to solve. So these requirements gave us a clear definition of what success looked like, and they helped us stay focused on building something that was both secure and practical. Now, in addition to our security requirements, we also had a number of operational requirements. The system must have

minimal performance overhead. We can't afford to slow down every request just to add these security features. Latency had to stay low, especially for high-throughput services and call graphs with large fan-outs. Second, high availability. The security system had to be resilient: it needed to stay up, scale with demand, and recover gracefully from failures. And if and when those failures inevitably occur, because they will, they must not impact the service mesh. We'd already seen what happens when security destabilizes the mesh, and we weren't going to repeat that mistake. The system had to fail gracefully without taking down the infrastructure it was meant to protect. Finally, safe deployment and

rollback capabilities. We needed to be able to roll this out incrementally, safely, test it in production, and back it out quickly if something goes wrong. With those requirements in mind, here's the architecture that we came up with.

I know there's a lot going on here. Don't worry, I'm not going to make you stare at it for 20 minutes. I'm going to break it down piece by piece, starting with the main components, and then we'll zoom into how it all fits together. Let's start with authentication. This is the part of the system responsible for verifying the identity of the users in our environment, and it's the first step in making sure that only trusted entities can access our services. Next is authorization. After an authenticated client sends a request, the receiving service extracts the request details and sends them to the Open Policy Agent, which evaluates them against a

centralized set of access control policies. OPA then returns an allow or deny decision, determining whether the request should proceed. And finally, we have observability. All authentication events and authorization decisions are logged, and we collect metrics and traces to monitor performance and detect anomalies. So let's dig deeper into authentication first. We chose to use JSON Web Tokens, aka JWTs, aka "jots" (I might use any one of those interchangeably), to identify the actors in our environment. We considered using client certificates. They're a common choice for service-to-service authentication, but they come with significant drawbacks. Implementing TLS at the application layer would have interfered with our observability tooling, as tracing and metrics collection relies on

the ability to inspect plaintext traffic. And issuing, rotating, and revoking client certificates across hundreds of services, as well as operating and securing the certificate authorities themselves, would have introduced significant operational complexity that we just weren't prepared to deal with at the time. So JWTs give us a simpler, more flexible alternative while meeting all of our stated requirements. They're short-lived, they're cryptographically signed, and they can't be spoofed or tampered with. And they let us unify identity across all the different types of actors in our environment. For humans, we use Okta. For Kubernetes pods, we use the API server and service accounts. And for arbitrary workloads, like things that run on EC2 instances or Lambda functions, we

use HashiCorp Vault, which can issue a JWT based on the workload's IAM role. This gives us a consistent, verifiable identity across all types of users in our system. When you look at this, you might think, "Okay, that's cool, but doesn't this add friction for the humans and service owners who now need to authenticate themselves?" I'm glad you asked. Actually, I guess I asked. We put a lot of effort into making this as seamless as possible for users. We know that humans generally use curl to interact with their services, so we built a wrapper called curl with JWT that prompts the user for their Okta credentials, fetches a signed JWT, and automatically adds it to

the request. To make things even more convenient, we cache these credentials locally, so you're only prompted at most once per hour, or whatever timeout is configured for your Okta or other identity provider sessions. We also have this pretty neat tooling that can borrow your Okta session cookie to obtain a JWT, so in many cases you might only need to authenticate once per day. And before you go thinking about stealing one of those locally cached JWTs, think again. We thought about that. We monitor file access and trigger an alert if one of those token cache files is accessed inappropriately. And here's an example of me triggering one of those alerts

trying to steal somebody else's token, because I'm totally malicious. Anyway, back to usability. For the Kubernetes services themselves, most of them already use client libraries to talk to other services, and those libraries are automatically generated from OpenAPI specs. So we were able to hook into that code generation process and update the libraries to automatically read the service account tokens from disk and tack them onto the outgoing requests. So in most cases, no code changes or redeployments were needed for services, as long as they were using these client libs and were on the more recent versions that supported this functionality. Now let's talk about authorization. The Open Policy Agent is the core of our authorization solution.
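As a recap of the client side before looking at how the server uses OPA, the behavior described for the generated client libraries (read the service account token from disk, attach it to the outgoing request) could be sketched roughly as follows. This is an illustration under assumptions, not Yelp's code: the helper name `with_identity`, the use of an `Authorization: Bearer` header, and the choice to send the request without identity when no token file exists are all hypothetical. The token path is the standard location where Kubernetes mounts a pod's service account token.

```python
from pathlib import Path
from typing import Dict, Optional

# Standard mount point for a pod's service account token in Kubernetes.
DEFAULT_TOKEN_PATH = Path("/var/run/secrets/kubernetes.io/serviceaccount/token")


def with_identity(headers: Optional[Dict[str, str]] = None,
                  token_path: Path = DEFAULT_TOKEN_PATH) -> Dict[str, str]:
    """Return a copy of `headers` with the service account JWT attached.

    Hypothetical helper: a generated client would call this just before
    sending each request, so service owners never handle tokens themselves.
    """
    merged = dict(headers or {})
    try:
        # Read from disk rather than holding the token forever, since the
        # file contents can be refreshed while the pod is running.
        token = token_path.read_text(encoding="utf-8").strip()
    except OSError:
        # Assumption: with no token available, send the request without
        # identity and let the receiving service decide what to do.
        return merged
    if token:
        merged["Authorization"] = f"Bearer {token}"
    return merged
```

A real client library would probably cache the token in memory for a short time instead of reading the file on every request; the sketch skips that to stay small.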

When a request reaches a service, we need to decide whether it should be allowed or not. OPA does the majority of the heavy lifting of that decision-making by evaluating the request against a centralized set of access control policies and returning the allow or deny decision. We chose OPA because we'd used it elsewhere in our infrastructure and had success with it, and it gave us the flexibility to define fine-grained access control policies centrally without embedding logic inside of every service. We run OPA as a standalone service in ECS Fargate, which keeps it decoupled from Kubernetes and the service mesh, and it removes the need for us to manage capacity or scheduling because ECS Fargate takes care of a lot

of that for us. So, how do these services connect to OPA? Glad you asked. Most of our services use common libraries to serve HTTP traffic, so we added the necessary logic directly into those shared libraries as middleware. This middleware extracts the request details, like the JWT, the path, and other request metadata, sends them off to OPA for evaluation, and then handles the allow/deny response accordingly. Opting into this functionality is as simple as setting a config flag, again, if you are using the latest version of this middleware library. The middleware config options also support this concept of dry run mode, and that lets a service owner opt into the authorization

workflow without the risk of breaking any existing clients. The decision logs still flow, but all requests are allowed. This lets us know what would have happened without actually enforcing the decision. These dry run logs can then be used by those service owners to produce the authorization policies based on the actual traffic patterns of the requests hitting their service. Now let's talk about the policies themselves. OPA's policy language is something called Rego. Rego is really powerful, but it's not always the most approachable. So, we built a YAML abstraction layer to make it easier for service owners to define access rules using well-known HTTP concepts they're familiar with. That YAML is processed and made available as input

data to the complex Rego policy. This lets us separate policy logic from policy configuration, and it makes it much easier for teams to define path-based HTTP rules without needing to write or understand Rego themselves. All policy definitions live in GitHub, and they're version controlled and branch protected just like any other application code that people are used to seeing. When a policy is updated, it triggers our custom tool called the OPA policy manager. The policy manager retrieves the latest policy definitions, rules, group memberships, and ownership data, and then preprocesses, validates, and uploads a bundle, the final policy output artifact, to S3. The OPA instances then periodically pull the latest bundle and use that to enforce

their authorization decisions. So now let's walk through an example of what one of those authorization policies looks like in YAML. Here's a rule for the security happy hour service. This is a test service that we own, and this rule defines who is allowed to call the /hello endpoint. You can see that one of these rule files lets you grant access to a few different types of identities. It could be the owning team, an AD group, other services, or IAM roles. This format is intentionally simple and readable. And while we could support much more complex policy logic, we found that just this basic combination of path, method, and who can access them, this

level of abstraction works really well for the vast majority of use cases. It's another example of choosing pragmatism over perfection. So now let's see what happens when this policy is actually enforced. If I try to call the /hello endpoint using plain old curl, the request is denied. That's because there's no authentication header and the service doesn't allow unauthenticated requests. Now, with one very simple change from curl to curl with JWT, a signed JWT is automatically obtained using my Okta session and added to the request. All I changed was curl to curl with JWT, and this time the request is allowed, as indicated by the HTTP 200 status and the response from the server saying

hello. This is a simple example, but it shows how the authentication and authorization work together, driven by the policy that we just looked at. This all sounds great, but what about the performance impact? We're now adding authentication and authorization checks to every single request, but we know that this can't come at the cost of latency or reliability. So we focused heavily on performance optimizations, starting with a multi-tiered caching strategy. In the client libraries, we cache these JWTs locally to avoid reauthenticating on every request. In both the server middleware and the OPA layer, we cache the authorization results for identical requests for a short window of time, because these authorization policies don't change very

often. We also put a lot of effort into optimizing our Rego policies. One of the interesting outcomes of that was that we prefetch and bundle the public keys from our identity providers directly into the policy bundle, so that when OPA wants to verify one of these JWTs, it can do so offline, without having to make any network calls to the identity provider in the critical path. We also benchmarked and tuned our Rego queries to keep evaluation times as close to a constant-time lookup as possible. And the result: less than 5 milliseconds of added latency for OPA requests at the 95th percentile. We're super stoked about that. And a big part of that is because

99% of the authorization decisions are served from one of those many caches. Most importantly, service owners reported no noticeable impact on their response times, and that was critical for adoption. "But Daniel, how do you know all of these metrics and statistics?" That's where observability comes into play. Observability is a critical part of making the system reliable and trustworthy, not just for the security team, but for the service owners and infrastructure teams too. We instrumented the system at multiple levels. We have logging, we have tracing, we have metrics, and we have alerting. This level of visibility gives us confidence to operate the system at scale, and

the infrastructure teams were able to trust that our system was working well, and it gave service owners the tools to understand how their services are behaving when they're opted into this workflow. So let's look at some of the logs that we have in Splunk. Remember those curl requests I showed you earlier? Here are the corresponding OPA decision logs for those requests. Every authorization decision, whether it's allowed or denied, is logged with the relevant metadata: who made the request, what they were trying to access, and why the decision was made. These logs are sent to Splunk, where we can search, filter, and alert on them. This gives us full visibility into how policies are being

enforced and how they're being used across the system. I'm going to show you another decision log example, since the first one didn't capture the case where an authenticated user is unauthorized. Here's another decision log from me being sketchy again and trying to access a production service that I'm not authorized to call. In this case, you can see the logs give a clear indication of why the request was not authorized: because I am not in the allowed group. And here's a sneak peek of one of our internal runbooks, which lists out all the possible OPA authorization results, what they mean, and instructions for how to address them when they're encountered. This is useful for when a service opts

into this functionality and all of their clients are getting failures: they can go and see what the results mean and instructions on how to deal with them. So now let's quickly peek at some of the dashboards we use to monitor the system in real time. We track key performance metrics, things like request volumes and the distribution of authorization decisions. This helps us catch misconfigurations or unexpected access patterns early. We also monitor the client and server identities involved in these transactions and the OPA query latency, and we keep a close eye on the health metrics for the OPA service itself, things like CPU, memory usage

and of course latency. These dashboards are used by the security team, infrastructure teams, and service owners as a shared source of truth, and they've played a key role in building trust and confidence in the system's reliability. Speaking of reliability, let's talk about the operational excellence of the system. Earlier, we talked about the operational requirements we needed the system to meet, things like high availability, low latency, and safe rollout. Throughout this talk, you've seen how many of these goals were supported by the design of the individual components, whether that's caching in the middleware or how we isolated OPA from the service mesh. This slide highlights many of the safeguards we put in place to make sure

we actually met those requirements. I've already talked about most of this on other slides, so I'll probably skip most of it, but I do want to focus on how we tackled safe deployments and rollbacks, because this is kind of the most important part. To facilitate safe deploys and rollbacks, we monitor and alert on spikes in denied requests. This helps us catch bad policy pushes early. We have that dry run mode that I was telling you about, which was critical for adoption: it lets services simulate enforcement, and that gives them confidence to turn on this functionality without being scared about breaking existing workflows. We also implemented a fail open

mechanism. What that means is that if OPA for some reason becomes unreachable or too slow to respond, the middleware allows those requests to proceed. We hope that this never happens, but if it does, there are very loud alarms and we get a ton of eyes on it so that we can understand what happened. And as a final last resort, we have an emergency disable switch that lets us globally turn off enforcement if something goes wrong. Again, we monitor the heck out of that. To date, we have not had to use either of those two dramatic options, but having them in place was critical to convince ourselves and our

stakeholders that we could proceed with deployment of the system. So now let's talk about the fun part. Let's be honest, no solution is perfect. While we are very happy with what we built here at the application layer, there is one very major downside that to date we can't fully address, and I have to acknowledge it. And that's this dependency on library updates. When a service opts into authentication and authorization, every single one of its clients needs to be on a compatible version of the client libraries. Or in other words, every client needs to send its identity in its requests. And that's manageable for small services with a handful of client

services, but for popular ones with dozens or even hundreds of clients, it becomes a real coordination challenge. And it's not just about that initial adoption when you first enable it for a service. Anytime we fix a bug or add a new feature to these client libraries or the middleware, or change the contract with the OPA response, we're going to have to bump that package version and then convince all of the service owners to bump that package version, upgrade it, and redeploy their services. And again, these are going to be in the hundreds. So this is an annoying but acceptable trade-off. We knew that by solving the problem at the application layer, we

would end up with a problem like this. And that's just kind of how it is. And of course, we like mitigating risks, so we have a number of mitigations in place for when this happens. We have observability in place to track the outstanding version upgrades, so at least we know where we stand in the migration process when it happens. We try our best to make all the code changes forward-looking and maintain backwards compatibility, and we push whatever we can to configuration files so that we can avoid this scenario of having to redeploy the services. At Yelp, we have this internal tooling that we call Yokio

Drift. And that's a tool that lets us automate changes across all the repos in our GitHub environment. And that's really neat and it helps. But we kind of still end up with the same problem. We can automate the changing of the dependencies, requirements.txt or whatever your package manager uses, but you can't force the service owners to go and take those changes, build them, deploy them, click the button in Jenkins or whatever their release workflow is. So, you know, you can lead a horse to water, but you can't make it drink, I guess. Yeah. I mean, the only other thing we can do really is just provide clear

documentation and support and let teams know why it's so important that they just click the button and deploy their update. So yeah, this is the hardest part and the biggest downside of this decision. And it's a reminder that every architectural decision comes with trade-offs. That said, I would say the results have been worth it. So let's talk about the results. I've talked about a lot of this, but let's summarize. First, we have significantly strengthened our security posture by doing this project. We now have strong authentication and fine-grained authorization deployed across a large portion of our key services, and that's reduced the risk of unauthorized access

and helped us meet our internal and external security goals. Second, we've seen widespread adoption. Service owners have opted in at a rate that we're happy with, and the feedback has been overwhelmingly positive, especially around the ease of integration and the transparency. Third, we have achieved operational excellence thanks to all the safeguards we put in place, like the caching, the fail-open behavior, the dry run, and the emergency big red switch. We've seen minimal performance overhead to date and zero operational incidents related to the system so far, knock on wood. Finally, we've built a level of observability that gives both security teams and service owners real-time insight into how the system is

performing and how access is being controlled. I will repeat: this was not the perfect solution, but it was the one that was right for our environment, and it's actually been deployed and it's actually working. So, I would like to wrap up with the key takeaways that I hope you get from this talk. Let's just go down the list. Persistence through failure is essential. We didn't get this right on the first try. We didn't get this right on the second try or the third or the fourth. But each time we failed, it taught us something that helped shape the eventual solution. And when the conventional approaches repeatedly failed, we had to get creative and explore solutions that

weren't initially obvious and think outside the box. Next, pragmatism often beats perfection. We spent years trying to build the theoretically ideal security by layering it into our service mesh. But what actually worked was this silly idea to just shift focus to the application layer. It was something that felt unconventional and wrong at first, but it was what let us deploy incrementally and make real progress without taking a dependency on a monolithic infrastructure migration. The best security solution isn't the perfect one on paper. It's the one that actually gets deployed and used. Infrastructure constraints fundamentally shape security architecture. Rather than fighting against our unique environment, we designed a solution that worked with it. Actually, I guess it kind of worked

around it, didn't it? But that is to say that there is no prescription. What worked for us, or what worked in that blog post you read, may not work for you. So you've got to do your own homework. Next, design for resilience, not just security. Features like the dry-run mode, the fail-open behavior, and the observability weren't just nice-to-haves. They were an essential part of making this system safe to operate and easy to deploy. Resilience is what made this project a reality. And we had some really awesome software engineering fundamentals baked into this project. And finally, security adoption requires minimal friction. We offered a paved

path by building abstractions into the middleware and client libraries, enabling opt-in through configuration, and making policies simple for teams to understand and manage. These lessons weren't just technical, they were cultural, and they're what made this system not only secure, but successful. And as a quick fun aside, coincidentally or maybe not, these takeaways align remarkably well with Yelp's core values, which have guided our engineering culture from the company's inception. And I'll just go down the list: be tenacious, authenticity, be unboring, protect the source, and play well with others. I just thought that was kind of fun, and it just kind of happened by coincidence. So let me just quickly recap the journey that we've been on, since I think I

have a minute or so. We started this journey back in 2015 with our service mesh on SmartStack and Mesos. There was no built-in security. In 2019 we made our first serious attempt. We added security at the infrastructure level with Envoy and OPA. It looked great, but the infrastructure wasn't ready and we had to roll it back. Between 2020 and 2023, we explored multiple service mesh replacements, and none of them fit our needs or our environment. And then the turning point came in 2024, where we just shifted our focus, shifted our thinking, and moved security to the application layer. It wasn't the perfect solution, but it was the one that worked. And yes, it has been quite

the roller coaster and quite the journey. It took time and it took a lot of iteration, but we finally got there. And that's all I have to say. So, thank you all so much for being here and sticking with me through this journey. I hope this talk gave you a real look at what it takes to build secure systems in the real world, and not just the idealized version. I'd love to hear your stories in Q&A or later this weekend. Yeah. Thanks. We don't have any questions in Slido, but if anyone has a question now, if you want to step down. Yes. Thank you so much for this talk. Um,

we're dealing with similar things, so I really appreciate hearing about your journey. I had two questions, if you'll allow me. Unfortunately I missed part of the talk, so sorry if you already said this, but it sounds like you just kind of dropped the networking aspect of service mesh. I just wanted to hear why that was. And then the other thing was in terms of the migration. You mentioned that all the clients had to adopt the library, which makes sense, but if some do have the latest and some don't, did you have backwards compatibility? How did you

address that issue? Thanks. Yeah, I mean, I talked a little bit about the backwards compatibility. We try to be forward-looking with those client libraries, so we minimize the need for those version bumps. But the problem is that the first time you enable the security features for a service, all of the services that talk to it need to send their credentials. So I don't have a solution to the cat-herding problem of going and tracking down all those service owners and asking them nicely to, you know, bump their package version and deploy it. On the networking question, I don't know what you mean

by "dropped the networking requirement." We didn't have a requirement for encryption. Is that what you're talking about?
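As an editorial aside on the migration answer above, here is a minimal Python sketch of how dry-run mode, fail-open behavior, and the emergency disable switch might combine in a middleware, so that clients that do not yet send an identity are observed rather than rejected. It is not Yelp's code: `Mode`, `Decision`, `authorize`, and `query_policy` (a stand-in for the call to OPA) are hypothetical names, and treating a missing identity as a denial is an assumption based on the statement that every client must send its identity.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Mode(Enum):
    DISABLED = "disabled"  # emergency switch is on, or the service has not opted in
    DRY_RUN = "dry_run"    # evaluate and record the decision, but never block
    ENFORCE = "enforce"    # block requests that the policy denies


@dataclass
class Decision:
    allow: bool   # whether the request is allowed to proceed
    reason: str   # label that metrics and alerts could be keyed on


def authorize(
    mode: Mode,
    client_identity: Optional[str],
    query_policy: Callable[[str], bool],
) -> Decision:
    """Decide whether a request proceeds.

    query_policy is a hypothetical stand-in for the policy engine call; it
    may raise TimeoutError or ConnectionError when the engine is slow or down.
    """
    if mode is Mode.DISABLED:
        return Decision(True, "enforcement_disabled")

    if client_identity is None:
        # Assumption: a client on an old library version sends no identity.
        allowed, reason = False, "missing_identity"
    else:
        try:
            allowed = query_policy(client_identity)
        except (TimeoutError, ConnectionError):
            # Fail open: let the request through and rely on loud alerting.
            return Decision(True, "fail_open")
        reason = "policy_allow" if allowed else "policy_deny"

    if not allowed and mode is Mode.DRY_RUN:
        # Dry run: report what enforcement would have done without blocking.
        return Decision(True, "dry_run_would_deny:" + reason)
    return Decision(allowed, reason)
```

In a sketch like this, counting `dry_run_would_deny:missing_identity` decisions per client would show a service owner which callers still need the library upgrade before enforcement is switched on.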

Yeah, I think that was at the beginning. That's inherently not been a problem for us in our service mesh. The service discovery has always been there. Okay. I have a question here. Hey, yeah, great presentation. Two questions. One, I think you mentioned earlier no sidecars. That was part of your decision-making process. So, I just want to understand why no sidecars. And the second question is that you say that this has increased the adoption, but you're also expecting the app owners to embed these libraries, right? Wouldn't a sidecar have solved this problem much more easily? Yeah, the sidecar. I think I can answer both in one fell

swoop. Back in 2015, when we first adopted this service mesh framework using Airbnb's SmartStack, sidecars weren't the standard thing. So, our infrastructure just was not built with sidecars in mind. And trying to take this infrastructure that's evolved over many, many years and make sidecars work, we haven't been able to do that. And that's not so much on the security side. It's kind of more on the team that owns the infrastructure and the service mesh itself. Okay. One more question? So my question is, did you consider solving it at more of an infrastructure level? I'm not sure if you are all in on AWS, like VPC Lattice or other

solutions like workload identity, like SPIRE or SPIFFE. Did you consider any of this kind of tech stack or solutions? Yes. We did not consider AWS Lattice. We have looked at SPIRE and SPIFFE in the past. I don't think I'll be able to get into the details of the trade-offs and why we couldn't do that in the next 10 seconds. Okay. Well, thanks everyone. Thanks Daniel. Thank