Back to the SOCless Future

Show transcript [en]

welcome everyone we're almost at the end this is it's been a long two days with lots of good talks and we got one more here before the closing remarks so I'm gonna hand it over and let the speaker begin thanks John thank you everybody for coming to my talk I know it's almost closing time so I'll try to make it quick well I want to say to thank yous actually first of all to everybody who is in this room today listening to this I appreciate you coming in giving me some of your time and I also want to say thank you to everybody in the security community who has thought about worked on and tried to implement

our security automation at some point in time in their career much of what I've I'm talking about today is based off of what I've learned from the community and so this is sort of a way to try and get back um you may have noticed that the title of my talk changed that's because I refocused it but it's still pretty much we're going to be talking about automation here today and our civilization framework that we've built artists as a team Who am I my name is Bonnie balligan I am a senior security engineer at olio I've been there for three years thank you that is my team showing support team Talia I've been there for

three years and I've been focused predominantly on the challenge of automating security operations response plans I am a software engineer at heart and that's kind of why I'm here today to talk about some of the stuff that we've been working on what exactly am I on about on our team we've been creating a service security automation framework I'm going to talk about why we built it sort of our story what exactly it is how we built it and I'm gonna leave you with a call to action I will spoil the call to action a little ahead of time just to set expectations right we don't have a public github today but I still have a way for each

and every one each everybody each and every person in the audience to engage with me after this because our goal is to open-source war we've been working on if you have questions during the course of my talk slider you can push the questions there but I will also try and be I will hang around afterwards and you can ask me more in-depth questions cool so let's really begin secure why did we create a server security automation framework whoa because of this like one day we woke up and we realized that security operations had become way too hard and the reason it became hard for us was because at Twilio we operated overwhelming scale and we do so with

really small teams as the security operations team our job is to detect and respond to threats in Toyo's environment we do this through numerous procedures like is Incident Response for mobility management threat detection compliance auditing all of the good stuff that we've heard different talks about during the course of this conference the environment that we operating and operate in is extremely large and extremely diverse and it continues to grow and our engineering team is our security operations team it's pretty small so very early on we learned kind of the hard way that the path to success for us is to focus on the right things for us we felt that the right thing was to help our organization figure out what

it needs to do right help it figure out the processes procedures relationships and technologies that it needs to have in place to uplevel its security game unfortunately for us we found ourselves in a situation where we were spending too much time executing procedures manually that we couldn't invest as much time as we wanted in up levelling our security program our ideal state of things was to spend a lot of time creating procedures and very little time executing them to get there what we felt we needed was some sort of magic box that would take the procedures we create executed providers feedback on the execution of those procedures or escalate those executions to us as necessary and do all of this while being

low effort low effort to operate in low effort to develop so we wanted this magic box we realized that the magic box could have been full of anything cats dogs humans machines we didn't try but we suspect that a cats and dogs wouldn't work very well but we realized and we couldn't quite offer all of our procedures to humans directly because we didn't feel that one we didn't feel was a frugal solution to buy a sock and two we just felt that there could possibly be a better way some sort of synergy between humans and automations so we figured that automation might be the route to go with for our magic box but when we looked out

there we found very few perfect implementations of the magic box that we wanted we felt good implementations we found some good magic and we found some good boxes but we didn't quite find the perfect amount of box we saw great articles they told us that what you are looking for is a security orchestration and automation our framework but many of those articles didn't ship with a concrete implementation of a saw that's what they call it when we did see conquered implementations in the form of security products they were either not good or they were actually good but it came with a high cost and some orchestration requirements that didn't quite fit into our environment or that

we as a small team didn't want to adopt we did see some community are examples that got really close to being perfect there are only challenged there was that they were either to use case specific or they were examples that showed us how to get started but not how to go all the way through in solving our use cases so we thought hmmm maybe we should take a swing here trying to build out this perfect magic box for ourselves the first thing that we had to do was define what it was going to do well it was going to be sort of like a security automation and orchestration framework something that would allow us to

automate any of the security procedures that we have with minimal development an orchestration effort that was the additional constraint that we gave ourselves it has to take very little development time and orchestration time how is it going to do it well by not being perfect by not being magic in by not being a box right we wanted a perfect magic box that was actually not perfect not magic and not a box myth on some level might seem like doesn't make sense but what I mean by not perfect was that we weren't looking for a canned solution that claimed to solve our problem in what we wanted was a framework that will allow us very quickly create the use

cases and automate the use cases that we wanted by not magic we wanted to stay with simple technologies in simple components build up built on top of solid design principles that software engineers have been using for a long time to build their frameworks and by not a box we didn't want something that lived on a server that had proprietary components that we had to figure out how to scale or deal with when we run into situations so to frame the rest of the conversation I want to show a little bit of a demo of a use case that we've automated internally oh and to give a little bit of background here we have in our environment we have socks boxes

socks compliant rolls whenever an engineer SS agents into one of those boxes they are required to provide a tracking ticket that says hey this is why I'm SS Aging in this is the reason that I'm trying to change something on the box as a security operations team our job is to take that ticket validate that it actually exists and in the event that it doesn't exist follow up with the engineer right not entirely the most complex tasks are and it was something that we felt would be better served if we could automate it so this is a video showing just if I can find my mouse here what that automation looks like so this is the engineers view the SSH into a box

put their password they get prompted to put in a ticket number they put in a ticket number it lets them go into the box on the back end our automations are validating that ticket and shortly they get a message that says hey we noticed you logged into a box you provided a ticket however that ticket that you provided isn't quite valid maybe they put in a typo can you give me another ticket do you have a Jared ticket you have an incident tracking ticket the engineer says hey we have you got your ticket a pop-up comes up it takes the ticket goes back on the back end and validates that and eventually after validating it sends

them another message and says hey okay we validated thank you for going through this process with us so that's a high level of one type of procedure that we wanted to automate we have very many different types of procedures like a phishing email dispatch or just following up on on suspicious silence that we see in our environment so this is the circles view of things like the security operations engineer when our automations are done cuts enjoy etiquette and provide all of the information that they collected from the user in that your etiquette right so this is an example of something that we built out on top of our framework it's the kind of things that we wanted to

build and we felt that there was room for this in the community as well so let's let's go a little bit deep inside this framework and talk about the technology behind it right like how does this thing actually work show of hands how many people in the room are familiar with AWS step functions okay that's a number of people um if I'll just take a little detour here and say if you were trying to automate security operations procedures and you're looking for a way to get started I think a DeBeers step functions is a good way to start Oh what we did however was take that technology at a very high level it's a workflow automation engine that

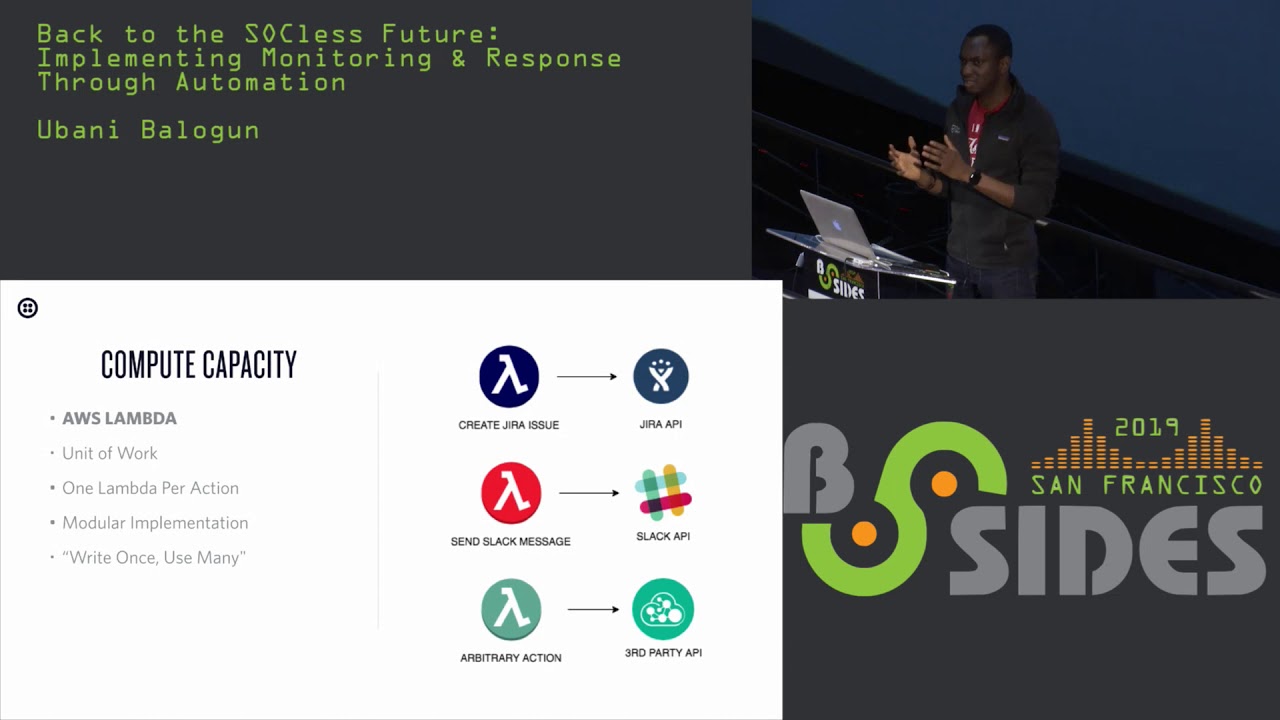

is hosted by AWS we took that technology which we saw as ideal for automating response plants and we surrounded it with the additional infrastructure and code libraries that we needed to create a full-fledged security orchestration and automation framework right so the infrastructure and the code that we added was focused on solving problems like executing executing actions taking in events processing events storing data long-term enabling human interactions in helping developers or create procedures so much more so let's go a little bit and talk about what is inside this box now we started to build it up for compute what we do is we write AWS Thunder functions so we take our procedures which we typically define in

words we split them out into different tasks and write one eight via slander per task Italy Istanbul function service our unit at work and when we design our functions we designed them to be modular so that we can write a function once and use it over and over again in multiple response plans this is how we cut down the automation time for the development time for automation tasks here are two sample lambda functions that we use for the sake of the conference I stripped out a majority of the error checking code but the rest of it is actually deployed into our environment and doing things for us right now the key things that I want you

to focus on here are one they're short lambda functions are pretty easy to write if you can write a script in Python you can write a lambda function and that was what we were going for and they are modular we don't hard-code any of the specifics of the request that they are trying to make to whatever API that they are interacting with and so because we don't hard-code any of that they're modular we can move them around between frameworks between sorry between procedures I'm gonna take a water break

go so we have these modular lambda functions modular lambda functions that we can put in different procedures and also share around to other people the next thing we do is that we orchestrate them together using a workflow engine this workflow engine is AWS step functions at a very high level what it does is takes a whole bunch of lambda functions and organizes them into a procedure the procedure can have linear components where things execute inline power components where they execute together or conditional components who are the the path of execution changes based off of some new data that is generated by a previous lambda function in the execution a Tobias step functions represents procedures as state machines

here is what a state machine looks like in the development phase it's literally just a Jason field like it's a jason object that has a description of what are the different states the state machine has what resources for the most part which are lambda functions is it using and what parameters are being passed to those lambda functions to get them to do their work right so with a Tobias lambda and with Olivia step functions we pretty much just these two components pretty bare-bones you already have a workable our security automation and orchestration framework but they're not enough there's some challenges that they don't solve by themselves out of the box one of them is data storage

so let's how cool the challenge of data storage what we use is AWS dynamodb we use DynamoDB for two reasons one to store the execution results of lambda functions and two to store data on the events that are coming into our system the first one is I think more fun to talk about because what we do there with storing the execution of lambda functions is that every time a lambda function executes within our system we automatically write the execution results to a dynamodb table so that all of its results become available to the subsequent lambda functions in this step function state machine EWS has its own database step functions has its own mechanism for passing data between

lambda functions we found it to me a little bit limited and we found that we couldn't in the future do analytics on top of the results if we wanted to so we took all of the state or execution context management outside of a table a step functions and we put it in dynamodb tables the next challenge for us so dynamodb was pretty much how we solved the problem of managing the the state or the execution context of a procedure as the procedure executed over time the next challenge to solve was getting information into the system for that we leverage AWS API gateway and Olivia's cloud watch events we use API gateway for real-time events to ingest real-time

events into the system and we use AWS cloud watch to invent to run scheduled events into our system we typically write one event per endpoint and at the event endpoints we a couple of things like processing the events taking them in deduplicating the events to ensure that we're not running the same response plan twice for something that we've already seen and we also route the events that comment the appropriate response plan that should be executed the other component that we really needed to build was the human interaction component the the portion that allows our system to go out there interact with the human virus something like a slot channel pull information back into the system and then change the

course of its decision based off of the information that it pulled in the way we pull this off is to leverage again EWS is step of feature in AWS type functions called an activity the activity essentially allows you to pause the execution of a playbook until some external job is is accomplished so we took that functionality of a double step functions and created around the additional architecture to support pulling to support interacting with an individual and pulling data back into AWS step functions a very high level the way it works is that we have one lambda function send out a message to the individual then trigger an activity which pauses the execution of the procedure when the person responds we

take the response in through another AWS API gateway endpoint and we put that information back into the procedure via the interview staff functions activity in this way we are able to reach often people get information pull it in and then change the course of our execution based off of that so all of this are is you know all of what we did was take a degree of star functions and build a lot of the missing components around it the thing that really glues all those missing components together are is the code library that we wrote for this called Atlas core it's implemented in Python it's what really makes this a framework for us right it's what takes a

double your step functions from being just a workflow engine to being the Atlas automation framework firms we ship the the library with every lambda function that we write and its goal is to solve some of the common of automating security or frameworks so specifically things like hey ensuring that the data that is generated as part of the execution of a response plan gets stored in the right place ensuring that lambda functions are processing data correctly ensuring that people have the right tools that they need to implement some of the most common use cases that they have with lambda functions like pulling data from an s3 bucket into a lambda function for processing so that is the job of the the code library it

just brings everything together and makes it easy for people to continue to build on top of this as they go forward all of this together is what we call our Atlas automation framework to recap events coming through the left side either through a Tobias AP a gateway endpoints or a cloud watch events triggered a cloud scheduled event events get stored in a dynamo DB table and then the endpoints trigger the execution of our procedures the procedures execute each and every step of the response plan reaching out to humans is necessary to gather more information and pull that in and all of the executions from the lambda functions get stored in dynamodb tables making them available during the

course of execution as well as after the course of execution so like in the future you can do analytics or dashboarding as necessary so I want to take that thermal procedure that I showed you early on and show you a little bit more of it of the behind the scenes of what actually goes on on the nab a step function side of things when a procedure is executing so on the Left you still have your slack your engineer at there slack there's slack up responding to a message on the right you have the aw step functions console showing step functions in action what you were looking here looking at here is the full-fledged response plan each box is either a

lambda function that is taking an action or an aw step functions mechanism for making choices based off of the data that is in the system cool just that was just zooming in to get you a better view and as you can see as the individual interacts step function progresses through the execution of the response plan it does a good job of giving feedback about what succeeded and what failed and because everything is modular and tied together it makes it easy for us when we're debugging response plans to figure out what was the component that was an issue so that is step functions in abysus step functions back-end just showing a little bit of how it works what it does ultimately

going forward we think any BSC step functions visuals are good there are some things that they are lacking we would love to continue to build on top of this and are the components that they may be missing so let me go through some of highlights again the whole thing is service which means that as a team we don't have to worry too much about orchestrating servers and ensuring that hey like this thing this the server that I bought from someone stays up we don't have to organize figure out how to orchestrate our servers into a high availability configuration it's also ballast also because it's selfless things auto-scale ridiculously well they don't auto scale infinitely but the auto

scale very well liked so for example lambda functions I think with AWS you can have a starting limit of a thousand concrete lambda functions running at the same time but you can follow the support ticket in AWS who bump that up for you with AWS step functions their state machines which is what we use to represent our procedures you can have a million of those running concurrently again that's the starting limit there will be there will happily bump that lemon up for you so that you can scale as needed or so that we can scale as needed so everything Auto scales you don't have to worry about auto scaling we found that in using this framework we've had a

rapid automation life cycle so for us it typically takes two weeks which is one sprint to take like an automation use case from idea to come to the concrete implementation the for the most part we found that the hardest part of it is actually figuring out what the right response plan should look like which is what we want to be doing as security operations engineers you don't want to be two balls down developing things but we want to know to figure out hey like what should the procedure look like right here and then very quickly implement that so we have a very like our development lifecycle in terms of automation has come down significantly all of the infrastructure

lives this code which makes it easy for us to deploy an audit as well and thanks to how any of your step functions works our procedures live us code as well everything is extensible it is all built on top of a WSS architecture and we try to build in a way that makes it easy for us to reuse components as well as extend components as our use cases grow so over time we have integrated like s/3 s/3 into our framework and we're continually evaluating and figuring out like hey what else do we need to get the job done we really like the fact that we're trying to keep all of this open because we know that hey even if it's not even

if we run into a situation where the framework isn't doing what we want it to do right now and here it isn't able to do what we wanted to do like it's extensible we can go build it ourselves we don't have to wait for somebody else's roadmap to figure out if we're going to get this feature or not and the last thing that I want to mention here is that we designed this thing for open sourcing from day one it was all it is always our intention we are committed to it it is our second priority which brings me to my last component which is the call to action we would like you to come build this thing with us we are on

our journey to open source the initial plan was to have this ready to open source today but hey life happens but what we are going to do is if you text that number you will get my contact details the way we will approach open sourcing this is by building a community we think the technology is great but when it comes to security automation and orchestration I think that the bigger thing is having a community of people who are all approaching the problem in a similar way so that we can all help each other figure out how to get this thing done better quicker and faster so we want to approach it by building out a community

of people starting by working closely with a handful of people who are excited about having an open-source alternative to secure automation so if you feel like you want to get in on the ground floor text that number or come talk to me afterwards and we will see how we can get you building on top of this thing as quickly as possible another way to come build is to join our team we are always hiring we are actually hiring a lot right now at oil I think the boots might be close but much of my team is here you can grab them talk to them see how you fit into our organization you can visit our jobs page as well and I'm gonna be

hanging around come talk to me yeah that's pretty much it that's my time I finished with a lot of time to spare I can't believe it [Applause] questions yes use cases we have about ten right now ten use cases that we've deployed into our production environment we are continuously building more on top of this we actually have one in the pipeline right now do the demo that I showed you is in the pipeline we're going to be deploying that this week and ten is a good start but we feel like hey we can do more so as much as possible we want to get we want to do more which is why we want the community to pitch in

here question over here yeah hi yeah sorry okay um great talk I really enjoyed it and I also work on a team that has a lot of resourcing problems so this really resonated with me I wanted to ask you about the slide that you showed that was the lambda function for necess hopefully this isn't too deep of a cut but I wanted to ask you did that code have nessus secret hard-coded into it let me see I don't believe so because we absolutely don't hard-code anything but there was a reference to a NASA secret so what we do that's a good that's a great question let's actually talk about how we handle secret management in our

framework what we do is that we use another what we do is that we use a another yeah no secret what we do is that we use an a degree a service called a secret manager SSM that's what it's called so in our framework we store all of our credentials in that service and when we are deploying our lambda functions we have it pull those credentials and deploy them as environment variables and what you're looking at there is a reference to an environment variable in the lambda function that has the credentials whitelisting IP is further necess source IPS like their engines yes so white listing the Nessus is or can you say that again please I'm not super

familiar with necess because we use a different tool but when they're scanning your server say how are you handling that they're able to scan and send that traffic yeah so the way meses does that so on we do for the most part we do external Scott's right so we scan our parameter and when we want to do internal scans Nessus allows you to deploy something in your environment that does a scan so we threw a like we set up our security groups to allow the necess internal scanner to scan our internal resources as we need it to so for external scanning we don't need to whitelist and for internal scanning we do need to whitelist

we deploy in essence box and then configure our security groups to allow it access to the hosts in our environment that we want to scan we do that through automation so we've active you in another set of automations on our framework to handle the deployment of our nasa scanners triggering our scanning and tearing down those hosts so we don't have to worry hello this is really cool I'm definitely a fan and I'm definitely going to text that number as well I wanted to ask you about the dev environment so how do you set up dev environment for something like this what do you use beautiful question Oh so the way we have all of I

think this goes back to how we orchestrate a little bit how many people are familiar with the server list framework yes cool for those of you who want familiar with the service framework it is sort of in the context of AWS you can think of it as a wrapper around CloudFormation it allows you to very quickly deploy lambda functions for service applications so we orchestrate all of this framework is orchestrated using the service framework and what we do right now for a development environment is that we deploy to one region of AWS for development and another region for our production then answer your question yes that's how we handle it the trace of step functions is pretty brilliant

reserve anything suboptimal that you ran into with step functions yeah yes yes in many ways much of the library that we built around step functions was to tackle the limitations of AWS at functions right one of the limitations for example is that the AWS is like step functions default execution memory for the lack of a better term is limited to about 32,000 characters that's not that we founded that wasn't enough when automating a response plan where you have to keep all of the state from previous lambda functions to satisfy like lambda function down the line so we broke all of that out all of that state management out dynamodb which I think has about four gigs per record so they

significantly expanding the amount of memory we have to store data span over execution that was one of them another one of them was that initially when step functions launched it didn't have a good way to allow you to create a modular lambda function because it didn't have a mechanisms and dynamically passed parameters to a lambda function so we wrote around that functionality with the help of our libraries um but in I think last year they did launch some support for parameters it's something that we're going to look at and see if we can now adopt those like those nice features into our framework or and see how we can benefit from it so there were a couple

of limitations and stuff functions that we saw but overall we felt that the core was great and it has proven to us that it is great and so we've invested time and building around it okay with that we are at time so I want to thank you all for being here we have a closing remarks coming up I don't know if they're in this room but very much I want to thank you for that presentation is very good and very interesting and I wish I can get some of my people automate more so everybody thank you very much for coming there will be some closing remarks and [Applause]