LLMs at the Core: From Attention to Action in Scaling Security Teams

Show transcript [en]

it's my pleasure to introduce fotus and Paul of open AI to you guys where they'll be speaking to us about uh llms at the core take it away guys thank you so um so in today's world uh security teams are so stretched th that if they were a yoga POS they'd be called The Eternal scream I spent at least an hour brainstorming with ch GB to write this line and it's still a little bit underwhelming uh But Here Comes AI like a new caffeine pill so that the security team can focus on the real threats uh but you we are here to make sure that AI doesn't just start flagging every Mouse click as an

existential crisis so a few things uh about who we are uh I'm foris I'm a security engineer and the red teamer uh some highlights of my work include uh publishing a book on practical iot hiking paper on exploiting TCP published that frack and writing and crack the network authentication cracking tool of the anal project uh I was also the first security engineer at open AI starting back when we had just released gpt3 uh in 2020 so I was there before the chbd hype you were also around the time of codex our first code generation model right I was there Paul I was there 3,000 years ago I was there when it was written it's okay photus I

understand a year here feels like 10 anywhere else with so much happening my background is in building and securing clouds both private and public I'm also on the security team for several open sour Source projects that everyone in the room uses regularly and still I've never seen anything like the last year of growth here working on product security at open AI this talk is based on work our whole team has done and we want to specifically acknowledge the contributions of Colin Jake Tiffany Harold and will who aren't here on stage with us today but first a quick story when we released chat GPT millions of users flocked to the product and we had a hard

time keeping up with demand also we hadn't released an API yet predictably there were many very excited attackers I mean enthusiasts who wanted to use the product in unauthorized ways we played Cat and G Mouse game with them they would find new apis to abuse and we would improve our bot detection it was pretty exhausting one day we noticed a very unique attack signal and rather than just blocking the Bots one of our Engineers decided to send all the incoming requests to a very small very dumb model that only wanted to talk about cats and so cat GPT was born our attackers were a bit perplexed uh perhaps even unhappy but I think they enjoyed the joke almost as much as we

did one user suggested we should have rickrolled them but honestly cat GPT was funnier there are many fun things you can do with an llm but this talk is also about some more serious practical topics okay enough work stuff everything from here on out is strictly vendor neutral so let's see what it's actually about while we appreciate the good old traditional security tools we found that LMS can augment them very effectively AI isn't going to replace standard tooling but enhancing but it instead enhance workflows using llms can have a profound impact on a team's efficiency today there are these are the key things we want you to take away we're talking about using the models to help

developers go faster by reducing security friction help humans focus on the important parts of Defense use llms to improve the way that we catch attackers and use them to accelerate the other kinds of security drudgery we have to deal with on a daily basis and by the way we're uh we're open sourcing three new tools and uh you want you can get started on this too so we're not talking about chat GPT the website Auto GPT Lang chain jailbreaks or fine-tuning these are all off-the-shelf results with off-the-shelf models and as I said this isn't a vendor prge this is the last the prior sides where the last time you'll see our company name and these

techniques should work with your favorite models from any of the major vendors so let's start with a quick refresh on how most modern base llm apis work today models are composed of tokens which represent about 3/4 of a word the model weights en code relationships between those tokens and they're stored in the GPU when you query a model it happens in a single request response cycle your query is encoded as tokens passed through the weights in the GPU producing your response this means that the model is stateless when you query it those model weights don't change and the model can't remember a prior conversation doesn't learn from what you pass through so given all that how does

chat GPT work how does chat seem to remember things context don't try to read this text it's deliberately too small remember how I said the model was stateless it doesn't actually remember things between submissions the gpus and instead it just includes as much of the prior conversation as it can when it runs out of space to remember it starts deleting part of the history so that each request can fit into a single request response cycle there's no magic there and it doesn't change what's going through the gpus right so that was a cool story about the the meow and Cat gbt pole but uh here I'd like to introduce you to woofpak so woe is a company that makes

AI power dog colors that can translate ws and Barks into human speech I know some people that would pay an infinite amount of money for that kind of product and as you're watching this video of husk and sheas roaming a cyberpunk themed City wearing a woof speak a collar we're going to describe a scenario to demonstrate our llm driven tools uh the engineers of our who speak company are building a new inventory tracking system for the various versions of dools the compan is producing and once this video finishes um it is comprised of all the standard uh components you'd expect from such an app so it use a web UI with react as the front end no JS as the back

end myle for Storage off zero for uh user auth and authorization and the most important thing is that this will be accessible only through the company's VPN so it will be for employees only and with no internet access and surprise surprise the engineers are on a very tight timeline to build all of this the software development uh life cycle is not going to be very linear for uh wo speak so the sdlc uh is a structur process as we know used for planning creating testing and deploying software uh but truly in practice uh the sdlc in a very fast uh fastpaced company like ours can often seem like the world's most unpredictable recipe imagine trying

to bake a cake but the ingredients keep changing the oven randomly adjusts its own temperature and every now and then someone comes in to tell you they actually wanted a pie and the security team has to be embedded in that cycle and be able to evaluate if a project uh or feature that is being developed needs a human Security review but also one of the biggest problems that a security uh Team face is that they have very limited human bandwidth so it's like playing guacamole but if you miss the mold steals your data and sells it on the dark web for that reason we develop the stlc bot a slack that uses llms to help us with

prioritizing which projects or features uh we as a security team uh need to assess first and we're open sourcing this so here's how it works it asks the user some basic security questions about the project that step is optional uh it scours and monitors important threads on slack analyzes design documents relevant to the project then with the help of the LM it gives you a risk rating and a confidence level based on the above context so let's see how this works in practice uh you there's an initial design document here for the inventory tracking system you don't have to read this this is a very boiler play design document that goes through all the basic

architecture decisions but there's an important note uh left down there which says that the system will be accessible from the company's VPN only and will not be exposed to the Internet so this reduces the risk profile of the uh app so now let's tell our sdlc B about this project I'm going to type in some very basic uh information about the project like a quick description a link to the design document and specify a point of contact and then the go to live date uh we have versions of the B that will skip these questions and can um that sometimes it gives you like some follow-up questions to answer but this doesn't show it the model will then uh

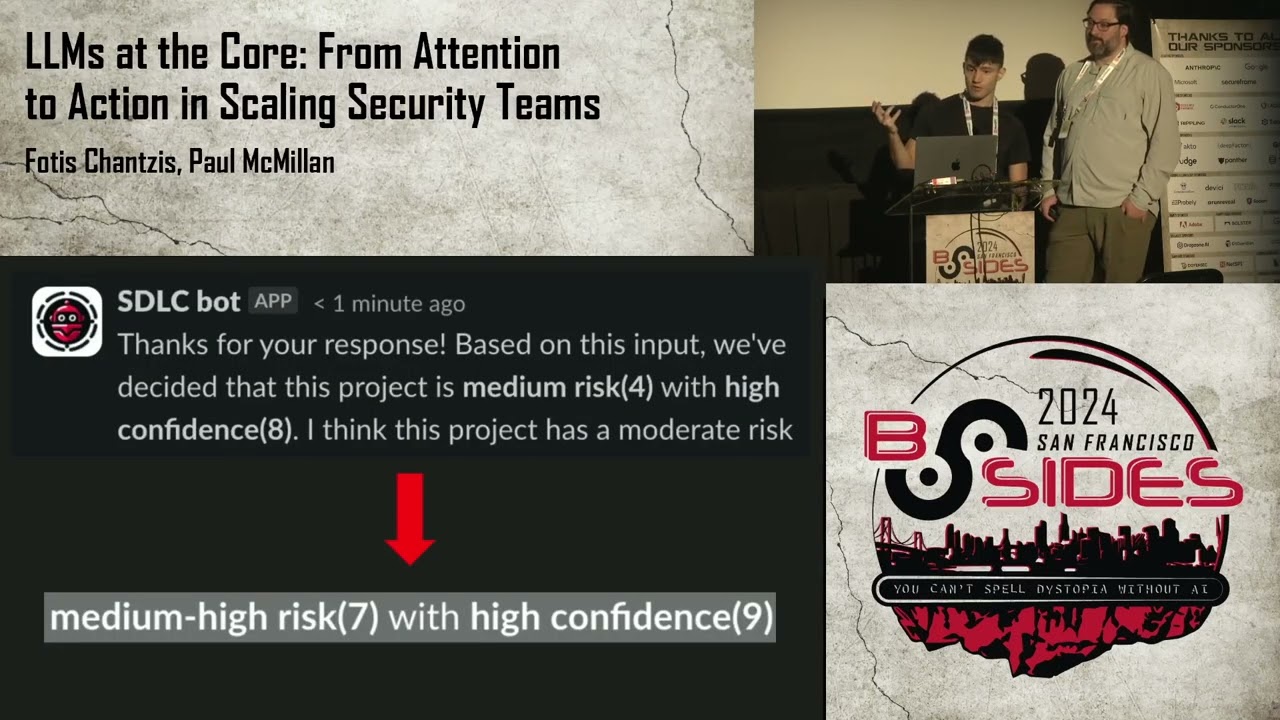

dynamically generate a bunch of of these questions if uh some of the times and then uh here's the response of the model so uh it tells you that there is a specific risk rating and uh a specific confidence that uh that it made this decision with so in our our story the deadline is approaching fast and our Engineers decide that there's no time to create private links for all the cloud components so that they you know that they can reside within the VPN but someone fortunately Updates this uh and then the llm kind of knows because it monitors this uh design document the sdlc B um will update the decision and now it will give a new confidence score

and a new risk rating which will be much higher than the previous one uh and so now the risk is is increased so if this is not I'm not sure if the font is very visible but uh to reiterate um and so this with a bigger front the model increased the risk score uh from a medium risk level of four uh to a medium high risk level of seven uh the scale is like you know 0 to 10 and some of the things we learned while building this the model overfits on the initial questions uh so if you prompted with some starting security questions as examples the model will not go too far away from those examples uh

but it will also ask some context based ones uh regarding prom engineering this is probably one of the uh best lessons we learn that you have to praise your models so the more you tell the about that they're an expert in the field the better responses you'll get um like so tell it you know they are an expert cyber security engineer versus like a mediocre one and you'll get better responses so the other thing we notice is that the model also gives far better results when asked to Output scores and numbers instead of words so in a previous version of the stlc bo the first one we were asking it to give an answer in uh

basically just a sentence format but when we asked it to give us confidence and risk scores in numbers the responses were of much higher quality uh there's one more thing we've developed an extension to the stlc board uh this is an internal version only because we didn't have a good way to publish this uh but it's it's a better version in that it's enhanced with being able to monitor a slack channel on its own and automatically find the topics of Interest so that way it has less user friction because you don't have to like manually go there and like you know uh input the the fields and um you know manually add all the information it can

do it automatically so here's how it works the model will infer the topics I'm interested in based on my slack history and then with the with the help of the of the models it can also directly be fed um or it can also directly be fed those through a file but my interests are mostly security Focus so there we have a discussion about the architecture and then they say that HR send a request to include an admin account without MFA uh on the um on their components so that is probably not a very good thing but here I get like an automatic flag of that conversation because the sdlc board is invited in the channel and is

monitoring the channel and so I now can as you know a security team member know that probably that's not a very good idea I should we maybe we should do a manual uh review uh another problem security teams face is that they have to Tri us a great number of security related requests for that we built the triage boot which with the help of the llms uh automatically answers the users request saving valuable time for the uh on call engineers and we're also open sourcing this so um some common requests that the Trias Bo can autom automatically uh do is like redirect to the right team or even automatically answer uh so some examples are user requests uh a password

reset for their corporate account the board will then assign the request to the IT team uh or a user asks for access to a storage container then the bo will notify the user to send a request to uh access manager on which we'll talk about later so let's see the board in practice one of our uh who speak application Engineers who is new to the team was recently locked out of their computer so they ask on the security help Channel okay what what should I do the bot will respond that this is something that it can help with so um this is all done automatically through the models um then uh they might want elevated permissions

because they want to uh access a storage container so again the Trib will redirect them to use uh ghlas access which is our like internal access management tool um there is also another uh new feature that our team developed uh which is that the B now has context of the slack history of that channel and can also respond in more detail about a user's question based on a similar issue that was resolved in the past so um the user asks about a specific storage account uh for example like a credential error that occurred when trying to access this Azure storage container and then what the the bot here uh not only suggests that the user should use the

access manager tool that we have but they also offer another suggestion which is to restart the local DNS service so this is based on context of the slack history and seems to have been a step to debug and resolve a similar issue in the past so this is like additional um information there from the boat speaking of access management we also developed another tool to help us with this access manager helps us uh helps users automatically find the right permissions without them having to know the name or details about the access group uh so it works with available group descriptions and metad data to find good conceptual matches and explain them uh to the user

uh even if they didn't use uh matching keywords in their query so here's how it works uh one of the engineers tries to list the contents of the other uh a St account they get an error because they don't have access to that account and then they use the access manager CLI to ask what's wrong access manager will then with the help of the model suggest the right group name to which the user should belong in order to get access to that container and then they also offer to send the access request on behalf of the user so now the manager of the of that engineer will see a pending request for that group and then they have the

option of clicking approve or or not depending on the use case and then then the engineer will be granted access uh to that storage account so this is uh the workflow and how it works the the manager will hit approve and then after rerunning um the the CLI tool to access the storage container the engineer can Now list the the contents and veryify they have access to the storage group uh and then the access Grant will be locked creating an odit Trail and finally it can also be said to expire after a certain time uh and there's more our team has also experimented with Dynamic authorization where the decision of granting access is based on a number of

factors including user context such as location time device or even the job role uh resource context for example the sensitivity of the access resource uh and also environmental context uh for example the network conditions so if you compare a typical researchers uh access patterns versus a security Engineers they will be quite different uh so this graph is showing the cosign similarity between the access patterns from say an infrastructure engineer and also a security engineer and you can see that these groups they they access on a daily basis are quite different meaning that a request for a group outside the normal patterns should trigger more scrutiny on the other hand if the requests that look similar to regular access uh could

potentially be uh automatically granted without further approval so another place we're using the model to help us is with tree aing security reports you can use it for bug Bounty security disclosure email aliases even internal findings the key thing is that not only can It help evaluate reports it can help make those reports better and more actionable for your team so back in April we launched our bug Bounty it got a lot of attention this is not a reasonable volume of reports for humans to triage if you can't see the Top Line we were getting over 900 reports per day to our program at first and I want shout out to our partners at bug crowd for throwing a bunch of humans

at this initial volume to help us help us get through it so here are our stats at the end of the first week we'd fixed 32 real issues had seven accepted Open tickets and en closed nearly 3,500 tickets as invalid so that's a lot of invalid reports for one reason or another as you may expect given the topic we turned to our model to help us rather than trying to get the model to try to figure out whether a security report was valid we started with the lower hanging fruit rejecting reports that were not appropriate for our bug boundy program we asked the model to sort the reports into several categories so model safety or correctness is this

report a complaint about something related to the model safety or correctness is it a complaint about the answers the model is giving is it harmful is it factually correct Etc so we've got a separate ingestion mechanism for these types of reports we want to make these improvements but the bug bunny programs the wrong place to submit them for the second category customer support is it is this report actually a request for customer service does a reporter have a question about the product is it a problem with payment subscription refund is it a report about a functional bug without any obvious security impact if so we send them to our customer support team the third out of scope bug reports in

about things that are technical but not uh not important to us this one's definitely harder for the model to get right because it does require the model to make some judgments about what category a report falls under this is our technical out of scope list and we try to keep the list that we use for the model prompt in exact sync with what we have in our out of scope section in the public bug Bounty if the model can precisely understand your out of scope list hopefully so so can your human Hunters uh this is for things like reports of missing server headers missing SPF or DeMark records server error messages server version strings Etc and finally maybe it is actually a

security report does this appear to be a report of a legitimate security issue if it doesn't fall into one of the earlier categories it should be in this one so you've got things like xss csrf subdomain takeovers all the other security issues reports in this category don't get an autor response and instead go to go to human trios team directly so failure modes you may be worried about using LMS to do security critical stuff here but and of and of course there's some anti- prompt injection work here as well but it turns out it isn't a big deal for this use case we're not making payment decisions and we're not even having the model write the responses

it's just making sure users who report an issue to the wrong place get sent to the right place using pre-written messages the worst you can do is get your bucket T your ticket but triaged into the wrong bucket um so how do we figure out if we're getting good results you can't just write a prompt and hope it does the right thing you need data to show you're not making the problem worse but evals are surprisingly often all you need so uh the way our evals work is you start with a list of known good questions and answers um you ask the model the question you check the answer and then you ask the model you give the model the

answer it gave and the correct answer and it says and you ask it is the is the question is the response the model gave me the correct answer so the model grades its own responses and says yes the answer is correct or no that doesn't doesn't work right um so practically how do we double check our work I exported a CSV of the first couple thousand reports I ran through them in hand scored them into different categories then we ran the model this is another one you don't need to read in details just here show help you look at the columns you can do human judgment you can see my human judgment on the in the middle left and

you can see the models judgment in the middle right as well as an explanation of why it made that decision doing it as a spreadsheet is a really fast easy wayed low cost to do it you don't need to build a fancy UI and you get the answers quickly about how well you're doing by all means use the fancier tools but start with the easy ones going further there's a bunch of other data points you can analyze too you you can analyze commentary on historical tickets to help you detect when you're bought or the humans make an in initial triage error you can use the model to help detect human mistakes going forward as well but of course the key thing to for

this is keeping it fair if someone disputes the model's autoclose reason a human always reviews it always make sure there are humans in the loop when you do this the model isn't infallible and if someone asks for a second look go do it the next two things that these next two things are things we've experimented with but haven't actually deployed yet report quality is one of the recurring challenges with the bug Bounty program reporters are in a hurry they assume you're psychic and often they do not submit enough information to help you tri out the bug the model can help the reporters make sure you have all the information you need for instance you report about product X well we need a

full URL to debug that or you you did a report on why to check that we need your email address hey it looks like you submitted the template without changing much can you go back and give us more detail in your own words like you can help triers focus on the important stuff as well bug bny reports are sometimes worse than internet recipe sites and reporters often confuse using lots of words with having an important bug waiting through that is exhausting so the model can help us do things like automatically fix the categorization summarize the key points of of the report extract Repro steps provide suggestions for clarifying questions and even help push common types of reports

into other tool cues for example subdomain takeovers can often be tested and resolved almost entirely automatically so let's take a look at an interaction here you can see a typical bug Bounty report now we're not really in the business of selling chicken exactly and even if it were this isn't a security issue um and here's the bot politely telling the user this isn't the right place to report it we're not having the bot write each of these responses individually it's only selecting the appropriate pre-written carefully vetted one from our list of responses so uh another interesting use case is uh using the models to help find attackers so uh llms are consistently decent on finding the needle in the Hast

stock while humans can be sometimes unreliable they miss data uh they get tired and they make mistakes and logs are often noisy long and frankly quite boring to go through so uh let's see an example of log analysis we give uh the model the following prompt summarize the session transcript and decide if the activity warrants alerting security or reversal looking for Secrets or persistence uh and here's a very small sub of user's bus history uh bus history can be long and boring you don't have to read this but halfway through the user invokes a PE oneliner that pop Ser shell back to a C2 so the model is pretty good at identifying this the response from the

model in this case was uh we should Alert security because the malissa reversal uh Commodities run in the middle of the session so the first DNR use case for llms is to acess accelerate long and boring log analysis the second part is to augment and accelerate incident response so specifically to help our defense team with a large volume of alerts that they have to deal with on a daily basis uh we developed a b that helps with triing uh certain types of alerts so the board ingests alerts from a traditional seam and we have to point this out this is like a very separate function like this kind of is attached to a traditional seam that

has nothing to do with uh with llms so we use traditional detection engineering there and for those that for certain uh subset of those alerts like ones that could be an accidental mistake for example it can start a chat with a user to inform them of the potential problem so as an example um is the following so the user shares a potentially sensitive Google Drive public uh Google Drive document to the public the board alerts them about this action and asks them uh if this was their intention so giving them an opportunity to revert their change if it was indeed accidental and also a notification will be sent to the DNR backlog so let's see an example of

this in practice one of the uh W speak Engineers want to share a document named inventory details with sensitive information about the company with say a contractor who had signed an NDA but the engineer accidentally like makes it public and so the alert is created and then the uh the boat starts chatting with the engineer here uh if the user tries to to avoid the question like saying okay what is your favorite color the B insists and doesn't mess around as you'll see uh so when the user responds with something the conversation is summarized the model uh by the model and is sent back to the DNR team for followup so uh this is how it works it's

like a simple use case but it's actually very useful and uh speeds things up uh here you can see the summary of the conversation as it was uh done by the model and this was basically our final demo so let's see what the most important lessons were so some final thoughts the more high quality data you give the model the better it can do don't just shove everything in there the models are better with more context in your environment but that context has to be high quality in high trust environments the model can do more externally you should be more restrained in how you use it and tell your model it's an expert use the evales finding the right prompts

goes a long way and also remember to always have a human in the loop uh like we said earlier uh models are not infallible they can also make mistakes and even though they are very powerful in augmenting these security workflows we have to be mindful that they're not going to be 100% accurate they can hallucinate they can miss things and yeah that's to reer that humans are here to stay so um yeah this is easier than you think you should go try it um this is the final slides uh we are we've open sourced all these things so you can find links to all of them here um and yeah that's all thank you wonderful talk from our speakers um

we'll be taking Q&A now we have uh until I think about a quarter after to get all the questions in um I see there's a few coming in for those of you who are not already aware we're taking questions via slid you can see instructions on how to connect and uh submit questions there at bides sf. org slq and that's bides sf.org qna um and we'll take questions from there our first question um mentioned praising the model U to positive results have you tried the inverse yeah well it doesn't work that well next question um when someone interacts with a bot uh EG through triage is it disclosed um is this also explicit if the interaction is with the

human I mean it's very it's very clear that you're interacting with a with a bot like yeah it's it's in the user profile yeah which threat of Cl um which threat actors or class of threat actors such as AP nation states corporate Espionage Etc POS the greatest risk to um open AI the company and or llm providers in general that's a big one yes those all all uh all provide great deal of risk uh and I think I think more to the point you need to use different techniques and probably even different teams to mitigate different aspects of that um another question what makes data high quality um con consistent correctness um if you have data that you're not sure

about the quality having a human do grading to see whether your questions and answers are good um and uh the other thing is as you're running your programs as you have these tools going going through doing a second pass triage sitting down every month and saying okay where did we make mistakes and then feeding that back into your prompts or the ways you're using the models helps you iterate and get better as you go along and I mean using Evol is also kind of a good way to assess uh quality of data um uh popular upcoming question is cat GPT still available as a model no uh we took that end point down quite a

while ago uh how can we follow you and your latest developments uh that's up on the screen I mean also like the the blog of open a but yeah yeah have you used llms for any detection engineering um yes to some extent I mean we like we we've realized that actually LMS are great at mostly defensive applications uh so we are experimenting with that as well uh but the the incident response Bo that we uh showcased is mostly on the specifically like response uh phase and not the uh the detection engineering phase yeah one one one challenge with detection engineering is just the uh sheer volume of data you often need to get through can make using llms today prohibitively

expensive as the primary injust and the Contex window can be also I mean you don't have an infinite Contex window so you have to kind of constrain uh the amount of data you can filter through next question from Liam kers uh what drove the decision to use canned responses for the bug Bounty report Handler rather than giving the model leeway to create its own response it's a bug Bounty everyone's going to sit there and play prompt injection games with it all day long if you do anything else yeah I mean we'll probably move to uh having do more interactive things but uh that was definitely where we could spend the effort initially um and you know as

we as we go down as we get down the path of doing this more we'll have smarter interactions next question how do you associate access denials with the appropriate groups um I mean this is this is kind of uh an internal mechanism of how access manager Works uh we we cannot kind of disclose a lot of The Intern um things right now uh but it's it's not as hard as you might imagine yeah I mean as and we talked a little bit about the profiling and saying okay this is given given what who the user is working with what the rest of their accesses look like is this part of something they would normally be

accessing all right uh more cat GPT questions uh why is cat chat cat GPT no longer available and when will cat GPT be available again well so you can do this yourself I believe the prompt was you are a uh you are a cat you only want to talk about cat things always respond as a cat so put that in your system prompt and you can have cat GT GPT yourself Heard It From the Source your own cat GPT have you done any research around finding bugs and code using chat GPT yeah we have uh a we've got it running on every PR we do um it needs some work sometime a lot of the time it'll

hallucinate things but I would say it's surprising how often it points out errors in code during pull requests valid ones but again human in the loop you cannot like just rely on the model to do everything for you automatically always have a human um next question how can you leverage the security triage bot with static analysis tools static analysis Frameworks give reports and uh wanted to build State Management and PR uh yeah I I can see that working um it's not something that we have built but having having this the bot help you talk to your users and like you know remind them that they need to deal with things the static analysis finds or helping

them explain what what happened are all things that I could see very productively building what content would you suggest for other security folks other than open AI uh content uh on what area presumably on llms oh um I mean depends on what what specific area you want to to focus on you mean like that's all I had from that question my my friend J hadex teaches a class about this uh go talk to him hey hadex all right uh next question does log normalization matter for llm detection performance say say that again does log normalization matter llm detection performance I mean this is kind of on the area of uh detection engineering uh which is still I think in a very

experimental phase for us um I would assume that yeah you can you can probably increase some performance but also it's like always a fine balance between like what you miss out by doing this kind of thing versus like I mean the models are good at finding the n in the Hast stack but they um there's always like you don't want to have uh false negatives either a question from a Camila is your bug Bounty open for your employees as well of course not that's pretty standard uh Andrew Klein asks how do you gauge user experience with the IR bot example how seriously a non-security engineer might take the interaction I mean yeah this is like something that

we've kind of we've had that out there for a while um I mean we we also have that's that's why we have like this workflow where uh all of the conversation can be sent to the DNR team and kind of they can assess as a followup uh how how responsive the the the bot or the user was when they interact and chat with the incident response boot um and we do a lot of kind of fine tuning there like if we even in the in in the prompt and even in how the what the workflow is uh based on that user interaction we we not just for the IR bot but for every single bot out

there we want to kind of fine tune them again all of these are to boost productivity if they are a hindrance at any point in time then we just you know don't use them we stop using them the other the other thing is if they seem to be getting something wrong our users are very vocal and come to the security Channel and tell us the stupid thing our bot said y all right um will you open source the slack Integrations I mean yes I we've we're open sourcing they're already open sourced the sdlc board the incident response board and the um what is it uh uh bug bount the bug Bounty boards um and yeah all of them are out

there um if if you're asking about the bit that we said wasn't in the open source yet uh that was mostly because we were using slack apis in a way that I don't think slack would like us to be using them so we're we would we would figure out how to do that officially before we release that um have context for this one what is oberos I don't know what that is either all right uh here's a good one thank you for open sourcing the Bots for companies concerned about confidentiality of internal and Technical team architecture uh is open AI able to provide reass reassurance yeah um for companies that have a legitimate need for it we have a program

that has your data flowing through our models but in ways that uh we don't look at um and that we can't look at all right um I think that's it for most of our questions we have a few here I need to remind the audience our speakers cannot talk about things uh proprietary or internal to open AI so we're going to disregard those questions we are also I think done with cat GPT despite the popularity of any follow-up questions there you can find us outside we'll we'll happy to answer more questions there all right and thank you for our speakers thank you