Let's Start Over!


BSides DC would like to thank all of our sponsors, and a special thank you to all of our speakers, volunteers and organizers for making [unclear]. Security: my background is that I've been doing security analytics for a long time, [unclear] large-scale security, [unclear] some products that you may use; some of them were private. The reason I started out on this is that a few years ago I was working at a larger web property, and I found that one of the biggest problems we had is what I like to call inflation, basically, simply because security is being taken a lot more seriously, which is good

for us, but it's also becoming much more expensive, and it's becoming more expensive in a number of dimensions. The cost of talent is inflating to historic levels; anybody with more than a year of experience can probably easily find a number of jobs here at the conference. The cost of security tools and products is inflating at the same time, because we're paying higher prices for these as they become more and more essential. My thesis is that all of this inflation is not only a problem from the perspective of the business leaders, it's becoming a problem for us as well, because it actually starts to degrade

our attention and our focus, and doesn't help our mission of doing security in the process. Now, just a little bit of background: why did we do large-scale logging, monitoring and security analytics? The short reason is that it's required by a number of standards. If anybody has to undergo healthcare audits and certifications, it's required by those. It's required by things like the SOC 2 series and the PCI certification. It's required by NIST 800, if anybody's doing NIST 800. And in fact even OWASP last year added a line item to the Top 10 called Insufficient Logging and Monitoring, so even OWASP is

emphasizing the importance of logging and monitoring. So we set about doing this at great scale. It's a fairly typical story; I think a lot of us will have lived through this. As a senior security person, you go into a new organization and you find that security upgrades are needed, that more needs to be done in a number of areas, one of which is logging, monitoring, detection and analytics. In my case, I set about doing this, and I found that I was able to build a really strong coalition with my audit and compliance department, because they had requirements, all these alphabet-soup requirements, and we

had customer requirements as well, and they actually had some money to fund these requirements. My team had the experience and skills to actually do the work, so we set about working together to try to solve this. We got together one day and said, look, InfoSec has about six or seven hundred thousand in budget for doing really large-scale logging, monitoring, detection and security analytics, so let's just do it. So we set about seeing what we could do. It turned out we didn't have nearly enough money to do this at the scale we were trying to do, which I'll go into. So, in terms of where we spent our time:

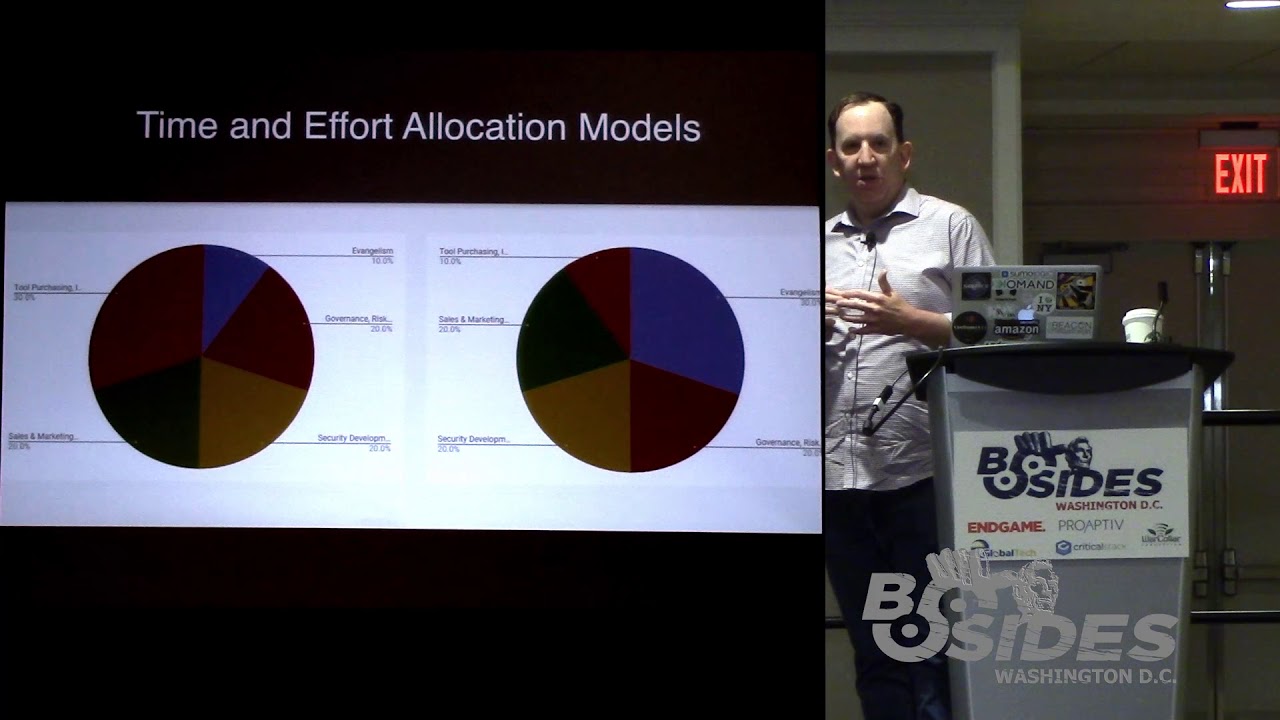

The reason that security inflation and what I call product sprawl, which I'll define, became such a problem is that these were the core work streams, the work streams that security needed to be doing that were relatively high value: customer conversations, security product management, governance, risk and compliance (obviously working with audit and InfoSec). Then of course there are engineering work streams; there's a whole other set of engineering work streams around security development life cycles, architecture and product security response. One of those is security tools, which has a number of work streams of its own, like selection and

purchasing and operations. And then finally there's one that I call evangelism. It's sometimes hard to make time for this one, but I think it's actually one of the more important work streams, because as I talk to business leaders in my current role and in the past, I find that evangelism is one of the most important things we need to be doing, just because there are so many misconceptions about security, about how security works and what it does. This is a large work stream and it's hard to make time for it, but it's really important because, number one, the business is never going to learn to speak our language. For the most part

they're never going to learn to speak security and understand us talking in our native language, the way we talk to each other. We really have to learn to talk to them, because there are a lot of misconceptions about security, even though there's much more awareness of security problems these days. I think it's not so much because people don't care about security or are trying to do the wrong thing; it's just because there's so much confusion. In the final analysis, security is a tax. From the perspective of the business

it's largely a tax. It doesn't generate revenue; with few exceptions it doesn't actually boost the alpha or the bottom line to a significant extent, apart from doing what you need to do to get deals done. So the business leaders are always going to try to find shortcuts, not because they don't care about security but because they're trying to get their work done and ship product. They're always trying to find shortcuts for all problems, not just security. So, in terms of where we spend our time, the problem was this. If you look at this, it's a comparison between two models. On the left, I found we were spending about a

third of our time on security tools and products, between selection, evaluation, purchasing and operationalization, if that's a word. We were spending way too much time on just buying and operating security products, which left us very little time for evangelism: educating the business, exploring assumptions, exploring why we need to do security this way versus that, what the risks are to the business, how we need to address them, the fact that we can't just buy security in a box. That's a work stream that is really hard to time-box; it takes as long as it takes to talk through these kinds of misconceptions and do these kinds of educational efforts and outreach. And if

you get into this kind of feedback loop, where we're spending more and more time on just keeping the lights on, keeping the tools and products in the operational environment running and doing the core security work streams, we don't have enough time for evangelism. Then we don't have the time to have a meaningful dialogue with the business about what we need to do and how to do it better, and it can be really hard to get out of this loop. You can end up stuck in the loop, where you spend all of your time on this operational stuff and you don't have any time to talk about actually

trying to make things better. So this is what the tool work streams consisted of: everything from evaluation of tools and products, to approving proofs of concept, to selling and evangelizing, proving business value and selling the concept of business value to stakeholders, to allocating budget in order to buy tools at great cost. Where this led was to spending more and more time in meetings, just talking about which tools we were going to buy, which products and

services, how much it was going to cost and who was going to pay for it. There were times when almost a third of our time was spent just in the tool work streams, between selection, purchasing, deployment, operationalization (which is the word I'm making up) and just babysitting tools, products and services at great scale. This led to what I call product sprawl, and I feel like I see this everywhere I've been in the last four years. The more money an organization has, the richer it is, the more of this you see,

where security teams have more and more products and go on a buying spree. You go to conferences and you come back with a set of new products every year. Eventually the security team breaks out into groups like architecture and operations, and it grows, because the security team spends more and more of its time just installing, managing, administering, operating and troubleshooting products and managing fleets of security products. That takes time away from our core mission of actually doing security engineering, architecture, risk management, etc. So I feel like this is actually a drag on our productivity, and it's actually a risk to our success as

we spend more and more time on this operational stuff and just product maintenance; it becomes an actual risk to success in our mission. I wanted to get rid of that, so we set about taking a look at what an alternative world would look like. This is a cost comparison we worked out. In our case we were operating a fleet of roughly twenty thousand servers, give or take a thousand, in an AWS environment, one of the largest. In order to instrument those with two security agents to meet various requirements, one intrusion detection agent and one endpoint detection and response agent to catch things that the IDS agent didn't see and to do other things,

it was going to cost almost four million in spend. Between that and the cost of ingesting almost a terabyte per day of log data into a large-scale data analytics product, we were looking at a burn of between three and four million a year. Obviously that led to a significant conversation that was hard to get past with the business, because that price tag is so large that the business would just have sticker shock. Looking at open source alternatives, we realized that we could replace all this with open source tools, and I'll show you what that model looks like.

And now, the operational cost doesn't go away. There's still compute, storage and operations, because somebody has to build and run all this, but we estimated it would be roughly ten percent of the cost of doing this with commercial products. On top of that we have the cost of head count. So we were looking at numbers large enough that they became an obstacle we couldn't get past at times, because between the tools cost and the head count cost, now we're talking about trying to take budget away from flagship products that produce revenue and generate happy customers, and it's really hard to compete for

dollars. For things like security initiatives it can be difficult or challenging to ask for budget numbers in these ranges unless you can build a really compelling business case, which sometimes you can do. But even if we were horribly wrong about our cost projections, even if they were off by half, even if, say, the cost of DIY, or do it yourself, is up around three quarters of a million, it's still, as you can see here, a massive decrease and a massive savings from the model of using

commercial products and services that we were looking at, and this was just to get started with the first set of requirements. The great thing we found is that, going in an alternative direction like this, the business is now actually interested. The business is really interested in talking to us, and they're actually happy to talk to security, which can be hard to get to. But if you're talking about 80 and 90 percent cost reductions with possibly equal or better outcomes, now you've got a much more interested set of business leaders. So the direction we decided to go in, the goal, was to

use open source tools in the SOC instead of the commercial products that we were going to burn three to four million a year on. The goal was to try to make an open source SIEM, or what's sometimes called a security information and event manager, because that was one of the requirements for the SOC: the SOC wanted to have a SIEM or a SIEM-like tool in order to do their work. We needed to support all the major popular use cases for SIEM, like you see here, and we'll go through those one at a time. The other requirement I had is that I wanted this to be semi

agentless. It's hard to get to an agentless world and still get all the data that I'd like to have; it's hard to be really agentless. But I wanted to at least get to a very lightweight agent footprint, because I wanted to stop spending time on troubleshooting agents: agent interoperability problems, agent performance and reliability problems, agents that fail, agents that fail and take applications down with them, and the associated sev-1 emergencies and emergency support work. That's not only because operations wanted to get rid of that work stream and wanted more reliability there, but also because the security team

wasn't equipped to try to do that 24/7. So this is what our end solution looked like. First of all, the log analytics platform is replaced by an ELK stack. I imagine there are probably some people here using ELK. ELK stands for Elasticsearch, Logstash and Kibana. I'll actually show you a live demo of what it looks like. That takes the place of the log ingestion and analytics platform. Then the primary data sources are at the bottom. From Windows we take Sysmon events. Sysmon is a new audit log that was

introduced in Windows some years ago that gives you incredibly rich detail about almost everything that's happening on a Windows host. We take the system logs, like the application, system and security event logs, and we take Windows Defender logs, which give malware detection. Then in the Linux world we have osquery, and we use osquery, but to that we started adding auditd data. Has anybody used auditd? So, a few people. Auditd is almost like the Sysmon equivalent for Linux: it gives you an incredibly detailed record of process executions, user commands, network connections, almost any syscall that takes place. So auditd, and then we take system logs for things like

authentication and sudo activity and anything else. We take flow logs; in our case we're taking flow logs from a VPC in a cloud environment, and you could replace that with NetFlow in a terrestrial or non-cloud environment. Then firewall and IDS events. The IDS events we took from Suricata. There are a couple of options here: there's Suricata and there's Bro. Anybody using Suricata? Anybody using Bro? I imagine there are a few Bro users here. Either one is fine; they're both great. I used Suricata only because the folks on my security team were more familiar with Snort than with Bro, so Suricata was relatively familiar and it was an easy transition for them. And

then finally CloudTrail. If you're doing this in an AWS environment, the CloudTrail event log, or audit log, is really useful, because not everything is going to be seen in either host or network activity. If somebody's persisting in the control plane itself or doing something strange, you really need the logs from the control plane, whether it's CloudTrail, or Stackdriver in Google Cloud, or the Azure equivalent. So this is a high-level diagram. The way that ELK works is that all of this data is streamed into a Logstash listener or Logstash collector by various means. Some of it comes in by

syslog; some of it comes in from agents that gather it and send it as JSON; some of it is a pull model, where we have to connect out to something like an S3 bucket and read data from there, for some of the AWS data types. Basically all this data is gathered by Logstash and goes through a series of transforms that parse the log messages into fields, so we have fields like source IP, destination IP, username, etc., and can normalize those fields across event streams. Then all of that normalized data gets saved into an Elasticsearch data store, which is the really big service for holding really large amounts of data and messages. And

then finally Kibana is the user interface. Kibana is not exactly a SIEM tool, but it's a SIEM-like user interface into the data that allows you to run searches and visualizations, hunt for threats, run reports and do all the things that SIEM users want to do. Are we on time? OK, we're good. So, in terms of use cases: that's an unfortunate color choice in the font, but what it says on the left (and I'll share these afterwards) is that these are the SIEM use cases that were defined by Anton Chuvakin, who's an analyst at Gartner. He spent a lot of time in

the SIEM and log analytics world, and now he's at Gartner. These were the major use cases that I had to solve for. If you'd asked me, say, five or six years ago, I'd have said we might not be able to do all of these in open source tools. But the fact is that today the ELK stack has become so mature and so robust that, while obviously none of the open source log analytics tools will do these turn-key out of the box, with a little bit of development work I find you can actually do all of these. So let's

take them one at a time. These are authentication alerts. Here we're taking authentication events from Linux instances and Windows instances, and also from web servers like nginx and the like. We're looking for things like anomalous authentication activity, for example the same user logging in from Washington and from Romania at the same time. Geographic and time-based anomaly detection of suspicious activity is really easy to do and really productive in my experience. Next, malware detection. We can do this several different ways. On the top here, the first event is a detect coming from Windows Defender on a Windows instance, and the bottom list is

a set of malware detects coming from a Suricata instance that is basically looking for malware doing beaconing or C2 across the network. In terms of validating IDS alerts: these are IDS alerts from Suricata on the bottom, so we had an IDS alert for some suspicious web activity, somebody actually going out and doing a GET via curl, and on the top we have auditd events. Actually, I'm sorry, it's backwards. On the top we have the Suricata IDS alert on suspicious outbound web download activity, and on the bottom we have auditd events. What's useful about this is that, looking at the IDS

alert, we see that it looks like somebody went out and downloaded something via curl. That's interesting, but it could be a dropper for a malware detonation, or it could just be a human, an ops person going to get a piece of code or a data dictionary. It could be something innocent; it could be an application that uses curl to call APIs and web services. What's useful about the auditd data on the bottom is that it tells us yes, it was wget or curl, but it also tells you the URL that was

connected to, and it tells you the name of the user who did it. So there's a lot more information there for investigating IDS alerts and making a decision. Next, flow data. These are visualizations of flow data. These are actually flow logs from a VPC, but you can do the same thing with NetFlow: all kinds of flow-based visualization and analysis, from geographic to port-based to traffic shape to byte counts and lots of others. Almost anything that NetFlow or flow analyzers do, I think we can do in ELK and Kibana. Next, tracking system changes. This one is kind of interesting. This is actually a CloudTrail

event called ModifySnapshotAttribute. The reason I picked this one: has anybody read the indictments related to the intrusions into the DNC, the Democratic National Committee? One of the indictments, which I think came out earlier this year, talked about the investigation into that intrusion, and one of the things I noticed in there that surprised me was that when it came time to start exfiltrating data, they did it in a way that I'm not sure many of us have thought about before. If the indictment is correct, the attackers actually started sharing snapshots. A snapshot

is almost like a VMware snapshot, but not exactly; it's basically a disk image of a virtual machine at a point in time in AWS. The attackers started sharing the snapshots with their own account, which is a supported feature, and they basically shared all the snapshots they could find in order to exfiltrate all this data in bulk, back to Russia or wherever it was going. This is the CloudTrail event that you get when somebody shares a snapshot, when they modify a snapshot's permissions to share it with another account. It's partially redacted, but you can see that the

destination account number is in the lower red box. If we were doing real-time monitoring and alerting on this, somebody could jump on it and say, hey, why are snapshots being shared with some account we've never seen before? Then, depending on how fast you can react, you might be able to shut this down. Next, web application attacks; these are nginx events. We used OSSEC for this, as a lot of people do; OSSEC is really good at this. What we found, though, is that because OSSEC is largely doing log analytics, we could actually do most of what it does natively by writing

our own searches on the web logs, and so we did. We were able to do, I think, most of what OSSEC does just by doing analytics on things like web server and web application logs. Next, cloud activity monitoring. These are analytics on the CloudTrail data, looking for anomalous activity; there are all kinds of interesting things you can do here. And then threat intelligence. One of the big requirements that my team had was for threat intelligence. It turns out Suricata does have threat intelligence feeds and integration, and it does give you threat intel events. Obviously we can add any sort of threat intelligence we want: we have

threat intelligence subscriptions, and we can bring those into Elasticsearch and Kibana and do correlation with them. And then finally endpoint detection and response. Here are a couple of illustrations of why I wanted to have auditd data. These are flow logs, and one of these flows is actually from a persistence mechanism: it's from netcat exporting a command shell from a web server that's been hacked. But which one is it? Looking at these, with the information that we get here, it's not always that easy to make a decision

about which one of these is the intrusion, or which one is the one we want to investigate. So if you look at the same thing in auditd data, does anybody see it here?

Right, so it's the ninth one down. You can see the netcat execution, and then you can see that netcat made a connection. These are in descending time order, so up at the top you can actually see the commands that were run in the shell, like somebody ran cat /etc/passwd. That's great, because there's so much more information here for making a quick decision as to whether this is something worth investigating or not. And obviously, with this amount of detail, there are so many things we can readily detect. For example, here's another one. Here's a set of flow

logs, and one of these is data exfil; one of these is data being copied somewhere else. Which one is it, though? We can look at things like destination ports, but SSH port activity in my environment was super numerous; it would be really hard to find the one connection in a million that was suspicious. We can look at things like byte counts, but if you have tools that use SSH to manage instances and transfer data around, it's going to be hard to tell the good from the bad there too. So here it is again, and these are auditd events. Which one is the exfil? Somebody said SCP. So yeah, it's basically

the sixth one down, and this is great. Now we know that yes, it was SCP, somebody copying files. We know the names of the files that were copied, we know where they went, and we know the user who did it, so we can go ask the user: hey, is this normal, what's going on here? These are Windows events; these are Sysmon events. You can see that Sysmon gives us a record of process execution, among other things. Here it's found that this is actually Mimikatz, which is a tool for extracting passwords. It's trying to look like it's part of

Windows, so for some reason it's been renamed, with two extensions, to wmi.exe.exe there. We could find that a couple of ways: based on the name, or the path, or the hash, or a Windows Defender event. But let's talk about something more interesting now. All nine of these use cases are, I think, well, I wouldn't say easy, but all nine of these use cases can be done. However, there's one big, popular use case that's missing here. Don't say blockchain, because it's not that. So what are we missing? The dashboard?

I'll show you that. No: machine learning, right? Machine learning is the thing everyone's excited about, and I think it has great potential. There are open source tools to do machine learning with, but it's a bit more work to get up and running with those, and I'm not going to try to go into that here. However, I want to leave you with something that you could actually leave here and use today, because it turns out that there is one algorithm in the open source version of ELK called the significant terms aggregation, and the way they

describe how it works is that it's a statistical algorithm that tries to find heat differentials between background and foreground terms in sets of data. It was originally designed for fraud detection, but it turns out it's pretty good at things like threat hunting and finding anomalous activity as well. For example, the CloudTrail issue with the snapshots is a great use case for this, I think. As long as we know what we're looking for, chances are we can readily write rules, searches and visualizations to detect the threats

that we know we need to look for. The problem is: what do we do about the things that we don't know to look for, the things that somebody's going to think up and try next week or next year? We're not going to alert on those because we've never thought of them before. In the case of the CloudTrail incident, for example, say we were doing anomaly detection with an aggregation like this, looking for anomalous commands, or anomalous command and username tuples, or anomalous command and source IP address tuples (because you need to use this on tuples or data pairs), and you

potentially find, or it potentially tells you: hey, here's a command I've never seen before, or here's a command I've never seen from this user before, which is really interesting. Another example: if you run this on things like Linux processes, it's actually pretty good at it. Here it's found the netcat process, the persistence mechanism, and it's found the data exfil, the SCP activity, both of which had only occurred three or four times, so they were very rare and stood out as anomalous. Same thing for network data. If you do this on things like process and network activity, I find that the human activity tends to

percolate to the top. It's pretty good at finding human activity that is really unusual; it may or may not be an actual threat, but it's unusual and therefore interesting. In this case it found a couple of downloads: it found somebody running tcpdump, it found a download by curl, and it found somebody installing a Python module. For Windows events, here's another one. In this case this is actually Mimikatz renamed to GoogleUpdate.exe.exe, again with two extensions, I'm not sure why. Here it's found it because of the combination: if you turn it on, Sysmon can give you hashes for

processes that execute in Windows, so Sysmon gives you the hash of the image file. Here it's found that this hash and process name combination is really rare and unusual, and in fact it's not actually what it claims to be. This is a great way to find things, because we don't always know what the name of the malware file is going to be, what path it's going to execute from, and so on; we can't know that in advance. So I'm finding this has great potential for finding malware. So, questions? And time: how much time do we have, by the way?
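
The significant terms aggregation described above is exposed in the Elasticsearch search API as an aggregation named significant_terms. The Python sketch below only builds a request body; it does not talk to a cluster. The field names (user.name, process.name), the @timestamp range filter and the helper name are assumptions for illustration, not the speaker's actual configuration. Nesting the aggregation under a terms bucket is one way to approximate the tuple idea: for each user, ask which process names are unusually frequent compared with the index as a whole.

```python
import json


def build_significant_terms_query(bucket_field, term_field, since="now-24h", size=10):
    """Build a search body that, per value of bucket_field, asks
    Elasticsearch for values of term_field that are statistically
    unusual compared with the background (by default, the whole index).
    """
    return {
        "size": 0,  # return aggregation results only, no individual hits
        "query": {"range": {"@timestamp": {"gte": since}}},
        "aggs": {
            "by_bucket": {
                # One bucket per value of bucket_field (for example, per user).
                "terms": {"field": bucket_field, "size": size},
                "aggs": {
                    "unusual_terms": {
                        # Foreground: documents in this bucket.
                        "significant_terms": {"field": term_field, "size": size}
                    }
                },
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical field names; adjust to whatever the index mapping uses.
    body = build_significant_terms_query("user.name", "process.name")
    print(json.dumps(body, indent=2))
```

The resulting body could be sent to an index's _search endpoint with any HTTP client. Swapping the two field arguments (for example, process name and destination address) gives the other pairings mentioned in the talk.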

We have, yeah, we have 14 minutes. So, questions? Yes.

Sure. So the question is, do we have hard data? I don't have hard data yet, because I actually left the job I was at in order to pursue this full-time. I'm now in the process of getting a handful of environments up and running and operational with this. They're not in the 20,000-server fleet range at this point; they're environments ranging more between three and ten thousand servers. So I don't have hard data yet on what this will cost, but as soon as I do I'll start to share that. Yeah, question?

All right, so the question is, could these use cases be met with a more restrictive execution policy? Depending on the environment, yes. If we're talking about a controlled corporate environment where we can apply things like whitelisting policies or restrictive security policies, yes. In my case, running a web property, one of the features of the web property was the ability for customers to develop and run arbitrary programs in certain languages, so trying to place restrictions on what they could do wasn't necessarily viable. So, this is an ELK instance. This instance is running in AWS, and it's connected to a Windows and a Linux instance that

are just acting like honeypots in order to give us live data. We're bringing in all the data types that I described: Linux auditd and system logs, Windows Sysmon and system logs, nginx web server logs, Suricata events, flow logs, and CloudTrail events. All of that feeds into a series of visualizations and alerts. For example, these are visualizations on the CloudTrail data. I like to start with visualizations and then drill down into more detailed views. And then this is what... and, you know what, the connection has dropped. One moment.

While we're reconnecting, this is what the alerts index looks like. In order to do alerts in Kibana you need a few sidecar tools. You can use something called ElastAlert, which is another open-source project from Yelp, or you can use something called 411, which I believe is from Etsy and has a bit of a UI. Either way, these are alerts that have been generated and sent to an alerts index for review here. What we typically do with these, in addition to displaying them here, is either send them out to Slack, or to JIRA via webhook for workflow management in JIRA, or we could

just email them, if the workflow is email. But you can do almost any alert workflow with these.
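
As a rough illustration of the webhook step, here is a minimal sketch of turning an alert document into a Slack incoming-webhook request using only the Python standard library. The alert field names (`severity`, `rule`, `host`, `summary`) and the webhook URL are hypothetical placeholders, and this is not how ElastAlert or 411 implement their own notifications.

```python
import json
import urllib.request


def build_slack_request(alert, webhook_url):
    """Build (but do not send) a POST request carrying the alert as a Slack message."""
    text = (
        f"[{alert['severity'].upper()}] {alert['rule']} "
        f"on {alert['host']}: {alert['summary']}"
    )
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical alert document, as it might be read back from an alerts index.
alert = {
    "severity": "high",
    "rule": "rare-process-hash",
    "host": "win-honeypot-01",
    "summary": "unusual image/hash combination",
}
req = build_slack_request(alert, "https://hooks.slack.example/services/PLACEHOLDER")
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
# To actually deliver the message: urllib.request.urlopen(req)
```

Keeping the request construction separate from the send makes the payload easy to inspect and test before pointing it at a real channel.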

This is what the significant terms operator looks like. In order to use this effectively it needs to operate on tuples, so it's running on things like user names and processes, processes and network connections, any kind of data combinations that you can think of. So far, with those combinations, I find it's pretty good at finding things like malware, persistence mechanisms, data exfil: a lot of the common, canonical security detection use cases. I think it has great applications for threat hunting, because one of the things people do, for example over here, and you'll see people do this a lot on Windows... these are actually the

Windows events coming from a Windows instance. One of the things people will do is start looking at the least frequently occurring processes in order to hunt for suspicious process activity. That's really useful, it's really productive, if you have time to do that. But I think one of the applications for the significant terms algorithm is that we can start to speed that up, or start to semi-automate it, by using the anomaly detection algorithms to find specific processes without necessarily having to dig through and look at these one by one and try to make judgments about

which ones are suspicious and worth investigating. This is an event viewer. I made a conventional event viewer for both the intrusion detection alerts and the flow logs in order to be able to work with those. You can create almost any kind of data visualization or representation here in Kibana. This is the Linux data. We have processes and commands, so basically here's everything that's executing on the Linux instance, both the process name and the command path that it ran as. And then we have things like processes and network connections, so you can see things like what processes are connecting to what network destinations.
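
For the significant terms operator discussed a moment ago, a request body might look roughly like the sketch below. It assumes the tuple has been stored as a single keyword field (here the hypothetical `user_command_pair`), since the aggregation works on one field at a time. The aggregation name, the time field, and the window are also assumptions, not the configuration used in the demo.

```python
import json


def significant_pairs_query(pair_field, time_field="@timestamp", window="now-1h", size=10):
    """Build a search body: the time-range query is the foreground set,
    and the rest of the index acts as the background for comparison."""
    return {
        "size": 0,  # only the aggregation result is wanted, not the matching documents
        "query": {"range": {time_field: {"gte": window}}},
        "aggs": {
            "unusual_pairs": {
                "significant_terms": {"field": pair_field, "size": size}
            }
        },
    }


body = significant_pairs_query("user_command_pair")
print(json.dumps(body, indent=2))
```

The printed JSON is what would be posted to an index's `_search` endpoint. The same shape applies to process and network-destination pairs by swapping the field name.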

It's another great case for anomaly detection. In a Windows environment, normally only LSASS or one of the other system processes will connect to a domain controller, so if you see anything else connecting to a domain controller, that's often interesting. In a Linux environment, if you know that your automation is based in Python, or in Ruby or something else, then you're mostly going to see those interpreters making network connections to APIs. If you see something else making a connection to an API, or you see something random, or you see one of these interpreters or maybe just a script

making a connection out to a remote destination that doesn't really make sense, that's interesting. It's really useful to have these events because, again, just looking at network connections, looking at things like flow data and IDS alerts, sometimes it's hard to make decisions about whether the activity we're looking at is normal or abnormal. So with the network data it's really useful to know the process and the command that was attached to the network socket, and the name of the user context that ran it. More questions? Yes.
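
The process-to-destination idea above can be sketched as a simple baseline check. This is a hand-written allowlist rather than the anomaly detection shown in the demo, and the host names, process names, and event fields are all hypothetical.

```python
# Hypothetical baseline: which process names are expected to talk to which destinations.
EXPECTED_CLIENTS = {
    "dc01.corp.example": {"lsass.exe"},
    "api.internal.example": {"python3", "ruby"},
}


def unexpected_connections(events, baseline=EXPECTED_CLIENTS):
    """Return events whose process is not in the baseline for a known destination."""
    flagged = []
    for event in events:
        allowed = baseline.get(event["dest"])
        # Destinations with no baseline entry are skipped here, not treated as safe.
        if allowed is not None and event["process"].lower() not in allowed:
            flagged.append(event)
    return flagged


# Hypothetical events joining process, command, user, and destination.
events = [
    {"user": "SYSTEM", "process": "lsass.exe", "command": "lsass.exe",
     "dest": "dc01.corp.example"},
    {"user": "alice", "process": "powershell.exe", "command": "powershell.exe -nop",
     "dest": "dc01.corp.example"},
    {"user": "deploy", "process": "python3", "command": "python3 deploy.py",
     "dest": "api.internal.example"},
    {"user": "www-data", "process": "nc", "command": "nc api.internal.example 443",
     "dest": "api.internal.example"},
]

for e in unexpected_connections(events):
    print(f"{e['user']}: {e['command']} -> {e['dest']}")
```

Having the user and command on the same record as the destination is what makes a flagged connection quick to judge.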

Here I am not. So the question is, am I using X-Pack? X-Pack is a licensed feature. It gives you some security features, machine learning features, graph analysis, alerting, a number of features. X-Pack is not free or open source; bits of it have been open-sourced, but it's not free, it's a licensed feature. Some of the organizations I work with are using it and some aren't. What you see here I'm doing without X-Pack, because my original requirement was to do this using open-source tools. So in order to do the alerts I'm using ElastAlert. Yes?

So the question is, do we have the ability to contribute back? Well, I don't personally have the ability to contribute money back at this moment. However, for some of the organizations that I'm working with, I think that may be possible.

I'm sure when you were selling this

[Music] So yeah, I mean, the short answer is yes, I definitely encourage that. I do try to contribute ideas and enhancements back to the project wherever I can, and my intention is eventually to open-source all of what's been done here, but sometime in the future, probably not until next year. I do want to explore moving in that direction. We have time for one or two more questions. Yes? [Music]

On this instance you see here? So, there is an authentication layer in front of this Kibana instance. You didn't see it because I logged in before I started the demo. In this case, this is a demo, so I'm actually using an nginx proxy with no default website and no HTML content. The nginx just acts as a proxy: it listens on TLS and does basic auth, and then it proxies connections to the Kibana instance behind it. So, yes?

The difference in labor cost is interesting. So the question is, what is the difference in labor cost between commercial products and open source? We projected that the labor cost would be similar. There may be some savings there, but the short answer is I don't have data for you on that yet. I think it's a really interesting place to measure whether there's a cost differential one way or another, because eliminating product sprawl gives us some savings: we're managing fewer products and we're spending less time in the tool purchasing and management work streams.

But there's some operational cost in terms of getting open-source tools operating at scale. The training cost for some of this stuff is a bit less, because there are training options out there ranging between 500 and 750 dollars; there are some basic training options available for many of these tools, so it's relatively inexpensive to get up and running. Because these are open-source tools and they're so accessible, it's sometimes possible to use a slightly less expensive team member. You may find it's more feasible to use interns, or team members that don't necessarily cost as much as a senior security person, if they've had experience with these tools in

college, or if they've just gone and learned them on their own and can already make some of this stuff work. But to answer your question, it would be really interesting to measure the cost delta between proprietary and open source, and that's one of the areas where I want to develop more data. We have time for one more, I think. Who's first? Okay. [Music]

So in our case we were supporting this ourselves; we had the ability to support this ourselves. However, for an organization that doesn't necessarily want to go that route, you don't actually have to do it yourself. You can contract for support for the ELK stack: there's a commercial vendor behind it, Elastic, and you can buy support from them. You can also use a number of services; there are at least two or three different ELK-as-a-service SaaS offerings where you can consume it on a SaaS basis and you don't have to operate it yourself. We have one minute

left. So, yes? [Music]

So, mean time to remediation, or I like to talk about mean time to know: that's another case that would be really interesting. I think that in general our speed, our response time, and our productivity in the SOC were higher, because we were spending less time on managing tools and more time on doing analysis and threat hunting. We were able to build pretty much any kind of search, analytic, or alert that we wanted to, without limitations imposed by the tooling. So the mean time to respond and mean time to know were definitely better. That was one of the things that kind of

sold me on it: if we can go faster and have better results at lower cost, then it becomes very interesting. And we are out of time, but I'll stick around. Thank you for coming to my talk.