OneClick Purple Teaming & Other Lies We Tell Ourselves - Brains


So, Grant Collan, what is your talk? >> One-click purple teaming and other lies we tell ourselves. >> There you go. >> Thank you. >> Thank you.

>> Don't get too excited. Between organizing this and the battle, I'm really looking forward to what I say now. So yeah, I'm going to be talking about automation within the offensive security space, obviously looking at it from the bent of purple teaming, but it does apply to red teaming as well. So very quickly, who the hell am I and why should you care? I originally started as a blue teamer in engineering, so obviously a lot of automation opportunities there. That's where I originally started writing and doing automation and building tools. I then moved into the SOC, into our CDC, in the company where I work and worked on a

team specifically to build tools for our blue team to help make their life easier. And now I'm on the offensive side in the same company. After a brief break with this lot here, I am back there doing red and purple teaming. We do a bit of both. Little call out to Stuart. I don't know if he is here. No, I'll need to give him grief for that. He called it that I ended up in red teaming. First time I met him, at BSides London, I told him what I was doing, blue team, some side projects I had, and he said, "Have you ever considered, you know, joining the red

team?" And I thought, "Me? No, blue team for life." So, he called it, and red teaming is good fun. I can't stand slide decks. So, we have about eight slides total for this whole talk. So, you are mostly going to be listening to me just waffle with just some bullet points and hopefully some funny pictures. Hopefully, they're funny. Throughout this talk, it's going to seem very much like I don't like automation. Okay, I promise you I do. But I like automation whenever it's in the right space. I have always seen myself as an engineer. In my mind, an engineer is someone who builds a solution to a problem. Um, and I feel

like that's in cyber security, in red teaming, I think we still do that. It's just the problem is "this EDR is catching my malware, let's build a solution to get around that." I think the principles are about the same. I do make some weird tools outside of this. So the QR code's up there. It's got a link to my GitHub, all that stuff. I will say I also have ADHD. There's a lot of unfinished tools on there. There are a lot of tools. And then a completely shameless self plug. I am the bots soon to be combat robotics and I person. Um, so the guys are out in the main hall if

you want to have a go trying to beat the duck. Uh, you can win a prize at the end of the conference. Um, so if you want to give that a go. So what I'm going to be talking about uh is what I mean whenever I talk about purple teaming because every company's uh setup, how they operate um their workflows are completely different, right? So whenever I'm talking about these tools and perceived downsides that I have, I want to make sure that you know that my angle of where I'm coming from when I'm talking about purple teaming because you might be sitting there going actually these workflows make perfect sense for us and that's fine, right? This is obviously from

why these tools are... am I cutting out weird? No. Okay. A little bit about why these tools can be useful, what the benefits are, pros and cons, where I believe the issues are with these kinds of tools, where I think automation is best suited, and obviously where humans fit into all of that. So, purple teaming and automation today. Okay. So whenever I talk about purple teaming, the way that we operate, or sorry, our maturity level, I guess: there was a really good diagram, I think it was made by the SANS Institute, and I cannot find it for love nor money. I don't know whether they've now put it behind a

paywall or what, but I can't find it. After I describe it to you, if any of you can find it and send it to me, please do. I'll buy you a beer. But it had a really nice metric for purple teaming maturity. Now it was very oversimplified, but I think for this it makes sense. So it had it in three phases. The first stage of purple teaming is just doing retests of red team engagements. So you've done a red team engagement, your blue team have done whatever they've done, and you just want to do a retest after it, side by side. Right? That was sort of the first maturity level. The second level was

then also doing ad hoc assessments, upon request almost, of your blue team, whenever they say that they want something tested. Maybe there's a new vulnerability that's come out, whatever it may be. And then the third level, which is where we sit, is you have a dedicated purple team program. And we have red and purple teaming in our function. We're just called offensive security, but we do a bit of both, and we have a dedicated purple teaming timeline for the year, right? So, we have that outlined. We also then do the retests of the red team stuff and any ad hoc requests as well. Now the reason I say that is because we

are sitting at that third bracket where we have our own program. We have a fairly sizable team. If you're sitting here and whenever I explain the tools and I'm talking about the downsides and you're one SOC analyst and you want to do some purple teaming, maybe some of these things might actually make sense for you. But that's sort of how we operate. The automation tools themselves. So, I'm going to bundle these under breach and attack simulation tools. We use one of these in the company. I am not going to say which because I have very not nice things to say about it and I don't want to get sued. Um so, I'm

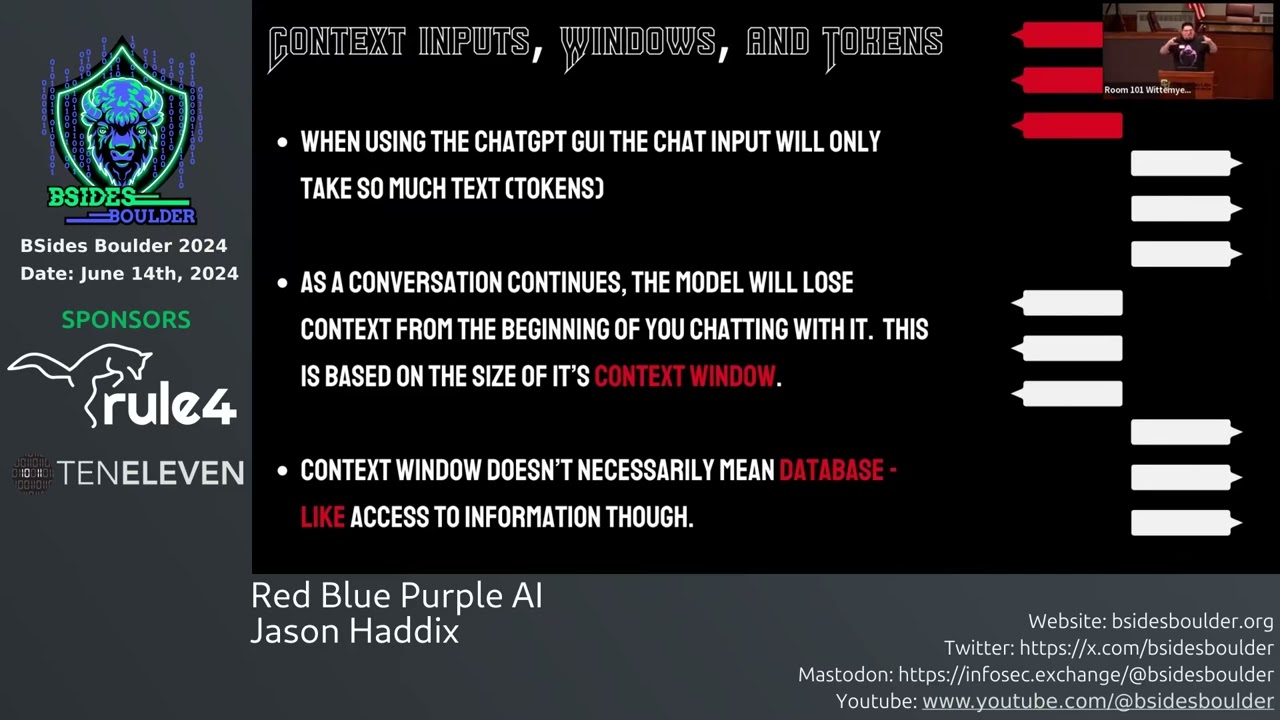

not going to tell you which, but it's up there. But I'm going to bundle them under breach and attack simulation tools. Some of them would argue that they're not, but I think that's much of a muchness. But how these tools work, or what their selling point is, is to basically automate the entire purple team engagement, almost. So you have effectively a GUI. If any of you have used a SOAR tool, very similar idea. You have a GUI where you can configure the attack that you want to do. A big selling point for these things is their threat libraries. I'll talk about that in a second. Um but you

you pick the campaign, you pick the thing that you want to do. You'll have a server somewhere that has an agent on it or something like that. You click run and then it runs through that campaign on that box and then you can check your EDR and see whether anything was detected. It's all very fun and everyone's singing and it all works perfectly well. The selling point that they have is their threat libraries. Now, their threat libraries are varied, but at its core it's going to be an APT group of some shape or form. Let's say Lazarus Group, right? They'll have a threat library with their TTPs and you can select that and go,

right, let's see, if Lazarus hacked us, would we actually find it or not? The size of their threat libraries varies drastically depending on which one you talk to. These numbers are purely fictitious, but let's say SCYTHE says that they have a threat library of 100. AttackIQ will then come in and say they have a threat library of 500. And then Cymulate will come in and say, "Oh, we have a threat library of 1 million." They are all exactly the same threat libraries. They are just measuring with a different stick. If anyone's used centimeters instead of inches to make something seem bigger, you know what I mean. Now,

anyway, so I think ultimately it's much of a muchness, but they will try and sell that quite heavily. Now just from that brief description, right: you select a threat library item, which typically maps to MITRE ATT&CK in some way, shape or form. You select a threat library item of, let's say, Lazarus Group, you hit go, it runs on the system, and then you check the EDR afterwards. Anyone, off the top of your head, any issues with that? Go on, go for it. Can do. Yes, certainly. Anything.

It's a very valid point. Yes, I'll talk about that in a second. You're ahead of me. No. Okay, don't worry. We will come back to that question because it's a couple of slides from now. Um, so how you get the access to run them is a very valid point because um actually no, I'm not going to talk about that just now. I'm going to leave there's a slide that's more related to that I think. So, these tools are um interesting to say the least. Before we move on to the next slide, I will say there is one of these that's not like the others. Um Caldera at the bottom. I'm not promoting it. I'm not saying

it's any better. It's open source. These other tools are very, very expensive. You could buy a purple team analyst in Belfast, probably two, for the same price as these other tools. Caldera's open source. So a lot of the issues that I have come down to the amount of money that you're also outlaying for the perceived benefit of it. Whereas Caldera is free. So I would maybe treat it a little bit more favorably. So as I said, these tools are there to try and make it nice and easy for someone to go in and do a purple team assessment. One of the pros that I will not argue with, I have no caveats, I

have no asterisks beside, is the easy demos. It is very, very good at that. The way that we operate whenever we're doing particularly a purple team exercise: let's say they want us to test data exfiltration. We would go away, we would think up, okay, how might we do data exfiltration in the company, and we come up with a list of test cases. We then talk to the blue team and say this is what we think. We get their input and then we go and test them and, you know, validate that what we think works the way that we think it does. And then we do a demo. So we use a tool called VECTR. Anyone

heard of VECTR? Okay, two, maybe three. Someone wasn't too sure. We use a tool called VECTR to basically put our test cases into. So we put all of our test cases into VECTR. We then run through those things manually for the most part. And then we do a demo. And the demos are really, really helpful for the blue team because our operator guidance in VECTR makes sense to us as red teamers and offensive security. Sometimes it doesn't make sense to them. Okay. The amount of times that we've been running through a demo and the blue team have went, "Oh, that's what you were doing. That's the that's

the way you're using that command." I see. So maybe partly our fault for bad documentation. I don't know. But I'm going to say it's just they they just don't know how offensive goes. Maybe. Um, so the easy demos, um, is definitely a pro to these because whenever we're running through that manually, we are copying commands into the command line with the blue team there. It's a fairly manual process. We're putting the commands in and then we're explaining them, right? Whereas these tools, once you get your campaign and you're happy with it, um, and you run it, you can put in pauses, you can put in break points in the progression of that. It's very much like code. So you can have the tool

go through and step through each of those attack things while you're on the call with the blue team talking them through it and yeah it's all pretty good. So that is the one pro that I will not have any caveats against. The others however repeatability they are certainly repeatable. That is the whole point of automation. Let's get some audience participation going again. Hands up if you're offensive security. Yeah. I was about to pick on you. Keep up. Keep up. Okay. So, keep your hands up if you have been asked to do a retest of something. Okay, keep your hands up. Keep keep it up. Nice and see you. Keep your hand up if during that retest the blue team did

not ask for any changes to the attack at all. Okay, couple of hands down. Stuart's not so sure, but really, no changes? Wow. Okay. So for those of you who put your hand down, my experience has been that as well. Usually there is some variation on it. They've went away, they've done their checking and they're like, "Right, we want to retest on data exfiltration, but the way that you did this, can we add this, or can you try this in this way? We have a detection. We're not sure if it's brittle or not. So can you tweak it a little bit?" If we're running through those demos manually,

not a problem, right? We can just easily add that in. These automation tools, if anyone's worked with them... I'm going to pick on SOAR tools again here just because I don't really have time to do it in the talk properly. So, I just want to get the digs in while I can. But if you've worked with a SOAR tool and tried to edit an automation, it's painful, right? It's awkward to do. It can be difficult. It's honestly, in my opinion, more effort than it's worth to go in and edit it for a minor change. You just go and run that on its own. So the repeatability, yes, if that's your workflow. Um, but if it's

not, then it can make repeatability more awkward because there's maybe some variations in the test cases that you're wanting to do. Quick wins, self-explanatory, right? If you are a small company, maybe you don't have a large offensive security function, maybe you don't have any, and you want to do some quick sanity checks against your tooling. Certainly, these things work well. I would recommend Caldera because it's free, but these tools do work well for those quick "right, I'm just going to throw this against our EDR and see what happens" checks, right? And it gives lots of nice metrics and graphs that you can give to C-level staff that they love to look at all day. So it

does give that. It can then give you, of course, some validation of your tools. The biggest con, which is coming back to your point, is whether those tests are actually meaningful, right? So to your point, how do you get it to run in the first place? You have to get it whitelisted. If you whitelist the binary, how do we know that that hasn't affected all the other detections? Right. Okay. These tools are also very systematic, right? They're computers. They're running through a list. Anyone have any idea what kind of security tools are really good at seeing something going through a pattern? SIEMs, EDRs, all of them really, right?

They see a pattern and they go, "Oh, that's someone doing something [ __ ] suspect. Let's block that." Right? There's no variation in it, right? Because you don't have a person at the end of the keyboard actually typing the commands, right? Uh he's got a brief flash of that, right? The tools are predictable, the people aren't, right? How many times have you mistyped a command? Attackers mistype commands. I didn't actually need hands up for that, but I appreciate the effort. So whenever these things are running through, they're running through in a very predictable pattern. You can add sleeps, you can add variation, but they are glorified if-else statements. EDRs, security tools, are very good at picking up on

that, right? It's very much like any sort of prepackaged malware. Most APT groups, albeit there are a few, and I know there's someone from our threat intelligence team who will tell me off here for putting a broad blanket on all APT groups, but most APT groups will not be running an automated script like that, right? They will do some, right? Certainly, there's going to be enumeration that they'll maybe do. They'll run some commands. But for most advanced APT groups, they're going to be hands on the keyboard typing something. And that adds variation. That adds, you know, typos, right? And time delays, right? No two attackers are going to sit there and

write the exact same command unless they're working from a cheat sheet, which again some of them do, but you still don't have that variation, right? The other reason I think it's not necessarily meaningful, right? And I blame MITRE ATT&CK for this a little bit. I do think MITRE ATT&CK is a good thing. But anyone here think that an APT group, whenever there's the MITRE ATT&CK profile of, let's say, how fuzzy bear operates, hands up who thinks they follow that TTP to the letter? Right? It's a rough outline. It's a rough guide of this is more or less how they work. If they're in a network and they usually go through like an RDP session to do something and they land on

a box and there's no RDP sessions, but there is a boatload of SSH keys, I don't think they're going to sit there and go, [snorts] "Oh, no. I won't touch those. No, it's not RDP. No, no, we'll leave it. We'll leave it, guys. Come on. We'll get the next one." Right? They're not going to do that. And that's where the human creativity comes in. And that's where a purple team operator, an actual physical person looking at it, can identify those things. Now potentially you are doing a test that has a very limited scope and maybe your blue team, your C-level staff, whoever it may be, don't want you to, you know, go really off track, but you can still

identify it and raise it and say, "Right, we did this purple team exercise. We did it the way you wanted it to do." Um, but we did notice there was a ton of SSH keys to admin accounts sitting there. Maybe we want to do something about that. And the tool isn't going to pick that up, right? it's not going to know um that that's there unless you're explicitly telling it to look for it.
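As a toy illustration of the predictability point above, and not a description of how any particular EDR works, the sketch below compares the gaps between commands for a scripted run with a fixed sleep against a hands-on-keyboard session. The timestamps, the function names, and the choice of coefficient of variation as the measure are all assumptions made for the example.

```python
from statistics import mean, pstdev


def inter_command_gaps(timestamps):
    """Return the gaps, in seconds, between consecutive command timestamps."""
    ordered = sorted(timestamps)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


def timing_variation(timestamps):
    """Coefficient of variation of the gaps; 0.0 means perfectly regular."""
    gaps = inter_command_gaps(timestamps)
    if len(gaps) < 2 or mean(gaps) == 0:
        return 0.0
    return pstdev(gaps) / mean(gaps)


# Hypothetical data: a scripted campaign with a fixed 5 second sleep...
scripted = [0, 5, 10, 15, 20, 25]
# ...and an operator who pauses, reads output, and retypes a typo.
operator = [0, 3, 41, 44, 120, 131]

print(f"scripted: {timing_variation(scripted):.2f}")
print(f"operator: {timing_variation(operator):.2f}")
```

The scripted run scores zero because every gap is identical, while the operator's gaps vary widely. Adding random sleeps to a tool would raise its score, but the order and content of the commands would still be the same list every time.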

So, as I said, I quite like automation as a thing, but it's automation where it wants to be, right? As I said before, there's no human element at the end of it. A quote, I can't remember who told me this, but I quite liked it, was that if the tool is doing everything, then who's thinking like the attacker? And I quite like it. I was going to put it up there, but it seemed too pretentious. The main thing as well, I think, from a purely selfish standpoint for these tools... we'll do a hands up again. Is the hacking part the fun part of your job for those

of you in offensive security? Yeah. Why the [ __ ] would you automate that? Right? If that's the enjoyable part of the job, why would you automate that? Right? I can understand it. Again, I will stress, if you don't have a purple team, right? If you're early in your maturity level, you know, potentially these kinds of tools are beneficial because, you know, something is better than nothing. Again, I'm jaded with these tools because the one that we use never works, right? It's a pain. I don't like it. I will tell you which one if you buy me a pint later. Not going to say it up here. I would recommend Caldera if you were

looking for a free and open source one. Um but the boring parts of my job are the report writing. They're building the testing infrastructure, right? They're collecting all our log data to build a timeline. To me, that's all the boring stuff that I don't want to do. And that's where I think automation really can benefit. And I've got some examples of automations that we built and tools that we built inside the company just to give you some ideas. Um, obviously all that code is closed source. There may be a legally distinct version of it on my GitHub. Just saying. Um, so payload testing is a is a big one. So one of our uh red team operators from London, he um

he does most of our malware development, or used to do most of our malware development. I managed to worm my way in there. So, that's been good fun. But he said the payload testing itself, getting something that can evade EDR, took him weeks, and it was mostly getting the infrastructure set up and the security tools that we might be going against installed on our testing box, running it, failing. We try again, and eventually most EDRs will do a sort of shadow quarantine, where it hasn't overtly quarantined this machine but the machine's sort of now higher risk. So it now means our detections are maybe stronger than what they would be on the server that we're

maybe going to attack. So whenever that happens we have to blow it away and spin it all up again. So one of the tools that we built, using a lot of infrastructure as code, is an automated payload tester. And the idea is that it is a web GUI. You go in, you upload your payload, your malware, to it, whatever it may be. And then you select the operating system, the thing that you want it to go against and test. You select all the settings that you want. You hit go. It uses Terraform, Ansible, Python, a little bit of Go just because I got sick of looking at Python by the end, to basically spin up

these servers in our infrastructure, configure them with the right security policies and settings that we want, add any users that we want onto it, anything like that, and then it detonates the payload. It then ties into our security tools, our SIEMs, our testing one, and looks for any alerts against those boxes and then pings our chat to basically say, "Hey, you got caught." I did have the message saying "you got got", but apparently no one in our team got the reference, so I had to remove that. And so that's got the time down for that. He was using it recently for some testing, and that's got the time down to about a day

for his testing because he can just hit go, get a cup of coffee, go work on something else, and then come back and it's done. The lab tear down and setup, exactly the same, right? Infrastructure as code is everywhere now. It's fairly easy. The payload tester is a bit of a bear. I started that project thinking, ah, this will be easy. A few lines of Python, it'll be easy. It got bigger really quick. But even simple things like lab setup and tear down: infrastructure as code, Terraform, very easy to do and get set up to help remove that repetitive spin up and tear down. Evidence collection was another fun one. Um I don't know if you've ever

been here. I'm going to look specifically at SH for this one. um where you're going through you're going through an engagement and you've been hitting your commands in something's going not right for you and you've been just trying to get something to work and then whenever you look back at your timeline document that's keeping note of everything you ran on the box and you're like did I put all those commands in did I miss one have I what time did I run that at right been there yeah okay cool just check um I thought maybe I was an idiot it's possible um Right. So, keeping track of the evidence that we run on an engagement is is an important thing.

It's important for our blue team to be able to see, right, this is everything we did in chronological order. And trying to do that manually is a bit of a bear. So, we made an evidence collection system. We use VECTR to keep track of all of this stuff at its core. It has an API. And so we made effectively a log parser, I guess. We can dump out all of our logs from our C2s and chuck them into this tool. It parses them, makes them all pretty for us in a VECTR format, and puts it into VECTR for us. So, it's built. We can also do it with SSH command history

as well. So, if I'm on a command line typing things, we can dump out my history at the end of the OP, I can chuck it in, and again, it adds it in there. Okay. takes a massive headache away from me trying to remember to keep note of everything I've done or if I clear history to try and make life difficult for the for the blue team but I forget to make note of what I ran first it makes my life difficult trying to figure out what I did. Um so that's another big uh pain point that you know we can take away uh from the you know the the brain space of your red team

operators, right? Let them just focus on the hacking side. And the reporting and tracking findings. Okay, same idea, right? We have all this stuff in VECTR. It has an API. We can then pull that into, like, Jira. I can't think of any other ticketing systems, so we'll just stick with Jira. But you know, you can put it in your ticketing system of choice to track all your vulnerabilities. And you can automate that, right? And even the reporting: VECTR in particular, I'm not selling VECTR by the way, it's just what I know, has some nice graphs that it can import. Again, you can stick that to your C-level staff, keep them

happy. Right? So, those are just some examples of automation where I think the the pain points are. Um, as I said, reporting is one of the things that I hate most. Um, and like even even simple things with your reporting of like just having templated documents. Uh, if any of you work for a consultancy, I'm sure you probably have um templated documents anyway, but for an internal security team, it's not always something that that they do. Um, and it can make life a lot easier of then just drag and drop the findings, slap a big critical on it, and then uh go home. Um, it's best to do that on a Friday. Really, uh, spices up

the blue team's day. So obviously I said that, you know, the main thing is to take away the pain points, and the real thing is to make sure that we automate the right parts, the parts that we want to be repeatable, scalable, all that good stuff, and don't remove the bits that we like about the job. As I said, right, the fun part of the job is actually hacking. And I think cyber security, offensive security in particular, you kind of need that little bit of creativity, that little bit of gut instinct. And then also the context is a big one, right? Um, for anyone who is a um

red teamer for your internal... we'll do a hands up. Anyone an internal red teamer? So, you only work for one company. Oh, yeah. Cool. Right. How many times have you been on an op and your knowledge of the company has helped you do something that someone external wouldn't have been able to do? Right? Is the [ __ ] automation tool going to know that? No. Right. It's not going to know that. It's going to run through a very predefined list of things. Another thing that I want to mention just before we start to wrap up, because I'm almost bang on time, is I have no evidence for this at all.

However, if you have an EDR product, let's say strike crowd, right? You make a new one, right? Bear with me. And you want this tool to look good and you know that there's automated tools out there like SCYTHE, like AttackIQ, like Cymulate that run these attacks in a very predictable fashion. What would you do as the EDR team to try and give your EDR the best chance against these things? Go on. Any ideas? Run it against the tool, right? They're going to fingerprint it, aren't they? They're going to look and see how does this tool operate and then use that as a benchmark to basically detect those things even when potentially they can't.

Now I say I have no evidence of that. I have a little bit of evidence and a gut instinct. We have seen our EDR pick up the tool doing things that, whenever we do it manually, it doesn't pick up. Now there could be other reasons for that. My gut's telling me to go with the scummy way. But there could be other reasons for it, but I think there is a fair chance that they are fingerprinting it, right? They've got the same access that you have to these things. They've got the money to buy access to these tools. And it makes them look nice and shiny of like, oh, look, we would catch Lazarus

Group. It's like, you'd only catch them if it was run through SCYTHE. So I wanted to end on that. And again, just to get the really pretentious quote out of the way, because I quite like it: if the entire attack chain is automated, then there is nobody thinking like the attacker. And with that, any questions?
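The shell-history half of the evidence collection idea described earlier in the talk can be sketched roughly as below. This is not the speaker's tool, which is closed source. It assumes the operator's bash session had `HISTTIMEFORMAT` set, so the history file carries a `#<epoch seconds>` line before each command. The function name and the record shape are hypothetical, and the sketch stops at producing timeline records rather than guessing at the VECTR API.

```python
from datetime import datetime, timezone


def parse_bash_history(text):
    """Turn timestamped bash history into chronologically sorted records.

    Assumes HISTTIMEFORMAT was set during the session, so bash wrote a
    '#<epoch seconds>' line before each command in the history file.
    """
    records = []
    stamp = None
    for line in text.splitlines():
        if line.startswith("#") and line[1:].isdigit():
            stamp = int(line[1:])
        elif line.strip() and stamp is not None:
            when = datetime.fromtimestamp(stamp, tz=timezone.utc)
            records.append(
                {"epoch": stamp, "time": when.isoformat(), "command": line}
            )
            stamp = None  # lines without their own timestamp are skipped
    return sorted(records, key=lambda record: record["epoch"])


# Hypothetical history dump, deliberately out of order.
sample = "#1700000100\nwhoami\n#1700000000\nid\n"
for record in parse_bash_history(sample):
    print(record["time"], record["command"])
```

Keeping the raw epoch alongside the formatted time makes it straightforward to merge these records with C2 log entries before pushing the combined timeline into whatever tracking tool is in use.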

This image was really surreal to me because I googled it because I didn't want to generate it through AI, and an AI generated image popped up in Google. So someone had already generated it, so I don't know if that makes it better, but it seemed very weird to go to Google and be like, "All right, I guess I'll use it." [snorts] Any questions? Limericks, concerns? Go ahead.

>> No, sorry. So the question there was: has our red team, which is me on half the days of the week, had any use for the automated pre-canned red team tools? And no, not really. Again, there might be use cases for them for some companies, but we've been, I'm not saying this to brag, trust me, I am not a company man by any stretch, but we've been doing security for quite a while and have quite a big and mature security team and posture, even from the defensive side. So anything pre-canned typically gets caught, like just straight out of the box, by

something. I think our IAM people, our identity and access management people, even caught us one time, which was interesting. But yeah, so no.

Uh, no, well, they're not meant to know. Yeah, we do try and keep it fairly secret. So we do, and we are okay at it, but because of the size of the company and the regulations around it, we have to have a lot of people read in that it's happening. And so again, no evidence for this, but the cynical part of me is that there's maybe some SOC manager somewhere going, "Do you want to maybe check what's going on in this server?" Again, no evidence, but maybe. But no, we do do them typically completely blind.

Yeah.

>> As in, how do we feed in MITRE ATT&CK or

network.

>> Uh, the honest answer is we cheat. So the question there was: how do we feed back into our blue team the different paths we took under the guise of, well, this is what the APT group would do? Does that sum that up? Yeah. Yeah. So we sort of don't, to a degree. The way that we outline them, typically, if you look directly behind you there's a very hairy man. Yeah. He is part of our threat intel team, and they would engage with us at the start. Whenever we're planning for a red team operation they would say, okay, here's the

APT groups that we think are potentially going to attack companies like us. So they give us that intel. They're also feeding the same intel, or have fed the same intel, into the blue team. They obviously don't tell the blue team, "Hey, we told the red team to do this, by the way." But what we do is we then basically outline in our statement of work a narrative, a rough narrative, of this is the hacking group, these are the rough steps they take. But we keep it specifically vague so that we're not limited whenever we get somewhere like the example I gave, right? Maybe there is an attacking group

that only sticks to Ubuntu servers and we find a way to get into a Red Hat box, right? We'll just go into the Red Hat box anyway. So we try and keep it specifically vague, but that's also been agreed with the blue team, right? So with their stakeholders, we didn't just decide to do that for the giggles, to make our lives easier. They seen it as valuable because it meant that they would get potentially more findings and more things identified. That works well with us because we have quite a decent relationship with our blue team. I know in some companies it can be quite adversarial, of us versus them. So in

those cases maybe I could see that being a bit contentious. Um but we have a decent relationship and so they see it as yeah if you find these things it means an attacker won't or we can fix it before they can. Um so yeah short answer we don't effectively. All good. Cool.

>> [applause]