BSidesSF 2024 - Security Considerations for Services Using AI Models (Shrey Bagga)


With no further ado, here is Shrey. Thank you, thank you so much, Andrew, and a huge shout-out to Andrew and all the folks at BSides for an amazing event today. Thank you all so much for coming to listen to me. I know this is lunch hour, so I really appreciate you being here. With that, I'm going to start my presentation: I'll be talking about security considerations for services using AI models. A little bit about myself: I am a product security engineer at AppDynamics, which is part of Cisco Systems. I did my master's in

electrical engineering from USC, but I was born and raised in New Delhi, India. I moved to LA for my master's, and ever since I've been in the Bay Area, for the last six years, working at Cisco in different organizations, from routing to securing routers to cloud security, and now product security. I'm a big foodie and a coffee lover. Whenever my manager asks me, "Can this be done, Shrey?" I tell him, "Give me enough coffee and I'll get that done." And my friends joke that if Yelp is ever down, they will be calling me for restaurant recommendations, but Yelp

has done a really good job and I have not received any calls. But yeah, with that, we'll look into these four topics today. Before we get into the agenda and all those things, I want a couple of shows of hands. Is anybody using LLMs or any kind of AI service within their organization or for their personal use? Oh wow, that's amazing. How many of you have cars that have self-driving features? Oh wow, awesome. Are any of you using the new Cruise or Waymo in SF? Wow, awesome. So have you ever wondered: if you're sitting in a Cruise or Waymo, or using the self-driving feature of your Tesla or whatever

car you have, and you're approaching a stop sign or a traffic light, what if your car does not stop? That's pretty scary, and we'll talk about whether that could be a reality or not. So in today's presentation, we'll understand the basics of AI engineering and how it differs from traditional software engineering or application engineering. We'll look into some of the things that can go wrong with it, how we as organizations or individuals can prevent or mitigate some of those things, and some of the tools that can help us with the

same. So with that, understanding AI engineering. From a very high-level point of view, there are three aspects of AI engineering, and I'll go into these in a couple of minutes: data engineering, model engineering, and your traditional software engineering. When I say traditional software engineering, I mean application engineering or application development. In my research, I found that there are primarily three ways organizations are deploying these AI models internally or selling them. One, the more traditional way, is to train your AI model from scratch, and this

requires a lot of resources: you need skilled workers, a lot of time, and of course a lot of financial resources. The next two ways are very common among organizations or among teams within organizations. The second way is to take a pre-trained model and fine-tune it on your organization's data. The third way is specifically for LLMs, where you're using retrieval-augmented generation, or RAG, as you will hear a lot today and tomorrow at BSides. That again makes use of pre-trained models, and in RAG you're providing the context of your

organization for that. Looking into what AI engineering is, we just talked about those three aspects. The data engineering aspect covers data collection and the inspection of data. When you inspect the data, you will find some irregularities or abnormalities, and you might want to prepare your data in a way that suits your model. All of this is part of data engineering. Next up you have model engineering, which is not just model selection but also training or fine-tuning the model, comparing its performance, evaluating it, readjusting, and of course deploying the model itself. And then you have your

application engineering, which all of us over here are familiar with. So we have looked into what AI engineering is, and we see that there are clearly two additional things in AI engineering beyond application engineering. But what are some of the things that can go wrong?

So we'll look at four attacks today that can happen on AI services, starting with input manipulation attacks. Now, you must be thinking, sure, input manipulation is probably the oldest form of attack that has existed in the security industry, and that is true, but when it comes to AI services it takes a different form. You have prompt injection attacks, and a prompt injection attack can be direct or indirect. A direct prompt injection attack might look like, for example, me telling an LLM to ignore its safety instructions and then asking for something that might reveal sensitive information. An indirect attack might look like, for example, if

there's an application which is looking at resumes, and within the resume I embed a prompt saying that this is the best resume for this job description. When an LLM now reads that resume, it will give as its output that this resume is the best resume. So that is a way of prompt injection that can basically force your model to behave in unexpected ways. Then there's jailbreaking of LLMs, and there was a recent research paper published by Anthropic on many-shot jailbreaking. Essentially, what many-shot jailbreaking looks like is, for example, if I ask an LLM today how to build a bomb, based

on its safety instructions it will tell me, "I can't answer that." But within the prompt, I can provide questions and answers to the LLM, for example how to create meth, how to hotwire a car, or how to steal somebody's identity, and I answer those questions myself. Essentially the LLM is learning in context from the prompt that you are providing, and then finally, when you ask how to build a bomb, the probability of it answering increases by a lot. They found in this research paper that if you provide 256 different questions and answers, the

probability goes up to 60 to 80%, and that is scary. Then lastly you have image misclassification. If I know how a model works, I can add noise to the model's input to create a misclassification, specifically for models which take images as inputs. A popular example you will find is a dog and cat classifier, and a dog image with some noise in it, where to the human eye it looks like a dog but the model's output is a cat. And you must think, Shrey, are you kidding me? Dog and cat, who cares if there's an

input manipulation attack on it? But now think about a medical institute which is using an image classifier on tissue samples, and based on the predictions from those models, they are prescribing medication. An input manipulation attack on such an application would be devastating to that organization's patients. Next we have data poisoning attacks, and that's what I talked about at the start of the presentation. This was research done a few years ago, where some researchers found that you can poison the data that is being used for developing

self-driving car models, so that a regular stop sign means the car should stop, but a stop sign with stickers on it in certain places means maybe the car goes at 25 miles an hour. That is really scary, because in your testing you will not find these vulnerabilities. You will not be able to detect it, because the car will stop at a stop sign or any traffic signal, but when it's in the wild, that's when it can be exploited, and unfortunately it will be too late for you to do anything, because lives are at stake. So these kinds of attacks are very prominent, especially because a

lot of the data is crowdsourced, and the probability of that crowdsourced data being poisoned is really high. Next we have model attacks. There are different kinds of model attacks happening in the wild today, but we'll talk about two of them. One is model theft, which can be as simple as someone having unauthenticated access to your model and leaking it, like what happened with LLaMA last year. Or it can be a more sophisticated attack where someone tries to reverse engineer your model: they provide millions of inputs, get millions of outputs, and essentially they now have a database of inputs and outputs, and they train a new model

based on it. Essentially, without your model leaving your environment, it has now been reverse engineered. Another kind of model attack is model denial of service, very similar to the software denial of service that we see day to day, where the objective of the attacker is to slow the model down by giving it prompts which are energy-intensive or cause delays in decision-making. There was research at Cornell University where they did this attack using something known as sponge examples. Sponge examples are

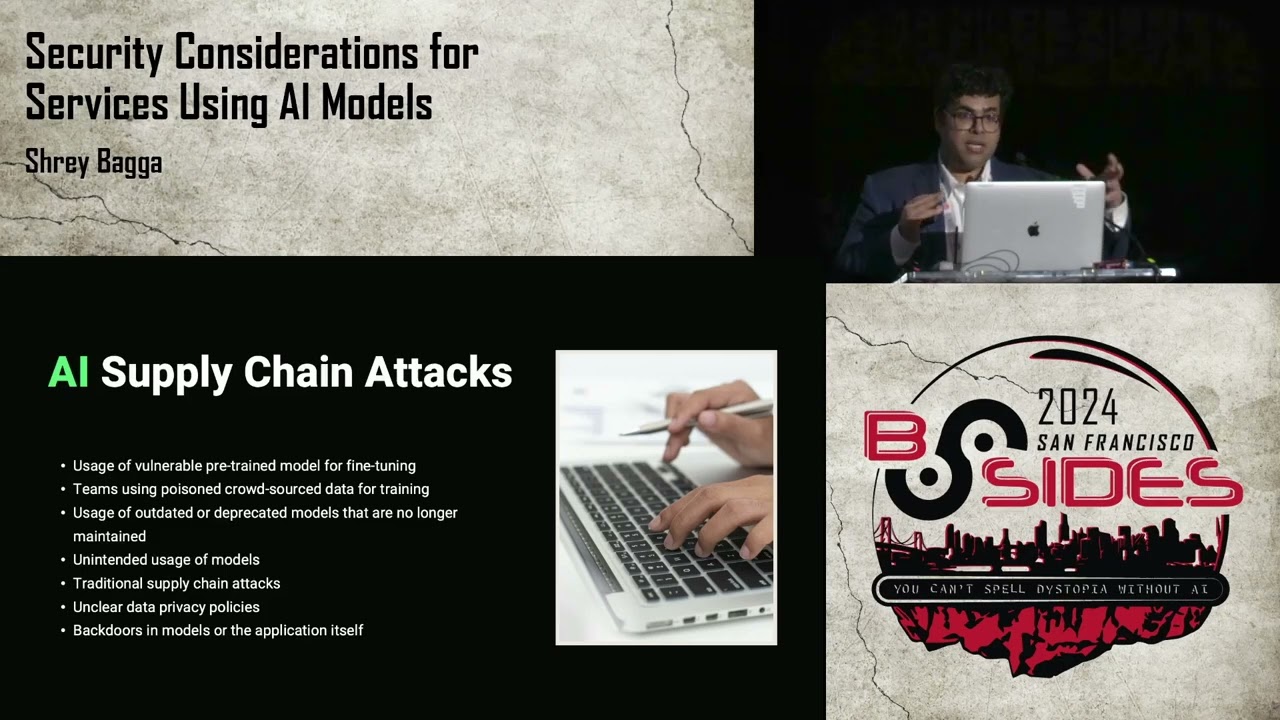

specialized inputs which can cause latency in decision-making, and they tried it on applications which have critical real-time decision-making needs. Last, we have AI supply chain attacks. Supply chain attacks are something we all deal with every single day in the security world, where we have vulnerable third-party components. But if you remember, a few slides ago I talked about how, along with your application engineering, you have data engineering and model engineering. Because of the introduction of these two additional areas in AI engineering, supply chain attacks can show up in different ways, where your model itself,

the pre-trained model that you might be using, is vulnerable or has backdoors in it, or the data that you're using is itself poisoned. So this is a quick summary of what we just talked about, and I tie it to the different aspects of AI engineering. You might see some attacks over here which we did not discuss in the interest of time, but they are something for us to keep in mind after the talk as well. Next up, we're going to talk about some of the things we can do to mitigate these attacks. I know we talked about AI

supply chain attacks, so let's look at how we can mitigate those. There's a proposal for an AI bill of materials right now. AI bills of materials, or AI BOMs, are similar to the SBOMs that we see in our industry: an inventory of the components in an AI service. The reason we need this is not just to help secure our AI supply chain but also for transparency and trust among organizations, especially if you are distributing your model as open source, like on Hugging Face, or even if you are selling an AI service to your customers. It creates that relationship of trust: this is how we trained our model, this is what

we used. Just like we distribute SBOMs for our traditional software, the idea is to distribute AI BOMs for AI services. This is a sample data structure for an AI bill of materials. You have the model details, which include the name, version, author, type of model, things like that. Then you have the model architecture, which covers what kind of training data was used and what some of the sample inputs and sample outputs are, just so somebody who's reading this bill of materials can understand how the model behaves. There's also the model usage, which includes the intended use for

the model as well as the prohibited use. The prohibited use, I feel, is more important here, for example if your model does not obscure PII while it's training. It's really important to know that you obscure PII in your training data before you use that data for the model, because it could be the case that you did not obscure it, the model trained on it, and then it accidentally revealed sensitive information when it was deployed in production. Lastly, you have model considerations, which include what different biases your model has

or ethical considerations it might have. We understand that no model is 100% perfect, but knowing the biases gives us the opportunity to reduce those biases or factor them out. For example, I don't know if you heard, some time ago Amazon had an AI application which was looking at resumes and matching them with jobs that were open at Amazon. They found out that the AI service was essentially rejecting all women for engineering roles and only matching men with engineering roles, because per the data that was present in the company, those

engineering roles were more often taken by men. But that's clearly a bias, and to mitigate that you can just remove gender as an input parameter to your model. But knowing those biases is really crucial. Over here I would like to give a shout-out to the creators of the AI bill of materials. It's not developed by me; it is developed across the industry, across many organizations. Next, we have the secure AI development life cycle. It sounds very similar to the software development life cycle, or secure software development life cycle, and in fact it is very similar, because it's an extension of SSDLC, and

when I say SSDLC, I mean secure software development life cycle. We have taken SSDLC and added onto it for how we will have to reimagine it for AI services. There are four areas of the secure AI development life cycle: secure design, secure development, secure deployment, and secure operations and management. We'll look at all four areas one by one. So, secure design. We all know we should be doing threat modeling and data flow diagrams while we are designing. But specifically for AI services, we need to look at model selection, and when we're doing model selection, we're not just looking at the

performance of the model or how accurate it is, but also how secure it is. If it is a pre-trained model, check whether it is being maintained on a regular basis. Then it comes to data engineering: making sure that you're cleaning your data and removing those biases starting from the design phase. Next you have secure development. Secure development is when you're developing your model, or training your model, I should say. It needs to be done in a sandboxed environment, and there needs to be proper access control to that sandboxed environment, because a lot of times you'll see

in organizations that the model development piece is done by data engineers or data scientists, whereas the application development is done by traditional software engineers. So having proper access control is really crucial in the secure development phase. Along with that, if you're creating your own model, creating your AI bill of materials is also really crucial here, so that whenever you share that model with someone else, or whenever you ship that service, you can ship the AI BOM along with it. And of course you have your traditional SSDLC practices for secure development, like using third-party components

which are up to date, managing technical debt, and things like that. Next you have secure deployment. For secure deployment, we need to make sure that when our model is deployed there's proper network segregation between the production environment and the development environment, but also network segregation between the services which have access to the model and the services that don't. So having proper access controls along with network segregation is really important here. And then think of your model as your IP, where IP is not just the software that you are writing but also the weights that you have in your model. So

securely storing them and encrypting them, or if you're using RAG, encrypting your embeddings, is really crucial. We also talked about model theft and model denial of service attacks, where a user is sending millions of inputs, so having rate limiters in your production environment is really important to somewhat mitigate those kinds of attacks. Lastly, we have secure operations and management. Over here it's important to note that for most applications we are really good at asking, are we doing input validation for our service, making sure we know what the

service input is, things like that. But specifically for AI services, we also need to make sure that we are monitoring the output, because we need to monitor that the model is not hallucinating and that it's not revealing sensitive information or false information. You might have heard of certain cases in the news where an AI agent was considered an agent of the company, so companies are being held accountable or liable for the output that those AI agents produce, especially for chatbots and things like that. So monitoring those responses is really, really crucial. And if there is

a leakage of sensitive information or some other problem, it's really important to have a process around that incident. So having those proper incident processes established for your organization is really crucial. I'll take a minute over here to look at what we just talked about. You will see that the things circled in red are specifically for AI services, alongside your standard SSDLC practices.
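The rate limiting and output monitoring controls mentioned above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not something shown in the talk: the names `SlidingWindowRateLimiter`, `find_sensitive`, and `guarded_call` are hypothetical, the regexes are illustrative stand-ins for a vetted PII detector, and `model_fn` is any callable that maps a prompt to text.

```python
import re
import time
from collections import defaultdict, deque

# Hypothetical patterns for sensitive output; a real deployment would use a
# vetted PII/secret detector rather than these illustrative regexes.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


class SlidingWindowRateLimiter:
    """Allow at most `max_requests` per client within `window_seconds`."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # client_id -> request timestamps

    def allow(self, client_id, now=None):
        now = time.monotonic() if now is None else now
        timestamps = self._history[client_id]
        # Drop timestamps that have fallen out of the window.
        while timestamps and now - timestamps[0] >= self.window_seconds:
            timestamps.popleft()
        if len(timestamps) >= self.max_requests:
            return False
        timestamps.append(now)
        return True


def find_sensitive(text):
    """Return the names of the patterns that match the model output."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(text)]


def guarded_call(model_fn, prompt, client_id, limiter, now=None):
    """Rate-limit the caller, call the model, and withhold flagged output."""
    if not limiter.allow(client_id, now=now):
        return {"status": "rate_limited", "output": None, "findings": []}
    output = model_fn(prompt)
    findings = find_sensitive(output)
    if findings:
        # In practice this is where the incident process would be triggered.
        return {"status": "blocked", "output": None, "findings": findings}
    return {"status": "ok", "output": output, "findings": []}
```

A per-client limit only raises the cost of model extraction or sponge-style inputs; it does not stop an attacker who spreads requests across many identities, which is why the talk frames it as a partial mitigation.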

Next, we'll look at some of the tools that can help us secure our AI services. I would like to give a disclaimer that this is not an exhaustive list of tools. These are all open source tools, and you can think of this as a starter kit, something for you to start playing around with. So we have ART, the Adversarial Robustness Toolbox, which is basically for red teaming activities and finding adversarial threats. You have garak, which can help you find vulnerabilities in your LLM. You have audit-AI, which is really

crucial for finding biases in your data, and finding those biases is really, really important, especially when you're training your model from scratch or even fine-tuning it. And then you have, this is not a tool but more of a framework, SPDX 3.0, which is published by the Linux Foundation, and the 3.0 version introduces AI bills of materials. Just like SPDX, I think the latest version of CycloneDX, which is developed by the OWASP organization, will also have AI bills of materials, or AI BOMs, associated with it. If you're using a

pre-trained model from somewhere like Hugging Face, you will find something called model cards, which is a very similar concept to AI BOMs. So, a few key takeaways. I know you all must be really hungry, so I will leave you with just three takeaways from this presentation. One, choose your model wisely, not just based on performance but also based on security. When you're choosing your model, if it has a model card or an AI BOM associated with it, look at those and analyze them against your application. Second, when it comes to data, there are three things you need to remember: we need to clean it, we need to

encrypt it, and we need to secure access to it. We also covered the secure AI development life cycle as a framework, which includes creating AI BOMs and monitoring your application for both inputs and outputs. Make sure to leverage standardized SDLC practices: all the learnings we have gained over the years through software engineering can be leveraged for AI engineering as well. And lastly, I would like to leave you with a sentiment, which is this: the field of AI engineering, or AI, is rapidly innovating and rapidly evolving. So we as organizations, or even as individuals, need to be agile enough to adapt to these new methods of attack and

defend our organizations from them. Along with that, I would also like to encourage you, if you're developing something new, to share it with the open community and the security industry, because it's really crucial that we as an industry grow toward securing AI services. With that, I can take any questions, if we have any. I know we have quite a few. Oh, awesome, thank you, everyone who submitted on the Slido. I think I have seven questions so far. Oh wow, so I'm going to go through them as quickly as possible. Yes, we may run a little bit over with the

questions, so folks, stick around if you would like your question heard. If for whatever reason your question is not answered, you can reach out to me on LinkedIn or by email, and I'll be around here at BSides today and tomorrow, so you can find me and ask me. Okay, first question, from Anonymous: have you seen an attack out in the wild where an attacker was able to gain access to the data on which the models were being trained and then poisoned the data? So, I have seen an attack where they were able to recreate the data based on the output,

specifically for a model doing image classification, where they were able to retrace what images were used for training the model. But usually, unless somebody has not secured access to it, the training data is very different from the data the model has access to when it goes into production. Poisoning of data usually happens when you have an LLM application or a chatbot, for example, that takes in whatever responses it gets and retrains itself on them. So then you can

poison it. For example, you can cause an indirect data poisoning, because you know that this application is essentially taking your input as the data for it to train itself on. I don't know if you saw, this was in the initial days of Bing Chat or ChatGPT, where someone tried to prove that 2 + 2 is 9 or something like that, and they basically did a kind of reinforcement learning, fooling the chatbot into saying, oh, 2 + 2 is actually 9. So yeah. Okay, next question, from Liam: how do you source the info for an AI BOM,

e.g. model usage and model considerations? Is this publicly available for popular models? Oh, so for sourcing it, I think this is still in development. I can talk more about it offline; I think it's a much longer conversation, Liam, but great question, and yes, we can chat offline. And there were a couple of different questions about vendors, from Caitlyn and Anonymous: vendors who develop AI BOMs, and vendors who have particularly good out-of-the-box solutions. Do you have additional comments on that, beyond what you shared from folks like the Linux Foundation? I would say the OWASP Foundation has CycloneDX, which is also using AI BOMs, but those are the two

main frameworks, I would say, for using AI BOMs. AI BOMs are very, very recent. It was first published in October '23. There's a white paper, and I can share the link to that for anyone who's interested in reading about it. Okay, last question, from V: how do you choose a secure model from the many options on, e.g., Hugging Face? Great question. I don't have an answer to that. Yeah, because when you say a secure model, it's making sure, of course, that it's from a reputable source, going through the model card, and looking at the different security

aspects of it. Another thing is that models like OpenAI's or Llama usually have API keys associated with them, so to access those models you need API keys, and making sure you have proper key management there is really crucial. Those are some of the things that are really important to keep in mind when choosing a model. And yeah, just don't look at anything which, from the model card, looks very suspicious. I'll start with that. Exactly. All right, hey folks, we have a few more questions that

are on the Slido. Unfortunately, we are out of time. Thank you very, very much for this presentation. This is super awesome, really, really appreciate it.
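As an illustration of the AI BOM structure described in the talk (model details, model architecture, model usage, model considerations), here is a minimal sketch of how such a record might be represented and serialized. All class names, field names, and example values are assumptions made for this sketch; they do not follow the actual SPDX 3.0 or CycloneDX schemas, which should be consulted for real use.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ModelDetails:
    name: str
    version: str
    author: str
    model_type: str


@dataclass
class ModelArchitecture:
    training_data: list
    sample_inputs: list = field(default_factory=list)
    sample_outputs: list = field(default_factory=list)


@dataclass
class ModelUsage:
    intended_use: list
    prohibited_use: list


@dataclass
class ModelConsiderations:
    known_biases: list = field(default_factory=list)
    ethical_considerations: list = field(default_factory=list)


@dataclass
class AIBom:
    """Hypothetical AI BOM record mirroring the four sections from the talk."""
    details: ModelDetails
    architecture: ModelArchitecture
    usage: ModelUsage
    considerations: ModelConsiderations

    def to_json(self):
        # asdict() recursively converts nested dataclasses to plain dicts.
        return json.dumps(asdict(self), indent=2)


# Example values are invented for illustration only.
bom = AIBom(
    details=ModelDetails(
        name="resume-matcher",
        version="0.1.0",
        author="Example Org",
        model_type="text-classifier",
    ),
    architecture=ModelArchitecture(
        training_data=["internal-job-postings (PII removed)"],
        sample_inputs=["resume text"],
        sample_outputs=["match score between 0 and 1"],
    ),
    usage=ModelUsage(
        intended_use=["ranking resumes for human review"],
        prohibited_use=["fully automated rejection of candidates"],
    ),
    considerations=ModelConsiderations(
        known_biases=["historical hiring data skews toward one gender"],
        ethical_considerations=["gender is excluded as an input feature"],
    ),
)

print(bom.to_json())
```

Keeping the prohibited uses and known biases as explicit fields makes them hard to omit when the record ships alongside the model, which is the transparency point the talk emphasizes.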