BSidesWLG 2017 - Josh Brodie - Ethics in penetration testing

All right, I am Josh Brodie. I am a security consultant from Lateral Security, and I will be talking about ethics in penetration testing and offensive engagements in general. This talk was inspired by a number of concerning Twitter threads that I've seen and general commentary on Reddit from people purporting to be offensive security consultants, asking questions such as: is it appropriate for me to take an NSA binary that was leaked to me by the Shadow Brokers and just throw that at a client network? Can I do that? Or things like posting screenshots that indicate vulnerabilities and indicate who the client is. As such, it's going to feature as a rough guide to not being

a cowboy. Fortunately for me, there's been a recent discussion on Twitter focusing on ethics in red teaming, which has let me really refine the material that I've got. And I've structured my material around some of the tweets that came out during that. So this isn't going to be an exhaustive list of things you need to consider, but hopefully it should feature as a good starting point for these discussions. As I say, I'm from Lateral Security, and if I put this in my slide deck (this is wiggling a whole bunch), I can expense my breakfast to them. So I'm gonna be focusing on a subset of people you have obligations to, namely your employer, the client organization,

and the profession collectively. A professional also has obligations to peers, people who report to them, the cultural health of their company, society at large. And any one of these could be their own presentation, but aren't going to receive much focus here. So there are a number of codes of professional conduct which have been defined by organizations such as CREST. I'm not going to be referring to any specific code of conduct, but instead kind of reporting back on what my experience has been with the expectations of these stakeholders in the real world. So it's the nature of ethical dilemmas that they can be hard to map to standards such as these, which will commonly provide a

prescriptive list of rules that you need to follow. But in the real world, it's more difficult to navigate ethical spaces, which could have situations where there are multiple competing ethical obligations that you've got to weigh up against one another.

Examples of this could be when you need to balance commercial pressure on either you or the client organization against doing the most thorough job you can. With that said, I did refer to the CREST Code of Conduct in detail when pulling this presentation together, and to a presentation, Ethical Dilemmas and Dimensions in Penetration Testing, by Shamal Faily, John McAlaney, and Claudia Iacob. There's a reference to that which will be available when the slides come out. So things like "use good judgment when conducting offensive testing" or "do no harm" are not sufficient guidelines for people to really act on in the real world. They're good high-level statements of intent around the moral posture of

the profession. But as in computer systems development, we probably all know there is a world of difference between the design intent and what the real world implementation looks like. And how well you manage that translation comes down to your experience. So I'd say the ethical sense needs to be cultivated and developed. It's not something we can rely on people to just pick up along the way as they go without being directed by leaders. Any time an ethical decision is escalated to a leader, they should be sitting down with staff, unpacking it, and weighing up what decision is being made and why. So despite the limitations I've noted, these codes of ethics do serve as good foundations for a moral framework, but

your employer needs to be able to rely on your moral autonomy. And so you've got to develop a sense for how to navigate ethical spaces yourself, and it's just part of being, you know, getting suitably qualified for the job. So before we move into the ideas about how, we should probably agree that it's worth being ethical to begin with. I'm not gonna use any academic definitions or dive into complicated philosophical theories about this, but I'll just briefly explore some pragmatic reasons to conduct yourself ethically, so we're on the same page about it before we jump into how. Obviously, you chose to attend a presentation about ethics, so that's a good sign, unless you're looking for

a quiet place to eat your lunch. But most people wanna do the right thing most of the time, and it's a good place to start from. If you've already got a fairly good foundation of good values, it should be enough to say that malpractice in this space can cause harm and loss to your client and their employees, to your employer and your colleagues, and to users of the systems you're evaluating, who are your client's customers. If you subscribe to the term ethical hacker, it's arguably half your job. And if you're a certified ethical hacker, you shift some of that away and focus on certifications as well, I suppose. In this industry, technical skills

aren't really worth much unless you've also got a reputation for being trustworthy. So this reputation is your most valuable asset. Your employment and the continued success of your business is conditional on client trust. I've got that slide in there twice. Should I say it again? You guys got it, okay. So I'll jump into my first ethical dilemma, which was presented by a security researcher, XOZ. This is a good example because it brings up a number of competing ethical considerations you need to weigh. As a spoiler, I am gonna be arguing against targeting personal Gmail addresses, but I will review some of the pros and cons. It'll be pretty clear what my perspective is in doing that. As a starting point

on figuring out how we address this, we should think about what your job is in this situation. The question does specify a red team engagement, which is typically a wider-scope engagement giving a more realistic impression of actual attacker capabilities. As such, it's more likely to involve the bypass of physical controls, social engineering, and the execution of an end-to-end attack: breaching a perimeter, gaining an internal foothold, lateral movement, and then where you go from there depends on the objective that has been set.

So with this in mind, what a good job looks like might be most accurately reproducing attacker behavior to most accurately highlight real exposure. And this is the argument a lot of people fell back on when asserting that everything is in scope on a red team, so yeah, you better pop those personal mails. I think this misses some key context, such as the fact that only things that are formally in your scope are in scope on a red team. So what am I arguing your job is instead? What would I say you do here? An offensive security professional is someone who uses their expertise in attacking information systems in the service of the business objectives of their clients, while

observing the ethical boundaries expected of their profession. This doesn't sound as fun as pop boxes, and it's not a new or comprehensive definition, but I think it's key to note that simulating attackers without targeting that simulation to answer real business risk questions that your client has, or factoring in the wider business context, has little value to them. I'm not gonna throw out the motivation to simulate the real behavior of the threats that the organization faces, but this needs to be factored in alongside other considerations. The area of the world in which you attack systems with no sensitivity to the business context is already occupied by actual attackers. So a lot of responses focused on this

idea that using every tool at your disposal to achieve your objective of owning boxes is your goal. I'd say this is partially correct. You do need to use every tool at your disposal to achieve your objective. But that objective isn't owning boxes. It's more nuanced. It's broader than that. It includes things like: influence meaningful security gains in the organization; don't introduce further business risk for your client; don't embarrass or expose your employer. And the difference in objectives between you and a real attacker changes which of the tools in the arsenal available to you are appropriate to use and in what way they're appropriate to use. I think a lot of people fall into this trap of what I've taken to calling cyber security cosplay, which

is where you dress up as cyber adversaries and just wreck things or, as Pipes noted earlier, thinking that you're Willie Apiata charging in guns blazing. You can understand how you find yourself in this position. It's widely considered the fun part of the job. Wrecking shit is great, and it aligns with the kind of hacker archetype a lot of us have as a self-image. But it can lead to losing sight of the actual objectives of your clients, which are the objectives of your engagement overall. It also leads to viewing clients as adversaries, which can create social friction. And it can lead to you failing to notice that in addition to having objectives that attackers don't have and constraints that they don't have, you've got resources they don't

have too. So where it might look like you've got competing considerations between "hack all the things" and "don't actually break them", you can escalate decisions to a client. So you've got the option of collaborating, where you can say: look, I think I can break in this way. I expect it could have this harmful impact. What do you wanna do here? It will give me this internal foothold, for example. You can say, do you wanna just give me the internal foothold? We won't have all that risk involved, and we move on from there. Do you want me to actually attempt to break in, with your organization fully understanding the potential impacts involved in that? Or should I

just note that we think this is possible, that we couldn't do it because you said not to, and detail the limitations of that in the report? So clients are quite often invested in and even proud of the systems they've built, even if you, going in there and breaking things, don't really think that they should be.

Your job isn't to swan in, wreck things, tell them that they're bad and they should feel bad, and then ride away into the sunset. You're joining a team to collaborate on delivering a more secure outcome. I like to think of it like this. While the blue team focuses their time and skills on defending systems, the red team directs their efforts towards also defending systems. We do this by attacking them, but the defense of information systems is our objective. It's easy to get caught up in the hacking hype and the allure of pretending to be a baddie, but losing sight of this key principle is a key factor in some of the ethical missteps I'll touch on today.

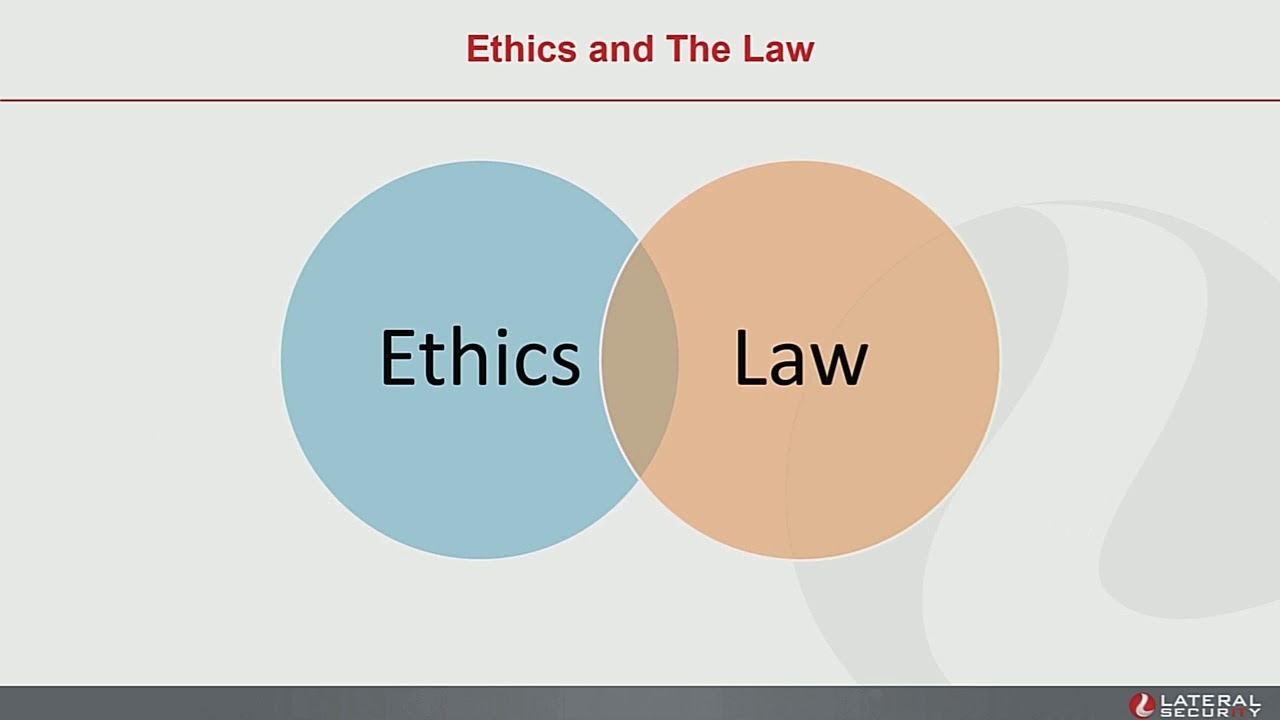

So back to the example at hand. We briefly touched on a potential motivating factor for attacking personal Gmail addresses. So I'd like to hit one of the first stops on the "okay, but here's why not" tour. First off, depending on what you mean by targeting personal Gmail addresses, in doing this, you may very well run afoul of the law. Ethics and the law are two intersecting domains. There are behaviors that are ethical but illegal, and there are behaviors that are legal but unethical. Despite this, the law is a good baseline and a minimum viable standard on top of which you can build whatever moral framework you feel like. Ethical decisions are quite often made in the absence of detailed legislative guidance, because the law can't cover the full spectrum of

possible action. There's a lot of nuance in the interaction between ethics and the law, which I'm going to entirely skip over because there's no time and we need to get on with it. Suffice to say, offensive security involves performing a number of actions which would, absent mitigating factors such as client consent, be really sketchy. It's worth noting that there are some people who do operate in gray areas of the industry. But for most of my peers and most of the people that I'm addressing with this presentation, breaking the law is a career-limiting move. In my case, if I broke the law, it would upset clients. And upsetting clients would upset my employer. Reasons for this include that we get given fairly privileged positions inside client organizations, inside

their network, with access to reasonably sensitive data. And they wouldn't be doing their jobs if they let us have that access if there were any question about our trustworthiness. They wouldn't be meeting their ethical obligations to the people that they have obligations to. Also, clients and employers need to be able to rely on your judgment, given the trust that they're placing in you and the capacity for harm if we make bad judgment calls. So the law relating to computer crimes in many jurisdictions maps pretty poorly to reality, which means there's a lot of room for discretion, which also means a lot of room for a series of unfortunate misunderstandings that lead to bad times for you.

So some considerations to make in this case. The client here is likely not authorized to access the mail content of their employees. It's unlikely that they've got a contract which allows them to pop open their employees' personal mail accounts. And if they don't have that, they can't delegate that access to you. Courts in New Zealand have sided with the employer, typically, on accessing personal mail on company accounts. There are existing cases which are relevant here, such as one where an employer logged into an employee's personal mail, having installed a key logger on all of their workstations. The key logger was found to be in breach of the Privacy Act because they'd insufficiently disclosed that that behavior was

occurring, and the breach of the mail account was found to be in breach of the Privacy Act because, honestly, what are you doing? It didn't go any further than that, but legal opinions did suggest that pursuing criminal charges would have been viable under section 252 of the Crimes Act, accessing a computer system without authorization. Familiarity with the Privacy Act is also useful, both for identifying potential risk areas for your clients (with the caveat that you're not a lawyer, but hey, I've seen something, you should get one to look at it) and for your own data handling, making sure you don't wind up with too much stuff, so you need to be taking that into consideration. If in this case we mean accessing personal mail as a delivery mechanism,

we need to factor in that this could impact devices which are out of scope, where the scope is part of the contract constraining the entire engagement. You don't want to go past that. So you need to be confident in your legal position, but you've also got to factor in good faith. Employment relationships in New Zealand are governed by this concept of good faith, which is a requirement for there to be mutual trust and confidence between employers and their staff, and for this to be a relationship without deceit or misleading one another. That said, a red team engagement could include some elements of controlled deception techniques. Using these techniques without understanding them or understanding the impact of them is charging into an ethical minefield. But it does

happen when people play red team fast and loose. For example, it's inappropriate, in my view, to undertake actions on behalf of your client which damage the trust between them and their employees. This causes harm to the personal relationships between them, and it could cause legal issues. So engagements that move into this space need to be approached with due care. One example of this, which was covered in Katie Drake's keynote around blame language, is making sure that client employees are insulated from the consequences of being used in a social engineering system. So with social engineering in mind, I'll briefly dive into that. The book Unauthorised Access: Physical Penetration Testing for IT Security Teams doesn't half-arse its description here,

noting, computers don't care if they're abused. People on the whole do. Social engineering is, in a very real sense, abuse. At the very least, because it involves deliberately deceiving target staff at the behest of an employer, but also because social engineering techniques are designed to achieve goals through the manipulation of a wide range of emotions, not all of them pleasant. Some of the techniques described in this book can be extremely effective, but it's your call whether or not they're appropriate in a specific setting. Conducting testing that focuses on causing an individual to be the weak link in corporate security will cause a poor perception of and loss of trust in management. Ultimately, the impact of

this can be at least as negative as a security breach. More importantly, social engineering attacks can do psychological damage to the individual that is duped. This is not a game and is not behavior to engage in lightly. Always assess how important using individual employees really is and never use it more than necessary. There are definitely valid use cases for tools like social engineering. But there are also cases in the real world where social engineering engagements are conducted, but limited value is being obtained in contrast to the harm done or the potential for harm done. And there are cases where the use of these tools is justifiable, but doesn't

factor in harm, and so takes no steps to minimize that. So the decision to use any particular tool or technique should be factoring in: what outcome are you looking for? Is the technique or tool you've chosen the only way to achieve this outcome? Or are there less risky alternatives available to you, such as collaboration with your client? And is this providing the client with new and valuable information? Social engineering is another great example. If the knowledge that their employees are going to click on sketchy links isn't useful to the client to drive change in their organization, maybe consider whether it's valuable as part of the assessment. So these considerations should be made as part of a larger attention to whether the client is receiving value for

the services you're offering. And our organizations really need to be developing better guidelines on appropriate uses of deception techniques. So your job is to provide specialist information to inform client risk decisions. And this includes the pre-engagement decisions about what type of testing services best suit their environment and the questions they're looking to answer. Conducting offensive security testing carries with it a degree of risk. Even if you're being polite, there's the possibility of unexpected reactions from systems or impact spreading beyond the intended target due to connections you didn't understand or connections that the client just forgot they had. So you've got to consider the impact of any testing you're conducting and make sure that your client is fully informed as well because they might

not understand what it is you're doing at all. So you need to educate them on the assurances and the limitations of the assurances that you're able to provide. We need to be honest with them about our capabilities and our limitations. So I've noted that engagements are rarely fully accurate simulations of attacker methodology or intent. And part of understanding the assurances that you're able to provide is understanding the differences between you and a real attacker. There's a lot of focus on not misusing the mighty and incomprehensible powers of InfoSec. But we also need to appreciate that these have limitations. Our methodologies are incomplete. They have flaws. There's room for them to develop. I've got here something about

skillfully weaving in the next bit, but I'm just going to read it off now. You need to inform clients if any aspect of the engagement isn't worthwhile, which requires you to have a sense for which of your tools provides useful feedback and in which context. And as well as informing the client about what it is you do and what it is you can do, you need to ensure that you understand what it is they do. So you need to make sure that you thoroughly comprehend the corporate objectives underpinning the engagement. You need to make sure that you've got a good grasp on any constraints or risks presented by the client environment. You need to understand

the desired business benefit and how those benefits can be measured. Make sure you've got an appreciation for the importance of business continuity, because nobody is going to care about your sweet findings if you caused a disruption to their operation. It's also important to factor in the human side. As we've covered before, ensuring activities which could cause emotional harm to humans are not undertaken lightly. These environmental considerations also need to factor into the advice you provide. So if best practice isn't achievable in a particular environment because of constraints and limitations that they've got, you need to provide recommendations on mitigation in pursuit of the objective of meaningful security gains and fully inform your client of

what the limiting things in their environment are exposing them to. An understanding of scope is essential. A scope is the part of the agreement that forms the dividing line between your conduct being legal or "pretty legal", which courts have found to be still illegal. If there's any doubt about whether an action exceeds a scope, you need to be escalating that. And if the scope is so limited that the engagement might not have any value or could turn into a simple tick-the-box exercise, this needs to be escalated to your leader also, so that you can disclose the limitations of a basic vulnerability assessment to your client. You also need to make sure you don't undershoot your scope, making sure that you've got appropriate coverage over all services sold. This also

includes things like confirming authorization from the client and any relevant third parties who may be hosting things, and making sure you've got documented exception procedures in case of deviation from the assignment or in case of identifying issues that require immediate attention. And if you're ever unsure whether a decision sits with you, or whether making it would be assuming a risk decision on behalf of someone else, that needs to be escalated. So we'll wrap up the first example. Targeting personal Gmail accounts is unlikely to be legal or in scope. And even if it were, it's likely to be unethical because of the potential for harm to the employee outweighing the benefit the client is obtaining. If this is needed

to gain internal access, for example, it's likely you can achieve the same objective with fewer risks by collaborating. Some of the examples mentioned that maybe the client wants to use a red team to determine whether employees are storing company data in their personal mailboxes. I'd argue that this is the wrong tool for the job. The right solution starts with managing employees, escalating through HR and legal if it's reactive, and, if it's proactive, things like data loss prevention technologies and network controls. So we'll move on to our second example, a comment from Red Team Wrangler. I think everyone I've got in here is worth a follow on Twitter. So for context, Cobalt Strike is a threat emulation platform, which comes bundled with all sorts of goodies to

aid a red team with recon, phishing, exploitation, gathering all the information together, and making it easy to report on. So the information that it's logging is somewhat sensitive. You don't want to go committing it to the webs. There are a couple of ethical missteps that have led to this, which can largely be summarized by a couple of ideas: tool assurance and information management. We'll start with tool assurance. Within reason, we need to be able to account for the behavior of our tools. Ideally, you would have a comprehensive understanding of every facet of your tooling, but this can be impractical in the real world with heavily featured tool sets that have become critical to our jobs. So as we've discussed, testing carries risk. When using tools on client

systems, the client is accepting that something might go wrong and cause disruption, but they're also relying on your expertise to minimize the chances of that and protect them from irrecoverable consequences, such as data exposure. You need to ensure that you understand the risk appetite of the client and of your organization. So business context needs to be informing every decision to run a script (making sure that you fully understand it), to add a plug-in to your Burp Suite, or to run a Metasploit module. You need to make sure that the actions you're conducting fall within the client tolerance for these behaviors. This involves evaluating your tools, assessing their benefits and weaknesses, and making sure that they're appropriate for client environments. We covered technique relevance briefly in the context of

social engineering. But this can get overlooked when people get caught up in things like cybersecurity cosplay, leading to testers that Ned described here as backwards militarized sheriff's departments waving military-grade weapons they don't really understand and treating citizens as the enemy, which captures for me quite well both the misuse of tools and the mistaken view of clients as adversaries. Outside of any engagement, this also includes maintaining your knowledge and skill set, keeping up to date on emerging tools and vulnerabilities. So we've said that simulating attackers is only one of the considerations, but it's still very important. The further your methodology is from real attacker behavior, the less valid the results of testing will be for assessing real world vulnerability. So you need to expose yourself to

news sources containing pertinent information about the general environment so that you become aware of things like legislative changes, new attacker developments, and developments regarding tools, especially if this contains information about vulnerabilities in your testing tool set. You also need to keep up to date with technological advances through things like training, technical publications, specialist groups, and conference attendance. So you're doing great. So this example also raises issues relating to information management, which we've touched on briefly in the context of the Privacy Act. All information relating to client organizations and client systems needs to be kept within your organization and only exposed to the client through approved channels. Failure to perform here is another of the career-limiting

moves you might encounter. So nobody's gonna hire the firm who, unaware of the juiciness of a given tidbit of information, announced it in a crowded bar or otherwise disclosed it. A reputation for things like this spreads. It's a fairly small industry. And you'll find that competitors share information regarding untrustworthy consultants for the good of the industry. It's a hard sell to hire a consultant who had that impact on their last firm. Data exposure doesn't just occur through loose lips. Software can also betray us. So we need to make sure that our tooling isn't leaking information to outside parties through things like referrer headers or providing links to attacker.com or evil.com in our example payloads. And factor in any unknowns regarding cloud-based tools which could automate

interactions with your target. Make sure that you know that their behavior is appropriate. So as we prepare to conclude our brief dive into the ethics of being bad goodly, I'm going to smoothly segue into a conclusion, revisiting an overarching theme. In my experience, most testing engagements are not 100% faithful simulations of attacker methodology and intent. And this is partially driven by most clients not looking for a perfect simulation of attack, unless the key control they want to exercise is something where it's relevant, like their responsiveness to an actual attack. The Grugq wrote a great Medium post a couple of months back in which he detailed the differences between cyber operators and explained ways in which penetration testers have a cyber kill chain that

doesn't bear much resemblance to a real threat actor. And he was right about this. In a typical offensive security assessment, all of the phases of the engagement function as information gathering exercises for the delivery of a final, well-crafted payload, which isn't typically used in a real attacker's kill chain. Like any exploit delivery, it's important to take into consideration the objective it needs to achieve and the environment it's gonna be triggered in, maximizing how reliably it will achieve its aim. It's often delivered via email or removable media, and it executes at the political layer of the system in question. The most important exploit any offensive security professional needs to write is a report. Post-engagement deliverables are the vehicle through which security gains are driven. Up until this stage,

the client hasn't really got much value for their money, except for what they can infer by looking at logs or things they detected during the attack. If you do an amazing job bypassing network defenses, and then you fail to target your deliverable to the client environment and don't drive change for them, all of the tech you did was wasted. As with the crafting of exploits, you need to carefully consider the environment this is going to run in. So report content targeted at the C-level is put together differently to report content targeted at technical staff. Other considerations that we need to make here are ensuring that you avoid spreading fear and unreasonable doubt. And when describing

severity, make sure that you target it to the client environment. Also, don't limit impact to technical potential for compromise. You need to consider reputational damage, loss of user trust, privacy harm, other forms of financial loss, and system behaviors which condition users in harmful ways. So to summarize the key points we've looked at: codes of ethics are great, but clients and employers need to be able to rely on your moral judgment, and this is a matter of training and experience. While accurately reproducing attacker behavior is a key part of the job, it's not an exhaustive description of your responsibilities. Your responsibilities need to factor in other things, such as the ethical expectations of the profession. Is the testing being targeted at some legitimate business risk questions? Does it factor in constraints

and risks presented by the business context, and do you understand this context sufficiently? Are you using the most appropriate tools for the job in the most appropriate way? And are you ensuring that no undue harm is done to client employees using deceptive tactics? Are your actions in line with legal requirements? Are you being transparent about the risk and the value of the services you're offering? Do you know how your tools are working? This is a key part of knowing what impact they're capable of having. Are you managing client information with due care? And are your post-engagement deliverables, such as reports and meetings, effectively driving the intended benefits for the client? And we're done. This isn't an exhaustive exploration of every ethical peril and pitfall, but hopefully it serves as

a suitable starting point to kick off further discussion in this space.