Amir Shaked - CSP is broken, Let's fix it


Yes, okay. Amir, please join me here on the stage. Everybody, please help me welcome Amir Shaked. I want to tell you a couple of fun facts about Amir. Amir is the senior VP of research and development at PerimeterX, and he's here to talk about hacking and fixing the CSP standard, the Content Security Policy standard. But what you didn't know about Amir is that, other than being a security pro, he also has a degree in physics. Amir, quickly: what is pi to five decimal places? Three point... I really don't know. Oh, okay. Well, I wanted to tell you he got his degree at Tel Aviv University, but since he didn't know it, he must have gotten it at another university. So he has a degree in physics and an MBA, both from here, from Tel Aviv University. I asked him about his hobby, and he says he likes Python 2.7. So there you have it. Please welcome Amir to our stage.

All right, let's see if it works. It works, excellent. Hi everyone, it's great being here. It's so much fun doing a live event after a year of only Zoom and seeing myself on screen. We're going to talk a bit about CSP and how we might try to fix it, and part of the purpose of this talk is to invite everybody here to dive in, find more problems, and contribute to the CSP3 standard to make it more secure and improve everyone's security. Along the way we'll demystify a few things that you might or might not already know.

Like Karen said, my name is Amir Shaked, and I lead research and development at PerimeterX. I've been around security for many years. I void warranties; I'm a lifelong hacker and a father trying to teach my kids to break stuff as well. We'll dive straight into a story that I think is hypothetical, though if somebody has had such a case in their own work, it would be very interesting to hear. So, I wanted to enable CSP on our own website.

We read and understood the spec completely; it's very well written and easily understood. We added the header in report-only mode, and a day later we had gone over all the violation reports we got, cleaned everything up, and rolled it out. We had zero issues with the website and everything worked smoothly. I think no one has ever said that. Has anybody here tried to use CSP as a technology? One, two, okay. That's pretty much the stats we see on the internet as well. So that story is wrong. It really is a very complicated standard, and it has a lot of problems along the way when you try to implement and use it.

What is really frustrating is a real story that we did encounter and helped with, which went a bit differently. We got a call from a credit card company telling us they saw a lot of credit cards being circulated in some forums and used, and they had correlated all those credit cards to a specific website. They were talking with that company, trying to understand what happened and reviewing all the security possibilities. What we saw is that they had configured everything properly; everything was well defined. But there was a fundamental flaw which they had no control over, and that's the supply chain: some third-party vendor that got hacked.

In that specific case it was a chatbot they were using. The vendor got hacked, their source code was replaced with additional code, and that code worked and basically exfiltrated data from the website to an external entity: digital skimming, if you will. Now, the vendor was whitelisted and everything was supposed to work properly, but that doesn't mean an attacker won't be able to exfiltrate information. So from here I want to talk a little bit about CSP, what it means and how it is used, and then we can see a few of the cases where it breaks down and has issues. So, CSP is a standard.

um it's actually been around for a lot a long time it was first conceived in 2004 by robert hansen he um gave the name content security policy only around 2012 it actually became a standard by w3c that websites started to use and implement in their own infrastructure and the common version version 2 which is the official one latest version 2016 that's the one that most browsers support if you want to see which kind of features specifically it actually varies so nobody really implements the entire standard like any security standard can i use is actually a great resource to see which specific [Music] component of the standard is actually being supported and the version 3 which started around

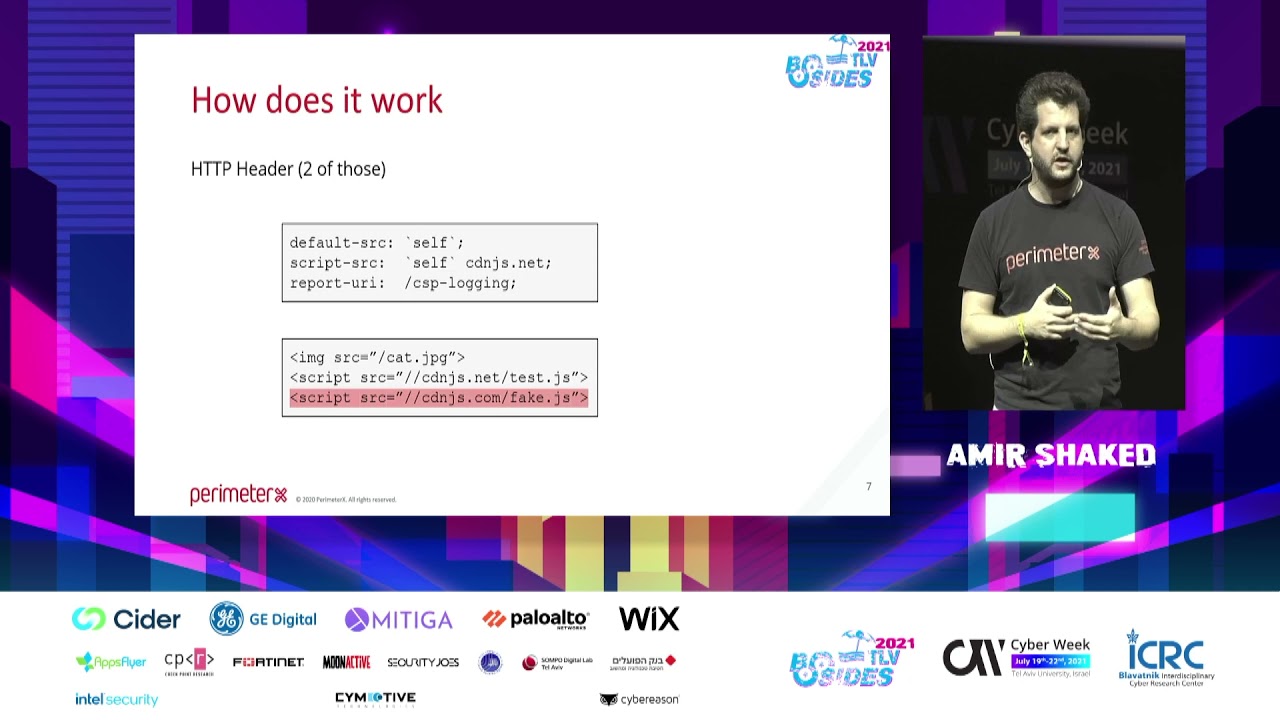

2015 is still a work in progress so it's not finalized yet and that's being worked on now before i continue um if anybody has any question you can just shout and i'll stop and let you ask it now we don't have to wait for the end of the talk okay so very briefly how does the policy work what's the standard it combines two headers one is um content security policy and the other is content security policy report only basically implementing this exact same standard but not blocking anything just reporting the violation if there is a report destination url where you can send information to so if we look at this we can see a pretty simple

description of a policy saying the default source is to allow any content to come from the website source code javascript code can come from the website itself and a specific domain cdnjs in this case and the report uri on any violation will be to the website slash csp logging and then in this case this is a two examples the image and the script will work because they're coming from an allowed destination and if everything works properly the last one will not work because it's trying to download the script from an unauthorized destination basically not within the policy so the entire concept of csp is a whitelist method where you define what is allowed if something is not allowed it will not

work which is great in theory somebody here tried to manage iptables policies yeah yes to both you have a question louder please

I get your question. So actually this is server-side protection, because a CSP can be defined in two ways, which I didn't think of mentioning. One way, which is not recommended, is within the HTML tags: you can define the policy in the page itself. The other is the HTTP header. So the server is what you need to protect, since it defines and sends that header; that's the place where you need to make sure the right header is sent: the server.

Yes, if somebody has control over it. It's not just the servers; you specifically need to protect whatever configures the HTTP headers, because in big websites you have a chain of elements. Say the headers are set at the front edge, on the CDN: then somebody further down the stream won't be able to manipulate them, even if they define something there. So it really depends on where you have control and how the website is specifically set up. There was another question here; shout, because it's hard to see. Okay, so that's about that. The most common practice is to define it with HTTP headers. I really don't recommend defining it within the HTML; it's really hard to maintain. Okay.
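To make the example policy described above concrete, here is a minimal Python sketch of it as a header. It assumes the cdnjs host is `https://cdnjs.cloudflare.com` and that reports go to `/csp-logging`; the helper names (`serialize`, `parse`, `effective_sources`, `unknown_directives`, `csp_header`) are hypothetical and not from any library. It shows the enforcing and report-only header names, the fallback from a missing fetch directive such as `img-src` to `default-src`, and a check for misspelled directive names, whose intended restriction is silently missing. One more reason to prefer the header: a policy delivered in an HTML `<meta>` element cannot use `report-uri`, `frame-ancestors`, or `sandbox`, and report-only mode cannot be delivered that way at all.

```python
from __future__ import annotations

# Sketch: the example policy from the talk, serialized as a CSP header.
# The cdnjs host and the /csp-logging path are illustrative assumptions.
EXAMPLE_POLICY = {
    "default-src": ["'self'"],
    "script-src": ["'self'", "https://cdnjs.cloudflare.com"],
    "report-uri": ["/csp-logging"],
}

# Not exhaustive: a subset of directive names defined by CSP Level 3.
KNOWN_DIRECTIVES = {
    "default-src", "script-src", "script-src-elem", "script-src-attr",
    "style-src", "style-src-elem", "style-src-attr", "img-src", "font-src",
    "connect-src", "media-src", "object-src", "frame-src", "child-src",
    "worker-src", "manifest-src", "base-uri", "form-action",
    "frame-ancestors", "sandbox", "report-uri", "report-to",
    "upgrade-insecure-requests",
}


def serialize(policy: dict[str, list[str]]) -> str:
    """Join directives into a header value: 'name v1 v2; name v1'."""
    return "; ".join(" ".join([name, *values]) for name, values in policy.items())


def parse(header_value: str) -> dict[str, list[str]]:
    """Split a header value into directives; the first occurrence of a name wins."""
    policy: dict[str, list[str]] = {}
    for chunk in header_value.split(";"):
        tokens = chunk.split()
        if tokens and tokens[0].lower() not in policy:
            policy[tokens[0].lower()] = tokens[1:]
    return policy


def effective_sources(policy: dict[str, list[str]], directive: str) -> list[str]:
    """Simplified fallback: a missing fetch directive falls back to default-src."""
    return policy.get(directive, policy.get("default-src", []))


def unknown_directives(policy: dict[str, list[str]]) -> list[str]:
    """Directive names a browser would not recognize, e.g. typos like 'scirpt-src'."""
    return [name for name in policy if name not in KNOWN_DIRECTIVES]


def csp_header(policy: dict[str, list[str]], report_only: bool = False) -> tuple[str, str]:
    """Return (header name, header value) for enforcing or report-only mode."""
    name = "Content-Security-Policy-Report-Only" if report_only else "Content-Security-Policy"
    return name, serialize(policy)
```

Calling `csp_header(EXAMPLE_POLICY, report_only=True)` gives the report-only variant used while collecting violations. The fallback in `effective_sources` is deliberately simplified; CSP3 also defines finer-grained chains, such as `script-src-elem` falling back to `script-src` before `default-src`.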

So, I don't know about you guys, but for a hacker, seeing a specification just calls out: let's see where it breaks, what you can do with it, and the different ways we can play with it. First of all, it's not fully defined, even with all the rich documentation, which I invite everybody to read. There are items that vary, such as what the reports hold and when to report, and I'm going to show some examples of that. That leads to inconsistency when you're trying to analyze what you're viewing. The implementation itself may be lacking: just try searching for CSP in the CVE database and see where there are gaps in the browsers themselves. And it's very usage dependent and can be abused. I'm going to give examples of all of these and how they're actually being exploited.

So let's start with numbers. We've seen this as a security technology that has been around for a long time. If you try to see what kind of security methods websites actually use, we can look at the numbers. Do people know HTTP Archive? It's an amazing database. I'm glad to see only a few hands, so I invite you all to dive in; it's an amazing resource. For the past three or four years they've been scanning four million websites every month and storing a lot of information about them. If you're trying to analyze web usage over time, it's very rich information where you can look at a lot of things. What I was specifically looking at was how the different HTTP headers were being reported by websites. What we can see here is that the top three, the ones with the dashes, are actually deprecated but still growing in numbers. CSP is replacing them, but people still use deprecated security headers in their infrastructure. And the bottom three are CSP. I don't have a way to point at this, and I'm also colorblind, so I'm not sure which colors to say.

So the lowest one is report-only, and the two after that are Content-Security-Policy itself. Now, this only counts the presence of the header; it doesn't say what's actually being defined, and we're going to see a few more numbers around that. I was happy to see that over time, as I've been tracking this over the past few years, the numbers did grow. We're at around 10% CSP usage now, when it was much lower in the past, so it seems like a positive trend. That comes down to about 700,000 websites as of June, last month.

So what are they actually using? We saw several examples of directives in the policy before; these are the actual directives being used by websites, and here is what they mean. The first one, frame-ancestors, controls whether a page can be embedded within a frame or an iframe, and it's just replacing a previous security header we saw, which was one of the most common. The second one, upgrade-insecure-requests, moves all HTTP traffic to HTTPS, and it too is replacing a deprecated security header from the previous slide. So if you look at that, most of the websites implementing CSP are just replacing the different security headers with the new version. They're not implementing the full scope of what you can do with this technology.

The following ones have pretty low numbers, and those are the ones that are actually interesting: the fetch directives, which tell us where we can get content from and where we can send it to. These are the ones we can really use to secure our website, and they're not being used that much. I believe part of the reason is the complexity of using the technology within a website. It's sad to see such low numbers, but even among those actually trying to use it, we're still going to see a few fundamental gaps in the technology.

I took several websites as examples, and this is frustrating: even those trying to implement CSP are using the top one. The top one basically allows scripts but adds 'unsafe-eval' and 'unsafe-inline', meaning we don't check anything; anybody can add code to the page and run it, and we're not going to validate anything. The bottom one, which I've seen very few websites use, dictates that if there is inline JavaScript code within the page, it has to match a specific hash. So you can't just add any code you want to the page; it has to match the exact hash. It's harder to manage, but it actually brings security by default.

And like any system, looking at the directives: there should be around 30, so why do we see 700? That's because people don't check anything; there are all kinds of mistakes. I really don't understand the last one, "go for it". It's interesting; it appears on more than one website, and I'm not sure what they wanted to do with it. There are all kinds of examples, and when a directive is wrong, the policy just breaks and doesn't work, so it's as if we have nothing. So even among those websites, not everything is actually working. Google's CSP Evaluator is the best tool I've seen to date, so if you're trying to write a policy, please use it.

All right, did it jump? No, cool. Okay, so we defined the policy and we defined the report URI. The reports are JSON, so supposedly it's pretty simple to just read them and understand what we're seeing. And that's the next phase of the madness. The data is not normalized at all; it's full of noise coming from extensions and ads. It's also unmanageable, because if you have an extension running in the browser, by design it bypasses CSP, but if it injects code into the page, the page will still report CSP violations. So now you're trying to read your own violations, and they're not actually coming from the website; they're coming from one specific user. Nothing in the standard tells you which user it is; you need to add a bit of hacking to the reporting information itself to understand which user reported the violation. So it's really not helping you analyze the information at all. I'm not going to open the link, but I invite you to go to the last one; it's full of really fun examples. That's the "explained" one; you should also look at the "unexplained" one, which has a lot of examples of CSP reports that websites see without understanding where they come from.

The last problem we found, when we looked at the actual information at high scale, was browser misalignment: when the report is actually sent and what we see. A few examples that are still relevant: Chrome will send one report per page if a resource is blocked. Fine, that's easy. Firefox will report it multiple times per page, so now you have differences in how many reports you see and in how you analyze them. And Safari behaves completely differently: if you have a repeated call that is blocked, it sends only the top-level domain, so you don't even get the full page the report originated from.
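One way to act on these points about noisy, inconsistent reports is to normalize them before counting. The Python sketch below assumes the legacy `report-uri` JSON body (a `csp-report` object with keys such as `document-uri`, `blocked-uri`, `violated-directive`, `effective-directive`, and `source-file`) and a hypothetical per-session identifier supplied by the caller, since the standard itself does not identify the user. It drops reports attributed to browser-extension schemes (the scheme list is an assumption and not exhaustive), reduces URLs to their origin because Safari may only send the domain anyway, and counts distinct sessions per violation rather than raw reports, so a browser that repeats a report within a page does not inflate the numbers.

```python
import json
from collections import defaultdict
from urllib.parse import urlsplit

# Assumed, non-exhaustive list of schemes used by browser extensions.
EXTENSION_SCHEMES = {"chrome-extension", "moz-extension", "safari-extension", "safari-web-extension"}


def origin_of(value: str) -> str:
    """Reduce a URL to scheme://host[:port]; keep keywords like 'inline' or 'eval' as-is."""
    parts = urlsplit(value)
    if parts.scheme and parts.netloc:
        return f"{parts.scheme}://{parts.netloc}"
    return value


def directive_name(report: dict) -> str:
    """CSP2 put the whole directive (with values) in violated-directive; keep only the name."""
    raw = report.get("effective-directive") or report.get("violated-directive") or ""
    tokens = raw.split()
    return tokens[0] if tokens else ""


def is_extension_noise(report: dict) -> bool:
    """True if the blocked resource or the offending script comes from an extension."""
    for key in ("blocked-uri", "source-file"):
        scheme = str(report.get(key, "")).split(":", 1)[0].lower()
        if scheme in EXTENSION_SCHEMES:
            return True
    return False


def summarize(batches):
    """batches: iterable of (session_id, raw JSON body) pairs.

    Returns {(page origin, directive, blocked origin): number of distinct sessions}.
    """
    sessions_per_key = defaultdict(set)
    for session_id, body in batches:
        report = json.loads(body).get("csp-report", {})
        if is_extension_noise(report):
            continue
        key = (
            origin_of(report.get("document-uri", "")),
            directive_name(report),
            origin_of(report.get("blocked-uri", "")),
        )
        sessions_per_key[key].add(session_id)
    return {key: len(ids) for key, ids in sessions_per_key.items()}
```

Counting sessions instead of reports trades away per-page detail for comparability across browsers; a single client that sends the same report many times counts once.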

And then, of course, like any reporting service, there could be log spamming. We actually saw some interesting cases where log spamming was used to try to manipulate systems, but this is still, I would say, the next phase of attacks. Some vendors are trying to build systems that automatically configure the policy and decide from the reports what should be whitelisted. You can spam such a system and try to abuse the machine learning into learning that a specific domain should be whitelisted. I haven't seen an example yet, but I anticipate it will happen as these systems become more common.

So we went over that part. The next part we wanted to look at was CVEs and how browsers behave. This is the number of CVEs reported over the years, in total, in Firefox, and in Chrome. I'm going to dive a bit into one that we found a while back. It's pretty straightforward. In the following code, the policy says that object-src shouldn't allow anything and script-src only allows the page itself. This code should not work; it should be blocked by the browser, since it tries to create a script element and fetch it from a non-whitelisted domain. But then we add a tiny bit of a hack (this is already mitigated, by the way, so we don't have to worry): just opening an iframe and adding the script into the iframe, and that worked. Now, I'm not blaming browser developers. It's really hard to build a fully protected browser that covers all the different possibilities and variations you can have. But these kinds of CVEs happen all the time, and I've actually reviewed a few of the latest 2021 CVEs: we've seen breaches reappear three or four years after they were closed, because code was refactored. Any questions so far?

Okay, so let's assume we have a well-defined policy, and let's assume the browsers work well. What can possibly go wrong with this concept of a policy? I asked a simple question, again going back to HTTP Archive: which domains do websites most commonly whitelist? Let's not assume anything; let's just see what they're using and what we can do with it. So, going back to March 2020 and then looking at last month, we see these domains. Here is a question for you: if we look only at the top one, does it give you an idea of what you can do with it?

Malicious advertising? Okay, any other ideas?

Yes. So, thank you. First of all, it's not just the top one; it happens again and again as you go down the list, so the problem is more common than the top one alone. The simplest idea here was: I'm looking at this information, it sounds pretty simple, so let's just try to use the top whitelisted domain to exfiltrate data. Will it work? Will it be that simple? And the answer is yes, it is, because any whitelisted JSONP endpoint can be used for data exfiltration. Google Analytics is just the simplest one to use to implement this bypass. It can be anything: sending tweets or Facebook messages, if those are whitelisted, or New Relic, where you can open an account for a few dollars, if that is whitelisted. Any shared service that is commonly whitelisted, because websites actually use it, can be used for data exfiltration. So even if we did all the work of defining a well-protected policy, somebody can try to bypass it, and the code is pretty simple to write. We just added a few lines, as you can see; this isn't even a complicated scenario. We're putting all the information in the query string itself, not even trying to send it as an Ajax request, and obviously it worked. We have the information, we can export it from Google Analytics, and we have a username and password that we exfiltrated from a website, just because Google Analytics was whitelisted.

Now, we went back to the standard and said, okay, interesting, let's dive in and see if somebody already tried to resolve this. Can we at least put some kind of limitation on the query string itself to protect against it? And as you can see, by definition it doesn't. This is a quote from the standard itself: matching does not go into the query string, and nothing is validated beyond the domain and path. That is the only level of protection that can be efficiently implemented within the standard, so that's all that can be supported, unfortunately. Then we said, fine, it's still an interesting story, let's publish it. We didn't think it would be that big a story in itself. We published the method, and it got a lot more exposure than we expected, because it seems pretty straightforward. And then just a few days later, other security companies, Kaspersky, Sansec, and a few others, started reporting that they were actually seeing this being abused in the wild. So it's not even just a story or a theory. It's a matter of looking at the data, playing the game of "if we were the attackers, what would we have done?", then looking at websites and seeing that, yes, somebody is already trying to do that. It's just a matter of exposing the method and bringing it to light. By the way, today, if you add Google Analytics as an allowed destination domain and run your policy through the CSP validator tools, they will flag it as a potential risk. They don't have any suggestion on how to solve it, but at least they tell you there is a potential risk, and that's an important addition.
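The limitation quoted from the standard is easy to see in code. Below is a deliberately simplified Python model of host-source matching (scheme, host with an optional `*.` wildcard, and an optional path), not a browser's implementation: real matching also handles ports and the `http` to `https` upgrade, and it ignores the path entirely after a redirect. What it illustrates is that the query string never takes part in the comparison, so a request to an allowlisted analytics host that carries stolen data in its query passes. The allowlist, the attacker host, and the data are made up for illustration.

```python
from urllib.parse import urlsplit


def host_matches(host: str, pattern: str) -> bool:
    """'*.example.com' matches subdomains only; anything else must match exactly."""
    if pattern.startswith("*."):
        return host.endswith(pattern[1:])
    return host == pattern


def matches_source(url: str, source: str) -> bool:
    """Simplified CSP host-source check; ports are ignored for brevity.

    The query string and fragment of `url` are never consulted.
    """
    target, src = urlsplit(url), urlsplit(source)
    if target.scheme != src.scheme:
        return False
    if not host_matches(target.hostname or "", src.hostname or ""):
        return False
    if not src.path:  # no path in the source expression: any path is allowed
        return True
    if src.path.endswith("/"):  # directory-style path: prefix match
        return target.path.startswith(src.path)
    return target.path == src.path  # file-style path: exact match


# Hypothetical allowlist resembling a typical e-commerce policy.
ALLOWLIST = ["https://www.google-analytics.com", "https://cdnjs.cloudflare.com/ajax/libs/"]


def allowed(url: str) -> bool:
    return any(matches_source(url, source) for source in ALLOWLIST)
```

For example, `allowed("https://www.google-analytics.com/collect?v=1&dl=user%3Dalice%26pass%3Dhunter2")` is true, while the same query sent to an unlisted host is blocked: the policy can constrain where data goes, but not what rides along to an allowed destination.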

Okay, so if you look at where the story went wrong, the fundamental problem is that we're trying to protect a modern website, with a lot of different elements and tools, using a policy. Like I asked before, who has tried to configure an iptables policy? These things are really hard to configure well, and there aren't a lot of best practices (I'm going to mention a few), so it is really problematic. And when we're defining a policy, the most common attack vector we see on modern websites today comes from some kind of whitelisted target. I don't know if you saw it just earlier this week: cdnjs had a major potential breach. Have you seen it? Nobody. So Cloudflare reported a major potential breach where somebody could basically change the source code of any JavaScript file hosted on cdnjs. That's a pretty high-risk item. They fixed it after they discovered it, but that happens all the time. It means the code we bring into our website, which runs on multiple platforms, is code we didn't build and don't own. We use a lot of different tools when we build our systems, and the marketing team adds all kinds of tags when they're just trying to improve some aspect of the website. We don't have full control over what joins the page, and we have no control over the security of those elements. They're whitelisted, and they expose us to data exfiltration from both angles, where code comes from and where data can go to, because we're using all these tools.

So what can you do to make the best of it? It's still a good standard. I still recommend that everybody use it; I'm not recommending that anybody avoid it. It still raises the bar and requires a potential attacker to work harder to breach a site and exfiltrate information. First, pretty basic but still not that common: add an identifier to the report URI, some kind of identifier telling you this is user A or user B, so you can look at the logs later when you're trying to understand what you're seeing. Obviously, filter the reports. My go-to here is to look only at the most common, newest browsers. If you're trying to understand whether your website is working well enough, ignore all the minor cases and focus on the most common ones; if there is a problem, that will tell you where it is. You don't need to focus on the long tail of noise.

The next one is a pretty nice hack. I haven't seen any open-source system that does it yet, but it's a pretty nice method: A/B test the policy. You start with a report-only policy that is as strict as possible, allowing nothing. Collect all the reports, then start adding elements one by one, staying as strict as possible, and see what kind of reports you're getting in report-only mode. Once you get to a point where you don't see any reports you don't expect, switch the policy from report-only to blocking mode, basically changing the header from report-only to enforcing. That will give you all the information you need, and then you can play with it as you go along. You can keep iterating on the policy, back and forth, on portions of the users or on parts of the site, and verify that the domains you have there should be there and that they're not obsolete.

This is something I didn't mention before: we've seen cases where the CSP whitelist had obsolete domains, because somebody did it fast, it didn't work, they added a domain, and they forgot about it. You never change it, because there is never a report saying something is no longer needed. The domains became obsolete and are out there to be bought, so somebody can actually buy domains that are whitelisted on websites. Not very popular ones, but assume you're using startups: sometimes they close, and if you don't remove them from the policy, somebody else can buy that domain and reuse it as a target. So, like I said, clean up once in a while; that's the idea behind this. It's complicated, but I definitely recommend you use nonces and hashes to make sure the actual inline code is the code you intended. It requires a lot more work, but it's still worth doing. And the last one, still not common enough, is to be context-aware. You don't need to use the same policy for the entire website; you can set the strictness of each page according to what runs there. Be very strict on the login page; on the front page you can allow Google Analytics. You can decide that there is no Google Analytics on the login page and just remove that risk. You don't need to assume you need it on every single page, so this is something to take into account when you're trying to protect your site.

And the last one is, to me, really the most important one: try to contribute to the W3C, to CSP3, with comments and ideas. It's still a very important standard, a lot of browsers are still implementing it, and it's still open for review. So try to hack it, try to find new ways to break the standard, and try to find ideas to push everything to be better, because it protects all of us as users browsing those websites. Any questions?
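Several of the recommendations above (a per-user identifier on the report URI, a report-only rollout to a portion of users, nonces and hashes for inline code, per-page policies, and cleaning up stale allowlist entries) can be combined in one header builder. The following Python sketch is only an illustration under assumptions: the page-to-sources table, the `sid` query parameter, the 0-99 rollout bucket, the source of `observed_hosts`, and all function names are hypothetical. The hash follows the CSP format: base64-encoded SHA-256 over the exact inline script text.

```python
import base64
import hashlib
import secrets
from urllib.parse import quote

# Hypothetical per-page script allowlists: the login page drops analytics.
SCRIPT_SOURCES = {
    "/login": ["'self'"],
    "default": ["'self'", "https://www.google-analytics.com"],
}


def script_hash(inline_js: str) -> str:
    """Hash source for an inline script: base64(SHA-256(exact script text))."""
    digest = hashlib.sha256(inline_js.encode("utf-8")).digest()
    return "'sha256-" + base64.b64encode(digest).decode("ascii") + "'"


def rollout_bucket(session_id: str) -> int:
    """Stable 0-99 bucket, so a session always gets the same policy mode."""
    return int(hashlib.sha256(session_id.encode("utf-8")).hexdigest(), 16) % 100


def build_csp(path: str, session_id: str, inline_scripts: list, enforce_percent: int):
    """Return (header name, header value, nonce) for one response."""
    nonce = secrets.token_urlsafe(16)  # 128 random bits, fresh for every response
    sources = SCRIPT_SOURCES.get(path, SCRIPT_SOURCES["default"])
    script_src = [*sources, f"'nonce-{nonce}'", *map(script_hash, inline_scripts)]
    value = "; ".join([
        "default-src 'self'",
        "script-src " + " ".join(script_src),
        # Identify the reporter so violations can be grouped per session later.
        "report-uri /csp-logging?sid=" + quote(session_id, safe=""),
    ])
    enforce = rollout_bucket(session_id) < enforce_percent
    name = "Content-Security-Policy" if enforce else "Content-Security-Policy-Report-Only"
    return name, value, nonce


def stale_sources(allowlisted_hosts, observed_hosts):
    """Allowlisted hosts never seen in real traffic: candidates for removal."""
    return sorted(set(allowlisted_hosts) - set(observed_hosts))
```

On a real page the returned nonce also has to go into the matching `<script nonce="...">` tag, and a hash only matches if it is computed over the script text exactly as it appears between the tags, whitespace included. Raising `enforce_percent` step by step moves sessions from report-only to enforcing without changing which bucket any given session falls into.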

Yeah, so, welcome. So the attack vector CSP is trying to prevent is when someone manages to inject malicious JavaScript code on the client side, and you want to block exfiltration, for example; that's the whole point. The server sends the HTTP header, and the browser itself enforces the policy that the server defines; that's the basic idea behind it. CSP is mostly intended to prevent XSS, cross-site scripting, and those kinds of attacks. They can come from different places, but that's the main idea behind the method. It's not only that, though. One thing we see is that the standard is trying to move all the different security headers we have into CSP as directives, so it's also adding more layers of protection, I would say. It's a matter of making it more convenient for the security person to manage one context and to learn fewer separate protection methods. Upgrade-insecure-requests, for example, has nothing to do with XSS; it's about making sure the website is HTTPS only. But with that said, it's mostly about XSS. Yeah, thank you.

How are you going to answer that? Ask a question, get a prize. Any other questions over there? All right, somebody over here. Hi, can you kindly comment on the state of XSS in view of CSP? Can you repeat that? What is the status of XSS attacks in view of CSP implementations? Interesting. I haven't seen them myself, but I've read a few reports. From what I've read, XSS is first of all still very common as an attack vector, but CSP is very effective in blocking it, according to the websites that published information on how XSS was being attempted and blocked with CSP. I've seen very few reports of those, but from what they report, they see very effective mitigation of various XSS attacks.

Other questions? Someone back there; I'm going to need the mic. Do you think a new version of CSP could possibly defend against CSRF attacks, or is that just never going to work? I don't think so, because CSP is mostly intended to be the client-side part of the protection, and CSRF protection has to manage both ends. So I don't see it going in that direction. They're mostly focusing on XSS and client-side protection, and I think that's where they should focus; it's there to protect the user using the websites.

Hi. Do you think CSP should also support full URLs, not just domains? Because currently you can just find a URL inside the domains that are already whitelisted and do whatever you want with it. Yeah, it's a good question, and it's one of the items we actually looked at. In theory I would say yes, but it adds a very complicated layer for the browsers themselves, which need to be efficient when they analyze and mitigate. So from the security perspective I would say yes, but as users this might affect us negatively, so I'm not sure it's something they will be able to do. There is a reason it has so far been explicitly left outside the standard.

With that, I think it's a combination. The big services that are potential leaking endpoints should also do their part in trying to prevent themselves from being part of that attack vector, and in protecting the businesses that use them as services. So they should be part of the story; it can't be something that is one-sided. In practice, Google Analytics should also do something on their end to try to protect the users. It can't be something that only the browser does. The risk isn't only on them, but it's also on them. Any other questions?

Going once, going twice. All right, thank you. [Applause]