Running an AppSec Program in an Agile Environment

Everyone, thank you for joining my talk. I'll be talking about how to run an application security program in an agile environment, based on my previous experience. First, I'm going to introduce myself and some terms like application security and agile practices, because their meaning may differ from person to person, even though they're just terms. There are fine lines between the terms, so I'm going to define them so that we're on the same page for the upcoming approaches. After that, I'm going to introduce two different approaches to application security. One is the activities that we do before deployment: proactive approaches that we apply as early as possible in the cycle. The other is the reactive approaches that we apply while we're doing

our day-to-day work and in our post-deployment activities. So I'm Mert, I'm a security engineer at Amazon. I have a bunch of certificates and conference talks, and I try to share as much of my knowledge as possible at conferences. Also, don't forget that we're hiring, so I always welcome applicants and engineers to our team. Application security, in essence, is finding, fixing, and preventing vulnerabilities in every stage of the development lifecycle. That also means that, as a security engineer, you're responsible for finding and preventing vulnerabilities even in the requirements collection or design steps of the development lifecycle, not just in the implementation-specific technical parts. On that note, agile practices are nowadays also used by enterprises

very much, to develop software in short iterations; while getting rapid feedback, they refine requirements and try to create a sustainable way to develop software. Couple that with our need, as security engineers, to stay out of the way while making the software more secure, and it really requires us to work as an enabler for our teams. We also need to work much faster than before. In the past, security engineers were set up with some scanners and they were good to go. Now, again borrowing from agile practices, we need tools and procedures that have short iterations and get rapid feedback from the developers, so that you can refine your

tooling and rules and create a sustainable application security program. On that basis, I would like to divide application security into proactive and reactive approaches. The proactive ones are mostly activities that we do to shift as far left as possible in the lifecycle. In my experience, it starts with teaching developers how to code and how to design in a secure manner. So security practice for developers is one of the most important things that you need to employ in application security. The common approach is to hold regular meetups with your developers, starting with what security is, how application security works in the real world, what the OWASP Top 10 initiative is, and that kind of thing. Beyond that, what I would

recommend is threat modeling sessions with your developers, so that they can also learn how to threat model. You can gather a development team and have them introduce their architecture or infrastructure to you. After that, you can build the threat model with them by asking questions, or do it yourself while they watch. After some time, it may be best to turn these threat modeling sessions into a workshop, so that when you onboard new developers into the company or into the development teams, you can provide them with these threat modeling workshops. That way, even a newcomer without security knowledge or a security mindset can get used to the company's culture and

team's culture of security. So it's all about creating the right application security culture among the developers, so that they always think about security in one way or another when they are modeling, architecting, or coding. Following threat modeling, the other thing is code review sessions. These are mostly driven by security engineers. We're obviously finding lots of bugs and vulnerabilities, whether in the code or dynamically, or they may come from our bug bounty or vulnerability management programs. But in the end, we will have some kind of vulnerability, and this vulnerability is going to correspond to some code in some repository. So finding that code, finding its owner, and meeting with the owner for a code review session

to see the root cause and how it actually happened is a really valuable learning lesson for developers, because once you teach them how to prevent it, they're less likely to make the same mistake. In the end, they're developers, and what agile culture relies upon is that they try to do everything as fast as possible in a customer-centric way. So when you introduce them to the root cause of the vulnerabilities that occurred in their code, they're going to learn it. And once they learn it, they're not going to forget it when they do this kind of work. So creating a security culture for developers is actually one of the

most valuable things that you can do in the company. The other thing is that you obviously cannot teach developers everything, so we have to employ static and dynamic scanning, and also some kind of container scanning, or maybe CloudFormation or Terraform checks, in the CI/CD pipeline. Why we do it in the pipeline is that it's the first line of defense for us as application security engineers to actually look at issues in the code or in the infrastructure. If you're doing everything as code, it's the best place to enforce rules and checks so that you can introduce security as early as possible. We will come to that in the upcoming slides. So I'm going to continue with risk

scoring and maturity measurement. As security engineering evolves along with the tech and the security scene, we get lots of data from our day-to-day activities. By gathering and analyzing it, we can create risk scores for teams based on how they handle data and how critical their data is. We can also use mean time to fix, vulnerability count by team or by developer, and so on. This kind of data enables us to calculate a risk and a maturity level for a team or an individual developer, so that we can see what we need to focus on in their development. For example, if some team keeps introducing the same injection-type vulnerabilities

again and again, maybe you have to refine your trainings and workshops, or refine your sessions with this team accordingly. When you do that, you're going to see their score improve, so you will see that you're really improving their posture at the same time. The last thing that I want to mention is abstracting away from developers some features that are too complicated for them to implement themselves. One would be secret management, if you're using a hybrid or on-prem environment rather than a cloud native one. Another would be authentication and authorization logic. Even though there are frameworks and built-in approaches, for some developers it's

still hard to understand the logic behind the authentication and authorization issues in the OWASP Top 10. For the secret management part, what I would suggest, for example, is using the open source version of Vault. You can set up Vault to gather all of the secrets that live in the code, so your developers can store their secrets in Vault instead of in the code or config files. Once you store them in a central place, you can use various tools. For example, if you're using Kubernetes infrastructure, you can use sidecars to inject Vault secrets into the application. That way, your developers don't know anything about their secrets, but they're using them in the code as a form of

configuration. So you can abstract the management or introduction of secrets into the code by using some kind of secret storage. Doing it like this, with open source solutions, enables you to scale, and if you're going to go hybrid or cloud native in the future, it also enables you to extend it to the cloud at the same time. Once you define your policies about the secrets, it can work at scale. The other abstraction that we were talking about was authentication and authorization. For this, I'm using an example from Kubernetes infrastructure. If you're using Kubernetes as your application platform, you can employ a

service mesh like Istio. What this service mesh does, in essence, is separate the data plane from the control plane, so you can do your thing on the control plane without affecting the data plane itself. By configuring the control plane, you can achieve in-transit encryption with mutual TLS. And you can couple that mutual TLS with SPIFFE, a workload identity standard that's also open source. By introducing workload identity coupled with mTLS, you can then use Istio's authentication and authorization policies to enforce API-to-API, or we could say service-to-service, authentication and authorization through the service mesh. So what you can do is extend these policies and wrap them to

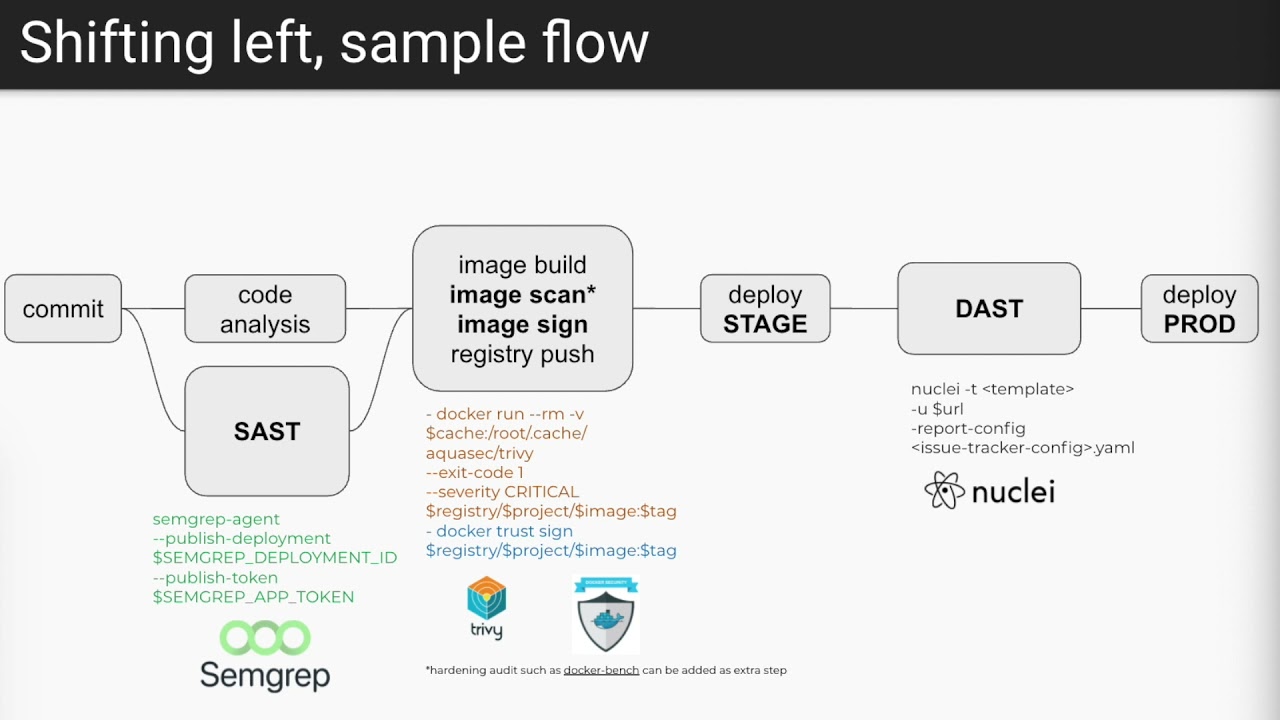

give developers some kind of service, authentication as a service, for example. Once they are using it, your developers can just declare their services, which services they talk to, and which services are allowed to talk to their service, in a config file. Once they include that config file in the repository, you can fetch it and, with your wrapper script, translate it into Istio policies. That way developers don't have to do this kind of service authentication and authorization by themselves, and instead you offload this work to the infrastructure platform itself. So I was talking about CI/CD pipelines. This is a sample pipeline that you can build in your enterprise

by using nothing but open source tools. It can run on every commit or only on commits to the development environment; it doesn't matter. You can choose which commits you want to build it into. But in this example, it's on a per-commit basis. For every commit, there's a static scan using Semgrep, and with refined Semgrep rules, you can lower the false positive ratio as much as possible. After the static code analysis, you can use Trivy, an open source tool, for example, to scan your Docker image. With this, you cover the security of both your code and your Docker images. After that, you can sign that image so that you can

prevent unsigned images from being deployed to the environment. So you can always make sure that rogue images do not run in your production environment and that only the images you know actually run on the infrastructure. The images that you know will be the ones processed through this pipeline. So you, as a security engineer, can always make sure that every image is scanned according to your rules and your standards, and is signed only if it meets that standard. You can also couple this logic with tools like Docker Bench to see if the rules or configs are as hardened as possible. After these checks, you deploy your app to a staging environment. And that's where we bring in our DAST

tooling. I used Nuclei for this example. You can use Nuclei for dynamic application testing based on templates. These templates are like Semgrep's rulesets or Trivy's scan rules: you provide the templates as a rule set for Nuclei, and it scans based on your rules. After that, if there's an issue in any of the steps, you can block the pipeline, or you can open an issue for the developer so that they can fix it afterwards. That way you're not a blocker for them on vulnerabilities that are medium severity, or whatever falls within your risk tolerance. After all of these steps, the application will be deployed to the production environment. So you're going to make sure that

at least some kind of automated control is enforced on that application. That brings us to the reactive approaches. Before the production deployment, yes, we employ automation in our CI/CD pipeline, and before that, we try to train our developers and teach them how to code and build secure applications. But in the end, automation cannot solve every problem or find any and all vulnerabilities, and your developers are going to make mistakes because they're human. So penetration testing done by your team is, in my opinion, always needed before production deployment, at least for critical or high-value applications in your enterprise, so that you can make sure at least one technical engineer has looked at your

app before every possible scheduled scan or pipeline scan is run against the application. So before production deployment, you do penetration testing. After that, you deploy to production. On top of the pipeline's continuous scans for each commit or each deployment, vulnerability scanning procedures with scheduled scans are, in my opinion, the complementary part for all of the things that we already do. And if rule-based scheduled scanning complements the CI/CD practices, I would say the thing that complements penetration testing is the bug bounty. By employing a bug bounty program, you're basically virtually extending your team with the hacker community that tries to find bugs in your applications. By complementing penetration testing

through the bug bounty program, you're basically achieving 24/7 testing of your applications, and of your infrastructure if you extend the bug bounty program that far. What I would suggest to a newcomer to bug bounty programs is to start with a private one. As easy as it seems from the outside, bug bounty programs are a hell of a lot harder to run if you're a corporate. I would suggest getting some kind of assisted program so that you can learn from them how to actually run your own program in the future. When it goes public, you can run it yourself. But for the first steps, I would suggest doing it with a private one and with a managed

program so that you can learn from them. You can also learn how to communicate with the hackers, because it's a really important skill to have if you're going to run a bug bounty program. So coupling all of the reactive approaches with the proactive ones is what actually completes any application security program, if you're going to do it in an agile manner with scalable and customized tools. Thank you for joining my talk. You can reach me on LinkedIn with any questions that you may have. And as a reminder, we're hiring, so we would appreciate your applications. If you have any questions now, I can answer them. I will be at the Gather Town

or on the Slack. You can reach me during the conference. Thank you.

Brilliant. Thanks very much, Mert. I've got a couple of questions for you. First off, you were saying at the start about short sprints, keeping them really short and being able to be reactive and agile. So how short a sprint do you look at, and how small are your teams? You were saying about keeping it quite closed so it doesn't get out of hand with too many cooks. So how small and how quick do you find the teams, in your own experience,

to be?

Yeah, well, in my experience we had multiple teams deploying code 60 or 70 times a day, so those were really short bursts for them. The usual sprints that I experienced were on average five to ten days, but in a five-day sprint environment there are lots of deployments. For example, most of the general or old-fashioned tooling wasn't fast enough to keep up with their deployments.

Okay. I also have a question. You've shown a sample pipeline. Is that a pipeline that will run within a single sprint, or would you have your commit in one sprint and then your code analysis over a second

or is it all self-contained in each sprint that you do?

Well, what we generally did in my past experience was build all of the steps into the pipeline in a central way. For example, if you're using GitLab with a global template configuration for the developers' pipelines, you can include the security checks there. So it's your decision, but you can also run the steps in parallel for each commit. If they run in parallel, and I'm talking about GitLab here, your developers don't see the steps on the pipeline because they're

running in parallel. I'm not saying that they do, but some developers might try to circumvent the security checks to get their deployment out faster, and running the checks in parallel like that would prevent them. But in the end, if you're signing your deployments or your Docker containers, they're not going to be able to circumvent it in any way, because you're going to have rules that prevent unsigned images from being deployed. So in the end it's your decision, but I would suggest doing it centrally. Maybe you can change the configurations for the teams based on their data or their application risk.

I've got to say, I thought it was great

seeing you talk about penetration testing going in before deployment. Does that fit in between your DAST stage and your deploy-to-prod stage, or do you try to have someone poke at the code while it's still being written, at an earlier stage? Where does it fit in your pipeline?

The pipeline itself runs, and after the DAST there's the deploy-to-prod stage, and that stage is where penetration testing sits for me. In my past experience, you can do them in parallel. I mean, you can run your pipeline scanners at the same time as you're doing penetration testing, but that way you're doing rework on some vulnerabilities, because your rule set will already find some of the vulnerabilities in the code

if there are any, and the scanners are also going to do the crawling and the other things that you would do within your pen test procedure. So having the scanners' output in hand makes penetration testing so much easier, because you already have the preliminary information to kickstart your penetration testing process.

That's all the questions we have for you. Thank you very much again for your time, and we'll make sure the recording gets out for you. I'll speak to Ben and then we'll be able to give an update. Thanks very much.

Thank you.
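
The gating step described for the sample pipeline in the talk (block the pipeline on serious findings, otherwise open an issue so that security is not a blocker for anything within the risk tolerance) could be sketched roughly as follows. This is a minimal Python sketch under stated assumptions, not the speaker's implementation: the `Finding` type, the `Severity` levels, the rule identifiers, and the `gate` function are all hypothetical, and it assumes the output of the scanners mentioned (Semgrep, Trivy, Nuclei) has already been normalized into a common shape.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical severity scale; real scanners each use their own labels.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    tool: str           # e.g. "semgrep", "trivy", "nuclei"
    rule_id: str        # hypothetical rule or template identifier
    severity: Severity


def gate(findings, risk_tolerance=Severity.MEDIUM):
    """Split findings into pipeline-blocking ones and issue-only ones.

    Findings above the risk tolerance block the pipeline; findings at or
    below it are returned separately so an issue can be opened instead.
    """
    blocking = [f for f in findings if f.severity > risk_tolerance]
    issues = [f for f in findings if f.severity <= risk_tolerance]
    return blocking, issues


if __name__ == "__main__":
    sample = [
        Finding("semgrep", "hypothetical-sql-injection", Severity.HIGH),
        Finding("trivy", "hypothetical-outdated-package", Severity.MEDIUM),
        Finding("nuclei", "hypothetical-missing-header", Severity.LOW),
    ]
    blocking, issues = gate(sample)
    for f in issues:
        print(f"open issue: [{f.tool}] {f.rule_id} ({f.severity.name})")
    if blocking:
        print(f"pipeline blocked by {len(blocking)} finding(s)")
```

The `risk_tolerance` parameter is where a per-team setting could plug in, in line with the suggestion in the Q&A to vary configuration by team data or application risk.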